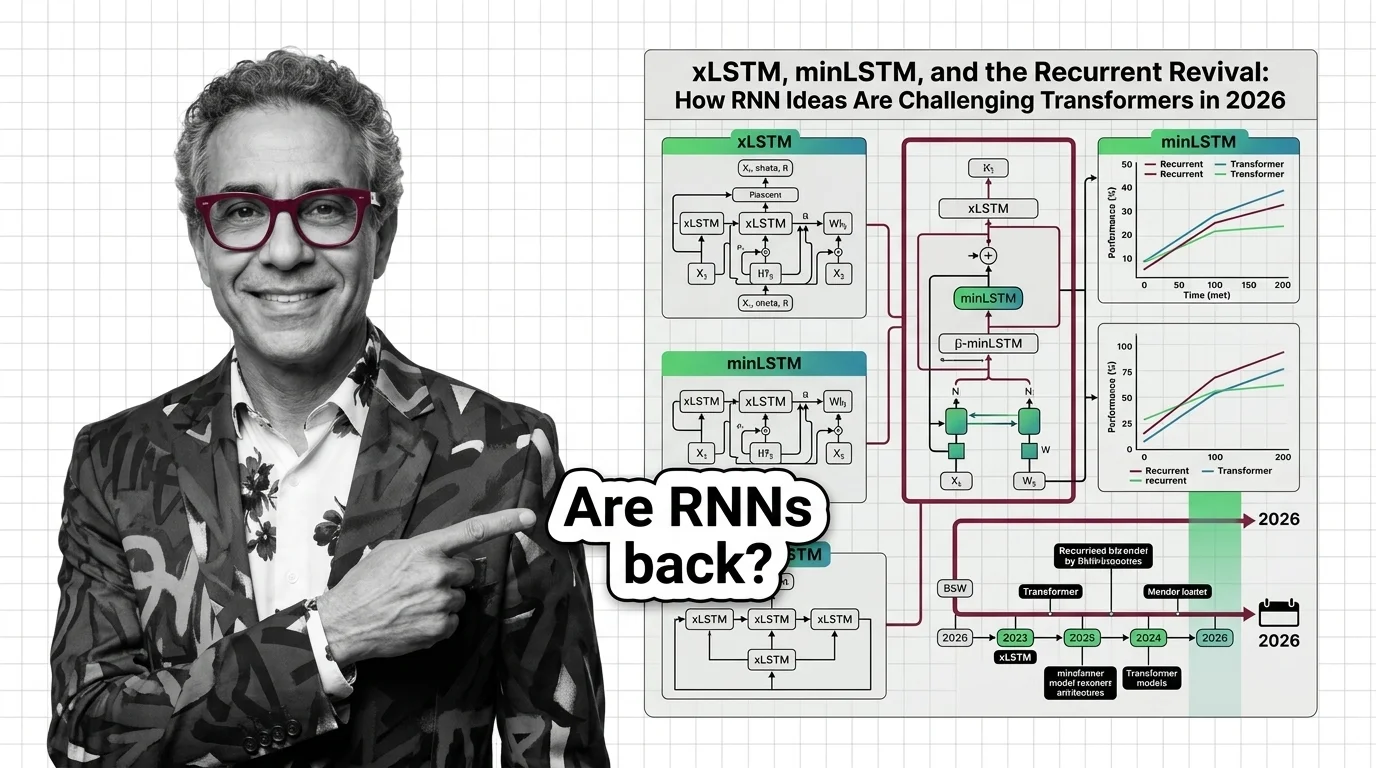

xLSTM, minLSTM, and the Recurrent Revival: How RNN Ideas Are Challenging Transformers in 2026


TL;DR

- The shift: Multiple independent research threads show recurrent architectures approaching transformer quality at linear inference cost, and the industry is converging on hybrid models.

- Why it matters: Inference cost is now a dominant constraint, and recurrence offers O(N) time with constant memory where transformer attention burns O(N^2) compute.

- What’s next: Pure-transformer deployments face a cost reckoning as hybrid architectures move from papers to production.

The transformer didn’t get dethroned. It got a bill.

Quadratic attention has been the price of admission for frontier language modeling. That price is becoming unsustainable, and three independent research teams just demonstrated cheaper architectures at near-comparable quality. The recurrent neural network just became relevant again. The inference bill is why.

The Inference Tax Nobody Budgeted For

Thesis: The transformer monopoly is fracturing — not because transformers got worse, but because inference economics made alternatives necessary.

The signal is structural. Sepp Hochreiter, co-inventor of the original LSTM, shipped xLSTM at NeurIPS 2024 with two key innovations: exponential gating and a matrix memory system with a covariance update rule (xLSTM Paper). It processes a sequence in O(N) time with a fixed-size state, so memory is O(1) in sequence length. Standard self-attention, by contrast, costs O(N^2) compute over the sequence, and its KV cache grows with every token (NXAI).

That’s not a theoretical advantage. That’s a cost curve that diverges with every token.
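A minimal NumPy sketch of a matrix-memory recurrence with exponential gating, loosely following the mLSTM formulation described in the xLSTM paper. The names `mlstm_step` and `run`, the dimension `d`, and the random inputs are illustrative assumptions, and the stabilizer and normalizer are simplified. It is not the NXAI implementation; it only shows why the state size does not depend on how many tokens have been processed.

```python
import numpy as np

def mlstm_step(C, n, m, q, k, v, i_pre, f_pre):
    """One step of a simplified mLSTM-style cell (illustrative only)."""
    log_f = -np.logaddexp(0.0, -f_pre)        # log(sigmoid(f_pre)), computed stably
    m_new = max(log_f + m, i_pre)             # running stabilizer for the exponential gates
    i_gate = np.exp(i_pre - m_new)            # exponential input gate, rescaled
    f_gate = np.exp(log_f + m - m_new)        # forget gate, rescaled by the same factor
    C = f_gate * C + i_gate * np.outer(v, k)  # covariance-style update of the matrix memory
    n = f_gate * n + i_gate * k               # normalizer state
    h = C @ q / max(abs(n @ q), 1.0)          # read out the memory with the query
    return C, n, m_new, h

def run(seq_len, d=8, seed=0):
    """Feed seq_len random tokens through the cell and return the final state."""
    rng = np.random.default_rng(seed)
    C, n, m = np.zeros((d, d)), np.zeros(d), 0.0
    h = np.zeros(d)
    for _ in range(seq_len):
        q, k, v = rng.normal(size=(3, d))
        k = k / np.sqrt(d)                    # key scaling (assumed convention)
        i_pre, f_pre = rng.normal(size=2)     # stand-ins for learned gate pre-activations
        C, n, m, h = mlstm_step(C, n, m, q, k, v, i_pre, f_pre)
    return C, n, h

C_short, n_short, _ = run(seq_len=16)
C_long, n_long, _ = run(seq_len=4096)
print(C_short.nbytes + n_short.nbytes, C_long.nbytes + n_long.nbytes)
```

The two printed numbers are the same because the state is a d-by-d matrix plus a length-d vector regardless of sequence length, whereas an attention layer would have to keep a key and a value for every past token.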

By early 2025, Hochreiter’s team at NXAI in Linz scaled to xLSTM 7B: 7 billion parameters, trained on 2.3 trillion tokens, fully open-source (xLSTM 7B Paper). Prefill throughput runs roughly 70% higher across batch sizes, and memory holds constant at around 500MB while transformer KV caches keep growing.
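A back-of-envelope sketch of why a KV cache keeps growing while a recurrent state does not. The layer count, KV-head count, head dimension, and fp16 storage below are assumed values for a generic 8B-class transformer with grouped-query attention; they are not taken from the article or the xLSTM 7B paper. The roughly 500MB recurrent figure is the one quoted above, treated here as a constant.

```python
def kv_cache_bytes(tokens, layers=32, kv_heads=8, head_dim=128,
                   bytes_per_value=2, batch=1):
    """Bytes needed to cache keys and values for `tokens` positions.

    All default hyperparameters are assumptions for illustration.
    """
    # Factor of 2: one tensor for keys, one for values, in every layer.
    return 2 * layers * kv_heads * head_dim * bytes_per_value * tokens * batch

RECURRENT_STATE_MIB = 500  # approximate constant footprint quoted in the article

for tokens in (4_096, 32_768, 131_072):
    kv_mib = kv_cache_bytes(tokens) / 1024**2
    print(f"{tokens:>7} tokens: KV cache {kv_mib:>7.0f} MiB, "
          f"recurrent state ~{RECURRENT_STATE_MIB} MiB")
```

Under these assumed settings each token adds 128 KiB to the cache, so the cache reaches about 512 MiB at 4,096 tokens and 16 GiB at 131,072 tokens per sequence, and it scales again with batch size.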

Quality? Slightly behind. On the Hugging Face Leaderboard v2, xLSTM 7B scores 0.260 against Llama 3.1 8B’s 0.275, a real gap but a narrowing one (xLSTM 7B Paper). Long-context performance still trails transformers that have had specialized long-context fine-tuning.

The honest read: comparable quality at radically lower cost — with a context-length asterisk.

Three Research Programs, One Direction

Hochreiter wasn’t working alone. The convergence is what tells the story.

In late 2024, a team from Mila and Borealis AI, Yoshua Bengio among them, asked “Were RNNs All We Needed?” (minRNN Paper). Strip the hidden-state dependencies from LSTM and GRU gates, and the old architectures become parallelizable. No backpropagation through time required.
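A minimal sketch of why removing the hidden-state dependency matters, assuming a minLSTM-style update in which both gates and the candidate state are functions of the current input only. The function names, weight shapes, and random data are hypothetical. Once the gates no longer read the previous hidden state, the recurrence has the form h_t = a_t * h_(t-1) + b_t with every a_t and b_t known up front, so it can be evaluated with prefix operations instead of a step-by-step loop. The closed form below uses `np.cumprod` and `np.cumsum` as stand-ins for a parallel scan; it is numerically fragile on long sequences, where a log-space scan would be the safer choice.

```python
import numpy as np

def sigmoid(x):
    return 1.0 / (1.0 + np.exp(-x))

def min_lstm_coeffs(x, W_f, W_i, W_h):
    """Gates depend only on the input x, never on the previous hidden state."""
    f = sigmoid(x @ W_f)
    i = sigmoid(x @ W_i)
    a = f / (f + i)                 # normalized forget gate
    b = (i / (f + i)) * (x @ W_h)   # normalized input gate times candidate state
    return a, b

def scan_sequential(a, b, h0):
    """Reference loop: h_t = a_t * h_{t-1} + b_t, one step at a time."""
    h, out = h0, []
    for a_t, b_t in zip(a, b):
        h = a_t * h + b_t
        out.append(h)
    return np.stack(out)

def scan_parallel(a, b, h0):
    """Same recurrence via prefix products and sums (no dependence on h_{t-1})."""
    A = np.cumprod(a, axis=0)                    # A_t = a_1 * ... * a_t
    return A * (h0 + np.cumsum(b / A, axis=0))   # h_t = A_t * (h0 + sum_k b_k / A_k)

rng = np.random.default_rng(0)
T, d_in, d_h = 32, 4, 8
x = rng.normal(size=(T, d_in))
W_f, W_i, W_h = (rng.normal(size=(d_in, d_h)) for _ in range(3))
a, b = min_lstm_coeffs(x, W_f, W_i, W_h)
h0 = np.zeros(d_h)
print(np.allclose(scan_sequential(a, b, h0), scan_parallel(a, b, h0)))
```

In a classic LSTM the gates take the previous hidden state as input, so `a` and `b` cannot be computed before the loop runs, and this reformulation is not available.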

minLSTM ran 1,361 times faster than traditional LSTM on 4,096-token sequences. Training speed matched Mamba, the state space model architecture that started this conversation in late 2023.

Then Mamba evolved. Mamba-3 arrived at ICLR 2026 with complex-valued states and multi-input multi-output processing, though published results cover only the 1.5B-parameter scale (Mamba-3 Paper). Gated DeltaNet landed at ICLR 2025 and was adopted into Qwen3.5 earlier this year.

Three independent programs. Three approaches to the same bottleneck. All reaching production in the same window.

That’s not coincidence. That’s a market correction.

Who Moves First Wins

AI21’s Jamba proved the template: 398 billion total parameters, 94 billion active, 256K context window — transformer attention where it matters, recurrent layers where it’s cheaper (AI21 Blog). Qwen3.5 and OLMo Hybrid followed the same playbook.

Infrastructure teams are the obvious beneficiaries. Constant-memory architectures cut hardware costs per query on long sequences.

Open-source first movers have zero barrier to evaluation. xLSTM 7B shipped weights, model code, and training code. Teams benchmarking these architectures against their workloads now will have the data when the budget conversation arrives next quarter.

Who Gets Left Behind

The industry assumed the architecture question was settled: convolutional neural networks for vision, transformers for LLMs, done. That assumption got expensive.

Pure-transformer maximalists are the most exposed. Attention is powerful. It’s also quadratic. Refusing to evaluate alternatives isn’t principled — it’s a concentrated bet.

Late evaluators face a compounding problem. Hybrid architectures demand different optimization strategies. Teams waiting for a “clear winner” will find themselves two tooling generations behind teams that started benchmarking last year.

You’re either diversifying your architecture portfolio or you’re concentrated in a single bet that just got more expensive.

What Happens Next

Base case (most likely): Hybrid transformer-recurrent architectures become the default for new large-scale deployments by late 2026. Pure transformers persist for short-context, high-accuracy tasks. Recurrent layers handle long-context and cost-sensitive inference. Signal to watch: A top-5 foundation model lab ships a flagship hybrid model. Timeline: 6-12 months.

Bull case: xLSTM or Mamba-3 derivatives close the quality gap entirely at scale. xLSTM scaling results at ICLR 2026 already show competitive performance with linear time complexity (xLSTM Scaling). Signal: Replication at 70B+ parameters with no quality degradation. Timeline: 12-18 months.

Bear case: Recurrent architectures plateau on complex reasoning. Transformers with efficient attention variants close the cost gap from their side. The hybrid moment stalls at niche applications. Signal: No major lab adopts a hybrid architecture for a flagship release through 2026. Timeline: 6-9 months.

Frequently Asked Questions

Q: What is xLSTM and how does Hochreiter’s new architecture improve on the original LSTM? A: xLSTM adds exponential gating and matrix memory to the classic LSTM design. These changes enable linear-time processing with constant memory — eliminating the quadratic cost of transformer attention while retaining sequential reasoning capability.

Q: What did the “Were RNNs All We Needed” paper prove about minLSTM and minGRU performance? A: By removing hidden-state dependencies from the gates, minLSTM achieved a 1,361x speedup over traditional LSTM on 4,096-token sequences while matching Mamba’s training speed. The paper argues that classic RNN designs were held back by gates that depend on the previous hidden state, which forces sequential training, rather than by recurrence itself.

Q: Are RNNs making a comeback with xLSTM, state space models, and linear recurrence in 2026? A: The framing matters. This isn’t a comeback — it’s a convergence. Hybrid architectures blending recurrent and transformer layers are entering production. Pure recurrence won’t replace transformers, but the pure-transformer default is ending.

The Bottom Line

The transformer monopoly is fracturing along the inference cost line. xLSTM, minLSTM, and Mamba-3 showed, independently, that recurrence can approach transformer quality at a fraction of the inference compute. The industry is converging on hybrid architectures. Budget accordingly.

