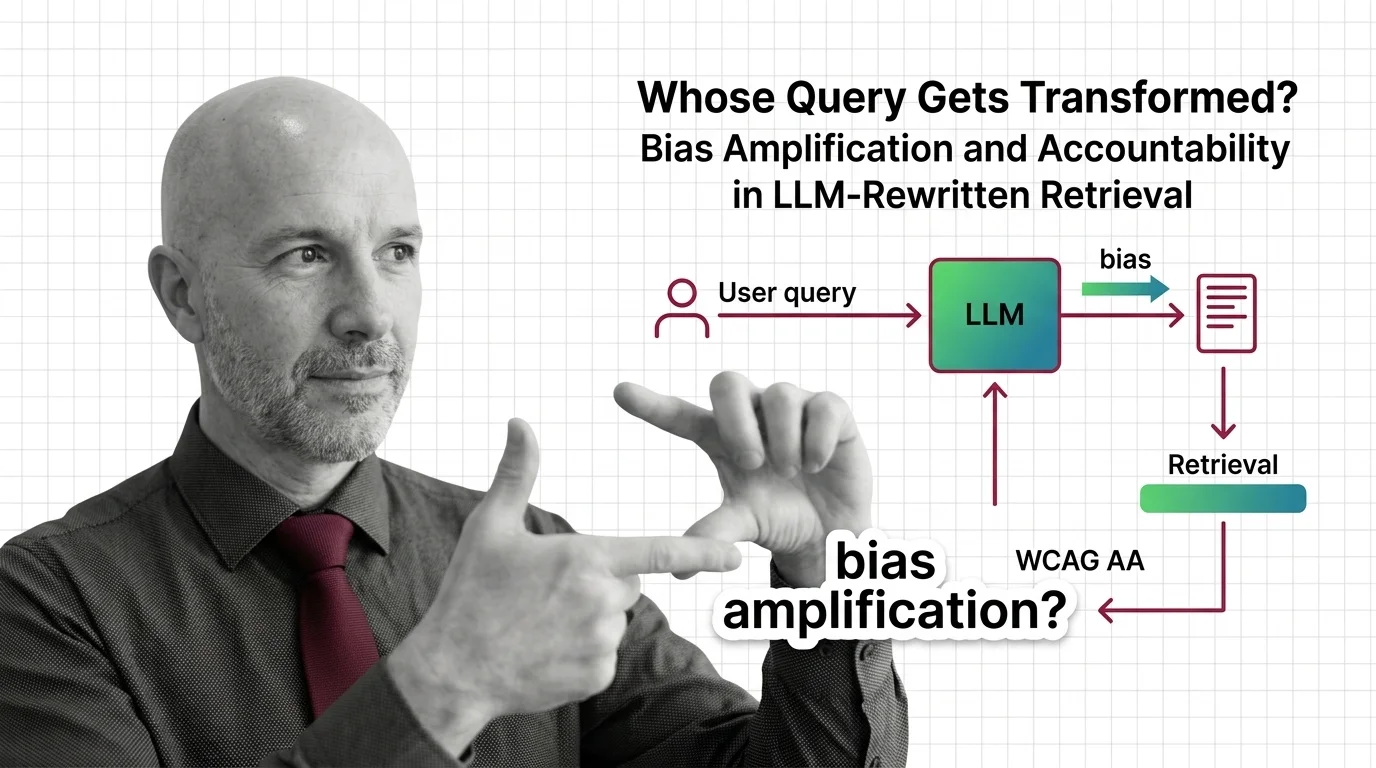

Whose Query Gets Transformed? Bias Amplification and Accountability in LLM-Rewritten Retrieval

The Hard Truth

You typed one question. The system answered a different one. Between your search box and the index sat a language model that decided your wording was inadequate, drafted a better version, and ran that instead. Whose question got answered — and who told you it had been changed?

A search box used to be a kind of promise. What you typed was what was searched. That promise has quietly broken in the era of Retrieval-Augmented Generation, where a language model now sits between your keystrokes and the index, rewriting your question into something it considers more retrievable. The rewrite is invisible. So is the accountability for it.

The Search You Did Not Search For

What are the ethical concerns when an LLM silently rewrites user queries before retrieval? That question is not academic. It describes the operational reality of nearly every modern Query Transformation pipeline. A user asks something in their own words. The system rephrases it: sometimes into multiple parallel variants, sometimes by decomposing it into sub-questions, sometimes by generating a plausible answer first and searching for documents that resemble it (the technique known as HyDE, for Hypothetical Document Embeddings). The retrieved documents reflect the rewritten query. The generated answer presents itself as a reply to the original. The user is never shown the substitution.
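To make the substitution concrete, here is a minimal sketch of the three rewriting strategies described above. It is an illustration under stated assumptions, not any library's implementation: `stub_llm`, `TransformedQuery`, and the three function names are hypothetical, and the stub only echoes its prompt where a real pipeline would call a model.

```python
from dataclasses import dataclass
from typing import Callable, List

LLM = Callable[[str], str]


def stub_llm(prompt: str) -> str:
    """Hypothetical stand-in for a model call; it only echoes the prompt."""
    return f"<model text for: {prompt}>"


@dataclass
class TransformedQuery:
    original: str              # what the user typed
    strategy: str              # which rewriting strategy ran
    search_strings: List[str]  # what is actually sent to the index


def multi_query(question: str, llm: LLM, n: int = 3) -> TransformedQuery:
    """Expand one question into n parallel rephrasings."""
    variants = [llm(f"Rephrase, variant {i + 1}: {question}") for i in range(n)]
    return TransformedQuery(question, "multi_query", variants)


def decompose(question: str, llm: LLM, parts: int = 2) -> TransformedQuery:
    """Break one question into sub-questions to be answered in sequence."""
    subs = [llm(f"Sub-question {i + 1} of {parts} for: {question}") for i in range(parts)]
    return TransformedQuery(question, "decomposition", subs)


def hypothetical_answer(question: str, llm: LLM) -> TransformedQuery:
    """Draft a plausible answer and search for documents that resemble it."""
    draft = llm(f"Write a short passage answering: {question}")
    return TransformedQuery(question, "hypothetical_answer", [draft])


question = "is ML hiring software biased against older workers"
for transform in (multi_query, decompose, hypothetical_answer):
    tq = transform(question, stub_llm)
    # The index never sees the user's own wording unless it is added explicitly.
    print(tq.strategy, len(tq.search_strings), question in tq.search_strings)
```

In all three cases the user's wording survives only in the `original` field. Whether that field is ever shown to anyone is a product decision, not a technical constraint.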

This is not an edge case. LangChain ships MultiQueryRetriever as a stock component and LlamaIndex publishes a Query Transform Cookbook (LangChain Blog, LlamaIndex Docs). Rewriting is opt-out, not opt-in. The question of who consented to which rewriting — on whose behalf, with what visibility — has been answered by default in favor of the system over the user.

The Reasonable Case for Letting the Machine Improve the Question

The strongest argument for query rewriting is that it solves a real and stubborn problem. Human queries are messy. They contain typos, abbreviations, ambiguous referents, and assumed context. A naive lexical retriever responds to that messiness with irrelevant documents. Rewriting closes the semantic gap. It expands “ML” to “machine learning,” disambiguates “Apple” from “apple,” and decomposes multi-hop questions into the sub-questions that an Agentic RAG pipeline can answer in sequence.

The fairness case is even more compelling. Aggregate retriever bias drops measurably when an LLM rewrites the query — a 2026 preprint reports a 54% reduction in the mean absolute t-statistic of bias indicators, from 8.72 to 4.02 (Goyal et al.). Across Hybrid Search and Reranking stacks, rewriting smooths some of the rougher edges of keyword retrieval, which historically rewarded users who already knew the right vocabulary. If the alternative is a system that punishes non-native speakers, dyslexic users, and anyone unfamiliar with technical jargon, then rewriting is not a moral indulgence. It is a literacy bridge — and that is a real argument that deserves to be taken seriously before the critique begins.
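As a quick check on the headline figure, the percentage follows directly from the two values quoted above:

```latex
\[
\frac{8.72 - 4.02}{8.72} \;=\; \frac{4.70}{8.72} \;\approx\; 0.539 \;\approx\; 54\%
\]
```

This confirms only the arithmetic. It says nothing about what the drop in the statistic actually measures.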

The Hidden Assumption That Rewriting Is Neutral

The flaw is not in the steelman. It is in the word “neutral.” Even the preprint documenting the 54% bias reduction is careful to name its mechanism: the improvement comes from “increased score variance,” not from “genuine decorrelation from bias-inducing features” (Goyal et al.). Translated out of statistics, this means the bias did not leave the system. It got blurred. A reduction in measurable inequity is not the same as a reduction in actual inequity, and a method whose own authors describe it as masking rather than mitigating cannot honestly be marketed as a fairness fix.

The same lever works in reverse. A 2025 preprint demonstrates that just five carefully crafted poisoned documents can swing a Llama-3-8B age-bias selection rate from 0.20 to 0.90, and that disability-related bias in Qwen-2.5-32B amplifies by a factor of 8.9 under retrieval poisoning (Wang et al.). A pipeline that expands one user query into multiple semantic variants is, by construction, a wider attack surface for indirect prompt injection — the exact failure mode OWASP currently ranks as the number-one risk in its 2025 LLM Top 10 (OWASP Gen AI Security Project). Rewriting is an amplifier in both directions, and which direction it amplifies depends on conditions the user cannot see.
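The "wider attack surface" claim can be illustrated with a toy example. Everything here is invented for the purpose: the three-document corpus, the planted document, the word-overlap retriever, and the query variants are assumptions, and no real retriever or attack from the cited work is reproduced.

```python
from typing import Iterable, List, Set

# Hypothetical corpus; "d3" stands in for a planted document.
CORPUS = {
    "d1": "hiring guidance for older applicants",
    "d2": "age discrimination law overview",
    "d3": "POISONED senior workers candidates ranking advice",
}


def retrieve(query: str, k: int = 1) -> List[str]:
    """Toy lexical retriever: rank documents by number of shared lowercase words."""
    q_words = set(query.lower().split())
    scored = []
    for doc_id, text in CORPUS.items():
        overlap = len(q_words & set(text.lower().split()))
        if overlap > 0:
            scored.append((overlap, doc_id))
    # Highest overlap first; ties broken by document id for determinism.
    scored.sort(key=lambda pair: (-pair[0], pair[1]))
    return [doc_id for _, doc_id in scored[:k]]


def retrieve_union(queries: Iterable[str], k: int = 1) -> Set[str]:
    """Union of top-k results across every query variant."""
    hits: Set[str] = set()
    for q in queries:
        hits.update(retrieve(q, k))
    return hits


original = "hiring older applicants"
# Hypothetical rewrites that drift toward vocabulary the user never typed.
variants = [original, "ranking senior candidates", "age discrimination in hiring"]

single = set(retrieve(original))
expanded = retrieve_union(variants)
print(sorted(single), sorted(expanded))
```

In this toy setup the user's own wording never reaches the planted document, while one of the rewritten variants does. The point is structural: every additional variant is another set of terms an adversary can target.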

What Translators and Editors Already Knew

There is a useful historical parallel. The court interpreter who translates testimony in a foreign language is not treated as a neutral utility. Their name is in the transcript. They take an oath. They are challengeable on cross-examination. The simultaneous interpreter at the United Nations is identified by booth and language. The newspaper editor who rewrites a quote signals it with brackets and ellipses, because the reader is owed the difference between what was said and what was printed.

These professions developed their ethics because the act of rewriting another person’s words is morally consequential. Choices about emphasis, framing, and omission shape how the recipient understands the speaker. The disclosure requirements and the right to inspect the rendering exist because rewriting is power, and power without visibility curdles into something else. A query-rewriting LLM exercises the same power under none of the same conventions, skipping past two centuries of accumulated wisdom about what it means to speak on someone else’s behalf.

The Authorship the System Quietly Assumed

Thesis: When an LLM silently rewrites a query before retrieval and presents the answer as if it answered the original, the system has assumed authorship of a question the user never asked — and inherited a moral debt it cannot service.

The debt is not metaphorical. Models ranking highest on exact-match accuracy do not rank highest on fairness, and gender disparity scores in retrieval-augmented systems range from −0.0979 to 0.2248 across deployment scenarios (Wu et al.) — the same architecture that performs admirably on aggregate benchmarks can produce wildly different experiences depending on who is asking. A FAccT 2025 study of LLM-based search engines found they “frequently aligned with the bias implied in the question, neglecting to present diverse perspectives” (Venkit et al.) — a finding made worse, not better, by a rewriting layer that compresses the user’s framing into a system-preferred wording before any retrieval has occurred. The system answers a question of its own composition and offers the answer as if no substitution had taken place.

The transparency frameworks that should govern this layer are still maturing. EU AI Act Article 13 requires high-risk systems to be designed so their operation is “sufficiently transparent” for deployers to interpret outputs appropriately, with high-risk obligations becoming applicable on 2 August 2026 (EU AI Act). NIST’s Generative AI Profile names “Harmful Bias and Homogenization” and “Human-AI Configuration” among its twelve core risks (NIST AI 600-1), and a recent survey identifies Transparency and Accountability as two of six required dimensions for any RAG deployment (Zhou et al.). None of those frameworks names query rewriting as a discrete object of audit. Yet that is exactly where the authorship has been quietly transferred.

The Questions We Owe Ourselves

What would informed consent look like for query rewriting? Should every RAG product carry a “show me the rewritten query” toggle as a baseline interface obligation, the way websites disclose cookies? Should rewrites be logged and auditable as a separate layer, distinct from the model’s final answer? When a regulator asks why a particular user received a particular result, should the rewritten query be part of the answer — and should it be a record the user is entitled to inspect?
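One way to picture the logging and toggle ideas raised above is a separate audit record per request. The sketch below is an assumption about what such a layer could look like, not a description of any existing product: the `RewriteRecord` fields, the function names, and the model identifier are all hypothetical.

```python
import json
from dataclasses import asdict, dataclass
from datetime import datetime, timezone
from typing import List


@dataclass(frozen=True)
class RewriteRecord:
    request_id: str
    original_query: str           # the user's wording, stored verbatim
    rewritten_queries: List[str]  # every string actually sent to the index
    strategy: str
    rewriter_model: str
    created_at: str               # ISO 8601 timestamp in UTC


def make_record(request_id: str, original_query: str, rewritten_queries: List[str],
                strategy: str, rewriter_model: str) -> RewriteRecord:
    return RewriteRecord(
        request_id=request_id,
        original_query=original_query,
        rewritten_queries=list(rewritten_queries),
        strategy=strategy,
        rewriter_model=rewriter_model,
        created_at=datetime.now(timezone.utc).isoformat(),
    )


def to_log_line(record: RewriteRecord) -> str:
    """Serialize one record as a JSON line, kept apart from the answer log."""
    return json.dumps(asdict(record), ensure_ascii=False, sort_keys=True)


def disclosure(record: RewriteRecord, show_rewrites: bool) -> str:
    """Text for a hypothetical 'show me the rewritten query' toggle."""
    notice = f'You asked: "{record.original_query}". The search used a rewritten version.'
    if not show_rewrites:
        return notice
    listed = "\n".join(f"  - {q}" for q in record.rewritten_queries)
    return f"{notice}\nSearched for:\n{listed}"


record = make_record(
    "req-001",
    "ML bias",
    ["machine learning bias", "algorithmic bias in models"],
    "multi_query",
    "hypothetical-rewriter-v1",
)
print(to_log_line(record))
print(disclosure(record, show_rewrites=True))
```

Note the design choice in `disclosure`: even with the toggle off, the user is told that a rewrite happened. Whether that minimum should be mandatory is exactly the kind of question the code cannot settle.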

These are not engineering questions. They are governance questions, and they cannot be resolved inside the codebase. The mechanism that decides which question gets answered is the mechanism that shapes which perspectives are amplified and which fade out, which framings count as legitimate and which the model finds easier to ignore. That is policy by another name, and it deserves to be treated like one.

Where This Argument Is Weakest

The case for transparency is most vulnerable where rewriting demonstrably widens access. If forcing a literal lexical match would systematically punish users without technical vocabulary or fluency in the dominant language, then a blanket suspicion of rewriting becomes its own form of exclusion — a quiet restoration of the gatekeeping that retrieval was supposed to soften. The honest position requires distinguishing between rewriting that scaffolds a user toward what they were trying to ask and rewriting that overrides what they actually asked. Where current systems sit on that spectrum is precisely what no current product makes legible.

The Question That Remains

The infrastructure exists to make every rewritten query visible, logged, and challengeable. What is missing is the institutional will to require it. The question is not whether query rewriting works, but whether a system that silently rewrites the public’s questions, without disclosure and without recourse, is something we are prepared to call search at all.

Ethically, Alan.

Disclaimer

This article is for educational purposes only and does not constitute professional advice. Consult qualified professionals for decisions in your specific situation.

AI-assisted content, human-reviewed. Images AI-generated.