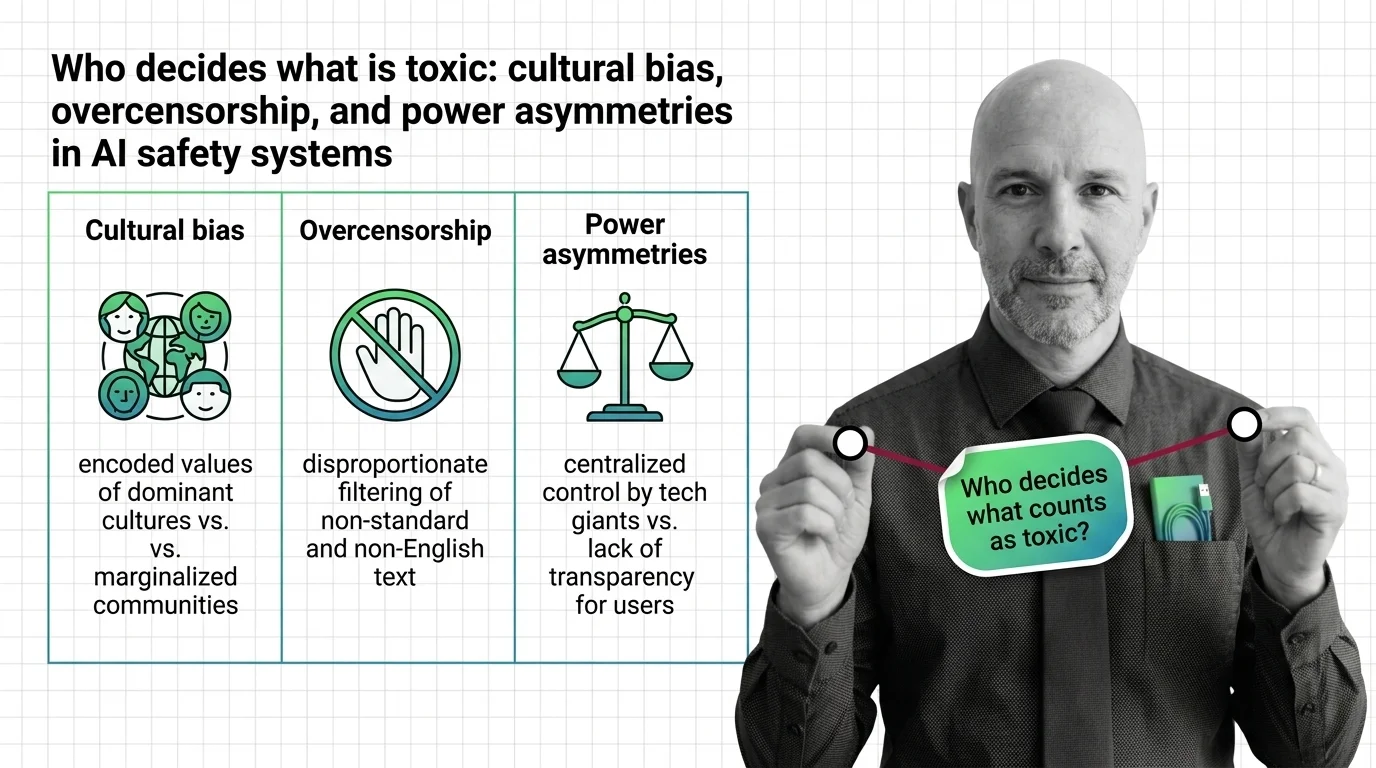

Who Decides What Is Toxic: Cultural Bias, Overcensorship, and Power Asymmetries in AI Safety Systems

The Hard Truth

Imagine a system designed to protect people from harmful speech — that consistently flags the speech of the people it claims to protect. Not a hypothetical. Not an edge case. A documented pattern, operating at scale, right now.

There is a quiet consensus in the AI industry that safety is a solved engineering problem — that the right classifier, the right taxonomy, the right threshold can sort human expression into clean categories of safe and unsafe. This consensus is wrong, and the consequences of its wrongness fall unevenly on those least positioned to push back.

The Gatekeepers Nobody Elected

Every safety classifier encodes a decision about what counts as harmful. That decision is not neutral. It reflects the values, the cultural context, and the linguistic assumptions of whoever built the training data, designed the taxonomy, and set the threshold. When those decisions scale to billions of interactions, they become, without exaggeration, a form of governance over permissible expression.

The question that rarely surfaces in technical discussions of toxicity and safety evaluation is not whether classifiers work. It is who they work for. Whose definition of toxicity prevails when a system trained primarily on American English encounters African American English, or German political discourse, or the dialects of the Global South? The answer, documented across multiple independent studies, is that the dominant culture’s norms become the default, and everything else registers as deviation.

The Case for Classifying at Scale

The need for automated content moderation is real. No human team can review the volume of content flowing through modern platforms. The alternatives, either no moderation or selective human review, create their own injustices. Automated safety systems emerged because the scale of online harm genuinely outpaced human capacity to address it.

Frameworks like HarmBench, with its catalog of 510 adversarial behaviors and 18 red-teaming methods, represent a serious attempt to standardize evaluation. ToxiGen, with its 274,000 examples of implicit toxicity across 13 minority groups, tries to capture the subtlety that earlier keyword-based systems missed entirely. And tools like Llama Guard aim to provide safety classification that developers can embed directly into their applications: safety as infrastructure rather than afterthought.

These are not trivial achievements. The people building these systems are, by and large, trying to reduce genuine harm. That sincerity is not the problem. The problem is what sincerity obscures.

Toxicity Is Not a Universal Constant

The hidden assumption inside the current generation of toxicity classifiers is that toxicity is a stable, culturally portable property of text. It is not. Toxicity is context-dependent, dialect-dependent, and power-dependent — and the systems we build to detect it consistently misread these dependencies.

Consider Perspective API, historically the most widely deployed toxicity scorer. Tweets in African American English scored approximately 0.55 or higher on its toxicity scale, compared with 0.25 for semantically equivalent content in Standard American English (Resende et al.). Separately, users writing in African American English were more than twice as likely to have their speech classified as hate speech across studies using different classifiers and datasets (Sap et al.). The system could not distinguish between a dialect and a threat, and the cost of that failure fell entirely on Black speakers.
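One way to make this kind of disparity measurable is to compare, per dialect, how often benign text crosses a single global threshold. The sketch below is only an illustration: the function name `flag_rate_by_group`, the group labels, the threshold of 0.5, and every score in `SAMPLE` are invented for the example and are not taken from the cited studies or from any real API.

```python
from collections import defaultdict


def flag_rate_by_group(records, threshold):
    """Return, per group, the share of benign texts scored at or above threshold.

    Each record is (group, toxicity_score, is_actually_toxic). Texts that
    annotators judged toxic are skipped, so the result is a false-positive rate.
    """
    flagged = defaultdict(int)
    total = defaultdict(int)
    for group, score, is_actually_toxic in records:
        if is_actually_toxic:
            continue  # only benign texts count toward false positives
        total[group] += 1
        if score >= threshold:
            flagged[group] += 1
    return {group: flagged[group] / total[group] for group in total}


# Hypothetical scores, invented purely to show the calculation.
SAMPLE = [
    ("AAE", 0.62, False),
    ("AAE", 0.48, False),
    ("AAE", 0.71, False),
    ("AAE", 0.55, False),
    ("SAE", 0.22, False),
    ("SAE", 0.31, False),
    ("SAE", 0.58, False),
    ("SAE", 0.19, False),
    ("SAE", 0.90, True),  # genuinely toxic, excluded from the rate
]

rates = flag_rate_by_group(SAMPLE, threshold=0.5)
print(rates)  # {'AAE': 0.75, 'SAE': 0.25}
```

With these made-up numbers, benign text in one dialect is flagged three times as often as in the other at the same threshold. An audit of a real classifier would need matched, human-labelled samples per dialect for the comparison to mean anything.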

The bias extends beyond English. German-language tweets received median toxicity scores more than three times higher than comparable content in other European languages, with COVID-related German tweets scoring roughly five times higher than Italian equivalents (Nogara et al.). A German user discussing politics was, by the system’s logic, inherently more toxic than an Italian user saying roughly the same thing.

These are not historical curiosities. Perspective API is sunsetting at the end of 2026, but the architectural assumptions it popularized (universal scoring, language-agnostic thresholds, binary toxicity) live on in its successors. Research found that Llama Guard 3’s refusal rates dropped by roughly fifty percentage points once political context was stripped from prompts, suggesting that much of what the system classified as unsafe was political speech, not dangerous content (Yadav et al.). Llama Guard 3 has since been superseded by Llama Guard 4, whose cultural fairness has not yet been independently audited.

Security & compatibility notes:

- Perspective API sunsetting: Service ends December 31, 2026 with no migration support from Google. Applications relying on Perspective API should transition to alternative moderation systems.

- Llama Guard 3 superseded: Replaced by Llama Guard 4 (12B). Llama Guard 3 was found vulnerable to concept-erasure attacks that collapsed its safety classifications entirely.

When the Censor Speaks Only English

The asymmetry deepens when you look at who is excluded entirely. Llama Guard 3 supported eight languages. Eight, in a world with thousands. Non-English speakers face a compounding disadvantage: language models underperform for them, and the moderation systems meant to protect them either do not operate in their language or rely on machine translation that strips context and nuance.

In the Global South, the pattern inverts in a particularly cruel way. AI moderation systems simultaneously over-remove lawful speech and under-remove genuinely harmful content. The system is both too aggressive and too passive, in precisely the wrong directions. A person writing in Yoruba or Tagalog encounters a safety apparatus that was not designed with them in mind, has not been tested in their language, and cannot distinguish their expression from the threat models it was trained to detect.

Meanwhile, the benchmarks that evaluate these systems reflect the same narrowness. An analysis of safety benchmarks found that eighty-one percent test only predefined risk categories, seventy-nine percent rely on binary pass/fail scoring, and sixty-eight percent evaluate only single-turn interactions (Yu et al., ICML 2025). The benchmarks do not ask whether the system is fair across cultures. They ask whether it catches the threats its designers anticipated — which means the evaluation itself encodes the same blind spots as the systems it evaluates.
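A small sketch can show why a single aggregate score hides the failure described above, where a system both over-removes lawful speech and under-removes harmful content for some languages. Everything here is assumed for illustration: the `Result` type, the language codes, and the ten invented outcomes in `SAMPLE` do not come from any benchmark named in this article.

```python
from dataclasses import dataclass


@dataclass
class Result:
    language: str
    harmful: bool  # ground-truth label from human annotators
    blocked: bool  # decision made by the classifier under test


def overall_accuracy(results):
    """Single aggregate number, the kind a pass/fail benchmark reports."""
    return sum(r.harmful == r.blocked for r in results) / len(results)


def error_rates(results):
    """Per-language over-removal (benign blocked) and under-removal (harmful allowed)."""
    stats = {}
    for r in results:
        s = stats.setdefault(
            r.language, {"benign": 0, "over": 0, "harmful": 0, "under": 0}
        )
        if r.harmful:
            s["harmful"] += 1
            s["under"] += int(not r.blocked)
        else:
            s["benign"] += 1
            s["over"] += int(r.blocked)
    return {
        lang: {
            "over_removal": s["over"] / s["benign"] if s["benign"] else None,
            "under_removal": s["under"] / s["harmful"] if s["harmful"] else None,
        }
        for lang, s in stats.items()
    }


# Hypothetical outcomes: the classifier is perfect on English and errs in
# both directions on Yoruba.
SAMPLE = (
    [Result("en", False, False)] * 3
    + [Result("en", True, True)] * 3
    + [
        Result("yo", False, True),   # lawful speech removed
        Result("yo", False, False),
        Result("yo", True, False),   # harmful content left up
        Result("yo", True, True),
    ]
)

print(overall_accuracy(SAMPLE))  # 0.8
print(error_rates(SAMPLE))
```

On this invented data the aggregate accuracy is 0.8, which could clear a pass/fail bar, while the per-language breakdown shows zero errors for English and a 0.5 rate of both over-removal and under-removal for Yoruba. Reporting the breakdown rather than the aggregate is one possible design choice, not a description of how any existing benchmark works.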

Safety as Governance, Not Engineering

Thesis: AI safety classification is a governance function masquerading as an engineering problem, and treating it otherwise concentrates power over permissible expression in the hands of those least accountable for its consequences.

The structural incentive is troubling. AI developers currently control both the design and the disclosure of their own safety evaluations — a configuration that, as one theoretical analysis argues, creates pressure to underreport limitations rather than surface them. When the entity that builds the classifier also defines the benchmark, selects the test data, and publishes the results, the separation between judge and defendant dissolves. The EU AI Act, which becomes fully applicable in August 2026, gestures toward external accountability — but regulation alone cannot solve a problem that is fundamentally about whose values count as default.

Questions Worth Sitting With

This is not a problem that admits a technical fix. Recalibrating thresholds does not address the deeper issue — that the choice of what to classify, in which language, against which cultural standard, is a political act performed under the cover of technical necessity.

What would it mean to treat safety classification as a democratic process rather than an engineering specification? Who should be in the room when a model’s safety taxonomy is designed, and who is conspicuously absent now? If a hallucination is a system generating confident falsehoods, what do we call a safety classifier generating confident misclassifications of entire dialects?

Where This Argument Is Weakest

The strongest objection to this critique is practical: without automated classification, harmful content scales unchecked. The communities most harmed by bias in safety systems are also the communities most harmed by unmoderated hate speech. Any framework that weakens moderation in the name of fairness risks trading one injustice for another.

There is also the possibility that newer systems will genuinely improve. Anthropic’s Constitutional Classifiers demonstrated a statistically insignificant increase in over-refusal on harmless queries while reducing jailbreak success dramatically (Anthropic Research). If the trade-off between safety and fairness is not zero-sum — if both can improve simultaneously — then the governance argument becomes less urgent. That remains to be proven across languages and cultures, but the possibility deserves honest acknowledgment.

The Question That Remains

The tools we build to make AI safe encode assumptions about whose safety matters, whose speech is suspect, and whose culture sets the standard. Until we treat those assumptions as political decisions rather than engineering parameters, we will keep building systems that protect some people by silencing others. The question is not whether AI safety classifiers work. The question is: work for whom?

Disclaimer

This article is for educational purposes only and does not constitute professional advice. Consult qualified professionals for decisions in your specific situation.

AI-assisted content, human-reviewed. Images AI-generated.