Who Decides What Gets Measured: The Accountability Gap in Standardized LLM Evaluation

The Hard Truth

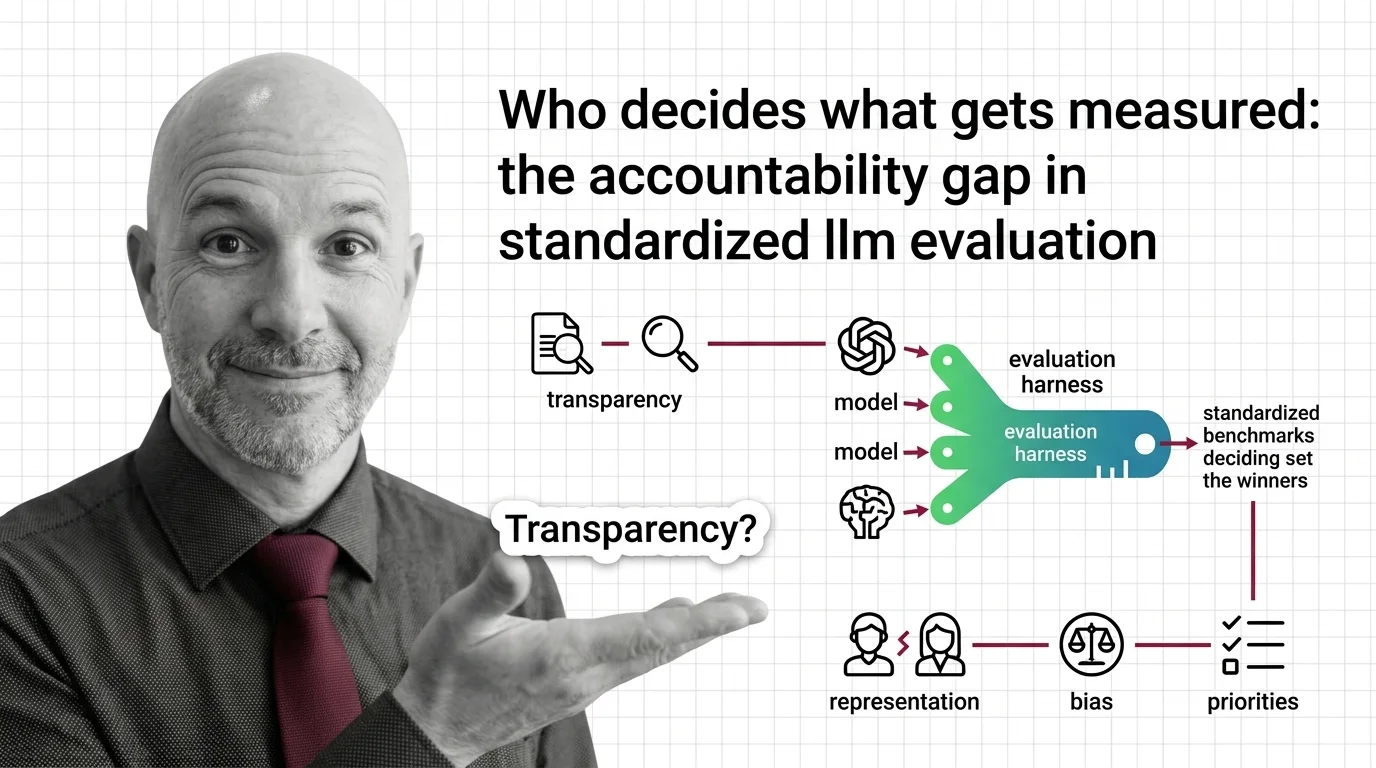

Every major AI model is ranked by the same handful of benchmarks. The teams who design those benchmarks never ran for office, never published a mandate, and answer to no regulatory body. What happens when the most consequential gatekeepers in AI are the ones nobody elected?

There is a particular kind of authority that becomes invisible because it looks technical. A score on a leaderboard. A ranking that separates the funded from the forgotten. We have built an entire infrastructure of AI model evaluation, and almost nobody is asking who designed the instruments, what they chose to measure, and what they decided to leave out.

The Comfort of a Number

An evaluation harness promises something deeply appealing: objectivity. Feed a model through a standardized battery of tests, and the numbers tell you what is good. HELM evaluates models along seven dimensions (accuracy, calibration, robustness, fairness, bias, toxicity, and efficiency) across sixteen scenarios (Stanford CRFM). OpenCompass covers more than seventy datasets. The Open LLM Leaderboard publishes rankings that shape funding decisions, hiring conversations, and product roadmaps across the industry.

The appeal is understandable. Without standardized measurement, every claim about model quality is anecdotal. A team says their model “performs well.” Compared to what? Measured how? By whom? Evaluation harnesses replaced that ambiguity with numbers, and in doing so, they gave the field something it desperately needed — a shared language for comparison.

But a shared language is not a neutral language. Every measurement encodes a priority. And priority, when it operates at the scale of an entire industry, is a form of governance.

The Rational Case for Standardization

The conventional defense of standardized benchmarks deserves to be stated at its strongest. Without common evaluation frameworks, we face a worse alternative: proprietary benchmarks designed by the same companies building the models. Independent harnesses like Inspect AI, open-sourced by the UK AI Safety Institute, and community-maintained frameworks like DeepEval at least create a space where evaluation is not entirely self-serving.

A ten-country consensus on AI evaluation practices, published in February 2026 (NIST), suggests that governments recognize the need for shared measurement too. The alternative to standardized evaluation is not freedom from measurement. It is measurement controlled entirely by those with the most to gain from favorable results.

This argument is reasonable. It is also incomplete — because it treats the existence of shared standards as sufficient, without asking who writes them and what they leave out.

The Metric Is the Message

Here is the assumption buried inside every leaderboard: that the dimensions being measured are the dimensions that matter. A confusion matrix tells you about classification accuracy: true positives, false negatives, the clean arithmetic of right and wrong. Precision, recall, and F1 score provide a more granular lens. But none of these instruments ask whether the classification task itself is the right question. None of them measure whether a model’s outputs serve the people affected by its decisions, or whether its training data was obtained with consent.
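To make that "clean arithmetic" concrete, here is a minimal Python sketch of how precision, recall, and F1 fall out of a binary confusion matrix. The class name, function name, and toy labels are illustrative assumptions, not taken from any particular harness.

```python
from dataclasses import dataclass
from typing import Sequence


@dataclass(frozen=True)
class ConfusionMatrix:
    """Counts for a binary classifier (hypothetical helper for illustration)."""
    tp: int  # predicted positive, actually positive
    fp: int  # predicted positive, actually negative
    fn: int  # predicted negative, actually positive
    tn: int  # predicted negative, actually negative

    def precision(self) -> float:
        denom = self.tp + self.fp
        return self.tp / denom if denom else 0.0

    def recall(self) -> float:
        denom = self.tp + self.fn
        return self.tp / denom if denom else 0.0

    def f1(self) -> float:
        # Harmonic mean of precision and recall.
        p, r = self.precision(), self.recall()
        return 2 * p * r / (p + r) if (p + r) else 0.0


def confusion_from_labels(y_true: Sequence[int], y_pred: Sequence[int]) -> ConfusionMatrix:
    """Tally the four cells from parallel lists of 0/1 labels."""
    if len(y_true) != len(y_pred):
        raise ValueError("y_true and y_pred must have the same length")
    tp = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 1)
    fp = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 1)
    fn = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 0)
    tn = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 0)
    return ConfusionMatrix(tp=tp, fp=fp, fn=fn, tn=tn)


# Toy data, invented for this example only.
cm = confusion_from_labels(
    y_true=[1, 1, 1, 0, 0, 0, 0, 0],
    y_pred=[1, 1, 0, 1, 0, 0, 0, 0],
)
print(cm, round(cm.precision(), 3), round(cm.recall(), 3), round(cm.f1(), 3))
```

Everything the code needs is the label lists. Nothing in it can see who was affected by a wrong prediction or where the data came from, which is the limitation the paragraph above describes.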

The dimensions that standardized harnesses choose to evaluate become, by default, the dimensions the industry optimizes for. What gets measured gets funded. What gets funded gets built. And what gets built reshapes the world — not because anyone deliberately chose that set of values, but because a technical committee selected a set of benchmarks and the market treated them as truth.

Benchmark contamination makes this worse. A 2024 survey found GPT-3.5 and GPT-4 had been exposed to approximately 4.7 million samples from 263 benchmarks, a figure likely larger now (Xu et al.). When models train on the tests designed to evaluate them, we stop measuring capability and start measuring recall of memorized test items. That LLMs exceed ninety percent on MMLU while dropping by up to thirteen percentage points on novel tests (Nature) suggests the instruments are being gamed. A gamed benchmark is not a broken tool. It is a tool that still looks functional while it quietly stops telling the truth.
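One common heuristic for spotting contamination is to check how many of a benchmark item's word n-grams also appear verbatim in a training corpus. The sketch below illustrates that general idea only. It is not the method used in the survey cited above, and the function names, whitespace tokenization, and default n-gram size are all assumptions made for the example.

```python
from typing import Iterable, Set, Tuple


def ngrams(text: str, n: int) -> Set[Tuple[str, ...]]:
    """Lowercased, whitespace-tokenized word n-grams (a deliberately crude tokenizer)."""
    tokens = text.lower().split()
    return {tuple(tokens[i:i + n]) for i in range(len(tokens) - n + 1)}


def overlap_ratio(item: str, corpus: Iterable[str], n: int = 8) -> float:
    """Fraction of the item's n-grams that occur verbatim somewhere in the corpus.

    Returns 0.0 when the item is shorter than n tokens.
    """
    item_grams = ngrams(item, n)
    if not item_grams:
        return 0.0
    corpus_grams: Set[Tuple[str, ...]] = set()
    for doc in corpus:
        corpus_grams |= ngrams(doc, n)
    return len(item_grams & corpus_grams) / len(item_grams)


# Invented strings, for illustration only.
question = "the quick brown fox jumps over the lazy dog"
leaked = ["note that the quick brown fox jumps over the lazy dog today"]
clean = ["an entirely unrelated sentence about benchmark governance and oversight"]

print(overlap_ratio(question, leaked, n=3))
print(overlap_ratio(question, clean, n=3))
```

A high ratio flags an item for review and nothing more. Paraphrased or translated leaks would slip past an exact-match check like this one, which is part of why contamination is hard to audit from the outside.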

When the Ruler Defines the Room

In the early twentieth century, standardized intelligence testing promised the same thing AI benchmarks promise now: an objective, scientific method to compare cognitive capacity. The IQ test was presented as neutral — a measurement of something real, independent of the instrument. It took decades to surface the obvious: the test encoded its designers’ assumptions, measuring one kind of intelligence valued by one culture and presenting that narrow slice as universal truth.

The concentration of power in AI evaluation follows the same arc. Industry's share of major AI model development rose from eleven percent in 2010 to ninety-six percent by 2021 (Eriksson et al.), and the trend has only intensified. When a small number of institutions produce both the models and the evaluation resources, the ecosystem is not independent in the way it appears. The benchmarks look academic. The leaderboards look open. But measurement infrastructure is entangled with production infrastructure in ways rarely disclosed and almost never audited.

Benchmarks Are Governance in Technical Clothing

Thesis: Standardized AI evaluation is an unaccountable form of governance — not because the people behind it are malicious, but because the structure lacks the transparency, representation, and oversight that governance demands.

Consider what these instruments do. They determine which models receive attention, investment, and adoption. They shape research agendas by making some capabilities visible and others invisible — channeling thousands of engineering hours toward benchmark performance rather than safety, equity, or the needs of communities that benchmarks do not represent. Who decides which benchmarks matter, and how do evaluation harnesses shape AI development priorities? The answer: a handful of teams, operating without formal mandate, whose choices propagate through the ecosystem as if they were natural law.

This is not a measurement problem. It is a power problem. And power exercised without accountability — without representation from those affected, without mechanisms for challenge — is power that will serve narrow interests, not because anyone intends it, but because that is what unchecked authority does.

Questions We Owe the Instruments

The ethical concerns with standardized harnesses deciding which models succeed are not abstract. They are structural. Who sits on the committee that selects which tasks a harness evaluates? Whose definition of “fairness” does the fairness metric encode? When a benchmark becomes saturated — when models consistently score above ninety percent and the instrument loses its ability to discriminate — who decides what replaces it, and what assumptions does the replacement carry?

These are not questions with comfortable answers. But the discomfort is where the work needs to happen. The people most affected by AI systems — patients in diagnostic pipelines, applicants filtered by hiring algorithms, communities subjected to predictive policing — have no representation in the design of evaluation instruments. NIST’s AI Agent Standards Initiative, launched in February 2026 (NIST), represents one attempt to formalize evaluation governance. Whether it will include meaningful input from affected communities, or remain a conversation among technologists, is still open — and the answer will say more about AI accountability than any benchmark score.

Where This Argument Is Weakest

The most honest challenge to this position is practical: without standardized benchmarks, what do we use? Abandoning evaluation harnesses does not create better evaluation — it creates no shared evaluation at all. Critique without alternative risks paralyzing a field in a moment when imperfect measurement is better than none.

If contamination-resistant evaluation or participatory benchmark design demonstrates that inclusive governance and rigorous measurement can coexist, this argument loses urgency. Competition among frameworks like HELM, OpenCompass, and Inspect AI might naturally diversify the dimensions being measured. That correction has not materialized yet. But honesty demands acknowledging it could.

The Question That Remains

We built the instruments that decide which AI succeeds and which disappears. We let them operate without the oversight we would demand from any other institution wielding that kind of influence. The question is not whether benchmarks are useful — they are. The question is whether we are willing to govern the governors, or whether we will keep pretending that a number on a leaderboard is just a number.


AI-assisted content, human-reviewed. Images AI-generated.