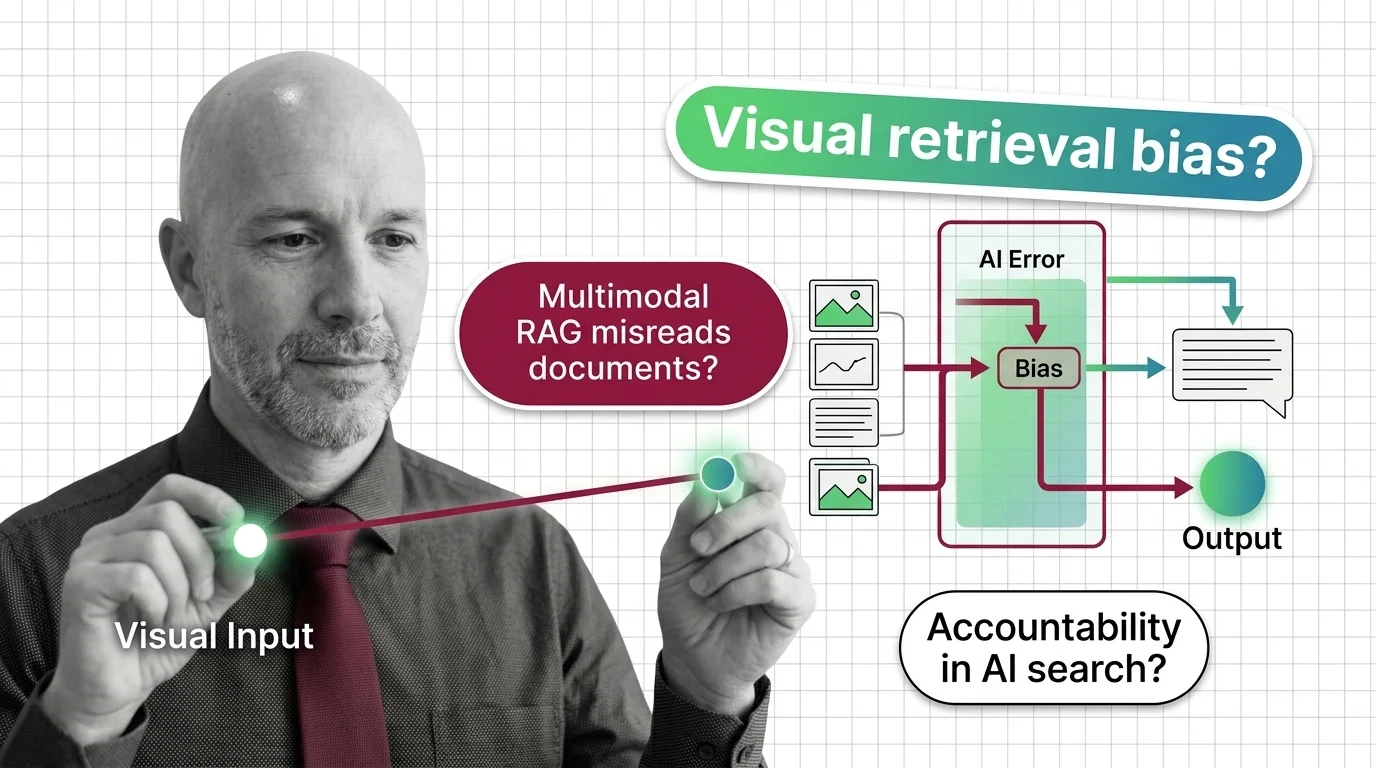

When Multimodal RAG Misreads the Document: Accountability and Bias in Visual Retrieval

The Hard Truth

A claims adjuster opens the system, types a question, and reads an answer drawn from twelve scanned pages the model selected on her behalf. She never sees the pages it discarded. If the answer is wrong, whose error is it, and at what point in that pipeline did a human last have the chance to disagree?

There is a quiet shift happening in how enterprises retrieve their own documents. The retrieval layer used to read text. Now it reads pixels — pages, charts, scanned letters, signatures, layouts — through a vision-language model that decides what is relevant before any person sees it. Multimodal RAG systems are being adopted in healthcare, claims handling, and legal review with a speed that outpaces our ability to articulate what we are delegating, and to whom.

What We Outsource When the Model Sees the Page

The traditional argument for retrieval-augmented systems was modest: ground the model in your own corpus so it stops inventing answers. The visual variant promises something more ambitious. By embedding the page as an image, it claims to understand layout, tables, and stamps the way a human reviewer does. That promise is alluring, especially in industries where the document is the truth — the loan file, the discharge summary, the policy schedule.

But what exactly are we delegating when we hand a page to a vision-language model and trust its sense of what matters? We are outsourcing the first act of reading. We are letting a system decide which paragraph in a contract is “the relevant one,” which figure in a chart is “the supporting evidence,” which signature is “the authoritative one.” That decision used to be a human act, embedded in a workflow with names attached to it. Now it happens in a tensor, before anyone has had the chance to disagree.

The Case for Letting the Model Read the Document

The conventional defense is reasonable, and it should be presented at full strength. Document parsing has always been the weak link in enterprise retrieval. Scanned PDFs lose their tables to OCR. Multi-column layouts confuse text extractors. Figures and diagrams are stripped before they reach the search index. Tools like ColPali and the ViDoRe benchmark line emerged precisely because the older pipeline — extract text, embed text, retrieve text — discarded the parts of a document where meaning often lives.

Letting the vision-language model read the page directly, without the lossy document parsing and extraction step, recovers information that brittle pipelines used to throw away. For low-stakes search, this is a clear improvement. For an internal knowledge base where someone always re-reads the source before acting, the gain in recall is real, the loss in interpretability is tolerable, and the human reviewer still has the last word.

The problem is that the architecture is being adopted in places where the human reviewer no longer has the last word — where the answer is the action, and the page behind it is rarely opened.

Whose Visual Vocabulary Is the Default?

Here is the assumption inside the conventional wisdom: that the model’s sense of “relevant page” is a neutral act of perception. It is not. A vision-language model trained mostly on English documents and Western page conventions has internalized a particular visual vocabulary. ColPali’s first benchmark covered English and French only; the v2 expansion added Spanish and German, and even that broader version showed a significant performance gap against English-only evaluation (ViDoRe v2 on Hugging Face). For minority scripts the gap is not a gap but a cliff — a study on Manchu OCR found that frontier vision-language models, including Kimi-VL, Pixtral, Gemini 2.5 Pro and GPT-4o, “perform poorly in zero-shot setting” on low-resource scripts (Manchu OCR study).

This is not a benchmark complaint. It is a question about whose documents the system is built to read. A multilingual insurer in Central Europe processes claims in Hungarian, Czech, and Romanian. A community health network reads handwritten intake forms in languages the training data has barely seen. When the retriever fails, it does not fail uniformly; it fails along the grain of who was underrepresented when the model was trained. The system inherits bias and discrimination from training data and from skewed retrieval corpora, and amplifies those biases when the retrieved evidence is unbalanced. The pipeline is not neutral. It is a particular reader, with particular blind spots, scaled to the speed of an API.

There is a second layer of distortion underneath this one. Recent hallucination work in vision-language models catalogues three problematic behaviors at once: disproportionate attention to uninformative trailing visual tokens, over-dependence on previously generated tokens, and excessive fixation on system prompts (AAAI 2026 Dual-Level Attention). Translated out of the lab: the model can be looking at the right page and still answer from the wrong part of it.

When the Document Becomes a Witness

There is a useful parallel here, and it is older than computing. For most of legal and bureaucratic history, a document was treated as a witness. Witnesses can be cross-examined. They can be challenged on what they saw, what they remembered, what they chose to emphasize. A claims file, a medical record, a court exhibit — these were artifacts whose authority depended on someone being able to interrogate the chain of custody.

Multimodal RAG breaks the chain of custody for the act of reading. The page is admitted as evidence by a system whose interpretive choices cannot be cross-examined in any meaningful sense. We can audit the output, but the model’s selection of which page mattered, and which region of which page it weighted, is largely opaque. The knowledge-graphs-for-RAG community has been pushing back on this opacity by attaching structured relationships to retrieved evidence, and citation-enforced strategies are now exploring span-level attribution to preserve fine-grained document context for legal and regulatory verifiability. These are real improvements. They do not yet solve the deeper problem.

The deeper problem is that the visual retriever is becoming a silent fact-finder in workflows that were never designed for one.

The Visual Layer Is a Governance Layer

Thesis: Multimodal RAG is not a retrieval optimization. It is a delegation of interpretive authority that requires governance proportional to the consequences of the answer.

That sentence sounds modest, and it is not meant to be. The European framework already implies it without naming it. The EU AI Act’s high-risk regime begins enforcement on August 2, 2026 for Annex III systems (employment, credit, education, public administration). The Act’s penalty ceiling is €35M or 7% of global turnover (Legal Nodes), a tier reserved for prohibited practices; breaches of high-risk obligations are capped at €15M or 3%. Article 50’s transparency obligations cover AI systems that generate or manipulate audio, image, video, or text content, which is the territory a multimodal pipeline operates in. A high-risk multimodal system that processes personal data can trigger both a Fundamental Rights Impact Assessment under Article 27 and a Data Protection Impact Assessment under GDPR Article 35 (IAPP). The NIST AI Risk Management Framework’s Generative AI Profile extends the framework explicitly to multimodal systems and their data integrity and provenance risks.

These regimes are not multimodal-RAG-specific. None of them tells you how to audit the page-selection step in a vision-language retriever. But they make a quiet point: the moment the system shapes a high-stakes answer, the burden of explaining it falls back on the institution that deployed it. Metadata filtering, span-level citation, and provenance logging are not just engineering choices. They are the architecture by which an institution preserves its ability to answer the question “why this page, and not the other one?”
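To make "why this page, and not the other one?" answerable after the fact, the retriever's choice has to be written down at the moment it is made, including the pages it passed over. What follows is only a minimal sketch of such a provenance record, not a description of any particular product. The names PageCandidate, build_provenance_record, and to_log_line are hypothetical, and hashing the query instead of storing it is an assumption made here because queries may contain personal data.

```python
import hashlib
import json
from dataclasses import dataclass, asdict
from datetime import datetime, timezone


@dataclass
class PageCandidate:
    """One scored page from the retriever (hypothetical structure)."""
    doc_id: str
    page: int
    score: float
    language: str


def build_provenance_record(query, candidates, top_k, retriever_version):
    """Record which pages were selected AND which were passed over."""
    ranked = sorted(candidates, key=lambda c: c.score, reverse=True)
    return {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        # Assumption: store a hash, not the raw query text.
        "query_sha256": hashlib.sha256(query.encode("utf-8")).hexdigest(),
        "retriever_version": retriever_version,
        "selected": [asdict(c) for c in ranked[:top_k]],
        "rejected": [asdict(c) for c in ranked[top_k:]],
    }


def to_log_line(record):
    """Serialize the record as one JSON line for an append-only log."""
    return json.dumps(record, sort_keys=True)
```

The design choice that matters is the "rejected" list. An audit that only sees the selected pages can confirm what the model read, but not what it declined to read.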

The visual layer is a governance layer. It is being treated as a performance layer, and that mistake is becoming structural.

Sitting With the Inheritance

What does this mean in practice for the people who must decide whether to adopt these systems? It is not a call to abandon them. It is a call to refuse the framing that visual retrieval is a neutral upgrade. A few questions worth carrying into the next architecture review: which populations are most likely to be underrepresented in the corpus the retriever has learned to weight? Which downstream decisions will be taken without a human re-reading the source? What evidence would persuade you that the retriever is misreading along a specific demographic axis — and have you instrumented anything that could surface that evidence?
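The last of those questions, whether anything has been instrumented, can be made concrete. The sketch below assumes a small labeled evaluation set in which each row carries a group label (a language, a script, a form type), the page a human judged relevant, and the pages the retriever returned. The field names and both function names are hypothetical, and recall at k is only one of several metrics that could surface a skew.

```python
from collections import defaultdict


def recall_by_group(eval_rows, k):
    """Fraction of queries per group whose relevant page is in the top k."""
    hits = defaultdict(int)
    totals = defaultdict(int)
    for row in eval_rows:
        group = row["group"]
        totals[group] += 1
        if row["relevant_page"] in row["retrieved_pages"][:k]:
            hits[group] += 1
    return {group: hits[group] / totals[group] for group in totals}


def largest_gap(recalls):
    """Difference between the best-served and worst-served group."""
    return max(recalls.values()) - min(recalls.values())
```

A gap that persists across evaluation runs does not prove discrimination on its own, but it is the kind of evidence an institution would need in hand before claiming the retriever fails uniformly.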

Healthcare is a useful stress test. A scoping review on RAG in clinical contexts notes that recommendations become less appropriate when underlying databases underrepresent minority populations, and that automation bias compounds the harm because clinicians tend to trust the system’s framing of which evidence is salient (medRxiv scoping review). The same dynamic exists in any field where the answer is consumed faster than the source is read.

Compatibility note: ColPali (colpali-engine) recently removed support for context-augmented queries and images and deprecated process_query in favor of process_text; it now requires Python ≥3.10,<3.15 and Transformers v5. Teams running visual retrieval pipelines should pin versions deliberately and treat the upgrade as a breaking change rather than a routine bump (illuin-tech ColPali GitHub).
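One way to treat the upgrade as a breaking change is to fail loudly at startup when the environment drifts outside the stated constraints. The sketch below encodes only the two constraints named in the note above (Python ≥3.10,<3.15 and a Transformers major version of 5). The function names are hypothetical, the check uses only the standard library, and it deliberately does not guess at specific colpali-engine version numbers.

```python
import sys
from importlib import metadata


def check_python(version_info=sys.version_info):
    """True if the interpreter is within >=3.10,<3.15."""
    return (3, 10) <= tuple(version_info[:2]) < (3, 15)


def major_version(version_string):
    """Leading integer of a version string such as '5.0.0'."""
    return int(version_string.split(".")[0])


def environment_report():
    """Collect installed versions; None means the package is absent."""
    report = {"python_ok": check_python()}
    for package in ("colpali-engine", "transformers"):
        try:
            report[package] = metadata.version(package)
        except metadata.PackageNotFoundError:
            report[package] = None
    installed = report["transformers"]
    report["transformers_ok"] = (
        installed is not None and major_version(installed) >= 5
    )
    return report
```

Logging the output of environment_report() alongside each retrieval run also gives later auditors a record of which library versions produced a given answer.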

Where the Argument Could Fail

The position taken here would weaken if two things turned out to be true. The first: that span-level citation, provenance logs, and metadata filtering layers become so reliable that the page-selection step is fully auditable, and the institution can always answer why one page was retrieved over another. The second: that the bias gap between English-trained vision-language models and minority-language documents closes quickly enough that the harm distribution flattens. Either would shift the argument. Neither has happened yet.

The Question That Remains

Multimodal RAG is being adopted in places where the answer is the action, and the source page is rarely re-read. The technical question is solvable; the institutional one is not yet asked at the right altitude. When the retriever decides what counts as evidence — for whom, in which language, against which layout — who in your organization can stand behind that choice when it goes wrong?

Disclaimer

This article is for educational purposes only and does not constitute professional advice. Consult qualified professionals for decisions in your specific situation.

AI-assisted content, human-reviewed. Images AI-generated.