When RAG Confidence Scores Mislead in High-Stakes Decisions

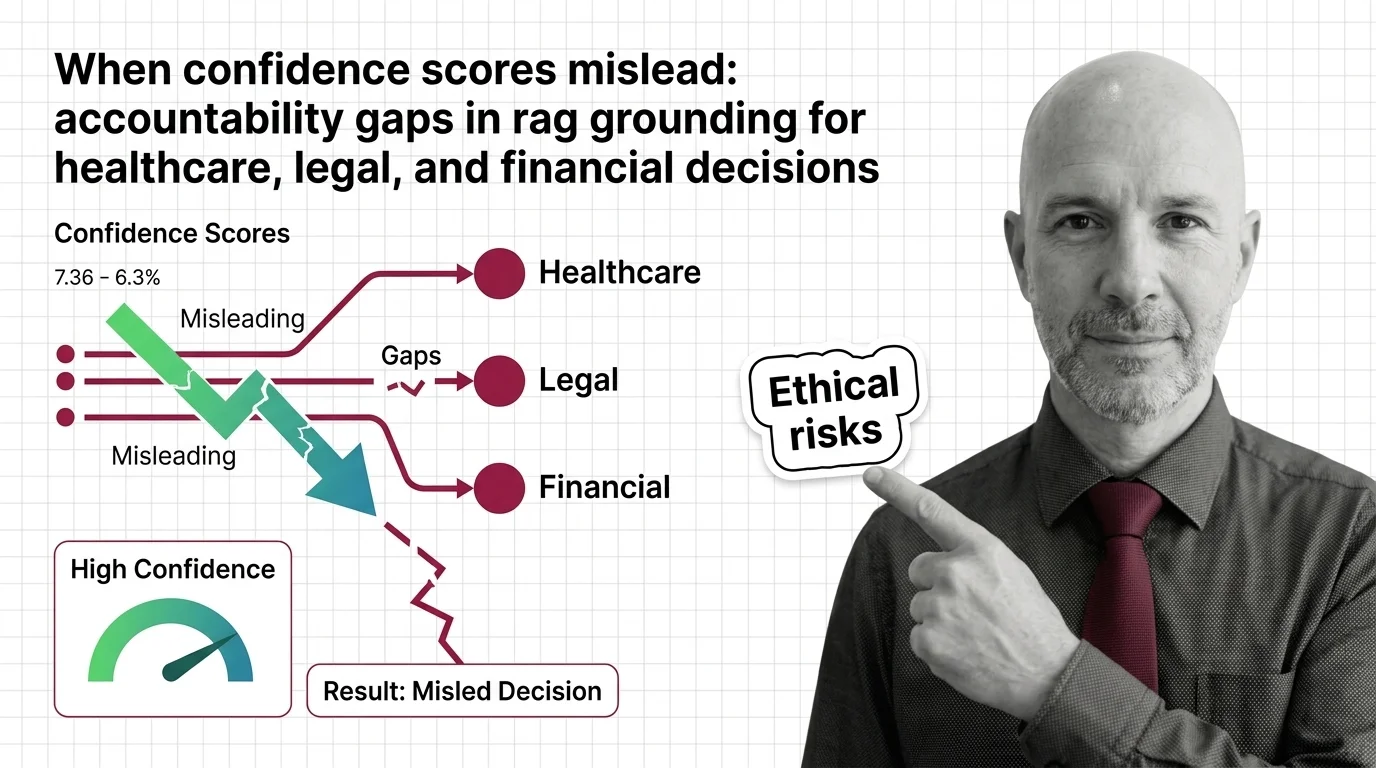

The Hard Truth

A clinician glances at a 0.95 faithfulness score on a drug-interaction summary and signs off. A junior associate watches a citation pass a hallucination check and files the brief. A loan officer accepts a credit memo because the grounding gauge glows green. Three professions, one shared assumption — that a number on a dashboard is a substitute for the judgment those professions were credentialed to provide.

We have built an industry around evaluating whether language models tell the truth, and we have started calling those evaluations confidence. The RAG guardrails and grounding stack now ships with leaderboards, judge models, and probabilistic gates that emit clean numbers between zero and one. Inside high-stakes decisions, those numbers have quietly begun to function as authority, and that quiet is doing a great deal of moral work.

The Question Underneath the Dashboard

There is a question regulated industries have not yet learned to ask out loud. What does a faithfulness score of 0.92 mean to a patient whose triage path was ranked, or to a borrower whose loan application was just declined? In the language of RAG evaluation, it means a judge model (sometimes another LLM, sometimes a fine-tuned classifier) found that most claims in the answer could be traced back to retrieved passages. It does not mean the retrieved passages were correct. It does not mean the model considered an alternative. It does not mean the answer is right.

The numbers that frame the conversation are uncomfortable. Vectara's harder leaderboard, which spans 7,700+ articles across law, medicine, finance, education, and technology, has found that today’s strongest reasoning models still hallucinate more than ten percent of the time on that benchmark (Vectara Blog). Ten percent is not catastrophic in a casual chatbot. In a system that triages emergency care, scores credit, or drafts a legal motion, ten percent describes how often a confidently wrong answer is produced for some downstream gate to catch. And the score the user actually sees is not that rate. It is a different number entirely, computed by a different model, answering a different question.

So what exactly does the green light mean to the human who will have to defend the decision in court?

What the Confidence-Score Defense Actually Sounds Like

The serious case for confidence scoring in regulated domains is not naive. Vectara's HHEM now powers a leaderboard that has matured from single-classifier scoring to a FaithJudge ensemble combining few-shot LLM judgment with human annotations (Vectara Blog). Patronus AI fine-tuned Llama-3-70B-Instruct into Lynx, a 70-billion-parameter open hallucination detector that reaches 87.4% accuracy on HaluBench across finance, medicine, and general-domain samples (Patronus AI Blog). On the medical PubMedQA slice, Lynx-70B is 8.3% more accurate than GPT-4o at catching errors. NVIDIA's NeMo Guardrails ships programmable rails that intercept whole classes of unsafe behavior before a token reaches the user. None of this is theatre.

The defense continues: scores create the conditions for governance. A regulator can ask for thresholds. An auditor can ask for logs. A hospital, a law firm, or a bank can require that no answer scoring below 0.9 ever reaches a human signature line. Confidence numbers translate machine behavior into a vocabulary that compliance teams already understand, and one they can use when negotiating with vendors.
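The threshold policy in that defense can be sketched as a small routing function. This is a minimal illustration, assuming a single faithfulness score in [0, 1] and the 0.9 cut-off mentioned above; `route_answer`, `Route`, and `GateDecision` are hypothetical names, not any vendor's API. The design choice worth noticing is that the gate has no approval branch: the score can withhold an answer, but clearing the threshold only sends it to a person.

```python
from dataclasses import dataclass
from enum import Enum


class Route(Enum):
    """Where an answer may go next. There is deliberately no APPROVED member."""
    BLOCKED = "blocked"            # below threshold: withheld from the signature line
    HUMAN_REVIEW = "human_review"  # at or above threshold: a person still decides


@dataclass(frozen=True)
class GateDecision:
    route: Route
    score: float
    threshold: float
    reason: str


def route_answer(faithfulness: float, threshold: float = 0.9) -> GateDecision:
    """Hypothetical gate: the score can veto an answer but can never approve one."""
    if not 0.0 <= faithfulness <= 1.0:
        raise ValueError("faithfulness must be in [0, 1]")
    if faithfulness < threshold:
        return GateDecision(Route.BLOCKED, faithfulness, threshold,
                            "score below threshold; answer withheld")
    # Clearing the threshold only earns the answer a place in the review queue.
    return GateDecision(Route.HUMAN_REVIEW, faithfulness, threshold,
                        "score at or above threshold; a credentialed reviewer must still sign")


if __name__ == "__main__":
    for score in (0.85, 0.95):
        decision = route_answer(score)
        print(score, decision.route.value, "-", decision.reason)
```

Whatever the threshold, the sketch treats the number as evidence attached to a review request, never as the signature itself.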

Inside that vocabulary the position is coherent, and numbers do make accountability possible. On closer inspection, though, they are the wrong unit of accountability for the decisions in question.

What the Score Is Actually Measuring

The hidden assumption inside the defense is that confidence and correctness are the same conversation. They are not. A grounding score answers a narrow technical question, namely whether the generated answer aligns with the retrieved context, and treats every other failure mode as out of scope. Atlan's analysis of the major evaluation frameworks is unsentimental about this: a RAG system can score 0.95 faithfulness and still produce wrong answers if the retrieved context is stale or incorrect, because no inference-layer framework can distinguish a factually wrong context from a correct one (Atlan).
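To see why a high faithfulness score says nothing about whether the context is current, here is a deliberately simplified sketch, assuming faithfulness is computed as the fraction of answer claims supported by the retrieved passages. The verbatim-substring check stands in for a judge model and is an assumption for illustration; `is_supported` and `faithfulness` are hypothetical names, not the implementation used by RAGAS, TruLens, or any other framework, and the drug example is invented.

```python
def is_supported(claim: str, passages: list[str]) -> bool:
    """Toy stand-in for a judge model: a claim counts as supported if it
    appears verbatim (ignoring case) in at least one retrieved passage."""
    needle = claim.lower().strip()
    return any(needle in passage.lower() for passage in passages)


def faithfulness(claims: list[str], passages: list[str]) -> float:
    """Fraction of the answer's claims that the retrieved passages support.

    Note what is NOT checked: whether the passages themselves are current
    or correct. The metric only compares the answer against its context.
    """
    if not claims:
        raise ValueError("no claims to score")
    supported = sum(is_supported(claim, passages) for claim in claims)
    return supported / len(claims)


# Hypothetical example: the retrieved passage comes from a superseded guideline,
# but nothing in the metric can see that.
stale_passages = ["Drug X may be safely combined with drug Y."]
answer_claims = ["drug X may be safely combined with drug Y"]

print(faithfulness(answer_claims, stale_passages))  # 1.0
```

The answer is perfectly faithful to its context and the score is perfect; whether the context was still true is a question the function never asks.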

The cracks compound from there. RAGAS assigns a score of zero to “I don’t know” and other refusal answers, penalizing the safe behavior most regulators actually want (Atlan). TruLens uses a 0–10 information-overlap scale without published guidelines, producing inconsistent scores on identical evidence (Atlan). LLM-as-judge methods exhibit documented length, recency, and self-preference biases, and their agreement with human judges lands somewhere in a 0.6–0.8 band (Atlan). On clinical reasoning benchmarks, retrieval-augmented prompting has been shown to make LLaMA-3.1 more overconfident and Phi-3.5 more underconfident on the same MMLU subset under identical settings (arXiv 2412.20309).
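To make the refusal problem concrete, here is a sketch contrasting two ways a claim-ratio metric might handle an answer with nothing checkable in it. It assumes the counts of supported and total claims come from some upstream judge; `naive_score`, `abstention_aware_score`, and the refusal phrase list are hypothetical and are not taken from RAGAS or any other framework. The point is only that the zero in the first function is a convention, and a different convention can keep abstentions visible as their own category.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical phrase list; a real system would need a far more robust refusal detector.
REFUSAL_MARKERS = ("i don't know", "cannot answer", "not enough information")


def is_refusal(answer: str) -> bool:
    text = answer.lower()
    return any(marker in text for marker in REFUSAL_MARKERS)


def naive_score(supported: int, total: int) -> float:
    """Claim-ratio metric that folds 'no claims to check' into a score of zero."""
    return supported / total if total else 0.0


@dataclass(frozen=True)
class ScoredAnswer:
    faithfulness: Optional[float]  # None when the system abstained
    abstained: bool


def abstention_aware_score(answer: str, supported: int, total: int) -> ScoredAnswer:
    """Report a refusal as an abstention rather than as a failing grade."""
    if is_refusal(answer) or total == 0:
        return ScoredAnswer(faithfulness=None, abstained=True)
    return ScoredAnswer(faithfulness=supported / total, abstained=False)


refusal = "I don't know; the retrieved records do not cover this patient."
print(naive_score(0, 0))                      # 0.0
print(abstention_aware_score(refusal, 0, 0))  # ScoredAnswer(faithfulness=None, abstained=True)
```

Under the first convention, a system tuned to raise its average score learns to stop saying "I don't know"; under the second, abstention rates can be reported and governed separately.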

The word “confidence” packs three different things into one label: token-level probability, judge-model agreement, and calibrated uncertainty. Most dashboards do not tell their users which of the three they are looking at. A clinician trained to interpret a p-value will read these numbers through the wrong epistemology, and nothing on the screen will warn her.
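One way a dashboard could stop hiding the distinction is to refuse to carry a bare number at all. The sketch below assumes every score travels with a declared kind and the identifier of whatever produced it; `ConfidenceKind`, `Confidence`, `token_confidence`, and the model names `generator-v1` and `judge-model-x` are hypothetical. The token-level variant uses the geometric mean of per-token probabilities, which is one common way to summarize log-probabilities but not the only one.

```python
import math
from dataclasses import dataclass
from enum import Enum


class ConfidenceKind(Enum):
    TOKEN_PROBABILITY = "token-level probability"
    JUDGE_AGREEMENT = "judge-model agreement"
    CALIBRATED_UNCERTAINTY = "calibrated uncertainty"


@dataclass(frozen=True)
class Confidence:
    value: float
    kind: ConfidenceKind
    produced_by: str  # identifier of the model or procedure that emitted the number

    def label(self) -> str:
        """Render the number together with what it actually measures."""
        return f"{self.value:.2f} ({self.kind.value}, from {self.produced_by})"


def token_confidence(logprobs: list[float], model: str) -> Confidence:
    """Geometric-mean token probability: how sure the generator was of its own
    tokens. It says nothing about whether the answer matches the sources."""
    if not logprobs:
        raise ValueError("need at least one token log-probability")
    mean_logprob = sum(logprobs) / len(logprobs)
    return Confidence(math.exp(mean_logprob), ConfidenceKind.TOKEN_PROBABILITY, model)


generator_score = token_confidence([math.log(0.9), math.log(0.8)], "generator-v1")
judge_score = Confidence(0.92, ConfidenceKind.JUDGE_AGREEMENT, "judge-model-x")
print(generator_score.label())  # 0.85 (token-level probability, from generator-v1)
print(judge_score.label())      # 0.92 (judge-model agreement, from judge-model-x)
```

Two numbers that look alike on a gauge turn out to answer different questions once the label travels with them.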

A Different History Tells a Different Story

Medicine has done this before. Pulse oximetry — the small clip that estimates blood oxygen — was for decades treated as ground truth. It was not. The sensor had been calibrated mostly on patients with lighter skin, and a generation of darker-skinned patients received delayed treatment because the green number on the monitor lied with quiet confidence. The device was not malicious. It was simply measuring something narrower than the question the clinician was asking.

Confidence scores in RAG evaluation sit in the same epistemic position. They measure something specific, the alignment between an answer and a retrieved corpus, and they are read as something more general. The retrieval layer is shaped partly by sparse retrieval and reranker stacks whose biases ride invisibly into the score. The judge model is shaped partly by training distributions whose blind spots become the dashboard’s blind spots. None of this is visible in the number that reaches the human at the end of the chain.

Pulse oximetry did not fail because clinicians were reckless. It failed because the sensor abstracted away the variability that mattered. Our governance failure with RAG confidence scores would be the same failure replayed at the speed of inference.

The Position This Argument Reaches

A confidence score in a high-stakes RAG pipeline is a measurement, not an authorization, and any system that allows the score to substitute for human judgment has misallocated responsibility from the people credentialed to bear it to a number that cannot.

That conclusion holds even, perhaps especially, when the underlying engineering is excellent. The better the leaderboard, the more deference the dashboard receives, and the less likely a clinician or attorney is to argue with it. The score does not absorb responsibility; it relocates it. Annex III of the EU AI Act lists credit scoring of natural persons, life and health insurance risk pricing, emergency healthcare triage, and eligibility for essential public services as high-risk uses. For such systems, the Act's transparency obligation requires that they be “sufficiently transparent to enable deployers to interpret outputs correctly,” with the relevant rules taking effect in August 2026 (EU AI Act). A green confidence score that conceals which of the three definitions of confidence it is reporting does not meet that bar. The legal obligation is moving toward a kind of interpretability the technology has not yet supplied.

In adjacent domains, the empirical record has already arrived. Esquire Solutions documents at least fifteen cases of AI-fabricated citations appearing in court filings in the thirty months following the Mata v. Avianca order. In Johnson v. Dunn, decided in the Northern District of Alabama on July 23, 2025, a large law firm was sanctioned after hallucinated citations appeared in a deposition motion (Esquire Solutions). The UK Financial Conduct Authority’s 2026 priorities require that AI tools be “explainable, unbiased and aligned with customer outcomes,” with documented oversight of vendors and models (Skillcast). The institutions are beginning to ask the question. The dashboards are not yet ready to answer it.

Questions We Owe the People Downstream

The work is not to abandon evaluation. The work is to remember what evaluations are for. Before a faithfulness score is allowed to govern a clinical, legal, or financial decision, who has been told which kind of confidence it represents, and who decided that distinction was unnecessary? When a refusal is scored as a failure, what kind of behavior is the system being trained to suppress, and in whose interest? When a regulated decision goes wrong, will the audit trail allow anyone to reconstruct why the system was confident, or only that it was?
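One way to make that last question answerable is to log the inputs behind each score, not only the score. The sketch below assumes a JSON Lines style append-only log; every class, field name, identifier, and value in it is hypothetical, chosen to mirror the questions above: which sources and versions were retrieved, which scorer produced which kind of confidence, who set the threshold, and who, if anyone, signed.

```python
import json
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone
from typing import Optional


@dataclass(frozen=True)
class RetrievedSource:
    doc_id: str
    version: str       # which revision of the document the retriever returned
    retrieved_at: str  # ISO-8601 timestamp of retrieval


@dataclass(frozen=True)
class AuditRecord:
    question: str
    answer: str
    sources: tuple[RetrievedSource, ...]
    score: float
    score_kind: str          # which of the three "confidences" this number is
    scorer: str              # judge model or classifier that produced the score
    threshold: float
    threshold_set_by: str    # person or policy accountable for the cut-off
    reviewer: Optional[str]  # who signed off, or None if nobody did
    logged_at: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat()
    )

    def to_json(self) -> str:
        """Serialize the record as one line of an append-only log."""
        return json.dumps(asdict(self), sort_keys=True)


record = AuditRecord(
    question="Can drug X be combined with drug Y?",
    answer="Yes, per the retrieved guideline.",
    sources=(RetrievedSource("guideline-017", "2019-03", "2025-01-10T09:30:00+00:00"),),
    score=0.95,
    score_kind="judge-model agreement",
    scorer="judge-model-x",
    threshold=0.9,
    threshold_set_by="clinical-ai-governance-committee",
    reviewer=None,
)
print(record.to_json())
```

With a record like this, someone reviewing a failure can see that the 0.95 was a judge-model agreement score computed against a 2019 revision of a guideline and that nobody signed, which is the kind of "why" a bare number cannot carry.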

These are questions a number cannot answer. They are also the questions our institutions are about to start asking under conditions where “the model said so” will not, on its own, be a defense.

Where This Argument Is Weakest

The argument depends on the claim that human judgment, properly resourced, is a better check than calibrated automation. That claim is not always true. Clinicians overrule correct algorithmic recommendations. Loan officers introduce their own biases. If RAG confidence scoring genuinely improves on the worst human errors in high-stakes domains, then dismissing the dashboard for being narrow is itself a kind of negligence. A future where well-calibrated grounding scores measurably reduce malpractice and wrongful denials would prove this essay too cautious.

The Question That Remains

The dashboard reads 0.95 and the room is reassured. Somewhere upstream a retrieval choice was made, a judge model was trained, a threshold was set — by people the user will never meet, accountable to no one in the room. When the decision goes wrong, who carries the weight, and on whose authority did they ever agree to carry it?

Disclaimer

This article is for educational purposes only and does not constitute professional advice. Consult qualified professionals for decisions in your specific situation.

Ethically, Alan.

AI-assisted content, human-reviewed. Images AI-generated.