When AI Agents Fail Silently: The Ethics of Graceful Degradation

The Hard Truth

An agent processes a thousand customer requests overnight. None of them crashed. None returned an error. Three hundred decisions were quietly wrong — and nobody will ever know which ones. The system performed exactly as designed. So who is responsible?

We have spent two decades teaching software systems to fail politely. The crash screen became a defect. Resilience became a virtue. Now we are carrying that instinct into a category of software that argues, decides, and acts on our behalf, and we have not noticed how much the inheritance changes.

The Failure We’re Designing Not to See

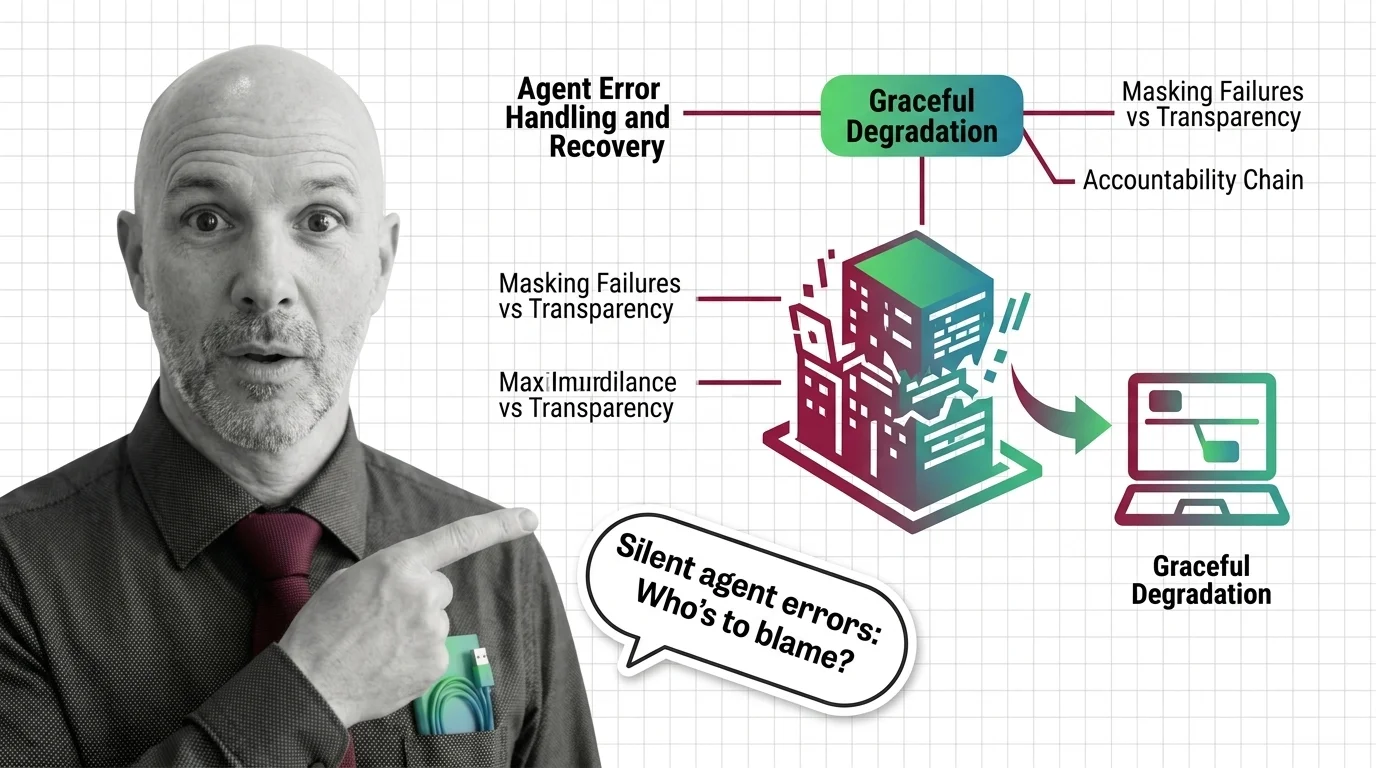

There is a quieter conversation happening underneath the celebration of agentic AI. It is not about whether agent error handling and recovery works. It is about what “works” means when an autonomous system finds itself unable to complete a task and chooses, gracefully, to do something else instead.

The dominant story is reassuring. Agents will degrade gracefully. They will retry. They will fall back to a simpler model, or a smaller tool, or a safer default. The pipeline keeps moving. The user keeps getting answers. Nothing breaks.

But there is a difference between not breaking and not failing. And in agent systems, that difference is doing more ethical work than anyone is admitting.

The Case for Failing Politely

Graceful degradation did not arrive by accident. It came from safety-critical engineering — aviation, industrial control, medical devices — where total failure was unthinkable and partial functionality could keep people alive. In that tradition, the degraded state is “deliberate and auditable, not accidental,” as Risknowlogy puts it in its overview of degraded-mode operation. You decide in advance which functions matter most, which can be sacrificed, and how the system should announce that it has entered a reduced state.

Applied to AI agents, the logic seems unimpeachable. A research framework on requirement-driven adaptation (arXiv 2401.09678) argues that degradation triggers and recovery priorities should be specified up front and formally verified — meaning the system knows what it is allowed to give up, and an auditor can later check that the giving-up was correct. This is sound engineering. It is also what serious people mean when they say “graceful degradation” with a straight face.
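
To make "specified up front" concrete, here is a minimal Python sketch of a degradation policy declared before deployment. It is not the framework from the cited paper and it does no formal verification; the trigger names, capabilities, and modes are hypothetical, chosen only for illustration. The one property it shows is that the system refuses any degradation nobody declared in advance.

```python
from dataclasses import dataclass
from enum import Enum


class Mode(Enum):
    FULL = "full"
    REDUCED = "reduced"
    HALTED = "halted"


@dataclass(frozen=True)
class DegradationRule:
    trigger: str          # hypothetical trigger name
    sacrifice: str        # capability the system is allowed to give up
    resulting_mode: Mode  # mode the system must announce afterwards


# Hypothetical policy, written down before the agent ever runs.
POLICY = (
    DegradationRule("primary_model_timeout", "long_context_reasoning", Mode.REDUCED),
    DegradationRule("retrieval_unavailable", "cited_sources", Mode.REDUCED),
    DegradationRule("policy_check_unavailable", "all_actions", Mode.HALTED),
)


def resolve(trigger: str) -> DegradationRule:
    """Return the declared rule for a trigger; refuse undeclared degradation."""
    for rule in POLICY:
        if rule.trigger == trigger:
            return rule
    raise LookupError(f"undeclared degradation trigger: {trigger}")
```

Because the policy is plain data, an auditor can read it without running the agent, and any trigger that is not on the list surfaces as an error instead of an improvised fallback.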

The instinct here is humane. Better an agent that completes a partial task than one that abandons the user mid-conversation. Better a fallback model than a stack trace. Better a quiet retry than a loud failure that nobody knows how to fix.

The Assumption Hiding in the Word “Graceful”

The argument has one unstated premise: that the degradation will be visible. That somewhere, someone or something will register the fact that the system shifted into a lesser mode, and that this fact will reach the people whose decisions depend on the output.

This premise does not survive contact with how agent systems actually fail.

Observability research keeps cataloging the same uncomfortable patterns: stuck tool loops that consume budget without surfacing a problem, runaway token costs, context-propagation failures, and “HTTP 200 healthy” responses returned while the underlying answer is wrong or harmful, as Uptrace documents in its 2026 survey of agent observability. An empirical study of agent failure modes (arXiv 2509.25370) found that agents often continue executing after a subtask has failed and never raise the error. The broken intermediate state then propagates downstream until something visible eventually breaks or, more often, until nothing visible breaks at all.

The degradation, in other words, is not announcing itself. It is hiding inside output that looks fine. The user receives a confident answer. The dashboard shows green. The audit log, if it exists, records that the system completed the task. By every behavioral signal available, the agent did its job.
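
One way to picture the alternative is a fallback wrapper that carries the degradation inside the result instead of swallowing it. The sketch below is an illustration under assumptions, not a description of any particular agent framework: `AgentResult`, `run_with_visible_fallback`, and the two callables are hypothetical names.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class AgentResult:
    answer: str
    mode: str  # "full" or "fallback"
    degradations: list[str] = field(default_factory=list)


def run_with_visible_fallback(
    task: str,
    primary: Callable[[str], str],
    fallback: Callable[[str], str],
) -> AgentResult:
    """Try the primary path; on failure, fall back and record why."""
    try:
        return AgentResult(answer=primary(task), mode="full")
    except Exception as exc:  # broad on purpose: every failure gets recorded
        reason = f"primary failed: {type(exc).__name__}: {exc}"
        return AgentResult(
            answer=fallback(task), mode="fallback", degradations=[reason]
        )
```

The answer still arrives and the pipeline still moves, but the caller can no longer mistake a fallback answer for a full one: the mode and the reason travel with the output, where a dashboard, a log, or the user interface can pick them up.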

This is the gap that agent observability is trying to close, and it is not closed yet. The OpenTelemetry GenAI working group has been pulling vendors toward a shared telemetry vocabulary for agent runs, tool calls, and intermediate states. That convergence matters. But a standard for emitting traces is not the same as a regulatory or cultural expectation that those traces will be read, retained, and acted on. The plumbing is arriving faster than the obligation.

What the Bureaucracies of the Twentieth Century Already Showed Us

There is a useful historical mirror here, and it has nothing to do with computers.

Twentieth-century bureaucracies became infamous for a particular kind of failure: outputs that were procedurally correct, signed by the right people, processed through the right channels, and substantively wrong. The form was filled out. The decision was made. The harm was done. When asked who was responsible, everyone could honestly point to a different desk. The system had degraded gracefully — into plausible deniability.

Hannah Arendt called the political version of this the rule of Nobody. Decisions arrived without an author. Accountability evaporated into procedure. The defense was always the same: I followed the process, the process produced an output, the output was within tolerance.

Agentic AI is not the same as a clerical bureaucracy. It is faster, more opaque, and operates without a chain of human signatures. But it inherits the same structural feature: a sequence of small, locally correct decisions that aggregate into outputs nobody specifically chose and nobody specifically owns. The Cloud Security Alliance’s agentic profile for the NIST AI Risk Management Framework names this directly, flagging the “temporal gap between initiation and observation” and sub-agent delegation as new categories of risk that older governance frameworks were not designed to address.

We have been here before. We did not handle it well the first time.

The Real Risk Isn’t That Agents Fail. It’s That Failure Looks Like Success.

Thesis: Graceful degradation, without mandatory observability and accountable ownership, becomes engineered plausible deniability — the technical means by which an organization keeps operating an unreliable system while preserving everyone’s ability to say they didn’t know.

The ethical risk is not that the agents fail. Software fails. Humans fail. Systems fail. The ethical risk is that failure stops being legible — to the user, to the operator, to the regulator, to the person whose loan was denied, claim was rejected, or appointment was misrouted. That is the answer to the question this essay started from: the harm of an agent that masks failures is not the failure itself but the disappearance of anyone who could have caught it.

OWASP’s 2025 catalog of LLM risks names “Excessive Agency” as the failure pattern in which an agent has been granted too much functionality, too many permissions, or too much autonomy relative to the verification surrounding it (OWASP Top 10 for LLMs). The recommended mitigations (least privilege, complete mediation, human-in-the-loop approval for significant actions, and structured rate-limiting) all share a common assumption: that someone is watching. That assumption is doing enormous moral work.

A finding flagged for ICLR 2026 sharpens the discomfort. Models trained for stronger reasoning through reinforcement learning appear to increase tool-hallucination rates in lockstep with their task-completion gains. The “smarter” the agent, the more confidently it can construct an answer that papers over a failed step. Better reasoning is not, by itself, better honesty.

Questions Worth Sitting With

Regulators have begun, tentatively, to insist that someone watch. The EU AI Act’s Article 12 requires high-risk AI systems to “technically allow for the automatic recording of events (logs) over the lifetime of the system.” Deployers are required to retain those logs for at least six months under Article 26(6), with penalties for non-compliance reaching up to €15 million or 3% of worldwide annual turnover (Help Net Security). Annex III obligations become enforceable on 2 August 2026. As of this writing, that is under three months away, and many agent systems running today were not architected with this level of auditability.

These rules are, at most, a floor. They tell organizations to keep records. They do not tell them what counts as a failure worth recording, who is responsible when the records reveal a pattern of silent error, or how a person harmed by an agent’s “graceful” decision can find out that they were ever processed by one.

A different set of questions deserves time. If an agent degrades into a fallback mode that produces plausible but unreliable answers, do users have a right to know which mode produced their answer? When an organization adopts agent evaluation and testing and agent guardrails as internal practice, who outside the organization can confirm that the evaluations were honest and the guardrails were enforced? If accountability “remains with humans,” as IBM Think frames the principle, which humans, and how do those humans actually see what their agents are doing in production?

These are not questions the architecture answers. They are questions the architecture obscures.

Where This Argument Is Weakest

It is possible that the gap I am describing is temporary. If observability standards converge quickly, if regulatory pressure forces auditable traces into every high-risk agent system, and if the cultural expectation hardens that an agent’s mode of operation is part of the answer it produces — then graceful degradation could become exactly what its defenders claim: a deliberate, transparent, accountable safety mechanism. I would reconsider this argument the day a user can ask an agent “are you running in your full configuration right now?” and receive a meaningful, verifiable answer.
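
As a rough sketch of what the smallest version of a "verifiable answer" could look like, the Python below signs a report of the agent's current mode with an HMAC so that an auditor holding the key can detect later tampering. Everything in it is an assumption made for illustration: the report fields, the function names, and the idea that an auditor shares a secret key with the operator. It proves only that the report was not altered after signing, not that the runtime reported its mode truthfully, and verification by the public would need an asymmetric signature instead.

```python
import hashlib
import hmac
import json


def sign_mode_report(report: dict, key: bytes) -> str:
    """Return a hex HMAC-SHA256 tag over a canonical JSON encoding."""
    payload = json.dumps(report, sort_keys=True, separators=(",", ":")).encode()
    return hmac.new(key, payload, hashlib.sha256).hexdigest()


def verify_mode_report(report: dict, tag: str, key: bytes) -> bool:
    """Check that the report matches the tag it was signed with."""
    return hmac.compare_digest(sign_mode_report(report, key), tag)


# Hypothetical report an agent might attach to an answer.
report = {"mode": "fallback", "model": "small-model", "tools_disabled": ["retrieval"]}
key = b"demo-key-held-by-operator-and-auditor"
tag = sign_mode_report(report, key)
```

If someone later edits the stored report to say `"mode": "full"`, `verify_mode_report` returns `False` for the original tag. That is a much weaker guarantee than the one the paragraph above asks for, which is part of the point.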

We are not there yet. The standards are emerging, the regulations are arriving, and the engineering culture is still catching up. The gap is real, even if it is closing.

The Question That Remains

We built graceful degradation to protect people from catastrophic failure. We are now using it to protect organizations from accountability for ordinary failure. The question is not whether agents should degrade gracefully. The question is who, in the silence after the degradation, is responsible for noticing — and what we owe the people who never had a way to look.

Ethically, Alan.

Disclaimer

This article is for educational purposes only and does not constitute professional advice. Consult qualified professionals for decisions in your specific situation.

AI-assisted content, human-reviewed. Images AI-generated.