What Is Workflow Orchestration for AI and How DAGs, State Machines, and Conditional Branching Structure LLM Pipelines

Workflow orchestration for AI decides the order, branching, retries, and recovery of multi-step LLM pipelines. Three shapes dominate in 2026 — DAGs for static parallel work, graph state machines for looping agents, and event-driven step graphs for everything between.

A multi-step LLM pipeline can burn through a serious chunk of inference budget, write several tool calls to a database, and then die on a rate limit two steps from the end. When that happens, you discover something uncomfortable about your code: you did not build a workflow, you built a script with delusions. The difference is everything the runtime knows about state, retries, and where to resume.

That gap is what orchestration fills.

The Coordination Layer Hiding Inside Every Production Agent

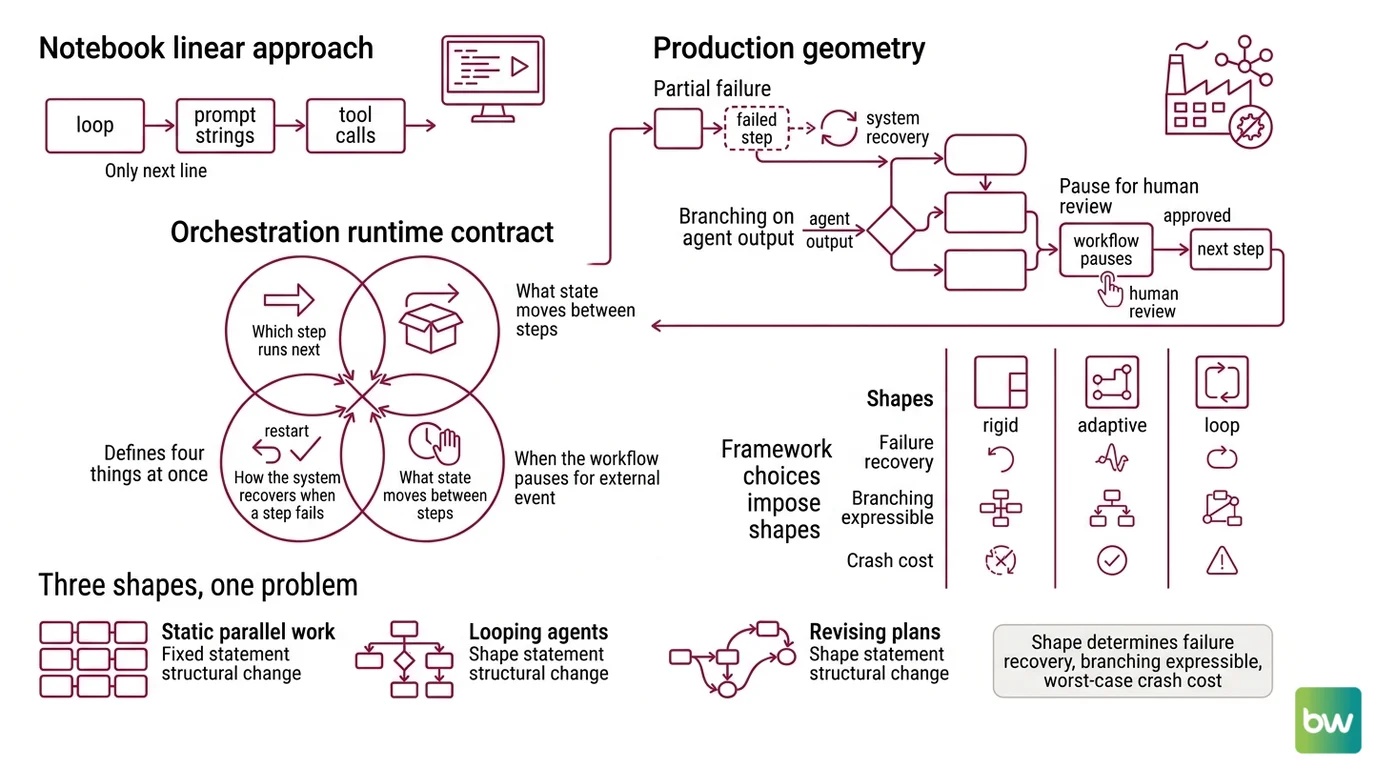

Most LLM applications start as a notebook. A loop, a few prompt strings, a couple of tool calls. The shape looks linear because the developer is following the happy path in their head. The system itself has no concept of “step” — only of “next line.”

Production changes the geometry. Suddenly the system has to survive partial failure, branch on an agent’s output, pause for human review, and replay the last successful state after a crash. The notebook shape cannot represent any of that. Orchestration is the abstraction that names each step, captures what flows between them, and gives the runtime something to recover toward.

What is workflow orchestration for AI?

Orchestration is the runtime contract between an LLM application and the world it acts on. It defines four things at once:

- which step runs next

- what state moves between steps

- how the system recovers when a step fails

- when the workflow pauses for an external event — a tool result, a human approval, a timer

The survey on agent workflow architectures by Yu et al. separates the design space along two axes — functional capabilities like planning and multi-agent collaboration, and architectural features like orchestration flows, specification languages, and agent roles (Yu et al. 2025). The orchestration flow is the axis that determines whether your agent is a script with logs or a system with memory.

Not a wrapper. A runtime.

The framework you choose imposes a shape on this contract. That shape is not cosmetic. It determines what kinds of failure you can recover from, what kinds of branching are expressible, and what the worst-case cost of a crash looks like once your inference bill starts compounding.

Three Shapes, One Problem

The shape your orchestrator imposes is a statement about how much structural change you expect at runtime. Static, parallel work fits one shape. Looping agents that revise their plan fit another. The middle ground — long-running work with conditional fan-out and pause/resume — fits a third.

How does workflow orchestration coordinate multi-step LLM pipelines?

By committing to one of three structural patterns, each with a different runtime model.

DAGs (directed acyclic graphs) are the classical orchestration shape. Tasks are nodes, dependencies are edges, and the scheduler executes nodes whose dependencies have resolved. Apache Airflow pioneered this pattern for ETL and remains the most widely adopted scheduler for scheduled data and feature pipelines (Airflow Docs). Prefect keeps the Python-native ergonomics but allows dynamic flows that are not strictly acyclic (Prefect Docs). Dagster reframes the unit as the asset (a table, a feature, a model) rather than the task, which suits ML feature pipelines and lineage tracking (Dagster Docs).

Inside the LLM world, the DAG shape shows up wherever a planner can decompose work in advance. The LLMCompiler pattern, surveyed in Yu et al. 2025, uses an LLM as a static planner that identifies independent tool calls and dispatches them in parallel for latency speedup. The DAG is the right shape when the work is decomposable up front and the cost of being wrong about the plan is low.
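The scheduling rule described above (run every node whose dependencies have resolved, in parallel where possible) can be sketched with Python's standard library. This is a minimal illustration, not Airflow's or LLMCompiler's implementation; the plan, the task names, and `run_task` are hypothetical stand-ins for real tool or LLM calls.

```python
from concurrent.futures import ThreadPoolExecutor
from graphlib import TopologicalSorter

# Hypothetical plan: each task maps to the set of tasks it depends on.
PLAN = {
    "search_docs": set(),
    "search_web": set(),
    "summarize": {"search_docs", "search_web"},
}


def run_task(name: str, inputs: dict) -> str:
    """Stand-in for a tool or LLM call; records which inputs it received."""
    return f"{name}({', '.join(sorted(inputs))})"


def run_dag(plan: dict) -> dict:
    sorter = TopologicalSorter(plan)
    sorter.prepare()  # raises graphlib.CycleError if the plan has a cycle
    results = {}
    with ThreadPoolExecutor() as pool:
        while sorter.is_active():
            # Every task returned here has all of its dependencies resolved,
            # so the whole batch can be dispatched concurrently.
            ready = sorter.get_ready()
            futures = {
                name: pool.submit(
                    run_task, name, {dep: results[dep] for dep in plan.get(name, ())}
                )
                for name in ready
            }
            for name, future in futures.items():
                results[name] = future.result()
                sorter.done(name)  # unblocks tasks that depend on this one
    return results


results = run_dag(PLAN)
```

Here the two searches run in the same batch and `summarize` runs only after both finish. The call to `prepare()` rejects any plan that contains a cycle, which is exactly the constraint that stops fitting once an agent needs to loop.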

Graph state machines take over where the DAG shape breaks: when the agent’s output itself decides what runs next. LangGraph models the application as a StateGraph: nodes are functions, edges include conditional routing on shared state, and START / END markers bound the run (LangGraph Docs). Cycles are first-class. An agent can call a tool, examine the result, decide to call another tool, decide to retry the first, and eventually emit a final answer, all inside one graph that the runtime understands as a coherent execution (LangChain Blog). The official documentation describes the abstraction as a graph; the “state machine” label is editorial shorthand the community has adopted because the behavior is closer to that than to a DAG.
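To make conditional routing and cycles concrete, here is a plain-Python sketch of the idea. It deliberately does not use LangGraph's API; the node names, the `route_after_tool` router, the `"END"` sentinel, and the step limit are all assumptions made for illustration.

```python
State = dict  # shared state that every node reads and returns


def call_tool(state: State) -> State:
    # Hypothetical flaky tool: succeeds on the third attempt.
    attempts = state["attempts"] + 1
    return {**state, "attempts": attempts, "ok": attempts >= 3}


def answer(state: State) -> State:
    return {**state, "answer": f"done after {state['attempts']} attempts"}


def route_after_tool(state: State) -> str:
    # Conditional edge: the node's output decides what runs next.
    if state["ok"]:
        return "answer"
    if state["attempts"] >= state["max_attempts"]:
        return "END"
    return "call_tool"  # cycle back to the same node


NODES = {"call_tool": call_tool, "answer": answer}
ROUTERS = {"call_tool": route_after_tool, "answer": lambda state: "END"}


def run_graph(state: State, start: str = "call_tool", max_steps: int = 20) -> State:
    current, steps = start, 0
    while current != "END":
        if steps >= max_steps:
            raise RuntimeError("step limit reached; possible infinite loop")
        state = NODES[current](state)        # run the node
        current = ROUTERS[current](state)    # pick the next node from state
        steps += 1
    return state


final = run_graph({"attempts": 0, "ok": False, "max_attempts": 5})
```

With these inputs the tool node runs three times before the router hands off to `answer`, so `final["answer"]` is `"done after 3 attempts"`. The step limit is a design choice worth keeping in any cyclic graph: once loops are legal, something has to bound them.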

Event-driven step graphs are the third position. LlamaIndex Workflows 1.0 ships with this model: the application is divided into Steps that are triggered by Events and which themselves emit Events that trigger further steps (LlamaIndex Docs). Loops, parallel paths, async, durable pause/resume — all expressible without committing to a strict DAG shape (LlamaIndex Blog). The execution model is closer to how distributed systems already coordinate work: messages, handlers, and back-pressure between components.
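The step-and-event model can be sketched with a queue and a handler table. The event classes below are plain dataclasses whose names only echo the pattern; this is not the LlamaIndex Workflows API, and the `retrieve` and `synthesize` steps are hypothetical.

```python
from collections import deque
from dataclasses import dataclass


@dataclass
class StartEvent:
    query: str


@dataclass
class RetrievedEvent:
    query: str
    docs: list


@dataclass
class StopEvent:
    result: str


def retrieve(ev: StartEvent) -> list:
    # Hypothetical retrieval step: emits one follow-up event.
    return [RetrievedEvent(ev.query, docs=[f"doc about {ev.query}"])]


def synthesize(ev: RetrievedEvent) -> list:
    return [StopEvent(result=f"{len(ev.docs)} doc(s) for '{ev.query}'")]


# Each step is triggered by one event type and may emit further events.
HANDLERS = {StartEvent: retrieve, RetrievedEvent: synthesize}


def run_workflow(first_event, max_events: int = 100) -> str:
    queue = deque([first_event])
    handled = 0
    while queue:
        event = queue.popleft()
        if isinstance(event, StopEvent):
            return event.result
        if handled >= max_events:
            raise RuntimeError("event limit reached")
        queue.extend(HANDLERS[type(event)](event))  # emitted events trigger more steps
        handled += 1
    raise RuntimeError("workflow ended without a StopEvent")


result = run_workflow(StartEvent(query="rate limits"))
```

Nothing in this loop fixes the order of steps in advance. A handler that emits two events produces a fan-out, and a handler that re-emits its own trigger type produces a loop, which is why the shape sits between a DAG and a state machine.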

The three shapes are not interchangeable. DAGs assume the plan is knowable; state machines assume the plan is discovered at runtime; event-driven graphs assume the plan is reactive to external signals. Picking the wrong shape is what produces orchestrators that work in development and lie in production.

The Anatomy Underneath the Diagrams

Strip the framework names away and the same primitives keep appearing. The shapes differ in which primitives they make ergonomic, not in which ones exist.

What are the core components of an AI workflow orchestration system?

Seven primitives recur across LangGraph, Airflow, Prefect, Dagster, LlamaIndex Workflows, Temporal, and AWS Step Functions:

| Primitive | What it does | Where it shows up |
|---|---|---|
| Sequencing | Defines “after step A, run step B” | Every framework |
| Conditional branching | Runtime decision: which next step, based on state or output | LangGraph conditional edges, ASL Choice state, LlamaIndex event types |
| Parallel / fan-out | Multiple steps run concurrently, results joined | DAG schedulers, LangGraph Send, Step Functions Parallel / Map |
| Loops / cycles | A step can lead back to a prior step | LangGraph (native), LlamaIndex (via events), Step Functions (state transitions) |
| Shared state | Each step reads and writes a structured payload | LangGraph State, Airflow XCom, LlamaIndex Context |
| Human-in-the-loop | Workflow pauses for an external decision | LangGraph interrupts, Step Functions wait-for-callback |
| Persistence / durability | Workflow survives crashes via checkpoint and replay | Temporal, AWS Lambda Durable Functions, LangGraph persistence layer |

The last primitive, durability, is where the most consequential shift is happening. Temporal models workflows as ordinary code with unlimited duration, automatic retries, and checkpoint-and-replay; long-running LLM agent loops, multi-agent handoffs, and prompt-chain pipelines that must survive timeouts or provider rate limits are the canonical use cases (Temporal Docs). AWS Step Functions encodes workflows in JSON via Amazon States Language as explicit state machines, integrating directly with AWS services (AWS Step Functions Docs). AWS Lambda Durable Functions, announced at re:Invent 2025 and reaching GA in early 2026, brings the same checkpoint-and-replay model directly inside Lambda and is explicitly positioned at AI agent orchestration (AWS Blog).

Durability is the layer your top-of-stack framework either inherits or has to invent.
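A toy version of checkpoint-and-replay shows what that layer has to do. This sketch persists each step's result to a JSON file and skips recorded steps on the next run; it is an assumption-laden illustration, not how Temporal, Step Functions, or Lambda Durable Functions implement durability. The step names and the simulated rate-limit failure are hypothetical.

```python
import json
import tempfile
from pathlib import Path


def run_durable(steps, checkpoint: Path) -> dict:
    """Run steps in order, persisting each result; skip steps already recorded."""
    done = json.loads(checkpoint.read_text()) if checkpoint.exists() else {}
    for name, fn in steps:
        if name in done:
            continue  # replay: reuse the stored result instead of re-running
        done[name] = fn(done)
        checkpoint.write_text(json.dumps(done))  # checkpoint after every step
    return done


calls = []  # records which steps actually executed, across both runs


def plan(_results: dict) -> str:
    calls.append("plan")
    return "outline"


def draft(results: dict) -> str:
    calls.append("draft")
    if calls.count("draft") == 1:
        raise TimeoutError("simulated provider rate limit")
    return f"draft from {results['plan']}"


STEPS = [("plan", plan), ("draft", draft)]
path = Path(tempfile.mkdtemp()) / "run.json"

try:
    run_durable(STEPS, path)       # first run dies on the second step
except TimeoutError:
    pass
final = run_durable(STEPS, path)   # second run resumes after "plan"
```

After both runs, `calls` is `["plan", "draft", "draft"]`: the expensive `plan` step executed once even though the workflow started twice. A production runtime also needs atomic writes, step idempotency, and versioning of the step list, none of which this sketch attempts.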

Security & compatibility notes:

- LlamaIndex Workflows: Pre-1.0 tutorials may show deprecated API shapes. Pin to llama-index-workflows 1.0 or later when copying examples (LlamaIndex Blog).

- AWS Lambda Durable Functions: GA in early 2026; older orchestration write-ups do not cover it. Verify the current API against the AWS Blog announcement before adoption.

- Temporal: Temporal Nexus and Multi-Region Replication reached GA in early 2026; comparisons published before that date predate these capabilities.

- LangGraph deployment claims: Third-party blog posts cite Uber, Klarna, and LinkedIn as production users; these claims are not independently verified against official customer announcements and should be treated as illustrative rather than evidence.

What the Shape Predicts

The choice of orchestration shape is not a taste preference. It predicts the failure modes you will see in production.

- If your work decomposes cleanly into independent tasks and the plan is stable across runs, you should observe linear cost-to-add-a-step — and a DAG orchestrator will feel natural.

- If your agent’s next action depends on its previous output, you should observe DAG frameworks fighting you with workarounds: sub-DAGs, branch operators, dynamic task mapping. That is the signal a graph state machine fits the problem better.

- If long-running steps need to pause for external events — a webhook, a human approval, a slow tool — you should observe state corruption or retry storms in any framework that lacks durable execution. That is the signal you need Temporal, Step Functions, or Lambda Durable Functions underneath whatever you chose at the top.

Rule of thumb: Pick the shape that matches your runtime structural change, not your application domain. ETL with stable plans → DAG. Agents that decide what to do next → graph state machine. Long-running, event-reactive flows → event-driven step graph with durable execution underneath.

When it breaks: Orchestration cannot fix nondeterministic agent outputs. If the LLM emits a malformed tool call, every framework will faithfully execute that malformed call — checkpoint it, retry it, and replay it across restarts. The orchestrator coordinates structural failure, not semantic correctness. Combine it with output validation and typed state schemas, or you will simply make your bugs durable.
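One way to keep a malformed tool call from becoming durable is to validate the model's raw output before it enters workflow state. The sketch below uses only the standard library; the `ToolCall` type, the `TOOL_SCHEMAS` registry, and the expected JSON layout (`name` plus `arguments`) are assumptions for illustration, not any framework's schema.

```python
import json
from dataclasses import dataclass


@dataclass(frozen=True)
class ToolCall:
    name: str
    arguments: dict


# Hypothetical registry: tool name -> required argument names and their types.
TOOL_SCHEMAS = {"search": {"query": str}, "fetch": {"url": str}}


def parse_tool_call(raw: str) -> ToolCall:
    """Parse and validate raw model output; raise ValueError if it is malformed."""
    try:
        data = json.loads(raw)
    except json.JSONDecodeError as exc:
        raise ValueError(f"not valid JSON: {exc}") from exc
    if not isinstance(data, dict):
        raise ValueError("tool call must be a JSON object")
    name, args = data.get("name"), data.get("arguments")
    if not isinstance(name, str) or name not in TOOL_SCHEMAS:
        raise ValueError(f"unknown tool: {name!r}")
    if not isinstance(args, dict):
        raise ValueError("arguments must be an object")
    for key, expected in TOOL_SCHEMAS[name].items():
        if not isinstance(args.get(key), expected):
            raise ValueError(f"argument {key!r} must be {expected.__name__}")
    return ToolCall(name, args)


valid = parse_tool_call('{"name": "search", "arguments": {"query": "dag"}}')
```

Running this check inside the step that receives the model output means the orchestrator checkpoints either a typed `ToolCall` or a clean failure it can route on, rather than an arbitrary string it will replay faithfully.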

The Reverse Lesson from Distributed Systems

There is a quiet inversion happening at the bottom of the stack. For years, application developers built workflows on top of state machines they wrote themselves — fragile, hand-rolled, undocumented. Temporal’s argument is that the state machine should disappear into the runtime, and developers should write ordinary code that the platform makes durable (Temporal Blog).

LLM orchestration accelerated this inversion. The combination of unbounded latency, provider rate limits, and the cost of replaying expensive inference made the “write your own retry loop” approach untenable. The frameworks that survived 2024–2026 are the ones that exposed durability as a default rather than a bolt-on.

The trend identified by Yu et al. — toward structural change as a first-class runtime action, including planner-executor frameworks like DyFlow that revise sub-goals mid-execution — only intensifies this pressure (Yu et al. 2025). The more your workflow’s shape changes at runtime, the more the runtime itself has to be the source of truth for “where am I and what happens next.”

The Data Says

Workflow orchestration is the difference between an LLM script and an LLM system. Three shapes dominate — DAG, graph state machine, event-driven step graph — and beneath all three, durable execution is becoming the default rather than the upgrade. The shape encodes an assumption about how much your plan changes at runtime; pick the wrong shape and your retries, your branching, and your recovery will all fight you instead of working for you.

AI-assisted content, human-reviewed. Images AI-generated.