What Is Image Upscaling and How AI Super-Resolution Reconstructs Detail Beyond the Original Pixels

ELI5

AI image upscaling doesn’t enlarge what’s already there — it predicts what should be there. Generative models like GANs and diffusion networks fabricate plausible high-resolution pixels using patterns absorbed from millions of training images.

Run a blurry 256-pixel portrait through Real-ESRGAN and eyelashes appear that were never captured by the camera — yet placed with anatomically plausible density. The model did not enlarge what existed. It generated what statistically should exist. The distance between those two operations is the entire story of modern super-resolution.

The Pixels That Aren’t There

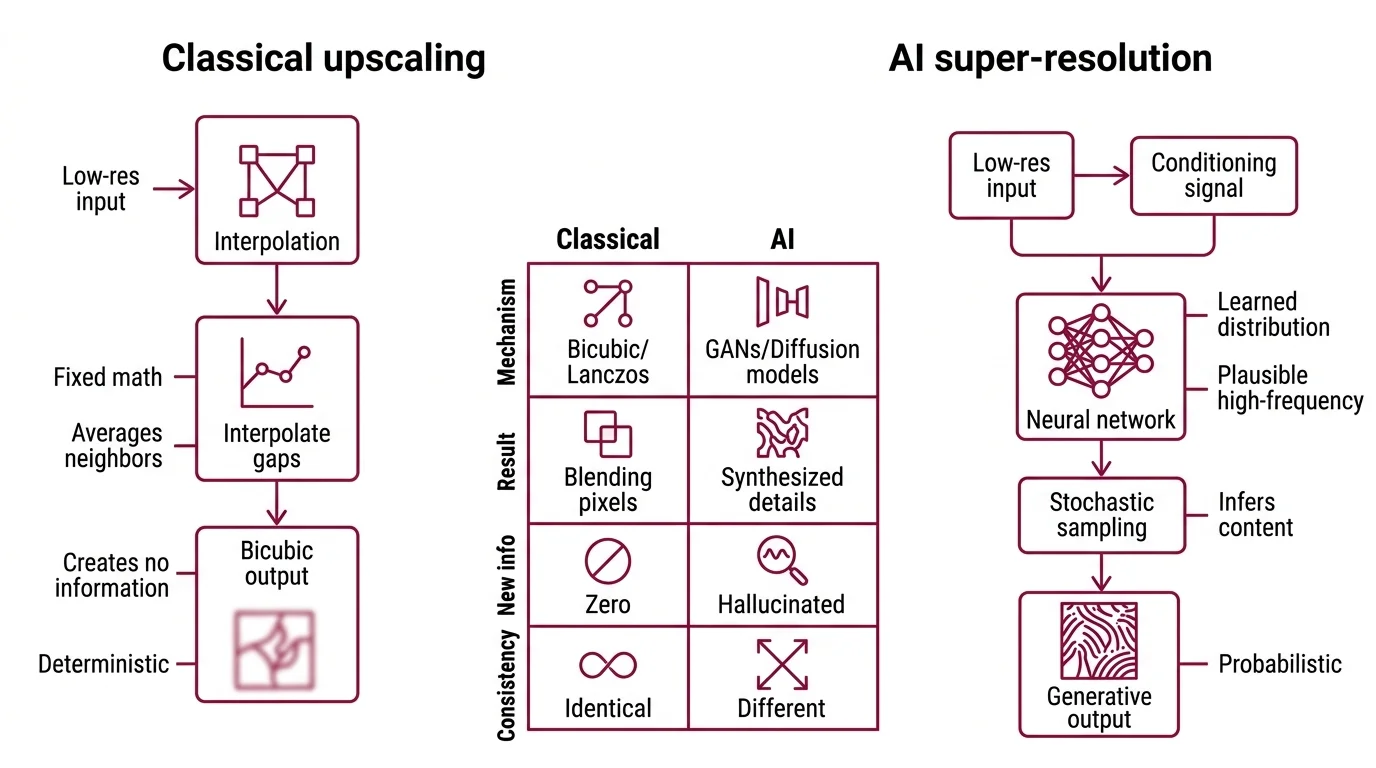

Classical upscaling is interpolation: you have N pixels, you need 4N pixels, and you fill the gaps with weighted averages of neighbors. Bicubic, Lanczos, and their cousins share one structural property — they cannot create information that was not in the input. AI super-resolution does something different, and the difference is mathematical rather than cosmetic.
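The "weighted averages of neighbors" idea can be made concrete with a minimal sketch. It uses bilinear interpolation, the simplest member of the family, rather than bicubic or Lanczos, and the function name `bilinear_upscale` is an illustrative choice, not taken from any library. Every output pixel is a convex combination of four input pixels, so the result is fully determined by the input and never leaves its value range.

```python
import numpy as np

def bilinear_upscale(img: np.ndarray, scale: int) -> np.ndarray:
    """Upscale a 2-D grayscale array by an integer factor using bilinear interpolation."""
    h, w = img.shape
    # Map each output pixel centre back to fractional input coordinates.
    ys = np.clip((np.arange(h * scale) + 0.5) / scale - 0.5, 0, h - 1)
    xs = np.clip((np.arange(w * scale) + 0.5) / scale - 0.5, 0, w - 1)
    y0 = np.floor(ys).astype(int)
    x0 = np.floor(xs).astype(int)
    y1 = np.minimum(y0 + 1, h - 1)
    x1 = np.minimum(x0 + 1, w - 1)
    wy = (ys - y0)[:, None]  # vertical blend weights, shape (H, 1)
    wx = (xs - x0)[None, :]  # horizontal blend weights, shape (1, W)
    # Blend horizontally along the upper and lower source rows, then vertically.
    top = img[y0][:, x0] * (1 - wx) + img[y0][:, x1] * wx
    bottom = img[y1][:, x0] * (1 - wx) + img[y1][:, x1] * wx
    return top * (1 - wy) + bottom * wy

low_res = np.array([[0.0, 1.0], [1.0, 0.0]])
high_res = bilinear_upscale(low_res, 2)
print(high_res.shape)  # (4, 4)
```

Bicubic and Lanczos use wider kernels with negative lobes, so they can overshoot slightly near edges, but they remain fixed linear functions of the input pixels.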

What is AI image upscaling?

AI image upscaling is the use of trained neural networks to increase an image’s resolution while inferring detail that was not present in the low-resolution source. Unlike bicubic or Lanczos filters — which mathematically blend existing pixels — AI upscalers (typically convolutional neural networks, generative adversarial networks, or Diffusion Models) produce a high-resolution output by sampling from a learned distribution of plausible high-frequency content.

The key word is “plausible.” The model does not recover what was lost during downsampling; it hallucinates content that matches the statistical signature of high-resolution images in its training data.

This shifts the problem from a deterministic operation to a probabilistic inference. A bicubic filter applied to the same input always returns the same output. A diffusion-based upscaler returns different outputs across runs, unless the random seed is fixed, because its sampling process starts from random noise. Two valid upscales of the same input can disagree on whether a freckle is present.

How does AI super-resolution add detail that was never in the original image?

The honest answer requires abandoning the language of “adding detail.” The model is generating an entirely new image that happens to be consistent with the input as a low-resolution constraint.

Inside a generative super-resolution model, the low-resolution input acts as a conditioning signal. During training, the model internalized the joint distribution of edges, textures, and local structures that characterize natural photographs. Given the conditioning input, it samples a high-resolution image whose downsampled version would match the input. Many such images exist. The model picks one.
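The claim that many high-resolution images are consistent with one low-resolution input can be checked numerically. The sketch below assumes the degradation is a plain 2x2 box average, which is far simpler than real camera blur, and uses random zero-mean detail as a stand-in for a learned prior. The names `box_downsample` and `sample_consistent_high_res` are hypothetical.

```python
import numpy as np

def box_downsample(img: np.ndarray, factor: int) -> np.ndarray:
    """Average non-overlapping factor x factor blocks."""
    h, w = img.shape
    return img.reshape(h // factor, factor, w // factor, factor).mean(axis=(1, 3))

def sample_consistent_high_res(low_res, factor, rng):
    """Return one high-res image whose box-downsample equals low_res."""
    ones = np.ones((factor, factor))
    base = np.kron(low_res, ones)  # each low-res pixel becomes a flat block
    detail = rng.normal(scale=0.1, size=base.shape)
    # Subtract each block's mean so the added detail vanishes under downsampling.
    block_mean = np.kron(box_downsample(detail, factor), ones)
    return base + (detail - block_mean)

rng = np.random.default_rng(0)
low_res = rng.random((4, 4))
candidate_a = sample_consistent_high_res(low_res, 2, rng)
candidate_b = sample_consistent_high_res(low_res, 2, rng)

# Both candidates reproduce the input exactly, yet differ from each other.
print(np.allclose(box_downsample(candidate_a, 2), low_res))
print(np.allclose(box_downsample(candidate_b, 2), low_res))
print(np.allclose(candidate_a, candidate_b))
```

A trained model differs from this toy only in where the detail comes from: it draws from patterns learned on real photographs instead of Gaussian noise, but the input constrains it no more tightly.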

This is why two different super-resolution outputs of the same blurry license plate can produce two different sets of digits — both consistent with the blur, neither necessarily correct. The detail was not in the input. It was in the prior — the latent geometry the model carries from training.

ESRGAN, introduced by Wang et al. in 2018, made this concrete. Building on SRGAN, it demotes pixel-wise loss to a small weighted term and leans on a perceptual loss computed in feature space, plus a relativistic adversarial loss that asks the discriminator not “is this real?” but “is this more real than that?” (ESRGAN arXiv). The training objective stops rewarding pixel-level accuracy alone and starts rewarding plausibility.
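The "more real than that" question has a compact form. The sketch below follows the relativistic average formulation as commonly written: each logit is compared against the mean logit of the opposite class before the sigmoid. It operates on raw discriminator scores supplied as NumPy arrays. The function name and the toy logits are assumptions for illustration, and this is not the ESRGAN training code.

```python
import numpy as np

def sigmoid(x):
    return 1.0 / (1.0 + np.exp(-x))

def relativistic_losses(real_logits, fake_logits, eps=1e-12):
    """Relativistic average GAN losses computed from raw discriminator scores."""
    # How much more realistic is each real sample than the average fake, and vice versa?
    real_vs_fake = sigmoid(real_logits - fake_logits.mean())
    fake_vs_real = sigmoid(fake_logits - real_logits.mean())
    # The discriminator wants real > fake; the generator wants the reverse.
    d_loss = -np.mean(np.log(real_vs_fake + eps)) - np.mean(np.log(1.0 - fake_vs_real + eps))
    g_loss = -np.mean(np.log(1.0 - real_vs_fake + eps)) - np.mean(np.log(fake_vs_real + eps))
    return d_loss, g_loss

# Toy scores: the discriminator currently separates real from fake with ease.
real = np.array([3.0, 3.0])
fake = np.array([-3.0, -3.0])
d_loss, g_loss = relativistic_losses(real, fake)
print(d_loss < g_loss)  # the generator is the one losing here
```

Because the generator loss contains a term for real samples as well as fake ones, the generator receives gradient signal from both, which is the property usually credited with stabilizing training.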

Real-ESRGAN, the most widely deployed open-source upscaler, took the next step: it trained exclusively on synthetic degradations — blur, noise, JPEG artifacts, and downsampling applied in random combinations — so the model would learn to invert real-world image corruption rather than the clean bicubic downsampling used in benchmarks (Real-ESRGAN GitHub). The result is a model that hallucinates plausible detail under realistic input conditions, not laboratory ones.
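A synthetic degradation pipeline of the kind described above can be sketched in a few lines. This is a loose illustration, not Real-ESRGAN's actual pipeline: the blur is a 3x3 box filter, the "compression" step is plain quantization standing in for JPEG artifacts, and all function names and parameter values are assumptions.

```python
import numpy as np

def box_blur(img, rng):
    """3x3 mean filter with edge padding (rng unused; kept for a uniform signature)."""
    h, w = img.shape
    padded = np.pad(img, 1, mode="edge")
    return sum(padded[dy:dy + h, dx:dx + w] for dy in range(3) for dx in range(3)) / 9.0

def add_noise(img, rng):
    """Additive Gaussian noise."""
    return img + rng.normal(scale=0.05, size=img.shape)

def quantize(img, rng):
    """Crude stand-in for compression artifacts; this is NOT real JPEG."""
    levels = 16
    return np.round(img * levels) / levels

def degrade(high_res, rng, factor=4):
    """Apply the corruptions in a random order, then decimate and clip."""
    ops = [box_blur, add_noise, quantize]
    img = high_res
    for i in rng.permutation(len(ops)):
        img = ops[i](img, rng)
    return np.clip(img[::factor, ::factor], 0.0, 1.0)

rng = np.random.default_rng(0)
high_res = rng.random((64, 64))
low_res = degrade(high_res, rng)
print(low_res.shape)  # (16, 16)
```

Training pairs are then `(low_res, high_res)`: the network sees the corrupted version and is asked to produce something that resembles the clean one.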

Not enlargement. Inference.

Two Mathematical Lineages: GAN and Diffusion

The field has split, roughly, into two camps that arrive at the same problem from opposite directions. Both produce high-resolution images that look natural; both invent detail that was not present in the input. But the mechanism by which they do so reveals different intuitions about what plausibility means and how it should be enforced.

What is the difference between GAN-based and diffusion-based upscaling?

A GAN-based upscaler (the ESRGAN/Real-ESRGAN lineage) is a single-pass generator trained against a discriminator. The generator network takes the low-resolution image and produces a high-resolution candidate in one forward pass. The discriminator has been trained to distinguish real high-resolution images from generator outputs. Their adversarial loop pushes the generator toward a fixed point: outputs that the discriminator cannot reliably distinguish from real photographs. ESRGAN’s specific contribution was the Residual-in-Residual Dense Block (RRDB) backbone and a relativistic discriminator — both designed to stabilize this adversarial dynamic, which had previously suffered from mode collapse and noisy textures (ESRGAN arXiv).

A diffusion-based upscaler reverses the framing. It does not generate the high-resolution image directly. Instead, it starts from pure noise and iteratively denoises across many steps, with each step conditioned on the low-resolution input. The model has learned, during training, to undo a fixed noising schedule applied to high-resolution images. At inference, you reverse the schedule: start with noise, run the denoising process, end with a high-resolution image. SUPIR (Scaling Up to Excellence), presented at CVPR 2024 by Yu et al., builds this on top of Stable Diffusion XL with a specialized adapter (ZeroSFT) that injects the low-resolution conditioning, plus a vision-language model (LLaVA) that generates a text prompt describing the image to guide the denoising process (SUPIR arXiv).
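The "fixed noising schedule" can be written down directly. The sketch below uses the generic DDPM formulation with a linear beta schedule, not SUPIR's or Stable Diffusion XL's actual sampler, and the function names are illustrative. It shows the forward noising step and the identity a denoiser is trained to exploit: if the noise in a sample could be predicted exactly, the clean image would follow in closed form.

```python
import numpy as np

def make_schedule(num_steps=1000, beta_start=1e-4, beta_end=0.02):
    """Cumulative signal-retention factors for a linear beta schedule."""
    betas = np.linspace(beta_start, beta_end, num_steps)
    return np.cumprod(1.0 - betas)

def noise_to_step(x0, t, alpha_bar, rng):
    """Jump straight to step t of the forward process: scaled image plus scaled noise."""
    eps = rng.standard_normal(x0.shape)
    x_t = np.sqrt(alpha_bar[t]) * x0 + np.sqrt(1.0 - alpha_bar[t]) * eps
    return x_t, eps

def recover_x0(x_t, eps, t, alpha_bar):
    """Invert the step given the true noise; a trained network only approximates eps."""
    return (x_t - np.sqrt(1.0 - alpha_bar[t]) * eps) / np.sqrt(alpha_bar[t])

rng = np.random.default_rng(0)
alpha_bar = make_schedule()
x0 = rng.random((8, 8))
x_mid, eps_mid = noise_to_step(x0, 500, alpha_bar, rng)
print(np.allclose(recover_x0(x_mid, eps_mid, 500, alpha_bar), x0))
```

In an upscaler, the network that estimates the noise also receives the low-resolution image as conditioning, and sampling repeats a partial version of this inversion over many steps, starting from pure noise.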

The differences cascade. GANs are fast — a single forward pass, well-suited for real-time use. Diffusion is slow — many denoising steps, each a full network pass. GANs produce sharper, sometimes harsher textures with occasional adversarial artifacts. Diffusion produces smoother, more naturalistic textures at higher cost, with a stronger tendency to hallucinate larger structural changes that GANs would not attempt.

The intuitive narrative (diffusion is better, GANs are obsolete) turns out to be partially wrong. Once architecture, model size, training data, and compute are matched, GANs match or beat diffusion on perceptual quality (Mei et al. arXiv). What looks like architectural superiority is sometimes a scale advantage in disguise.

Tiles, Tools, and the Production Reality

The mathematics of super-resolution is one story. The engineering of running it on a 4K image without melting your GPU is another, and it shapes which models actually get deployed.

Most generative upscalers were trained at modest resolutions, from 512×512 to 1024×1024 pixels. Run them at higher resolution and two things happen: VRAM consumption climbs steeply (activation memory grows with image area, which is already quadratic in side length, and naive self-attention grows with the square of that area), and spatial coherence degrades because the model never saw inputs of that size during training. The standard production workaround is tiled upscaling: splitting the image into overlapping patches, processing each independently, and stitching the results back together. Tiled Diffusion in ComfyUI recommends a 512-pixel tile with 64 pixels of overlap, balancing memory pressure against the seam artifacts that emerge when adjacent tiles disagree about texture (RunComfy Docs).
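The split, process, and stitch loop can be sketched as follows. The defaults mirror the 512-pixel tile and 64-pixel overlap mentioned above. Everything else is an assumption: `upscale_fn` is a hypothetical stand-in for a real model, and the linear cross-fade is one common blending choice, not necessarily what ComfyUI's Tiled Diffusion does.

```python
import numpy as np

def tile_starts(length, tile, overlap):
    """Start offsets so tiles of size `tile` cover [0, length) with shared borders."""
    if length <= tile:
        return [0]
    starts = list(range(0, length - tile, tile - overlap))
    starts.append(length - tile)  # last tile sits flush with the edge
    return starts

def feather_weights(h, w, overlap):
    """Weights that ramp down linearly toward tile edges so neighbours cross-fade."""
    def ramp(n):
        r = np.ones(n)
        k = min(overlap, n // 2)
        if k > 0:
            edge = np.arange(1, k + 1) / (k + 1)
            r[:k], r[-k:] = edge, edge[::-1]
        return r
    return np.outer(ramp(h), ramp(w))

def tiled_upscale(img, upscale_fn, scale, tile=512, overlap=64):
    """Upscale a 2-D image tile by tile and blend the overlapping regions."""
    h, w = img.shape
    out = np.zeros((h * scale, w * scale))
    weight_sum = np.zeros_like(out)
    for y in tile_starts(h, tile, overlap):
        for x in tile_starts(w, tile, overlap):
            up = upscale_fn(img[y:y + tile, x:x + tile])
            wts = feather_weights(up.shape[0], up.shape[1], overlap * scale)
            ys, xs = y * scale, x * scale
            out[ys:ys + up.shape[0], xs:xs + up.shape[1]] += up * wts
            weight_sum[ys:ys + up.shape[0], xs:xs + up.shape[1]] += wts
    return out / weight_sum  # normalise by accumulated weights

def nearest_2x(patch):
    """Placeholder 'model': nearest-neighbour 2x enlargement."""
    return np.repeat(np.repeat(patch, 2, axis=0), 2, axis=1)

image = np.random.default_rng(0).random((100, 130))
result = tiled_upscale(image, nearest_2x, scale=2, tile=48, overlap=8)
print(result.shape)  # (200, 260)
```

With a deterministic, purely local placeholder like `nearest_2x`, the tiled result matches a whole-image pass exactly. Seams appear only when the per-tile outputs disagree, which is what happens with a generative model that invents texture independently for each tile.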

Three tools dominate the prosumer surface. Magnific, now part of the Freepik AI Suite, supports 2x to 16x scaling with outputs up to 10,752 by 7,168 pixels (Magnific AI). Its Precision v2 mode added three purpose-built models (sublime, photo, and photo denoiser) explicitly to address hallucination complaints, including a high-fidelity setting that suppresses fabricated detail (Freepik Blog). Topaz Gigapixel runs locally, which matters for photographers handling private client work, and offers nine AI model presets. Topaz Labs discontinued perpetual licenses on October 3, 2025, moving to a Topaz Studio subscription (Topaz Labs Pricing); older tutorials referencing the one-time purchase are out of date. ComfyUI is the open-source workflow surface where prosumers chain diffusion models like SUPIR with custom samplers and tiling strategies. It is slower to set up than commercial tools, but has no model lock-in.

The relationship between these tools and the underlying research is loose. Magnific’s architecture is undisclosed beyond “proprietary deep-learning models.” Topaz’s models are similarly opaque. SUPIR, by contrast, is fully open — code, weights, and paper are all public — but the project has no formal release tags, and users pull weights directly from HuggingFace (SUPIR GitHub). Production deployments converge on Real-ESRGAN as the speed default and SUPIR or its successors as the quality-maximized option for offline batch processing.

ComfyUI workflows blur the line with AI image editing: upscaling and prompt-conditioned modification share the same diffusion infrastructure, so a single graph can both restore and reinterpret. Custom LoRA adapters for image generation can be layered into the upscaling pass to bias the prior toward a specific domain: anime restoration, vintage film grain, or product photography where the generic prior produces wrong textures.

What the Pixel Geometry Predicts

If a model fabricates plausible detail rather than recovers lost detail, three predictions follow.

If you upscale an image whose original captured something rare — a specific person’s facial features, a particular logo, distinctive license plate digits — expect the model to default toward the modal version of that category from its training distribution. The face will look generically like a face. The logo will look approximately correct but not legally identifiable. This is not a bug. The model is honestly reporting that it has no information to reconstruct the specific case.

If your upscaling output looks suspiciously sharp on a region that was a smear of pixels in the input, that sharpness is invented. The crispness does not validate accuracy. For forensic, medical, or evidentiary use, generative super-resolution is fundamentally inappropriate — the output is a plausible image, not a recovered one (Hallucination Score Paper).

If you switch from a GAN to a diffusion model and the texture quality improves, check whether you also increased model size, training data, or step count. The architectural choice may matter less than these adjacent variables.

Rule of thumb: generative upscalers reduce uncertainty about high-frequency content by replacing it with the model’s prior — useful when plausibility matters more than fidelity, dangerous when fidelity matters more than plausibility.

When it breaks: the failure mode that limits all generative super-resolution is hallucination of structurally significant content, such as faces that don’t match the subject, text that reads almost-but-not-quite the original characters, and fingerprints that look right but are not. There is no industry-standard benchmark for measuring it. The 2025 Hallucination Score paper proposed one, but adoption remains research-stage (Hallucination Score Paper).

The Data Says

AI image upscaling is not a method for recovering lost information. It is a method for generating new information that is statistically consistent with what was preserved. The two main lineages — GAN and diffusion — disagree on the mechanism but agree on the principle: the high-resolution output is sampled from a learned prior, not reconstructed from the low-resolution input. The practical consequences trace back to that single fact. Plausibility is not accuracy, and the most useful upscaling tools are the ones that let you choose between them.

AI-assisted content, human-reviewed. Images AI-generated.