Agent Frameworks: How LangGraph, CrewAI, and AutoGen Orchestrate LLMs

ELI5

An agent framework is the runtime that turns an LLM into a worker — wiring its reasoning to tools, memory, and a control loop. The model decides what to do; the framework executes, remembers, and recovers when something fails.

You can build a working LLM agent in a weekend with `while True` and a few function calls. Most teams do exactly that, until the agent has to handle a multi-step task, recover from a tool failure, or pick up where it left off after a crash. That is when the homemade loop unravels and the real question surfaces: which framework’s abstraction is actually shaped like the work you are doing?

Three names dominate the answer in 2026. LangGraph, CrewAI, and AutoGen all claim to orchestrate LLM agents, and all three behave differently the moment the agent leaves the demo and starts handling real users. The differences are not stylistic. They are architectural — and they surface exactly where your workflow breaks.

What an Agent Framework Actually Is

Before the comparison, the category itself needs a sharper edge. The phrase “agent framework” gets used for everything from a thin prompt wrapper to a full multi-process runtime, which is part of why teams pick the wrong one.

What is an agent framework in AI?

An agent framework is the layer of software that takes an LLM’s textual decisions and turns them into executable actions inside a controlled loop. The model proposes; the framework disposes. Concretely, it provides four things the bare model cannot: a way to call external tools with structured arguments, a memory store that survives between turns, a planner or controller that decides when the loop ends, and a transport for state — what has happened so far, what the agent observed, what it intends to do next.
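The four responsibilities above fit in a few dozen lines, which is a useful baseline before comparing frameworks. The following is a minimal sketch, not any framework's API: `fake_llm`, `TOOLS`, and `run_agent` are hypothetical names, and the "model" is a stub that emits JSON decisions so the example runs offline.

```python
import json
from typing import Callable

def fake_llm(history: list[dict]) -> str:
    """Hypothetical stand-in for a model call; returns a JSON-encoded decision."""
    tool_results = [m for m in history if m["role"] == "tool"]
    if not tool_results:
        # Nothing observed yet: ask for a tool call with structured arguments.
        return json.dumps({"action": "add", "args": {"a": 2, "b": 3}})
    return json.dumps({"action": "finish",
                       "answer": f"The sum is {tool_results[-1]['content']}"})

# Tool layer: plain functions keyed by the name the model may request.
TOOLS: dict[str, Callable[..., object]] = {"add": lambda a, b: a + b}

def run_agent(task: str, llm=fake_llm, max_steps: int = 5) -> str:
    history = [{"role": "user", "content": task}]      # memory and state transport
    for _ in range(max_steps):                          # controller: bounded loop
        decision = json.loads(llm(history))
        if decision["action"] == "finish":
            return decision["answer"]
        tool = TOOLS.get(decision["action"])
        if tool is None:
            result = f"error: unknown tool {decision['action']}"
        else:
            try:
                result = tool(**decision["args"])
            except Exception as exc:                    # record the failure, keep going
                result = f"error: {exc}"
        history.append({"role": "tool", "content": str(result)})
    return "stopped: step budget exhausted"
```

Everything a framework adds (durable state, routing, retries) is an elaboration of one of the commented lines in this loop.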

The community formula has converged on a compact identity: “Agent = LLM + Tools + Memory + Planning/Control” (Prompting Guide). The framework’s job is to be the surrounding apparatus that makes the equals sign hold under load.

That definition is intentionally narrow. A framework is not the model. It is not the tools either; those are just APIs and Python functions on the other side of a schema. The framework is the agent planning and reasoning substrate: the runtime that decides what runs next, what gets remembered, and what to do when a step fails.

Notice the consequence: choosing a framework is choosing a control flow, not a feature list. Two frameworks can both list “tool calling” and “memory” as features and still behave like different machines, because the abstraction that carries the loop is different in each.

That is the lens for what comes next.

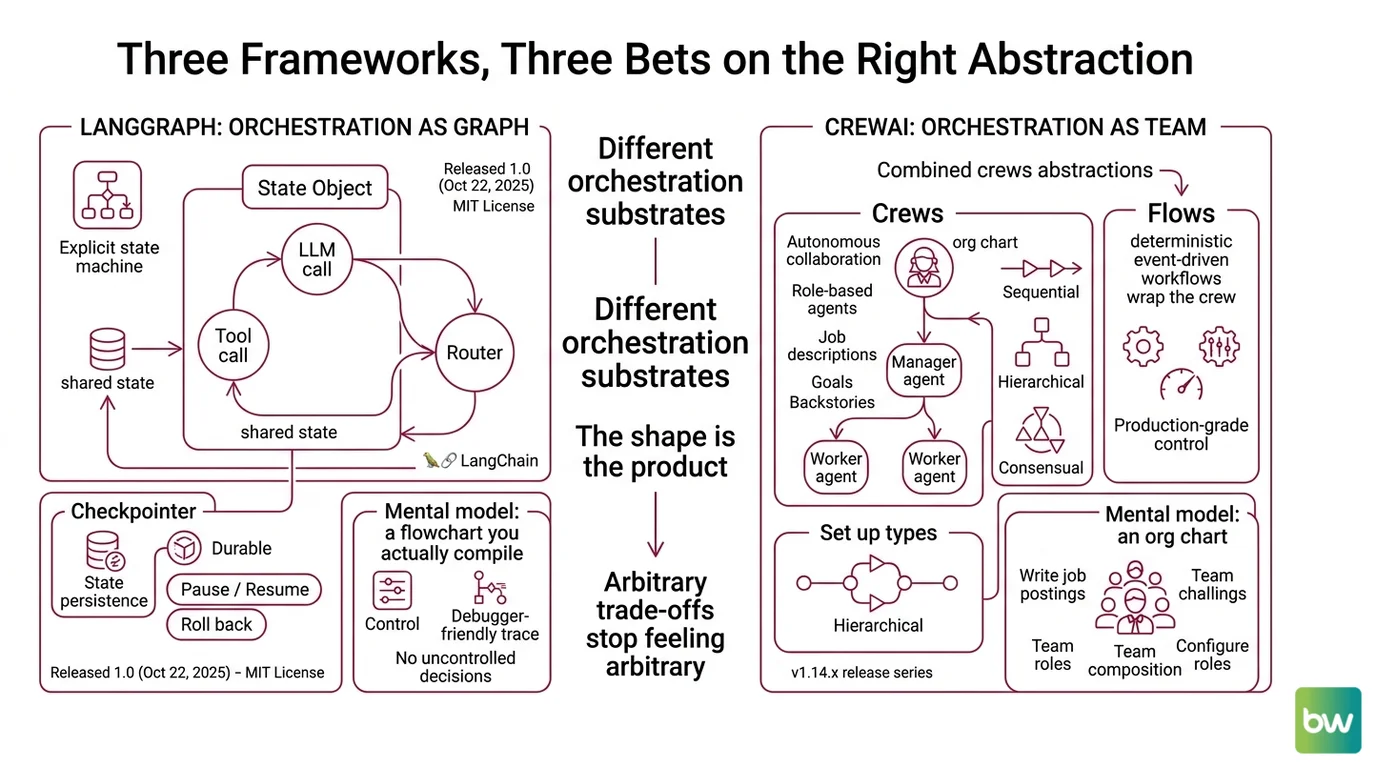

Three Frameworks, Three Bets on the Right Abstraction

LangGraph, CrewAI, and AutoGen each picked a different shape for the orchestration substrate. The shape is the product. Once you see it, the trade-offs stop feeling arbitrary.

How do agent frameworks like LangGraph and CrewAI orchestrate LLM calls, tools, and memory?

LangGraph models the agent as a graph. Released as a 1.0 generally-available milestone on October 22, 2025 under the MIT license (LangChain Blog), LangGraph represents the agent as an explicit state machine: nodes are functions (an LLM call, a tool call, a router), edges are transitions, and a shared State object flows through every node carrying everything the agent has seen and decided. The runtime persists that state to a checkpointer, so the same conversation can be paused, resumed across processes, or rolled back to an earlier turn. LangChain Docs describes it as “a low-level orchestration framework and runtime for building stateful, durable, long-running agents as graphs.”

The mental model is a flowchart you actually compile. You declare every legal transition; the LLM never decides “where do I go next” outside the structure you drew. That gives you control and a debugger-friendly trace, at the cost of writing more graph than you would write loop.
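To make the graph idea concrete, here is a framework-free sketch of the pattern: nodes as functions, edges as routers, and a checkpoint taken before every step. It deliberately does not use LangGraph's API; `TinyGraph`, `END`, and `build_demo_graph` are hypothetical names, and the checkpointer is an in-memory list rather than a durable store.

```python
import copy
from typing import Callable

State = dict
END = "__end__"

class TinyGraph:
    def __init__(self) -> None:
        self.nodes: dict[str, Callable[[State], State]] = {}
        self.edges: dict[str, Callable[[State], str]] = {}
        self.checkpoints: list[tuple[str, State]] = []   # in-memory "checkpointer"

    def add_node(self, name: str, fn: Callable[[State], State]) -> None:
        self.nodes[name] = fn

    def add_edge(self, src: str, router: Callable[[State], str]) -> None:
        self.edges[src] = router

    def run(self, start: str, state: State) -> State:
        current = start
        while current != END:
            # Snapshot (node about to run, state) so the run can resume from here.
            self.checkpoints.append((current, copy.deepcopy(state)))
            state = self.nodes[current](state)
            current = self.edges[current](state)
        return state

    def resume(self, index: int) -> State:
        """Roll back to checkpoint `index` and replay from that node."""
        node, state = self.checkpoints[index]
        self.checkpoints = self.checkpoints[:index]
        return self.run(node, copy.deepcopy(state))

def build_demo_graph() -> TinyGraph:
    g = TinyGraph()
    g.add_node("draft", lambda s: {**s, "attempts": s.get("attempts", 0) + 1})
    g.add_node("review", lambda s: {**s, "approved": s["attempts"] >= 2})
    g.add_edge("draft", lambda s: "review")
    g.add_edge("review", lambda s: END if s["approved"] else "draft")
    return g
```

Calling `build_demo_graph().run("draft", {"topic": "agents"})` walks draft, review, draft, review and stops once the review node approves. Every transition the run can take is visible in the `add_edge` calls, which is the property the flowchart analogy is pointing at.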

CrewAI models the agent as a role inside a team. CrewAI is independent of LangChain and combines two abstractions side by side: Crews — autonomous, role-based collaborations where agents have job descriptions, goals, and backstories — and Flows, deterministic event-driven workflows that wrap the crew when you need production-grade control (CrewAI Docs). The processes you can configure for a crew include sequential, hierarchical (a manager agent delegates to workers), and consensual.

The mental model is an org chart. You stop writing transitions and start writing job postings. CrewAI is currently in the v1.14.x release series. The patch number moves on a roughly weekly cadence, so pin the minor version, not the patch.
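The org-chart idea can be sketched without the library. The following is an illustration of a role-based sequential process, not CrewAI's API: `RoleAgent`, `run_sequential`, and `stub_llm` are hypothetical names, and the model call is a stub so the example runs offline.

```python
from dataclasses import dataclass
from typing import Callable

@dataclass
class RoleAgent:
    role: str
    goal: str
    backstory: str

    def prompt(self, task: str, context: str) -> str:
        # The "job posting" becomes the preamble of every prompt this agent sends.
        return (f"You are the {self.role}. {self.backstory}\n"
                f"Goal: {self.goal}\n"
                f"Task: {task}\n"
                f"Context so far: {context or 'none'}")

def run_sequential(assignments: list[tuple[RoleAgent, str]],
                   llm: Callable[[str], str]) -> list[str]:
    """Run each (agent, task) pair in order, passing the previous output forward."""
    outputs: list[str] = []
    context = ""
    for agent, task in assignments:
        result = llm(agent.prompt(task, context))
        outputs.append(result)
        context = result
    return outputs

def stub_llm(prompt: str) -> str:
    """Hypothetical model stand-in: reports which role was asked to act."""
    role = prompt.split("You are the ", 1)[1].split(".", 1)[0]
    return f"{role}: done"

crew = [
    (RoleAgent("researcher", "Collect sources", "A former librarian."),
     "Find three references on agent frameworks"),
    (RoleAgent("writer", "Produce a summary", "A technical editor."),
     "Summarize the references"),
]
```

`run_sequential(crew, stub_llm)` returns one output per role in order. Note how little control flow is written by hand; the ordering of the list is the process.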

AutoGen models the agent as an actor exchanging messages. AutoGen v0.4 is asynchronous and event-driven, organized into three layers — Core, AgentChat, and Extensions — with cross-language support across Python and .NET (Microsoft Research Blog). Agents do not call each other directly; they send messages to channels, and the runtime dispatches.

But the trajectory matters more than the architecture. AutoGen is now in maintenance mode — bug fixes and security patches only — and Microsoft is directing new users to the Microsoft Agent Framework, which reached 1.0 GA on April 3, 2026 (Microsoft Learn). Microsoft Agent Framework merges AutoGen and Semantic Kernel into one stack with Python and .NET SDKs. AutoGen is still useful as a reference implementation of the actor model for agents, but it is no longer the strategic destination.
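The actor pattern itself is small enough to sketch with the standard library. This is an assumption-laden illustration of message dispatch, not AutoGen's or Microsoft Agent Framework's API: `Message`, `Runtime`, `planner`, `worker`, and `demo` are hypothetical names.

```python
import asyncio
from dataclasses import dataclass

@dataclass
class Message:
    topic: str
    sender: str
    body: str

class Runtime:
    """Agents never call each other; they publish, and the runtime dispatches."""
    def __init__(self) -> None:
        self.queue: asyncio.Queue = asyncio.Queue()
        self.subscribers: dict[str, list] = {}
        self.log: list[Message] = []

    def subscribe(self, topic: str, handler) -> None:
        self.subscribers.setdefault(topic, []).append(handler)

    async def publish(self, msg: Message) -> None:
        await self.queue.put(msg)

    async def run_until_idle(self) -> None:
        while not self.queue.empty():
            msg = await self.queue.get()
            self.log.append(msg)
            for handler in self.subscribers.get(msg.topic, []):
                await handler(msg, self)

async def planner(msg: Message, rt: Runtime) -> None:
    await rt.publish(Message("work", "planner", f"research {msg.body}"))

async def worker(msg: Message, rt: Runtime) -> None:
    await rt.publish(Message("done", "worker", f"finished: {msg.body}"))

async def demo() -> list[Message]:
    rt = Runtime()                      # created inside the running event loop
    rt.subscribe("task", planner)
    rt.subscribe("work", worker)
    await rt.publish(Message("task", "user", "agent frameworks"))
    await rt.run_until_idle()
    return rt.log
```

Running `asyncio.run(demo())` yields three logged messages: the user's task, the planner's work item, and the worker's completion. The planner and worker share no references to each other, only topic names.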

That is the architectural divergence in one paragraph each. Graph. Org chart. Actors. The framework is the abstraction; the abstraction is the bet.

The Components Every Framework Has to Solve

Underneath the architectural bet, all three frameworks have to solve the same set of problems. Naming them makes the comparison precise instead of religious.

What are the core components of an agent framework: planner, executor, memory, and tool layer?

The standard decomposition lists six moving parts (IBM Think): the LLM as the reasoning brain, a planner that decomposes a task into steps, memory that holds both short-term context and longer-term facts, a tool layer that wraps APIs and functions with schemas the model can call, an orchestrator that routes work, and executor agents that actually run the steps. Different docs cut the cake differently — some merge planner and orchestrator, some split memory into episodic and semantic — but those six map cleanly onto every implementation worth using.

| Component | What it does | LangGraph | CrewAI | AutoGen v0.4 |
|---|---|---|---|---|
| LLM | Generates the next action as text | Any provider via LangChain wrappers | Provider-agnostic via litellm | Pluggable model clients |
| Planner | Decomposes goal into steps | Implicit in graph structure you draw | Agent role/goal/backstory + manager agent | Group chat or planner agent |
| Memory | Carries state between turns | State object + checkpointer (durable) | Short-term per crew run + entity memory | Shared message context per group |
| Tool layer | Lets the model call external code | Functions registered as nodes | `@tool` decorators on Python functions | Tool registration via Extensions |
| Orchestrator | Decides what runs next | Graph edges (deterministic) | Process: sequential, hierarchical, consensual | Async event dispatch |
| Executor | Performs the chosen action | Node function | Agent acting on a task | Actor handling a message |

The pattern in the table is the explanation. LangGraph pushes orchestration into a structure you write by hand and rewards you with a checkpointable agent memory layer. CrewAI pushes orchestration into role descriptions and processes and rewards you with less code per agent. AutoGen pushes orchestration into asynchronous message passing and rewards you with a pattern that scales across processes, at the price, currently, of being on a maintenance track.
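Of the six rows, the tool layer is the easiest to show in isolation. The sketch below derives a schema from a function signature and validates the model's arguments before calling. It is an illustration only: `register_tool`, `call_tool`, and `REGISTRY` are hypothetical names, not any framework's decorator, and the type check assumes annotations are plain classes such as `str` or `int`.

```python
import inspect
from typing import Any

REGISTRY: dict[str, dict[str, Any]] = {}

def register_tool(fn):
    """Record the function together with a schema derived from its signature."""
    params = {name: p.annotation
              for name, p in inspect.signature(fn).parameters.items()}
    REGISTRY[fn.__name__] = {"fn": fn, "params": params,
                             "doc": (fn.__doc__ or "").strip()}
    return fn

def call_tool(name: str, args: dict[str, Any]) -> Any:
    """Validate model-supplied arguments against the schema, then call the tool."""
    if name not in REGISTRY:
        raise KeyError(f"unknown tool: {name}")
    spec = REGISTRY[name]
    missing = set(spec["params"]) - set(args)
    extra = set(args) - set(spec["params"])
    if missing or extra:
        raise ValueError(f"bad arguments: missing={sorted(missing)} extra={sorted(extra)}")
    for key, expected in spec["params"].items():
        # Assumption: annotations are plain classes; unannotated params are skipped.
        if expected is not inspect.Parameter.empty and not isinstance(args[key], expected):
            raise TypeError(f"{key} must be {expected.__name__}")
    return spec["fn"](**args)

@register_tool
def word_count(text: str) -> int:
    """Count whitespace-separated words."""
    return len(text.split())
```

The point of the validation step is that a malformed call from the model becomes an error the loop can feed back, rather than an exception deep inside the tool.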

There is a subtle observation worth flagging. Agent framework decompositions are not standardized. Some authors prefer profile/memory/planning/action; some prefer orchestrator/executor/memory/tools. Both are valid, and the disagreement is not noise — it reflects a genuinely young field where the right cuts are still being argued out. Pick one decomposition, use it consistently, and treat any framework documentation that quietly mixes two as a sign you should reread it slowly.

This is also where multi-agent systems, as a category, re-enter the picture: the moment more than one executor exists, the orchestrator stops being a routing detail and starts being the product. CrewAI’s hierarchical process and AutoGen’s group chat are both attempts to give that orchestrator a name.

Compatibility & maintenance notes:

- AutoGen — maintenance mode: No new features are landing; bug fixes and security patches only. New builds should target Microsoft Agent Framework 1.0 (released April 3, 2026), which absorbs AutoGen and Semantic Kernel into a single stack (Microsoft Learn).

- AutoGen v0.4 breaking change: v0.4 was an async/event-driven rewrite of v0.2. Tutorials and code samples written for v0.2 will not run.

- LangGraph 1.0 deprecation: `langgraph.prebuilt` is deprecated; the same functionality now lives under `langchain.agents` (LangChain Changelog). Existing imports will need to be updated.

- CrewAI 1.14.0 removal: `CodeInterpreterTool` and the related code-execution parameters were removed in 1.14.0; agents that relied on in-process code execution need an external sandbox now.

- CrewAI litellm bump (resolved): litellm was bumped to >=1.83.0 to address CVE-2026-35030, and gitpython was upgraded for a path-traversal CVE. Both are fixed in current 1.14.x releases.

What the Architecture Predicts

The mechanism is now concrete enough to make predictions. These are if/then statements you can test against your own workflow before committing to a framework.

- If your task has a fixed control flow with audit requirements, LangGraph’s explicit graph and durable state will pay back the extra ceremony, because every transition is something a reviewer can read.

- If your task is intrinsically collaborative — research, writing, review chains — CrewAI’s role abstraction will produce shorter code, because you are describing the team you would have hired anyway.

- If your task needs to run across processes or languages, the actor model behind AutoGen (and Microsoft Agent Framework as the supported successor) is the architecture that survives that boundary, because messages serialize where shared state does not.

- If your task changes shape every week, choose the framework whose abstraction lets you delete the most code, not the one with the most features. Optionality is a real cost.
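The claim that messages serialize where shared state does not can be checked with the standard library. In this sketch, `ToolResult`, `SharedState`, `to_wire`, `from_wire`, and `can_pickle` are hypothetical names chosen for illustration; the shared state holds a lock, which is a typical reason in-process state cannot cross a process boundary.

```python
import json
import pickle
import threading
from dataclasses import asdict, dataclass

@dataclass
class ToolResult:
    sender: str
    recipient: str
    payload: dict

def to_wire(msg: ToolResult) -> bytes:
    """A message is plain data, so it round-trips through JSON."""
    return json.dumps(asdict(msg)).encode("utf-8")

def from_wire(raw: bytes) -> ToolResult:
    return ToolResult(**json.loads(raw.decode("utf-8")))

class SharedState:
    """In-process state guarded by a lock; the lock has no meaning elsewhere."""
    def __init__(self) -> None:
        self.lock = threading.Lock()
        self.items: list[str] = []

def can_pickle(obj: object) -> bool:
    try:
        pickle.dumps(obj)
        return True
    except (TypeError, AttributeError, pickle.PicklingError):
        return False
```

A `ToolResult` survives `from_wire(to_wire(msg))` unchanged and could be read by a process written in another language, while `can_pickle(SharedState())` is false because the lock cannot be serialized.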

The practical translation: pick by control flow shape first, ecosystem second. Most “framework regret” in long-running agents traces back to a workflow whose actual structure was incompatible with the abstraction the team chose during the demo.

Rule of thumb: Draw your agent’s control flow on paper before reading any framework’s tutorial. The framework whose abstraction matches your drawing with the fewest workarounds is the right one.

When it breaks: Every framework hides a class of bugs behind its abstraction. LangGraph hides them in graph design — a missing edge produces an agent that silently halts on a state it cannot route. CrewAI hides them in role drift — vague backstories produce agents that confidently do the wrong job. AutoGen hides them in async ordering — a message arriving in the wrong sequence corrupts shared context. The framework is not the bug, but it shapes which bug is yours.
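The missing-edge failure is the one most amenable to a static check. The following is a generic sketch over a plain description of a graph, not a LangGraph feature; `validate_graph` and its parameters are hypothetical names.

```python
def validate_graph(nodes: set[str],
                   edges: dict[str, set[str]],
                   terminals: set[str]) -> list[str]:
    """Report nodes that cannot route anywhere and edges that point nowhere."""
    problems: list[str] = []
    for node in sorted(nodes):
        targets = edges.get(node, set())
        if not targets and node not in terminals:
            problems.append(f"{node}: no outgoing edge and not terminal")
        for target in sorted(targets):
            if target not in nodes:
                problems.append(f"{node}: edge to undeclared node {target}")
    return problems
```

For example, with nodes `plan`, `act`, and `summarize`, and an edge from `act` to the misspelled `summarise`, the function returns a single problem naming that edge. Running a check like this before deployment turns a silent halt into a failed build.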

A Quieter Observation About Convergence

The three architectures look opposed, but they are converging. LangGraph 1.0 added higher-level agent abstractions on top of its graph. CrewAI Flows added deterministic control on top of its crews. Microsoft Agent Framework merges AutoGen’s actor model with Semantic Kernel’s planning primitives. The endpoints are pulling toward each other.

The pattern is familiar from earlier infrastructure cycles: the first generation argues about abstractions, the second generation discovers each abstraction needs the others, and the third generation arrives at a unified runtime that exposes them as layers. We are somewhere between the first and second generation right now. That is exactly the period when picking by today’s strengths is more dangerous than picking by tomorrow’s direction.

The Data Says

Agent frameworks differ less in what they do than in the shape they impose on your control flow — graph, org chart, or actor mesh. LangGraph’s 1.0 release is a stable bet on durable, stateful graphs (LangChain Blog); CrewAI’s 1.14.x series is a stable bet on role-based crews backed by deterministic flows; AutoGen is in maintenance and its successor is Microsoft Agent Framework 1.0 (Microsoft Learn). The right choice is the one whose abstraction matches the structure of your workflow, not the one whose tutorial demos best.

AI-assisted content, human-reviewed. Images AI-generated.