Agent State Management: How Checkpointing Persists Memory Across Turns


ELI5

Agent state management is how an AI agent remembers. Between every LLM call, a snapshot of its working memory — messages, tool results, plans — gets written to storage and reloaded on the next turn. No snapshot, no continuity.

A new ReAct agent works perfectly the first time you run it. You ask a question, the model picks a tool, the tool returns a result, the model answers. Then you ask a follow-up that depends on what just happened — and the agent looks at you blankly. Every step in between has been forgotten. Not because the model is broken, but because nothing wrote it down.

That blankness is the surface symptom of a deeper truth: the LLM at the center of every agent is stateless. Each call to it is an independent inference pass over a fresh context window. Anything the agent appears to “remember” (the user’s name, a half-finished plan, the result of step three) exists only because something outside the model wrote it down between calls and read it back at the start of the next one.

That something is the state management layer. According to LangChain’s State of Agent Engineering report, over 60% of agent production incidents trace back to it (LangChain). It is the most boring-sounding part of the agent stack and the part that breaks most often.

The Anatomy of an Agent’s Working Memory

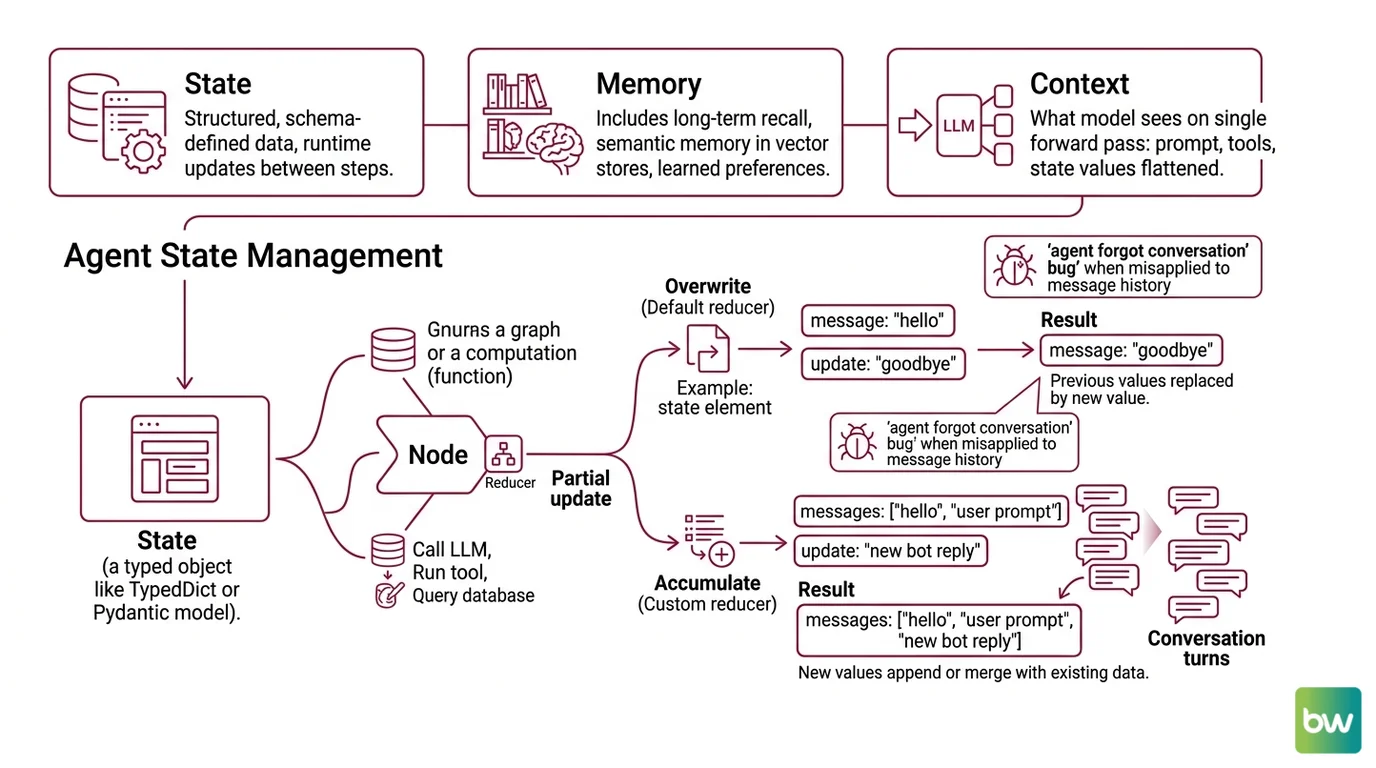

Before the mechanism, the vocabulary. The terms state, memory, and context get used interchangeably in agent tutorials, and the conflation is where most early bugs are born. State is structured, schema-defined data that the runtime updates between steps. Memory, in the sense of agent memory systems, is the broader category that includes long-term recall across sessions, semantic memory in vector stores, and learned preferences. Context is what the model sees on a single call: prompt, tools, current state values, all flattened into tokens.

This article is about state. The narrow, structural, schema-shaped layer the agent runtime owns directly.

What is agent state management?

Agent state management is the discipline of capturing, updating, and persisting the data an agent needs across reasoning steps and conversation turns. In the canonical reference implementation, LangGraph’s StateGraph, the State is a typed object (usually a TypedDict or a Pydantic model) that flows through a graph of computation nodes. Each node is a function that reads the current state, does something (calls an LLM, runs a tool, queries a database), and returns a partial update.

The update is not applied by overwriting the whole object. It is applied by a reducer — a small function that defines, per field, how new values combine with old ones. The default reducer overwrites the value. A custom reducer might append to a list (so messages accumulate instead of replacing each other), merge two dictionaries, or take the maximum of two numbers, per LangChain Docs.

That choice — overwrite versus accumulate — is where most “the agent forgot the conversation” bugs live. If messages is declared without a list-merging reducer, every node that returns a new message replaces the old ones instead of adding to them. The agent has technically updated state. It has also wiped the history.
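The overwrite-versus-accumulate distinction can be sketched in plain Python. This is a minimal illustration, not LangGraph’s code: it assumes reducers are attached with typing.Annotated (the convention LangGraph’s docs use, e.g. operator.add on a list field), and apply_update is a hypothetical stand-in for the runtime’s merge step. The same partial update wipes the history under the default reducer and extends it under an append reducer.

```python
import operator
from typing import Annotated, Any, TypedDict, get_type_hints


class ForgetfulState(TypedDict):
    # No reducer on messages: the default behavior overwrites the field.
    messages: list[str]
    current_plan: str


class RememberingState(TypedDict):
    # operator.add on two lists concatenates them, so messages accumulate.
    messages: Annotated[list[str], operator.add]
    current_plan: str


def apply_update(schema: type, state: dict[str, Any], update: dict[str, Any]) -> dict[str, Any]:
    """Hypothetical merge step: apply a node's partial update field by field."""
    hints = get_type_hints(schema, include_extras=True)  # keep Annotated metadata
    new_state = dict(state)
    for key, value in update.items():
        metadata = getattr(hints[key], "__metadata__", ())
        if metadata and key in new_state:
            reducer = metadata[0]
            new_state[key] = reducer(new_state[key], value)  # accumulate
        else:
            new_state[key] = value  # default reducer: overwrite
    return new_state


state = {"messages": ["user: hi"], "current_plan": "greet"}
update = {"messages": ["ai: hello!"], "current_plan": "answer"}

print(apply_update(ForgetfulState, state, update)["messages"])    # ['ai: hello!']
print(apply_update(RememberingState, state, update)["messages"])  # ['user: hi', 'ai: hello!']
```

Note that current_plan is overwritten in both schemas, which is usually what you want for a field that represents "the plan right now."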

What are the components of an agent state management system?

Every state-management implementation worth using has the same five-part anatomy, regardless of the framework’s marketing language:

- State schema: a typed structure declaring the fields the agent tracks. Messages, plans, tool outputs, scratchpad notes, user metadata. The schema is the contract between nodes.

- Reducers: per-field merge functions that turn a partial update into the next state. The reducer for messages is almost always “append”; the reducer for current_plan is almost always “overwrite.”

- Nodes: units of computation that read state and return partial updates. An LLM call is one node. A tool execution is another. The graph defines how they connect.

- Checkpointer: the persistence layer. After each super-step (a synchronized batch of node executions), the checkpointer writes a StateSnapshot to a backing store. LangGraph’s checkpointer implementations include InMemorySaver, SqliteSaver/AsyncSqliteSaver, PostgresSaver/AsyncPostgresSaver, and CosmosDBSaver, per LangChain Docs; the SQLite and Postgres savers are installed from the separate langgraph-checkpoint-sqlite and langgraph-checkpoint-postgres packages.

- Thread: a unique identifier (thread_id) that groups checkpoints belonging to one conversation or task. To resume an agent, you pass the same thread_id in the runtime config; the checkpointer loads the latest snapshot for that thread.
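The five parts fit together in a few dozen lines. The sketch below is a toy that assumes an in-memory store: AgentState, REDUCERS, fake_llm_node, MiniCheckpointer, merge, and invoke are all hypothetical names, and only the runtime-config shape {"configurable": {"thread_id": ...}} mirrors LangGraph’s convention.

```python
import operator
from typing import Any, Callable, Optional, TypedDict


class AgentState(TypedDict):
    # 1. State schema: the contract every node reads and writes.
    messages: list[str]
    turns: int


# 2. Reducers: per-field merge rules (append messages, overwrite the turn counter).
REDUCERS: dict[str, Callable[[Any, Any], Any]] = {
    "messages": operator.add,
    "turns": lambda old, new: new,
}


# 3. Node: reads state, returns a partial update. This one fakes an LLM reply.
def fake_llm_node(state: AgentState) -> dict[str, Any]:
    return {"messages": [f"ai: reply #{state['turns'] + 1}"], "turns": state["turns"] + 1}


# 4. Checkpointer: keeps every snapshot per thread in memory (hypothetical).
class MiniCheckpointer:
    def __init__(self) -> None:
        self._threads: dict[str, list[dict[str, Any]]] = {}

    def latest(self, thread_id: str) -> Optional[dict[str, Any]]:
        snapshots = self._threads.get(thread_id)
        return snapshots[-1] if snapshots else None

    def put(self, thread_id: str, state: dict[str, Any]) -> None:
        self._threads.setdefault(thread_id, []).append(state)


def merge(state: dict[str, Any], update: dict[str, Any]) -> dict[str, Any]:
    # Apply each field's reducer; returns a new dict so stored snapshots stay intact.
    merged = dict(state)
    for key, value in update.items():
        merged[key] = REDUCERS[key](merged[key], value)
    return merged


def invoke(user_msg: str, config: dict[str, Any], saver: MiniCheckpointer) -> dict[str, Any]:
    # 5. Thread: the thread_id in the runtime config selects which history to resume.
    thread_id = config["configurable"]["thread_id"]
    state = saver.latest(thread_id) or {"messages": [], "turns": 0}
    state = merge(state, {"messages": [f"user: {user_msg}"]})
    state = merge(state, fake_llm_node(state))
    saver.put(thread_id, state)  # persist after the step completes
    return state


saver = MiniCheckpointer()
cfg = {"configurable": {"thread_id": "user-42"}}
invoke("my name is Ada", cfg, saver)
state = invoke("what's my name?", cfg, saver)
print(len(state["messages"]), state["turns"])  # 4 2
other = invoke("hello", {"configurable": {"thread_id": "user-7"}}, saver)
print(len(other["messages"]))  # 2
```

Two calls on thread user-42 accumulate four messages, while a different thread_id starts from an empty history: the thread is the address, and the checkpointer only answers for the address it is given.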

The OpenAI Agents SDK calls the equivalent abstraction a Session, with backends including SQLiteSession, RedisSession, SQLAlchemySession, MongoDBSession, and OpenAIConversationsSession (OpenAI Agents SDK Docs). The names differ; the structure does not. Once you see the schema-reducers-nodes-checkpointer-thread pattern, you see it in every agent framework.

The Checkpoint as a Time Machine

A checkpoint is not just a backup. It is a positioned, addressable point in the agent’s execution graph — and once execution becomes addressable, the runtime gains capabilities that have nothing to do with memory.

How does agent state management work under the hood?

The mechanism rests on a single insight: an agent is a graph traversal, and every edge is an opportunity to write state to disk.

Consider what happens during one turn of a LangGraph agent. The runtime receives an input. It identifies the entry node. It executes that node — typically an LLM call — which returns a partial state update. The reducer combines the update with the existing state. The runtime writes a StateSnapshot containing seven things, per LangChain Docs: values (the current state), next (which nodes are scheduled to run), config (including the thread_id), metadata, created_at, parent_config (the previous snapshot in this thread), and tasks (any pending node executions). Then it picks the next node. Then it runs again.
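The turn described above can be simulated as a loop that runs one node per super-step and records a snapshot after each. Snapshot is a hypothetical dataclass that only mirrors the seven StateSnapshot fields listed in the text; it is not LangGraph’s class. Real super-steps can run several nodes in parallel, and the overwrite-only merge here is a simplification.

```python
import uuid
from dataclasses import dataclass
from datetime import datetime, timezone
from typing import Any, Callable, Optional

Node = Callable[[dict[str, Any]], dict[str, Any]]


@dataclass(frozen=True)
class Snapshot:
    """Hypothetical mirror of the seven StateSnapshot fields named in the text."""
    values: dict[str, Any]
    next: tuple[str, ...]
    config: dict[str, Any]
    metadata: dict[str, Any]
    created_at: str
    parent_config: Optional[dict[str, Any]]
    tasks: tuple[str, ...]


def run_graph(state: dict[str, Any], nodes: dict[str, Node], edges: dict[str, str],
              entry: str, thread_id: str) -> list[Snapshot]:
    """Run one node per super-step and record a snapshot after each step."""
    snapshots: list[Snapshot] = []
    parent: Optional[dict[str, Any]] = None
    current: Optional[str] = entry
    step = 0
    while current is not None:
        update = nodes[current](state)
        state = {**state, **update}  # overwrite-only merge, for brevity
        nxt = edges.get(current)  # None means the graph ends here
        scheduled = (nxt,) if nxt else ()
        config = {"configurable": {"thread_id": thread_id,
                                   "checkpoint_id": str(uuid.uuid4())}}
        snapshots.append(Snapshot(
            values=state,
            next=scheduled,
            config=config,
            metadata={"step": step, "writes": {current: update}},
            created_at=datetime.now(timezone.utc).isoformat(),
            parent_config=parent,  # back-pointer to the previous snapshot
            tasks=scheduled,
        ))
        parent, current, step = config, nxt, step + 1
    return snapshots


nodes: dict[str, Node] = {
    "agent": lambda s: {"tool_call": "search"},
    "tools": lambda s: {"tool_result": "42"},
    "respond": lambda s: {"answer": f"The result is {s['tool_result']}"},
}
edges = {"agent": "tools", "tools": "respond"}
history = run_graph({"question": "?"}, nodes, edges, entry="agent", thread_id="t-1")
for snap in history:
    print(snap.metadata["step"], snap.next, snap.parent_config is None)
# 0 ('tools',) True
# 1 ('respond',) False
# 2 () False
```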

Each snapshot points back at its parent. The thread is a linked list of snapshots, ordered in time, persisted in the checkpointer’s backing store. The default serializer, JsonPlusSerializer, encodes values with ormsgpack and falls back to extended JSON for LangChain message types, datetimes, and enums (LangChain Docs).
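The parent pointer is what turns a thread into a walkable history. In this minimal sketch, a plain dict stands in for the checkpointer’s backing store, and json with default=str stands in for JsonPlusSerializer (it does not use ormsgpack); put_checkpoint and history are hypothetical helpers. Walking from the newest checkpoint back through parent_id returns the thread newest-first.

```python
import json
from datetime import datetime, timezone
from typing import Any, Iterator, Optional

# Hypothetical backing store: checkpoint_id -> serialized snapshot bytes.
store: dict[str, bytes] = {}


def put_checkpoint(checkpoint_id: str, parent_id: Optional[str], values: dict[str, Any]) -> None:
    record = {
        "checkpoint_id": checkpoint_id,
        "parent_id": parent_id,  # the back-pointer that makes the thread a linked list
        "created_at": datetime.now(timezone.utc),
        "values": values,
    }
    # Stand-in serializer: datetimes fall back to their string form via default=str.
    store[checkpoint_id] = json.dumps(record, default=str).encode()


def history(latest_id: Optional[str]) -> Iterator[dict[str, Any]]:
    """Walk parent pointers from the newest checkpoint back to the first."""
    while latest_id is not None:
        record = json.loads(store[latest_id])
        yield record
        latest_id = record["parent_id"]


put_checkpoint("c1", None, {"messages": ["user: hi"]})
put_checkpoint("c2", "c1", {"messages": ["user: hi", "ai: hello"]})
put_checkpoint("c3", "c2", {"messages": ["user: hi", "ai: hello", "user: bye"]})
print([r["checkpoint_id"] for r in history("c3")])  # ['c3', 'c2', 'c1']
```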

What this enables is more interesting than what it stores. Because every super-step writes a snapshot with a unique checkpoint_id, the runtime can do four things that an in-memory agent cannot:

- Conversational memory that survives process restarts. Lose the worker, keep the conversation.

- Time travel: replay execution from any past checkpoint, or fork a new branch from one. This is how you A/B-test prompt changes against the same intermediate state.

- Human-in-the-loop: pause execution at a node, let a human review or edit the state, and resume from the same checkpoint_id with the modified values.

- Fault-tolerant resume: if a tool call crashes mid-graph, the agent restarts from the last successful super-step, not from scratch.
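Time travel and human-in-the-loop both reduce to one operation: load a past checkpoint, optionally edit its values, and continue on a new branch whose parent is that checkpoint. CheckpointStore and respond below are hypothetical names, and the “model call” is just a formatted string; the point of the sketch is that the original branch is never overwritten.

```python
import itertools
from typing import Any, Optional


class CheckpointStore:
    """Hypothetical append-only store: checkpoints are added, never overwritten."""

    def __init__(self) -> None:
        self._records: dict[str, dict[str, Any]] = {}
        self._ids = itertools.count(1)

    def put(self, values: dict[str, Any], parent_id: Optional[str]) -> str:
        checkpoint_id = f"ckpt-{next(self._ids)}"
        self._records[checkpoint_id] = {"values": dict(values), "parent_id": parent_id}
        return checkpoint_id

    def get(self, checkpoint_id: str) -> dict[str, Any]:
        return dict(self._records[checkpoint_id]["values"])

    def parent(self, checkpoint_id: str) -> Optional[str]:
        return self._records[checkpoint_id]["parent_id"]

    def fork(self, checkpoint_id: str, edits: dict[str, Any]) -> str:
        """Start a new branch from a past checkpoint with edited values."""
        return self.put({**self.get(checkpoint_id), **edits}, parent_id=checkpoint_id)


def respond(store: CheckpointStore, from_id: str) -> str:
    """Hypothetical node: resume from a checkpoint, fake a model reply, persist it."""
    state = store.get(from_id)
    reply = f"[{state['prompt']}] answer to {state['question']}"
    return store.put({**state, "reply": reply}, parent_id=from_id)


store = CheckpointStore()
paused = store.put({"question": "refund policy?", "prompt": "v1"}, parent_id=None)
original = respond(store, paused)
# A reviewer (or an A/B test) edits the paused state; execution resumes on a new branch.
variant = respond(store, store.fork(paused, {"prompt": "v2"}))
print(store.get(original)["reply"])  # [v1] answer to refund policy?
print(store.get(variant)["reply"])   # [v2] answer to refund policy?
```

Because writes are append-only, the v1 and v2 replies coexist and share the same ancestor, which is what makes a comparison against identical intermediate state possible.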

Notice what is happening here. The checkpointer was introduced to solve a memory problem. It ended up enabling debugging, governance, and reliability features that have nothing to do with memory. Persistence reshapes what an agent can do.

The architectural pattern is older than LLM agents. Workflow engines, distributed databases, and crash-recovery systems all rely on the same trick: write a durable snapshot at every committed step, and you can replay history. LangGraph adapted that pattern to a graph of LLM and tool calls. The novelty is the substrate, not the mechanism.

This is also why state management is foundational to agent planning and reasoning. A planner that cannot inspect its own past steps cannot revise a plan; it can only generate a new one. A reasoner that cannot fork from a past checkpoint cannot try alternative branches; it can only commit linearly. The richer the persistence model, the richer the cognitive moves the agent can make.

What the State Model Predicts

If the mechanism is correct, certain things should be true about how production agents fail and succeed. They are.

- If a reducer is missing or set to overwrite when it should accumulate, the agent will appear to lose conversation history at exactly one transition — the node that returns a new value for that field.

- If thread_id is omitted from the runtime config, or a new one is generated on every request, every call starts from a fresh state no matter how well the checkpointer is configured. The persistence layer is healthy; the addressing is broken.

- If two requests share the same thread_id simultaneously, you have created a write race. Whichever super-step finishes last wins, and partial updates from the other branch silently disappear. This is a common failure mode in multi-agent systems where a supervisor and a worker write to the same thread.

- If the checkpointer is InMemorySaver in production, your agent’s memory dies when the worker process recycles. It is the right default for notebooks and the wrong default for everything else.
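The thread_id and write-race predictions can both be reproduced with a toy last-writer-wins store. Everything here is hypothetical (load, save, and the latest dict), and the race is simulated deterministically by interleaving two read-modify-write cycles rather than by running real concurrent requests.

```python
import uuid
from typing import Any

# Hypothetical last-writer-wins store: thread_id -> latest state.
latest: dict[str, dict[str, Any]] = {}


def load(thread_id: str) -> dict[str, Any]:
    # Copy the list so each caller mutates its own working state.
    return {"messages": list(latest.get(thread_id, {"messages": []})["messages"])}


def save(thread_id: str, state: dict[str, Any]) -> None:
    latest[thread_id] = state  # no version check: whoever saves last wins


# Prediction: a new thread_id on every request means every call starts blank.
for text in ("my name is Ada", "what's my name?"):
    tid = str(uuid.uuid4())  # bug: should be a stable per-conversation id
    state = load(tid)
    state["messages"].append(text)
    save(tid, state)
    print(len(state["messages"]))  # 1 both times: no history carried over

# Prediction: two writers on one thread race, and one update silently disappears.
save("shared", {"messages": ["user: plan the trip"]})
supervisor = load("shared")  # both read the same snapshot...
worker = load("shared")
supervisor["messages"].append("supervisor: delegating flights")
worker["messages"].append("worker: booked hotel")
save("shared", supervisor)
save("shared", worker)  # ...and the later write clobbers the earlier one
print(latest["shared"]["messages"])
# ['user: plan the trip', 'worker: booked hotel']
```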

These predictions are not exotic. They follow directly from the schema-reducers-nodes-checkpointer-thread model, which is why the same bugs appear in CrewAI, AutoGen, and Google ADK under different names.

Rule of thumb: If your agent’s behavior changes between development and production, look at the checkpointer first and the prompts last.

When it breaks: State management adds latency and storage cost on every super-step. For high-frequency, low-stakes agents (autocomplete-style assistants, single-turn classification), the persistence overhead can dominate the LLM call itself, and a stateless design is genuinely faster. The mechanism is load-bearing, not free.

Compatibility & version notes for LangGraph users:

- LangGraph 1.0 (released late 2025): langgraph.prebuilt.create_react_agent is deprecated; migrate imports to from langchain.agents import create_agent. Python 3.10+ is required (ClickIT).

- Interrupt class: simplified from four fields to two in 1.0. Code that reads Interrupt attributes directly may break and should be checked against the new shape (ClickIT).

- langgraph-checkpoint 2.0.26 → 2.1.0: introduced a ValueError: Checkpointer requires configurable keys regression for basic ReAct agents using MemorySaver (LangChain GitHub Issue #5385). Pin versions in production until resolved.

The Data Says

State management looks like plumbing and behaves like architecture. The choice of reducer, the placement of the checkpointer, the discipline around thread_id — these decide whether an agent merely runs or actually persists. The frameworks differ in syntax. The five components — schema, reducers, nodes, checkpointer, thread — do not.

AI-assisted content, human-reviewed. Images AI-generated.