What Are Retrieval-Augmented Agents and How They Combine Agentic Reasoning with Dynamic Retrieval

ELI5

A retrieval-augmented agent is a language model that decides for itself when to look something up, what to look up, and whether the result is good enough — turning retrieval from a single pipeline step into a loop the model controls.

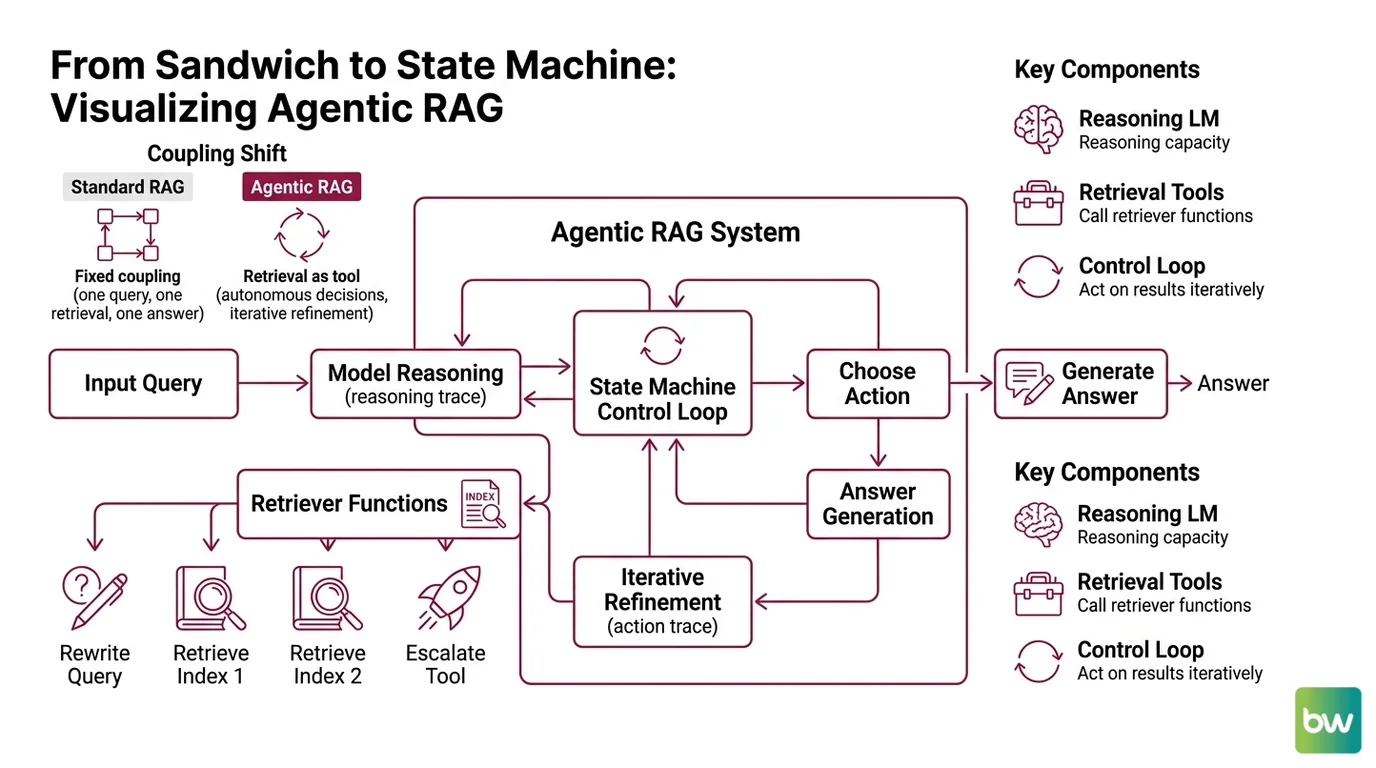

The classic mental model says retrieval-augmented generation is a sandwich. A query goes in, vectors come back, the model produces an answer, and everyone goes home. That picture works for a FAQ bot. It falls apart the moment the question requires comparing two documents, or noticing that the first retrieval missed the point entirely. What replaces the sandwich is an architecture where the model itself decides whether to retrieve, whether to rewrite, whether to try again — and that is the substrate of retrieval-augmented agents.

From Sandwich to State Machine

The original RAG paper proposed a fixed coupling between a retriever and a generator: one query, one retrieval, one answer. That coupling held for years because the parametric model had no way to make decisions over its own context — it was a passenger in the pipeline. The moment language models gained reliable tool-calling, the retriever stopped being a stage and became a function the model could choose to invoke.

That shift is what survey authors mean when they describe Agentic RAG as “embedding autonomous AI agents into the RAG pipeline” to dynamically manage retrieval strategies and iteratively refine contextual understanding (Singh et al. 2025).

What are retrieval-augmented agents?

A retrieval-augmented agent is the composition of three things that used to live in separate slides:

- A language model with the capacity to reason about its own next step

- One or more retrieval tools it can choose to call

- A control loop that lets the model act on the result of each call

The base architecture descends from the Lewis et al. (2020) RAG paper, which paired a dense retriever with a sequence-to-sequence generator for knowledge-intensive tasks. That work showed retrieval could be wired into generation. It did not give the generator a vote.

The agentic version adds the vote. The model now emits not just tokens but tool calls: structured intents that the runtime executes. When the result returns, the model reads it as additional context and chooses again: answer, rewrite, retrieve from a different index, or escalate to a slower tool. The reasoning trace and the action trace become interleaved, which is the pattern Yao et al. (2023) formalized as ReAct. On ALFWorld, ReAct beat imitation and reinforcement-learning baselines by 34 percentage points in absolute success rate (Yao et al. 2023), which suggests that the interleaving itself, not a bigger model, was doing much of the work.
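To make the interleaving concrete, here is a minimal sketch of a reason-then-act loop. It is not the ReAct authors' code: `fake_model`, `search`, `CORPUS`, and `run_agent` are hypothetical stand-ins, and the "model" is a hard-coded function so the example runs without an LLM.

```python
from typing import Callable

# Hypothetical in-memory corpus standing in for a real index.
CORPUS = {
    "rag": "RAG pairs a retriever with a generator (Lewis et al. 2020).",
    "react": "ReAct interleaves reasoning traces with actions (Yao et al. 2023).",
}

def search(query: str) -> str:
    """Return the first corpus entry whose key appears in the query."""
    for key, text in CORPUS.items():
        if key in query.lower():
            return text
    return ""

TOOLS: dict[str, Callable[[str], str]] = {"search": search}

def fake_model(question: str, observations: list[str]) -> dict:
    """Stand-in for an LLM: ask for a tool call until one observation exists."""
    if not observations:
        return {"thought": "I should look this up.",
                "tool_call": {"name": "search", "args": question}}
    return {"thought": "I have enough context.", "answer": observations[-1]}

def run_agent(question: str, max_steps: int = 3) -> tuple[str, list[str]]:
    """Alternate model steps and tool executions, recording both in one trace."""
    trace: list[str] = []
    observations: list[str] = []
    for _ in range(max_steps):
        step = fake_model(question, observations)
        trace.append(f"thought: {step['thought']}")
        if "tool_call" in step:
            call = step["tool_call"]
            observations.append(TOOLS[call["name"]](call["args"]))
            trace.append(f"action: {call['name']}({call['args']!r})")
        else:
            return step["answer"], trace
    return "I don't know", trace
```

Calling `run_agent("What is ReAct?")` produces a trace in which a thought, an action, and a second thought alternate. That alternation is the interleaving the paragraph describes.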

A useful caution before going further: the literature has not converged on one name. “Agentic RAG,” “agentic retrieval,” and “retrieval-augmented agents” appear near-interchangeably in current sources. They point at the same architectural idea. Treat the terminology as overlapping vocabulary, not as three distinct systems.

Not a smarter retriever. A retriever the model can argue with.

The reason this matters operationally is that the failure modes change. A static RAG pipeline fails silently — wrong chunks in, wrong answer out, no second pass. An agentic pipeline can fail noisily, because the model can detect the mismatch between the question and what came back, and route around it. That capacity to notice the gap is what distinguishes the architecture — not a heuristic bolted on top, but a structural property of the loop.

The Decision Layer

The interesting part of any agentic RAG system is not the vector store. The vector store is the same vector store. The interesting part is the small piece of logic that decides whether to query it at all — and what to do when the answer is mediocre.

That logic has a name in the LangGraph implementation, and reading it is the fastest path into the mechanism.

How do retrieval-augmented agents decide when and what to retrieve?

In the canonical LangGraph implementation, decisions are made by a conditional routing function that inspects the model’s most recent message. If the message contains a tool_calls field, control flows to the retriever node. If it does not, the workflow terminates and the model’s response is returned directly to the user (LangChain Docs).

That sentence is doing more work than it looks like. It means the model is the dispatcher. The graph does not contain an if-this-then-retrieve rule written by a human. The model emits — or chooses not to emit — a structured tool call, and the routing function reads that emission as a vote.
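The routing rule fits in a few lines. The sketch below is an assumption-laden simplification, not LangGraph's own function: messages are plain dictionaries, and the node names `"retrieve"` and `"end"` are hypothetical labels chosen for illustration.

```python
def route_after_model(state: dict) -> str:
    """Pick the next node by inspecting the most recent model message."""
    last_message = state["messages"][-1]
    # A non-empty tool_calls field is the model's vote to retrieve.
    if last_message.get("tool_calls"):
        return "retrieve"
    # No tool call: the model's text is returned to the user as-is.
    return "end"
```

The function contains no rule about when retrieval is appropriate. It only reads what the model already decided.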

The full workflow follows five stages: Generate or Route → Retrieve → Grade Documents → Rewrite if needed → Generate Answer (LangChain Docs). Each stage is a node in a graph; transitions between nodes are gated by conditional functions that read the current state.

Two stages deserve attention because they are where naive RAG breaks down:

- Grade Documents. After retrieval, a separate model call evaluates whether the returned chunks are actually relevant to the question. Cosine similarity says they were near the query in embedding space. Grading asks whether they answer the question. Those are different claims.

- Rewrite. If grading concludes the retrieval missed, the agent rewrites the query — sometimes decomposing it, sometimes reformulating the entities — and loops back to retrieval. The model is allowed to disagree with its own first attempt.
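The two stages above can be sketched as one step that either hands relevant documents to generation or sends a rewritten query back to retrieval. Everything here is hypothetical scaffolding: `INDEX`, `retrieve`, `is_relevant`, and `rewrite` are toy stand-ins, and in a real system the grader and rewriter would be model calls rather than string checks.

```python
from typing import Callable

def grade_then_route(
    question: str,
    query: str,
    retrieve: Callable[[str], list[str]],
    is_relevant: Callable[[str, str], bool],
    rewrite: Callable[[str], str],
) -> tuple[str, object]:
    """Retrieve, keep only documents the grader accepts, then pick the next stage."""
    docs = retrieve(query)
    relevant = [doc for doc in docs if is_relevant(question, doc)]
    if relevant:
        return "generate", relevant
    # Nothing survived grading: loop back with a reformulated query.
    return "retrieve", rewrite(query)

# Toy stand-ins (assumptions for illustration only).
INDEX = {
    "k8s limits": ["Kate's 8 tips for speed limits."],
    "kubernetes resource limits": [
        "Kubernetes resource limits cap CPU and memory per container."
    ],
}

def retrieve(query: str) -> list[str]:
    return INDEX.get(query, [])

def is_relevant(question: str, doc: str) -> bool:
    return "kubernetes" in doc.lower()  # a real grader would be an LLM call

def rewrite(query: str) -> str:
    return query.replace("k8s", "kubernetes resource")
```

With these stubs, the first pass on `"k8s limits"` retrieves a near-miss document, fails grading, and returns a rewritten query. The second pass on that rewritten query passes grading and moves to generation.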

This is a structurally different system from “search then summarize.” The reasoning lives in the graph topology, not in the prompt.

The primitives that make this composable are surprisingly few. The LangChain and LangGraph stack provides a @tool decorator to mark functions as model-callable, a ToolNode that runs them, and a bind_tools() call that tells the model which tools exist (LangChain Docs). Everything else (the conditional edges, the grader, the rewriter) is application code on top of those three handles.
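To show what those three handles do conceptually, here is a toy, standard-library-only imitation. It is not the LangChain or LangGraph API: `toy_tool`, `describe_tools`, and `run_tool_calls` are hypothetical names that loosely mirror the roles of the decorator, the tool binding, and the tool-running node.

```python
from typing import Callable

REGISTRY: dict[str, Callable[..., str]] = {}

def toy_tool(fn: Callable[..., str]) -> Callable[..., str]:
    """Mark a function as model-callable by registering it under its name."""
    REGISTRY[fn.__name__] = fn
    return fn

@toy_tool
def search_docs(query: str) -> str:
    """Search the documentation index."""
    return f"results for {query!r}"

def describe_tools() -> list[dict]:
    """What the model would be told exists: each tool's name and docstring."""
    return [
        {"name": name, "description": (fn.__doc__ or "").strip()}
        for name, fn in REGISTRY.items()
    ]

def run_tool_calls(tool_calls: list[dict]) -> list[str]:
    """Execute each requested call and return the results in order."""
    return [REGISTRY[call["name"]](**call["args"]) for call in tool_calls]
```

The design point is the separation of concerns: one handle declares tools, one advertises them to the model, and one executes whatever the model asked for.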

LlamaIndex offers a parallel surface with a slightly different emphasis. Where LangGraph foregrounds the control graph, LlamaIndex foregrounds the retriever itself. Its agentic retrieval guide describes auto-routed retrieval, composite retrieval across multiple indices, and reranking as the three modes a lightweight agent can choose between (LlamaIndex Blog). Routing happens across three layers: chunk-based retrieval for fine-grained passages, file-via-metadata for catalog-style queries, and file-via-content when the question demands a whole-document view.
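A rough sketch of the three-layer routing choice follows. It does not use LlamaIndex. The `Mode` enum, the `choose_mode` function, and the keyword lists are all assumptions, with a keyword heuristic standing in for the decision an agent would make with a model call.

```python
from enum import Enum

class Mode(Enum):
    CHUNK = "chunk"                          # fine-grained passages
    FILE_VIA_METADATA = "file_via_metadata"  # catalog-style queries
    FILE_VIA_CONTENT = "file_via_content"    # whole-document view

def choose_mode(question: str) -> Mode:
    """Keyword heuristic standing in for the agent's routing decision."""
    q = question.lower()
    if any(cue in q for cue in ("which file", "list files", "filename", "authored by")):
        return Mode.FILE_VIA_METADATA
    if any(cue in q for cue in ("summarize", "overall", "entire document")):
        return Mode.FILE_VIA_CONTENT
    return Mode.CHUNK
```

Chunk retrieval is the default here because fine-grained lookups are the common case. The other two modes are selected only when the question signals a catalog or whole-document need.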

The architectural takeaway is symmetric across both frameworks: retrieval is no longer a single act. It is a menu, and the model orders.

Anatomy of a Working System

Once you accept that retrieval is a decision the model makes repeatedly, the system has to grow some new organs.

What are the core components of a retrieval-augmented agent system?

The Singh et al. (2025) survey classifies architectures along four axes — agent cardinality, control structure, autonomy level, and knowledge representation — and catalogues six sub-types: single-agent, multi-agent, hierarchical, corrective, adaptive, and graph-based RAG. The variation is real, but every working instance contains the same six components in some form:

| Component | Role | Typical Implementation |
|---|---|---|
| Policy / Router | Decides whether to retrieve, answer, or escalate | LLM with tool-calling, plus conditional graph edges |
| Tool Layer | Exposes retrievers, calculators, code executors as callable functions | @tool decorators, OpenAPI specs, MCP servers |
| Retrievers | Return passages, files, or rows from indices | Dense vector store, BM25, SQL, document agents |
| Grader | Judges whether retrieved context answers the question | Separate LLM call with structured output |
| Memory | Holds intermediate state across loop iterations | Graph state object, scratchpad, episodic store |
| Orchestrator | Sequences the loop and enforces termination | Workflow orchestration layer |

Two of these deserve a closer look because they are where the agentic part actually lives.

The policy is the difference between an agent and a pipeline. In static RAG, a developer hard-codes the retrieve-then-generate sequence. In an agent, the policy is whatever the language model emits at each step — implemented in practice as a tool-calling LLM whose outputs are interpreted as actions. Singh et al. summarize this in four agentic design patterns: Reflection, Planning, Tool Use, and Multi-agent Collaboration. Retrieval-augmented agents lean heavily on Tool Use and Reflection; the corrective and adaptive sub-types add Planning.

The grader is the component most often missing in early implementations and most often regretted later. Without it, the system has no way to distinguish “I retrieved nothing useful” from “I retrieved excellent context,” because both states present as a model about to confidently generate. Adding a grading step costs an extra LLM call per loop and pays for itself the first time it catches a hallucinated citation.
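One way to picture a grader with structured output is a parser that accepts only a well-formed verdict and treats everything else as a failed grade. This is a sketch under assumptions: the JSON shape with `relevant` and `reason` fields, the `Grade` class, and `parse_grade` are hypothetical, not a format any framework prescribes.

```python
import json
from dataclasses import dataclass

@dataclass(frozen=True)
class Grade:
    relevant: bool
    reason: str

def parse_grade(raw: str) -> Grade:
    """Parse the grader's JSON reply; anything malformed counts as not relevant."""
    try:
        data = json.loads(raw)
    except json.JSONDecodeError:
        return Grade(False, "unparseable grader output")
    if not isinstance(data, dict) or not isinstance(data.get("relevant"), bool):
        return Grade(False, "missing or non-boolean 'relevant' field")
    return Grade(data["relevant"], str(data.get("reason", "")))
```

Defaulting to "not relevant" on malformed output is a deliberate choice in this sketch: an ambiguous grade triggers another retrieval pass instead of letting the generator proceed on unverified context.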

A subtler component is the code-execution role. Retrieval-augmented agents are not limited to text retrieval: many production systems route arithmetic, lookups against structured data, or schema-aware queries to a code-execution tool. The same routing logic that decides between vector and BM25 also decides between “answer from text” and “compute the answer.”
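The choice between answering from text and computing can be sketched with a small router. The names `route`, `safe_eval`, and `ARITHMETIC` are hypothetical, and the regular expression is a crude stand-in for a model's judgment. The evaluator walks a parsed syntax tree rather than calling `eval`, so only the four basic operators on numeric literals are accepted.

```python
import ast
import operator
import re

OPS = {
    ast.Add: operator.add,
    ast.Sub: operator.sub,
    ast.Mult: operator.mul,
    ast.Div: operator.truediv,
}

def safe_eval(expr: str) -> float:
    """Evaluate +, -, *, / over numeric literals; reject everything else."""
    def walk(node: ast.AST) -> float:
        if isinstance(node, ast.Constant) and isinstance(node.value, (int, float)):
            return node.value
        if isinstance(node, ast.BinOp) and type(node.op) in OPS:
            return OPS[type(node.op)](walk(node.left), walk(node.right))
        raise ValueError("unsupported expression")
    return walk(ast.parse(expr, mode="eval").body)

# Matches runs such as "12 * 7 + 3": a number followed by operator-number pairs.
ARITHMETIC = re.compile(r"\d+(?:\.\d+)?(?:\s*[-+*/]\s*\d+(?:\.\d+)?)+")

def route(question: str) -> tuple[str, object]:
    """Send arithmetic to the compute tool and everything else to retrieval."""
    match = ARITHMETIC.search(question)
    if match:
        return "compute", safe_eval(match.group())
    return "retrieve", question
```

A pattern match like this would misfire on strings such as date ranges, which is one reason production systems leave the decision to the policy model.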

What the Architecture Predicts

The architecture is not abstract. It generates testable predictions about behavior — and these are the ones worth watching when you instrument a system.

- If the question is well-covered by the index, expect the agent to take a single retrieval pass and return. The loop is a capability, not an obligation.

- If retrieval quality is poor on the first attempt, expect rewrites to dominate cost. Token usage on agentic systems is bimodal: cheap when the index is good, expensive when the index is weak.

- If the grader and the generator share the same model, expect correlated failures. A model that hallucinates a citation is likely to miss the same gap when asked to grade it. This is the strongest argument for using a smaller, differently-tuned model as the grader.

- If you remove the grading step to save tokens, expect failure rates to look identical to static RAG for hard questions. Without grading, the agent is structurally indistinguishable from a pipeline — it just costs more.

Rule of thumb: If your system never rewrites a query and never decides not to retrieve, you have built a pipeline with extra steps, not an agent.

When it breaks: Agentic loops have no built-in convergence guarantee. A poorly-tuned policy will retrieve, grade, fail, rewrite, retrieve, grade, fail — burning tokens and latency without progress. Production deployments hit this immediately and need hard limits on loop depth, plus a fallback path that returns “I don’t know” rather than spinning. The survey authors call this out as one of the open challenges, alongside evaluation, coordination, memory management, and governance (Singh et al. 2025).
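A hard limit on loop depth with an explicit fallback can be sketched as follows. The function name `bounded_loop`, its parameters, and the default of three iterations are assumptions for illustration. `attempt` stands in for a full retrieve-grade-generate pass that returns `None` when grading fails.

```python
from typing import Callable, Optional

def bounded_loop(
    query: str,
    attempt: Callable[[str], Optional[str]],
    rewrite: Callable[[str], str],
    max_iterations: int = 3,
) -> tuple[str, int]:
    """Try, rewrite, and retry up to max_iterations, then fall back explicitly."""
    for iteration in range(1, max_iterations + 1):
        answer = attempt(query)
        if answer is not None:
            return answer, iteration
        query = rewrite(query)
    # The budget is spent: admit failure instead of spinning.
    return "I don't know", max_iterations
```

Returning the iteration count alongside the answer makes the loop depth easy to log, which helps when checking how often the system hits its limit.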

Compatibility notes (as of May 2026):

- LangGraph (Python): Stabilized at v1.0 in October 2025. Code targeting v0.1–v0.3 will break on import paths and renamed constants; langgraph-prebuilt==1.0.2 added a required runtime parameter to ToolNode.afunc (LangChain Issues #6363).

- LangGraph streaming API: Pass version="v2" to stream(), astream(), or invoke() for typed output; older paths still work but are no longer the recommended surface (LangChain Changelog).

- LlamaIndex: Python 3.9 deprecated across multiple packages as of March 2026; asyncio_module parameter replaced by get_asyncio_module (LlamaIndex Changelog).

The Geometry Of An Open Question

There is a structural observation worth ending on. Static RAG closes the question of what to retrieve before the model sees it. Agentic RAG leaves the question open and lets the model collapse it through interaction. That difference looks small in a diagram and is enormous in practice — it is the difference between a model that is asked to produce an answer and a model that is asked to figure out whether it can.

Whether that capacity generalizes to high-stakes domains is one of the things the survey explicitly flags as unsettled. Applications in healthcare, finance, education, and enterprise document processing are documented (Singh et al. 2025), but evaluation methodology for agentic systems remains an open research problem.

The Data Says

Retrieval-augmented agents replace the fixed retrieve-then-generate pipeline with a control loop the model navigates through tool calls, grading, and conditional rewrites. The architectural difference is not bigger models or better embeddings — it is the model’s authority to decide whether the current context is sufficient. The cost is token overhead and convergence risk; the gain is the system’s ability to notice when it is wrong.
