Multi-Agent Systems: Supervisor, Debate, and Swarm Patterns

ELI5

A multi-agent system is a group of language model agents that split a job between them. One agent plans and delegates, others execute, and a memory layer keeps the conversation coherent across turns.

When Anthropic rebuilt its Research feature, it stopped trusting a single model with the whole task. A lead Claude Opus 4 instance now spawns parallel Claude Sonnet 4 sub-agents that each chase a different thread of a query. The shape — one planner, many specialists, a shared memory — outperformed single-agent Opus 4 by 90.2% on Anthropic’s internal research evaluation (Anthropic Engineering). The interesting part is not that two heads beat one. It is that the geometry of coordination changes what the system can actually compute.

The illusion of “just add more agents”

There is a tempting mental model: if one agent is smart, ten agents must be smarter. Slot a few personas into a chat room, let them argue, harvest the consensus.

That model is wrong in a useful way.

A multi-agent system is not a panel of pundits. It is a control structure — a graph of who calls whom, who holds state, who breaks ties, and who is allowed to terminate. Change the graph and you change the kinds of failures the system produces. The supervisor, debate, and swarm patterns are not flavors of the same idea; they are different geometries that solve different coordination problems.

How a multi-agent system actually computes

Before mapping the three architectures, we need to be precise about what a multi-agent system is doing under the hood and why developers reach for one in the first place.

What are multi-agent systems in AI?

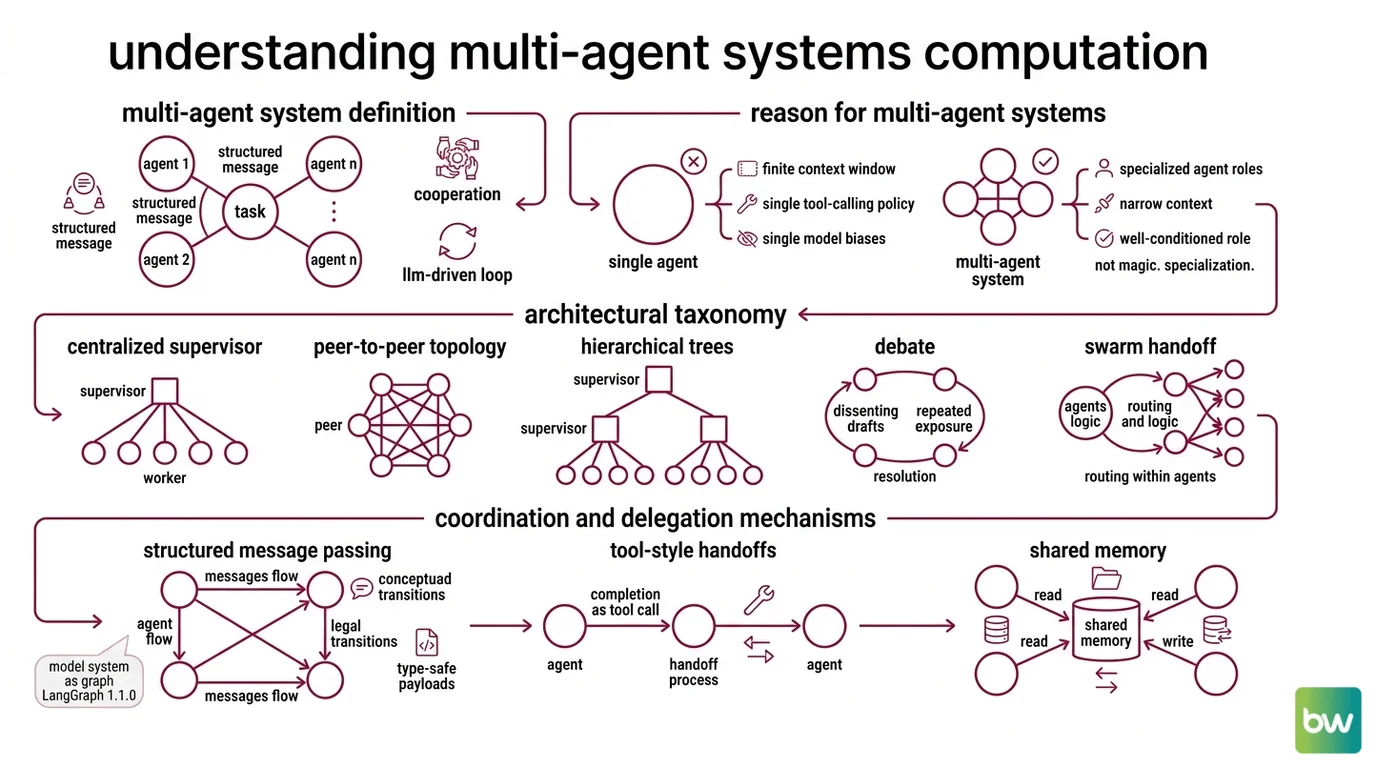

A multi-agent system in AI is a runtime in which two or more LLM-driven agent loops cooperate to solve a task that any single loop would handle poorly. Each agent has its own role, its own toolset, and often its own model. They communicate through structured messages (handoffs, tool calls, or shared state) rather than free-form conversation.

The standard architectural taxonomy separates centralized supervisors (orchestrator-worker), peer-to-peer or decentralized topologies, hierarchical multi-supervisor trees, debate, and swarm-style handoff patterns. The differences are not cosmetic. A supervisor tree concentrates planning in one node; a swarm pushes routing into the agents themselves; a debate forces agreement through repeated exposure to dissenting drafts.

The reason you reach for any of them is usually mechanical, not philosophical. A single agent has a finite context window, a single tool-calling policy, and a single model with a single set of biases. The moment your task asks the model to plan AND search AND critique AND format, you are asking the same parameters to specialize in opposite directions. Splitting the task across agents lets each one keep a narrow, well-conditioned role.

Not magic. Specialization.

How do multi-agent systems coordinate and delegate tasks between agents?

Coordination in modern frameworks happens through three mechanisms — message passing, tool-style handoffs, and shared memory — usually layered together.

The first mechanism is structured message passing. Frameworks like LangGraph 1.1.0 model the system as a graph: each node is an agent, edges describe legal transitions, and messages flow along the edges with type-safe payloads. The graph is the contract. An agent that wants to escalate cannot improvise — it must take an edge that exists.
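The graph-as-contract idea can be sketched in plain Python. This is an illustration under stated assumptions, not LangGraph's API: `AgentGraph`, `Message`, `add_edge`, and `send` are hypothetical names, and the agent names are made up.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class Message:
    """Typed payload passed along an edge (hypothetical shape)."""
    sender: str
    recipient: str
    content: str


@dataclass
class AgentGraph:
    """Nodes are agent names; edges are the only legal transitions."""
    edges: dict[str, set[str]] = field(default_factory=dict)
    log: list[Message] = field(default_factory=list)

    def add_edge(self, src: str, dst: str) -> None:
        self.edges.setdefault(src, set()).add(dst)

    def send(self, sender: str, recipient: str, content: str) -> Message:
        # Reject any transition the graph does not declare.
        if recipient not in self.edges.get(sender, set()):
            raise ValueError(f"no edge {sender} -> {recipient}")
        msg = Message(sender, recipient, content)
        self.log.append(msg)
        return msg


graph = AgentGraph()
graph.add_edge("planner", "researcher")
graph.add_edge("researcher", "planner")
graph.add_edge("planner", "writer")
```

Here the researcher can report back to the planner but cannot message the writer directly, because that edge was never declared.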

The second mechanism is the handoff-as-tool. The OpenAI Agents SDK exposes another agent as if it were a function the current agent can call; handoff() accepts tool_name_override, tool_description_override, an on_handoff callback, and an input_filter that lets the receiving agent see only the relevant slice of conversation history (OpenAI Agents SDK Docs). The handoff becomes part of the action space the model already knows how to use.
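To make the handoff-as-tool idea concrete, here is a minimal sketch in plain Python. It does not use the OpenAI Agents SDK: `Agent`, `make_handoff_tool`, and `last_user_turn_only` are hypothetical names, and the `respond` callable stands in for a model call.

```python
from dataclasses import dataclass
from typing import Callable, Optional

History = list[dict[str, str]]


@dataclass
class Agent:
    name: str
    respond: Callable[[History], str]  # stand-in for a model call


def make_handoff_tool(
    target: Agent,
    input_filter: Optional[Callable[[History], History]] = None,
    tool_name: Optional[str] = None,
) -> Callable[[History], str]:
    """Wrap `target` as a tool the calling agent can invoke."""
    name = tool_name or f"transfer_to_{target.name}"

    def tool(history: History) -> str:
        # The filter decides which slice of history the receiver sees.
        visible = input_filter(history) if input_filter else history
        return target.respond(visible)

    tool.__name__ = name
    return tool


def last_user_turn_only(history: History) -> History:
    """Keep only the most recent user message."""
    users = [m for m in history if m["role"] == "user"]
    return users[-1:]


billing = Agent("billing", lambda h: f"billing saw {len(h)} message(s)")
transfer_to_billing = make_handoff_tool(billing, input_filter=last_user_turn_only)

history = [
    {"role": "user", "content": "hi"},
    {"role": "assistant", "content": "hello"},
    {"role": "user", "content": "refund my order"},
]
```

Calling `transfer_to_billing(history)` hands control to the billing agent, which sees one message rather than the full three-turn history.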

Microsoft Agent Framework — the v1.0 GA successor that merged AutoGen and Semantic Kernel — exposes the same idea alongside sequential, concurrent, group-chat, and Magentic-One orchestration patterns (Microsoft Learn).

Google ADK, introduced at Google Cloud NEXT 2025, builds the hierarchy directly into the type system: every BaseAgent can declare sub_agents, and delegation walks the parent-child tree (Google’s ADK site).
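A parent-child delegation tree of this kind might look like the following sketch. It is plain Python rather than ADK code: `TreeAgent`, `find`, and `path_from_root` are hypothetical names chosen for illustration, and only the `sub_agents` field name is borrowed from the description above.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass(eq=False)
class TreeAgent:
    name: str
    sub_agents: list["TreeAgent"] = field(default_factory=list)
    parent: Optional["TreeAgent"] = field(default=None, repr=False)

    def __post_init__(self) -> None:
        # Declaring a child also records who its parent is.
        for child in self.sub_agents:
            child.parent = self

    def find(self, name: str) -> Optional["TreeAgent"]:
        """Depth-first search of this agent's subtree."""
        if self.name == name:
            return self
        for child in self.sub_agents:
            hit = child.find(name)
            if hit is not None:
                return hit
        return None

    def path_from_root(self) -> list[str]:
        """Names from the root down to this agent."""
        node: Optional["TreeAgent"] = self
        path: list[str] = []
        while node is not None:
            path.append(node.name)
            node = node.parent
        return path[::-1]


root = TreeAgent(
    "coordinator",
    [TreeAgent("research", [TreeAgent("web_search")]), TreeAgent("writer")],
)
```

Delegation then becomes a walk over the tree: the coordinator can reach `web_search` only through `research`.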

The third mechanism is shared memory. Without it, each handoff would amount to amnesia, and the system would behave like a relay race where every runner forgets the baton. We will come back to memory in the next section.

What is happening underneath all three mechanisms is the same thing: a single token budget is being divided across multiple inference passes that each maintain their own posterior over what to do next. Each agent samples from a distribution conditioned on its narrow role and recent memory, instead of from one diluted distribution conditioned on everything at once. That is the actual reason coordination beats concatenation.

The anatomy of a coordinated agent

Coordination only works when each agent has the right organs. There are four — orchestrator, workers, memory, and tools — and the most common failure modes come from getting one of them wrong.

What are the core components of a multi-agent system: orchestrator, workers, memory, and tools?

The orchestrator is the agent that owns the plan. It decomposes the user request into sub-tasks, decides which worker handles each one, and decides when the task is finished. In LangGraph, it is typically a node with a routing function or a supervisor agent. LangChain now recommends building supervisors directly via tool-calling rather than with the dedicated supervisor library for most cases, because that gives the developer more control over the context the supervisor sees. In CrewAI 1.14.4, the orchestrator is implicit in the Crew’s hierarchical process, with Agents defined by role, goal, and backstory and Tasks routed sequentially or hierarchically.

The workers are specialized agents with narrow roles — a researcher, a coder, a critic, a formatter. They typically have access to a strict subset of tools and a model chosen for the task: faster and cheaper for retrieval, slower and more capable for synthesis. Anthropic’s Research system follows this exact split, with Opus 4 as the lead and Sonnet 4 as the parallel sub-agents (Anthropic Engineering); the Claude Agent SDK exposes the same orchestrator-worker primitives for developers building on Claude.

The memory layer is the connective tissue. The standard taxonomy of agent memory systems distinguishes four kinds: short-term (the working context window), long-term episodic (what happened across past sessions), long-term semantic (facts the agent knows), and procedural (how it does things). Long-term memory is bridged into short-term via vector retrieval (Redis Engineering Blog). A swarm or supervisor with no memory layer collapses into a stateless function call; a system with poorly scoped memory leaks irrelevant context into every turn and pays for it in latency and confusion.
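The four memory kinds and the long-term-to-short-term bridge can be sketched as follows. This is a toy under loud assumptions: `AgentMemory` and `recall` are hypothetical names, and word overlap stands in for the vector similarity a real system would use.

```python
from dataclasses import dataclass, field


def _overlap(query: str, text: str) -> int:
    """Toy relevance score: shared lowercase words (stand-in for vector similarity)."""
    return len(set(query.lower().split()) & set(text.lower().split()))


@dataclass
class AgentMemory:
    short_term: list[str] = field(default_factory=list)   # working context
    episodic: list[str] = field(default_factory=list)     # past sessions
    semantic: list[str] = field(default_factory=list)     # known facts
    procedural: list[str] = field(default_factory=list)   # how-to rules

    def recall(self, query: str, k: int = 2) -> list[str]:
        """Copy the k most relevant long-term items into short-term context."""
        pool = self.episodic + self.semantic
        ranked = sorted(pool, key=lambda t: _overlap(query, t), reverse=True)
        hits = [t for t in ranked[:k] if _overlap(query, t) > 0]
        self.short_term.extend(hits)
        return hits


memory = AgentMemory(
    episodic=["user asked about refund policy last week"],
    semantic=["refund window is 30 days", "shipping takes 5 days"],
    procedural=["always cite the policy page"],
)
```

Scoping happens in `recall`: only items that score above zero for the current query reach the working context, so the unrelated shipping fact stays out of a refund conversation.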

The tools are how agents act on the outside world — APIs, code execution, file I/O, other agents. The interesting design choice is which tools each agent can see. Hide the tools you do not want the model to use. Expose handoffs as tools when you want delegation to feel native. The action space is the policy.
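One way to express per-agent tool visibility is an allow-list applied before the model ever sees the tool catalog. The sketch below is illustrative only: the tool names, the `VISIBLE` mapping, and the `tools_for` and `call_tool` helpers are all hypothetical.

```python
from typing import Callable

# Full catalog of tools; the lambdas are stand-ins for real side effects.
TOOLS: dict[str, Callable[[str], str]] = {
    "web_search": lambda q: f"results for {q}",
    "run_code": lambda src: "executed",
    "write_file": lambda path: f"wrote {path}",
}

# Hypothetical allow-lists: each agent sees only a strict subset.
VISIBLE: dict[str, set[str]] = {
    "researcher": {"web_search"},
    "coder": {"run_code", "write_file"},
}


def tools_for(agent: str) -> dict[str, Callable[[str], str]]:
    """Return only the tools this agent is allowed to see."""
    allowed = VISIBLE.get(agent, set())
    return {name: fn for name, fn in TOOLS.items() if name in allowed}


def call_tool(agent: str, name: str, arg: str) -> str:
    """Dispatch a tool call, refusing anything outside the agent's view."""
    visible = tools_for(agent)
    if name not in visible:
        raise PermissionError(f"{agent} cannot use {name}")
    return visible[name](arg)
```

Filtering at catalog time, rather than rejecting calls after the fact, keeps hidden tools out of the prompt entirely; the `PermissionError` is a second line of defense.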

When you read a multi-agent framework’s docs, this is the four-organ skeleton you should be looking for. The vocabulary differs — Crew/Agent/Task in CrewAI, Graph/Node/State in LangGraph, Agent/Handoff/Guardrail/Tracing in the OpenAI Agents SDK — but the anatomy is the same.

Three architectures, three geometries of control

The choice of architecture is a choice about where the planning effort lives.

In supervisor or orchestrator-worker systems, control lives at the top. One agent decomposes the problem; workers execute leaves of the plan; results bubble up; the supervisor decides when to stop. This is the shape Anthropic uses for parallel research, and it is the shape LangGraph’s supervisor reference implementation models with create_handoff_tool, including multi-level hierarchies — supervisors of supervisors. It is the safest default when the task decomposes cleanly into independent sub-tasks. It fails when the sub-tasks are not actually independent, because the supervisor cannot see what the workers learned mid-task without explicit feedback edges.
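A minimal sketch of the decompose, delegate, and synthesize loop, assuming independent sub-tasks. The `worker` and `supervisor` functions are hypothetical stand-ins: a real supervisor would ask a model to plan, whereas this one simply splits the request on semicolons.

```python
from concurrent.futures import ThreadPoolExecutor


def worker(subtask: str) -> str:
    """Stand-in for a sub-agent's model call."""
    return f"findings on {subtask}"


def supervisor(request: str, max_workers: int = 4) -> str:
    # Decompose: split on ';' in place of a model-generated plan.
    subtasks = [s.strip() for s in request.split(";") if s.strip()]
    # Delegate: independent sub-tasks run in parallel threads.
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        results = list(pool.map(worker, subtasks))  # map preserves input order
    # Synthesize: results bubble up and the supervisor ends the task.
    return " | ".join(results)
```

Note what is missing: workers never see each other's results, which is exactly the failure mode described above when sub-tasks turn out to be dependent.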

In agent debate systems, control is symmetric. Multiple agents propose answers, then re-read each other’s drafts and refine over several rounds. The Du et al. multi-agent debate paper showed this pattern improves factuality and reasoning on benchmarks where a single chain-of-thought run falls short. The mechanism is not “the agents convince each other.” It is that exposing each agent to dissenting drafts shifts the conditional distribution over the next token toward answers that survive cross-examination. The cost is real (multiple rounds across multiple agents), and the benefit is task-dependent. Debate earns its keep on hard reasoning and factual claims; on simple lookups, it just multiplies the bill.
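The round structure of a debate can be sketched with stub agents. This is not the procedure from the Du et al. paper: the `debate`, `stubborn`, and `persuadable` names are hypothetical, the stubs replace model calls, and the final majority vote is an assumed aggregation rule.

```python
from collections import Counter
from typing import Callable

# An agent maps (question, peers' previous drafts) to a new draft.
Debater = Callable[[str, list[str]], str]


def debate(question: str, agents: list[Debater], rounds: int = 2) -> str:
    # Round 0: every agent drafts independently.
    drafts = [agent(question, []) for agent in agents]
    for _ in range(rounds):
        # Each agent revises after reading every peer's previous draft.
        drafts = [
            agent(question, drafts[:i] + drafts[i + 1:])
            for i, agent in enumerate(agents)
        ]
    # Assumed aggregation: return the most common final draft.
    return Counter(drafts).most_common(1)[0][0]


def stubborn(answer: str) -> Debater:
    """Stub agent that never changes its answer."""
    return lambda question, peers: answer


def persuadable(initial: str) -> Debater:
    """Stub agent that adopts the peers' majority answer once it sees drafts."""
    def agent(question: str, peers: list[str]) -> str:
        return Counter(peers).most_common(1)[0][0] if peers else initial
    return agent


panel = [stubborn("42"), stubborn("42"), persuadable("41")]
```

The cost is visible in the loop: `debate` makes `len(agents) * (rounds + 1)` agent calls for a single question.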

In swarm architecture or handoff systems, control is distributed. There is no global supervisor; each agent decides when to hand the conversation to a peer, usually via a handoff tool. The shape is closer to a relay than a hierarchy. The OpenAI Agents SDK is the production successor to OpenAI’s experimental Swarm, whose repository is no longer actively maintained (OpenAI’s Swarm GitHub). The word “swarm” is overloaded: there is OpenAI’s archived library, the general decentralized peer-to-peer pattern from the multi-agent systems literature, and the langgraph-swarm library. They are not the same artifact. Swarms shine when the routing logic is itself task-dependent and you do not want a single planner to be the bottleneck.
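A handoff relay with no global supervisor can be sketched as a loop in which each agent names its own successor. Everything here is hypothetical (the `triage`, `billing`, and `support` stubs, the `AGENTS` registry, and `run_swarm`); it does not use OpenAI Swarm, the Agents SDK, or langgraph-swarm.

```python
from typing import Callable, Optional

# Each agent returns (reply, name of the next agent or None to stop).
Step = Callable[[str], tuple[str, Optional[str]]]


def triage(msg: str) -> tuple[str, Optional[str]]:
    target = "billing" if "refund" in msg else "support"
    return f"triage: routing to {target}", target


def billing(msg: str) -> tuple[str, Optional[str]]:
    return "billing: refund issued", None


def support(msg: str) -> tuple[str, Optional[str]]:
    return "support: ticket opened", None


AGENTS: dict[str, Step] = {"triage": triage, "billing": billing, "support": support}


def run_swarm(msg: str, start: str = "triage", max_hops: int = 5) -> list[str]:
    transcript: list[str] = []
    current = start
    for _ in range(max_hops):  # hop cap guards against handoff loops
        reply, nxt = AGENTS[current](msg)
        transcript.append(reply)
        if nxt is None:  # the active agent chose to terminate
            return transcript
        current = nxt
    raise RuntimeError("handoff limit reached")
```

Routing lives inside `triage` rather than in a planner, so the path depends on what the message contains. The hop cap is a design choice worth keeping in any real variant, since nothing else prevents two agents from handing off to each other forever.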

The three architectures are not competitors. They are different answers to the question: where should the planning effort live?

What the geometry predicts

Once you see the architecture as a control graph, several practical predictions fall out.

- If your task decomposes into independent sub-tasks, expect the supervisor pattern to dominate on latency, because workers run in parallel.

- If your task is a hard reasoning or factuality problem, expect debate to help — and expect the help to come at multiplied compute.

- If your task has dynamic routing that depends on intermediate results, expect a swarm or handoff-style architecture to outperform a static supervisor, because the planner does not have to predict the routing in advance.

- If your handoffs feel like amnesia, the failure is almost always in the memory layer, not the agents — check that long-term episodic memory is being retrieved into short-term context at handoff time.

Rule of thumb: the shape of the agent graph should mirror the shape of the task — flat tasks deserve flat coordination, tree-shaped tasks deserve trees.

When it breaks: Multi-agent systems multiply both capability and cost. Token usage scales with the number of agents and rounds, debugging spans multiple inference traces, and a misrouted handoff can cascade silently because each agent looks locally correct. Anthropic’s own guidance is to use multi-agent systems for tasks worth the token bill — research, complex coding, long-horizon planning — not for tasks a single well-prompted agent can handle (When to use multi-agent systems).
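The scaling claim can be made concrete with back-of-the-envelope arithmetic. The sketch assumes every agent makes one call per round and that each call costs the same number of tokens; both are simplifications, and the 4,000-token figure is an illustrative placeholder, not a measurement.

```python
def estimate_tokens(agents: int, rounds: int, tokens_per_call: int) -> int:
    """Rough estimate: every agent makes one equal-sized call per round."""
    return agents * rounds * tokens_per_call


# Illustrative numbers only.
single_agent = estimate_tokens(agents=1, rounds=1, tokens_per_call=4000)
three_way_debate = estimate_tokens(agents=3, rounds=3, tokens_per_call=4000)
multiplier = three_way_debate // single_agent
```

Under these assumptions a three-agent, three-round debate costs nine times the single-agent run, before counting any growth in context as drafts accumulate.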

Framework status notes:

- OpenAI Swarm: Repository not actively maintained since the OpenAI Agents SDK launched in March 2025. Use the Agents SDK for new work.

- AutoGen: In maintenance mode — bug and security fixes only. Microsoft directs users to Microsoft Agent Framework v1.0 for new projects.

- LangGraph supervisor library: LangChain now recommends building the supervisor directly via tool-calling for most cases; the dedicated library is still available but offers less context-engineering control.

The Data Says

A multi-agent system is a control graph, not a chat room. Anthropic’s orchestrator-worker shape outperformed single-agent Opus 4 by 90.2% on its internal research eval, but the gain is conditional on the task actually decomposing. Pick the architecture — supervisor, debate, or swarm — that matches where the planning effort needs to live, and put real engineering into the memory layer or none of it works.

AI-assisted content, human-reviewed. Images AI-generated.