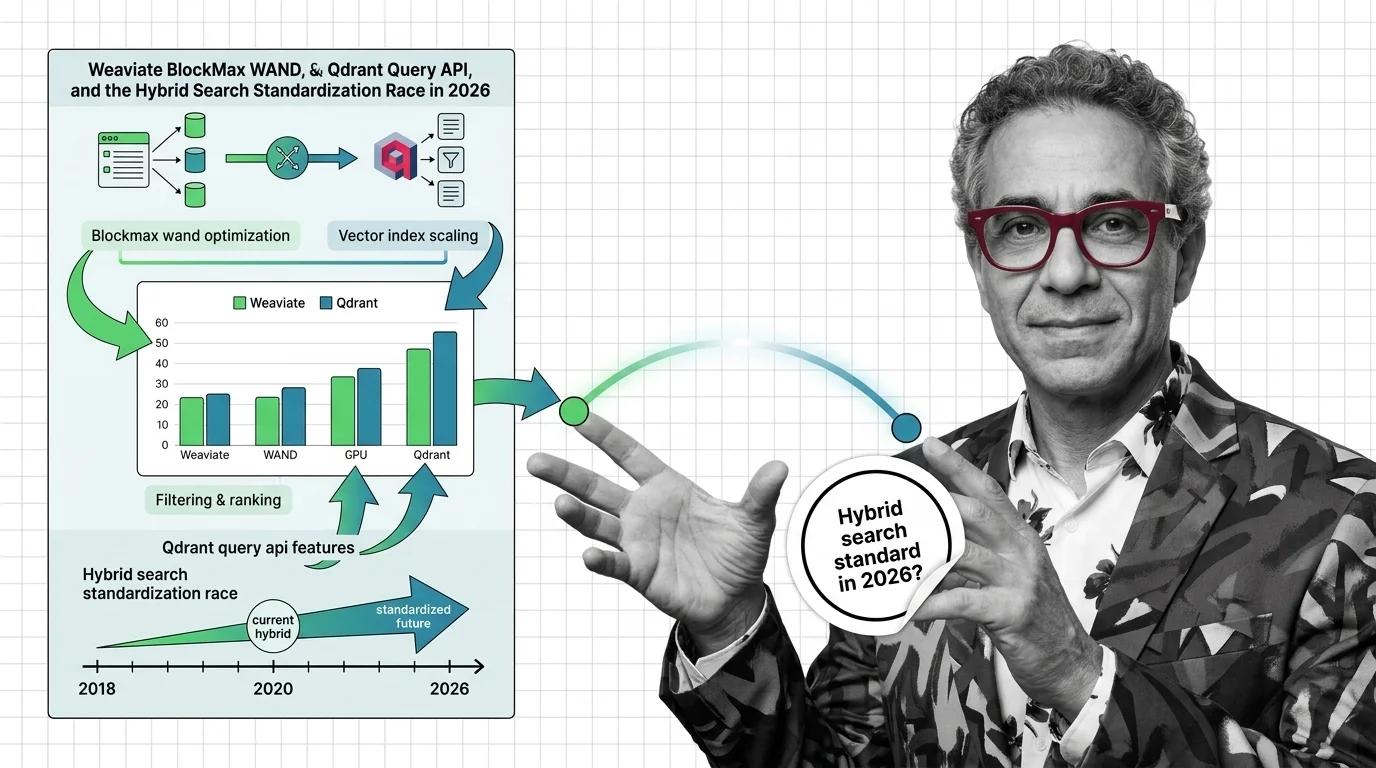

Weaviate BlockMax WAND, Qdrant Query API: The 2026 Hybrid Search Race

TL;DR

- The shift: Hybrid search stopped being a vendor feature and became a baseline pattern — dense plus sparse plus Reciprocal Rank Fusion behind one query endpoint.

- Why it matters: The competitive question for RAG infrastructure moved from “do you support hybrid?” to “how fast, how cheap per byte, and how composable with rerankers?”

- What’s next: Vendors who don’t ship sub-50ms BM25 and unified query APIs by Q3 2026 lose the agentic-RAG buying cycle.

A year ago, hybrid search was a checkbox in a vendor evaluation. This quarter it’s a pricing-model question. Three release notes, from vendors sitting in three different parts of the stack, describe the same architecture, all shipped inside eighteen months. The convergence is the news.

The Hybrid Search Race Just Settled Into a Shape

Thesis: hybrid search is no longer a competitive edge — it is a commoditized substrate, and the new battleground is throughput and cost per byte.

The pattern locked in across the entire vector-database ecosystem within a single buying cycle: dense vector retrieval, sparse lexical retrieval, and Reciprocal Rank Fusion (RRF) behind one endpoint. That is the shape every serious vendor shipped, and the shape that Retrieval-Augmented Generation (RAG) buyers now expect by default.
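For readers who have not looked inside the fusion step, here is a minimal sketch of Reciprocal Rank Fusion in plain Python. It assumes 1-based ranks and the commonly used constant k=60; the function name and the sample document IDs are hypothetical, and real engines may differ in rank offset and tie-breaking.

```python
from collections import defaultdict


def reciprocal_rank_fusion(rankings, k=60):
    """Fuse several ranked lists of document IDs into one ranked list.

    Each document scores the sum of 1 / (k + rank) over the lists it
    appears in, with rank starting at 1 (an assumption; some engines
    start at 0). Documents absent from a list add nothing for that list.
    """
    scores = defaultdict(float)
    for ranking in rankings:
        for rank, doc_id in enumerate(ranking, start=1):
            scores[doc_id] += 1.0 / (k + rank)
    # Highest fused score first; ties broken by ID so output is deterministic.
    return sorted(scores.items(), key=lambda item: (-item[1], item[0]))


# Hypothetical result lists from a dense retriever and a BM25 retriever.
dense_hits = ["doc_a", "doc_b", "doc_c"]
sparse_hits = ["doc_c", "doc_a", "doc_d"]

fused = reciprocal_rank_fusion([dense_hits, sparse_hits])
for doc_id, score in fused:
    print(f"{doc_id}: {score:.6f}")
```

Because RRF only looks at ranks, it never has to reconcile cosine similarities with BM25 scores, which is a large part of why it works as a default.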

That’s not a feature war. That’s a category collapse.

The implications cascade. Hybrid search stopped justifying premium pricing the moment Postgres extensions could do it natively. Differentiation moved one layer up: to query latency, index footprint, reranking support, and how cleanly the stack drops into an agentic RAG loop.

You’re either competing on infrastructure economics now, or you’re selling something the market already considers free.

Three Vendors, One Architecture Bet

The evidence is that the same bet got placed across very different parts of the stack — and it landed.

Weaviate shipped BlockMax WAND in v1.30, promoting it from technical preview to general availability (Weaviate Blog). In Weaviate’s internal benchmarks, query latency dropped by up to 94% on the Fever dataset and 80% on MS Marco. The inverted index compressed by 50–90%, with one MS Marco index shrinking from 10,531MB to 941MB, a reduction of about 91% (Weaviate Blog). That is not an incremental optimization. That is a category reset on lexical retrieval inside a vector database.

Qdrant is at v1.17.1 as of March 2026 (Qdrant Releases). The structural move came earlier: the Query API in v1.10, released July 2024, unified hybrid retrieval behind a single server-side endpoint with RRF as the default fusion and DBSF as an alternative (Qdrant Docs). In 2025, Qdrant added score-boosting reranking, ACORN filtered HNSW, MMR, and ColBERT-style late interaction (Qdrant 2025 Recap). The 2026 roadmap lists 4-bit quantization, read/write segregation, and scalable multi-tenancy.
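To make the "single server-side endpoint" concrete, the sketch below builds the JSON body for a hybrid request to Qdrant's `POST /collections/{collection_name}/points/query` route. It reflects the request shape as I understand it from the Qdrant documentation, so check it against the docs for your version. The helper name and the vector names `dense` and `sparse` are assumptions that depend on how the collection was configured. No network call is made.

```python
import json


def build_hybrid_query(dense_vector, sparse_indices, sparse_values,
                       fusion="rrf", prefetch_limit=20, limit=10):
    """Build a Query API request body that fuses dense and sparse results.

    Hypothetical helper: "dense" and "sparse" are assumed vector names.
    """
    if fusion not in ("rrf", "dbsf"):
        raise ValueError("fusion must be 'rrf' or 'dbsf'")
    if len(sparse_indices) != len(sparse_values):
        raise ValueError("sparse indices and values must have equal length")
    return {
        # Each prefetch runs one retriever and returns its own candidates.
        "prefetch": [
            {
                "query": {"indices": list(sparse_indices),
                          "values": list(sparse_values)},
                "using": "sparse",
                "limit": prefetch_limit,
            },
            {
                "query": list(dense_vector),
                "using": "dense",
                "limit": prefetch_limit,
            },
        ],
        # The outer query fuses the prefetched candidates on the server.
        "query": {"fusion": fusion},
        "limit": limit,
    }


body = build_hybrid_query([0.01, 0.45, 0.67], [1, 42], [0.22, 0.8])
print(json.dumps(body, indent=2))
```

Switching the `fusion` argument to `"dbsf"` selects the alternative fusion method; the rest of the request stays the same, which is the practical appeal of a unified endpoint.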

Postgres caught up in the same window. ParadeDB pairs pg_search BM25 with pgvector. Tiger Data shipped pg_textsearch v1.0 GA in March 2026 (Tiger Data Blog). Elasticsearch’s RRF retriever has been native since v8.9 with k=60 as the default (Elastic). Redis 8.4 added FT.HYBRID — BM25 plus vector plus filter in one atomic operation.

Different stacks, same architecture. When five independent vendors converge on RRF and a unified endpoint inside eighteen months, that’s not coincidence. That’s the market crowning a winner pattern.

Compatibility notes:

- Weaviate BlockMax WAND: New on-disk inverted-index format, not backwards compatible with pre-v1.30 segments. Online migration is provided, but plan a maintenance window for large clusters (Weaviate GitHub).

- Weaviate v1.28/v1.29: BlockMax WAND was technical preview only in these versions. Treat its BM25 behavior there as preview-quality until you upgrade to v1.30+.

- Qdrant storage engine: v1.17.x removes RocksDB in favor of Gridstore. Direct upgrade from v1.15.x to v1.17.x is blocked — step through v1.16.x first.

The Winners

The vendors that owned both lexical and vector engines walk out with leverage. Weaviate shipped BM25 inside the same database — no second hop, no second cluster, no orchestration layer between dense and sparse. Qdrant exposed both through one Query API call, fused server-side. Buyers picking RAG infrastructure right now don’t want to operate two systems.

Postgres-native shops also win this cycle. Teams that resisted standing up a separate vector store just got vindicated. Tiger Data and ParadeDB closed the gap on Elasticsearch-grade hybrid retrieval inside the database the team already runs. The “do we really need a dedicated vector DB?” conversation tilted decisively in Q1 2026.

Reranker infrastructure providers inherit the rest. When fusion becomes table stakes, the next layer up — cross-encoder reranking, late-interaction models, learned sparse retrieval — becomes the new differentiator. The faster you commoditize, the more value flows to the layer above.

The Losers

Three positions got harder to defend in a matter of months.

Vendors selling hybrid search as a premium SKU just watched their pricing card age. If a Postgres extension can deliver BM25 plus vector plus RRF inside a transaction, the standalone “hybrid premium” is a tax buyers won’t keep paying.

Single-modality vector databases are the second casualty. Anyone shipping dense-only retrieval in 2026 is selling half a stack. The hybrid pattern beats dense-only on workloads with rare tokens, code, IDs, and named entities — which is most production RAG.

Teams that built custom dense-plus-BM25 plumbing in 2024 absorb the third hit. That work now duplicates what the platform layer ships natively. You’re either migrating onto a native Query API this year or maintaining infrastructure your vendor gives away.

What Happens Next

Base case (most likely): RRF with k=60 cements as the default fusion across every major vector database, and the competitive battle shifts to query latency and index footprint per dollar. Signal to watch: A second vendor publishes BlockMax WAND-class latency drops on standard benchmarks within two release cycles. Timeline: Q4 2026.

Bull case: Hybrid retrieval gets absorbed into agentic loops as a primitive, with agentic-RAG frameworks calling unified query endpoints natively. Composability — not raw recall — becomes the win condition. Signal: A frontier agent framework ships first-class adapters that treat Qdrant, Weaviate, and Postgres as interchangeable retrieval backends. Timeline: Mid-2027.

Bear case: Score normalization across heterogeneous retrievers stays brittle. RRF papers over the problem but cross-encoder rerankers eat the entire downstream value, leaving hybrid as a cost line on the way to a reranker call. Signal: Production teams quietly bypass server-side fusion and re-run cross-encoder rerankers on the top results from each retriever in parallel. Timeline: End of 2026.
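The bypass pattern in the bear case can be sketched as follows: take the top results from each retriever, deduplicate them, and let a single scorer decide the final order, skipping rank fusion entirely. This is an illustration only. `rerank_union` and `toy_overlap_score` are hypothetical names, and the word-overlap scorer is a stand-in for a real cross-encoder model.

```python
def rerank_union(query, retriever_results, score_fn, top_n=5):
    """Merge candidates from several retrievers and rerank with one scorer.

    retriever_results is a list of hit lists, each hit a (doc_id, text) pair.
    score_fn(query, text) returns a relevance score; higher is better.
    """
    candidates = {}
    for hits in retriever_results:
        for doc_id, text in hits:
            # Keep the first copy of each document; later duplicates are dropped.
            candidates.setdefault(doc_id, text)
    scored = [(doc_id, score_fn(query, text))
              for doc_id, text in candidates.items()]
    scored.sort(key=lambda pair: (-pair[1], pair[0]))
    return scored[:top_n]


def toy_overlap_score(query, text):
    """Stand-in scorer: fraction of query words that appear in the text."""
    query_words = set(query.lower().split())
    text_words = set(text.lower().split())
    if not query_words:
        return 0.0
    return len(query_words & text_words) / len(query_words)


# Hypothetical top results from a dense and a sparse retriever.
dense_hits = [("d1", "Qdrant Query API unifies hybrid retrieval"),
              ("d2", "Weaviate ships BlockMax WAND")]
sparse_hits = [("d3", "query api reference"),
               ("d1", "Qdrant Query API unifies hybrid retrieval")]

reranked = rerank_union("qdrant query api", [dense_hits, sparse_hits],
                        toy_overlap_score)
print(reranked)
```

In this arrangement the retrievers only supply candidates, so all of the ranking quality, and most of the cost, sits in the scorer. That is the value shift the bear case describes.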

Frequently Asked Questions

Q: Where is hybrid search heading in 2026 and which vendors are setting the standard?

A: Toward a commoditized baseline: dense plus sparse plus RRF behind one endpoint. Weaviate sets the lexical-throughput bar with BlockMax WAND, Qdrant sets the API shape with the Query API, and Postgres extensions set the floor. The race moved from “support” to “speed and economics.”

The Bottom Line

Hybrid search just stopped being a feature and started being plumbing. The vendors that thrive from here will be the ones with the lowest cost per query and the cleanest agent integrations — not the ones with the longest feature list. Watch BlockMax WAND-class latency announcements; that’s where the next move shows up.

AI-assisted content, human-reviewed. Images AI-generated.