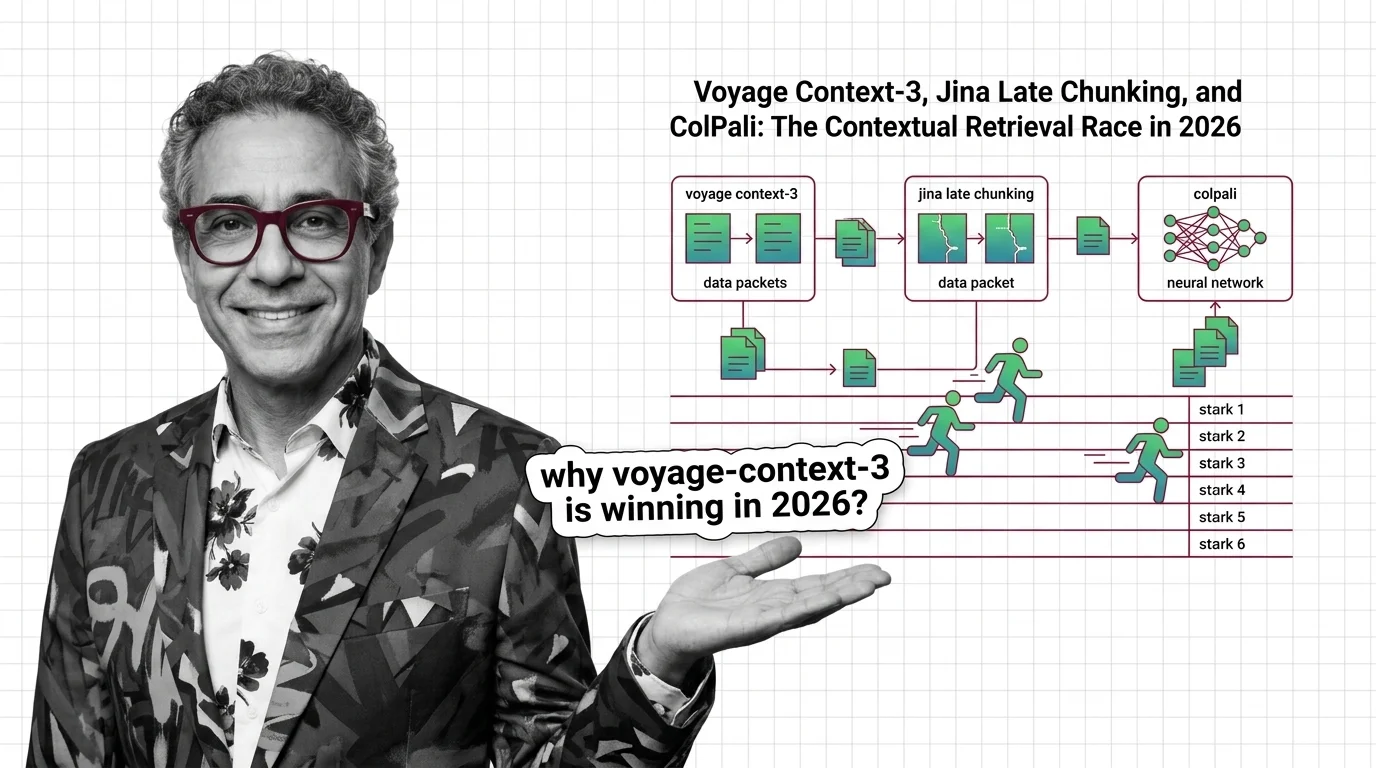

voyage-context-3, Jina Late Chunking, ColPali: Contextual Retrieval in 2026


TL;DR

- The shift: Contextual Retrieval stopped being a prompt recipe and became three competing architectures — contextualized embeddings, late chunking, and visual late interaction.

- Why it matters: Anthropic’s 2024 chunk-summary recipe is now the baseline, not the frontier. Stacks built around it are already a generation behind.

- What’s next: ECIR 2026 just gave late interaction its own workshop. The retrieval layer is being rebuilt from the embedding model up.

For two years, “contextual retrieval” meant one thing: ask Claude to write a 50-token summary for every chunk in your corpus, prepend it, then index. By 2026 that recipe is the floor, not the ceiling. Three independent labs shipped three different replacements — and none of them ask you to pay an LLM to rewrite your documents.

The race is on. And the race is structural, not cosmetic.

The Recipe Era Just Ended

Thesis: Contextual retrieval has split from a single LLM-based preprocessing trick into three competing architectures that bake context directly into the retrieval layer.

Anthropic’s original 2024 recipe still works. Prepending an LLM-generated chunk summary cuts failed retrievals by 49% when the contextualized chunks feed both the embedding index and BM25, and by 67% once reranking is added (Anthropic News). At $1.02 per million document tokens, it was the first contextualization technique cheap enough to run at corpus scale.
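To make the recipe concrete, here is a minimal sketch of the prepend-then-index step. The `summarize` callable and the `fake_summarize` placeholder are hypothetical stand-ins for the LLM call, not Anthropic's code; a real pipeline would send the full document and the chunk to a model and get back a short context line.

```python
from typing import Callable, List


def contextualize_chunks(
    document: str,
    chunks: List[str],
    summarize: Callable[[str, str], str],
) -> List[str]:
    """Prepend a short context line to each chunk before it is indexed.

    `summarize(document, chunk)` is a hypothetical stand-in for the LLM call.
    """
    contextualized = []
    for chunk in chunks:
        context = summarize(document, chunk).strip()
        contextualized.append(f"{context}\n\n{chunk}")
    return contextualized


def fake_summarize(document: str, chunk: str) -> str:
    # Placeholder so the sketch runs offline; it only reuses the first line
    # of the document as a title instead of calling a model.
    title = document.splitlines()[0]
    return f"From the document '{title}'."


doc = "ACME Q2 Report\nRevenue grew 3% over the previous quarter.\nCosts were flat."
chunks = doc.splitlines()[1:]  # naive splitter: one chunk per line
indexed_texts = contextualize_chunks(doc, chunks, fake_summarize)
print(indexed_texts[0])
```

The strings in `indexed_texts` are what would be embedded and added to the BM25 index in place of the raw chunks. The cost of the recipe lives entirely in the `summarize` call, which runs once per chunk.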

That was the floor. Three labs just raised it without asking you to rewrite a single chunk.

Voyage AI moved the contextualization step inside the embedding model. Jina moved it inside the long-context forward pass. Illuin sidestepped chunking entirely by treating the document as an image. Same problem, three structural answers, all shipping in production.

The pure-recipe era just ended.

Three Releases, One Direction

The convergence is the signal. When three independent teams pick three different paths to the same destination, the destination is real.

Path one: contextualization inside the embedding model. voyage-context-3 shipped on July 23, 2025 (Voyage AI Blog). Each chunk embedding is computed with full document context already mixed in — no separate LLM call, no prepended summary, no second pass. On Voyage’s own benchmarks the model beats Anthropic’s recipe by 6.76% at the chunk level and 2.40% at the document level (Voyage AI Blog). Treat those numbers as a vendor claim, not peer-reviewed truth. The structural point holds regardless: the contextualization moved into the model weights.

Path two: late chunking on long-context encoders. Jina’s late chunking, formalized in arXiv 2409.04701 (Late Chunking arXiv) and shipped in jina-embeddings-v3 via a single late_chunking=true parameter (Jina AI Docs), keeps your naive splitter. The encoder runs across the whole 8192-token document first, then the chunk vectors are pooled from that contextualized pass. Same chunks, different math. voyage-context-3 still beats it by more than 20 points head-to-head (Voyage AI Blog), but late chunking’s appeal is operational — you keep your existing pipeline and flip a flag.
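The "same chunks, different math" idea can be sketched in a few lines. This is an illustration under stated assumptions, not Jina's implementation: the token embeddings are hard-coded toy values standing in for the output of one forward pass over the whole document, mean pooling is assumed as the pooling step, and the chunk boundaries are given as token index spans.

```python
from typing import List, Sequence, Tuple

Vector = List[float]


def mean_pool(vectors: Sequence[Vector]) -> Vector:
    """Average a non-empty sequence of equal-length vectors."""
    n = len(vectors)
    return [sum(column) / n for column in zip(*vectors)]


def late_chunk(
    token_embeddings: Sequence[Vector],
    spans: Sequence[Tuple[int, int]],
) -> List[Vector]:
    """Pool one vector per chunk from token embeddings of the WHOLE document.

    Each span is a half-open [start, end) range of token indices.
    """
    return [mean_pool(token_embeddings[start:end]) for start, end in spans]


# Toy stand-in for encoder output: 6 tokens, 2 dimensions each.
# In real late chunking these come from a single long-context forward pass,
# so every token vector has already attended to the rest of the document.
token_embeddings = [
    [1.0, 0.0], [3.0, 0.0],
    [0.0, 2.0], [0.0, 4.0],
    [2.0, 2.0], [4.0, 4.0],
]
spans = [(0, 2), (2, 4), (4, 6)]  # boundaries from the existing naive splitter
chunk_vectors = late_chunk(token_embeddings, spans)
print(chunk_vectors)
```

The difference from naive chunking is only where the pooling happens: the splitter still decides the boundaries, but the vectors being pooled were computed with the full document in view.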

Path three: skip text chunking entirely. ColPali (arXiv 2407.01449) treats every PDF page as an image, embeds it through PaliGemma-3B, and matches queries with ColBERT-style multi-vector late interaction. Its descendants (ColQwen2.5, ColQwen3.5, ColSmol) now sit at the top of the ViDoRe leaderboard for visual document retrieval (illuin-tech GitHub). For documents where layout, tables, and figures carry the meaning, this isn’t a better chunker. It’s the abolition of the chunker.
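ColBERT-style late interaction scores a query against a page by letting each query vector pick its best-matching page vector and summing those maxima (often called MaxSim). The sketch below shows only that scoring step with toy two-dimensional vectors; the page names and values are made up for illustration, and a real system would get the multi-vector embeddings from a ColPali-family model.

```python
from typing import Dict, List, Sequence

Vector = Sequence[float]


def dot(a: Vector, b: Vector) -> float:
    return sum(x * y for x, y in zip(a, b))


def maxsim_score(query_vectors: Sequence[Vector], doc_vectors: Sequence[Vector]) -> float:
    """For each query vector, take its best dot product over all document
    vectors, then sum those per-query maxima."""
    return sum(max(dot(q, d) for d in doc_vectors) for q in query_vectors)


# Toy multi-vector embeddings: one vector per query token or page patch.
query: List[List[float]] = [[1.0, 0.0], [0.0, 1.0]]
pages: Dict[str, List[List[float]]] = {
    "page_a": [[1.0, 0.0], [0.0, 1.0], [0.5, 0.5]],  # covers both query vectors
    "page_b": [[1.0, 0.0], [0.6, 0.0]],              # covers only the first
}

scores = {name: maxsim_score(query, vectors) for name, vectors in pages.items()}
ranked = sorted(scores, key=scores.get, reverse=True)
print(scores, ranked)
```

Because every page keeps many vectors instead of one, the index must store and search multi-vector records, which is why vector database support matters for this path.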

Three paths. One verdict: contextualization belongs in the retrieval architecture, not in a preprocessing prompt.

Who Moves Up

Embedding providers that own the contextualization step now own the moat. Voyage AI — already inside MongoDB’s stack — shipped the first model that makes Anthropic’s recipe redundant for greenfield builds, with 256/512/1024/2048-dim Matryoshka outputs and binary quantization that compresses storage by 99.48% versus OpenAI-v3-large at comparable recall (Voyage AI Blog). Storage cost just stopped being an argument against switching.
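The 99.48% figure is easy to sanity-check with arithmetic. The sketch below assumes the comparison is a 512-dimension binary vector (1 bit per dimension) against a 3072-dimension float32 vector (32 bits per dimension); that pairing is an assumption about which configurations the claim refers to, and the helper names are hypothetical. It also shows what truncating and binarizing a vector looks like in the simplest sign-based form.

```python
from typing import List, Sequence


def vector_bits(dimensions: int, bits_per_dimension: int) -> int:
    """Raw storage for one vector, ignoring index overhead."""
    return dimensions * bits_per_dimension


def truncate_and_binarize(vector: Sequence[float], dimensions: int) -> List[int]:
    """Keep the leading `dimensions` components (Matryoshka-style truncation),
    then map each component to 1 if it is positive, else 0."""
    return [1 if x > 0 else 0 for x in vector[:dimensions]]


baseline_bits = vector_bits(3072, 32)  # assumed: 3072-dim float32 vector
compact_bits = vector_bits(512, 1)     # assumed: 512-dim binary vector
reduction_pct = 100 * (1 - compact_bits / baseline_bits)
print(f"{reduction_pct:.2f}% smaller")

# Toy 6-dim vector truncated to 4 dims and binarized by sign.
bits = truncate_and_binarize([0.3, -0.1, 0.0, 0.8, -0.5, 0.2], 4)
print(bits)
```

Under those assumptions the storage ratio is 512 / 98,304, which matches the quoted reduction. Whether recall stays comparable at that size is the vendor's claim and is not something this arithmetic can confirm.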

Vector databases that already support multi-vector late interaction (Vespa, Qdrant, Weaviate) pick up the ColVision wave for free. Competitors that only handle single dense vectors must choose between a hard rebuild and watching visual-document retrieval-augmented generation move to someone else’s stack.

Teams that already ship retrieval as a service. ECIR 2026 hosting the First Workshop on Late Interaction and Multi-Vector Retrieval (LIR Workshop arXiv) is the academic stamp on what production teams shipped two years ago. The research conversation finally caught up to the deployments.

Who Gets Left Behind

Anyone whose RAG stack is “OpenAI embeddings + naive splitter + cosine similarity” is running 2023’s playbook against 2026’s competition. That stack still works. It just no longer wins.

Vendors selling LLM-based chunk-summarization as a product feature have a problem. The technique now competes with embedding models that produce contextualized vectors directly — no extra inference pass, no rewrite cost, no retention of intermediate summaries. The recipe survives as a fallback for teams locked into a specific embedding provider. As a moat, it just evaporated.

Single-vector dense retrieval as a category. ColPali, ColQwen, and the late-interaction lineage prove that for visual or layout-heavy corpora, one vector per chunk leaves accuracy on the table. Single-dense isn’t dead — it’s the right tool for short, clean text — but it just lost its claim to being the default for everything.

What Happens Next

Base case (most likely): Voyage AI ships voyage-context-4 within the next two quarters, every major embedding provider follows with a contextualized variant, and Anthropic’s chunk-summary recipe becomes legacy infrastructure quoted only in tutorials. Signal to watch: A Cohere or OpenAI release that explicitly markets “contextualized chunk embeddings” as a product category. Timeline: End of 2026.

Bull case: ColPali-style visual late interaction goes mainstream for enterprise document RAG. Vector databases that support multi-vector retrieval pull ahead, and PDF-heavy verticals — legal, finance, pharma — migrate off text-only pipelines. Signal: A Tier 1 vector DB ships first-class ColVision support with managed inference. Timeline: Mid-2027.

Bear case: Long-context LLMs keep getting cheaper and the entire retrieval layer compresses into “stuff the document into the context window.” Contextual embeddings become a niche optimization for cost-sensitive deployments. Signal: Frontier labs ship a 10M-token model at sub-cent-per-call pricing. Timeline: Late 2027 at the earliest.

Frequently Asked Questions

Q: How is voyage-context-3 outperforming Anthropic’s contextual retrieval recipe in 2026?
A: voyage-context-3 bakes document context into the embedding model itself, eliminating the LLM rewrite step. On Voyage’s benchmarks it beats Anthropic’s recipe by 6.76% at chunk level and 2.40% at document level, with no per-chunk LLM call to pay for (Voyage AI Blog).

Q: Where is contextual retrieval heading in 2026 with late chunking and multimodal late interaction?
A: Toward three coexisting architectures: contextualized embeddings for general text, late chunking for teams keeping legacy pipelines, and ColPali-style visual late interaction for layout-heavy documents. The single “right” stack is gone, so match the architecture to the corpus.

The Bottom Line

Anthropic’s 2024 recipe taught the industry that context belongs in the retrieval step. The 2026 answer is that context belongs in the model, not in a prompt wrapper. You’re either evaluating contextualized or late-interaction embeddings now, or you’re shipping last year’s accuracy at next year’s prices.

Watch for voyage-context-4 and the next ColQwen drop. Both will arrive faster than your procurement cycle.

