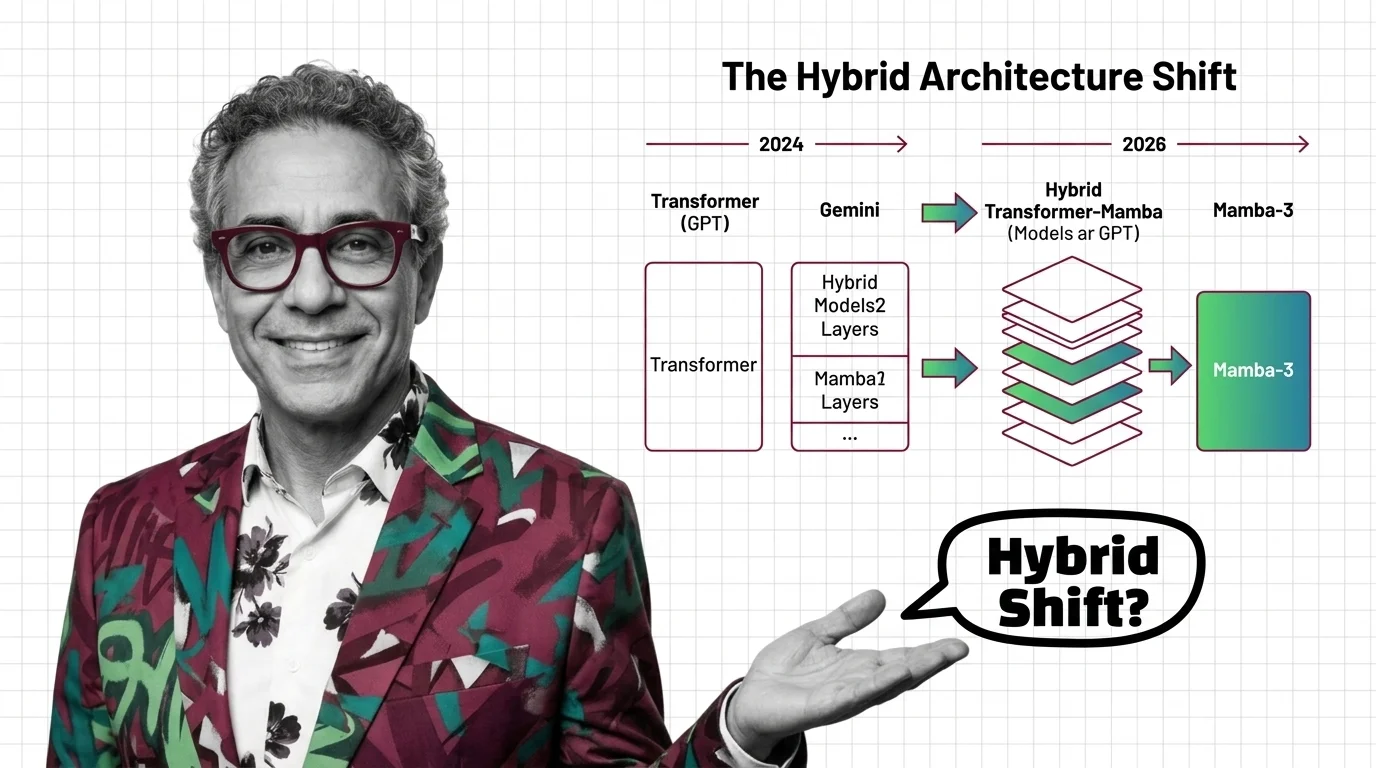

Transformers in 2026: GPT to Gemini, Mamba-3, and the Hybrid Architecture Shift

Table of Contents

TL;DR

- What happened: Mamba-3 launched March 17, NVIDIA shipped Nemotron 3 Super on March 11, and the architecture race just split into three lanes: pure transformer, pure SSM, and hybrid.

- Why it matters: The transformer monopoly that started with “Attention Is All You Need” in 2017 is fracturing. Hybrid models are no longer experiments. They are shipping.

- What’s next: Hybrid Mamba-transformer designs are the new default for frontier training runs. Pure transformers are not dead, but their cost advantage on long-context workloads is gone.

Two releases in six days. That is the speed at which the Transformer Architecture debate went from academic to operational. NVIDIA dropped Nemotron 3 Super on March 11. Together AI and academic collaborators released Mamba-3 on March 17. One is a hybrid. The other is a pure State Space Model. Both target the same bottleneck: the quadratic cost of attention at scale.

Two Releases, Six Days, One Architecture Split

NVIDIA’s Nemotron 3 Super shipped March 11, 2026. A hybrid Mamba-Transformer mixture-of-experts model with 120B total parameters, 12B active at inference, and a 1M token context window (NVIDIA Blog). The design uses Latent MoE for higher expert density, multi-token prediction for a reported 3x inference speedup, and NVFP4 pretraining for hardware efficiency.

Six days later, Mamba-3 arrived. Released March 17 from researchers at CMU, Princeton, Together AI, and Cartesia AI. Published at ICLR 2026 under Apache 2.0 (Together AI Blog). At 1.5B parameters, it delivers roughly 4% relative accuracy gain over a transformer baseline with up to 7x faster inference on long sequences running on H100 hardware (VentureBeat). Mamba-3 introduces complex-valued states, MIMO decoding, and exponential-trapezoidal discretization, resulting in a 2x smaller state compared to Mamba-2 (Together AI Blog).

One constraint: Mamba-3’s benchmarks are at 1.5B scale only. Larger-scale results are not yet available.

The Architecture Map as of March 2026

The original 2017 transformer used an Encoder Decoder design. That blueprint has splintered.

OpenAI’s GPT-5.4 runs a decoder-only transformer with grouped-query Multi Head Attention and sliding-window attention, pushing up to 1M context tokens (Applying AI). Anthropic’s Claude Opus 4.6, released February 5, and Sonnet 4.6, released February 17, are transformer-based with 1M context windows and pricing at $5/$25 and $3/$15 per million tokens respectively (Anthropic Docs). Neither OpenAI nor Anthropic has publicly disclosed internal architecture details like parameter counts or exact layer composition.

Google’s Gemini 2.5 Pro runs a sparse mixture-of-experts transformer with 1M context and a 2M target, natively multimodal from the ground up (Google DeepMind). Meta’s Llama 4 went wide: Llama 4 Scout uses 17B active out of 109B total parameters across 16 experts with a 10M token context window. Maverick scales to 17B active out of 400B total with 128 experts (Meta AI Blog). The Tokenization and Embedding pipelines feed into architectures that now diverge dramatically in how they route computation.

Then there are the hybrids. NVIDIA’s Nemotron 3 Super interleaves Mamba layers with transformer Feedforward Network and Attention Mechanism blocks under MoE routing. AI21’s Jamba 1.5 runs a hybrid Transformer-Mamba-MoE design with 398B total parameters, 94B active, and a 256K context window under Apache 2.0 (AI21 Blog).

The pure transformer still dominates deployed models. But the architecture diversity in active development has never been wider.

The Hybrid Thesis

Thesis: The industry is converging on hybrid architectures that combine attention strength with SSM efficiency, not choosing sides.

The old framing was transformers versus alternatives. Dead framing.

What is happening is a merge. Standard transformers pair attention layers with Positional Encoding to handle recall-intensive tasks where full pairwise token comparison matters. SSM layers handle long-range dependencies with linear scaling instead of quadratic. The hybrid approach gets both properties in one model.

NVIDIA’s Nemotron 3 Super is proof of concept at production scale. AI21 shipped Jamba 1.5 with the same thesis. The industry consensus behind hybrids is growing fast (AI21 Blog).

The economics push the same direction. Quadratic scaling means compute costs explode with context length. SSM layers change that math. When longer sequences cost less to process, the business case for hybrids writes itself.

Who Moves Up

NVIDIA. Not just selling GPUs anymore. Shipping the architecture that runs on those GPUs. Nemotron 3 Super positions them as infrastructure and model provider simultaneously.

Meta. Open-weight MoE at Llama 4 scale gives them distribution. Every startup that cannot train from scratch becomes a Meta downstream consumer. The 10M context window on Scout is a statement of intent.

Together AI and the academic SSM researchers. Mamba-3 under Apache 2.0 means the open-source ecosystem can build on it. If hybrid architectures become standard, the teams that built the SSM components become essential suppliers.

Who Gets Left Behind

Any team building exclusively on one architecture without a hybrid strategy. If your entire stack assumes pure transformer attention, and the cost curve favors hybrids on long-context workloads, you are carrying technical debt that compounds quarterly.

Any developer relying on the Hugging Face Transformers library without watching the API surface. The v5.0 release introduces breaking changes: WeightConverter refactoring, tokenizer consolidation, and deprecated class removals. Upgrade plans are not optional.

Organizations waiting for a clear winner before committing. There is no clear winner coming. The architecture race is branching, not converging on a single design. Waiting is a strategy for falling behind.

What Happens Next

Base case (most likely): Hybrid Mamba-transformer architectures become the default for new large-scale training runs by late 2026. Pure transformers remain dominant in production due to existing infrastructure, but new projects start hybrid-first. Signal to watch: A top-3 lab announces a hybrid flagship model. Timeline: Q3-Q4 2026.

Bull case: Mamba-3 scales beyond 1.5B and matches transformer quality at every benchmark. Hybrid models achieve a decisive cost-per-token advantage. Pure transformers start looking like legacy infrastructure. Signal: Mamba-3 results at 70B+ parameters with competitive quality. Timeline: H1 2027.

Bear case: SSM layers introduce training instability at scale. Hybrid architectures add complexity without clear quality wins. The industry doubles down on pure transformers and eats the compute cost. Signal: Multiple failed hybrid training runs from well-funded labs. Timeline: If no scaled SSM results by Q2 2027, the window narrows.

Frequently Asked Questions

Q: Which major AI models use transformer architecture in 2026? A: GPT-5.4, Claude Opus 4.6, Gemini 2.5 Pro, and Llama 4 all run on transformer-based architectures. NVIDIA’s Nemotron 3 Super and AI21’s Jamba 1.5 use hybrid Mamba-transformer designs. Pure SSMs like Mamba-3 are emerging but not yet at flagship scale.

Q: How do GPT, Claude, Gemini, and Llama each implement transformer architecture? A: GPT-5.4 uses a decoder-only design with grouped-query attention. Claude Opus 4.6 is transformer-based with 1M context. Gemini 2.5 Pro runs a sparse mixture-of-experts transformer. Llama 4 uses MoE with up to 128 experts and 400B total parameters in the Maverick variant.

Q: Will Mamba and state space models replace transformers in 2026? A: Not in 2026. Mamba-3 shows strong results at 1.5B scale, but larger benchmarks are pending. The industry trend is hybrid architectures combining transformer and SSM layers, not full replacement. The merge, not the overthrow, is the story.

Q: What are hybrid transformer-Mamba architectures like Nvidia Nemotron? A: Hybrid architectures interleave transformer attention layers with Mamba-style state space layers. Nemotron 3 Super uses this approach with MoE routing, running 12B active parameters from 120B total with 1M context. The goal: transformer-quality recall with linear-scaling efficiency.

The Bottom Line

The transformer is not dead. Its monopoly is. Every major release in March 2026 points the same direction: hybrid architectures that pair the recall strength of attention with the efficiency of state space models. You are either building for a multi-architecture future or maintaining a stack that gets more expensive by the quarter.

Disclaimer

This article discusses financial topics for educational purposes only. It does not constitute financial advice. Consult a qualified financial advisor before making investment decisions.

AI-assisted content, human-reviewed. Images AI-generated. Editorial Standards · Our Editors