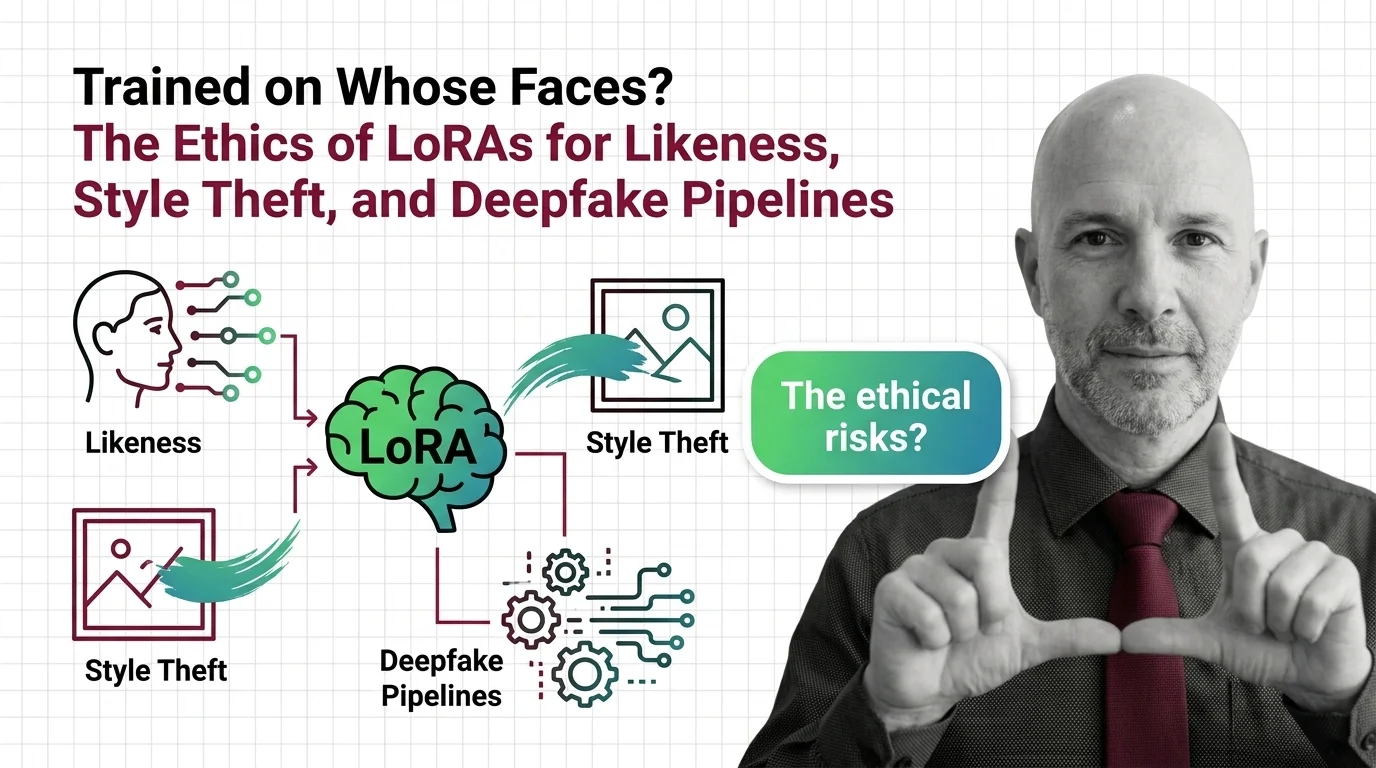

Trained on Whose Faces? LoRA Ethics: Likeness, Style Theft, Deepfakes

The Hard Truth

What happens to consent when fine-tuning a model on a specific human being takes fifteen minutes, twenty photographs, and a graphics card already sitting under a teenager’s desk? And what do we call the moral framework that arrives only after the tool is everywhere?

A LoRA is a small adapter: a few megabytes of weights that bend a much larger diffusion-model backbone toward a chosen subject or style. The technology itself is morally inert. The economy that grew around it is not. Somewhere between the academic paper and the Discord channel, training adapters on real people’s faces became something closer to a sport, and the question of who agreed to be in the corpus stopped being asked out loud.
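For readers who want to see why "a few megabytes" is plausible, here is a minimal back-of-the-envelope sketch. It assumes the standard low-rank formulation, in which each adapted weight matrix of shape d_out x d_in gains two thin factors of rank r. The hidden size, rank, and number of adapted matrices below are illustrative assumptions, not the dimensions of any particular model.

```python
def lora_param_count(d_out: int, d_in: int, rank: int) -> int:
    """Parameters added by one low-rank update B @ A,
    where B is d_out x rank and A is rank x d_in."""
    return rank * (d_out + d_in)


def adapter_size_bytes(n_matrices: int, d_out: int, d_in: int,
                       rank: int, bytes_per_param: int = 2) -> int:
    """Total adapter size, assuming every adapted matrix has the same shape
    and weights are stored in 16-bit precision (2 bytes per parameter)."""
    return n_matrices * lora_param_count(d_out, d_in, rank) * bytes_per_param


# Hypothetical values chosen only for illustration.
HIDDEN = 3072      # assumed width of each adapted square matrix
RANK = 4           # assumed LoRA rank
N_MATRICES = 100   # assumed number of adapted matrices

size_mib = adapter_size_bytes(N_MATRICES, HIDDEN, HIDDEN, RANK) / 2**20
full_params = N_MATRICES * HIDDEN * HIDDEN
adapter_params = N_MATRICES * lora_param_count(HIDDEN, HIDDEN, RANK)

print(f"adapter size: {size_mib:.2f} MiB")
print(f"adapter params as share of adapted weights: {adapter_params / full_params:.4%}")
```

Under these assumed numbers the adapter is a fraction of a percent of the weights it modifies, which is the point of the essay's framing: the file that encodes a person is small enough to pass around like an attachment.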

The Fifteen-Minute Question

The defining feature of LoRA training for image diffusion is not its accuracy. It is its accessibility. Twenty to thirty reference images and roughly fifteen minutes on a 24 GB VRAM consumer GPU are enough to produce a working likeness adapter for a Flux-class model (Hartzog et al., FAccT 2025). That sentence sounds neutral until you read it as a permissions architecture. The cost of fine-tuning a model on a specific human being has fallen below the cost of asking them.

By December 2024, 44.3% of all Flux LoRAs uploaded to Civitai sought to target identifiable individuals (Hartzog et al., FAccT 2025). Of roughly two thousand explicit deepfake models the same audit examined, 96% targeted identifiable women, with no documented evidence of consent. Across the broader hub-class ecosystem, around 35,000 publicly downloadable deepfake variants accounted for roughly 15 million downloads since November 2022. These are not edge cases. They are a category.

What the Adapters Promise

The case for permissive likeness LoRAs is not a strawman, and pretending otherwise weakens any argument against them. The original promise was real: low-rank adaptation made fine-tuning accessible to people who would never see the inside of a hyperscale datacenter. A small studio could train a custom style for a children’s book. A photographer could prototype a new aesthetic. An indie game team could keep a character visually consistent across thousands of frames. None of this requires a face that does not belong to the operator.

There is also a sincere argument that opt-in regimes concentrate power. If only Adobe and Getty can afford to license enough faces and styles to train competitive models, the public-interest case for open weights collapses into oligopoly. That tension is real, and the people who care most about democratized creative tooling are not the villains here. The hard part is that the same toolchain that lets a costume designer iterate on a fictional character also lets a stranger compile twenty photos from someone’s wedding album and produce an adapter that will reproduce them on demand.

What the Steelman Quietly Counts On

The democratization argument works only if a hidden premise holds: that the marginal cost of misuse stays bounded, and that platform-level moderation can absorb the rest. Neither has held. A January 2026 investigation into the bespoke deepfake economy found that a majority of paid bounty requests on Civitai were for LoRAs rather than finished images, that more than half were sexually explicit, and that 86% of deepfake-targeted bounties specifically commissioned LoRA work (MIT Technology Review). The marketplace did not emerge despite the platform’s stated policies; it routed around them.

The financial system noticed before most of the industry did. Visa and Mastercard halted card payments to Civitai in May 2025 over non-consensual content concerns (Unite.AI). When payment processors move faster than ethics committees, that is a signal about which institutions are actually feeling the harm. Civitai’s published rules forbid non-consensual intimate imagery; the documented gap is not policy but enforcement at the speed and scale at which adapters get uploaded. That is a different problem, and it does not get smaller as the tooling gets easier.

A Different Question, Borrowed From an Older Craft

We have a name for the moral injury at the heart of this. Likeness has been a contested category since photography forced courts, in the late nineteenth century, to confront the unauthorized use of a person’s image, a line of cases that eventually produced the right of publicity. What is new is not the wrong. What is new is that the wrong now scales by adapter rather than by photograph. A nineteenth-century portraitist could paint a senator without consent and the resulting harm was bounded by the canvas. A LoRA trained on the same senator can be invoked endlessly, in any context, by anyone with the file.

The right reference class for the LoRA debate is not copyright at all. It is the long argument over whether human likeness — face, voice, gait, signature gesture — should be treated as something a person retains by default rather than something they have to actively claim. Treating likeness as a withholdable resource is what most legal traditions already do, awkwardly, in fragments. Treating it as architecture is what generative tooling now demands. The current scaffolding is not built for that demand.

What the Reckoning Actually Is

Thesis: The LoRA debate is not about which adapters should exist; it is about whether consent can be retrofitted onto a fine-tuning ecosystem whose original design treated identifiable humans as default training material.

The current legal scaffolding is partial and uneven, and pretending otherwise inflates its protection. In the United States, the TAKE IT DOWN Act was signed into federal law on May 19, 2025, criminalizing knowing publication of non-consensual intimate imagery — including AI-generated material — and requiring covered platforms to remove such content within 48 hours of victim notice (Orrick analysis). Penalties run up to two years in federal prison for adult-victim cases and three years where minors are involved. Platform compliance obligations begin May 19, 2026. The DEFIANCE Act, which would create a civil cause of action with statutory damages, passed the Senate by unanimous consent in July 2024 but stalled in the House and was reintroduced in January 2026; as of April 2026 it is not federal law (Reality Defender summary). On the EU side, Article 50 of the AI Act becomes binding for deepfake disclosure obligations on August 2, 2026 (EU AI Act portal). Andersen v. Stability AI — the closest thing the industry has to a merits test on training-data legality — has a trial scheduled for September 8, 2026 in the Northern District of California, with no ruling on the merits yet (BakerHostetler litigation tracker).

These instruments share a feature. Each addresses one harm category at a time. Non-consensual sexual imagery has the strongest federal scaffolding. Style theft of a named living artist has almost none. Likeness fraud against a private person sits in a patchwork of state laws and the Federal Trade Commission’s impersonation rule. The reckoning, such as it is, is fragmentary by design.

What We Can Sit With

There is no clean prescription here, and pretending otherwise would betray the difficulty of the problem. Three questions are worth holding open. First: should the unit of consent in a fine-tuning ecosystem be the original training image, the resulting adapter, or the downstream output — and who bears the responsibility for tracking that chain? Second: what does meaningful artist-side defense look like when the most-downloaded protective tools, like the University of Chicago’s Glaze and Nightshade, are designed to raise the cost of unlicensed training rather than prevent it (MIT Technology Review)? And third: what does it mean that the most active governance work on identifiable-person LoRAs is being done not by ethics boards but by payment processors and victim-led legislation?

Where This Argument Is Weakest

The honest weakness of this position is that I cannot offer a consent regime for likeness LoRAs that does not concentrate enforcement in a small number of platforms with the resources to operate it. If that consolidation is unacceptable, the alternative is a tooling ecosystem where the rules are whatever the most permissive hub allows. I do not think those are the only two options, but I cannot draw the third one cleanly. If a workable distributed model emerges that resists both oligopoly and unaccountable hosts, this argument deserves to be revised.

The Question That Remains

Adapters are tiny. Consent infrastructure is large. We have built a generation of tooling whose unit cost dropped faster than our moral grammar grew, and we are now asking the people most harmed to build the safety layer the architects skipped. Whose faces should it have been, and whose burden should it be now?

Ethically, Alan.

Disclaimer

This article is for educational purposes only and does not constitute professional advice. Consult qualified professionals for decisions in your specific situation.

AI-assisted content, human-reviewed. Images AI-generated.