Trained on Bias, Deployed on Faces: The Ethical Cost of CNN-Powered Surveillance Systems

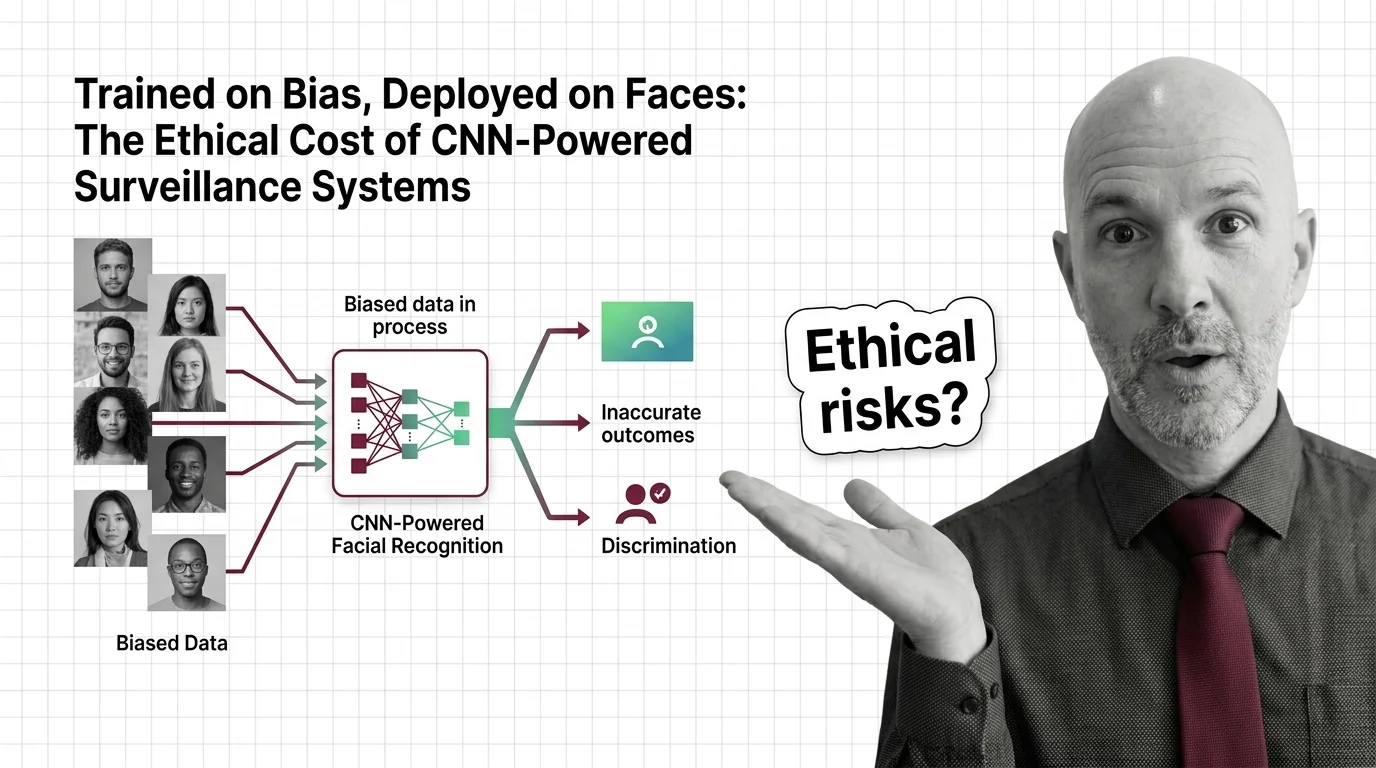

The Hard Truth

If a system is right 98% of the time but wrong almost exclusively about people who look like you — is it accurate, or is it a weapon dressed in statistics?

In 2020, Robert Williams was handcuffed on his front lawn in Detroit, in front of his wife and two daughters, because a convolutional neural network matched his driver’s license photo to a shoplifting suspect. The match was wrong. Williams was held in custody before anyone questioned the algorithm. His case culminated in a landmark settlement in June 2024 that required changes to the Detroit Police Department’s facial recognition policies (ACLU). He was not the last. At least seven documented wrongful arrests in the United States have been tied to facial recognition, and six of them involved Black individuals (Innocence Project). The actual number is almost certainly higher. This is not a story about a technology that needs refinement. It is a story about what happens when a statistical approximation is treated as testimony and given authority over human bodies.

The Promise That Benchmarks Sell

The numbers look reassuring on paper. Systems built on architectures like FaceNet and VGG16 exceed 98% accuracy on the Labeled Faces in the Wild (LFW) benchmark. That number travels well, into vendor pitches, police procurement documents, and political arguments for public safety. What it conceals is the composition of LFW, the dataset against which these scores are measured: 83.5% of its faces are white (Harvard JOLT).

Accuracy, it turns out, is not a property of the system. It is a property of the system for a particular population. And the populations most likely to encounter facial recognition in policing are the populations least represented in the data that shaped the system’s understanding of what a face is.
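The arithmetic behind that claim is simple. A headline accuracy figure is a weighted average of per-group accuracies, where each group is weighted by its share of the test set. The sketch below uses the 83.5% composition figure cited above. The per-group accuracies are hypothetical values, chosen only to show how a strong aggregate can coexist with a much weaker subgroup result. The function name is illustrative.

```python
def aggregate_accuracy(group_shares, group_accuracies):
    """Overall accuracy as a share-weighted average of per-group accuracies."""
    # The shares must describe the whole test set.
    if abs(sum(group_shares.values()) - 1.0) > 1e-9:
        raise ValueError("group shares must sum to 1")
    return sum(group_shares[g] * group_accuracies[g] for g in group_shares)


# Composition share from the text; per-group accuracies are HYPOTHETICAL.
shares = {"white": 0.835, "non_white": 0.165}
accuracies = {"white": 0.995, "non_white": 0.91}

overall = aggregate_accuracy(shares, accuracies)
print(f"overall accuracy: {overall:.4f}")
for group, acc in accuracies.items():
    print(f"{group:>10}: {acc:.3f}")
```

The larger group's share dominates the weighted sum. As a result, the headline figure clears 98% even though the hypothetical minority group is wrong roughly one time in eleven.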

The feature maps a convolutional neural network learns during training encode patterns from the data it was given. When that data overwhelmingly represents lighter skin tones, the network’s learned representations become sharper for those tones and coarser for everything else. In the 2018 Gender Shades study, Joy Buolamwini and Timnit Gebru found that commercial gender-classification systems had error rates of up to 34.7% for darker-skinned females, compared to 0.8% for lighter-skinned males (Buolamwini & Gebru). A 2019 NIST evaluation confirmed the pattern at scale: many of the algorithms it tested were 10 to 100 times more likely to misidentify Black or East Asian faces than white faces (NIST). Some vendors have improved since that report, but demographic gaps persist in ongoing evaluations. The disparity narrowed; it did not disappear.
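The methodological lesson of Gender Shades is disaggregation: report error rates for each subgroup rather than one pooled figure. Below is a minimal sketch of that audit step. It assumes evaluation results are available as (group, was_correct) pairs. The function names and toy records are hypothetical. The two rates in `reported` are the study figures cited above.

```python
from collections import defaultdict


def error_rates_by_group(records):
    """Per-group error rate from (group, was_correct) pairs."""
    totals = defaultdict(int)
    errors = defaultdict(int)
    for group, was_correct in records:
        totals[group] += 1
        if not was_correct:
            errors[group] += 1
    return {g: errors[g] / totals[g] for g in totals}


def disparity_ratio(rates):
    """Worst-group error rate divided by best-group error rate."""
    best = min(rates.values())
    if best == 0:
        return float("inf")  # a perfect group makes any error infinitely worse
    return max(rates.values()) / best


# Toy, HYPOTHETICAL audit log: group "a" has 1 error in 100, group "b" 1 in 10.
toy = [("a", True)] * 99 + [("a", False)] + [("b", True)] * 9 + [("b", False)]
print(error_rates_by_group(toy))

# Worst and best subgroup error rates reported in Gender Shades.
reported = {"darker_female": 0.347, "lighter_male": 0.008}
print(f"disparity ratio: {disparity_ratio(reported):.1f}x")
```

With the reported figures, the worst subgroup's error rate is roughly 43 times the best subgroup's. A single pooled accuracy number hides this gap entirely.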

The Comfortable Belief in a Technical Fix

The conventional response is reasonable, even well-intentioned. Build better datasets. Balance the training data. Apply batch normalization and debiasing layers. Use residual-connection architectures that preserve fine-grained information through deeper networks. The argument is familiar: bias is a data problem, and data problems have data solutions.

There is truth here. Research into debiasing techniques exists, and some of it shows genuine promise. But the assumption underneath it, that training data can be cleaned until fairness falls out the other end, contains a structural blind spot. The IJB-A dataset, another standard benchmark for facial recognition, is 79.6% lighter-skinned faces (Harvard JOLT). This is not an accident. It reflects who gets photographed, catalogued, and made available at internet scale. It reflects whose faces exist in scrapable databases and whose privacy norms restrict collection. Balancing a dataset does not balance the power structures that produced it.

That distinction matters, because it determines where we look for the solution — and how long we are willing to wait for one.
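In practice, "balancing the training data" often means reweighting. Each example is weighted inversely to its group's frequency, so that under-represented groups contribute as much to the training loss as the majority. Below is a minimal sketch. It assumes every image carries a group label, which is itself a strong assumption. The counts scale the IJB-A proportion cited above to a hypothetical 1,000 images, and the function name is illustrative.

```python
def inverse_frequency_weights(group_counts):
    """Per-sample weight for each group so every group contributes equally."""
    total = sum(group_counts.values())
    n_groups = len(group_counts)
    # weight = total / (n_groups * count); each group's weights then sum to total / n_groups.
    return {g: total / (n_groups * c) for g, c in group_counts.items()}


# Split from the IJB-A figure above, scaled to 1,000 HYPOTHETICAL images.
counts = {"lighter": 796, "darker": 204}
weights = inverse_frequency_weights(counts)
for group, w in weights.items():
    share = w * counts[group] / sum(counts.values())
    print(f"{group:>8}: weight {w:.3f}, effective share {share:.2f}")
```

Reweighting equalizes each group's total contribution to the loss. It cannot add information the minority-group images never contained: 204 images weighted about 2.45 times are still 204 images.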

The Architecture of Selective Failure

Here is the assumption that deserves closer examination: the problem is technical, therefore the solution must be technical. But the failure pattern tells a different story. CNN-powered facial recognition does not fail randomly. It fails along demographic lines that mirror existing social hierarchies — darker skin, female gender, younger age. These are the axes along which the system’s confidence collapses.

When a technology’s failure modes reproduce the exact patterns of historical discrimination, calling it a data problem is an understatement. It is a design problem, a procurement problem, and a governance problem. An introductory course on neural networks teaches you how convolutions extract spatial features from pixel grids. What it does not teach is what happens when those features are extracted from a world that was never photographed equally.
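The convolution step referred to above is small. A kernel slides across a pixel grid and records a weighted sum at each position. The result is a feature map whose values are large wherever the local pattern matches the kernel. Below is a minimal pure-Python sketch using a hand-picked vertical-edge kernel and a toy image, both hypothetical. In a trained CNN, the kernel values are learned from the training data, which is where the composition of that data enters.

```python
def conv2d_valid(image, kernel):
    """Slide kernel over image (no padding, stride 1) and return the feature map.

    Like most deep learning libraries, this computes cross-correlation
    (the kernel is not flipped).
    """
    kh, kw = len(kernel), len(kernel[0])
    out_h = len(image) - kh + 1
    out_w = len(image[0]) - kw + 1
    feature_map = []
    for i in range(out_h):
        row = []
        for j in range(out_w):
            # Weighted sum of the kernel-sized patch whose top-left corner is (i, j).
            total = 0.0
            for di in range(kh):
                for dj in range(kw):
                    total += image[i + di][j + dj] * kernel[di][dj]
            row.append(total)
        feature_map.append(row)
    return feature_map


# HYPOTHETICAL 4x4 grayscale patch: dark left half, bright right half.
image = [
    [0, 0, 9, 9],
    [0, 0, 9, 9],
    [0, 0, 9, 9],
    [0, 0, 9, 9],
]
# Hand-written vertical-edge kernel; a trained CNN learns its kernels from data.
kernel = [
    [-1, 1],
    [-1, 1],
]
for row in conv2d_valid(image, kernel):
    print(row)
```

The only strong response is at the dark-to-bright boundary, in the middle column of the 3x3 output. Nothing in the arithmetic itself favors any group. What a learned kernel responds to is set by the images it was fit to.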

Porcha Woodruff was eight months pregnant when Detroit police arrested her in February 2023 for a carjacking she did not commit. The charges were dismissed for insufficient evidence. The algorithm, however, had sufficient confidence. The system that ran the algorithm had sufficient authority. What was insufficient was any mechanism to ask whether the match made sense — whether a pregnant woman fit the physical description, whether a confidence score warranted handcuffs, whether the tool should have been used at all.
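Part of the reason a match needs that scrutiny is arithmetic. Investigative searches like these compare one probe image against a large gallery of stored photos, a one-to-many (1:N) search. In that setting, even a small per-comparison false match rate compounds with gallery size. A demographic multiplier in the 10-to-100x range reported by NIST compounds it further. Below is a rough sketch. It assumes independent comparisons and one fixed threshold. The gallery size and base false match rate are hypothetical, and only the 10x and 100x multipliers come from the NIST range cited earlier.

```python
import math


def prob_any_false_match(fmr, gallery_size):
    """P(at least one non-mate scores above threshold) in a 1:N search.

    Assumes comparisons are independent and share the same per-comparison
    false match rate (fmr); this is a simplification, not a property of real galleries.
    """
    # 1 - (1 - fmr)**N, computed stably for tiny fmr and large N.
    return -math.expm1(gallery_size * math.log1p(-fmr))


gallery = 1_000_000  # HYPOTHETICAL gallery size
base_fmr = 1e-7      # HYPOTHETICAL per-comparison false match rate
for multiplier in (1, 10, 100):  # NIST reported 10-100x demographic differences
    p = prob_any_false_match(base_fmr * multiplier, gallery)
    print(f"FMR x{multiplier:>3}: P(at least one false candidate) = {p:.3f}")
```

Under these assumptions, the chance of at least one false candidate rises from about 10% to about 63% to near certainty as the multiplier grows. A top-ranked result from a large gallery is therefore a lead to investigate, not an identification.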

When Infrastructure Becomes Ideology

We have a long history of embedding ideology into infrastructure and calling it neutral. Redlining was a mapping technique. Literacy tests were an assessment method. The tools were always described in technical language, and the harm was always legible only to those who suffered it.

CNN-powered surveillance follows this pattern with uncomfortable precision. Clearview AI assembled a database of over 20 billion images scraped from the internet and social media. Despite a $51.75 million settlement in 2025 — which included a permanent injunction barring private-entity access — the company continues to operate for law enforcement. A court approved its web-scraping practices in March 2025, and an April 2026 hearing revealed that ATF used Clearview for 549 searches with no oversight mechanism in place.

The ethical risks of CNN-powered facial recognition and mass surveillance are not edge cases. They are the predictable consequences of systems built without the question: who does this harm?

The Inequality Is the Architecture

Surveillance systems built on biased training data do not have a bias problem — they have a legitimacy problem. No amount of technical refinement resolves the fundamental question: by what right does an unaudited algorithm determine who gets stopped, detained, or arrested? The disparity is not a temporary deficiency awaiting the next model version. It is the structural signature of a system that was never designed to account for the people it most affects.

The EU AI Act recognizes a version of this argument. Since February 2, 2025, its prohibitions have applied to untargeted facial image scraping, emotion recognition in workplaces and schools, and, outside narrowly defined exceptions, real-time remote biometric identification by law enforcement in publicly accessible spaces. Violations carry penalties of up to EUR 35 million or 7% of global annual turnover. Most of the Act’s remaining obligations, including those for other high-risk biometric systems, apply from August 2, 2026 (EU Digital Strategy). In the United States, the response has been fragmented. San Francisco became the first major U.S. city to ban police use of facial recognition in 2019, and roughly fifteen states had enacted some form of restriction by the end of 2024, though enforcement remains contested.

Regulation is a start. But regulation that addresses where these systems operate without addressing how biased training data causes convolutional neural networks to discriminate across race and gender in the first place — that regulation treats the symptom while the mechanism stays intact.

The Questions That Follow the Arrest

This is not a call to ban convolutional neural networks. The architecture itself is morally inert — a set of mathematical operations that detect spatial patterns. The question is not whether the tool works. The question is what we permit it to decide, under what conditions, with what oversight, and with what recourse for those it harms.

If a system cannot demonstrate equitable performance across demographic groups, should it be permitted to operate in contexts where its errors carry criminal consequences? If a vendor cannot disclose what their training data contains, should a police department be authorized to purchase their product? If an individual is arrested on the basis of an algorithmic match, should they have the right to examine the model’s training data, its error rates for their demographic, and the confidence threshold that triggered the arrest?

Where This Argument Breaks

Intellectual honesty demands acknowledging the conditions under which this position weakens. If debiasing techniques advance to the point where demographic disparities in facial recognition become statistically negligible — and if those techniques are adopted not just in research labs but in operational law enforcement systems — then the legitimacy argument loses much of its force. A system that performs equitably across populations is harder to challenge on discriminatory grounds, even if surveillance itself remains a contested practice.

The gap between research and operational reality, however, suggests that day is not close.

The Question That Remains

We built systems that see faces but not people. We trained them on a world that was photographed unequally and expected them to judge equally. The question is not whether we can fix the algorithm. It is whether we will demand the fix arrive before — not after — the next person is told to put their hands behind their back on the word of a machine that never learned their face.

Disclaimer

This article is for educational purposes only and does not constitute professional advice. Consult qualified professionals for decisions in your specific situation.

AI-assisted content, human-reviewed. Images AI-generated.