Glitch Tokens, Fertility Gaps, and the Unsolved Technical Limits of Subword Tokenization

BPE tokenizers produce glitch tokens and penalize non-Latin scripts with fertility gaps. Learn where the math breaks — and what is emerging to fix it.

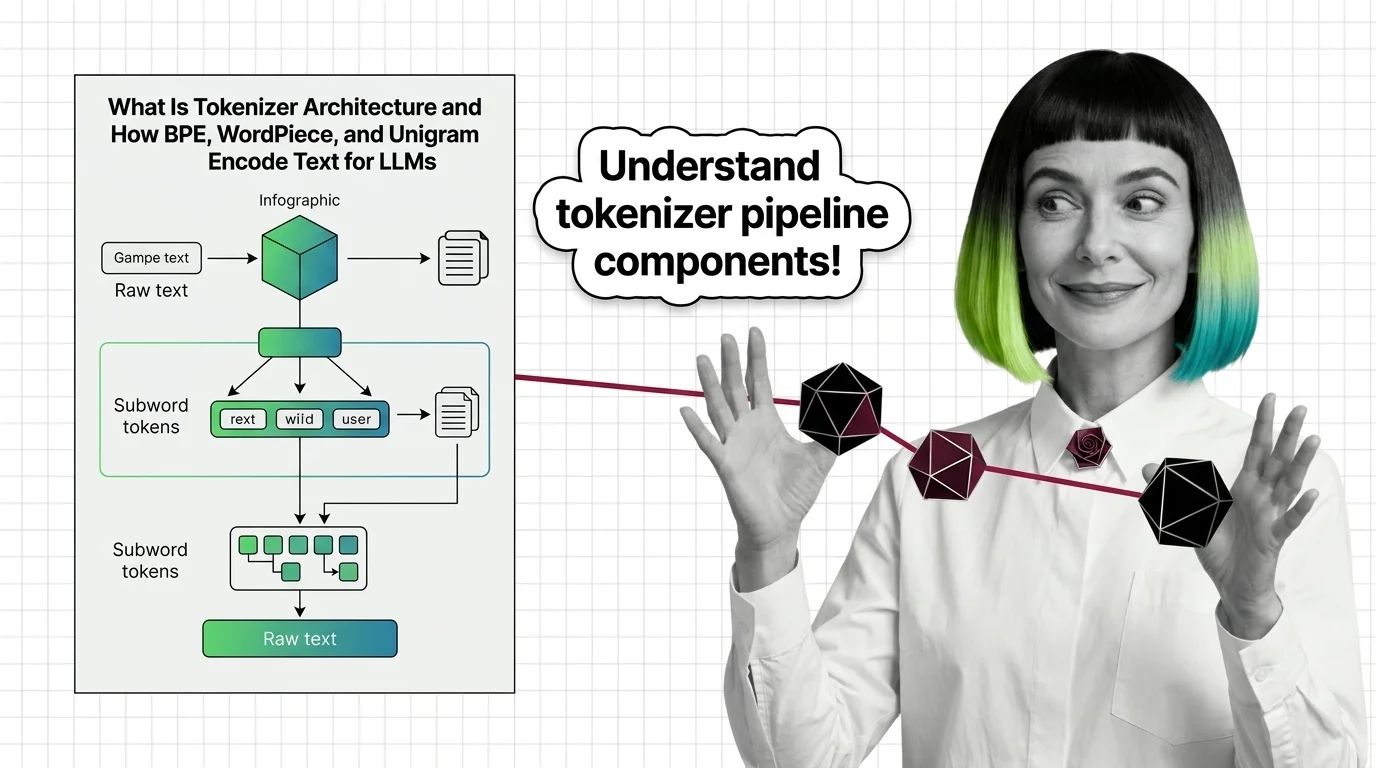

Tokenizer architecture is the subsystem that converts raw text into the numeric token IDs a language model can process.

It determines how words, subwords, and characters map to a fixed vocabulary, using algorithms such as BPE, WordPiece, or Unigram, often through toolkits like SentencePiece. These design choices shape vocabulary size, multilingual performance, inference cost, and downstream model quality. Related terms: BPE, Byte-Pair Encoding, SentencePiece.
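To make the mapping from text to a fixed vocabulary concrete, here is a minimal sketch of how BPE-style merges can be learned and applied. It is a toy illustration under simplifying assumptions (character-level start symbols, no byte fallback, no pre-tokenization rules), not the implementation used by any particular library. The function names `learn_bpe_merges`, `apply_merge`, and `encode` are hypothetical.

```python
from collections import Counter


def apply_merge(symbols, pair):
    """Fuse every adjacent occurrence of `pair` in a tuple of symbols."""
    out, i = [], 0
    while i < len(symbols):
        if i + 1 < len(symbols) and (symbols[i], symbols[i + 1]) == pair:
            out.append(symbols[i] + symbols[i + 1])
            i += 2
        else:
            out.append(symbols[i])
            i += 1
    return tuple(out)


def learn_bpe_merges(words, num_merges):
    """Learn up to `num_merges` merge rules from a list of words (toy sketch)."""
    # Each word starts as a tuple of single characters.
    corpus = Counter(tuple(w) for w in words)
    merges = []
    for _ in range(num_merges):
        # Count adjacent symbol pairs, weighted by word frequency.
        pairs = Counter()
        for symbols, freq in corpus.items():
            for a, b in zip(symbols, symbols[1:]):
                pairs[(a, b)] += freq
        if not pairs:
            break
        # Pick the most frequent pair; ties break on the pair itself
        # so the result is deterministic (an assumption of this sketch).
        best = max(pairs, key=lambda p: (pairs[p], p))
        merges.append(best)
        # Rewrite the corpus with the chosen pair fused into one symbol.
        new_corpus = Counter()
        for symbols, freq in corpus.items():
            new_corpus[apply_merge(symbols, best)] += freq
        corpus = new_corpus
    return merges


def encode(word, merges):
    """Split a word into subword symbols by replaying merges in learned order."""
    symbols = tuple(word)
    for pair in merges:
        symbols = apply_merge(symbols, pair)
    return list(symbols)
```

For example, training on `["low", "low", "lower", "lowest"]` with two merges and then calling `encode("lowest", merges)` shows a frequent stem collapsing into one symbol while the rarer suffix stays split into characters. Production tokenizers add byte-level fallback, pre-tokenization, and special tokens on top of this core loop.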

What this topic covers

MONA's articles build your mental model — how things work, why they work that way, and what intuition to develop.

Concepts covered

Tokenizer architecture determines how LLMs read text. Learn how BPE, WordPiece, and Unigram split text into subword tokens before attention ever fires.

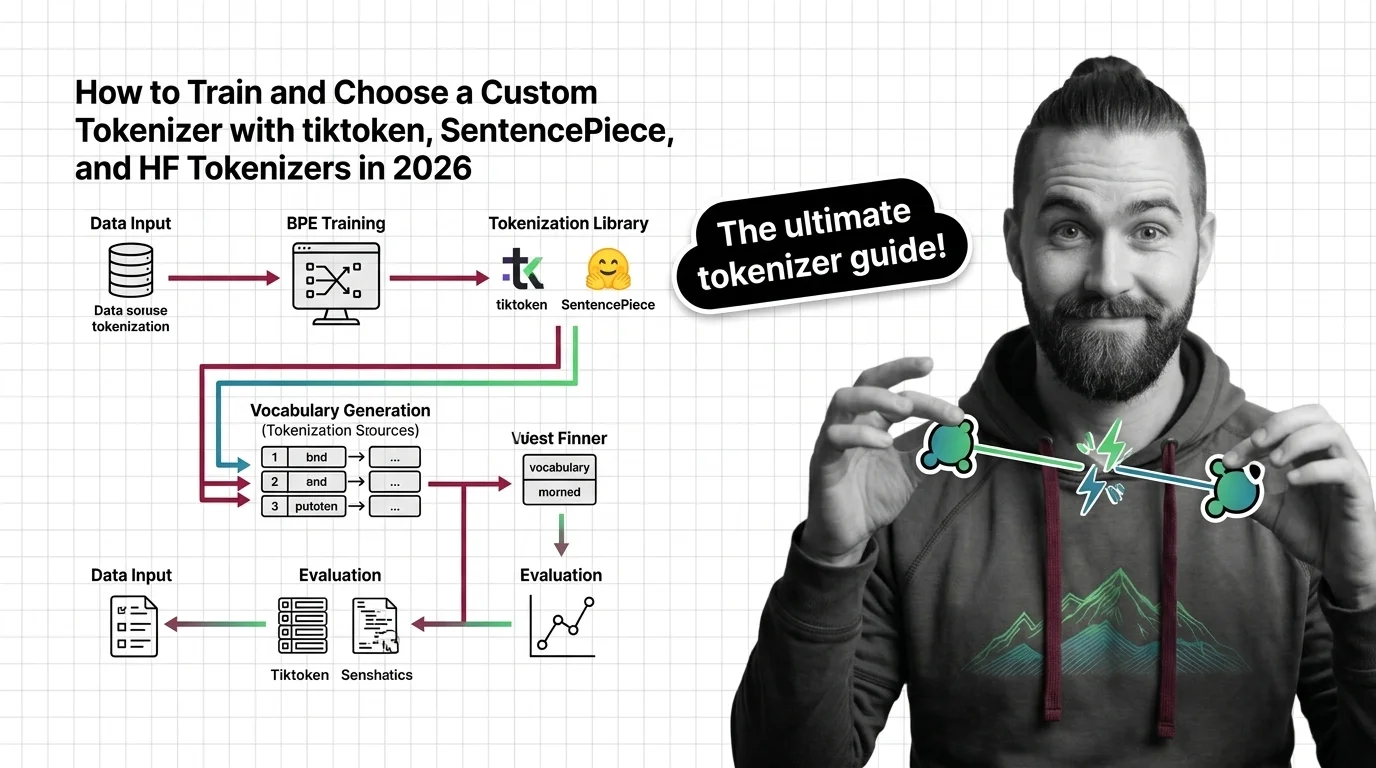

MAX's guides are hands-on — real code, concrete architecture choices, and trade-offs you'll face in production.

Tools & techniques

Learn how to choose, train, and validate a custom tokenizer using tiktoken, SentencePiece, and HF Tokenizers with a spec-first framework for 2026 LLM projects.

DAN tracks how this domain is evolving — which models, techniques, and benchmarks are reshaping 2026.

Models & benchmarks

Updated March 2026

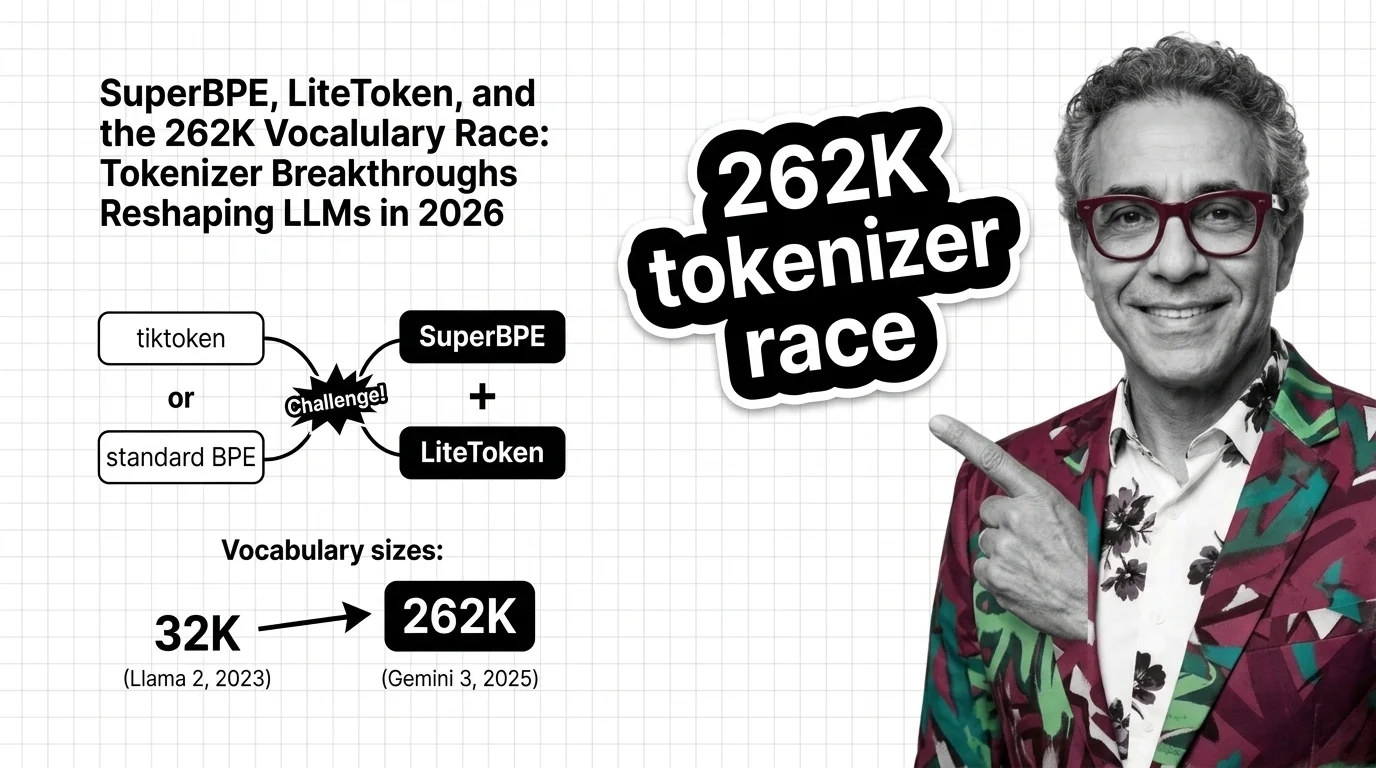

Tokenization is the overlooked frontier. SuperBPE, LiteToken, and 262K-entry vocabularies are exposing gains in inference cost and reshaping how LLMs compete.

ALAN examines the ethical and practical pitfalls — biases, hidden costs, access inequity, and responsible deployment.

Risks & metrics

Tokenizer bias means non-English speakers pay more for the same text, because their languages split into more tokens and APIs bill per token. Explore why this structural disparity exists and who bears responsibility for fixing it.
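A rough way to see where the disparity comes from is to compare UTF-8 byte counts, which is the token count a byte-level tokenizer would produce before any merges apply. The sketch below is an illustration only: real tokenizers merge frequent byte sequences, so actual token counts are lower and vary by vocabulary. The helper names `utf8_bytes_per_char` and `relative_byte_cost` are hypothetical.

```python
def utf8_bytes_per_char(text):
    """Average UTF-8 bytes per Unicode code point in `text`."""
    return len(text.encode("utf-8")) / len(text)


def relative_byte_cost(text, baseline):
    """How many times more bytes `text` needs than `baseline`.

    With per-token billing and no learned merges, this ratio is the
    cost multiplier (an assumption of this sketch, not a measured price).
    """
    return len(text.encode("utf-8")) / len(baseline.encode("utf-8"))


english = "hello"
# Hindi greeting "namaste", written with escapes so the six code points are explicit.
hindi = "\u0928\u092e\u0938\u094d\u0924\u0947"

print(utf8_bytes_per_char(english))         # 1 byte per character for ASCII
print(utf8_bytes_per_char(hindi))           # 3 bytes per character for Devanagari
print(relative_byte_cost(hindi, english))   # byte-level cost multiplier
```

A vocabulary trained mostly on English text learns many merges for Latin-script sequences and few for Devanagari, so much of this raw byte gap survives tokenization and shows up as a higher bill for the same greeting.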