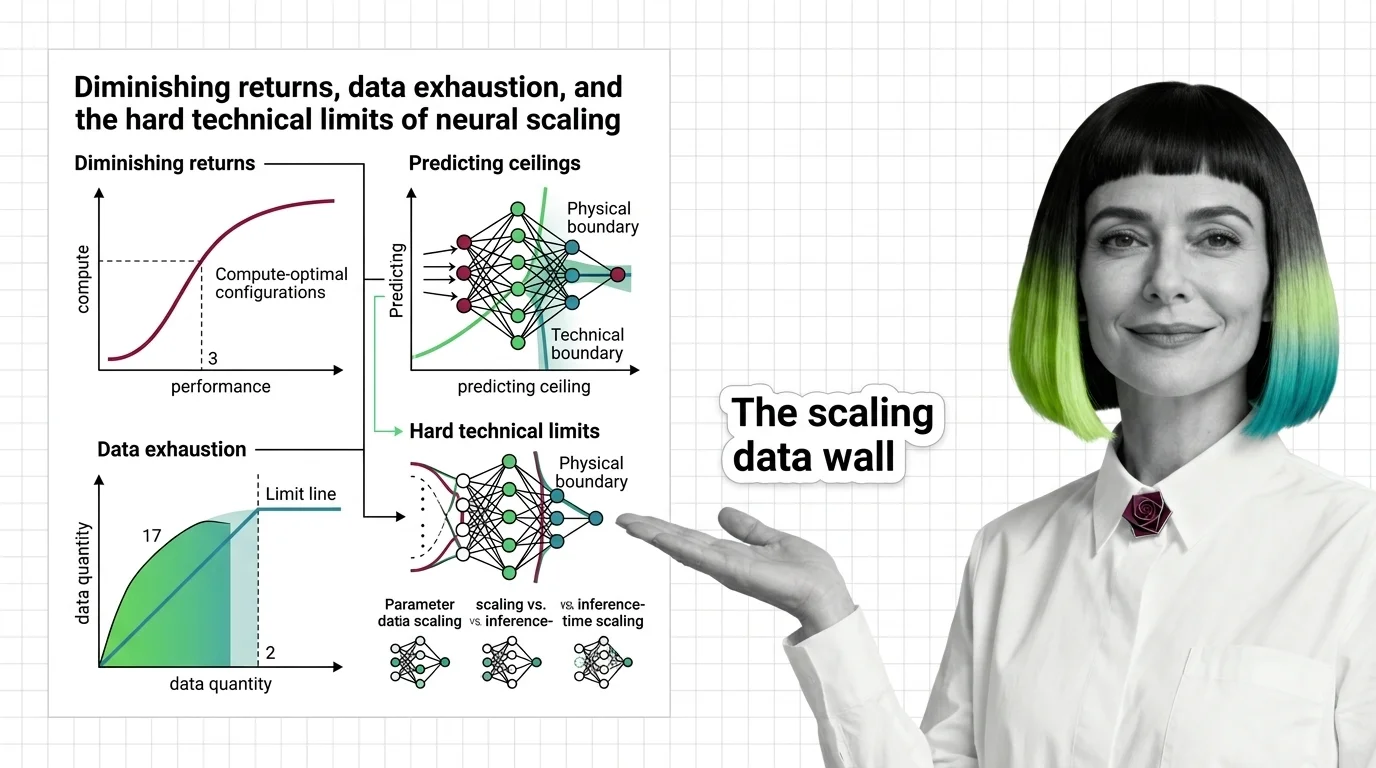

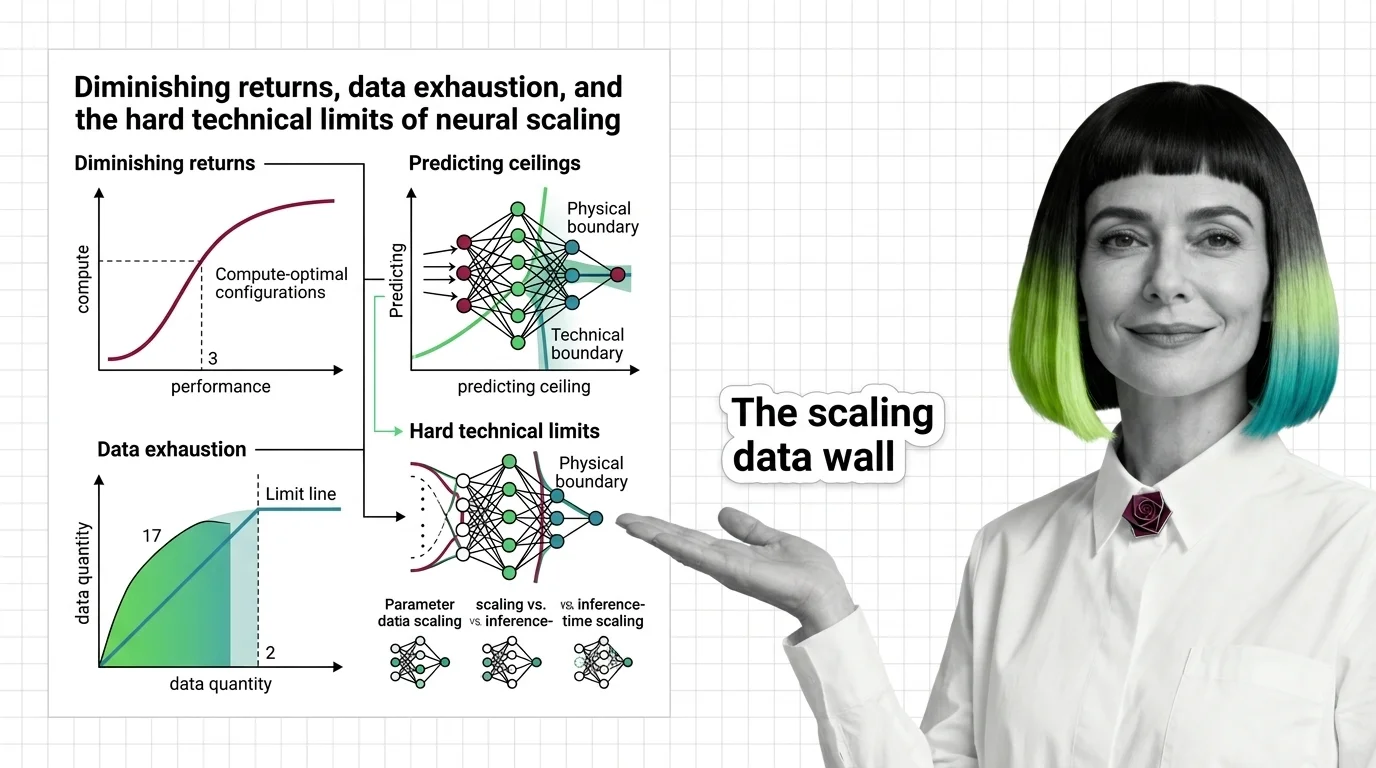

Diminishing Returns, Data Exhaustion, and the Hard Technical Limits of Neural Scaling

Scaling laws predict how AI models improve with compute, but power-law exponents guarantee diminishing returns. Learn where the ceilings are — and why.

Scaling laws are empirical relationships that predict how large language model performance changes as you increase model size, training data, or compute budget.

These power-law curves, most notably the Chinchilla scaling results, reveal predictable trade-offs between parameters, tokens, and FLOPs. They guide decisions about how to allocate resources during training and help explain why some capabilities emerge only at sufficient scale.

Also known as: LLM Scaling Laws
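To make the parameter, token, and FLOP trade-off concrete, here is a minimal Python sketch of a Chinchilla-style loss curve. It is an illustration under stated assumptions, not a definitive implementation: the constants are approximately the parametric fit reported in the Chinchilla paper (Hoffmann et al., 2022), the `6 * N * D` FLOP estimate and the 20-tokens-per-parameter ratio are common rules of thumb, and the function names are hypothetical.

```python
import math

# Assumed constants: approximately the parametric fit reported for Chinchilla
# (Hoffmann et al., 2022). Illustrative only; other fits give other values.
E = 1.69                   # irreducible loss floor
A, ALPHA = 406.4, 0.34     # model-size term
B, BETA = 410.7, 0.28      # data-size term


def predicted_loss(n_params: float, n_tokens: float) -> float:
    """Power-law loss estimate: L(N, D) = E + A / N**alpha + B / D**beta."""
    return E + A / n_params**ALPHA + B / n_tokens**BETA


def training_flops(n_params: float, n_tokens: float) -> float:
    """Rule-of-thumb training cost: about 6 FLOPs per parameter per token."""
    return 6.0 * n_params * n_tokens


def compute_optimal_split(flops: float, tokens_per_param: float = 20.0):
    """Split a FLOP budget assuming D = tokens_per_param * N and C = 6 * N * D.

    The default ratio of 20 is a heuristic, not a universal constant.
    """
    n_params = math.sqrt(flops / (6.0 * tokens_per_param))
    return n_params, tokens_per_param * n_params


if __name__ == "__main__":
    budget = training_flops(70e9, 1.4e12)  # hypothetical budget
    n, d = compute_optimal_split(budget)
    print(f"params={n:.3g} tokens={d:.3g} loss={predicted_loss(n, d):.3f}")

    # Each doubling of both model size and data buys a smaller loss reduction.
    for scale in (1, 2, 4, 8):
        print(scale, round(predicted_loss(n * scale, d * scale), 4))
```

Because both reducible terms are power laws with exponents well below 1, the printed loss keeps falling as scale doubles but by a shrinking amount each time, and it never drops below `E`. That is the diminishing-returns behavior the curves describe.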

What this topic covers


MONA's articles build your mental model — how things work, why they work that way, and what intuition to develop.

Concepts covered

Scaling laws predict how AI models improve with compute, but power-law exponents guarantee diminishing returns. Learn where the ceilings are — and why.

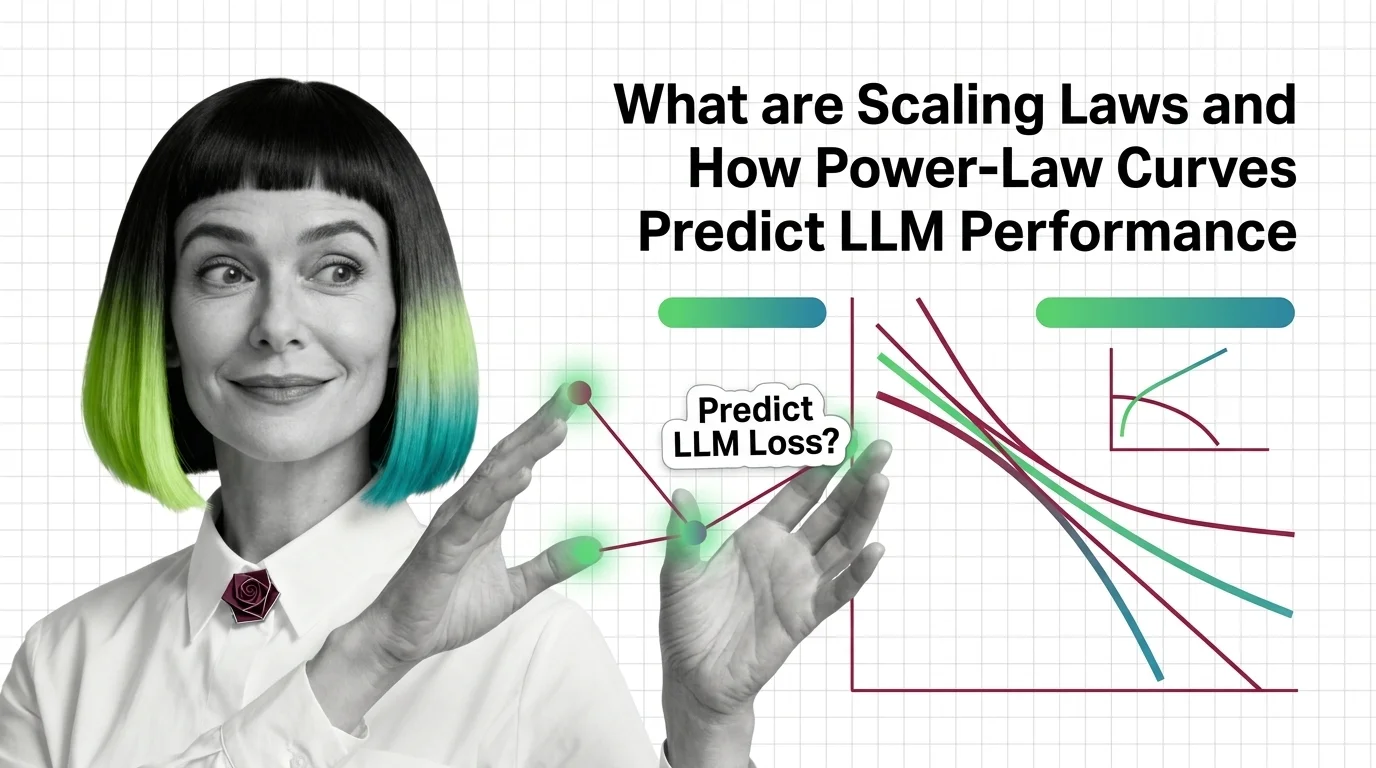

Scaling laws predict LLM performance from model size, data, and compute via power-law curves. Learn the math behind Kaplan, Chinchilla, and Densing Law.

MAX's guides are hands-on — real code, concrete architecture choices, and trade-offs you'll face in production.

Tools & techniques

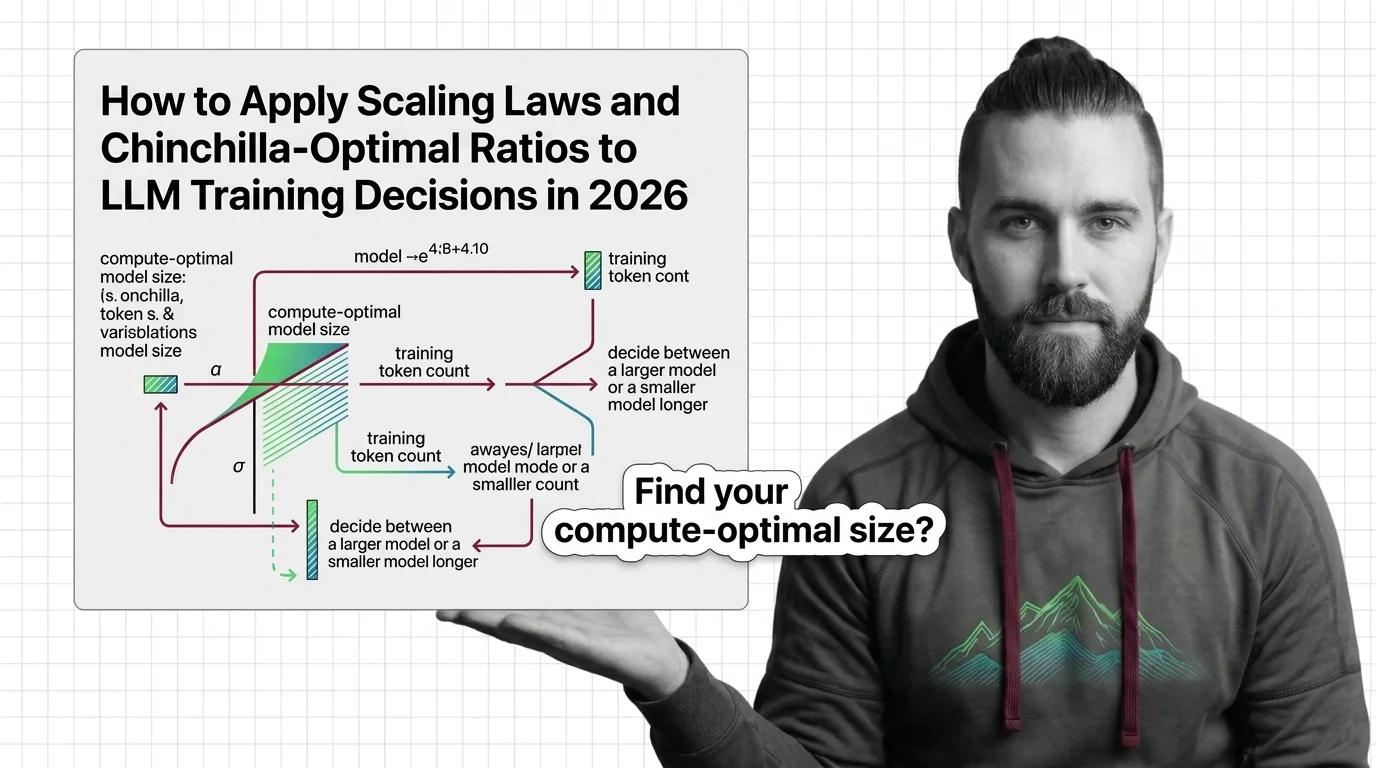

Apply scaling laws and Chinchilla-optimal ratios to real LLM training decisions. Compute budgeting, model sizing, and inference-aware trade-offs for 2026.

DAN tracks how this domain is evolving — which models, techniques, and benchmarks are reshaping 2026.

Models & benchmarks

Updated March 2026

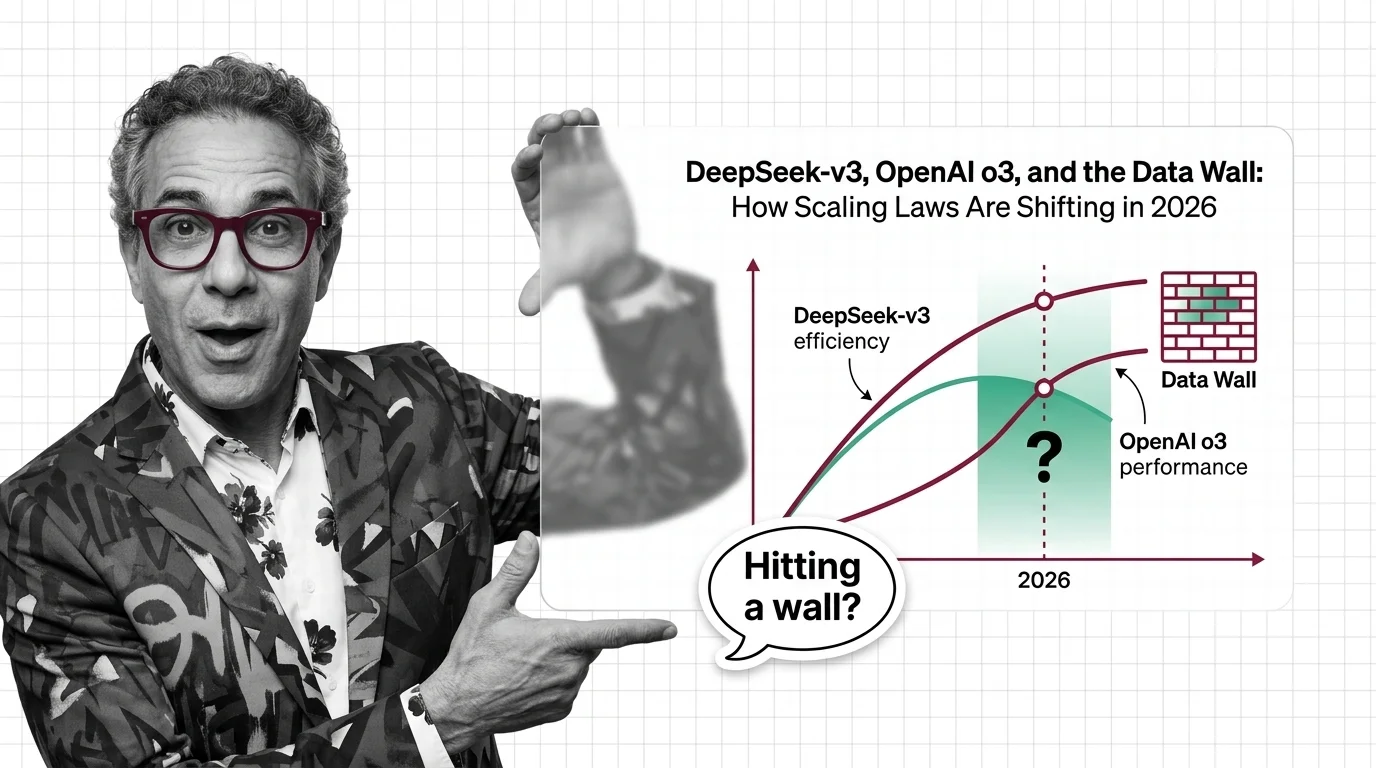

Scaling laws split in 2025 along three axes: DeepSeek demonstrated training efficiency, o3 demonstrated inference-time compute scaling, and the data wall is forcing a post-Chinchilla rethink.

ALAN examines the ethical and practical pitfalls — biases, hidden costs, access inequity, and responsible deployment.

Risks & metrics

Scaling laws promise better AI through more compute, but the energy, water, and capital costs concentrate power among the few. Who pays the scaling tax?