Scaling Laws

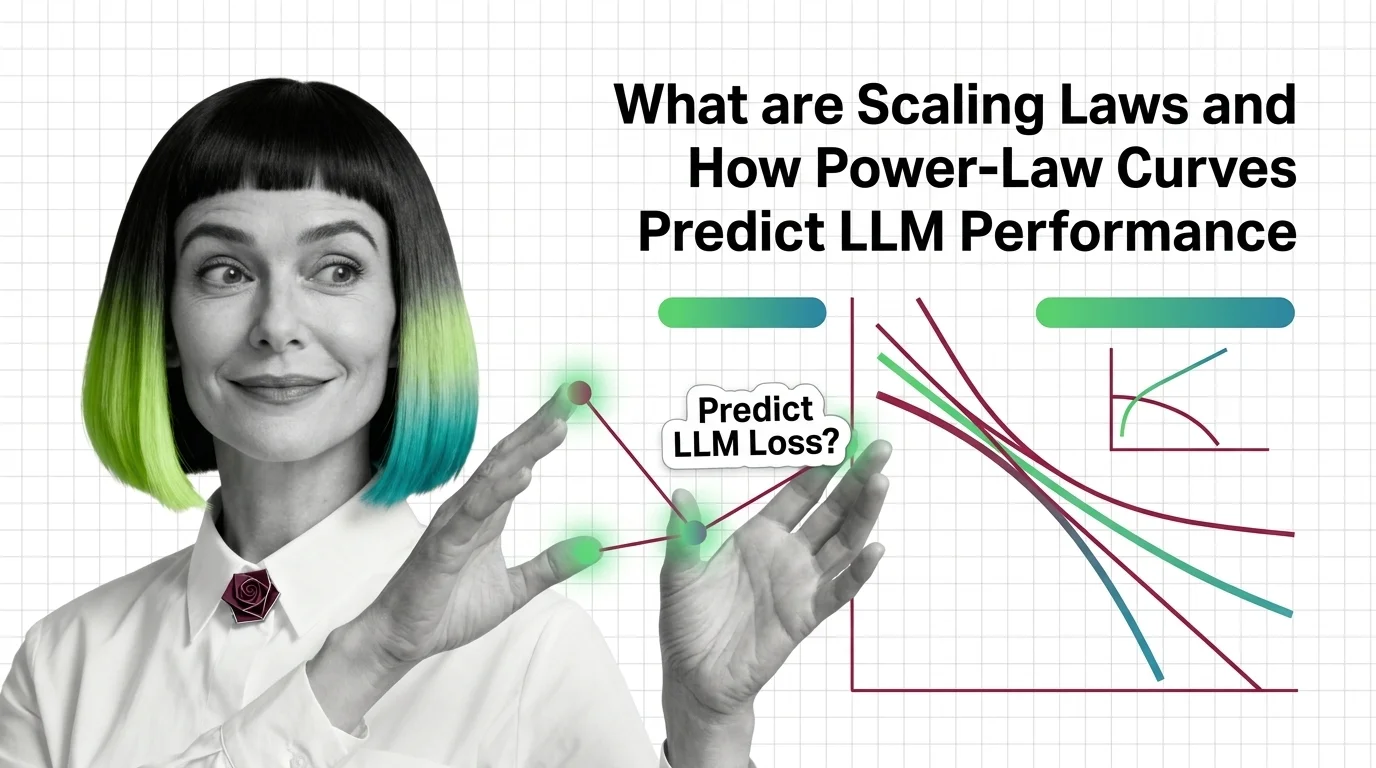

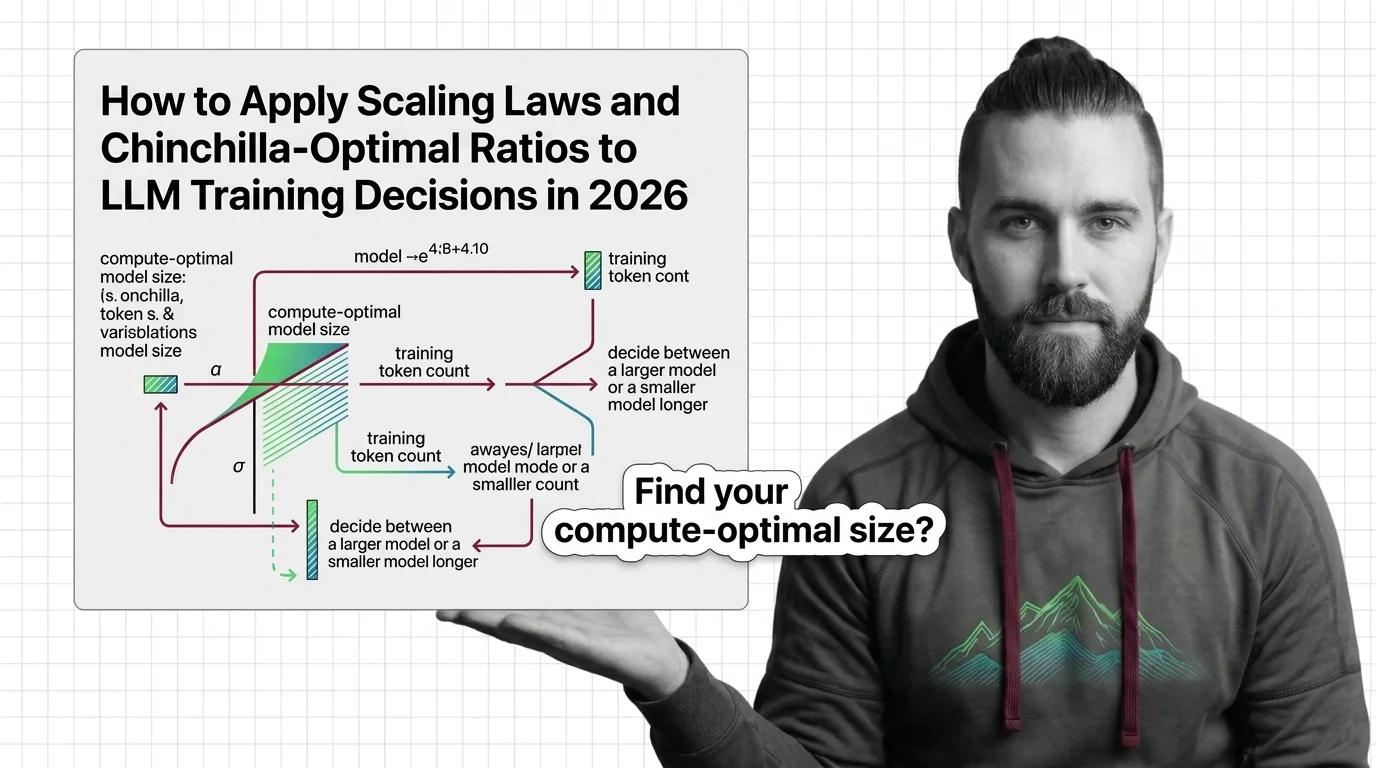

Scaling laws are empirical relationships that predict how large language model performance changes as you increase model size, training data, or compute budget. These power-law curves, most notably the Chinchilla scaling results, reveal predictable trade-offs between parameters, tokens, and FLOPs. They guide decisions about how to allocate resources during training and help explain why some capabilities emerge only at sufficient scale. Also known as: LLM Scaling Laws

Understand the Fundamentals

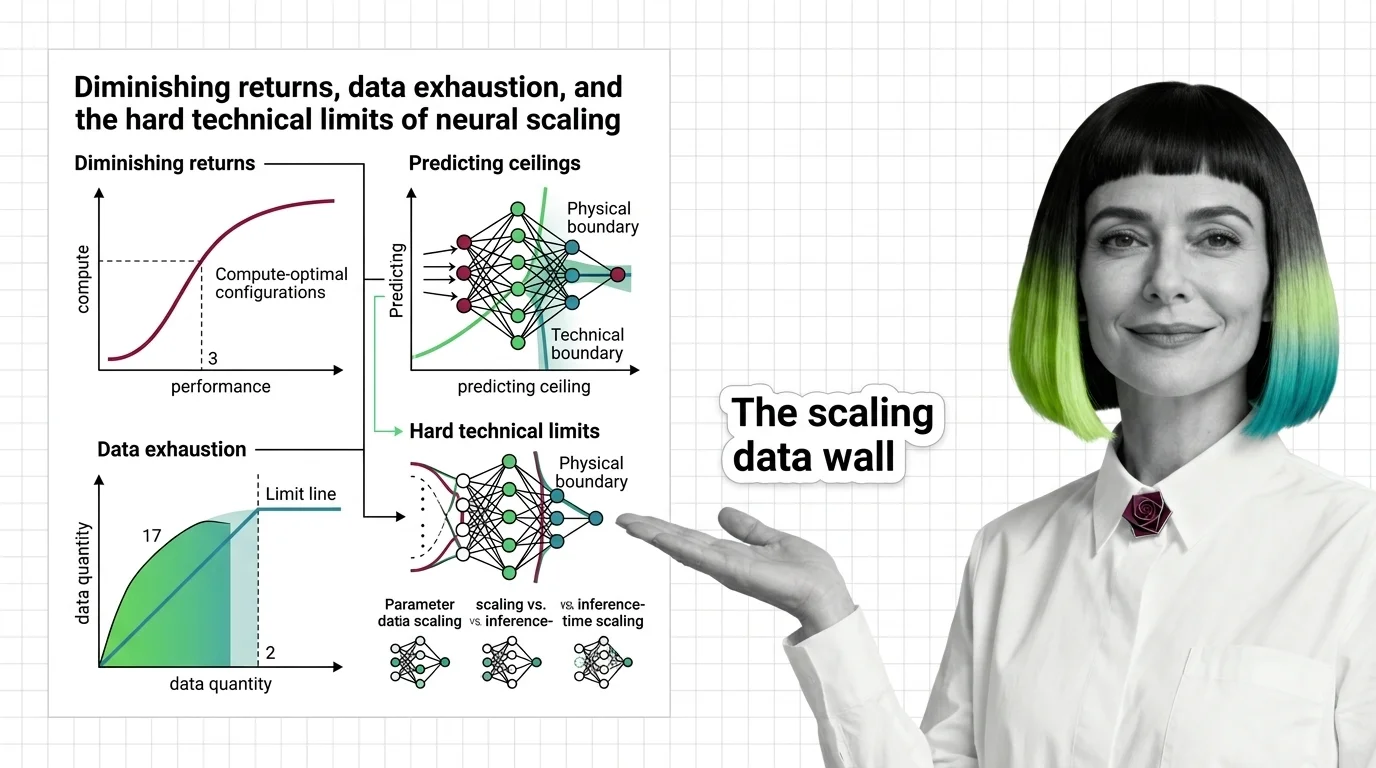

Scaling laws reveal surprisingly predictable patterns in how neural networks improve with size. Understanding these power-law relationships explains why certain capabilities appear only beyond specific thresholds.

Build with Scaling Laws

Practical guides cover how to use scaling curves for compute-optimal training decisions and where standard predictions break down in real-world resource allocation.

What's Changing in 2026

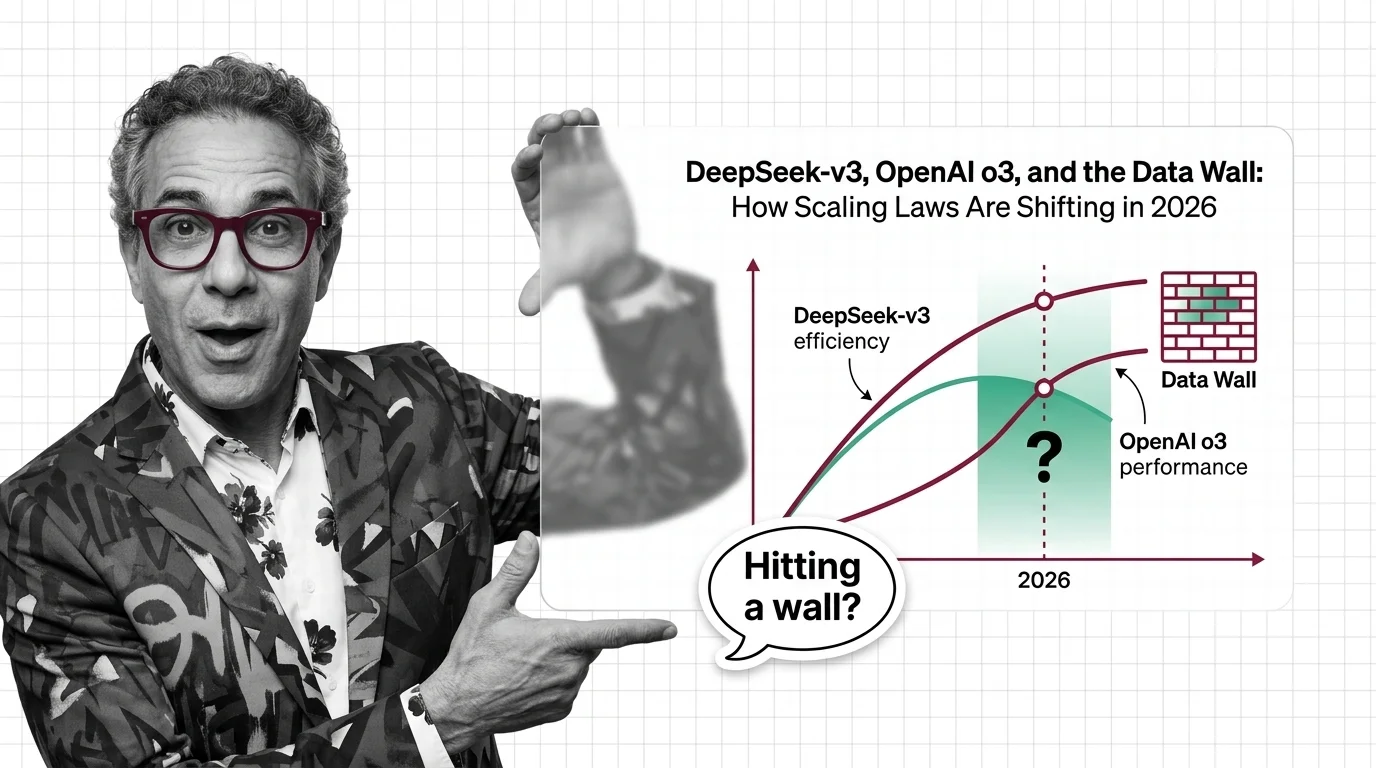

The relationship between scale and capability is actively shifting as new training paradigms challenge established assumptions. Staying current prevents costly misallocation of resources.

Updated March 2026

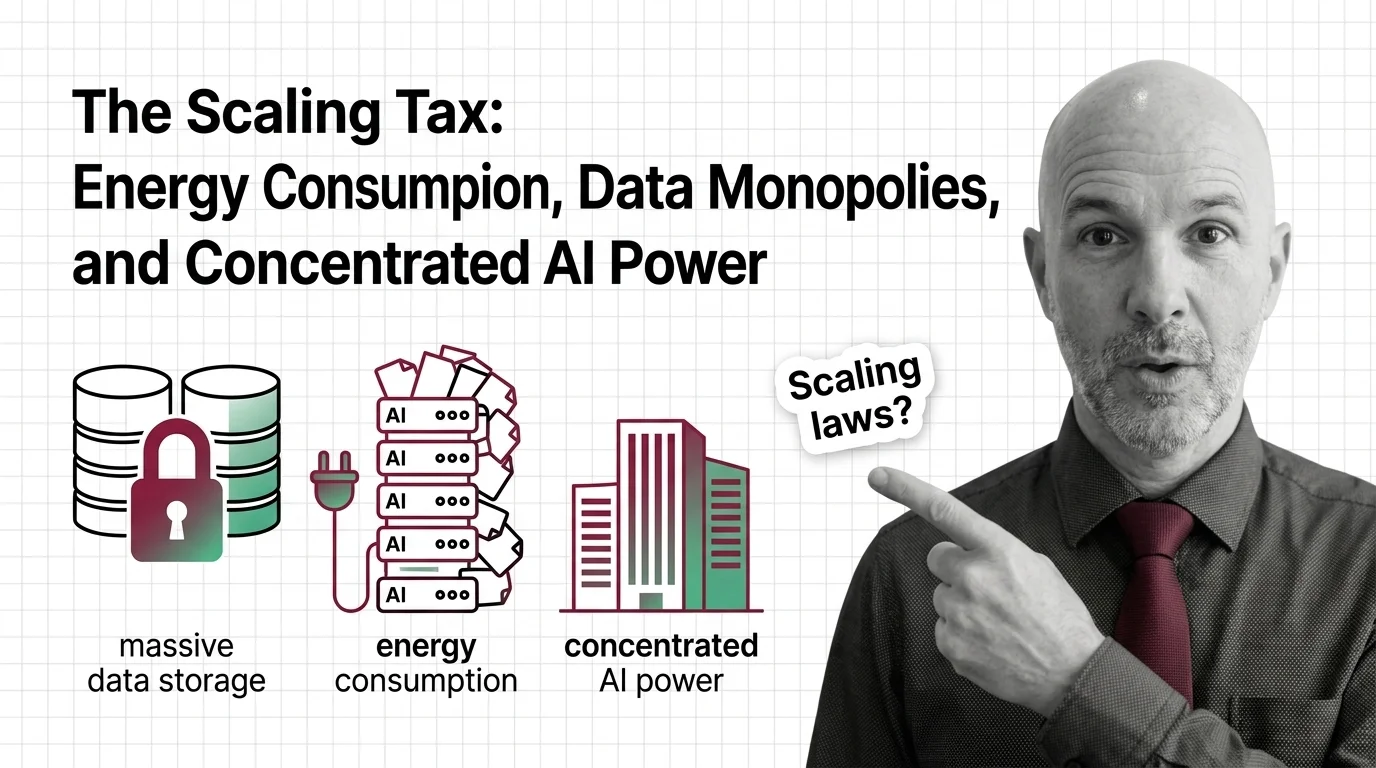

Risks and Considerations

Uncritical faith in scaling can concentrate power among the few organizations able to afford massive compute, while masking diminishing returns and environmental costs.