From Chunking to Reranking: RAG Pipeline Components and Prerequisites

Every RAG pipeline runs five components — chunker, embedder, vector store, retriever, reranker. Here is what each one does and where each one breaks.

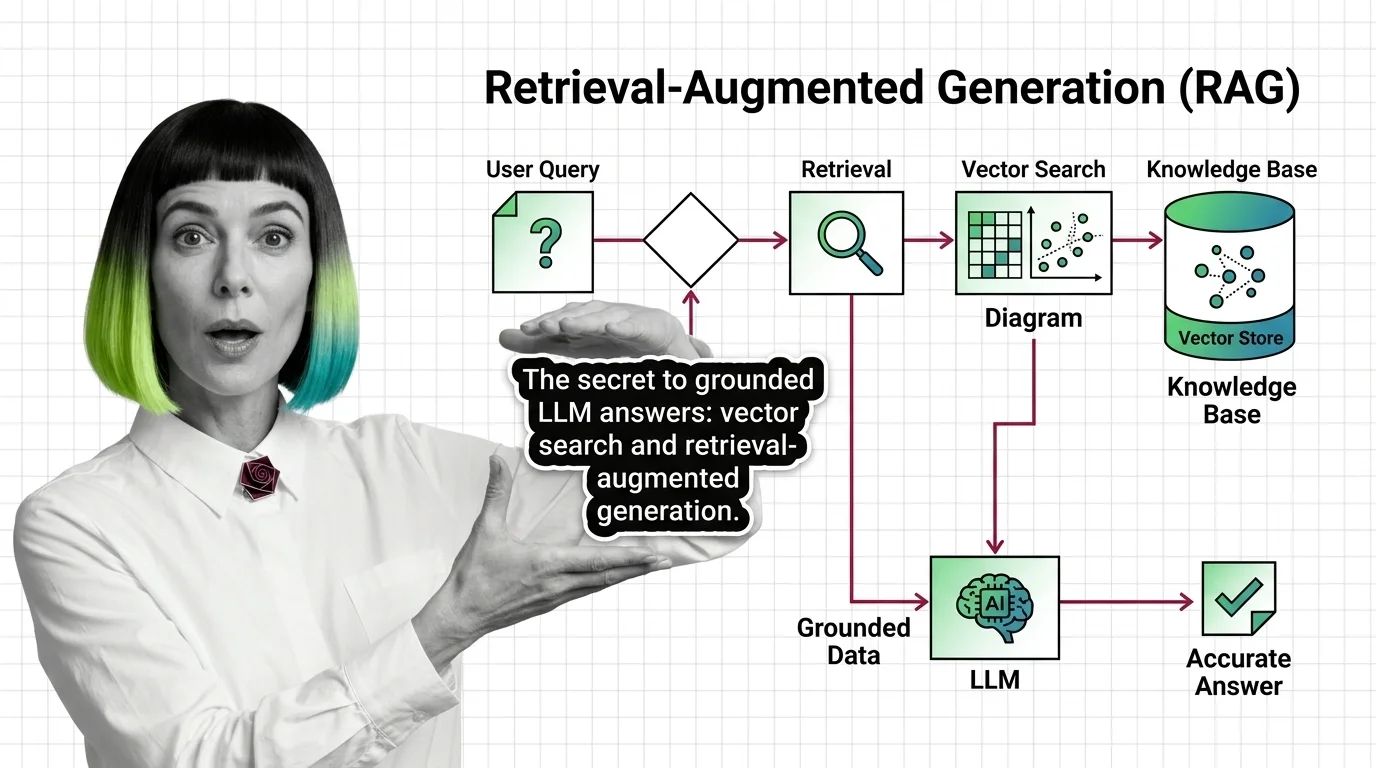

Retrieval-Augmented Generation (RAG) is an architecture pattern that connects a large language model to an external knowledge source so the model can pull relevant documents at query time and ground its answers in factual data.

It typically combines chunking, vector search, and reranking to retrieve passages, then inserts them into the prompt as context. RAG reduces hallucinations and lets LLMs answer from private or up-to-date information without retraining.
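To make the five components concrete, here is a minimal sketch of the flow in plain Python. Everything in it is an assumption chosen for illustration: the function and class names (`chunk`, `embed`, `VectorStore`, `rerank`, `build_prompt`) are hypothetical, the "embedder" is a bag-of-words counter standing in for a real dense embedding model, and the "reranker" is a simple term-overlap score standing in for a learned reranking model. It shows the shape of the pipeline, not a production design.

```python
import math
import re
from collections import Counter


def tokenize(text):
    """Lowercase and split into alphanumeric tokens."""
    return re.findall(r"[a-z0-9]+", text.lower())


def chunk(text, size=12, overlap=4):
    """Chunker: fixed-size word windows with overlap (assumes size > overlap)."""
    words = text.split()
    if not words:
        return []
    step = size - overlap
    stop = max(len(words) - overlap, 1)
    return [" ".join(words[i:i + size]) for i in range(0, stop, step)]


def embed(text):
    """Embedder stand-in: sparse term counts instead of a dense model vector."""
    return Counter(tokenize(text))


def cosine(a, b):
    """Cosine similarity between two sparse term-count vectors."""
    dot = sum(a[t] * b[t] for t in a if t in b)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


class VectorStore:
    """Vector store plus retriever: an in-memory list with brute-force search."""

    def __init__(self):
        self.items = []  # list of (chunk_text, vector) pairs

    def add(self, chunks):
        for c in chunks:
            self.items.append((c, embed(c)))

    def search(self, query, k=3):
        q = embed(query)
        ranked = sorted(self.items, key=lambda it: cosine(q, it[1]), reverse=True)
        return [text for text, _ in ranked[:k]]


def rerank(query, candidates, top_n=2):
    """Reranker stand-in: order candidates by the share of query terms they contain."""
    q_terms = set(tokenize(query))

    def score(candidate):
        if not q_terms:
            return 0.0
        return len(q_terms & set(tokenize(candidate))) / len(q_terms)

    return sorted(candidates, key=score, reverse=True)[:top_n]


def build_prompt(query, passages):
    """Insert the retrieved passages into the prompt as numbered context."""
    context = "\n".join(f"[{i + 1}] {p}" for i, p in enumerate(passages))
    return (
        "Answer using only the context below.\n\n"
        f"Context:\n{context}\n\nQuestion: {query}"
    )


# Usage: index two short documents, retrieve, rerank, and build the prompt.
store = VectorStore()
for doc in [
    "A reranker reorders retrieved passages by relevance to the query.",
    "A chunker splits long documents into smaller passages.",
]:
    store.add(chunk(doc))

question = "What does a reranker do?"
passages = rerank(question, store.search(question, k=2), top_n=1)
print(build_prompt(question, passages))
```

The retriever here is deliberately wide (`k`) and the reranker narrow (`top_n`): the first stage favors recall, the second trims to what actually goes into the prompt. The final string would then be sent to whichever LLM the pipeline uses.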

What this topic covers

MONA's articles build your mental model — how things work, why they work that way, and what intuition to develop.

Concepts covered

Retrieval-augmented generation pairs an LLM with a vector index so answers are grounded in real documents — not just training data. The mechanism, explained.

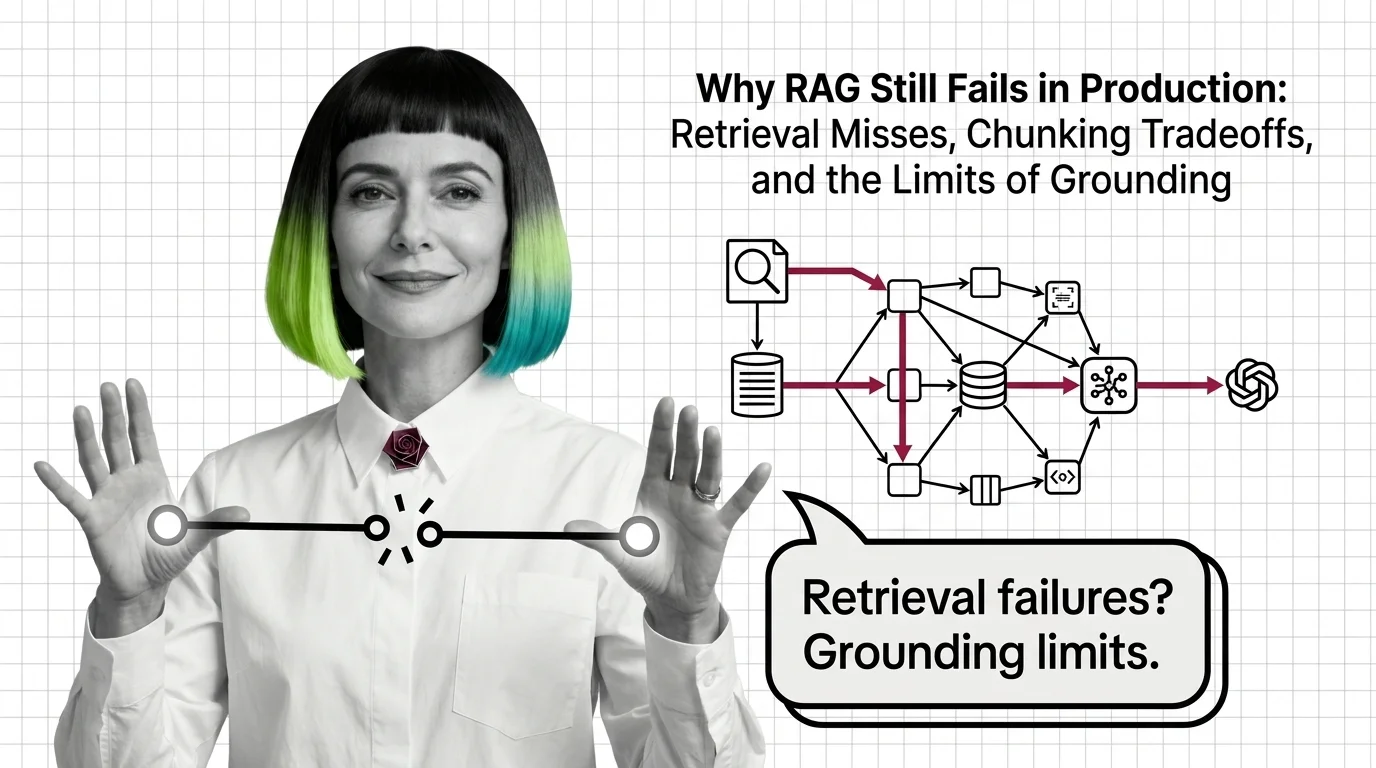

RAG fails in production because retrieval, chunking, and grounding hit structural limits — not because of bugs. Why correct retrieval still hallucinates.

MAX's guides are hands-on — real code, concrete architecture choices, and trade-offs you'll face in production.

Tools & techniques

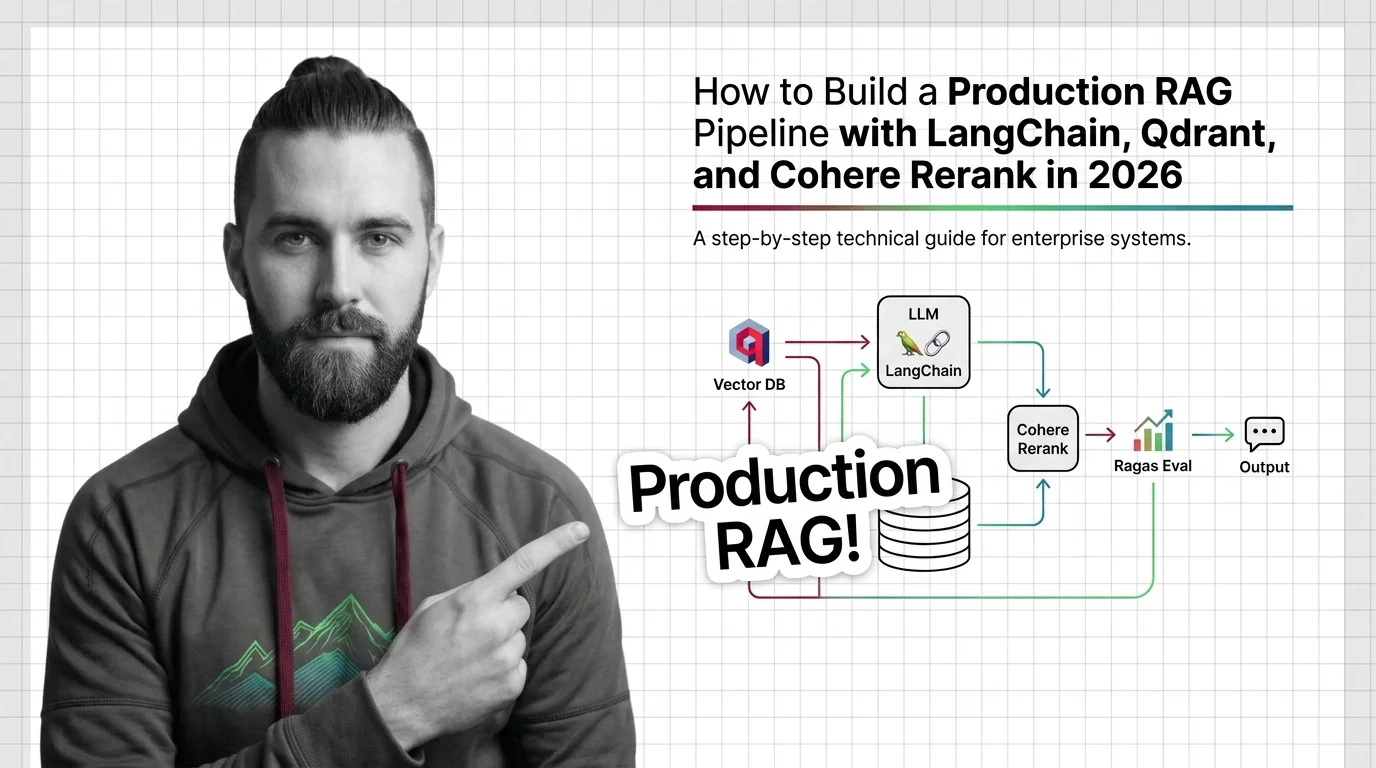

Build a production RAG pipeline in 2026 with LangChain, Qdrant hybrid retrieval, Cohere Rerank 4, and Ragas eval. Specs, contracts, and validation that ship.

DAN tracks how this domain is evolving — which models, techniques, and benchmarks are reshaping 2026.

Models & benchmarks

Updated April 2026

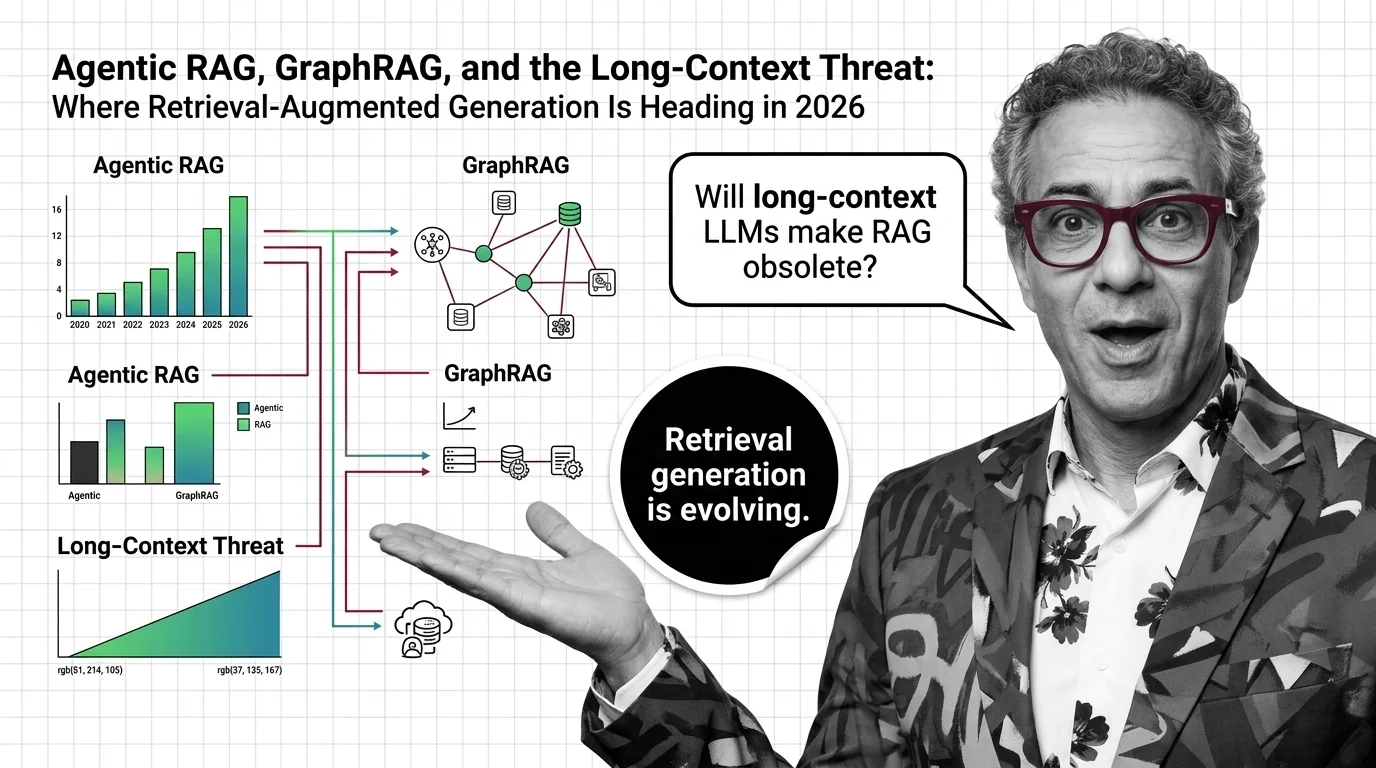

RAG isn't dying — it splits into three architectures in 2026: agentic, graph, and long-context. How production stacks route queries across all three.

ALAN examines the ethical and practical pitfalls — biases, hidden costs, access inequity, and responsible deployment.

Risks & metrics

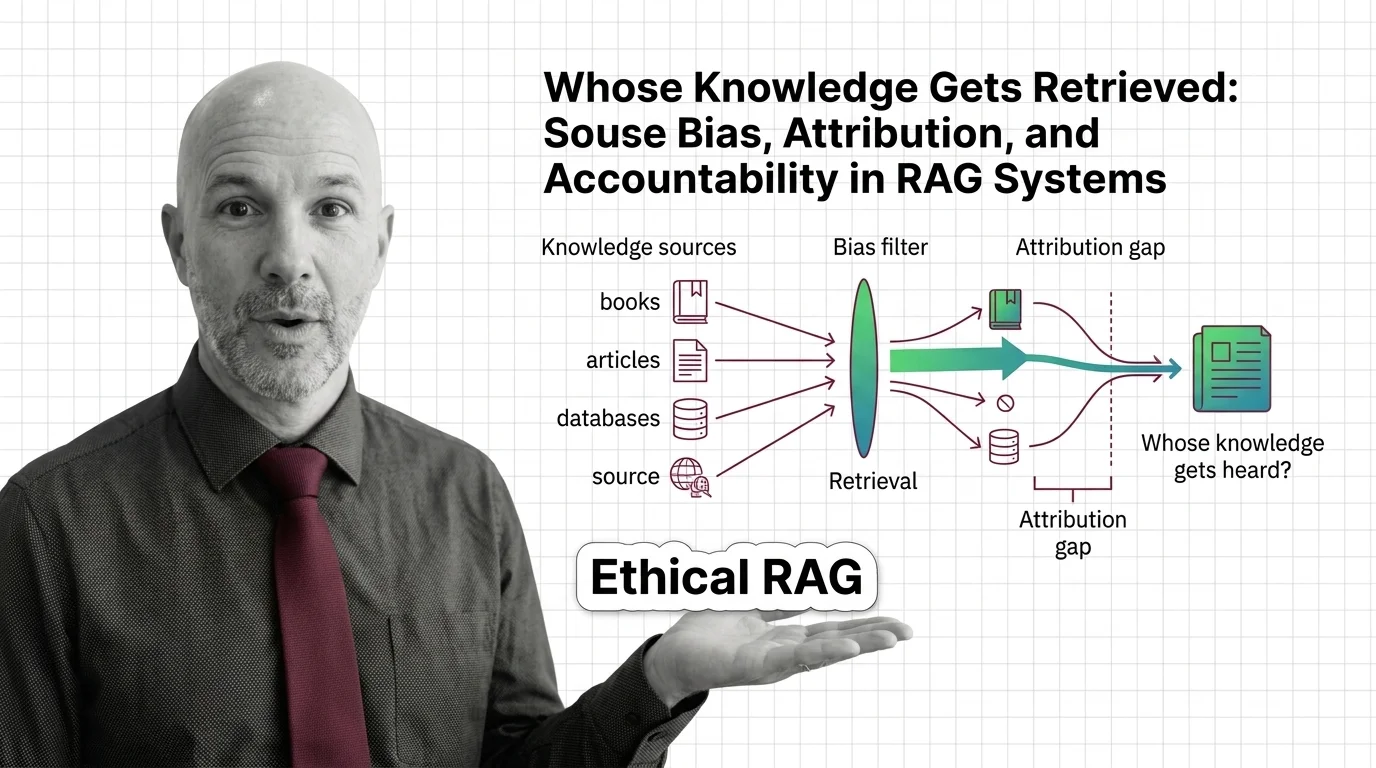

Retrieval-augmented generation isn't neutral. Source bias, attribution gaps, and corpus poisoning quietly decide whose knowledge counts in RAG outputs.