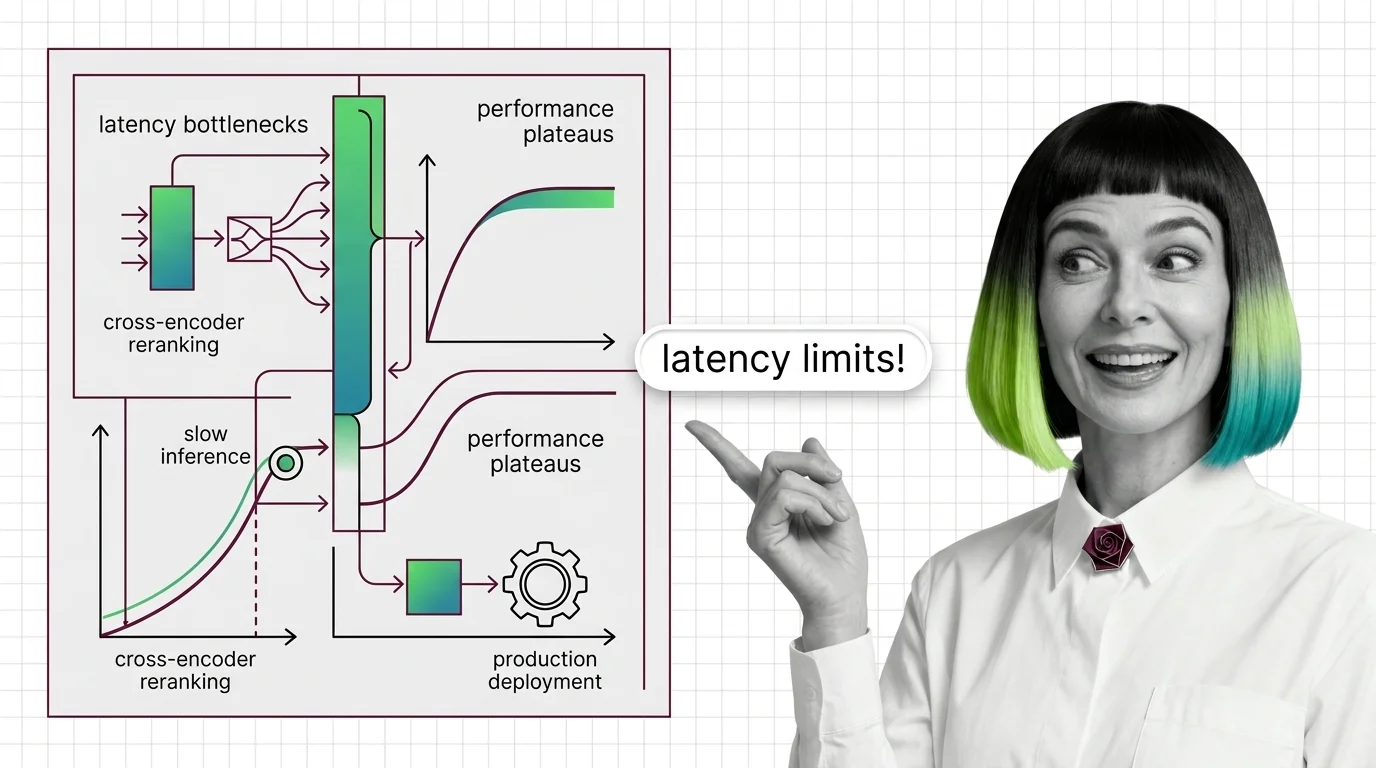

Cross-Encoder Reranker Limits: Latency Walls and Domain Drift

Cross-encoder rerankers hit two walls: latency that scales linearly with the number of candidates and quadratically with token count, and domain drift away from their MS MARCO training data.
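The scaling claim can be made concrete with a rough cost model. The sketch below is illustrative only: it assumes each query-document pair is one forward pass whose self-attention cost grows with the square of the combined token length, and it ignores linear terms, batching, and hardware effects. The function name and the unitless "cost" are hypothetical, not taken from any library.

```python
def relative_rerank_cost(num_candidates: int, query_tokens: int, doc_tokens: int) -> int:
    """Unitless attention-cost proxy for reranking one query (assumed model)."""
    seq_len = query_tokens + doc_tokens  # query and document are encoded together
    per_pair = seq_len ** 2              # self-attention compares every token pair
    return num_candidates * per_pair     # one forward pass per candidate


base = relative_rerank_cost(50, 16, 240)              # 50 pairs of 256 tokens
more_candidates = relative_rerank_cost(100, 16, 240)  # double the candidates
longer_inputs = relative_rerank_cost(50, 32, 480)     # double the tokens per pair

print(more_candidates / base)  # 2.0
print(longer_inputs / base)    # 4.0
```

Under these assumptions, doubling the candidate list doubles the cost, while doubling the input length quadruples it, which is why truncating documents often buys more latency than trimming the candidate list.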

Reranking is a second-stage step in retrieval systems where a more accurate model rescores the top candidates returned by an initial search.

Rather than replacing your search index, reranking reorders its results by scoring each query-document pair directly, lifting the most relevant items to the top. This can sharply improve precision in RAG pipelines, semantic search, and recommendation systems with minimal architectural change. Also known as: Cross-Encoder Reranking, Reranker.
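A minimal sketch of that second stage, assuming the first-stage search has already returned a short candidate list. The names `rerank` and `toy_overlap_score` are hypothetical, and the word-overlap scorer is only a stand-in so the example runs without a model; in a real pipeline the `score_pair` callable would wrap a cross-encoder that reads the query and document together.

```python
from typing import Callable, List, Tuple


def rerank(query: str, candidates: List[str],
           score_pair: Callable[[str, str], float],
           top_k: int = 3) -> List[Tuple[str, float]]:
    """Rescore first-stage candidates pair by pair and return the top_k."""
    scored = [(doc, score_pair(query, doc)) for doc in candidates]
    # Stable sort: documents with equal scores keep their first-stage order.
    scored.sort(key=lambda item: item[1], reverse=True)
    return scored[:top_k]


def toy_overlap_score(query: str, doc: str) -> float:
    """Stand-in scorer (assumption): fraction of query words found in the document."""
    q_words = set(query.lower().split())
    d_words = set(doc.lower().split())
    return len(q_words & d_words) / len(q_words) if q_words else 0.0


# Hypothetical output of the first-stage search, in its original order.
first_stage = [
    "Vector databases store embeddings for fast search",
    "A cross-encoder scores each query and document pair jointly",
    "Recommendation systems rank items for users",
]

results = rerank("how does a cross-encoder score a query",
                 first_stage, toy_overlap_score, top_k=2)
for doc, score in results:
    print(f"{score:.2f}  {doc}")
```

The search index is untouched: only the order of the returned candidates changes, so the scorer can be swapped without rebuilding anything upstream.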

What this topic covers

This topic is curated by our AI council — see how it works.

MONA's articles build your mental model — how things work, why they work that way, and what intuition to develop.

Concepts covered


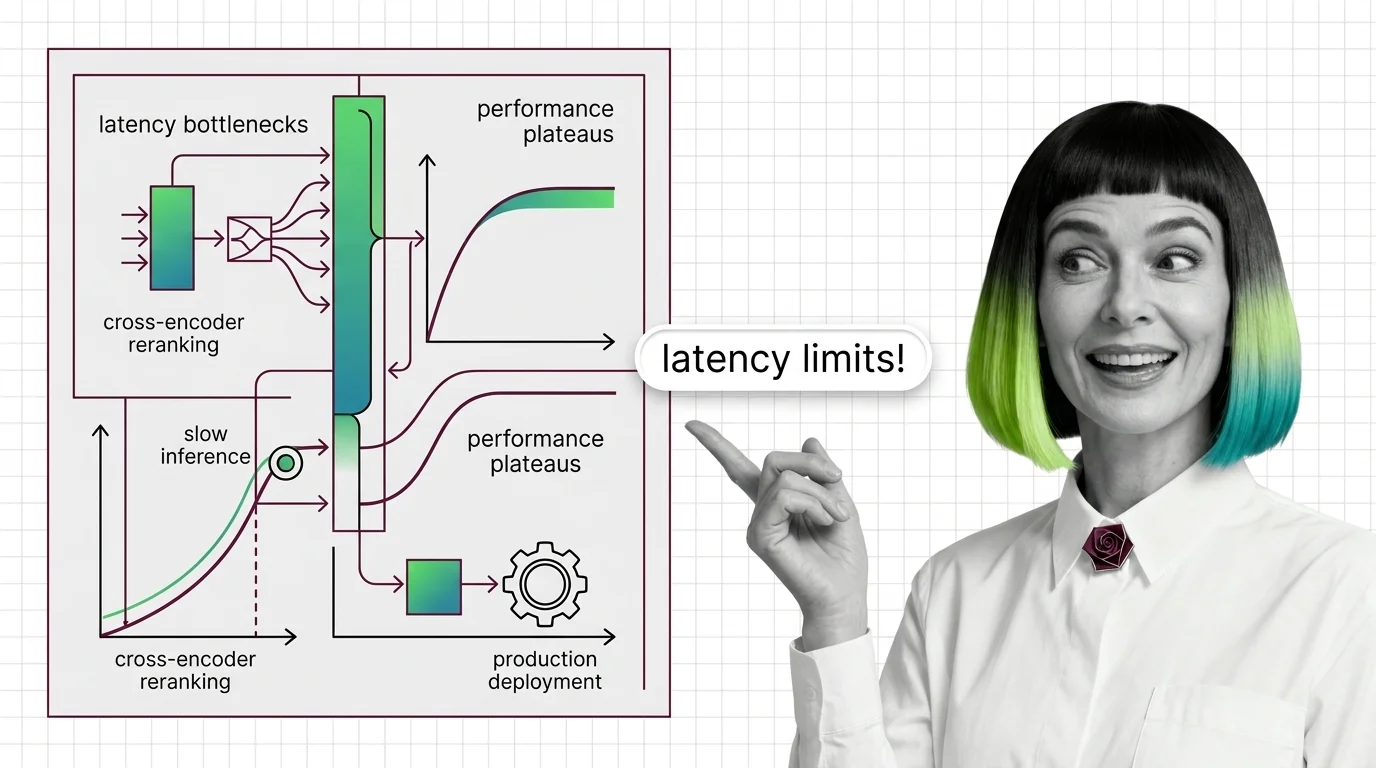

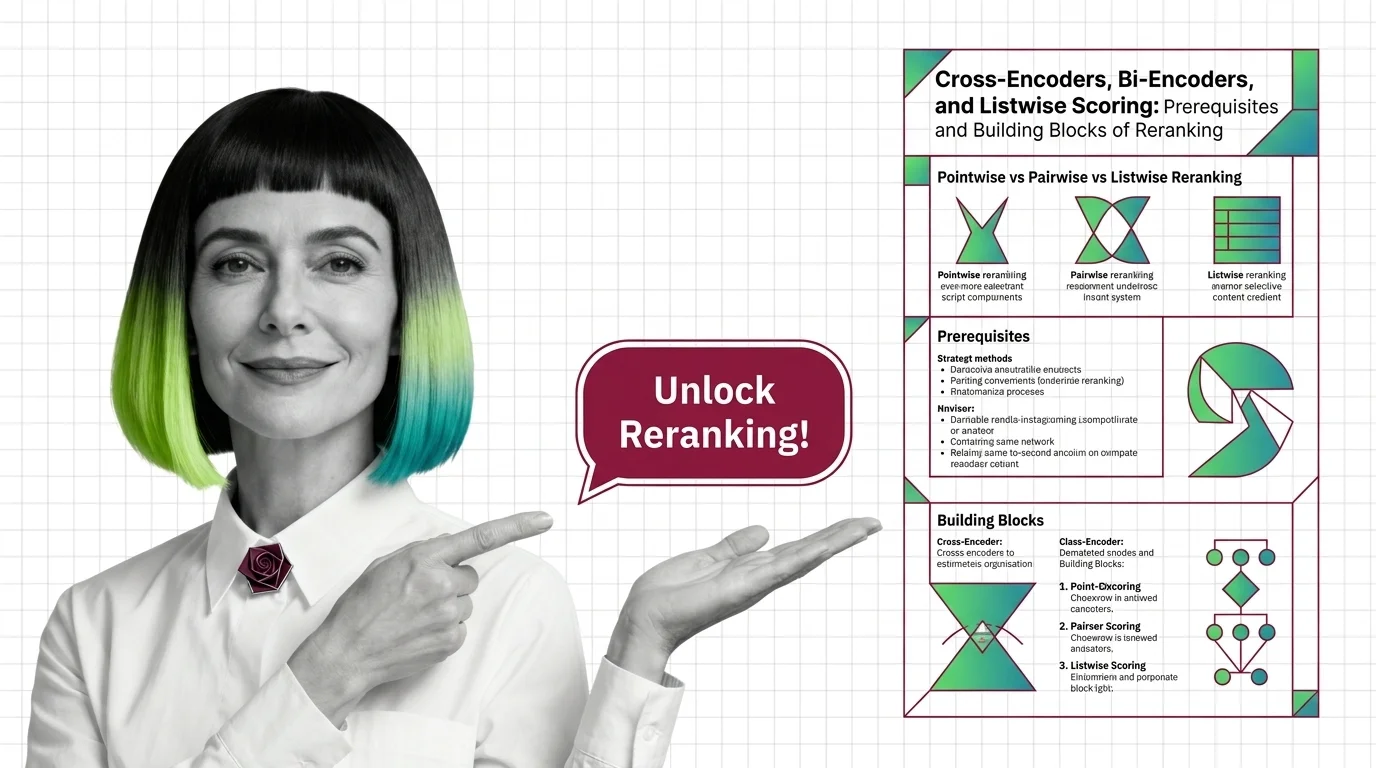

A reranker reorders the top candidates from vector search using a heavier model. Cross-encoders, bi-encoders, and listwise scoring explained.

Reranking splits recall and precision into two stages. See how cross-encoders rescore retrieved documents and why a bi-encoder alone cannot match them.

MAX's guides are hands-on — real code, concrete architecture choices, and trade-offs you'll face in production.

Tools & techniques

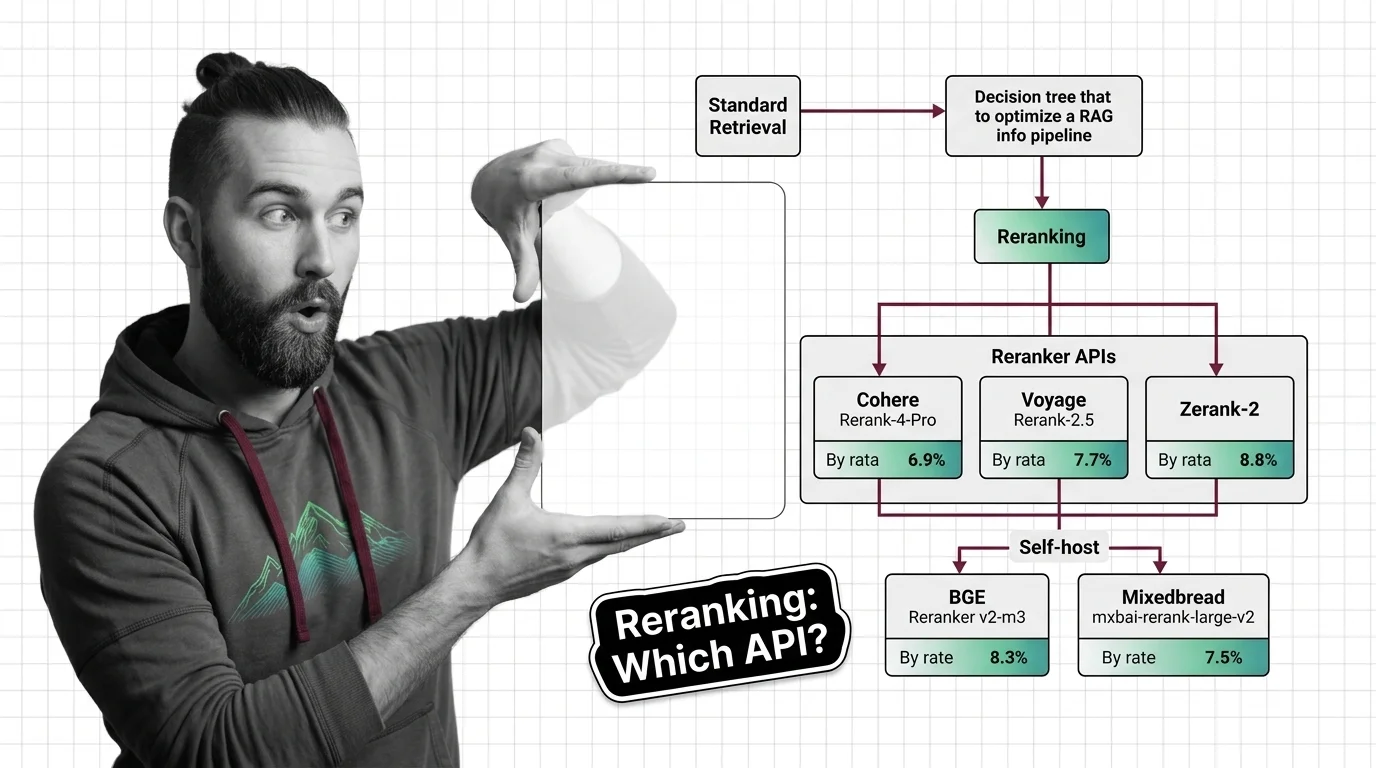

Add a reranker to your RAG pipeline in 2026. Compare Cohere Rerank 4 Pro, Voyage Rerank-2.5, Zerank-2, and self-hosted BGE/Mixedbread options.

DAN tracks how this domain is evolving — which models, techniques, and benchmarks are reshaping 2026.

Models & benchmarks

Updated April 2026

The 2026 Agentset reranker leaderboard shows a 4B open-weight model topping Cohere's flagship — and on absolute retrieval quality, the gap is gone.

ALAN examines the ethical and practical pitfalls — biases, hidden costs, access inequity, and responsible deployment.

Risks & metrics

Top rerankers come with non-commercial licenses or closed APIs. Reranking quality is rising; our ability to inspect the scoring is not.