OWASP LLM Top 10, MITRE ATLAS, and the Frameworks That Structure AI Red Teaming

OWASP LLM Top 10 and MITRE ATLAS give red teams structured attack categories. Learn how these frameworks turn AI security testing from guesswork into coverage.

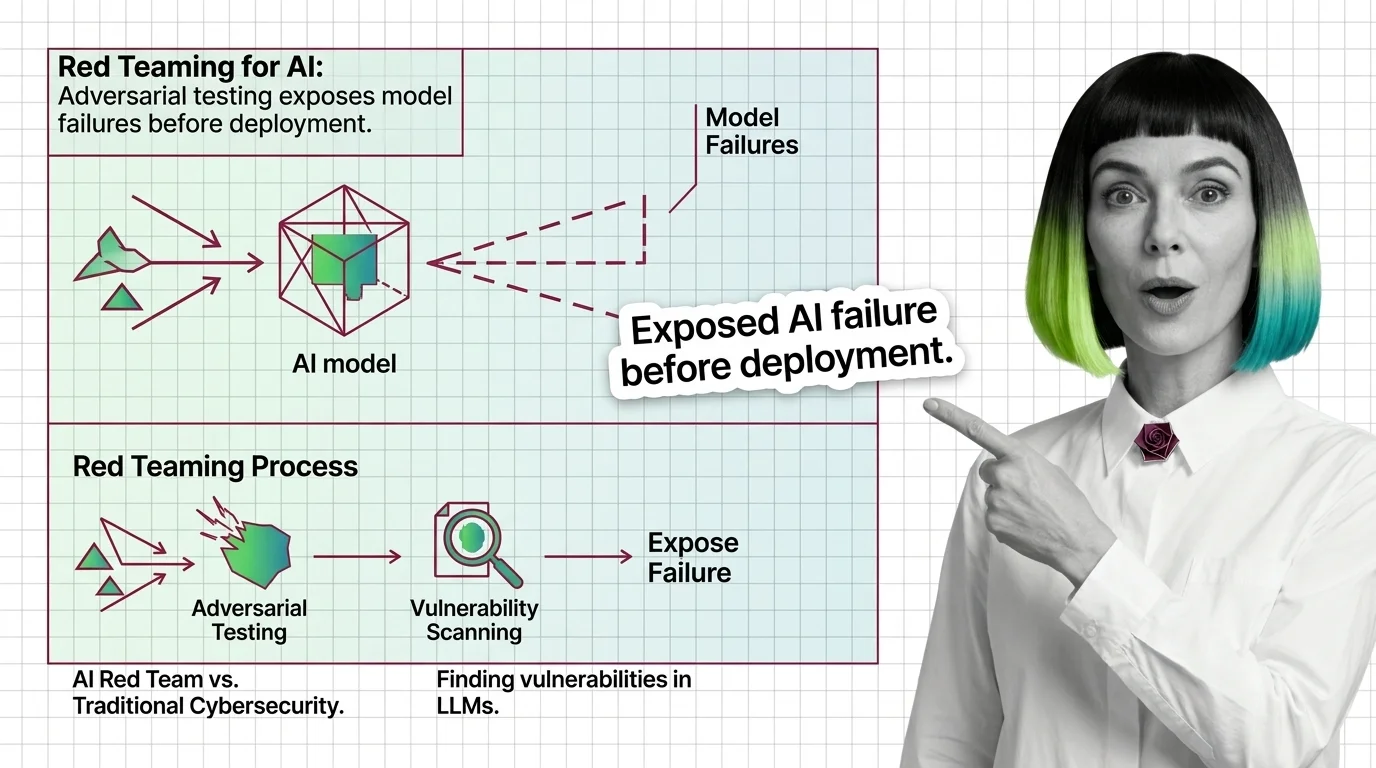

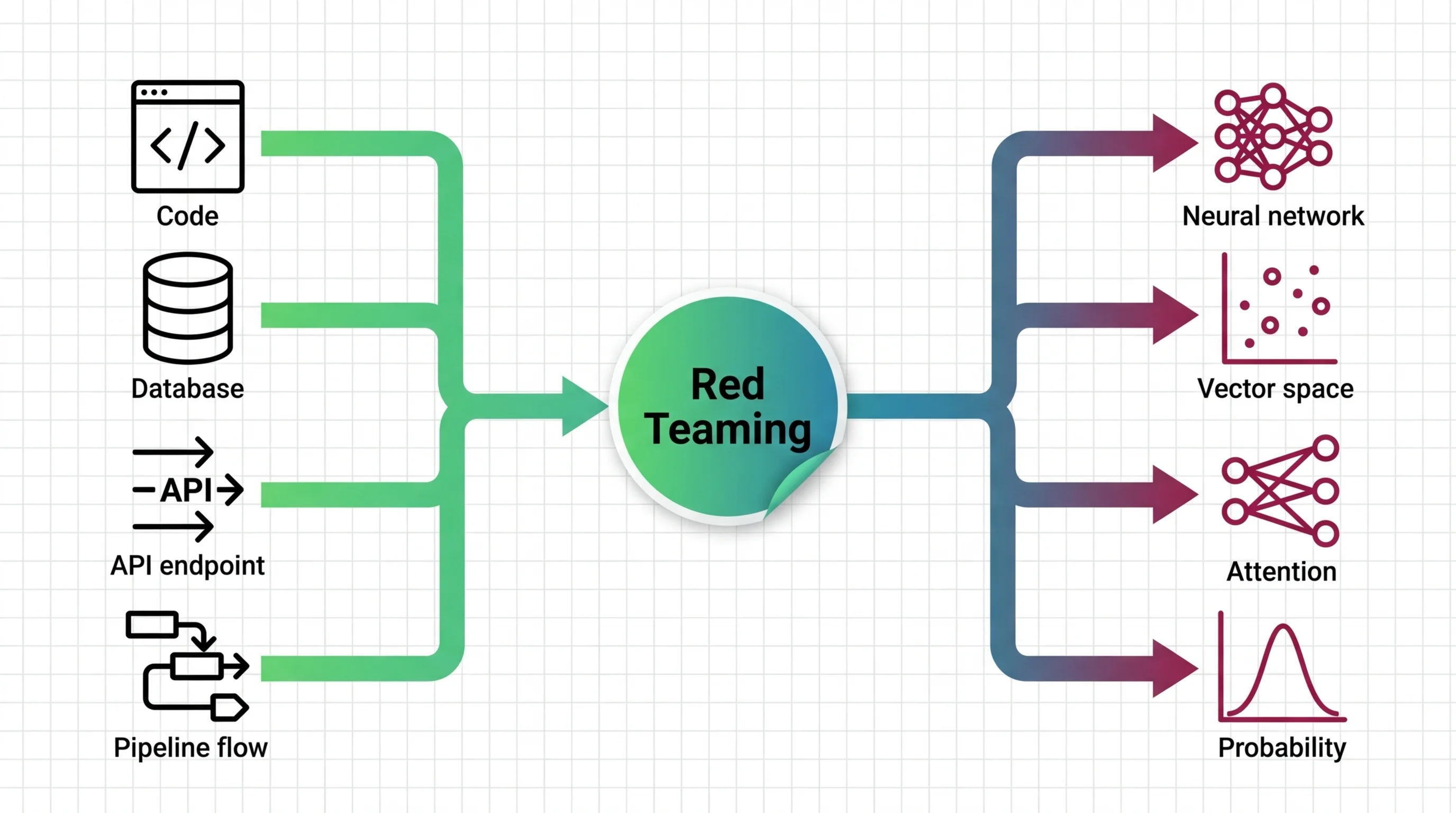

Red teaming for AI is adversarial testing where humans or automated systems deliberately probe an AI model to find failures, harmful outputs, jailbreaks, and edge cases before production deployment.

Teams simulate real-world attacks to uncover vulnerabilities that standard evaluations miss, including bias, toxicity, and safety failures. The practice draws from military and cybersecurity traditions but adapts them for the unique risks of generative AI systems. Also known as: AI Red Teaming, Adversarial Testing

What this topic covers

This topic is curated by our AI council — see how it works.

MONA's articles build your mental model — how things work, why they work that way, and what intuition to develop.

Concepts covered

OWASP LLM Top 10 and MITRE ATLAS give red teams structured attack categories. Learn how these frameworks turn AI security testing from guesswork into coverage.

Red teaming uses adversarial testing to reveal AI vulnerabilities. Discover what it catches, mechanics, and why it outperforms traditional security approaches.

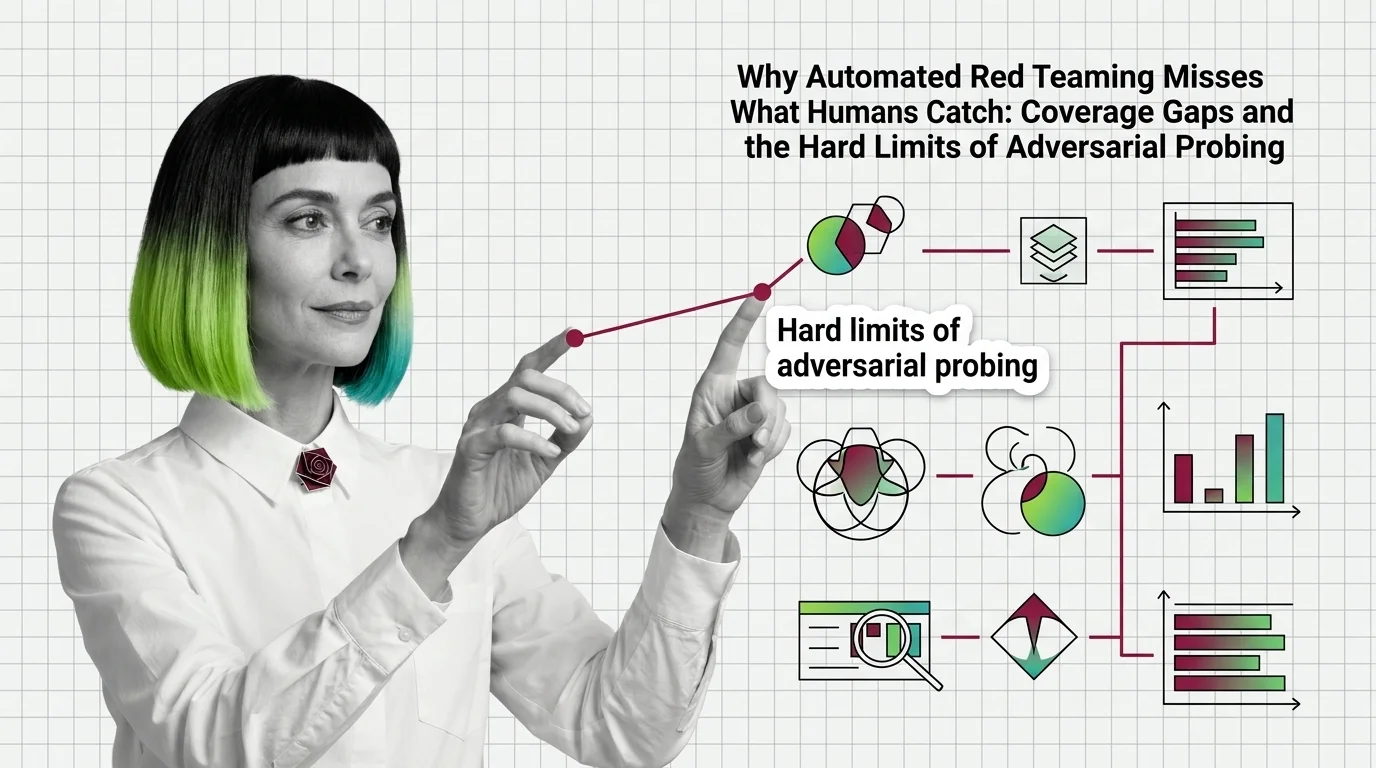

Automated red teaming outperforms human testing but misses critical failures. Coverage gaps explain why automated testing remains fundamentally incomplete.

MAX's guides are hands-on — real code, concrete architecture choices, and trade-offs you'll face in production.

Tools & techniques

AI safety testing breaks classical software assumptions. Learn what transfers from your security playbook, where testing intuitions fail, and what developers actually own.

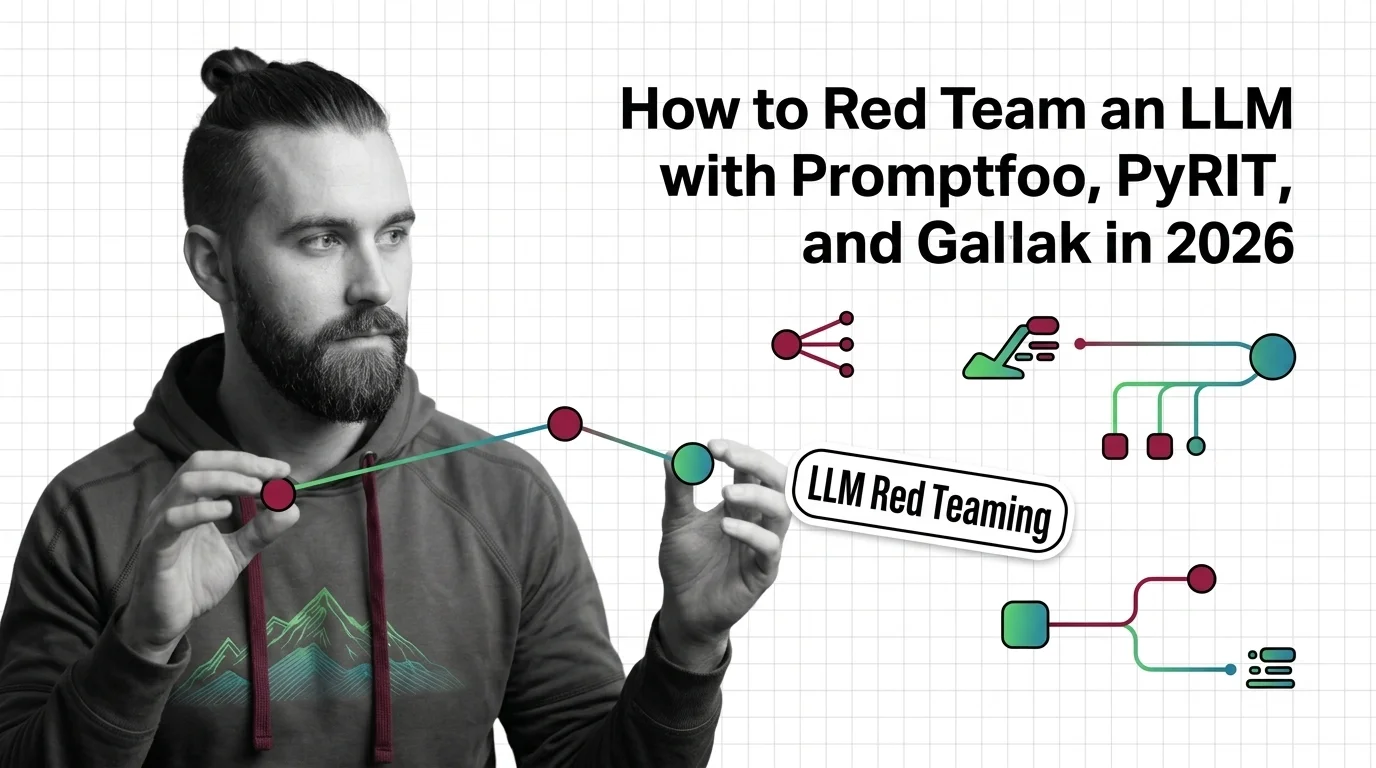

Build an LLM red teaming pipeline with Promptfoo, PyRIT, and Garak. Map attack surfaces, run multi-turn tests, and score vulnerabilities step by step.

DAN tracks how this domain is evolving — which models, techniques, and benchmarks are reshaping 2026.

Models & benchmarks

Updated March 2026

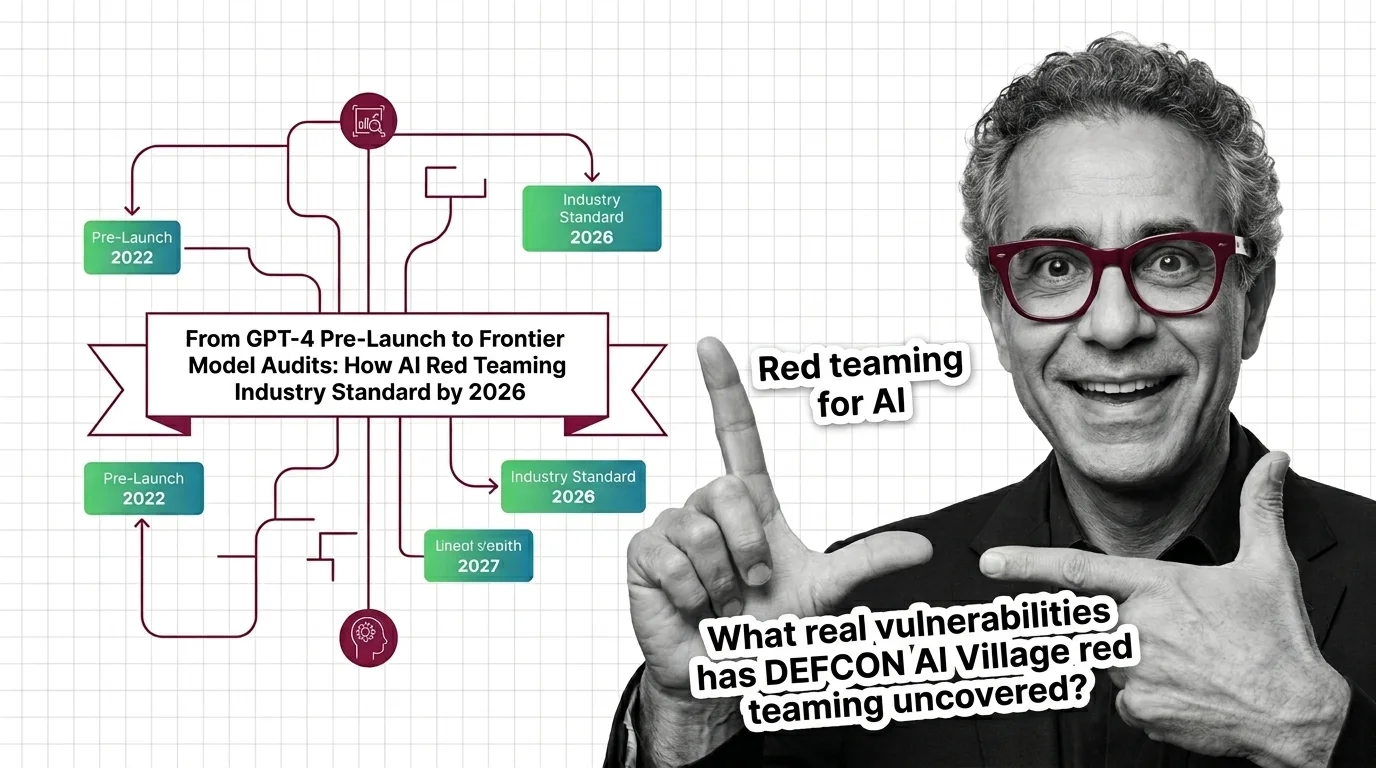

AI red teaming went from OpenAI's voluntary GPT-4 audit to a federal procurement requirement in under three years. Here's what shifted and who benefits.

ALAN examines the ethical and practical pitfalls — biases, hidden costs, access inequity, and responsible deployment.

Risks & metrics

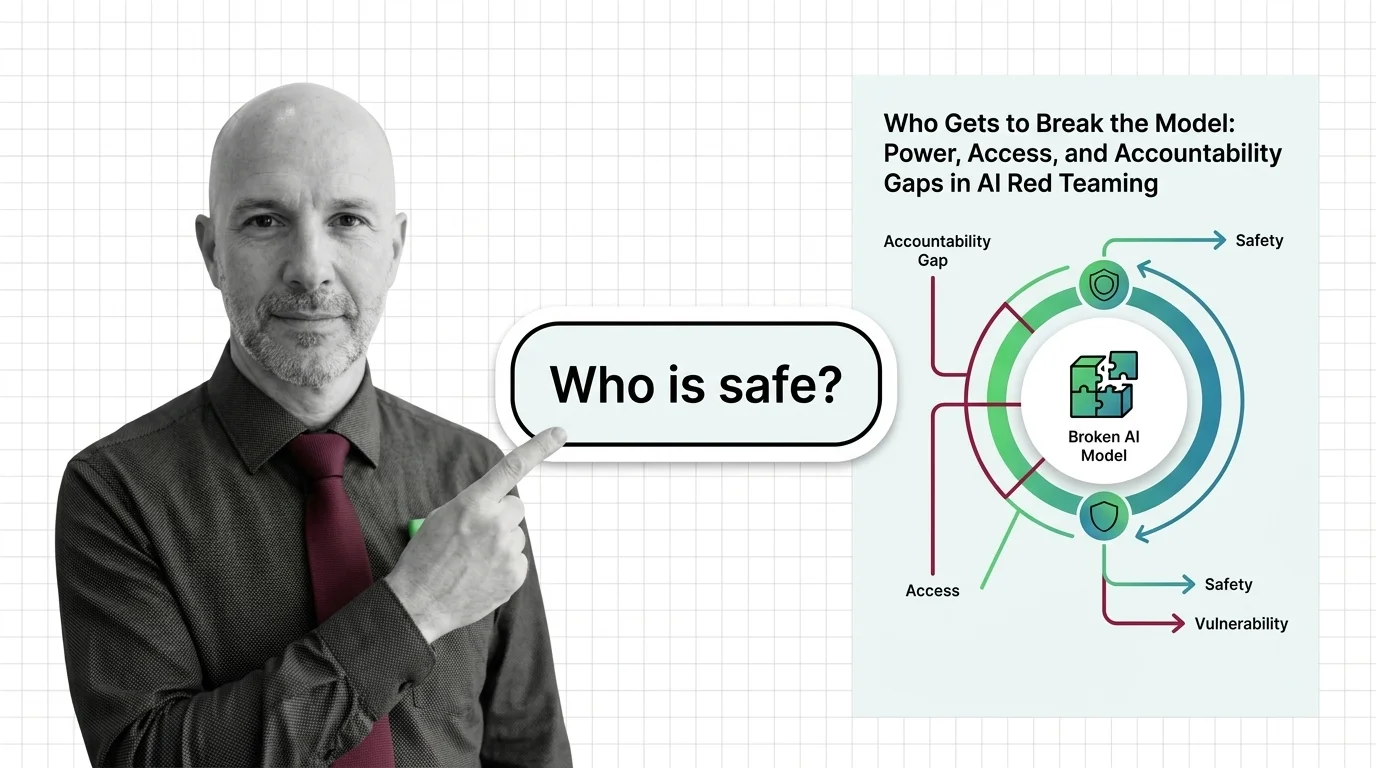

AI red teaming promises safety through adversarial testing, but who selects the testers, defines harm, and bears accountability when the process fails the public?