Citation, Confidence, and Abstention: The 3 Layers of RAG Faithfulness

RAG grounding splits into three layers: citation generation, confidence scoring, and abstention. See how each fails differently and what each actually measures.

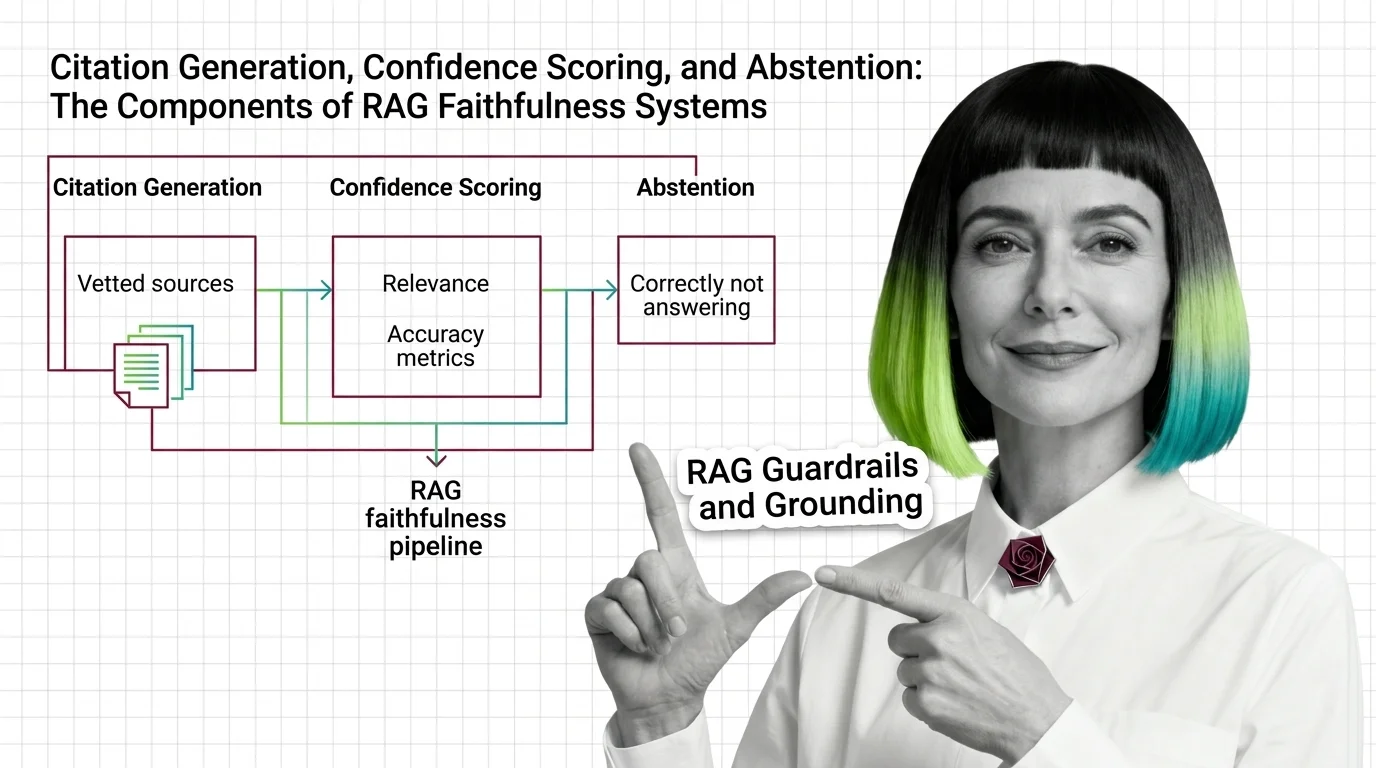

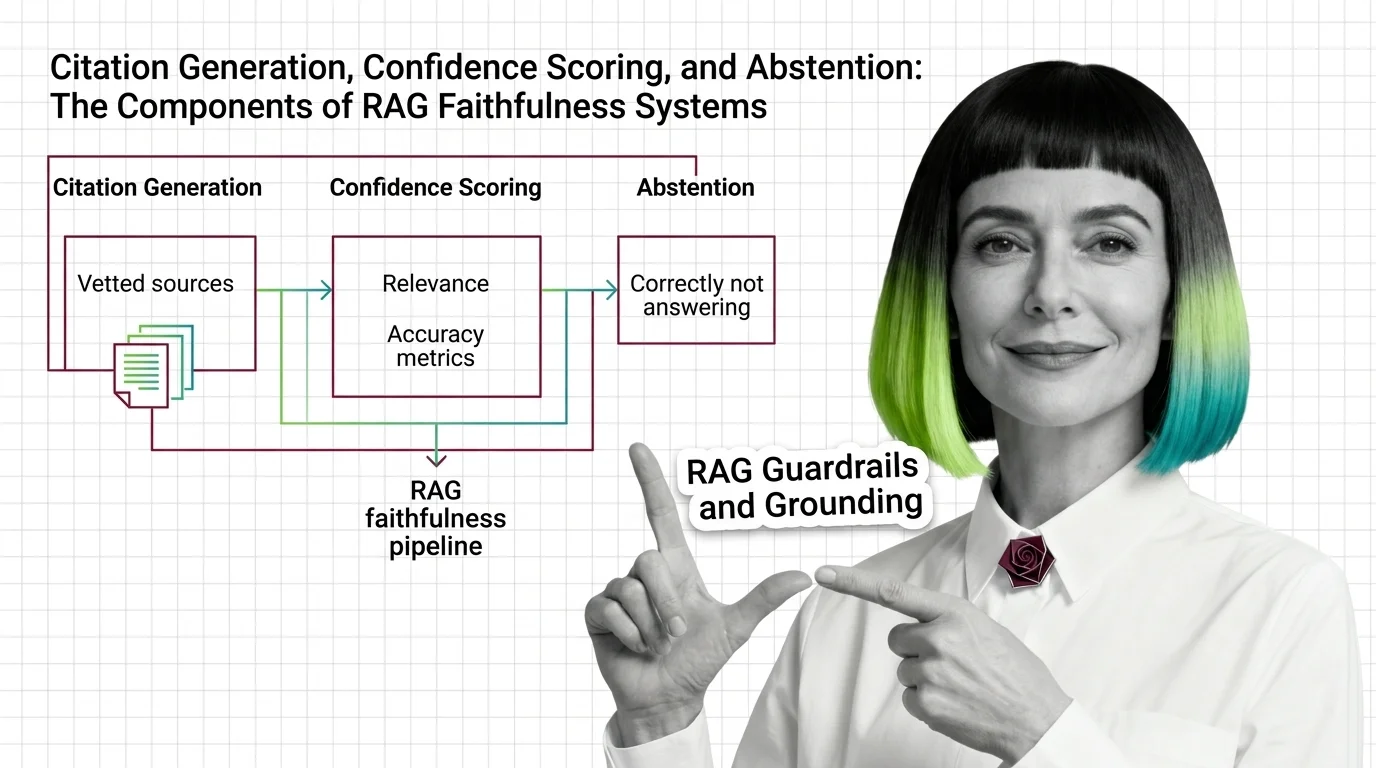

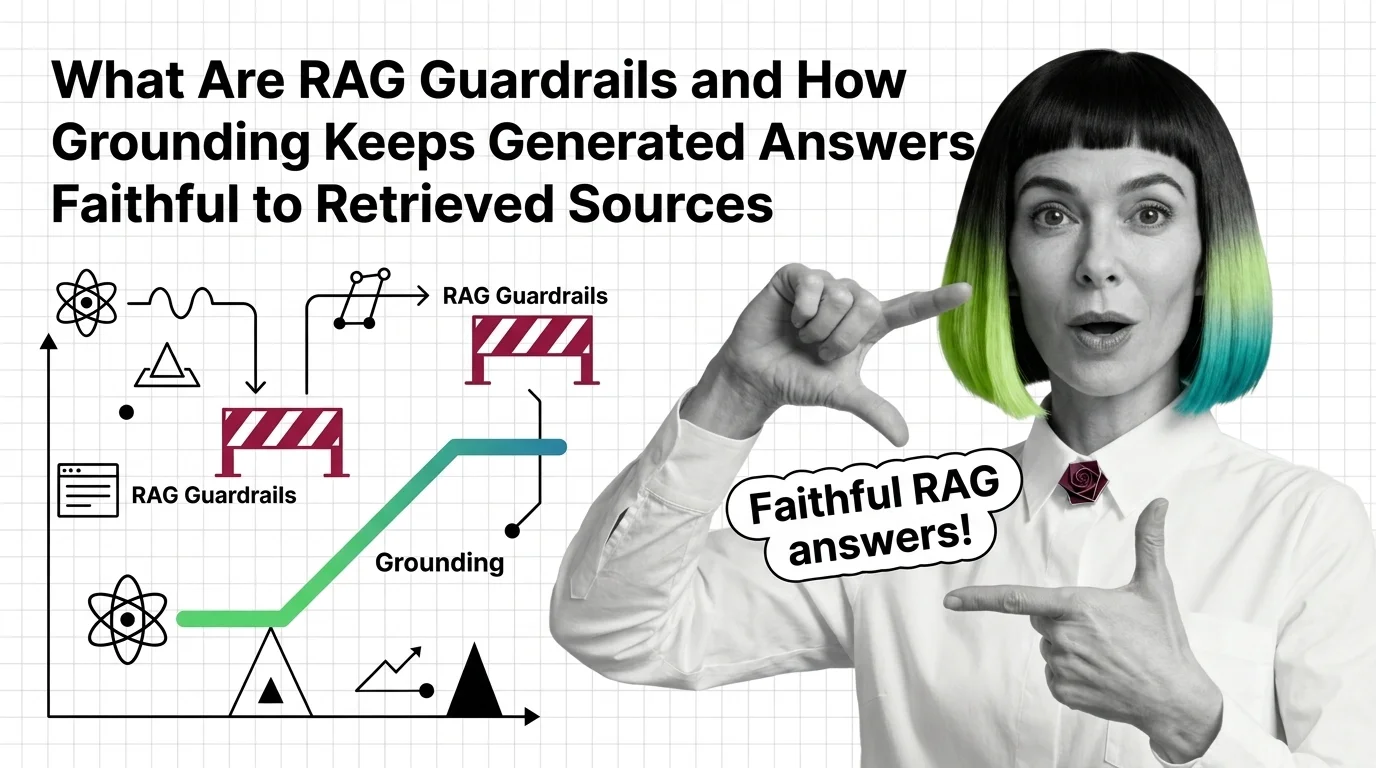

RAG guardrails and grounding are the techniques that keep generated answers tied to retrieved evidence rather than model imagination.

They include citation generation, confidence scoring, abstention when retrieval is weak, and hallucination detection specific to retrieval-augmented outputs. Also known as: Grounded Generation, Citation Generation.
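To make the three layers concrete, here is a minimal sketch of citation, confidence, and abstention in one pass. It is an illustration under loud assumptions: the names `support`, `ground`, and `GroundedAnswer` are hypothetical, and plain token overlap stands in for a real grounding model, so treat the scores as toy values rather than how any production detector works.

```python
import re
from dataclasses import dataclass

ABSTAIN_TEXT = "I don't have enough evidence to answer."

def tokens(text: str) -> set[str]:
    """Lowercased word tokens; a crude stand-in for a real grounding model."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))

def support(sentence: str, passage: str) -> float:
    """Fraction of the sentence's tokens that also appear in the passage."""
    sent = tokens(sentence)
    return len(sent & tokens(passage)) / len(sent) if sent else 0.0

@dataclass
class GroundedAnswer:
    text: str
    citations: list[int]  # index of the best-supporting passage, one per sentence
    confidence: float     # weakest per-sentence support score
    abstained: bool

def ground(sentences: list[str], passages: list[str],
           threshold: float = 0.6) -> GroundedAnswer:
    # Abstention, case 1: nothing to check or nothing retrieved.
    if not sentences or not passages:
        return GroundedAnswer(ABSTAIN_TEXT, [], 0.0, True)

    citations, scores = [], []
    for sentence in sentences:
        per_passage = [support(sentence, p) for p in passages]
        best = max(range(len(passages)), key=per_passage.__getitem__)
        citations.append(best)            # citation layer
        scores.append(per_passage[best])

    confidence = min(scores)              # confidence layer: weakest link
    # Abstention, case 2: at least one sentence is poorly supported.
    if confidence < threshold:
        return GroundedAnswer(ABSTAIN_TEXT, [], confidence, True)

    text = " ".join(f"{s} [{c + 1}]" for s, c in zip(sentences, citations))
    return GroundedAnswer(text, citations, confidence, False)
```

Taking the minimum score rather than the average is a deliberate choice in this sketch: a single unsupported sentence is enough to trigger abstention, whereas an average would let well-supported sentences mask it. The 0.6 threshold is arbitrary and would need tuning against labeled examples.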

What this topic covers

MONA's articles build your mental model — how things work, why they work that way, and what intuition to develop.

Concepts covered

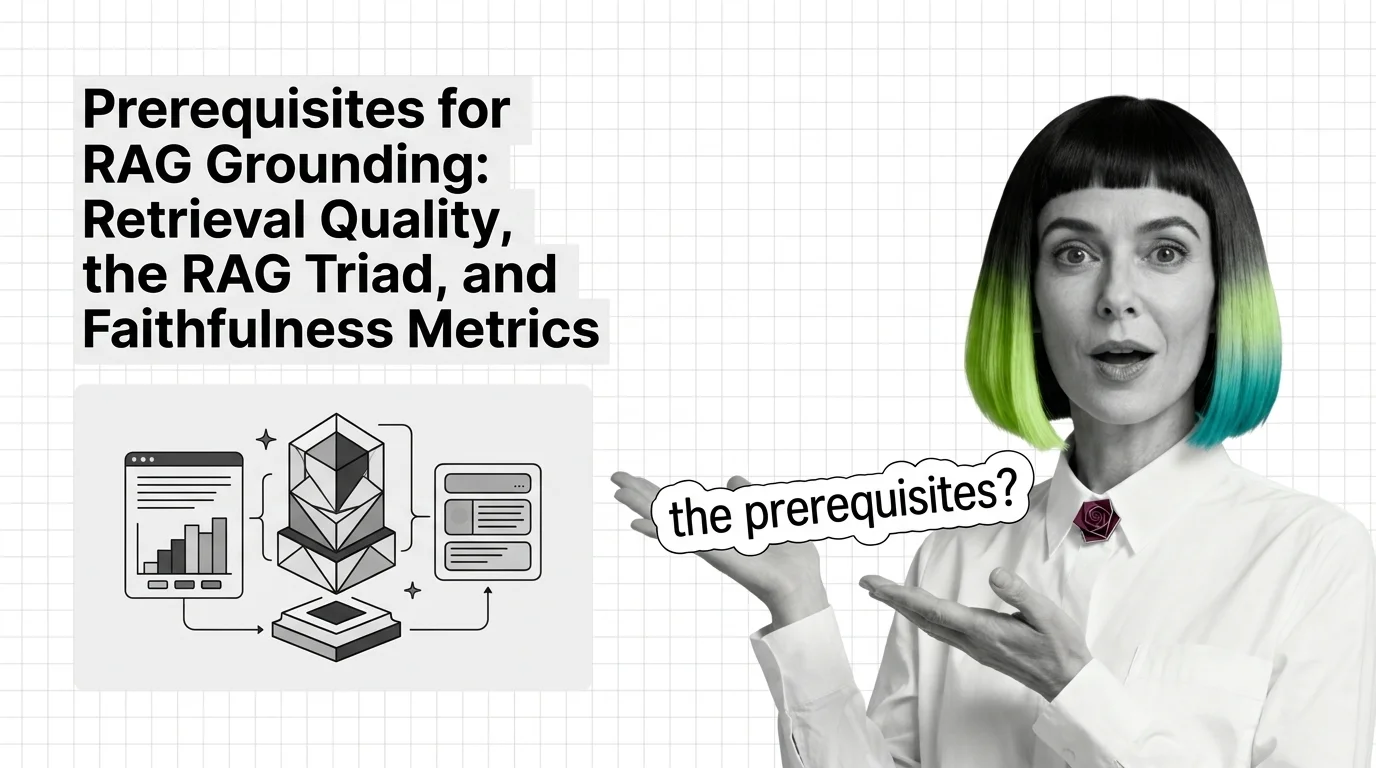

Before you bolt guardrails onto a RAG pipeline, learn the RAG Triad — context relevance, groundedness, answer relevance — and how faithfulness gets measured.

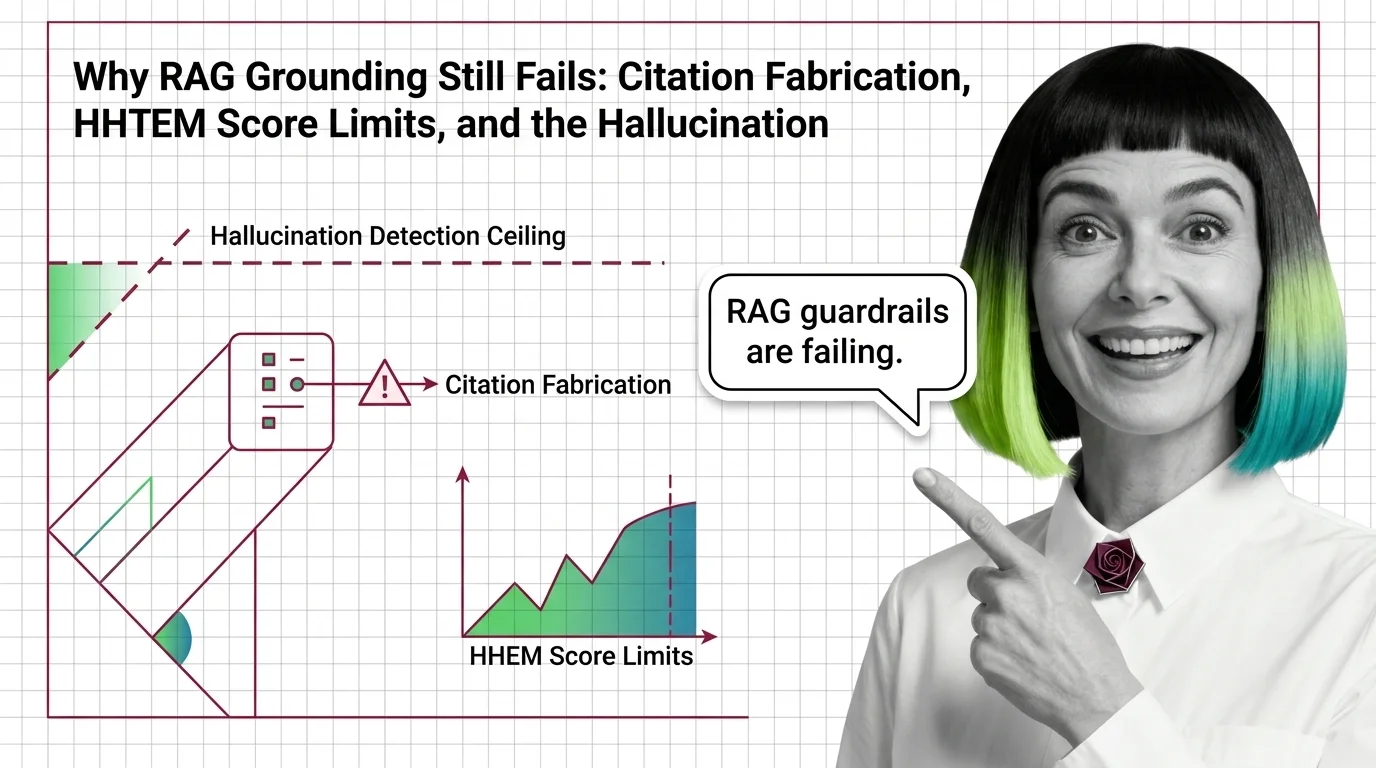

RAG guardrails and grounding force generated answers to stay tied to retrieved sources. Learn how the mechanism works in 2026 — and why it still leaks.

RAG hallucination detection has a certified ceiling. Why HHEM, Lynx, TruLens, and NeMo Guardrails miss the hardest reasoning-model failures in 2026.

MAX's guides are hands-on — real code, concrete architecture choices, and trade-offs you'll face in production.

Tools & techniques

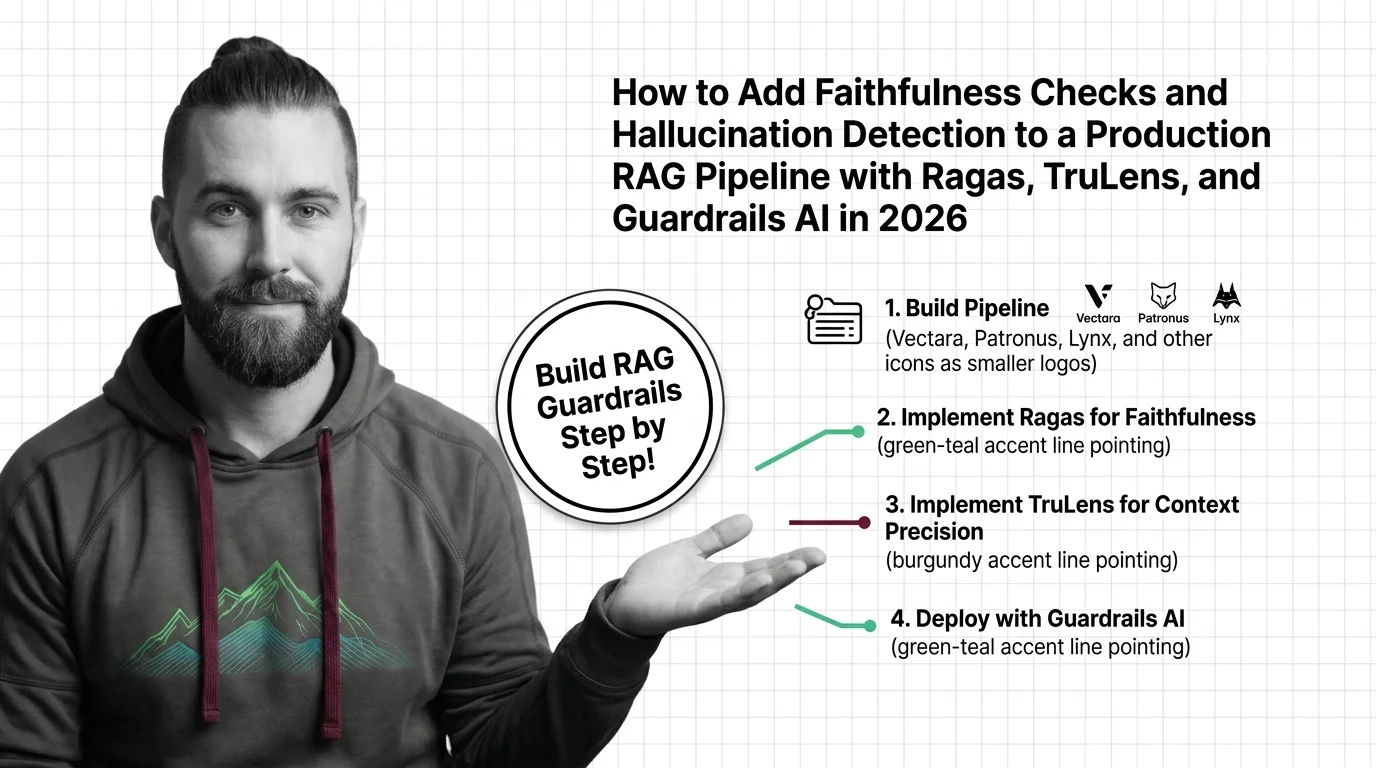

Wire Ragas, TruLens, and Guardrails AI into your RAG pipeline to catch hallucinations before users see them. A three-layer spec for faithfulness in 2026.

DAN tracks how this domain is evolving — which models, techniques, and benchmarks are reshaping 2026.

Models & benchmarks

Updated May 2026

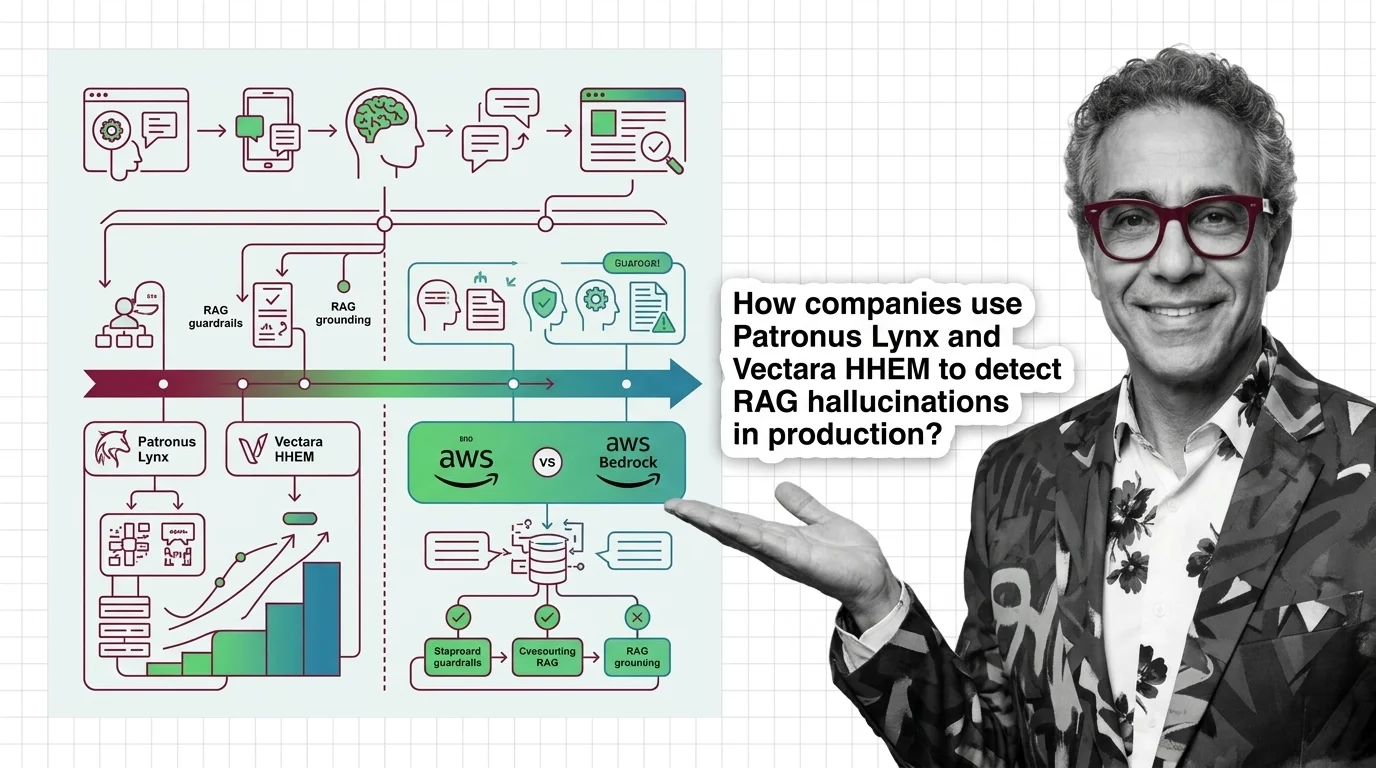

Patronus Lynx, Vectara HHEM-2.3, and AWS Bedrock Contextual Grounding now define RAG faithfulness tooling. The guardrails category just consolidated.

ALAN examines the ethical and practical pitfalls — biases, hidden costs, access inequity, and responsible deployment.

Risks & metrics

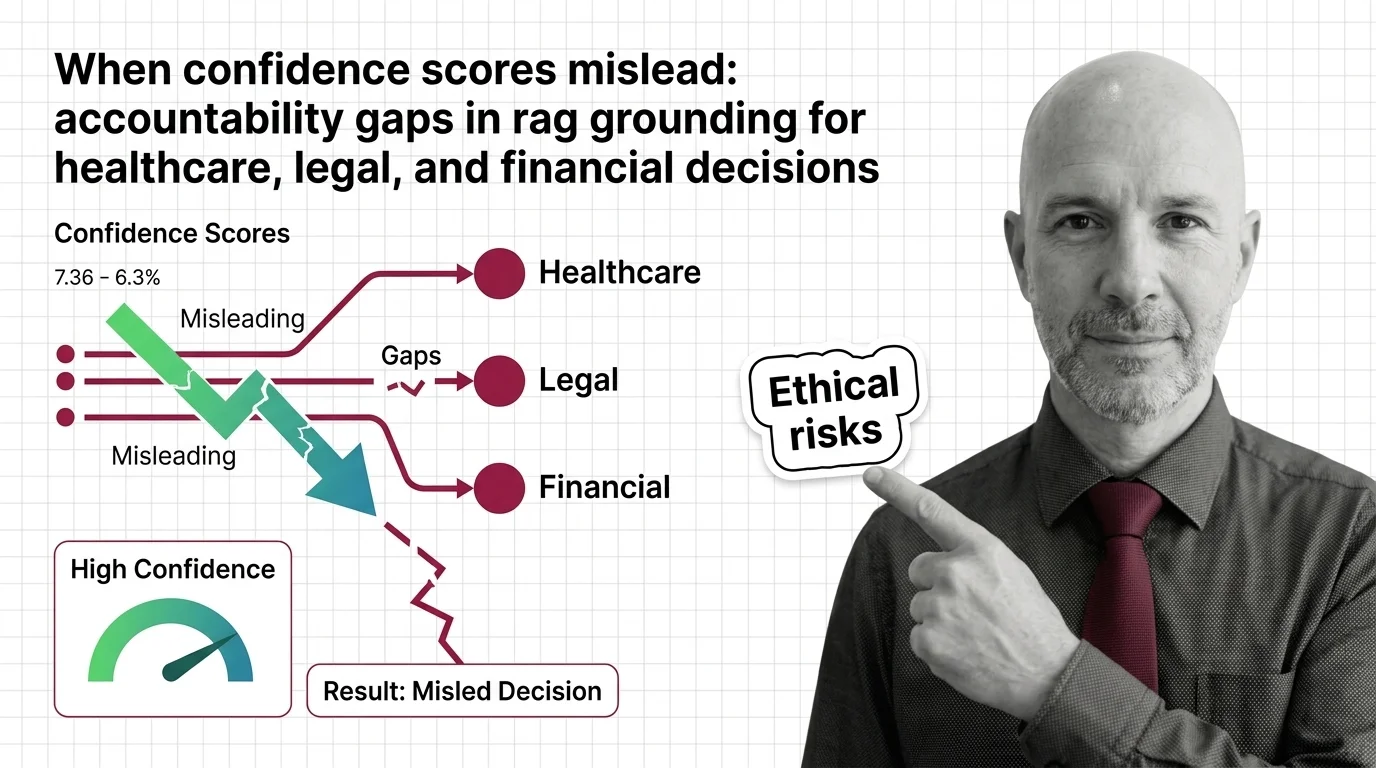

RAG faithfulness scores can hit 0.95 and still produce wrong answers. Why confidence numbers fail in healthcare, legal, and financial decisions.