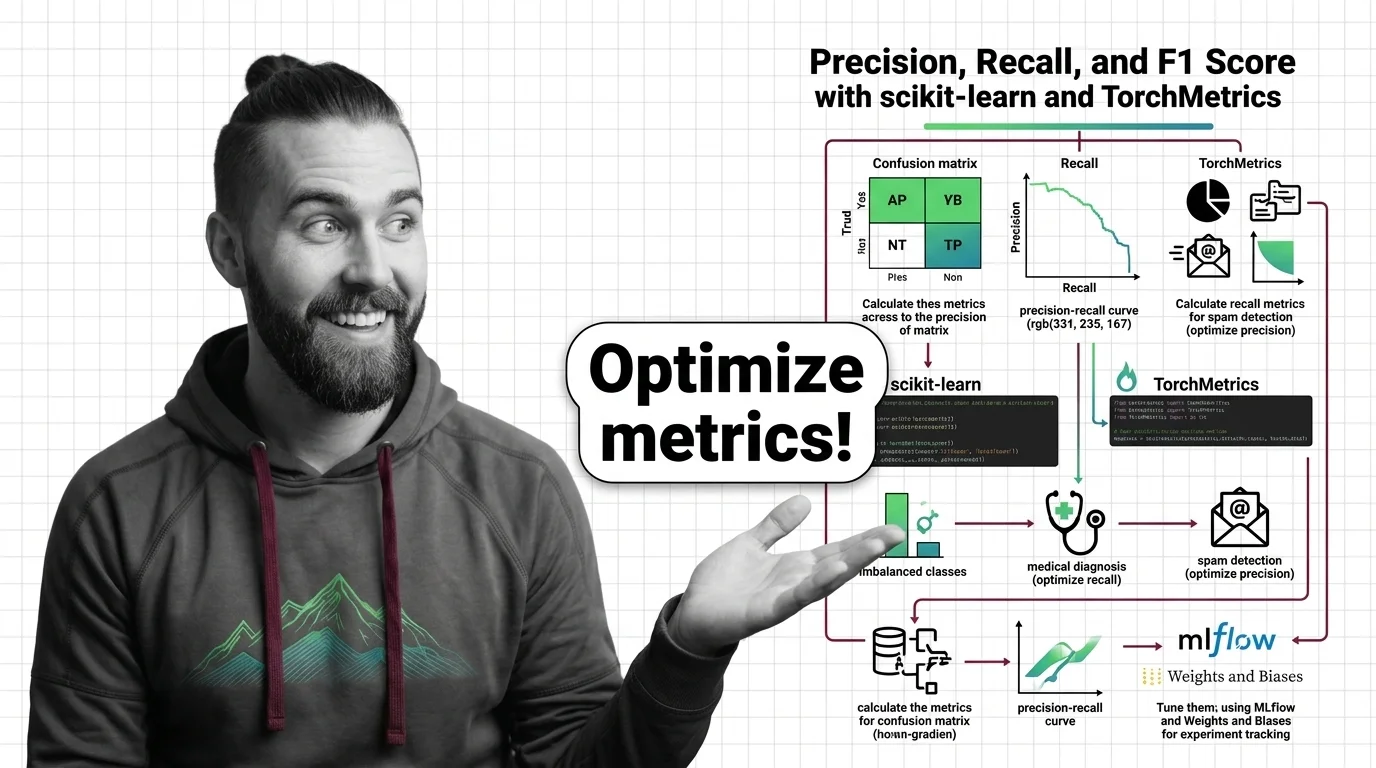

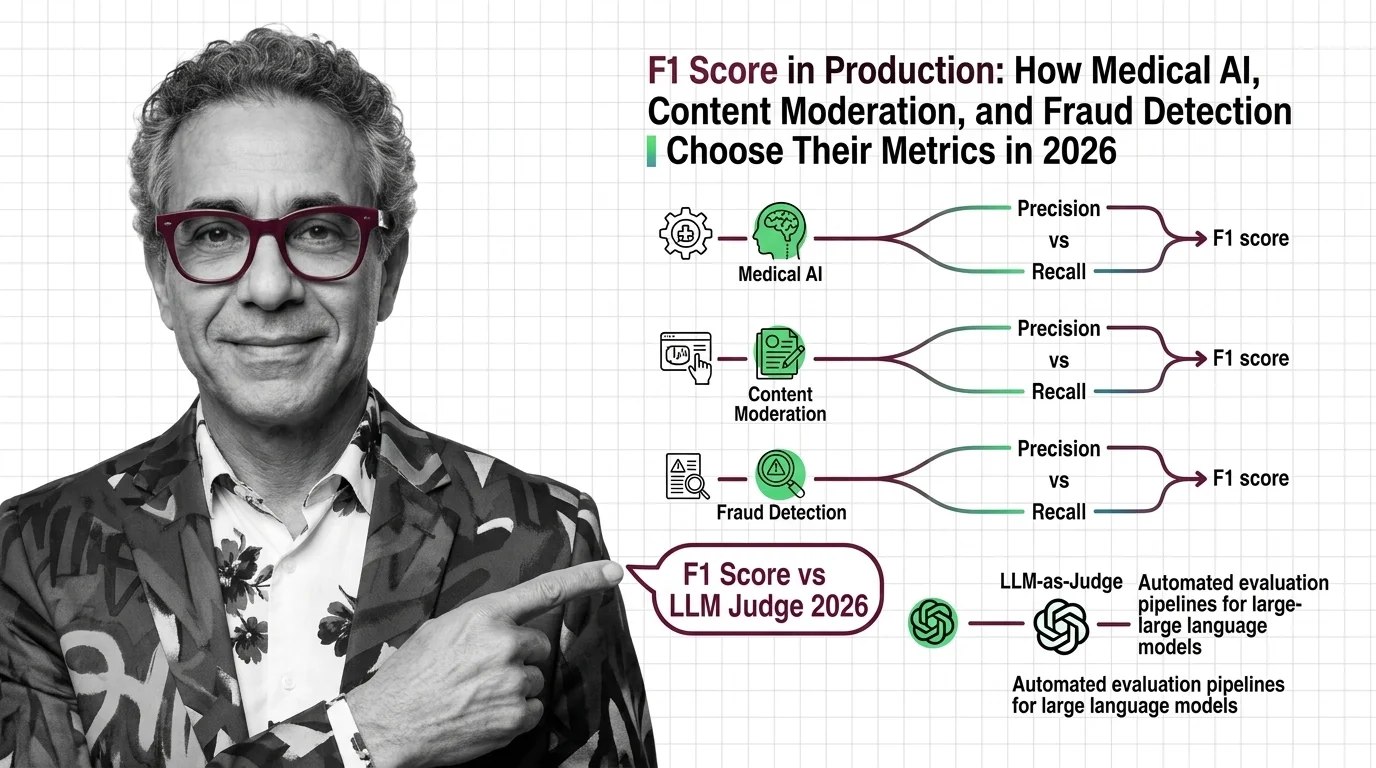

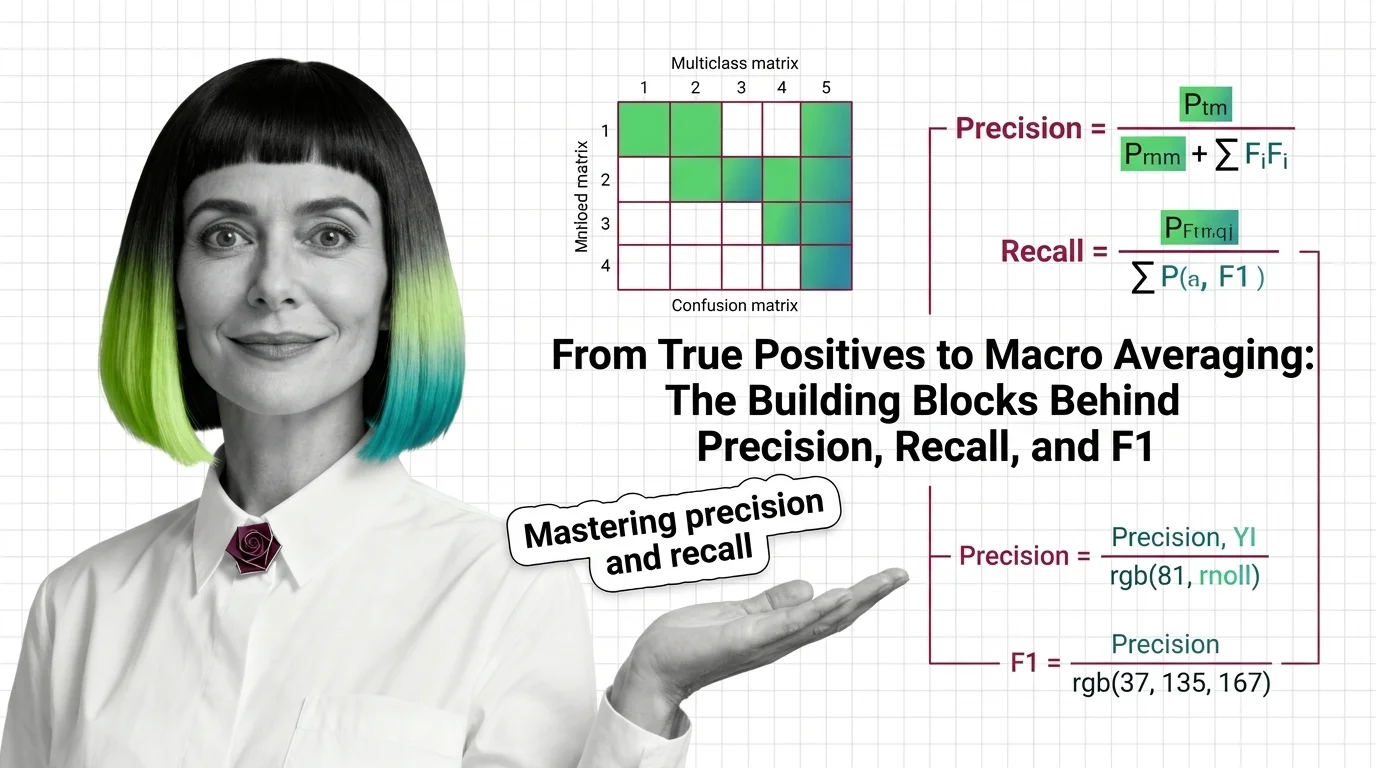

From True Positives to Macro Averaging: The Building Blocks Behind Precision, Recall, and F1

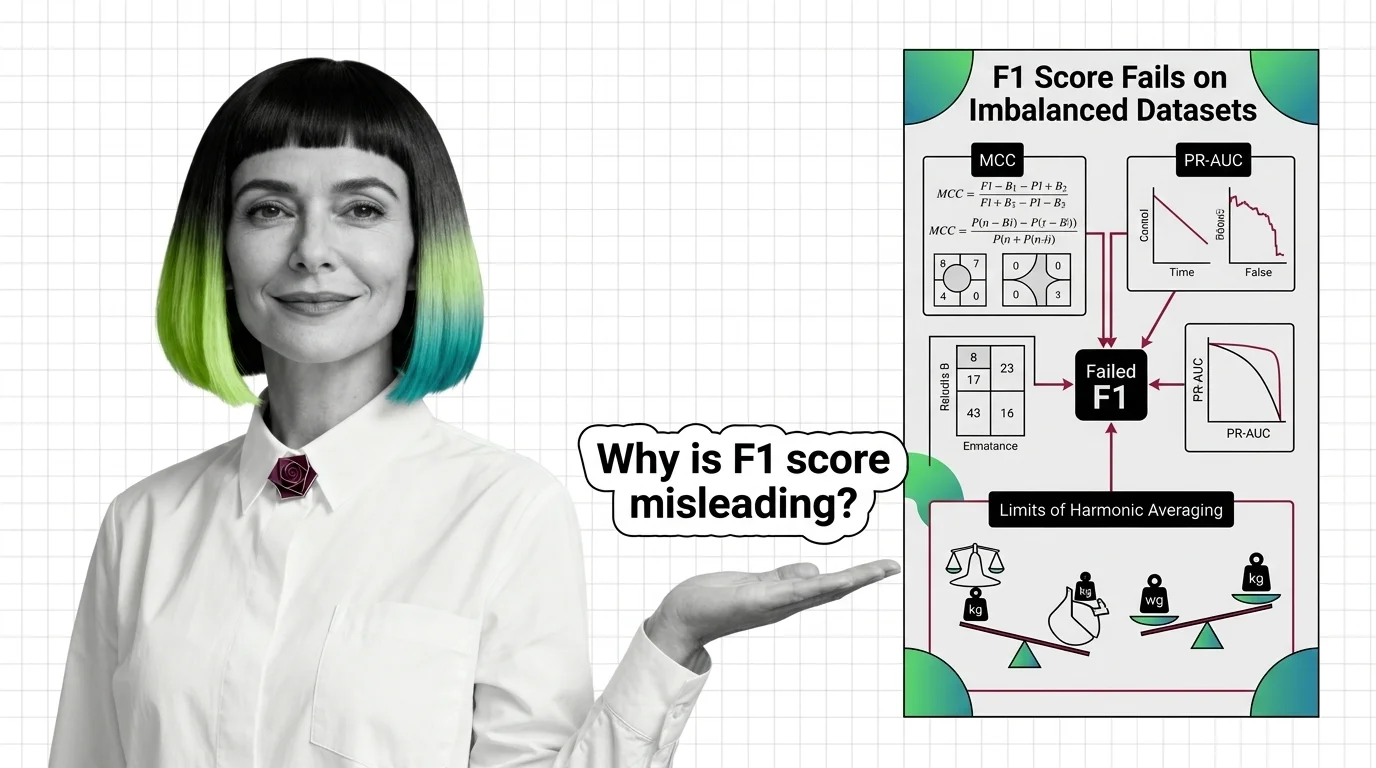

Precision, recall, and F1 score expose what accuracy alone can hide, especially when classes are imbalanced. Learn how true positives, confusion matrices, and macro averaging reveal how a classifier actually performs.
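The sketch below is a minimal illustration in plain Python with only the standard library. The function names (`confusion_matrix`, `per_class_scores`, `macro_average`) and the toy "always predict `neg`" classifier are hypothetical, not the article's own code. It builds a confusion matrix, reads each class's true positives, false positives, and false negatives from it, and then macro-averages the per-class scores. The example shows a classifier reaching 80% accuracy even though its macro F1 is below 0.5.

```python
from collections import Counter


def confusion_matrix(y_true, y_pred):
    """Count (true_label, predicted_label) pairs: rows are true labels, columns are predictions."""
    return Counter(zip(y_true, y_pred))


def per_class_scores(cm, labels):
    """One-vs-rest precision, recall, and F1 for each label, read off the confusion matrix."""
    scores = {}
    for c in labels:
        tp = cm[(c, c)]                                   # actually c, predicted c
        fp = sum(cm[(t, c)] for t in labels if t != c)    # predicted c, actually another class
        fn = sum(cm[(c, p)] for p in labels if p != c)    # actually c, predicted another class
        # Assumption: a 0/0 ratio is reported as 0.0 (a common convention).
        precision = tp / (tp + fp) if tp + fp else 0.0
        recall = tp / (tp + fn) if tp + fn else 0.0
        f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
        scores[c] = {"precision": precision, "recall": recall, "f1": f1}
    return scores


def macro_average(scores, metric):
    """Unweighted mean of one metric across classes, so every class counts equally."""
    return sum(s[metric] for s in scores.values()) / len(scores)


# Toy imbalanced data: 8 negatives, 2 positives.
y_true = ["neg"] * 8 + ["pos"] * 2
# Hypothetical classifier that always predicts the majority class.
y_pred = ["neg"] * 10

labels = sorted(set(y_true) | set(y_pred))
cm = confusion_matrix(y_true, y_pred)
scores = per_class_scores(cm, labels)
accuracy = sum(cm[(c, c)] for c in labels) / len(y_true)

print(f"accuracy = {accuracy:.2f}")                       # accuracy = 0.80
print(f"macro F1 = {macro_average(scores, 'f1'):.3f}")    # macro F1 = 0.444
```

Here the minority class `pos` gets zero recall and zero F1, which pulls macro F1 down to 4/9 while accuracy stays at 0.8. The sketch defines macro F1 as the mean of per-class F1 scores. Some texts instead take the harmonic mean of macro precision and macro recall, which can produce a different number.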