Precision, Recall, and F1 Score

Precision, recall, and F1 score are classification metrics used to evaluate machine learning models. Precision measures the fraction of predicted positives that are correct, recall measures the fraction of actual positives that the model finds, and F1 score balances the two as their harmonic mean. These metrics are central to evaluating tasks that involve categorical predictions, from spam detection to medical diagnosis.

Also known as: Precision and Recall, F1 Score, F-Measure
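As a minimal sketch of these definitions, the Python function below computes all three metrics from confusion-matrix counts. The function name `precision_recall_f1`, the spam-filter numbers, and the convention of returning 0.0 when a denominator is zero are illustrative assumptions, not part of any particular library.

```python
def precision_recall_f1(tp: int, fp: int, fn: int) -> tuple[float, float, float]:
    """Compute precision, recall, and F1 from confusion-matrix counts.

    tp: true positives, fp: false positives, fn: false negatives.
    Returning 0.0 for an undefined ratio is an assumed convention.
    """
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    # F1 is the harmonic mean of precision and recall.
    if precision + recall:
        f1 = 2 * precision * recall / (precision + recall)
    else:
        f1 = 0.0
    return precision, recall, f1


# Hypothetical spam filter: 40 spam messages caught, 10 legitimate
# messages wrongly flagged, 20 spam messages missed.
p, r, f1 = precision_recall_f1(tp=40, fp=10, fn=20)
print(f"precision={p:.3f} recall={r:.3f} f1={f1:.3f}")
# precision=0.800 recall=0.667 f1=0.727
```

In this made-up case the filter is right 80% of the time when it flags a message, yet it finds only two thirds of the spam, and F1 sits between the two, pulled toward the lower value.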

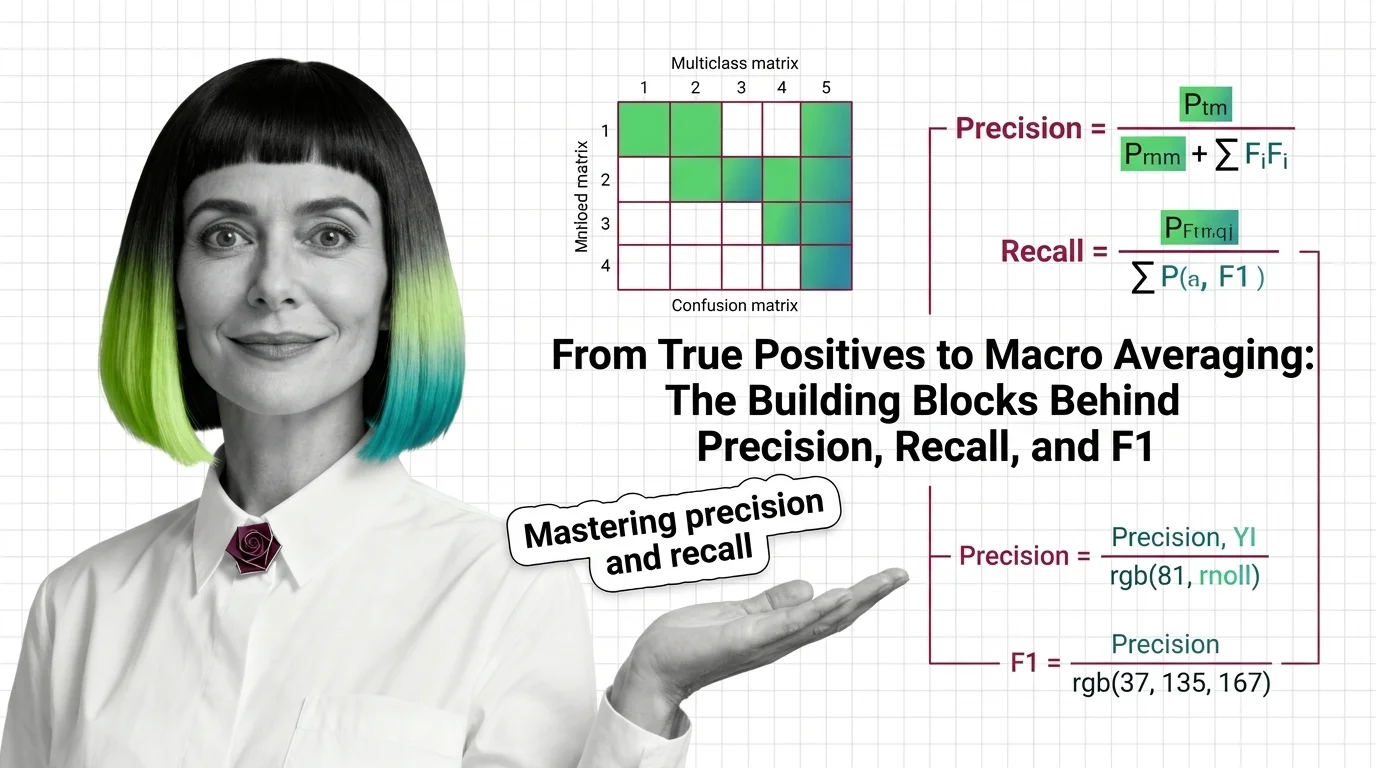

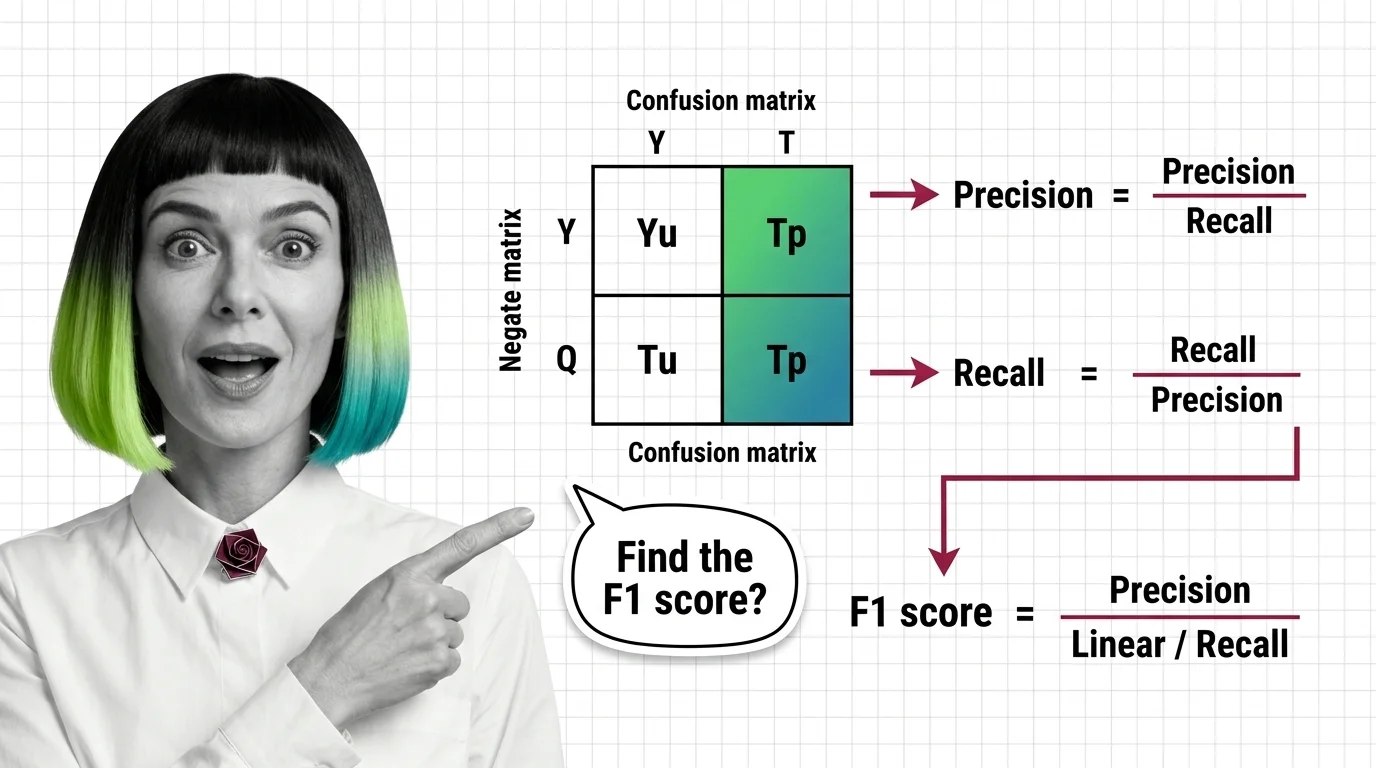

Understand the Fundamentals

Precision, recall, and F1 score quantify different facets of classification performance. These articles explain why no single metric tells the full story and how the confusion matrix connects them.

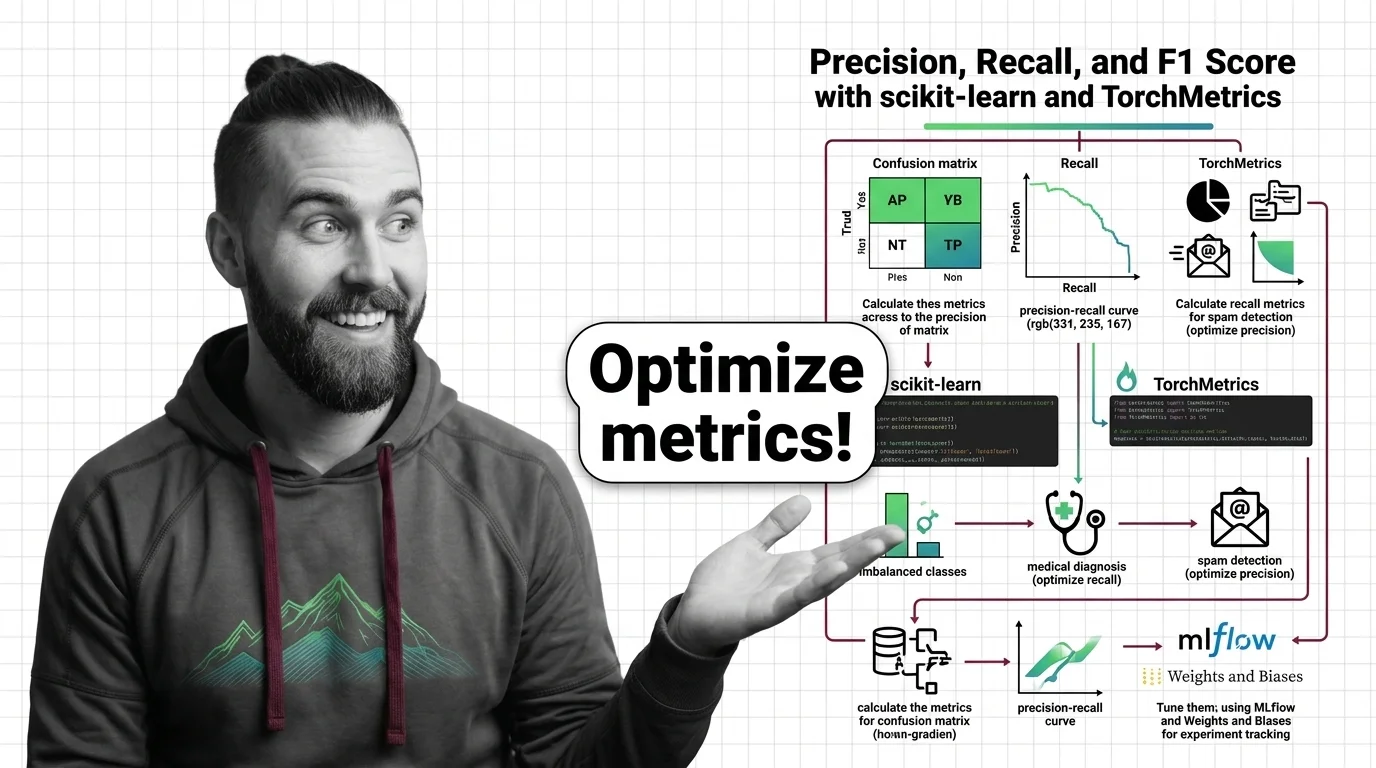

Build with Precision, Recall, and F1 Score

The practical guides cover choosing between precision and recall for your use case, calculating F1 variants in code, and tuning classification thresholds to match real-world trade-offs.
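To make the threshold trade-off concrete, here is a small sketch that sweeps a decision threshold over model scores and reports precision, recall, and F1 at each setting. The labels, scores, thresholds, and helper names (`confusion_counts`, `sweep_thresholds`) are made up for illustration, and the code assumes binary labels where 1 is the positive class.

```python
def confusion_counts(y_true, scores, threshold):
    """Count TP, FP, FN when predicting positive for score >= threshold."""
    tp = fp = fn = 0
    for label, score in zip(y_true, scores):
        predicted_positive = score >= threshold
        if predicted_positive and label == 1:
            tp += 1
        elif predicted_positive and label == 0:
            fp += 1
        elif not predicted_positive and label == 1:
            fn += 1
    return tp, fp, fn


def sweep_thresholds(y_true, scores, thresholds):
    """Return (threshold, precision, recall, f1) for each threshold."""
    rows = []
    for t in thresholds:
        tp, fp, fn = confusion_counts(y_true, scores, t)
        # Undefined ratios fall back to 0.0 (an assumed convention).
        precision = tp / (tp + fp) if (tp + fp) else 0.0
        recall = tp / (tp + fn) if (tp + fn) else 0.0
        f1 = 2 * tp / (2 * tp + fp + fn) if tp else 0.0
        rows.append((t, precision, recall, f1))
    return rows


# Made-up labels and scores, for illustration only.
y_true = [1, 1, 1, 0, 1, 0, 0, 0]
scores = [0.95, 0.80, 0.65, 0.60, 0.40, 0.30, 0.20, 0.05]

for t, p, r, f in sweep_thresholds(y_true, scores, [0.3, 0.5, 0.7]):
    print(f"threshold={t:.1f} precision={p:.3f} recall={r:.3f} f1={f:.3f}")
# threshold=0.3 precision=0.667 recall=1.000 f1=0.800
# threshold=0.5 precision=0.750 recall=0.750 f1=0.750
# threshold=0.7 precision=1.000 recall=0.500 f1=0.667
```

On this toy data, raising the threshold trades recall for precision. Which setting is right depends on whether a missed positive or a false alarm costs more in your use case.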

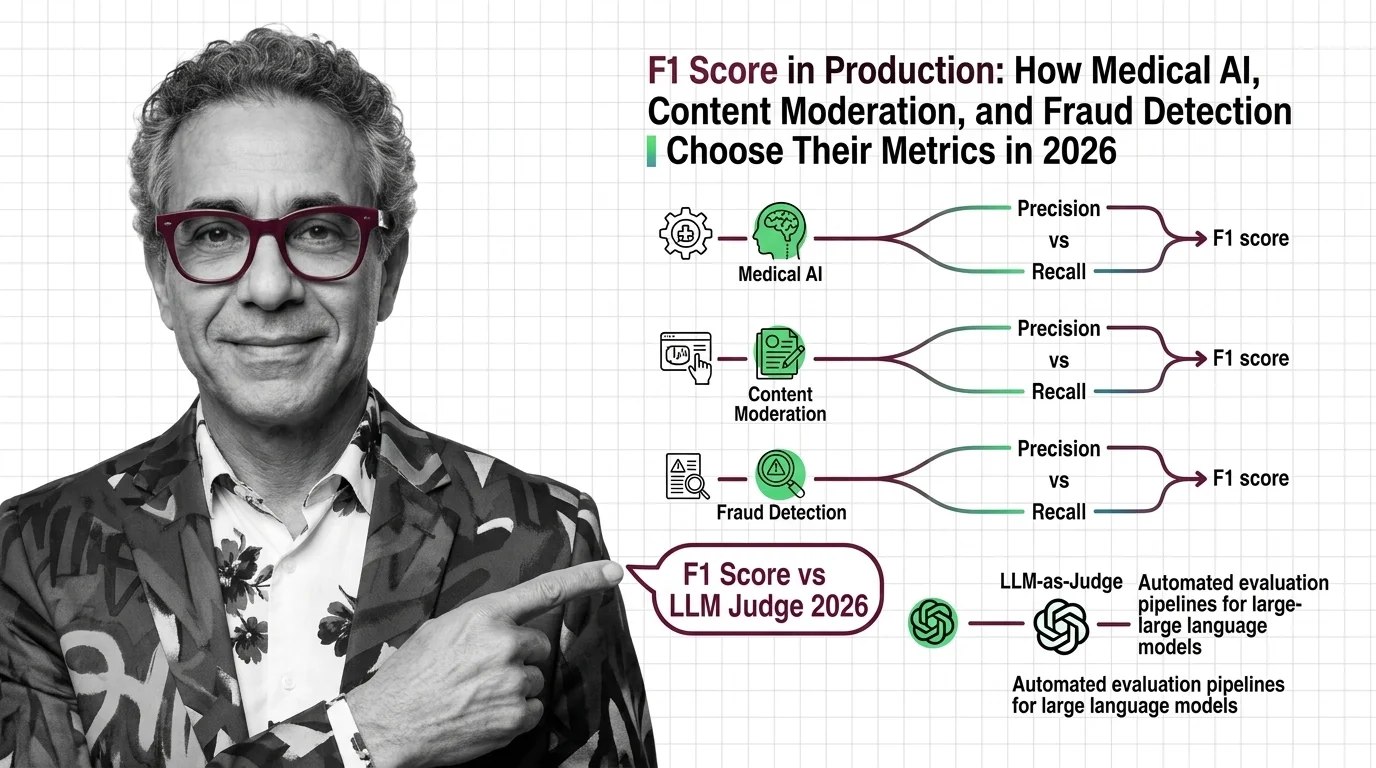

What's Changing in 2026

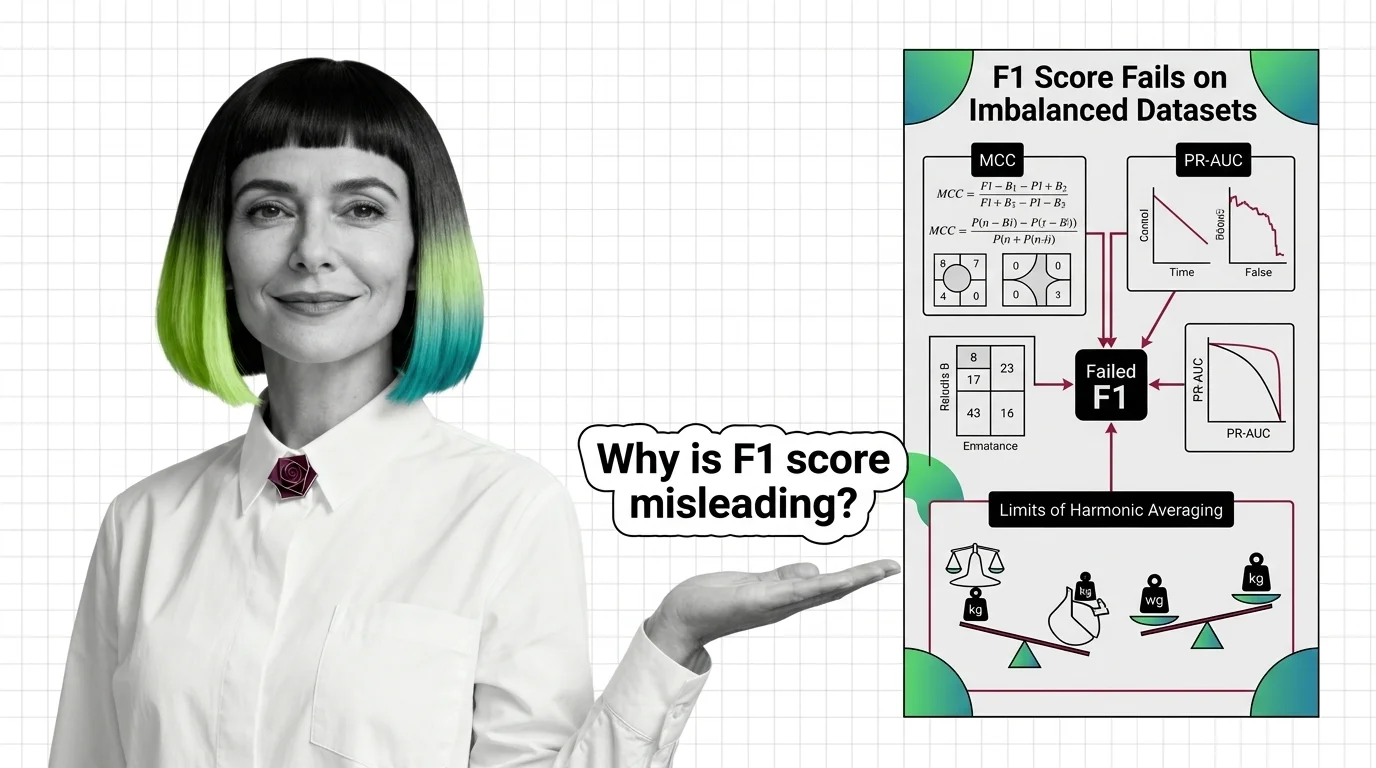

Classification metrics evolve as models face harder tasks and messier data. Tracking how the field handles imbalanced datasets and multi-class scoring helps keep your evaluation strategy current.

Updated March 2026

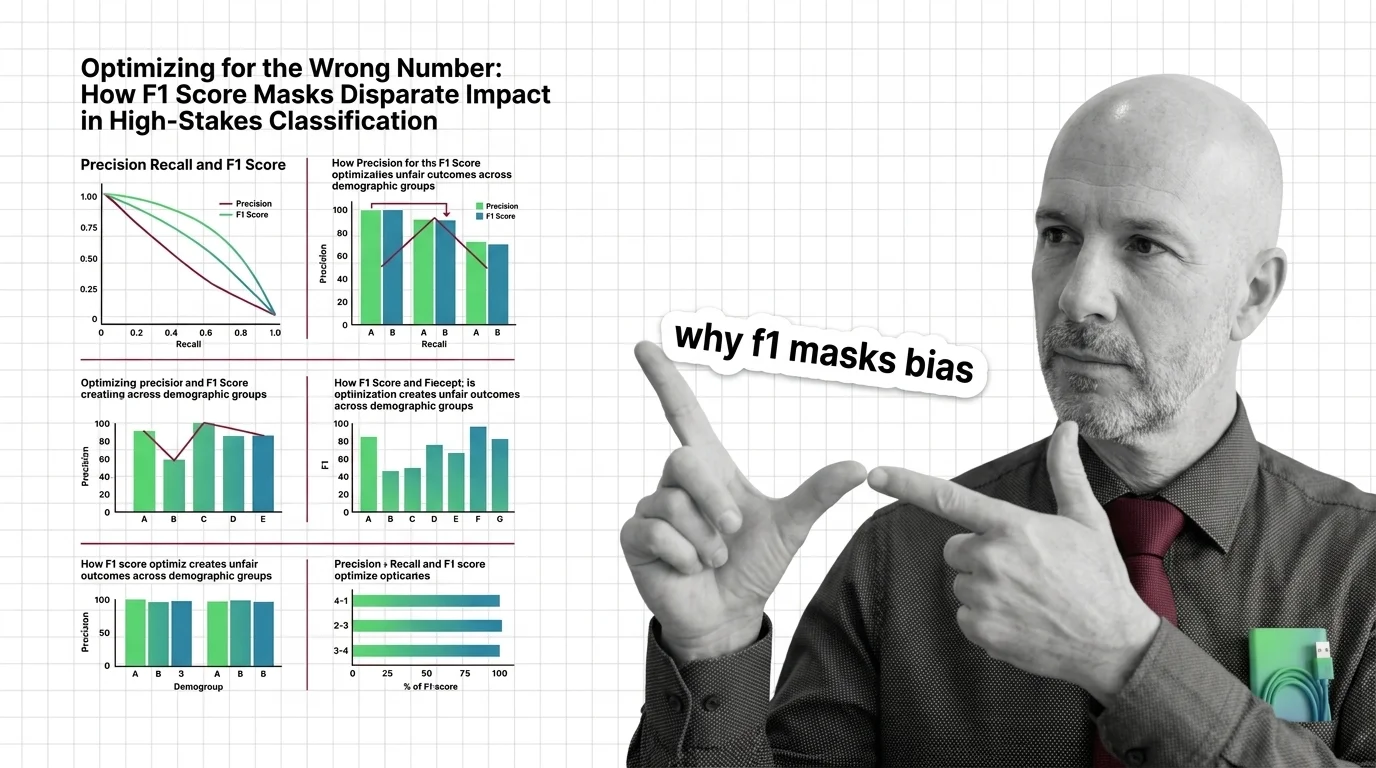

Risks and Considerations

Optimizing for F1 score without examining subgroup performance can hide bias and cause real harm. These articles explore what goes wrong when a single aggregate number drives high-stakes decisions.
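One way to see how an aggregate score can mask a subgroup problem is to compute F1 per group alongside the overall value. The sketch below does this on fabricated data; the function name `f1_by_group`, the group labels "A" and "B", and the 0.0 fallback for an undefined F1 are all assumptions made for illustration.

```python
from collections import defaultdict


def f1_by_group(y_true, y_pred, groups):
    """Compute F1 separately for each group label (binary labels, 1 = positive)."""
    counts = defaultdict(lambda: {"tp": 0, "fp": 0, "fn": 0})
    for label, pred, group in zip(y_true, y_pred, groups):
        c = counts[group]  # creates the entry even if only true negatives occur
        if pred == 1 and label == 1:
            c["tp"] += 1
        elif pred == 1 and label == 0:
            c["fp"] += 1
        elif pred == 0 and label == 1:
            c["fn"] += 1
    result = {}
    for group, c in counts.items():
        denom = 2 * c["tp"] + c["fp"] + c["fn"]
        # F1 = 2TP / (2TP + FP + FN); 0.0 when undefined (assumed convention).
        result[group] = 2 * c["tp"] / denom if denom else 0.0
    return result


# Fabricated example: six cases from group A, four from group B.
y_true = [1, 1, 1, 1, 0, 0, 1, 1, 0, 0]
y_pred = [1, 1, 1, 1, 0, 0, 1, 0, 1, 0]
groups = ["A"] * 6 + ["B"] * 4

overall = f1_by_group(y_true, y_pred, ["all"] * len(y_true))["all"]
per_group = f1_by_group(y_true, y_pred, groups)
print(f"overall F1={overall:.3f}")                               # overall F1=0.833
print({g: round(score, 3) for g, score in per_group.items()})  # {'A': 1.0, 'B': 0.5}
```

Here the overall F1 of about 0.83 looks acceptable, while group B scores only 0.5. A per-group breakdown like this is a starting point for spotting such gaps, not a complete fairness audit.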