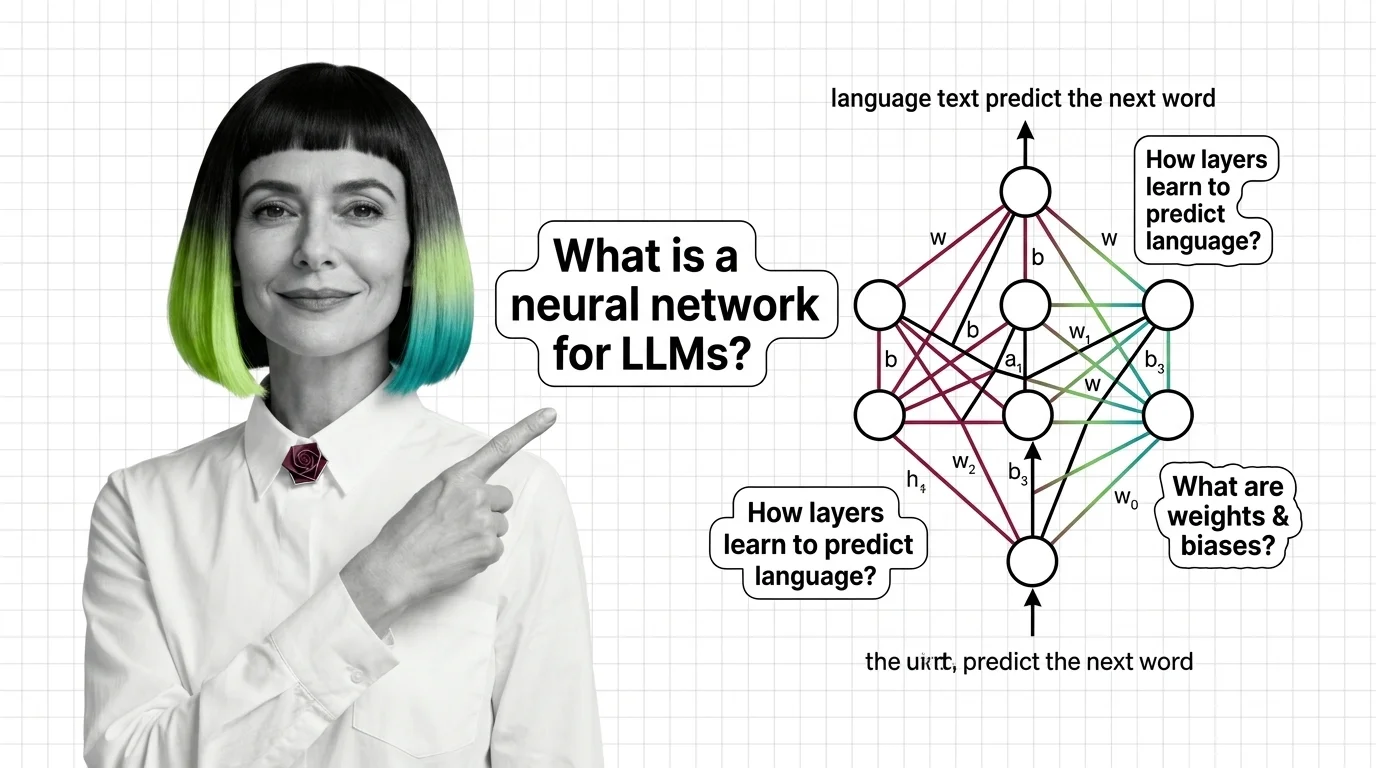

Neural Network Basics for LLMs

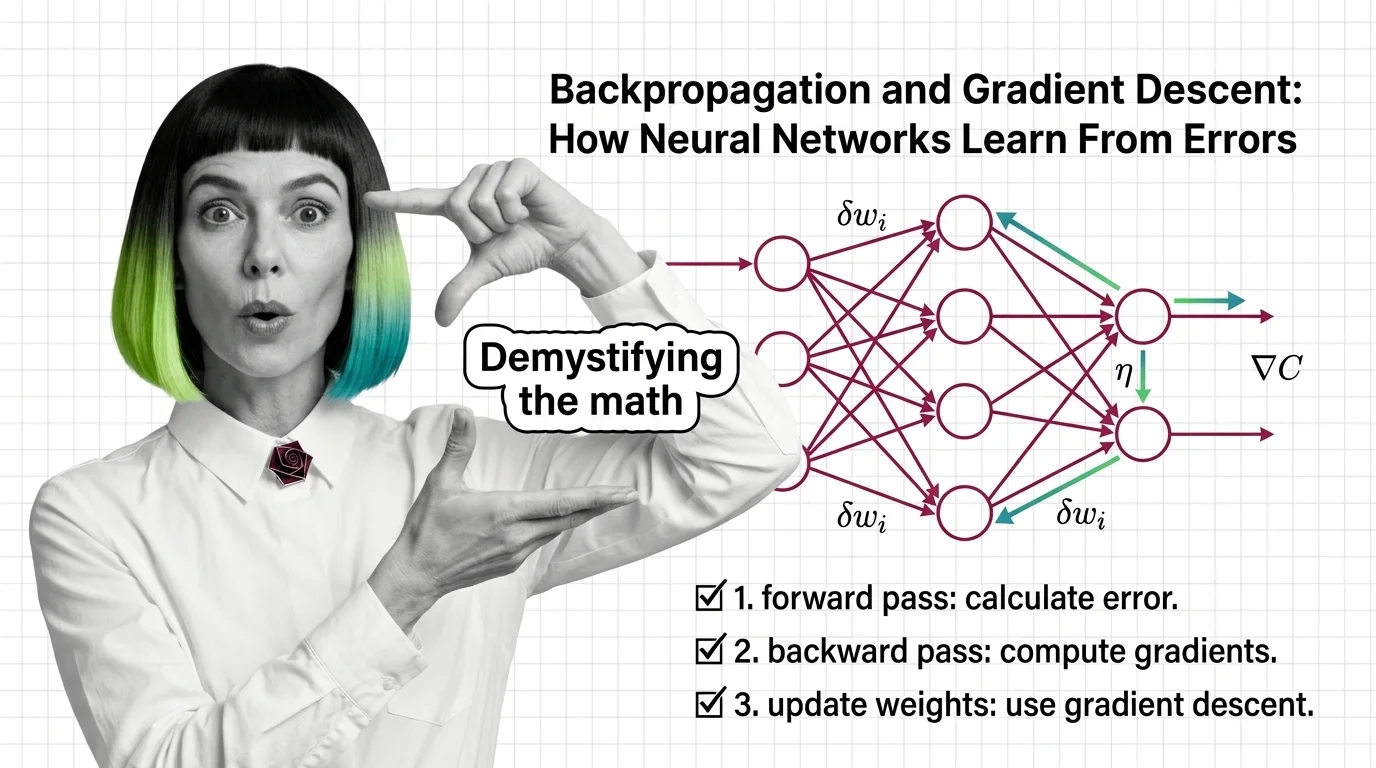

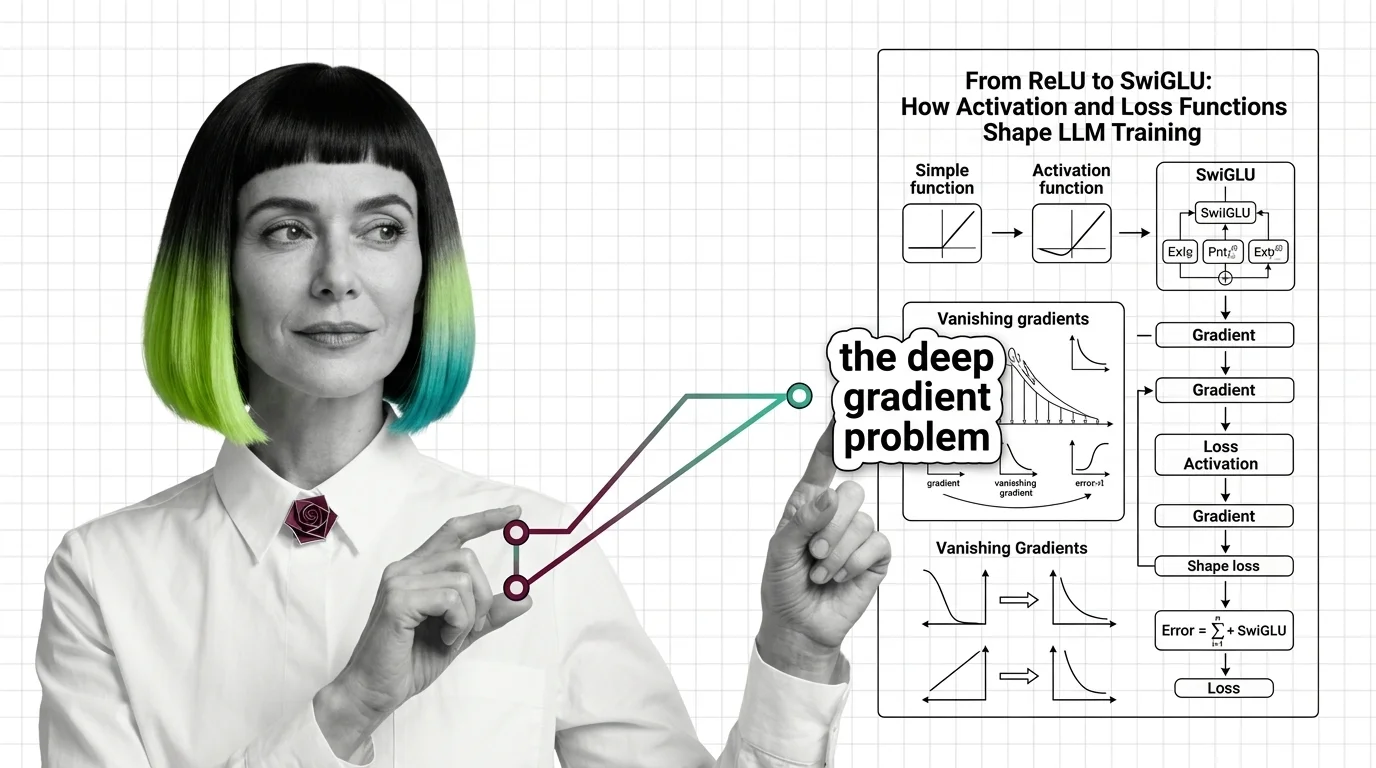

Neural networks are computational systems that learn patterns from data by adjusting internal parameters, called weights, across interconnected layers. For large language models, the key building blocks are embedding layers that turn tokens into numerical vectors; backpropagation, which propagates errors backward to work out how much each weight contributed to them; gradient descent, which uses those gradients to update the weights and reduce the error; and activation functions, which introduce the non-linearity that lets stacked layers model more than linear relationships. Also known as: NN Fundamentals.
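To make those building blocks concrete, here is a minimal sketch in plain Python. It is a toy illustration, not how production LLMs are trained: the network has a single input, two hidden `tanh` units, and one output, and every name, starting weight, and the learning rate of 0.1 is an assumption chosen for the example.

```python
import math

def forward(params, x):
    """Forward pass: one hidden layer of tanh units, then a linear output."""
    w1, b1, w2, b2 = params
    # Activation function: tanh makes the hidden layer non-linear.
    h = [math.tanh(w * x + b) for w, b in zip(w1, b1)]
    y_hat = sum(v * hi for v, hi in zip(w2, h)) + b2
    return h, y_hat

def loss(params, x, y):
    """Squared error between the prediction and the target."""
    _, y_hat = forward(params, x)
    return 0.5 * (y_hat - y) ** 2

def backward(params, x, y):
    """Backpropagation: apply the chain rule from the output back to each weight."""
    w1, b1, w2, b2 = params
    h, y_hat = forward(params, x)
    d_out = y_hat - y                      # dLoss/dy_hat
    g_w2 = [d_out * hi for hi in h]        # gradients for output weights
    g_b2 = d_out
    # Push the error through tanh, whose derivative is 1 - tanh^2.
    d_pre = [d_out * v * (1 - hi ** 2) for v, hi in zip(w2, h)]
    g_w1 = [d * x for d in d_pre]          # gradients for hidden weights
    g_b1 = d_pre
    return g_w1, g_b1, g_w2, g_b2

def sgd_step(params, grads, lr):
    """Gradient descent: move each parameter a small step against its gradient."""
    w1, b1, w2, b2 = params
    g_w1, g_b1, g_w2, g_b2 = grads
    return (
        [w - lr * g for w, g in zip(w1, g_w1)],
        [b - lr * g for b, g in zip(b1, g_b1)],
        [w - lr * g for w, g in zip(w2, g_w2)],
        b2 - lr * g_b2,
    )

# Hypothetical starting weights and a single training example.
params = ([0.5, -0.3], [0.1, 0.2], [0.7, -0.4], 0.0)
x, y = 1.0, 0.5

initial_loss = loss(params, x, y)
for _ in range(50):
    params = sgd_step(params, backward(params, x, y), lr=0.1)
final_loss = loss(params, x, y)

print(f"loss before: {initial_loss:.6f}, after: {final_loss:.6f}")
```

Each pass through the loop runs the same cycle a full-scale model does: predict, measure the error, propagate it backward, and nudge the weights. Real LLM training differs mainly in scale and tooling, with gradients computed automatically over billions of weights rather than by hand.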

Understand the Fundamentals

Neural networks underpin every large language model, yet most explanations skip the mechanics that matter. These explainers trace how layers, weights, and gradients actually produce coherent text.

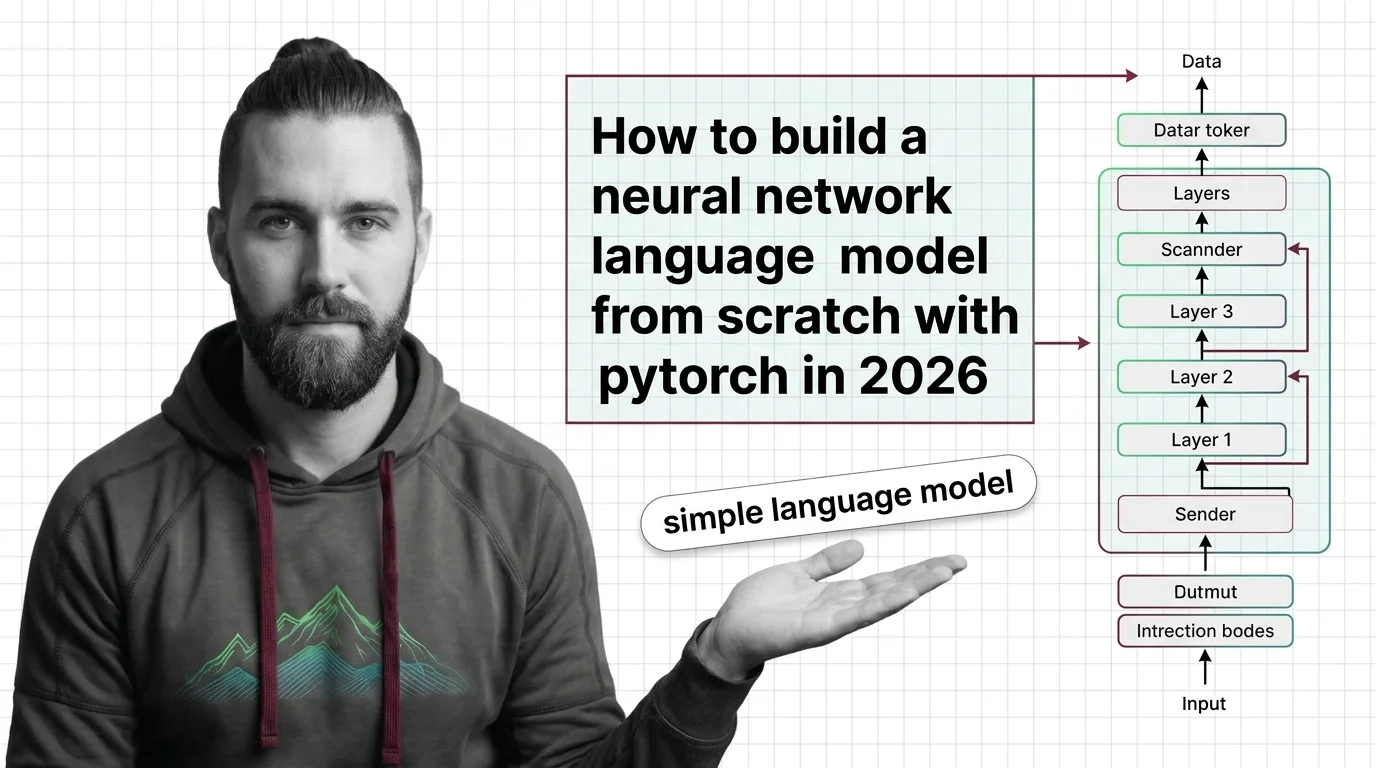

Build with Neural Network Basics for LLMs

These guides walk you through building a working language model from scratch, confronting real trade-offs in architecture choices, training stability, and compute constraints along the way.

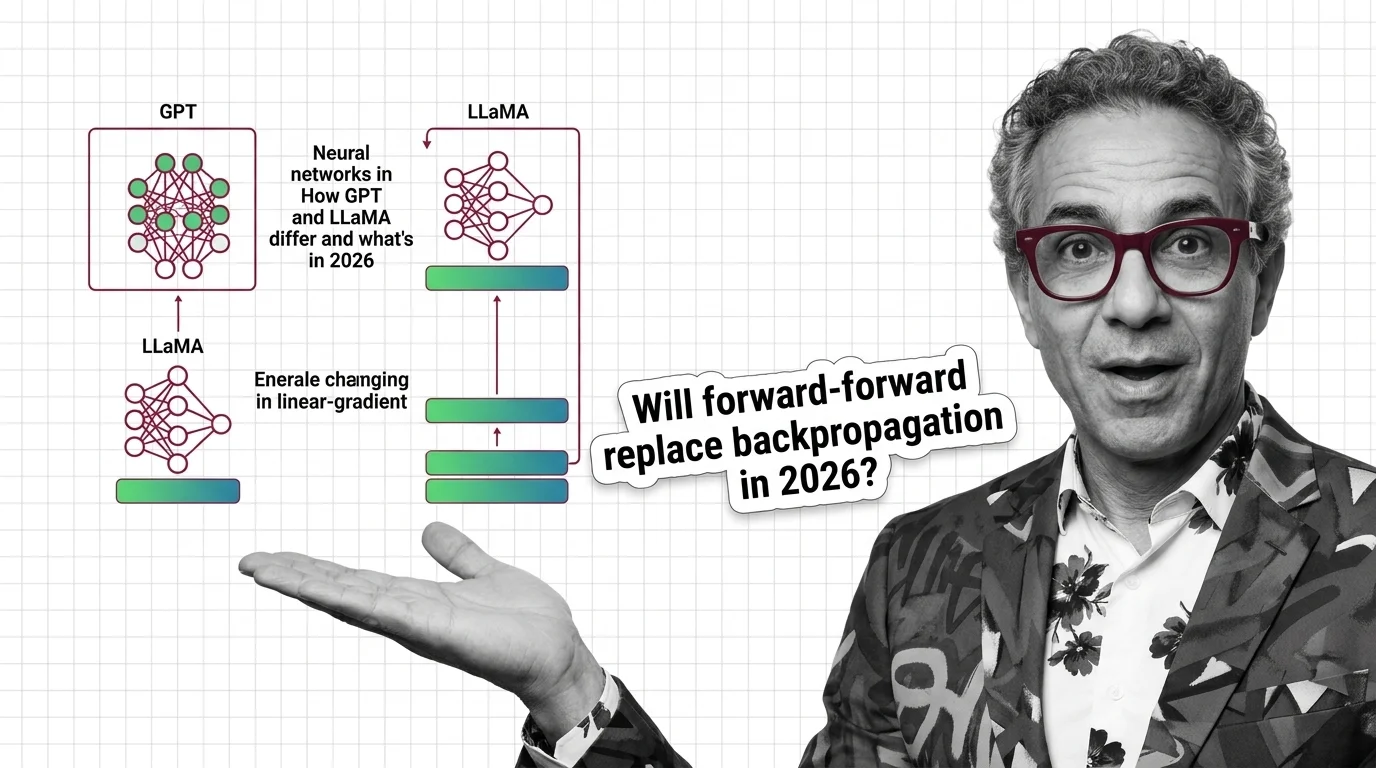

What's Changing in 2026

Neural network architectures are evolving rapidly, with new activation functions and training techniques reshaping what language models can do. Staying current means understanding which shifts matter for your stack.

Updated April 2026

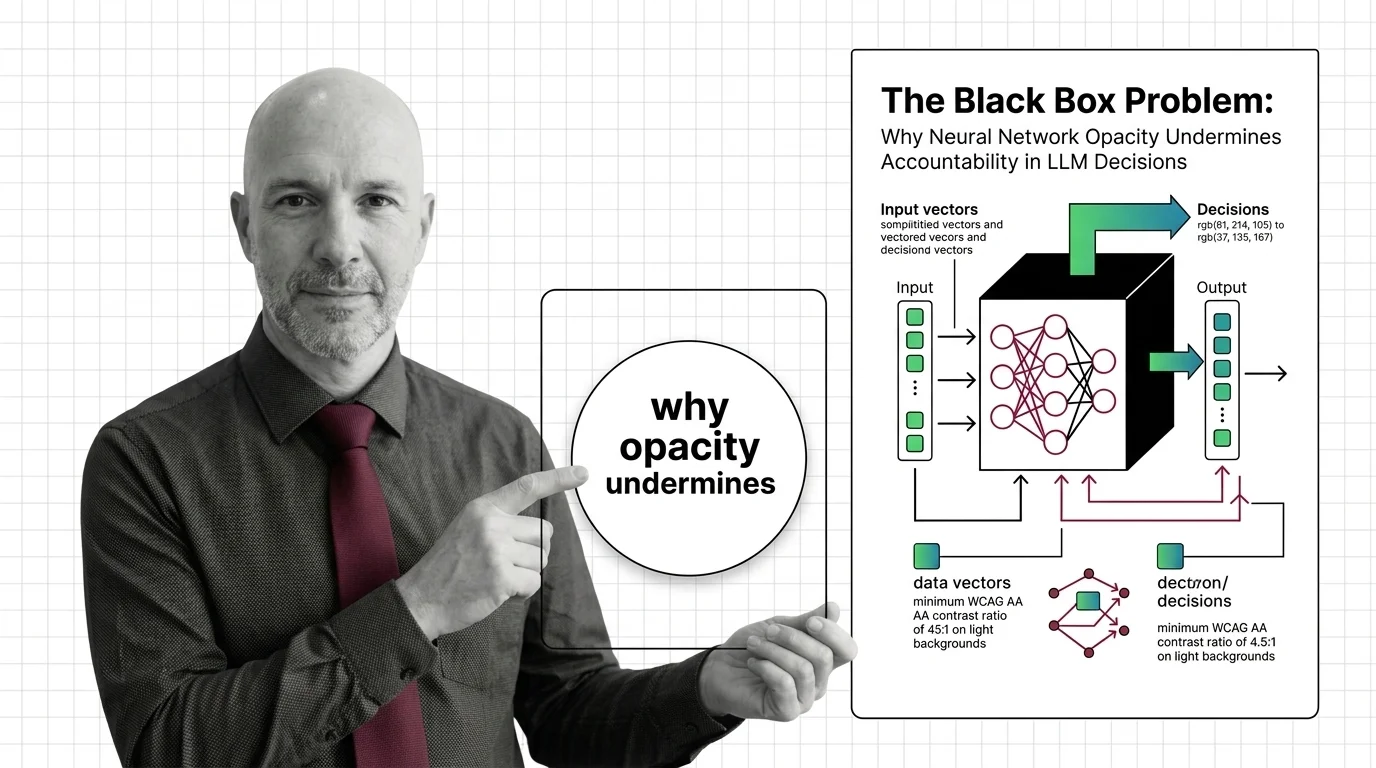

Risks and Considerations

Neural networks operate as opaque systems: tracing a specific output back to a specific learned pattern remains an unsolved problem. These pieces examine accountability gaps and the limits of current interpretability methods.