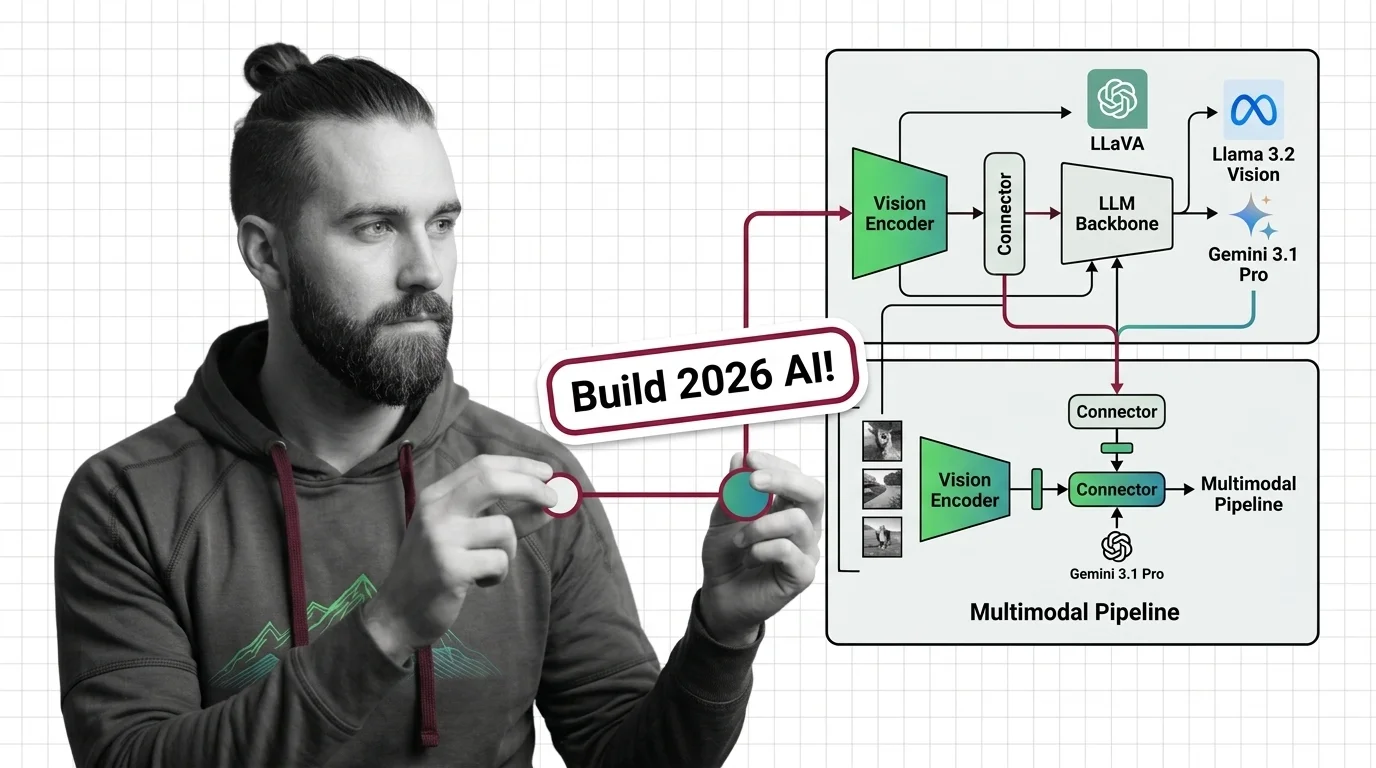

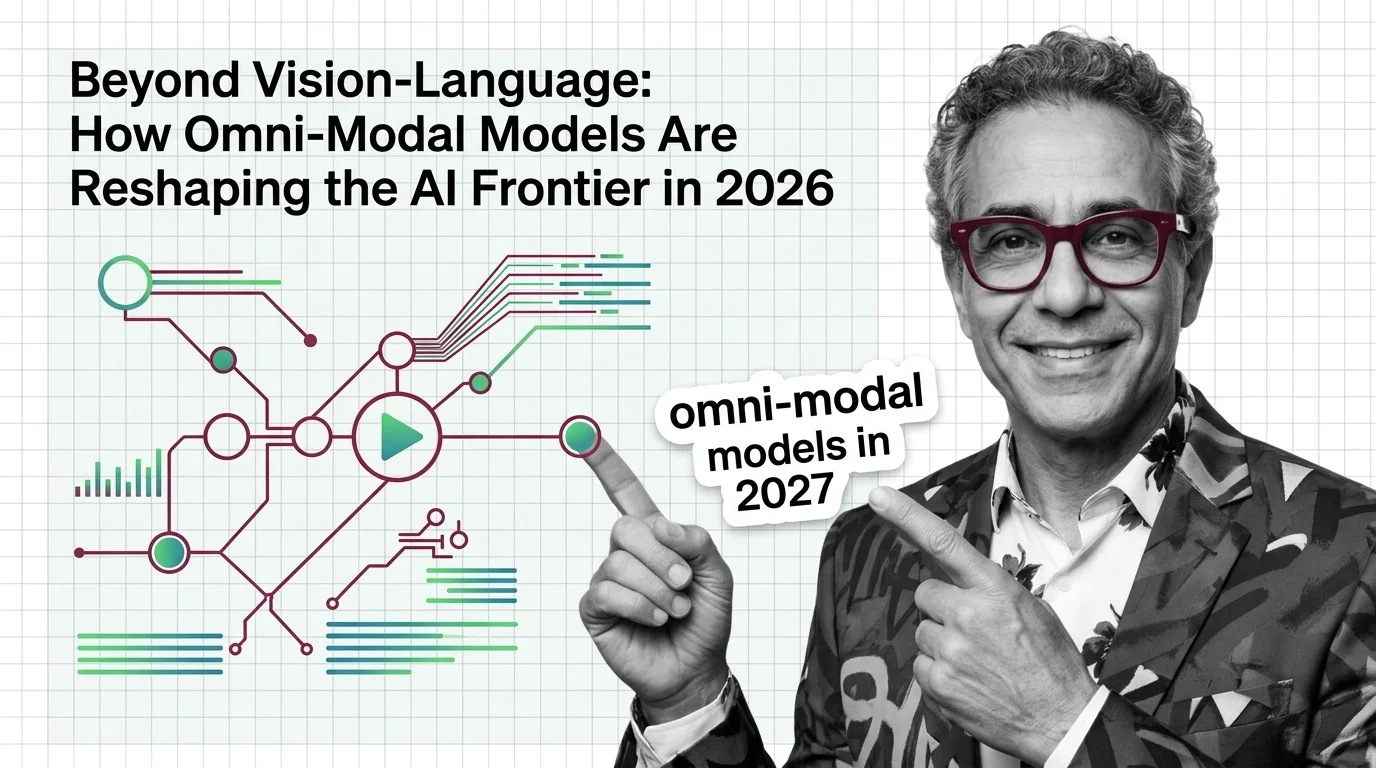

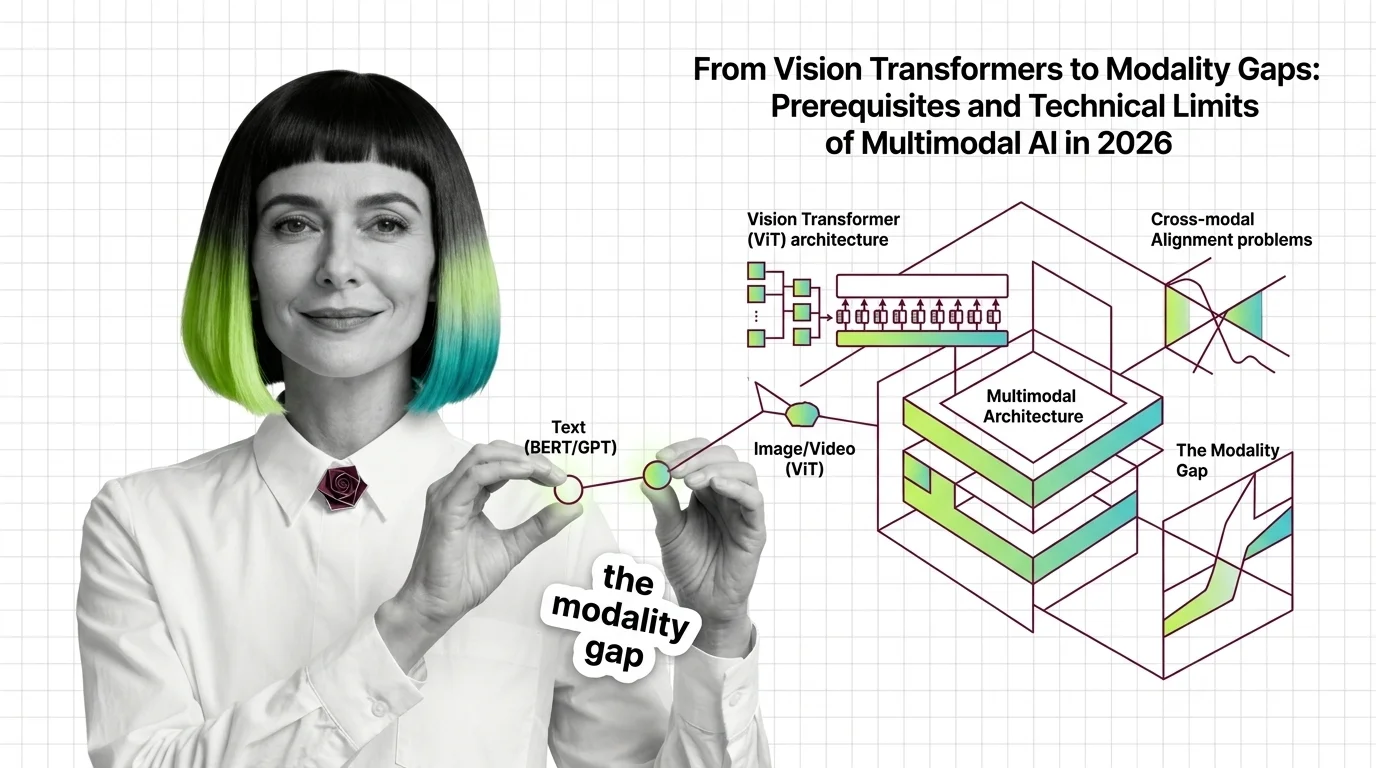

From Vision Transformers to Modality Gaps: Prerequisites and Technical Limits of Multimodal AI in 2026

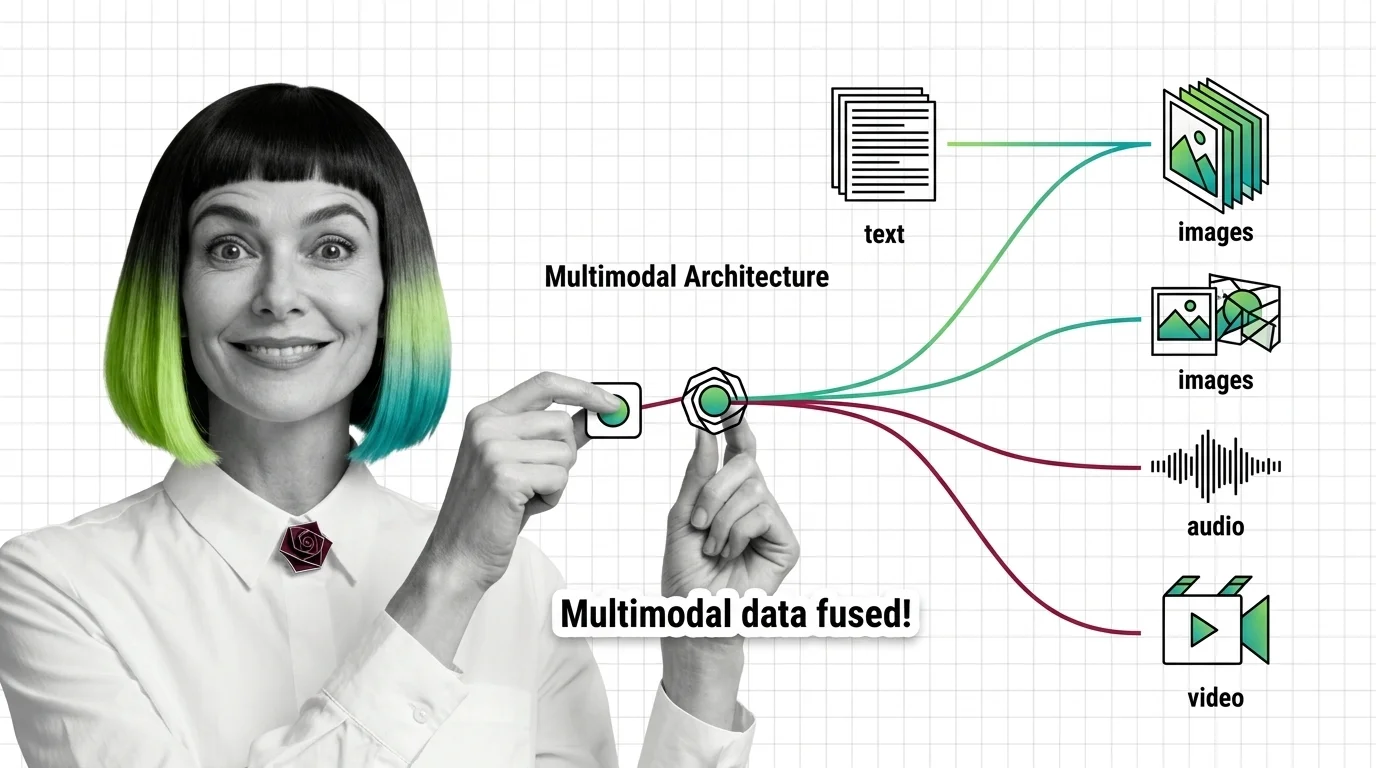

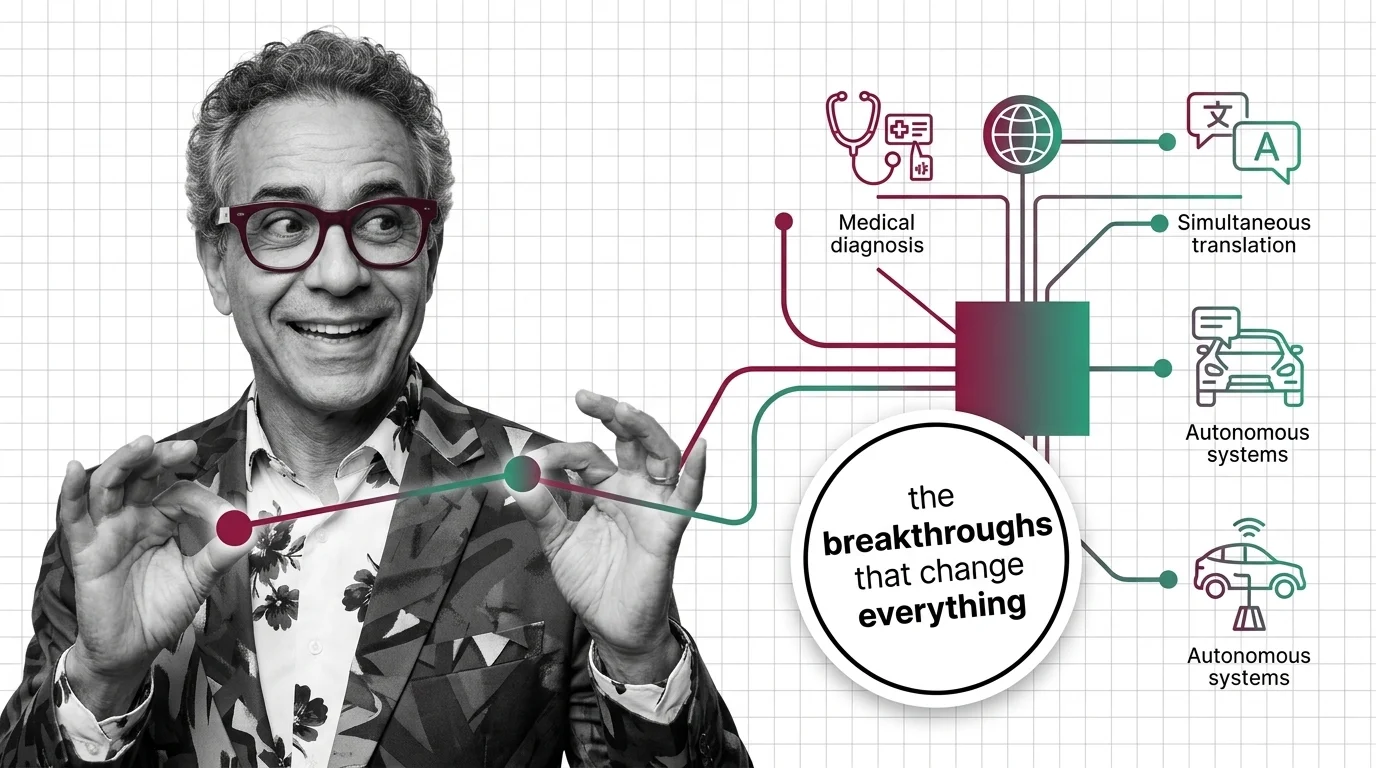

Before multimodal AI can work, three things define its limits: vision transformers, modality gaps, and grounding decay. Together they explain the mechanics of why 2026 models still hallucinate.