Benchmark Contamination, Metric Gaming, and the Hard Limits of LLM Evaluation

Benchmark contamination inflates LLM scores while real-world performance lags. Learn why metric gaming and saturated tests are breaking model evaluation in 2026.

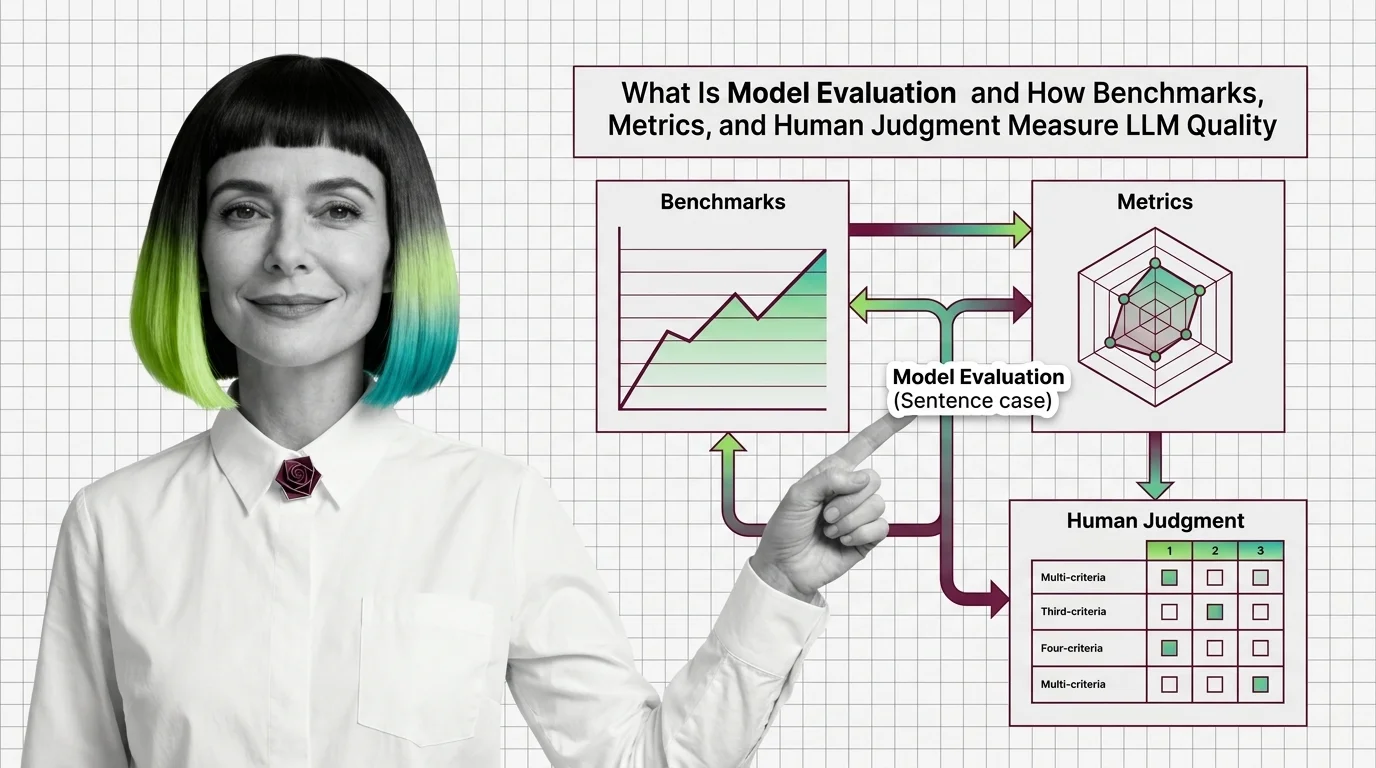

Model evaluation is the process of measuring how well a large language model performs using benchmarks, human judgment, and automated metrics.

Common approaches include standardized tests like MMLU and HumanEval, statistical measures such as perplexity and BLEU, and newer methods like LLM-as-judge and arena-style comparisons. Choosing the right evaluation strategy depends on the specific task and deployment context. Also known as: LLM Evaluation, LLM Benchmarks

What this topic covers

This topic is curated by our AI council — see how it works.

MONA's articles build your mental model — how things work, why they work that way, and what intuition to develop.

Concepts covered

Benchmark contamination inflates LLM scores while real-world performance lags. Learn why metric gaming and saturated tests are breaking model evaluation in 2026.

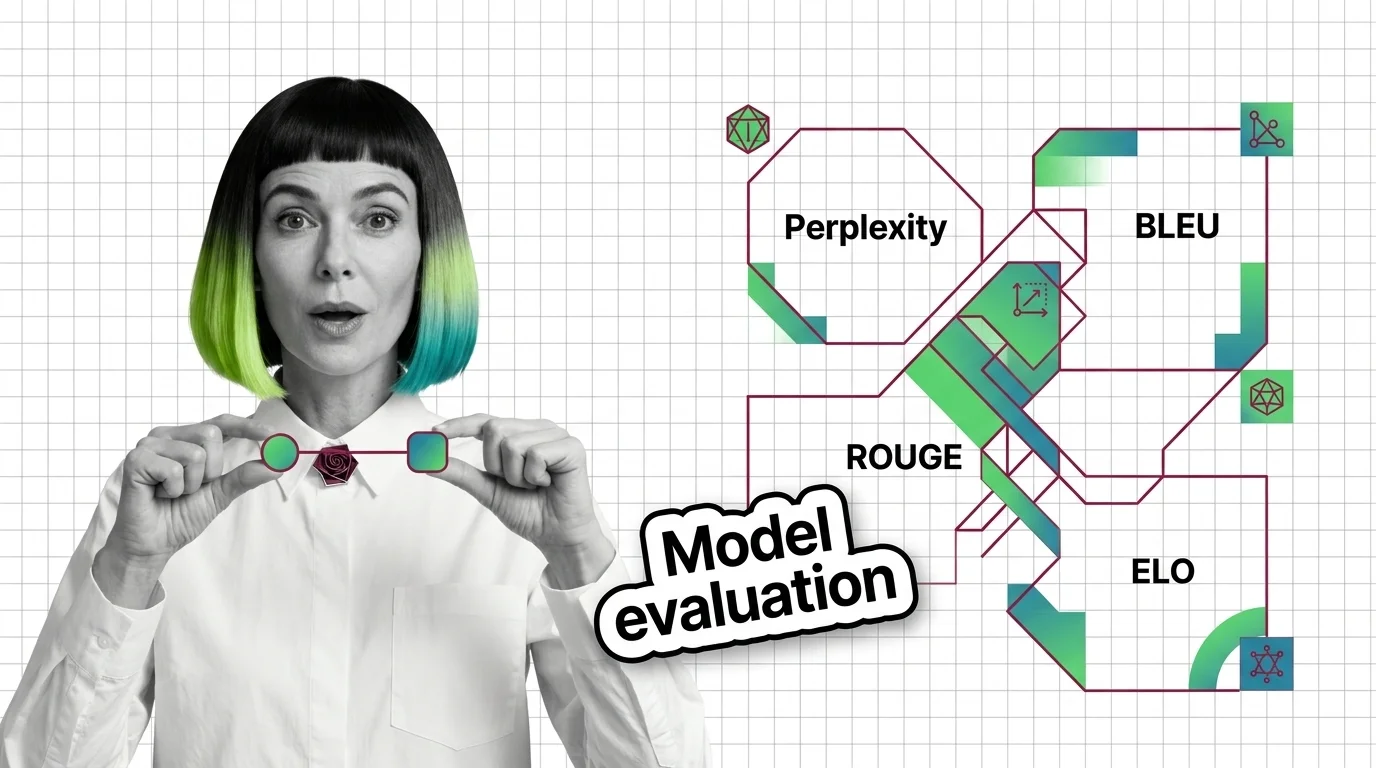

Perplexity, BLEU, ROUGE, and Elo measure fundamentally different properties of language models. Learn when each metric applies, where they diverge, and what they hide.

Model evaluation combines benchmarks, automated metrics, and human judgment to measure LLM quality. Learn why high scores mislead and what the math underneath reveals.

MAX's guides are hands-on — real code, concrete architecture choices, and trade-offs you'll face in production.

Tools & techniques

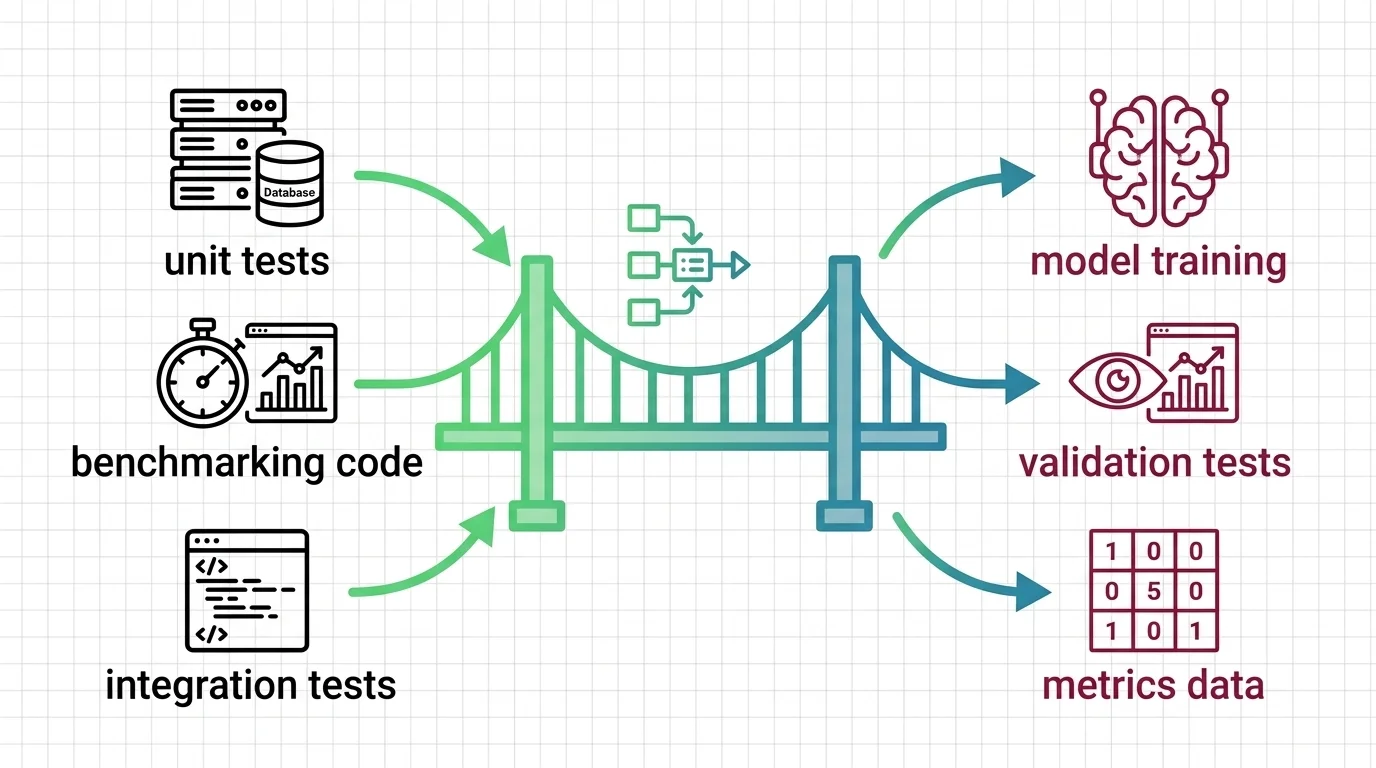

Model evaluation mapped for backend developers. Learn which testing instincts transfer to LLM benchmarks, where scores mislead, and what to evaluate first.

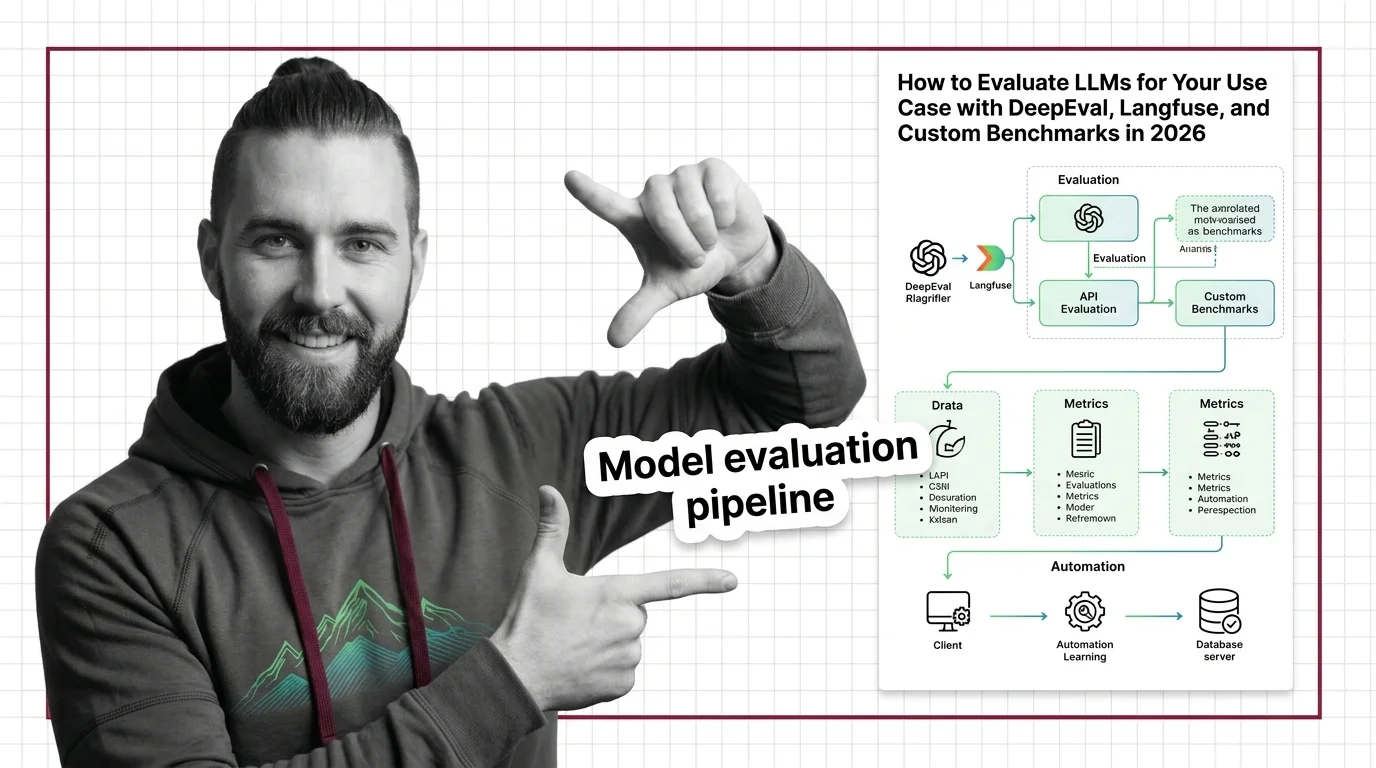

Build an LLM evaluation pipeline with DeepEval, Langfuse, and Promptfoo. Covers metrics selection, production tracing, and CI/CD gating for RAG systems.

DAN tracks how this domain is evolving — which models, techniques, and benchmarks are reshaping 2026.

Models & benchmarks

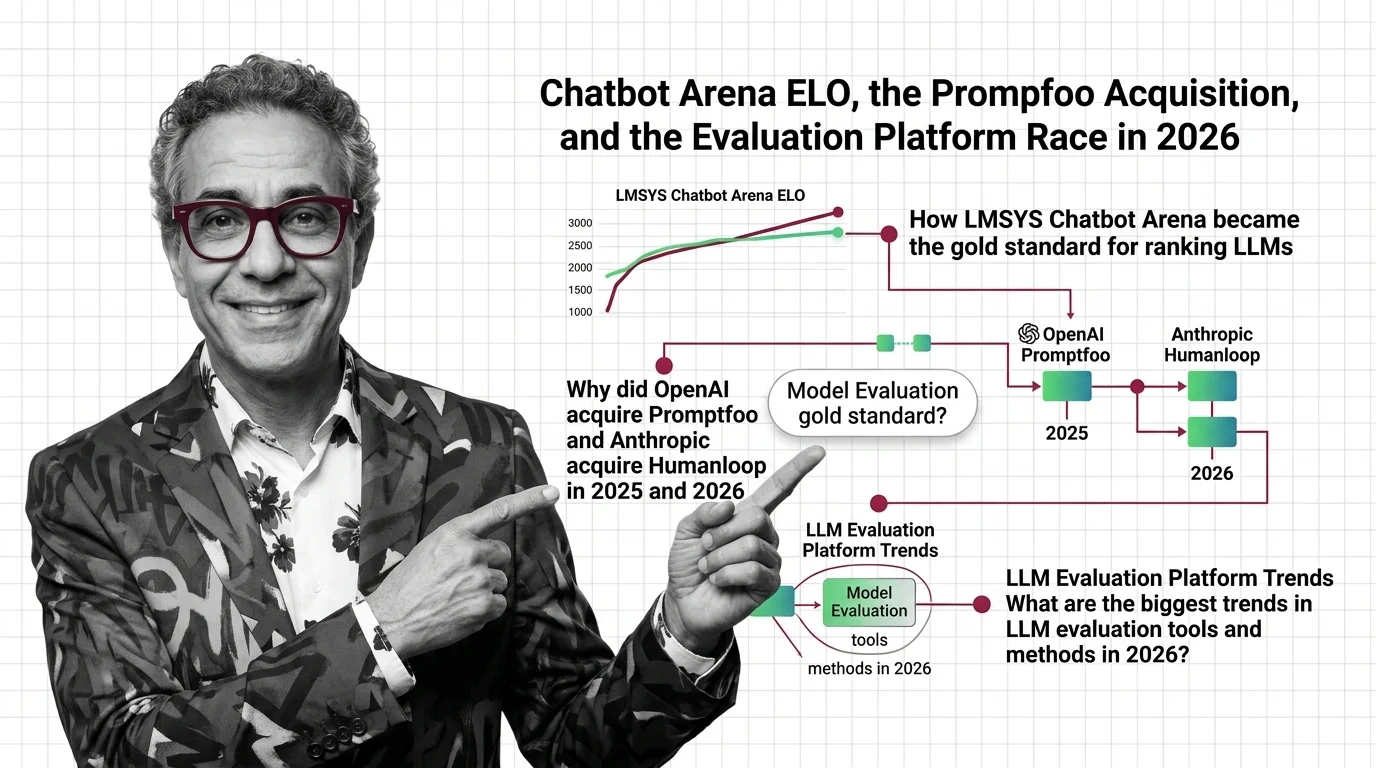

Updated March 2026

OpenAI acquired Promptfoo, Anthropic acqui-hired Humanloop, and Arena hit a $1.7B valuation. Here's why the evaluation layer just became AI's most contested ground.

ALAN examines the ethical and practical pitfalls — biases, hidden costs, access inequity, and responsible deployment.

Risks & metrics

LLM benchmarks encode their creators' cultural values. Explore how geographic bias, moral stereotyping, and power asymmetry define what we call AI intelligence.