Model Evaluation

Model evaluation is the process of measuring how well a large language model performs using benchmarks, human judgment, and automated metrics. Common approaches include standardized tests like MMLU and HumanEval, statistical measures such as perplexity and BLEU, and newer methods like LLM-as-judge and arena-style comparisons. Choosing the right evaluation strategy depends on the specific task and deployment context. Also known as: LLM Evaluation, LLM Benchmarks

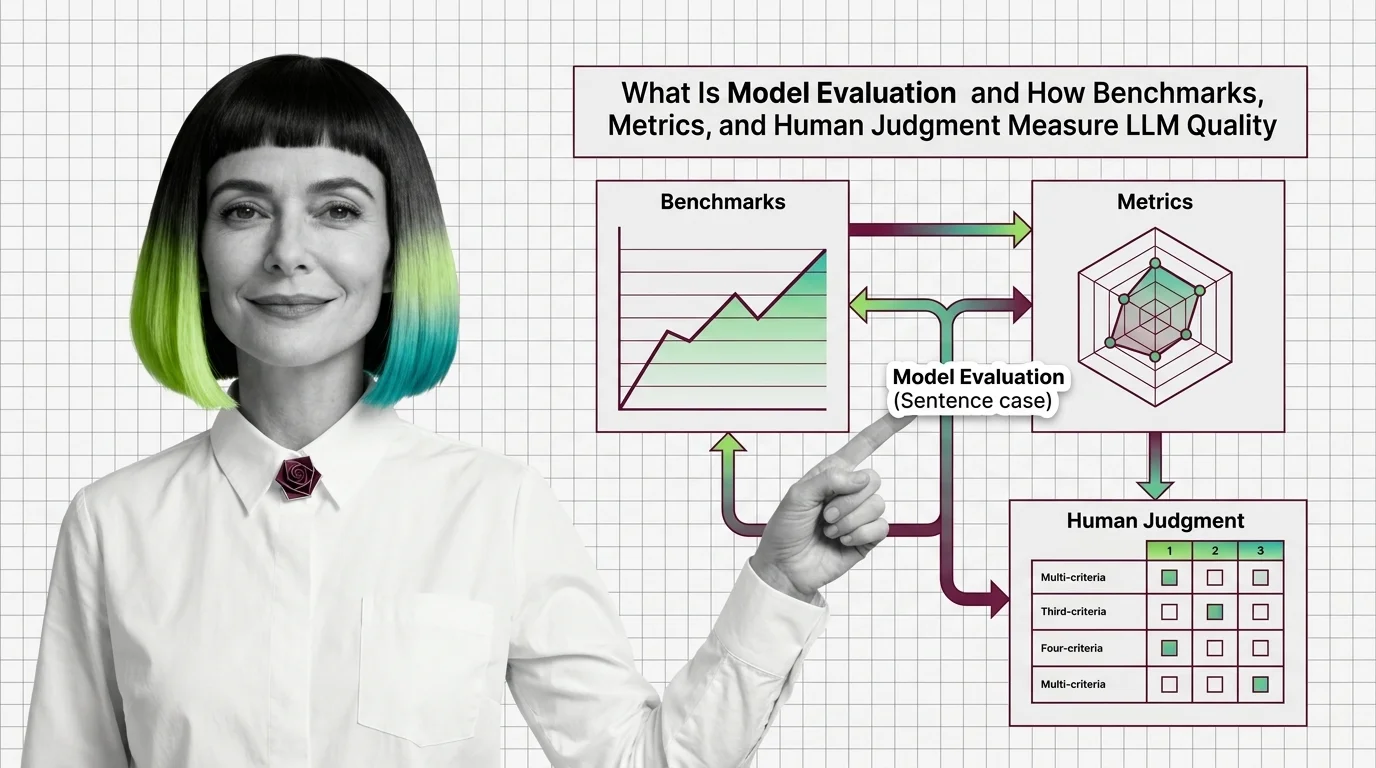

Understand the Fundamentals

Model evaluation determines whether a language model actually does what you need it to do. These articles explain the science behind benchmarks, metrics, and the surprising gaps between leaderboard scores and real-world performance.

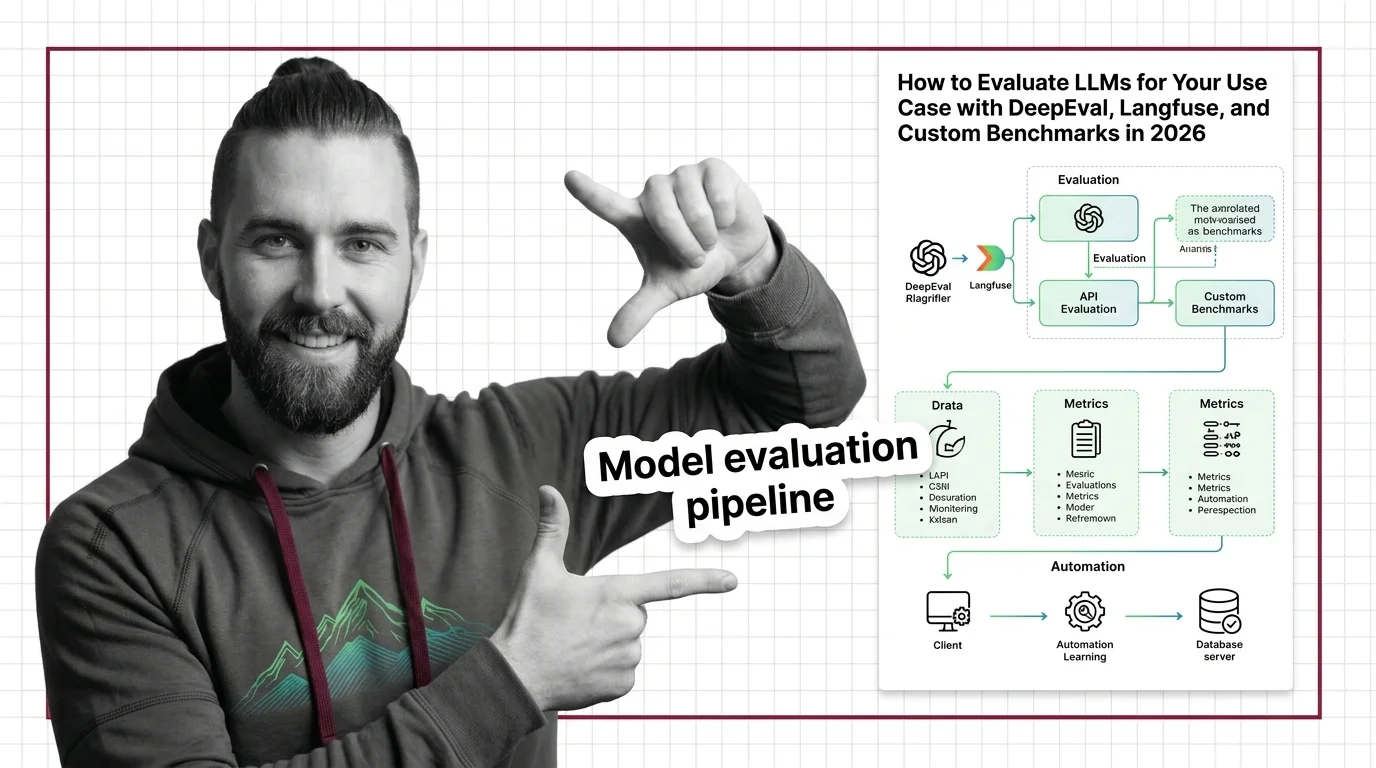

Build with Model Evaluation

Evaluating models in practice means picking the right metrics, avoiding common measurement traps, and building repeatable test pipelines tailored to your specific use case.

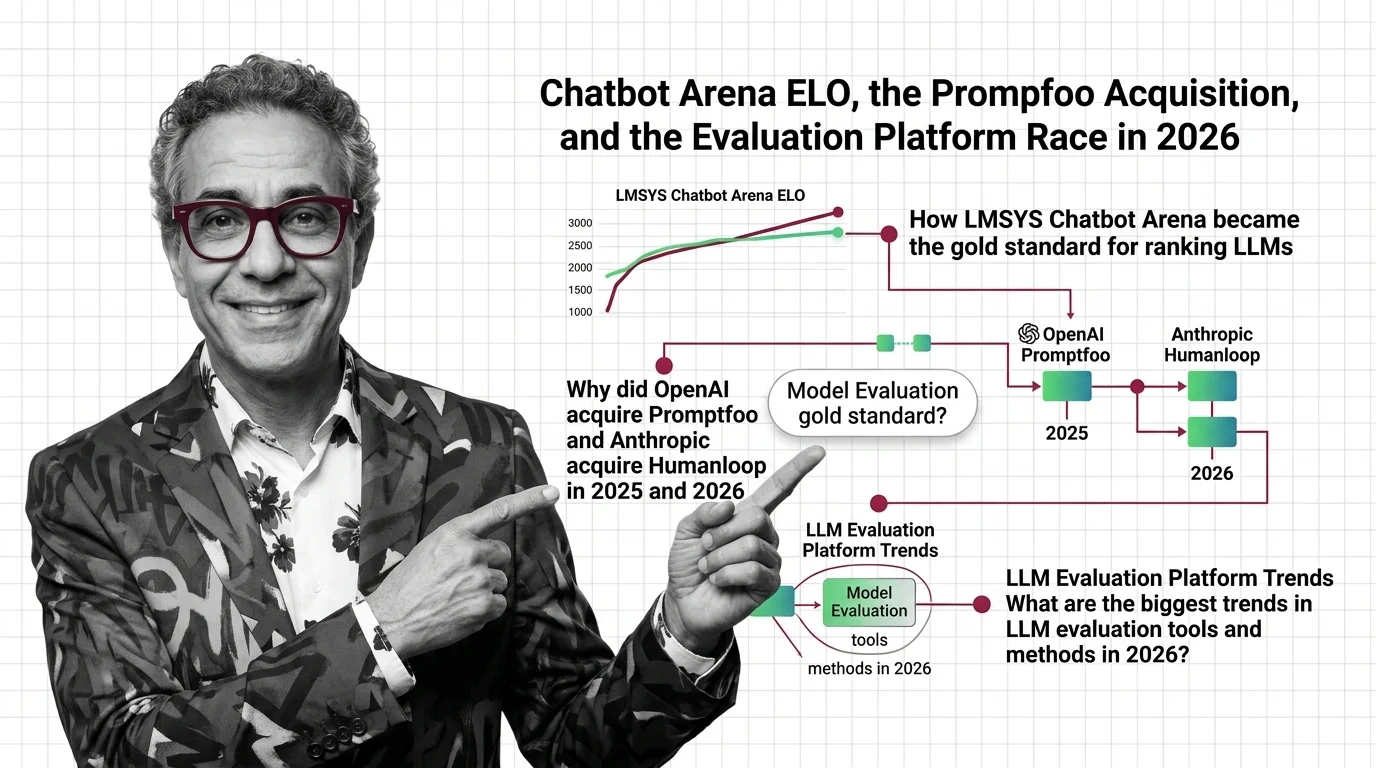

What's Changing in 2026

The evaluation landscape shifts fast as new benchmarks emerge and old ones saturate. Staying current on scoring methods and platform developments helps you separate genuine progress from hype.

Updated March 2026

Risks and Considerations

Benchmark scores can mislead when contamination, cultural bias, or metric gaming go unexamined. These articles explore who defines quality and what gets lost in the measurement process.