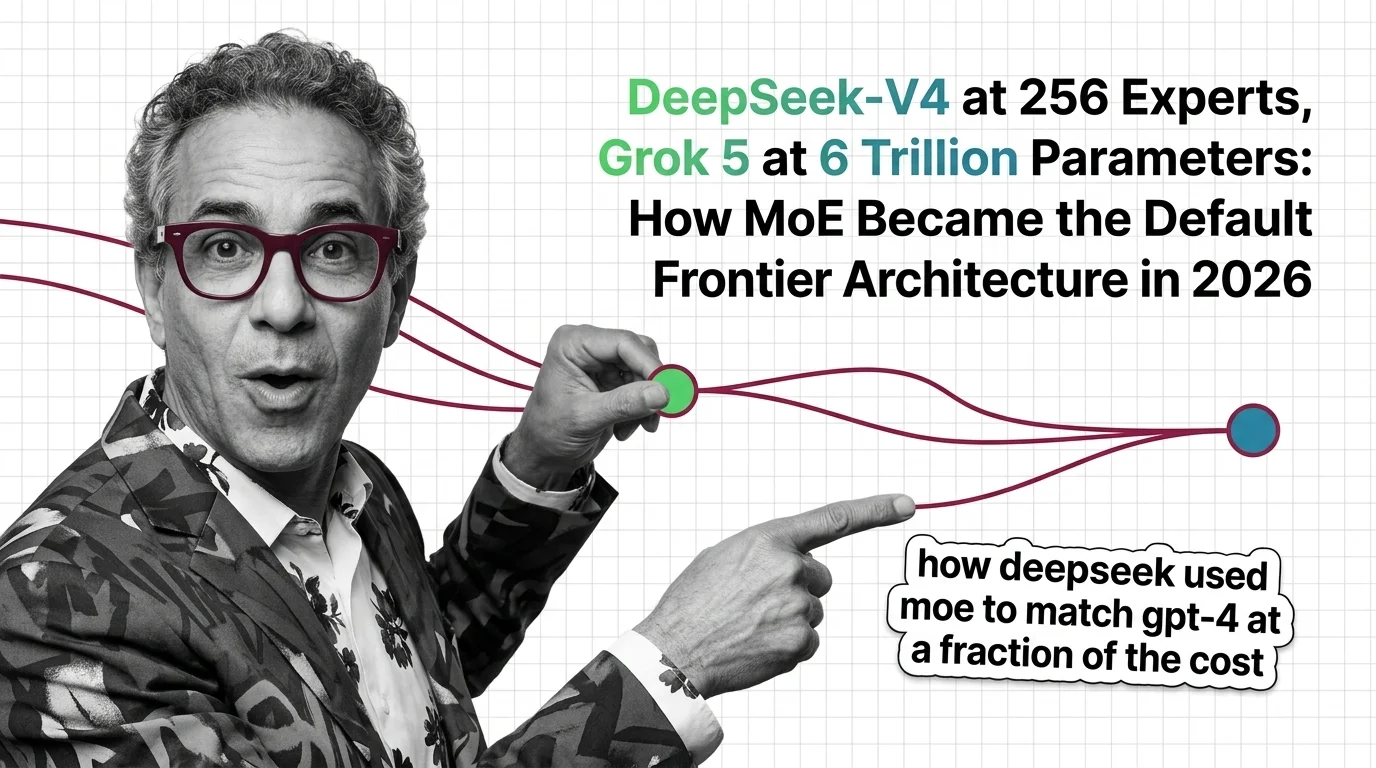

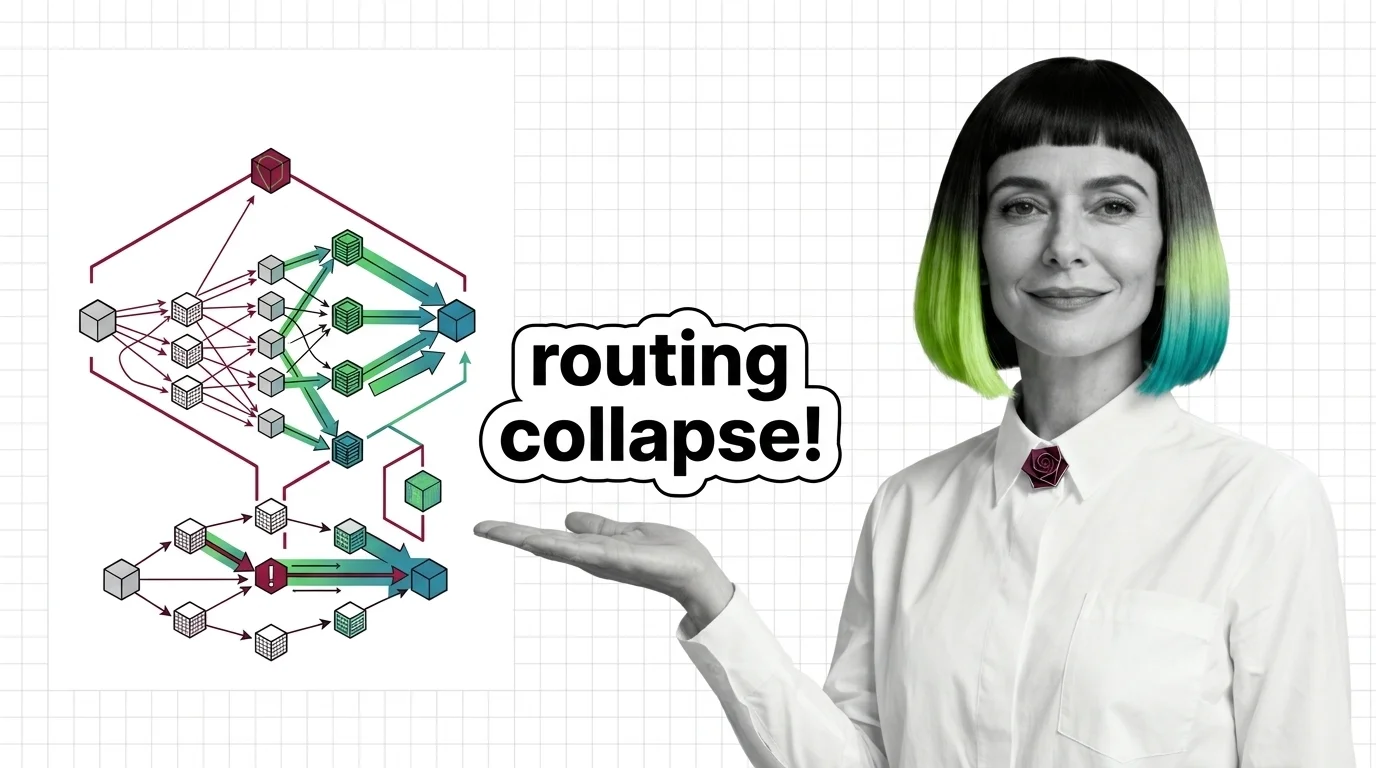

Routing Collapse, Load Balancing Failures, and the Hard Engineering Limits of Mixture of Experts

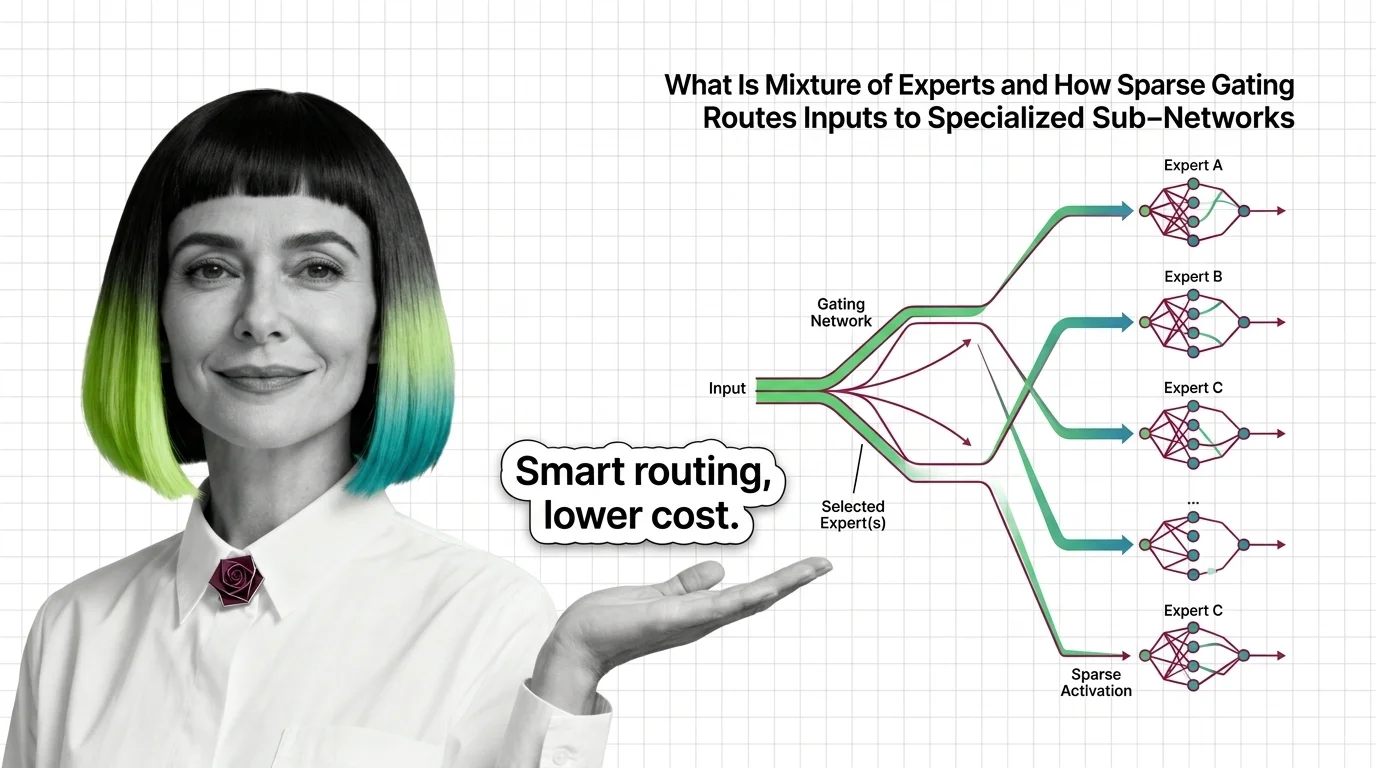

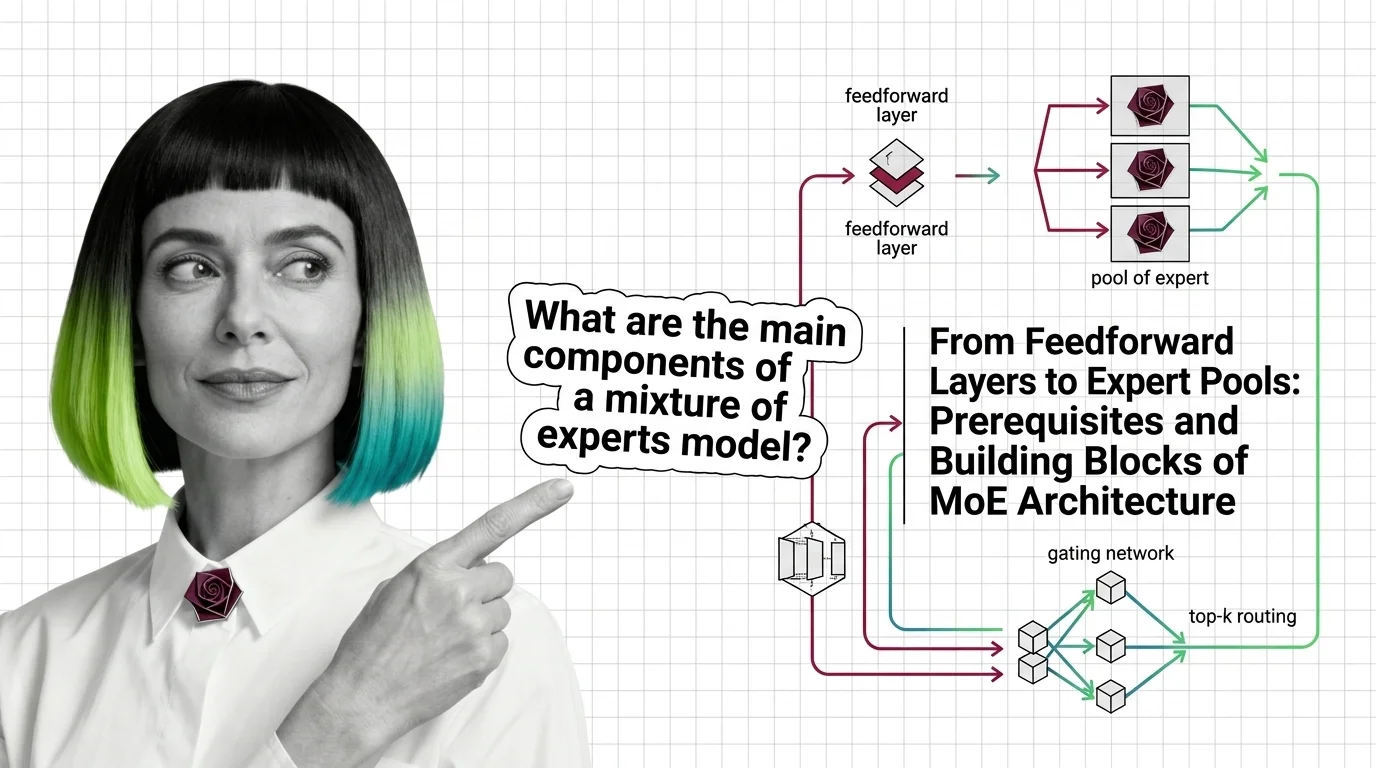

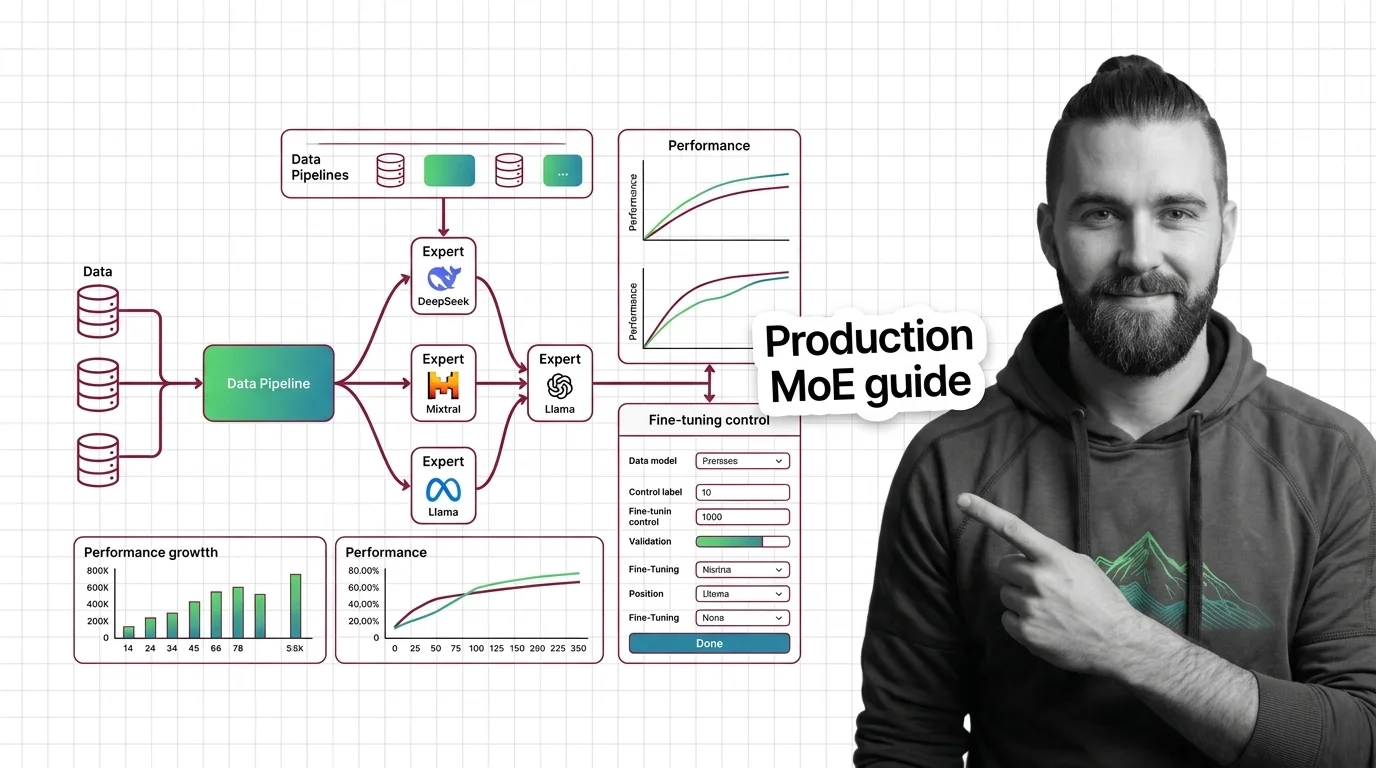

MoE models promise scale at a fraction of the compute cost. Understand the hard engineering limits behind that promise: routing collapse, memory tradeoffs, and communication overhead.