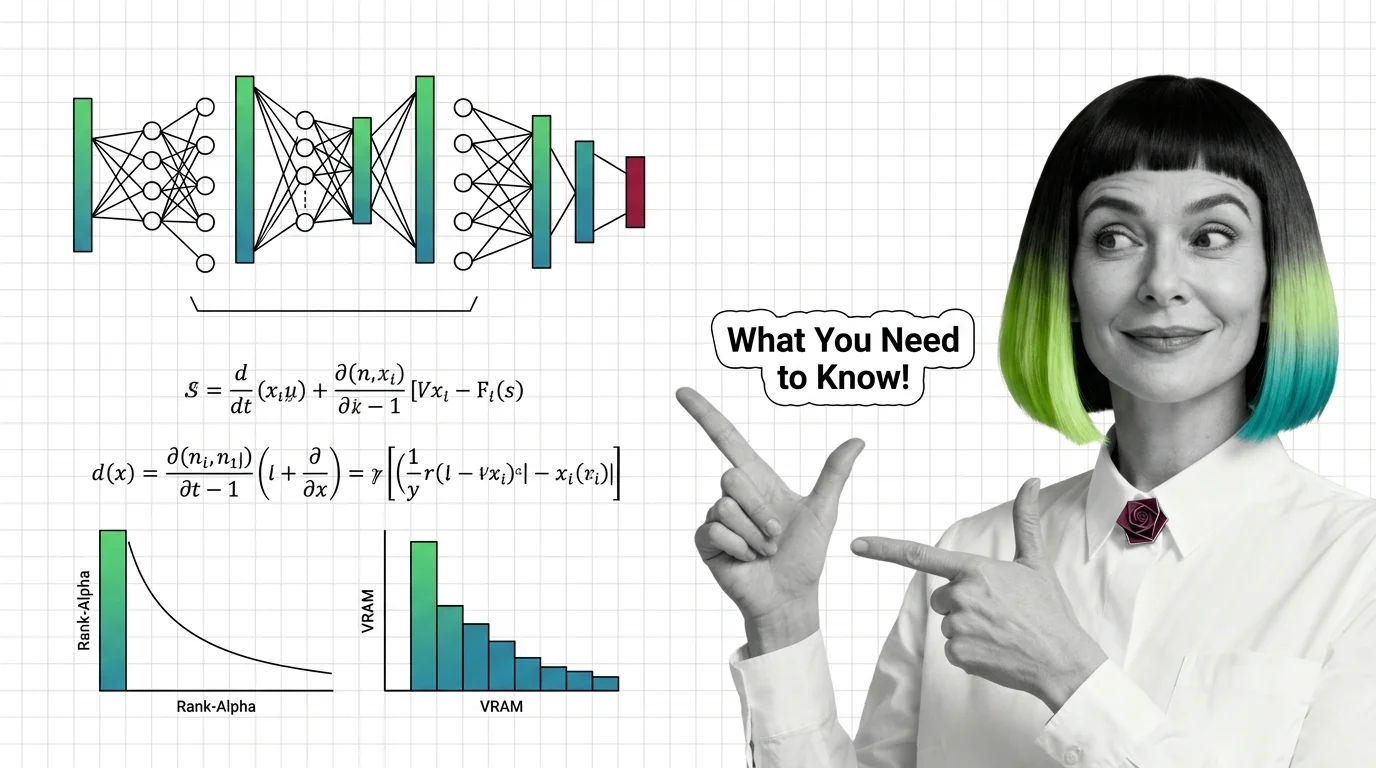

How LoRA Fine-Tunes Diffusion Models for Image Generation

LoRA fine-tunes Stable Diffusion and Flux without retraining the full model. Learn how rank, alpha, and the BA decomposition turn a file of a few megabytes into a new style.

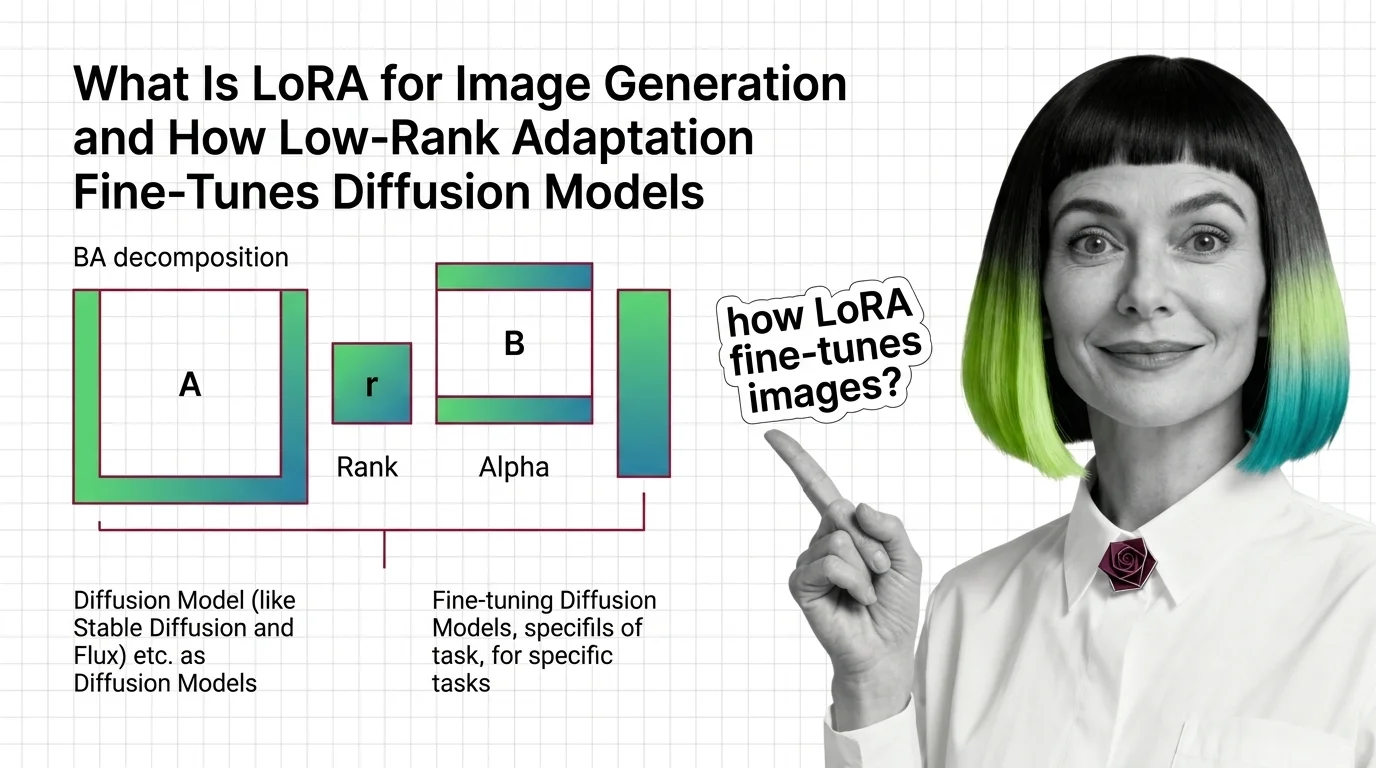

LoRA for image generation is a fine-tuning technique that adds small low-rank weight adapters to a diffusion model to teach it a new style, subject, or character without retraining the full model.
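
The core mechanism fits in a few lines. Below is a minimal, dependency-free sketch, assuming a single frozen linear layer with weight W of shape d×k; the names `LoRALinear` and `matmul` are hypothetical, not any library's API. Only the small matrices B (d×r) and A (r×k) would be trained, and the update is scaled by alpha / r. Following the initialization described in the original LoRA paper, A starts as small random values and B starts at zero, so the adapted layer initially reproduces the base model exactly.

```python
import random

def matmul(x, y):
    """Multiply two matrices stored as lists of rows."""
    return [[sum(a * b for a, b in zip(row, col)) for col in zip(*y)] for row in x]

class LoRALinear:
    """A frozen weight W (d x k) plus a trainable low-rank update B @ A."""

    def __init__(self, W, rank, alpha, seed=0):
        rng = random.Random(seed)
        d, k = len(W), len(W[0])
        self.W = W                  # frozen pretrained weight, never updated
        self.rank = rank
        self.scale = alpha / rank   # LoRA scaling factor alpha / r
        # A (r x k) gets a small random init; B (d x r) starts at zero,
        # so B @ A == 0 and the layer initially matches the base model.
        self.A = [[rng.gauss(0.0, 0.01) for _ in range(k)] for _ in range(rank)]
        self.B = [[0.0] * rank for _ in range(d)]

    def effective_weight(self):
        """W + (alpha / r) * (B @ A): the weight the adapted layer applies."""
        delta = matmul(self.B, self.A)
        return [[w + self.scale * dw for w, dw in zip(w_row, d_row)]
                for w_row, d_row in zip(self.W, delta)]

    def forward(self, x):
        """Apply the layer to x, a k x 1 column vector (list of rows)."""
        return matmul(self.effective_weight(), x)

    def trainable_params(self):
        """Only A and B are trained: r * (d + k) numbers instead of d * k."""
        return self.rank * (len(self.W) + len(self.W[0]))


W = [[1.0, 0.0, 2.0],
     [0.0, 1.0, -1.0]]            # toy 2 x 3 "pretrained" weight
layer = LoRALinear(W, rank=1, alpha=2)
x = [[1.0], [2.0], [3.0]]
print(layer.forward(x))           # [[7.0], [-1.0]], identical to W @ x at init
```

During training, gradients would flow only into `A` and `B`; the base weight `W` stays untouched, which is why the adapter can be saved and shipped separately from the model.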

A trained LoRA is typically a small file that is loaded alongside the base model at inference time; a trigger word in the prompt usually invokes the learned concept. This lets users customize Stable Diffusion, SDXL, or Flux for production pipelines.

Also known as: Image LoRA, Stable Diffusion LoRA
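
Why the file stays small is plain arithmetic: a full update of a d×k weight holds d·k numbers, while a rank-r LoRA stores only r·(d+k). The sketch below estimates adapter size from a list of layer shapes, assuming 16-bit (2-byte) storage and ignoring file-format overhead. The layer list is a hypothetical placeholder, not the real set of layers that any SD 1.5, SDXL, or Flux trainer targets.

```python
def lora_params(shapes, rank):
    """Numbers stored by a rank-r LoRA over layers of shape (d, k): r * (d + k) each."""
    return sum(rank * (d + k) for d, k in shapes)

def full_params(shapes):
    """Numbers in a full-weight update of the same layers: d * k each."""
    return sum(d * k for d, k in shapes)

def size_mb(num_params, bytes_per_param=2):
    """Approximate size in megabytes (10**6 bytes); 2 bytes = fp16/bf16."""
    return num_params * bytes_per_param / 1e6

# Hypothetical layer list: 64 square 1280 x 1280 projections (illustrative only).
shapes = [(1280, 1280)] * 64

full = full_params(shapes)
lora = lora_params(shapes, rank=16)
print(f"full update:  {full:,} params, ~{size_mb(full):.1f} MB")  # 104,857,600 params, ~209.7 MB
print(f"rank-16 LoRA: {lora:,} params, ~{size_mb(lora):.1f} MB")  # 2,621,440 params, ~5.2 MB
print(f"ratio: {full // lora}x")                                   # 40x
```

The saving per layer is roughly min(d, k) / (2r) for square-ish layers, so raising the rank grows the file linearly.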

What this topic covers


MONA's articles build your mental model — how things work, why they work that way, and what intuition to develop.

Concepts covered


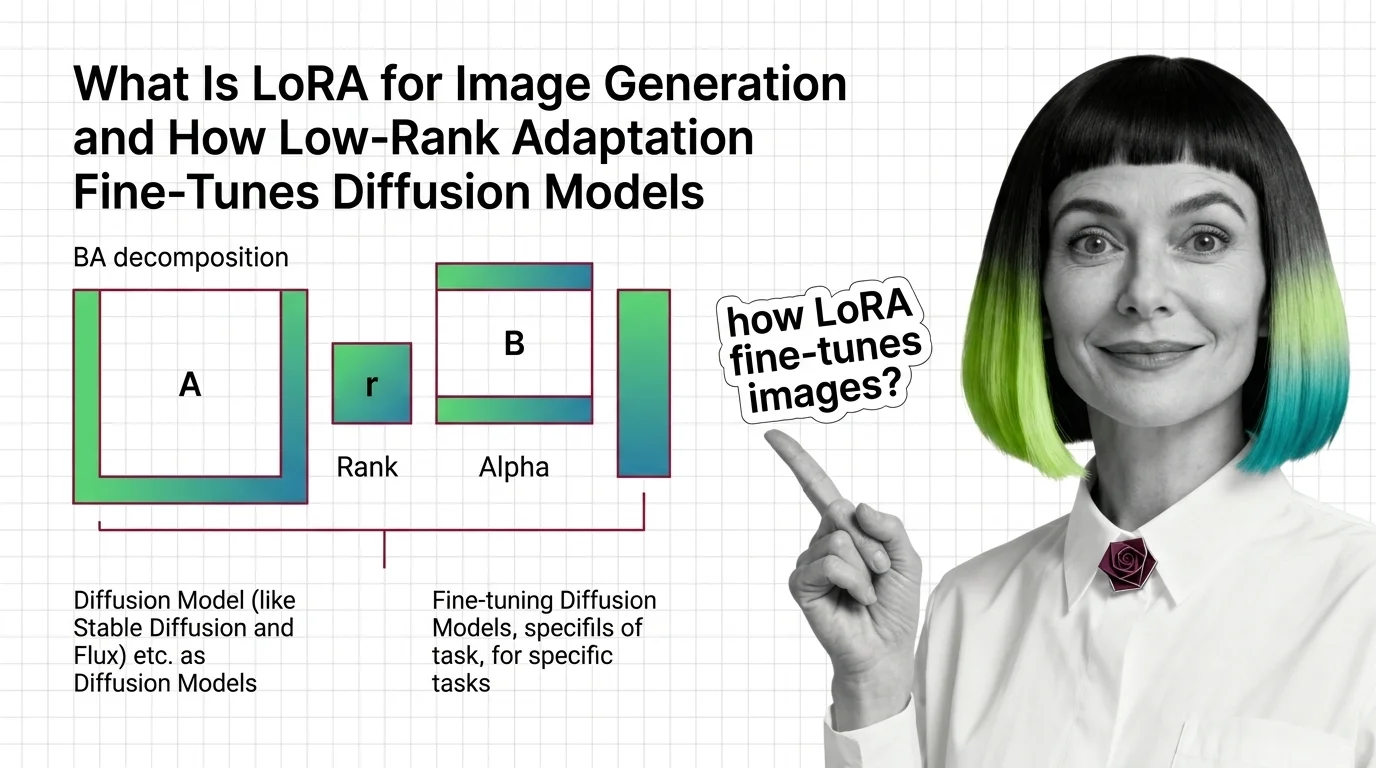

Image LoRAs retarget diffusion models with small adapter files. Learn the rank-alpha math, the VRAM ranges from SD 1.5 to Flux, and why many training runs fail.
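
The rank-alpha relationship reduces to one multiplier: in the original LoRA formulation the learned update is scaled by alpha / rank, so W' = W + (alpha / rank) · BA. The sketch below tabulates that factor for a few (rank, alpha) pairs; the pairs are illustrative, not recommended settings, and individual trainers may name or default these values differently.

```python
def lora_scale(rank, alpha):
    """Multiplier applied to B @ A in W' = W + (alpha / rank) * B @ A."""
    if rank <= 0:
        raise ValueError("rank must be a positive integer")
    return alpha / rank

# Illustrative (rank, alpha) pairs, not recommended settings.
for rank, alpha in [(4, 4), (16, 16), (32, 16), (16, 1)]:
    print(f"rank={rank:>2}  alpha={alpha:>2}  ->  scale={lora_scale(rank, alpha):.4f}")
# rank= 4  alpha= 4  ->  scale=1.0000
# rank=16  alpha=16  ->  scale=1.0000
# rank=32  alpha=16  ->  scale=0.5000
# rank=16  alpha= 1  ->  scale=0.0625
```

Setting alpha equal to rank keeps the multiplier at 1, while holding alpha fixed and raising rank shrinks it. The original LoRA paper notes that, with Adam, tuning alpha behaves roughly like tuning the learning rate, so a learning rate that works at one (rank, alpha) pair may need adjusting at another.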

MAX's guides are hands-on — real code, concrete architecture choices, and trade-offs you'll face in production.

Tools & techniques

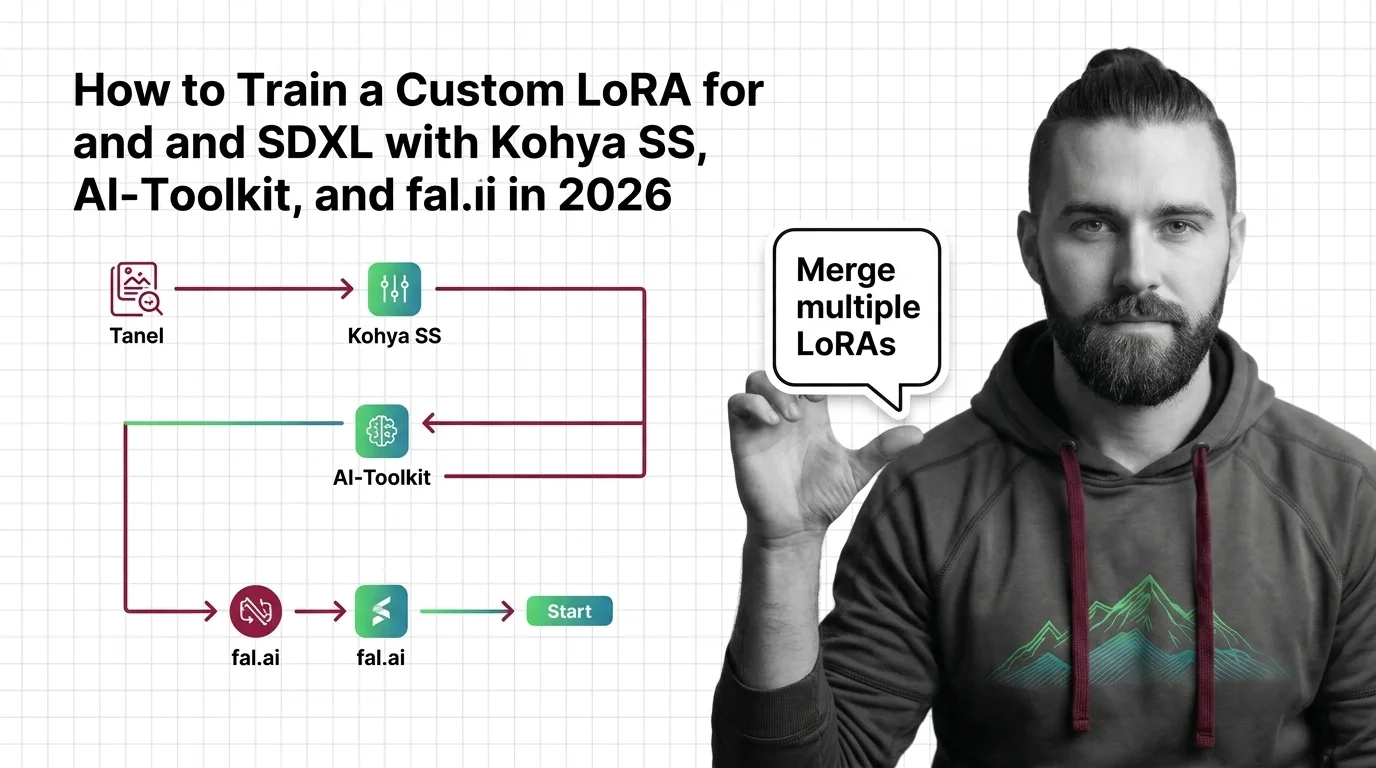

Train custom LoRAs for Flux and SDXL with Kohya SS, AI-Toolkit, or fal.ai. Covers dataset specs, learning rates, trigger words, and multi-LoRA merges as of 2026.
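
One common way to combine several LoRAs is a weighted linear merge: each adapter's scaled update is multiplied by a user-chosen strength and added to the base weight, W' = W + Σᵢ sᵢ (αᵢ / rᵢ) BᵢAᵢ. The sketch below does this for a single layer in plain Python. The `Adapter` tuple, `merge_loras` helper, and strength values are hypothetical, and real tools may merge differently, for example by re-factorizing the summed update into a new low-rank pair.

```python
from typing import List, NamedTuple

Matrix = List[List[float]]

class Adapter(NamedTuple):
    B: Matrix         # d x r
    A: Matrix         # r x k
    alpha: float
    strength: float   # user-chosen weight for this adapter in the merge

def matmul(x: Matrix, y: Matrix) -> Matrix:
    """Multiply two matrices stored as lists of rows."""
    return [[sum(a * b for a, b in zip(row, col)) for col in zip(*y)] for row in x]

def merge_loras(W: Matrix, adapters: List[Adapter]) -> Matrix:
    """Return W + sum_i strength_i * (alpha_i / r_i) * (B_i @ A_i)."""
    merged = [row[:] for row in W]   # copy so the base weight stays untouched
    for ad in adapters:
        rank = len(ad.A)             # r = number of rows in A
        coeff = ad.strength * ad.alpha / rank
        delta = matmul(ad.B, ad.A)
        for i, row in enumerate(delta):
            for j, value in enumerate(row):
                merged[i][j] += coeff * value
    return merged


W = [[1.0, 0.0], [0.0, 1.0]]                                         # toy base weight
style = Adapter(B=[[1.0], [0.0]], A=[[1.0, 0.0]], alpha=1.0, strength=1.0)
subject = Adapter(B=[[0.0], [2.0]], A=[[0.0, 1.0]], alpha=2.0, strength=0.5)
print(merge_loras(W, [style, subject]))                              # [[2.0, 0.0], [0.0, 3.0]]
```

Because the updates simply add, two adapters that modify the same layers can interfere with each other; the per-adapter strength is the knob for trading them off.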

DAN tracks how this domain is evolving — which models, techniques, and benchmarks are reshaping 2026.

Models & benchmarks

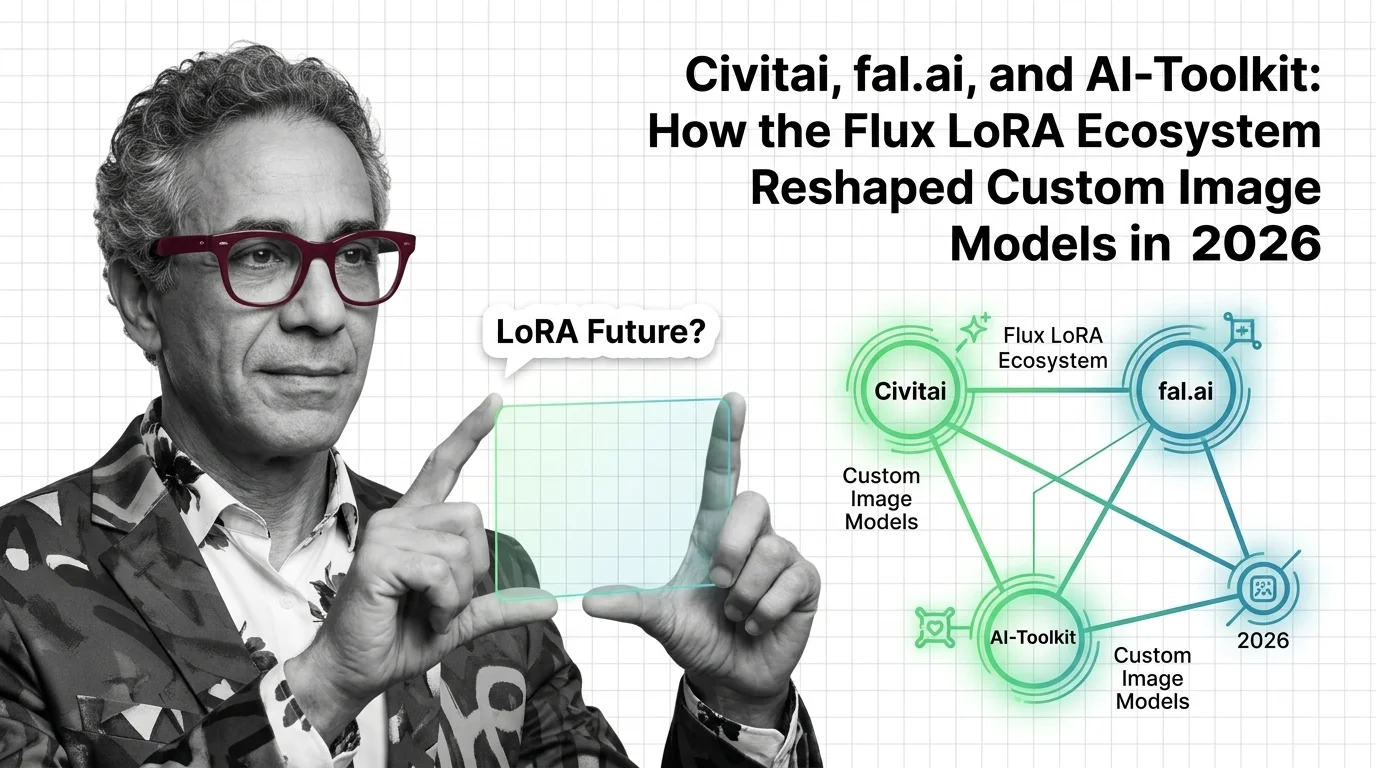

Updated April 2026

The 2026 Flux LoRA stack has split into three layers: marketplaces, serverless APIs, and open-source trainers. Here's who leads and what Flux.2 just changed.

ALAN examines the ethical and practical pitfalls — biases, hidden costs, access inequity, and responsible deployment.

Risks & metrics

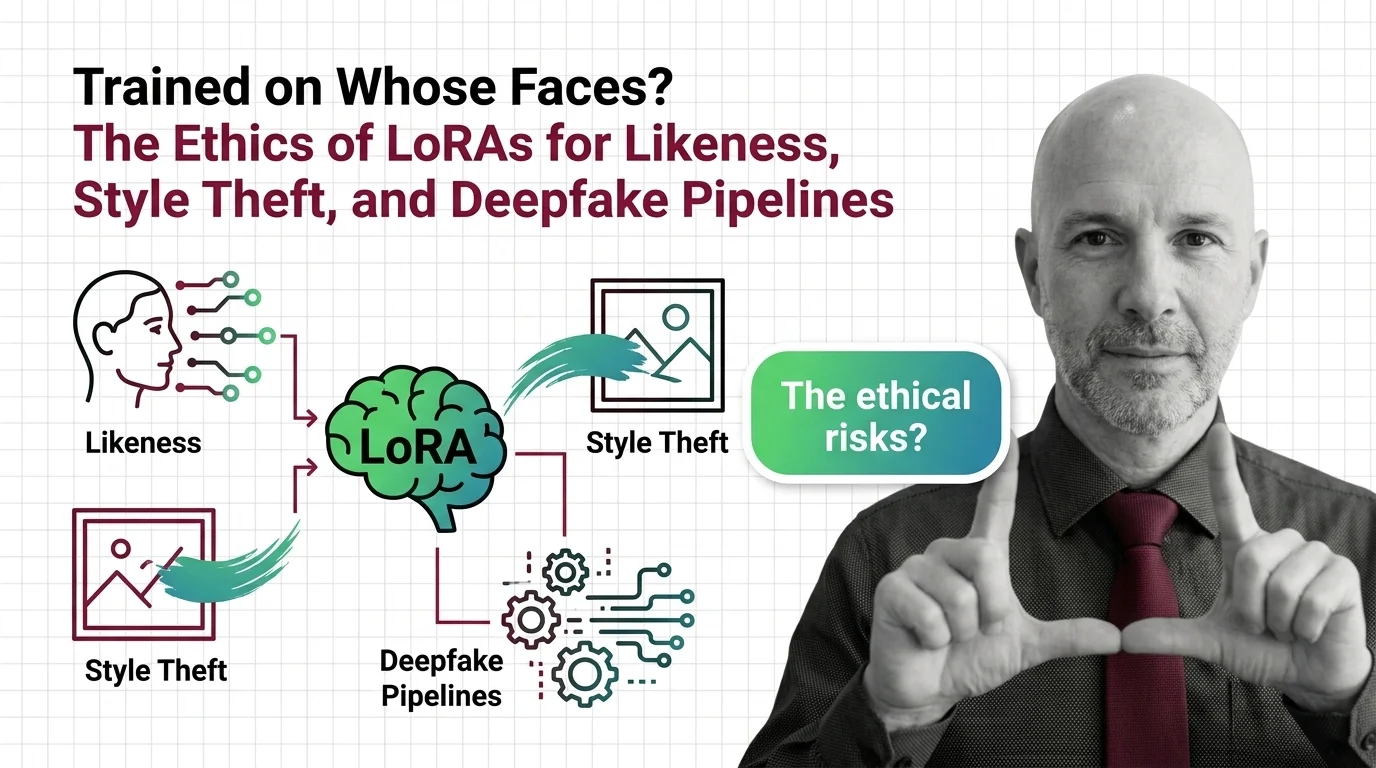

LoRAs made it possible to fine-tune any face in fifteen minutes. The consent gap stopped being hypothetical the moment the toolchain made it routine.