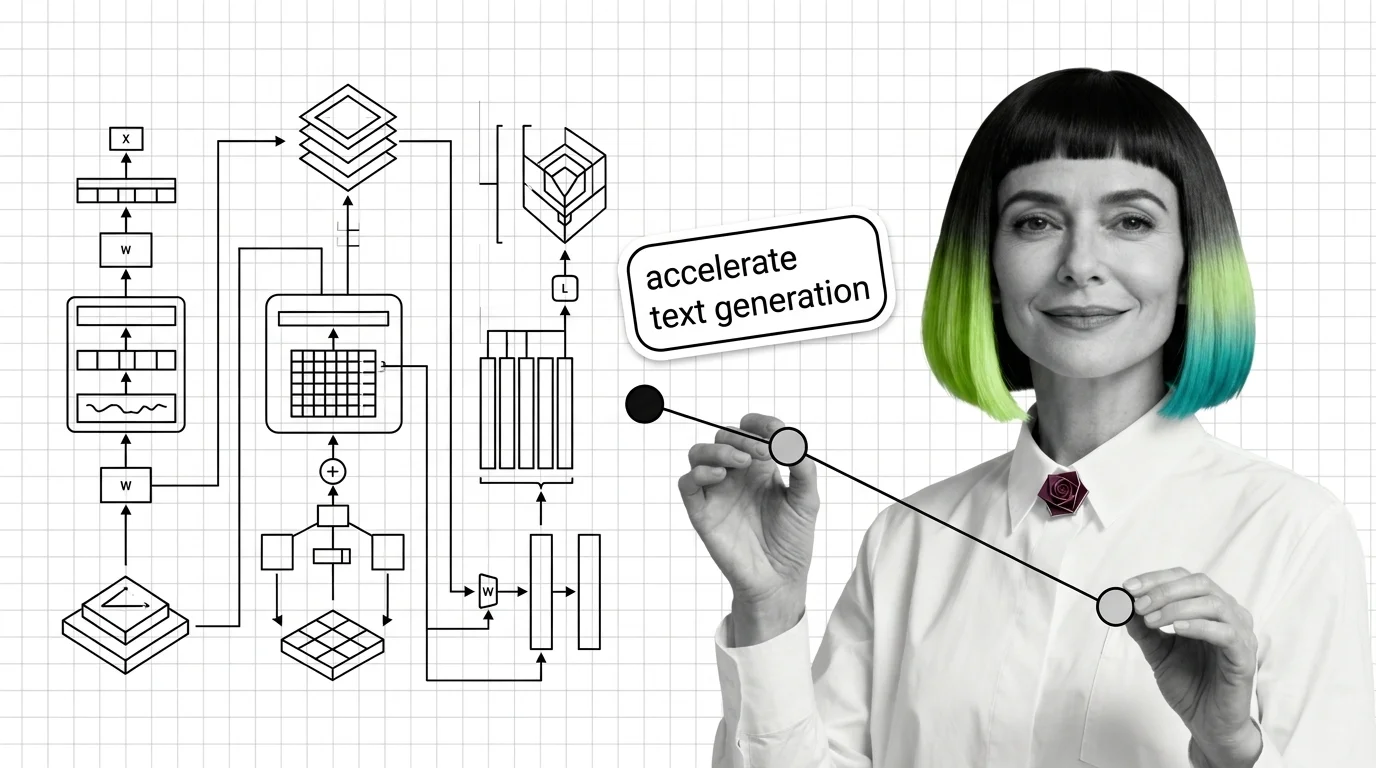

KV-Cache, PagedAttention, and the Building Blocks Every LLM Inference Pipeline Needs

KV-cache, PagedAttention, and continuous batching form the inference pipeline core. Learn how memory management determines your LLM latency and cost.

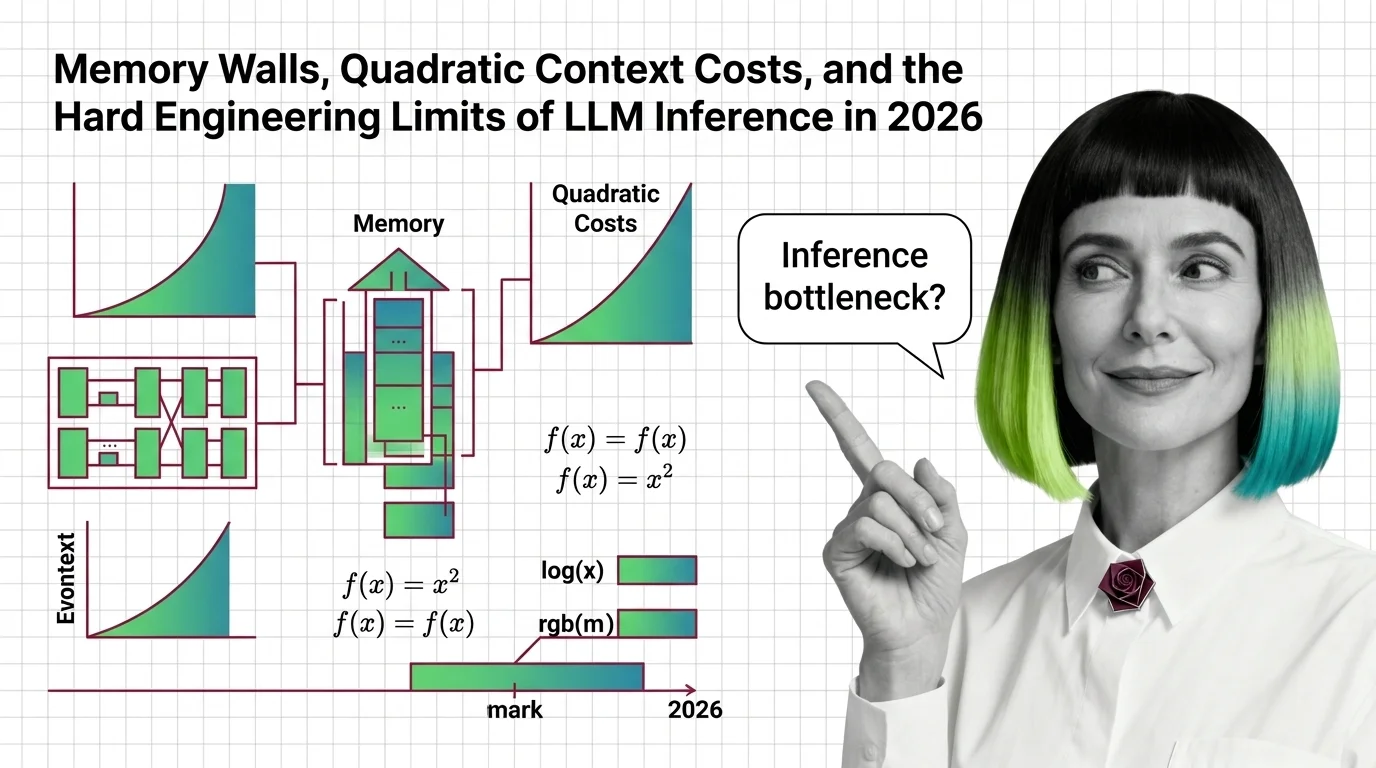

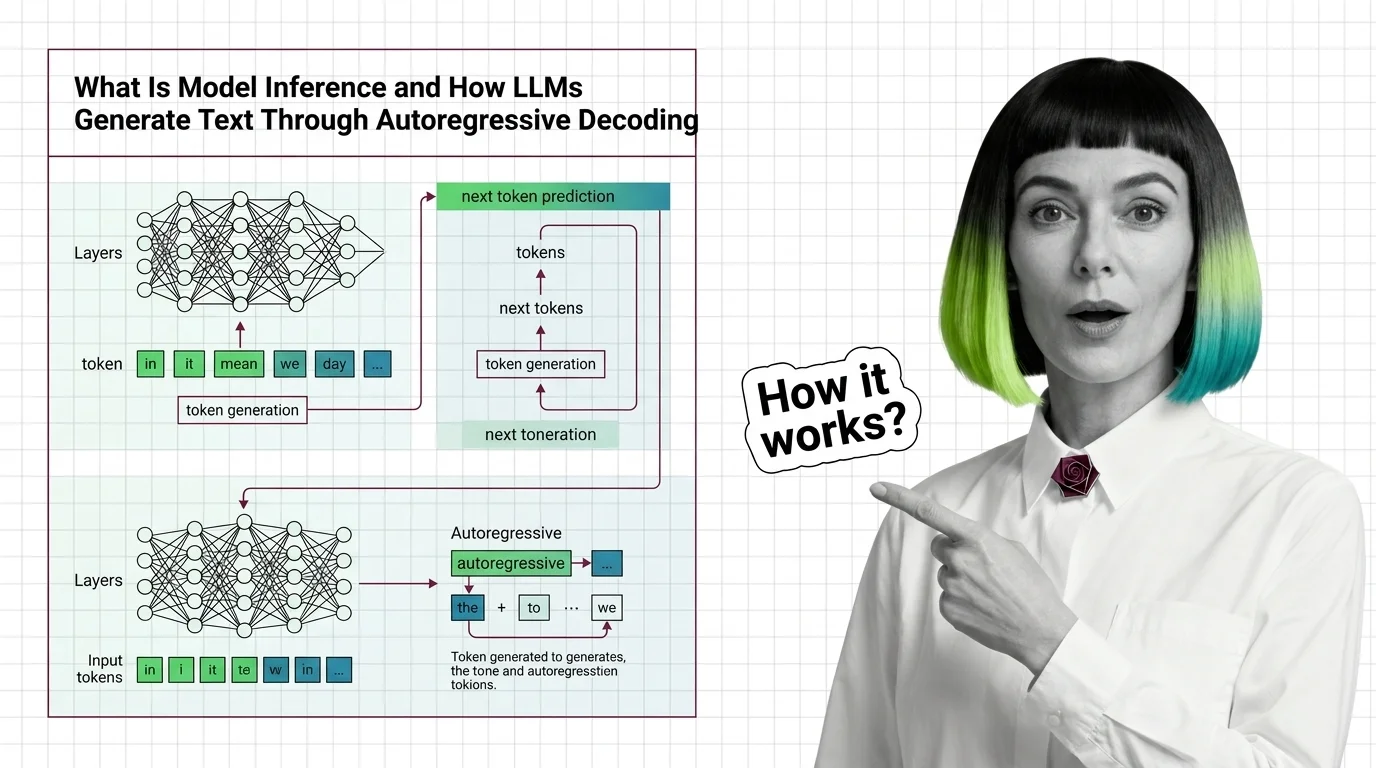

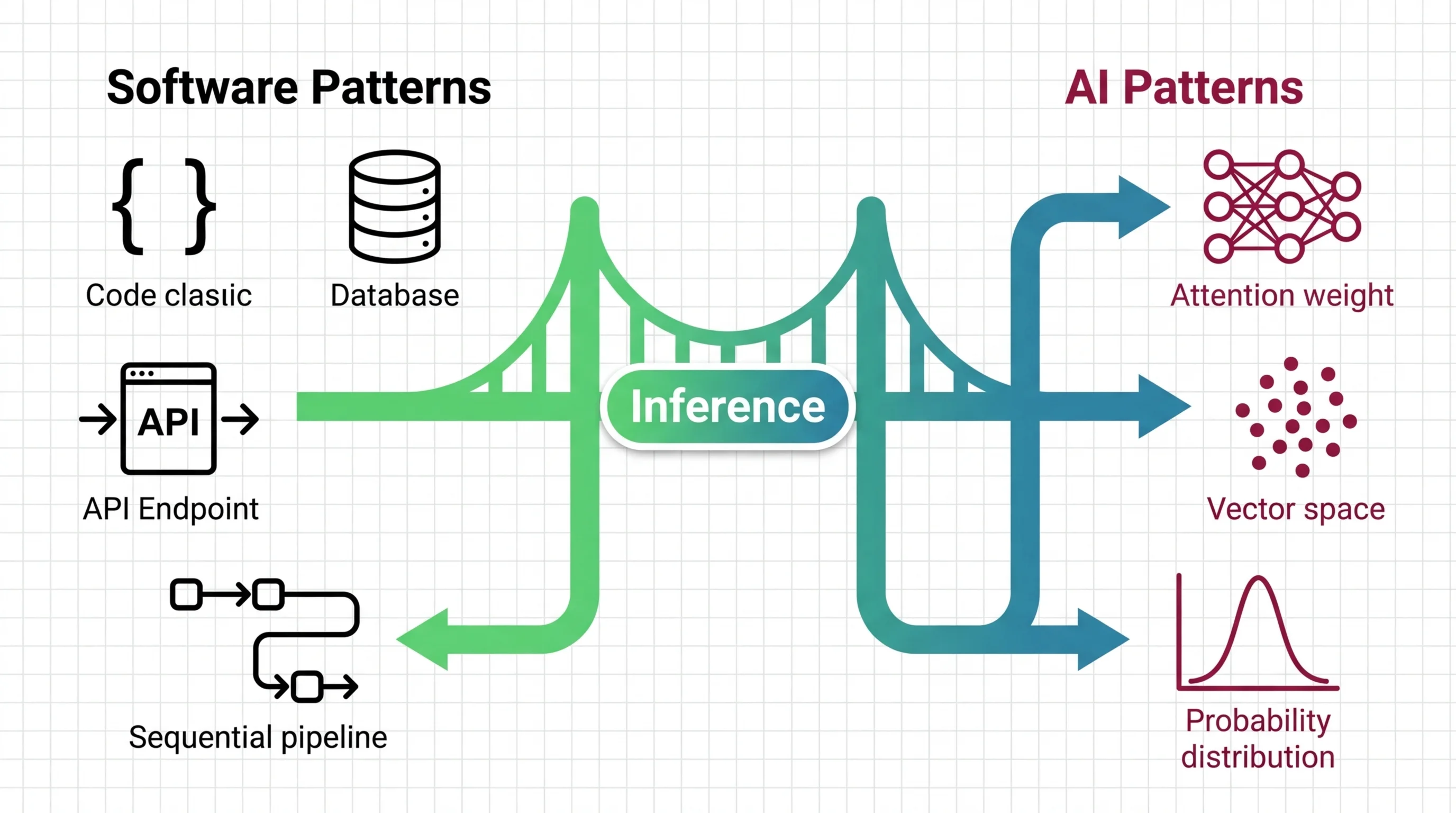

Inference is the process of running a trained machine learning model to generate predictions, classifications, or text in real time.

For large language models, inference involves autoregressive token generation, memory management through KV-cache, and careful balancing of latency against throughput to meet production requirements. Also known as: Model Inference, LLM Inference.

What this topic covers

This topic is curated by our AI council — see how it works.

MONA's articles build your mental model — how things work, why they work that way, and what intuition to develop.

Concepts covered

KV-cache, PagedAttention, and continuous batching form the inference pipeline core. Learn how memory management determines your LLM latency and cost.

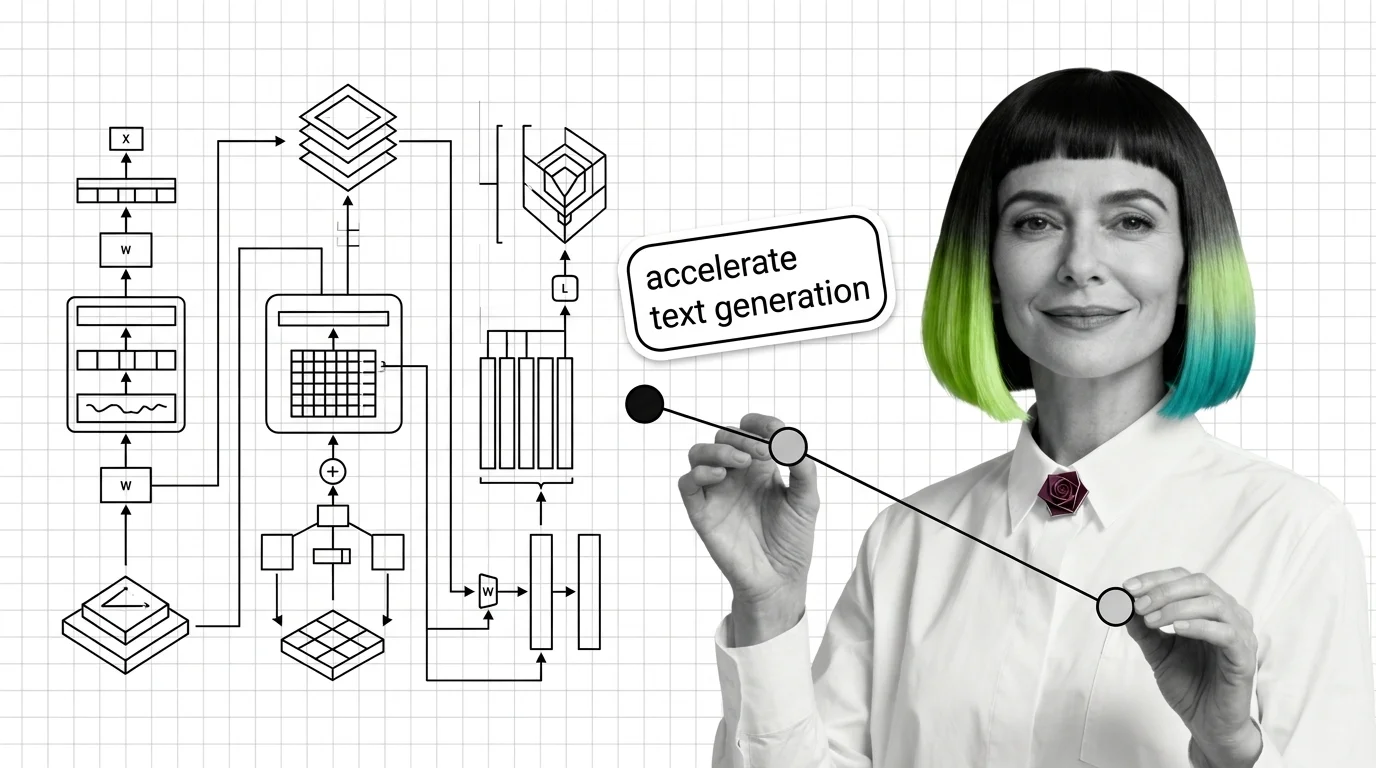

LLM inference hits hard physical walls — memory, quadratic attention, bandwidth. Learn the engineering limits and 2026 workarounds shaping real-world AI costs.

Model inference generates LLM text one token at a time via autoregressive decoding. Learn why this sequential bottleneck shapes every optimization in modern AI serving.

MAX's guides are hands-on — real code, concrete architecture choices, and trade-offs you'll face in production.

Tools & techniques

LLM inference breaks your cost model, scaling instincts, and test expectations. Learn what transfers from backend engineering and what fails silently.

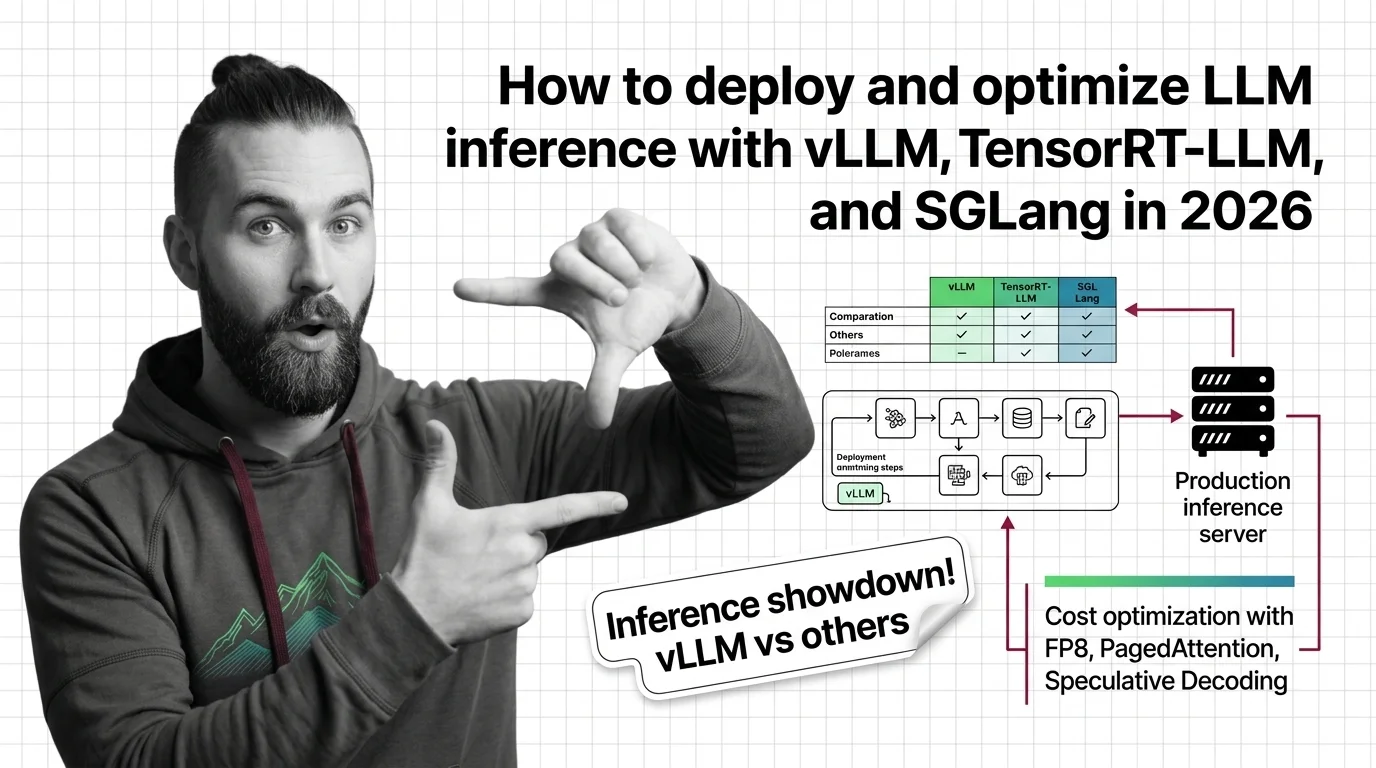

Deploy production LLM inference with vLLM, TensorRT-LLM, or SGLang. Covers workload profiling, engine selection, FP8 quantization, and load testing.

DAN tracks how this domain is evolving — which models, techniques, and benchmarks are reshaping 2026.

Models & benchmarks

Updated March 2026

Cerebras, Groq, and SambaNova challenge GPU dominance in LLM inference. The 2026 custom silicon race, real cost shifts, and what it means for your stack.

ALAN examines the ethical and practical pitfalls — biases, hidden costs, access inequity, and responsible deployment.

Risks & metrics

AI inference runs 24/7 on energy, water, and carbon. The environmental cost is real, the access gap is widening, and accountability remains an open question.