Inference

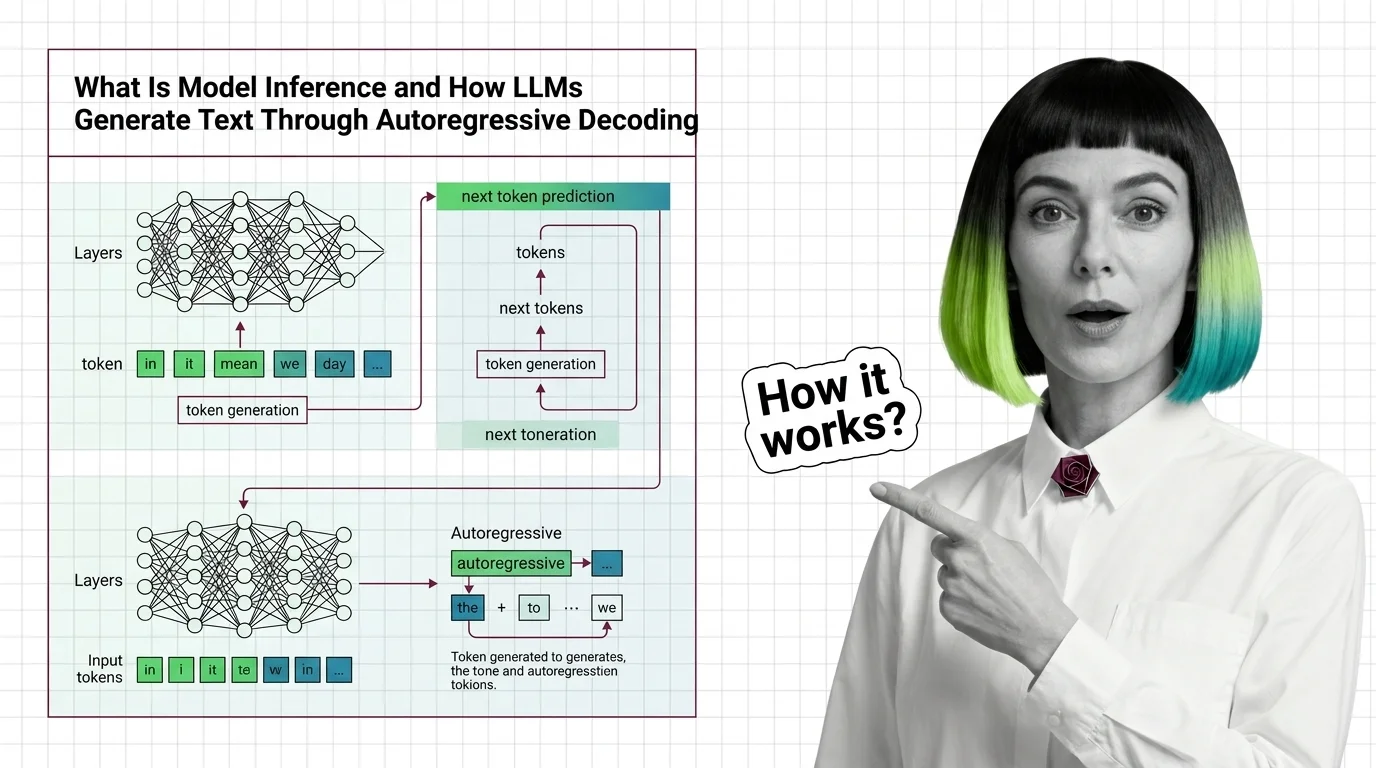

Inference is the process of running a trained machine learning model to generate predictions, classifications, or text in real time. For large language models, inference involves autoregressive token generation, memory management through KV-cache, and careful balancing of latency against throughput to meet production requirements. Also known as: Model Inference, LLM Inference.

Understand the Fundamentals

Inference is where training meets reality, converting static model weights into dynamic output one token at a time. These articles unpack the mechanisms that make generation possible and the constraints that shape it.

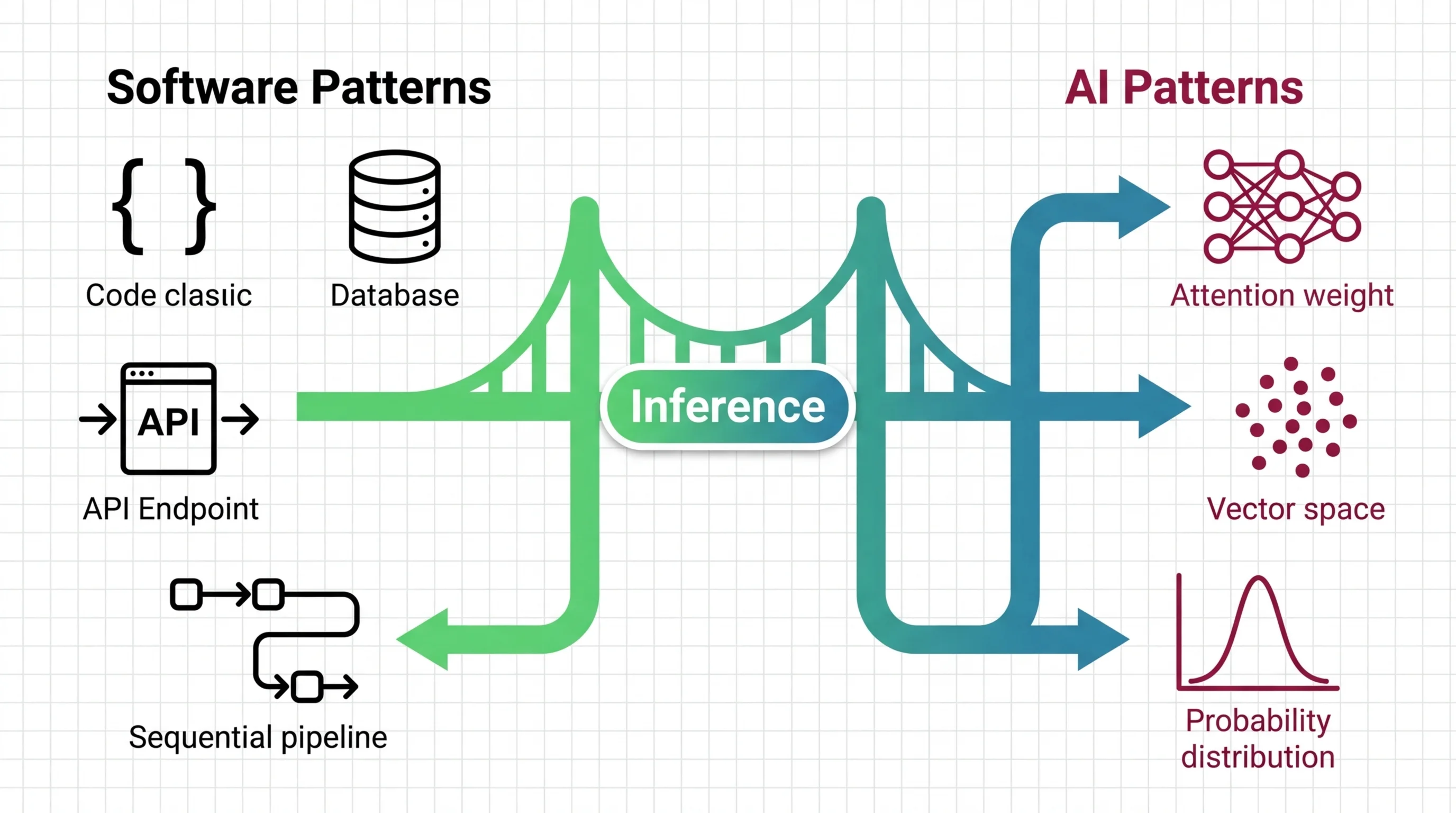

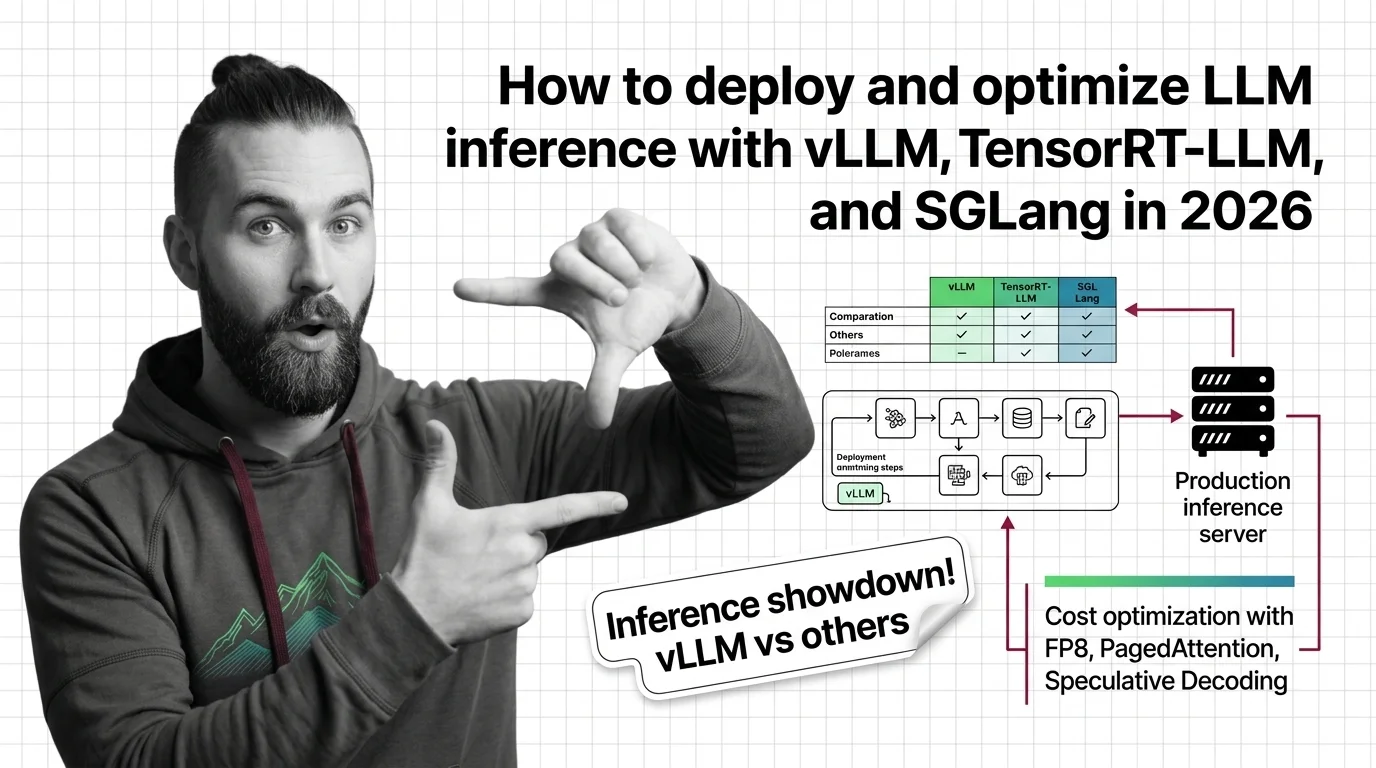

Build with Inference

Deploying inference at scale means choosing the right serving framework, configuring batching strategies, and managing GPU memory under load. These guides walk through the practical decisions that determine cost and speed.

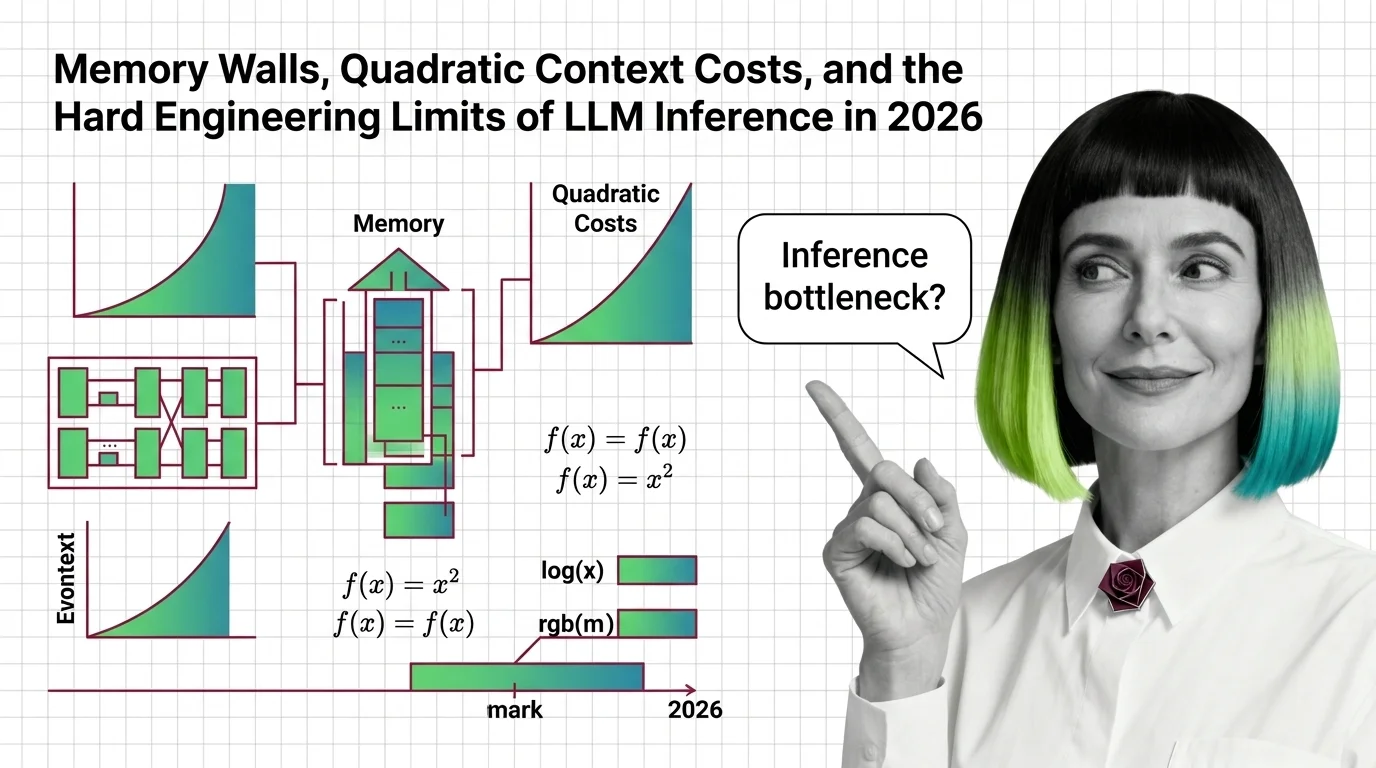

What's Changing in 2026

Inference costs dominate production AI budgets, and the hardware landscape is shifting fast. Staying current on optimization breakthroughs and silicon alternatives can reshape your deployment economics overnight.

Updated March 2026

Risks and Considerations

Running inference at scale raises questions about energy consumption, equitable access, and the hidden costs of always-available AI. These articles examine what responsible deployment looks like beyond raw performance.