Human-in-the-Loop for AI Agents: How Approval Gates Work

Human-in-the-loop for AI agents pauses autonomous workflows at risky steps and routes them to a human gate. Here's how approval works in production.

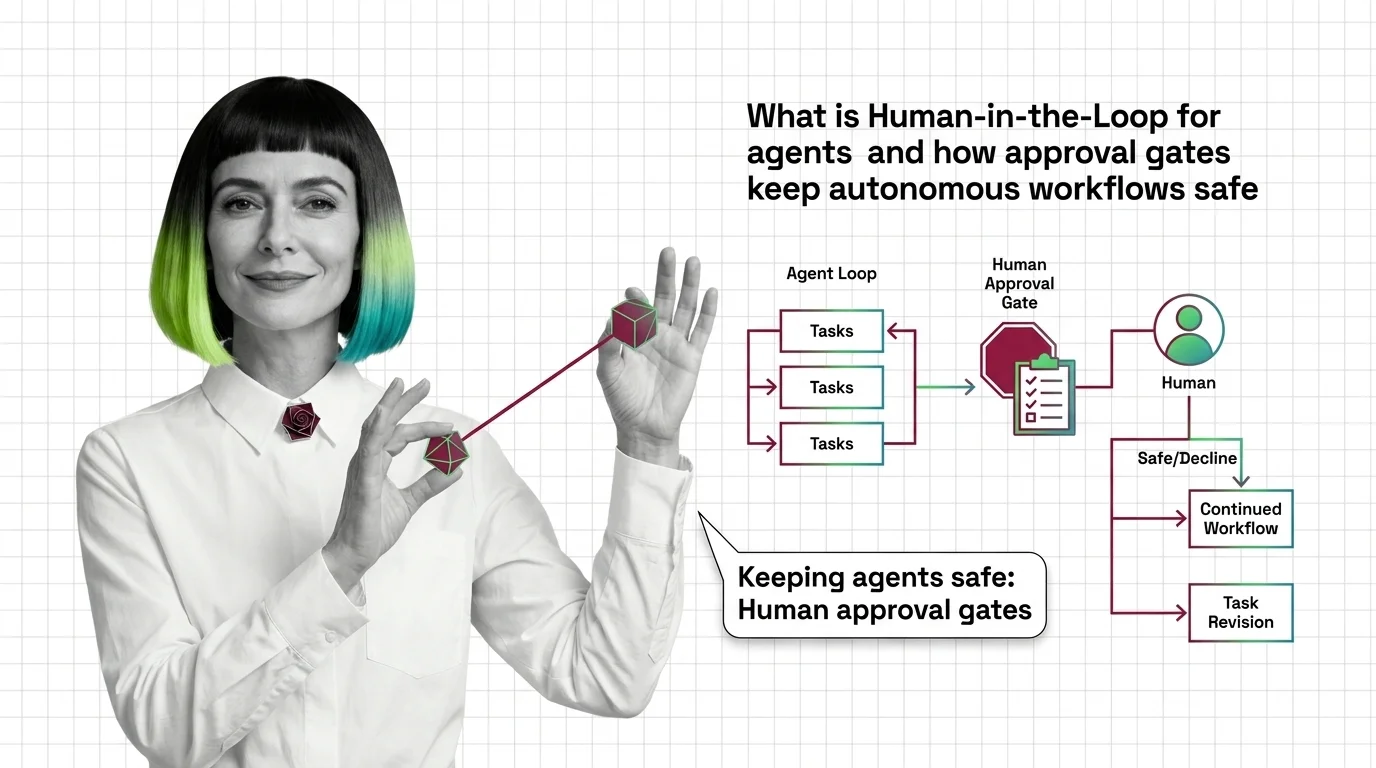

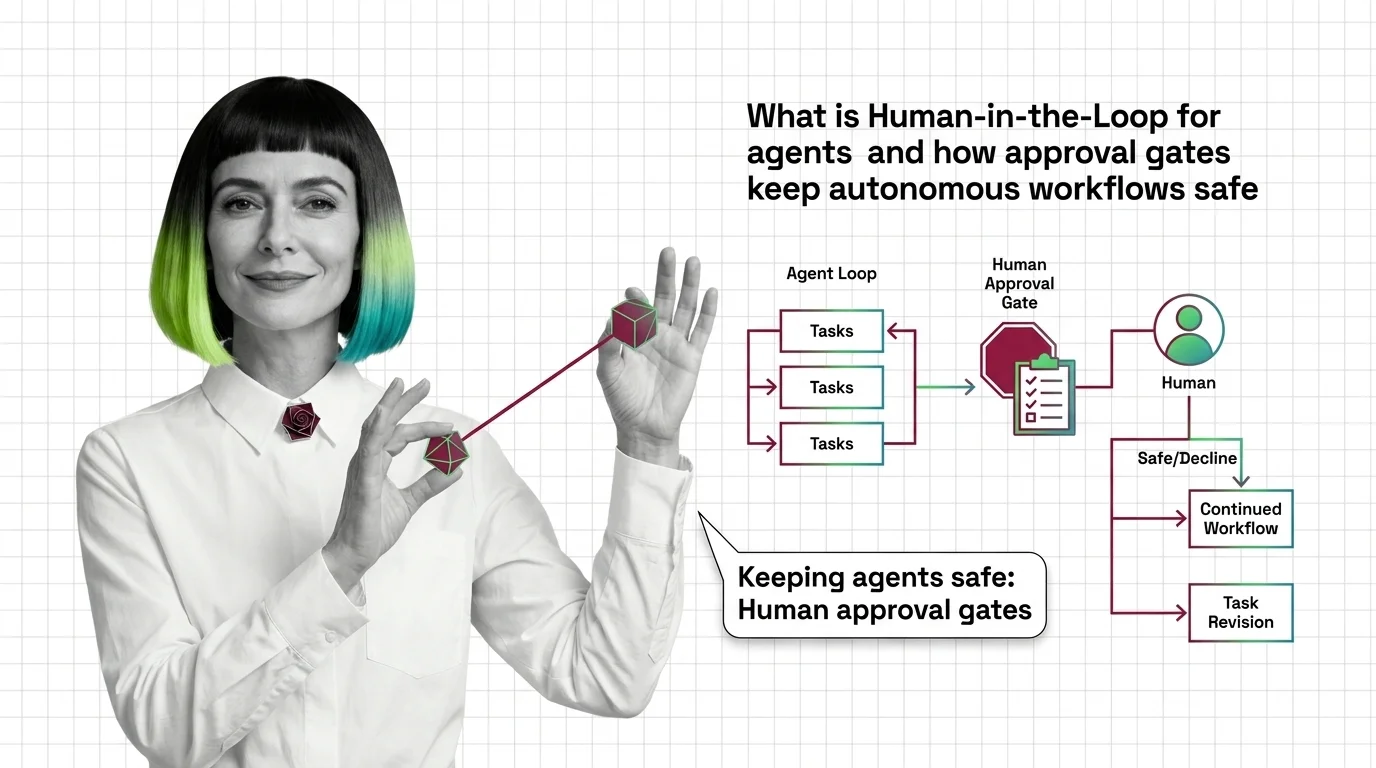

Human-in-the-loop for agents is a design pattern that pauses an autonomous workflow at defined checkpoints so a person can approve, edit, or reject the next action.

It covers approval gates, escalation policies, and feedback loops that let teams hand routine work to agents while keeping a human accountable for sensitive decisions like payments, code merges, or customer messages. Also known as: HITL.
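To make the pattern concrete, here is a minimal sketch of an approval gate in plain Python. It is not taken from any framework: `ProposedAction`, `Review`, `SENSITIVE_KINDS`, and `run_with_gate` are hypothetical names, and the list of sensitive action kinds simply mirrors the examples above.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Any, Callable, Optional


class Decision(Enum):
    APPROVE = "approve"
    EDIT = "edit"
    REJECT = "reject"


@dataclass
class ProposedAction:
    kind: str      # e.g. "payment", "code_merge", "customer_message"
    payload: dict


@dataclass
class Review:
    decision: Decision
    edited_payload: Optional[dict] = None  # only read when decision is EDIT


# Hypothetical policy: action kinds that must stop at a human gate.
SENSITIVE_KINDS = {"payment", "code_merge", "customer_message"}


def run_with_gate(
    action: ProposedAction,
    ask_human: Callable[[ProposedAction], Review],
    execute: Callable[[ProposedAction], Any],
) -> Any:
    """Run routine actions directly; route sensitive ones through a reviewer."""
    if action.kind not in SENSITIVE_KINDS:
        return execute(action)

    review = ask_human(action)  # the workflow waits here for a person
    if review.decision is Decision.REJECT:
        return None             # nothing is executed
    if review.decision is Decision.EDIT:
        # The reviewer's version replaces the agent's proposal.
        action = ProposedAction(action.kind, review.edited_payload or action.payload)
    return execute(action)
```

In this sketch `ask_human` blocks in-process, which is only reasonable for a demo. A production gate would typically persist the pending action and resume when the reviewer responds, possibly hours later.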

What this topic covers


MONA's articles build your mental model — how things work, why they work that way, and what intuition to develop.

Concepts covered


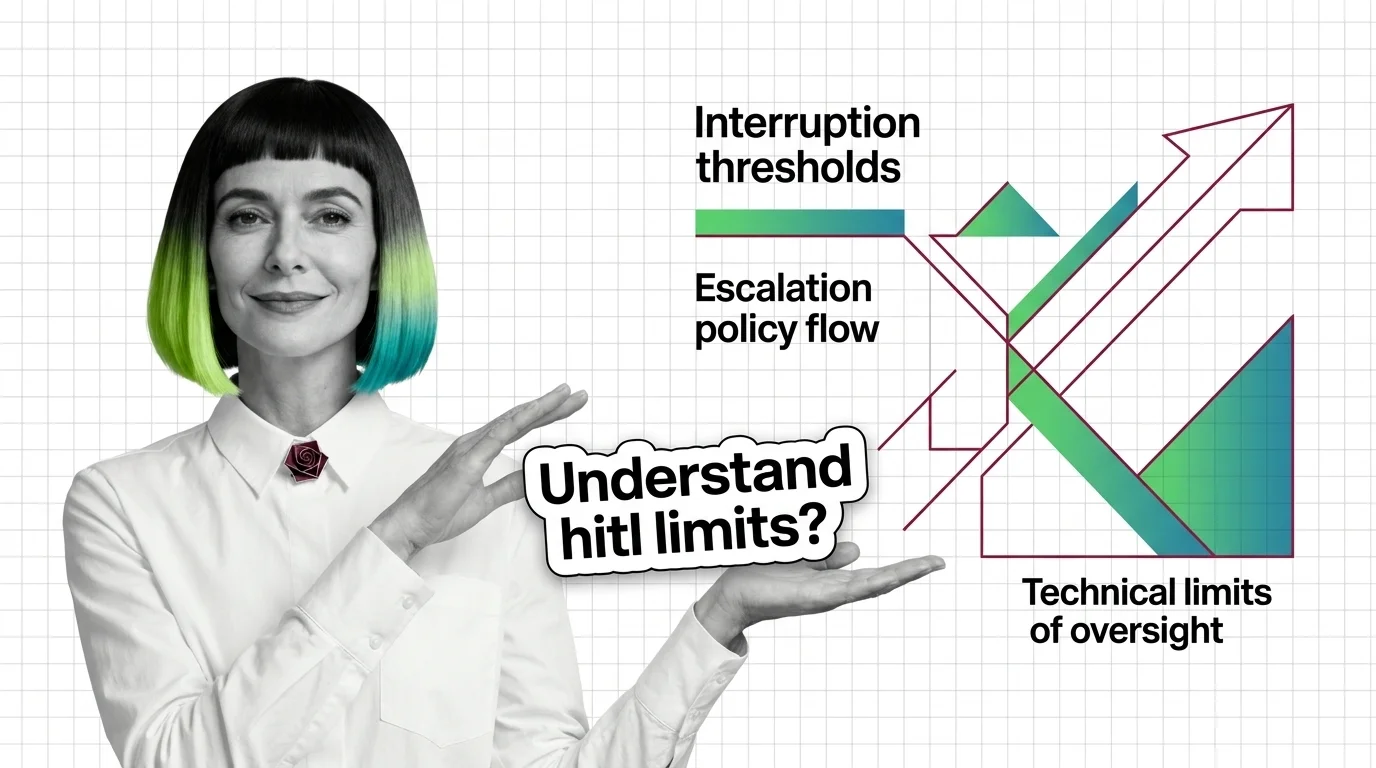

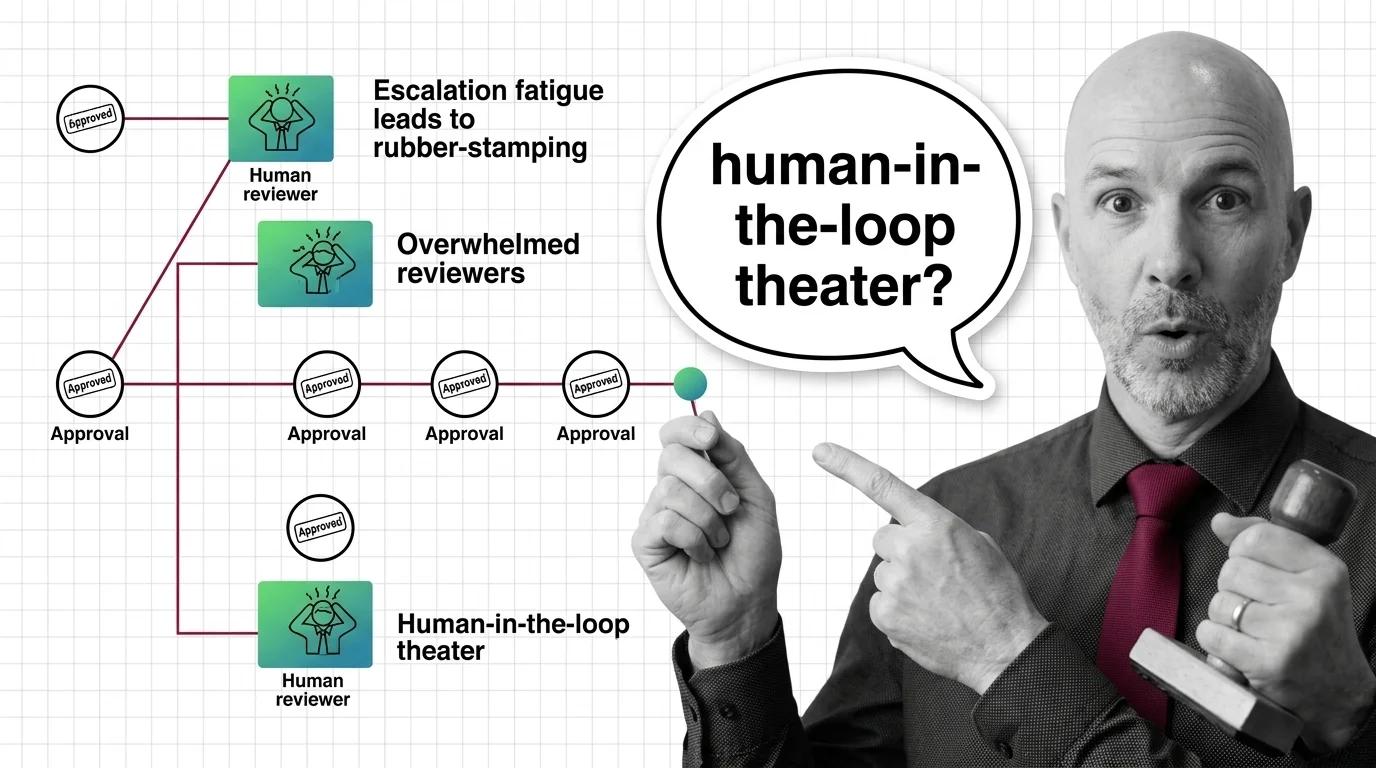

HITL for agents is easy to start and hard to scale. Learn the prerequisites — durable state, idempotency, escalation — and where vigilance breaks.
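As a rough illustration of those three prerequisites, the sketch below keeps pending approvals in a JSON file (durable state), ignores repeated requests for the same key (idempotency), and lists requests that have waited too long (a hook for escalation). `ApprovalStore`, its methods, and the file layout are all hypothetical, chosen only for this example.

```python
import json
import time
from pathlib import Path


class ApprovalStore:
    """File-backed store so pending approvals survive a process restart."""

    def __init__(self, path):
        self.path = Path(path)
        self.records = json.loads(self.path.read_text()) if self.path.exists() else {}

    def _save(self):
        self.path.write_text(json.dumps(self.records))

    def request(self, key, action, now=None):
        # Idempotent: a retried request with the same key keeps the first record.
        if key not in self.records:
            self.records[key] = {
                "action": action,
                "status": "pending",
                "requested_at": time.time() if now is None else now,
                "executed": False,
            }
            self._save()
        return self.records[key]

    def decide(self, key, approved):
        self.records[key]["status"] = "approved" if approved else "rejected"
        self._save()

    def execute_once(self, key, run):
        # Runs the action only if it was approved and has not run before.
        rec = self.records[key]
        if rec["status"] != "approved" or rec["executed"]:
            return False
        run(rec["action"])
        rec["executed"] = True
        self._save()
        return True

    def overdue(self, timeout_s, now=None):
        # Keys still pending past the timeout: candidates for escalation.
        now = time.time() if now is None else now
        return [
            key for key, rec in self.records.items()
            if rec["status"] == "pending" and now - rec["requested_at"] > timeout_s
        ]
```

One limitation of this sketch: if the process crashes after `run` completes but before the record is saved, the action could run again on restart. Closing that gap usually means the downstream system itself has to accept an idempotency key.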

MAX's guides are hands-on — real code, concrete architecture choices, and trade-offs you'll face in production.

Tools & techniques

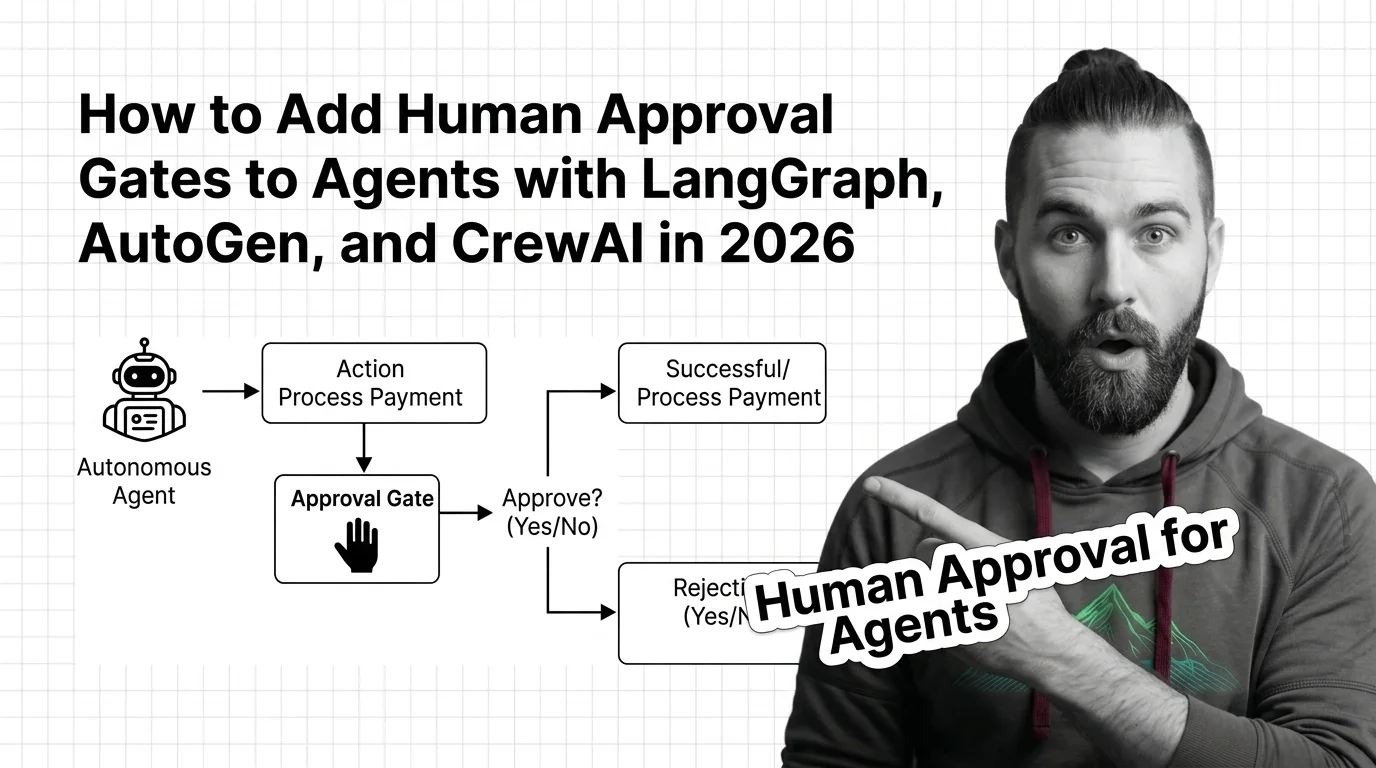

Stop your agent from sending the wrong email or paying the wrong invoice. Spec-first guide to human approval gates in LangGraph, AutoGen, and CrewAI.

DAN tracks how this domain is evolving — which models, techniques, and benchmarks are reshaping 2026.

Models & benchmarks

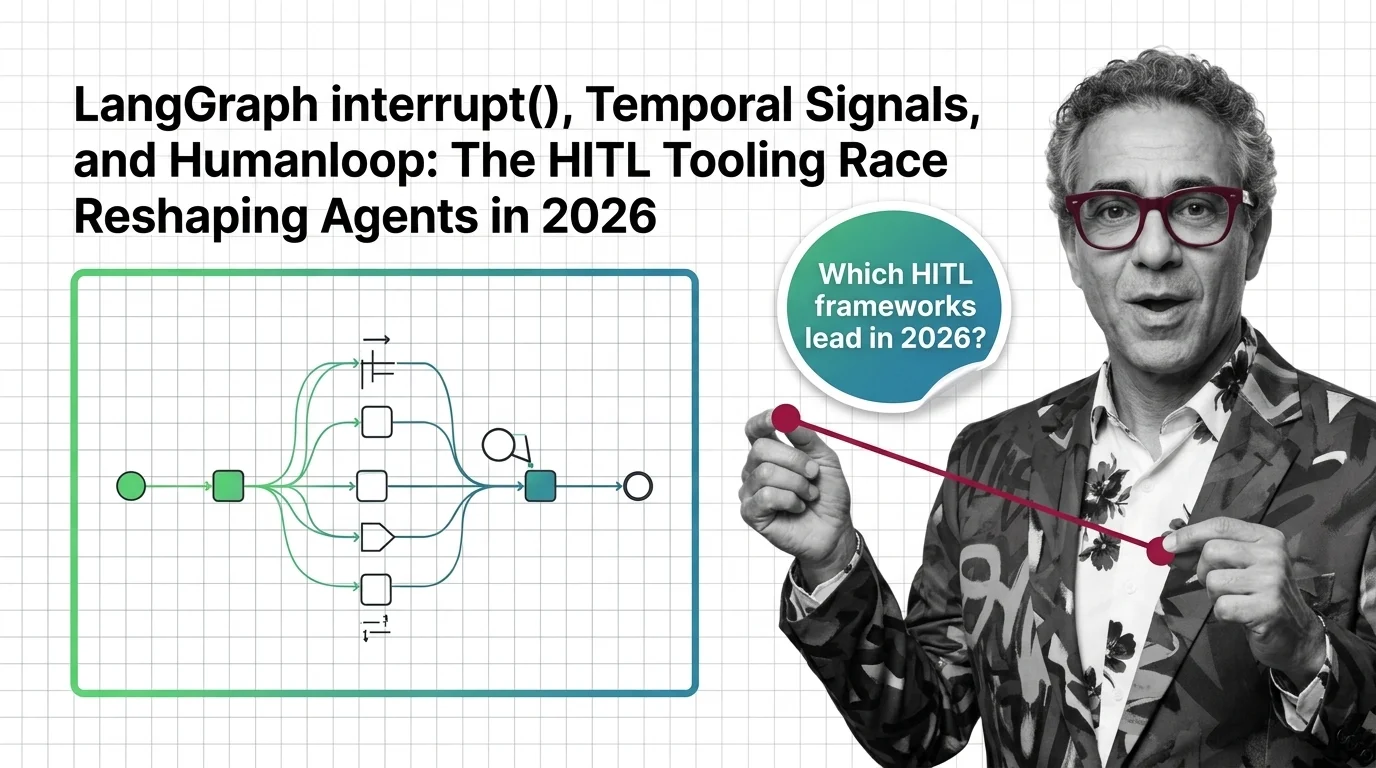

Updated May 2026

LangGraph's interrupt() and Temporal Signals are setting the bar for human-in-the-loop agents in 2026, and Humanloop has been sunset. Here's where HITL tooling is heading.

ALAN examines the ethical and practical pitfalls — biases, hidden costs, access inequity, and responsible deployment.

Risks & metrics

Human-in-the-loop oversight collapses when reviewers face more approval requests than they can realistically review. The ethical cost lands on the human, not the system designer.