Hallucination

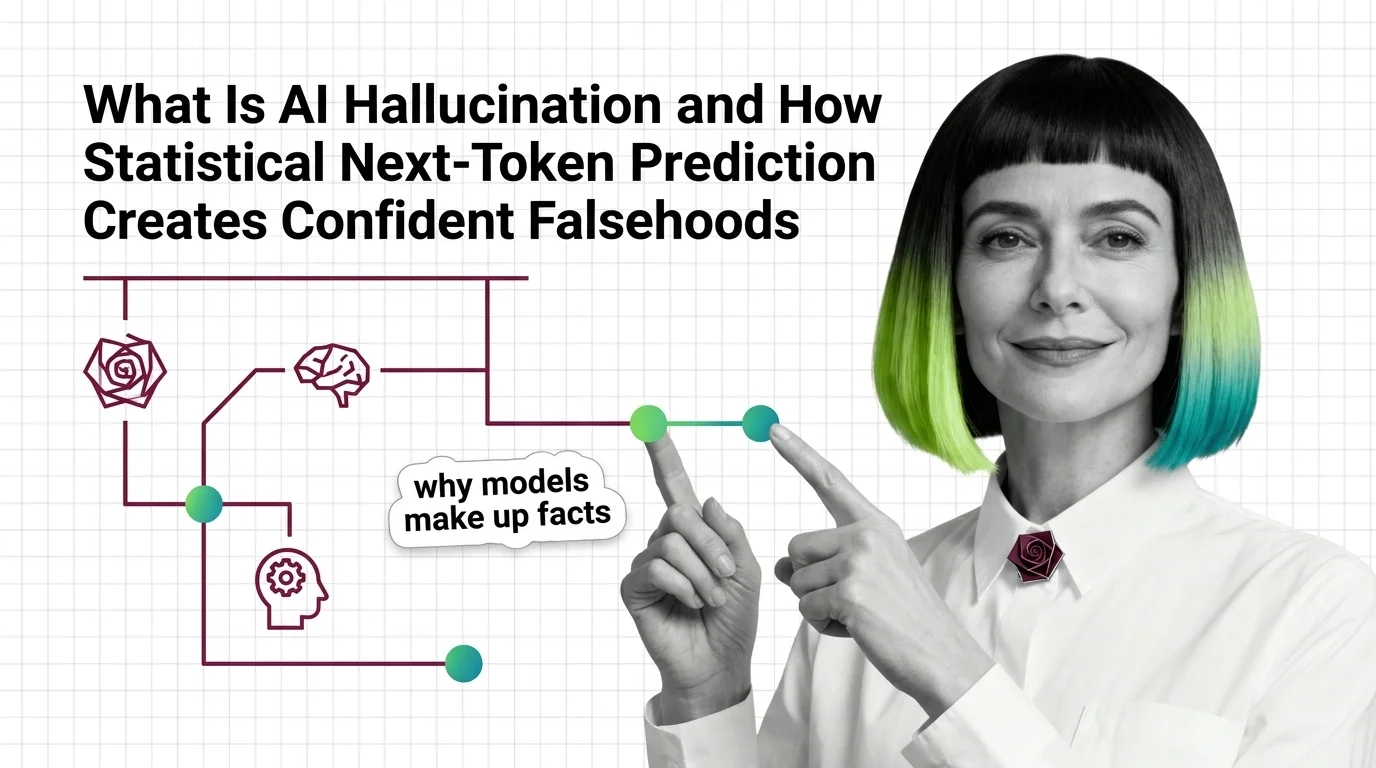

Hallucination is what happens when a large language model generates text that sounds confident and coherent but is factually wrong or entirely fabricated. It stems from the statistical nature of next-token prediction, where the model optimizes for plausibility rather than truth. Detection, mitigation through grounding and retrieval, and careful system design are active areas of research and engineering practice.

Also known as: LLM Hallucination, AI Hallucination

Understand the Fundamentals

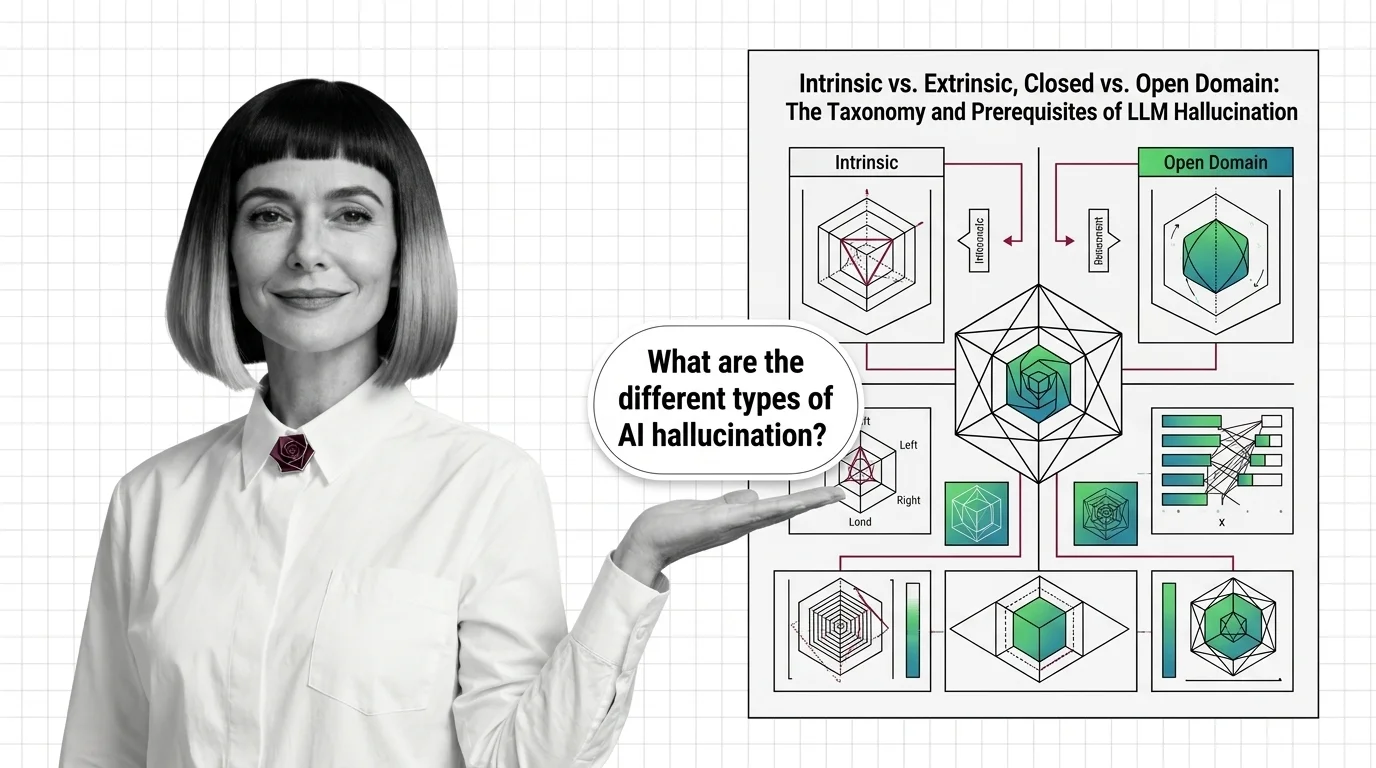

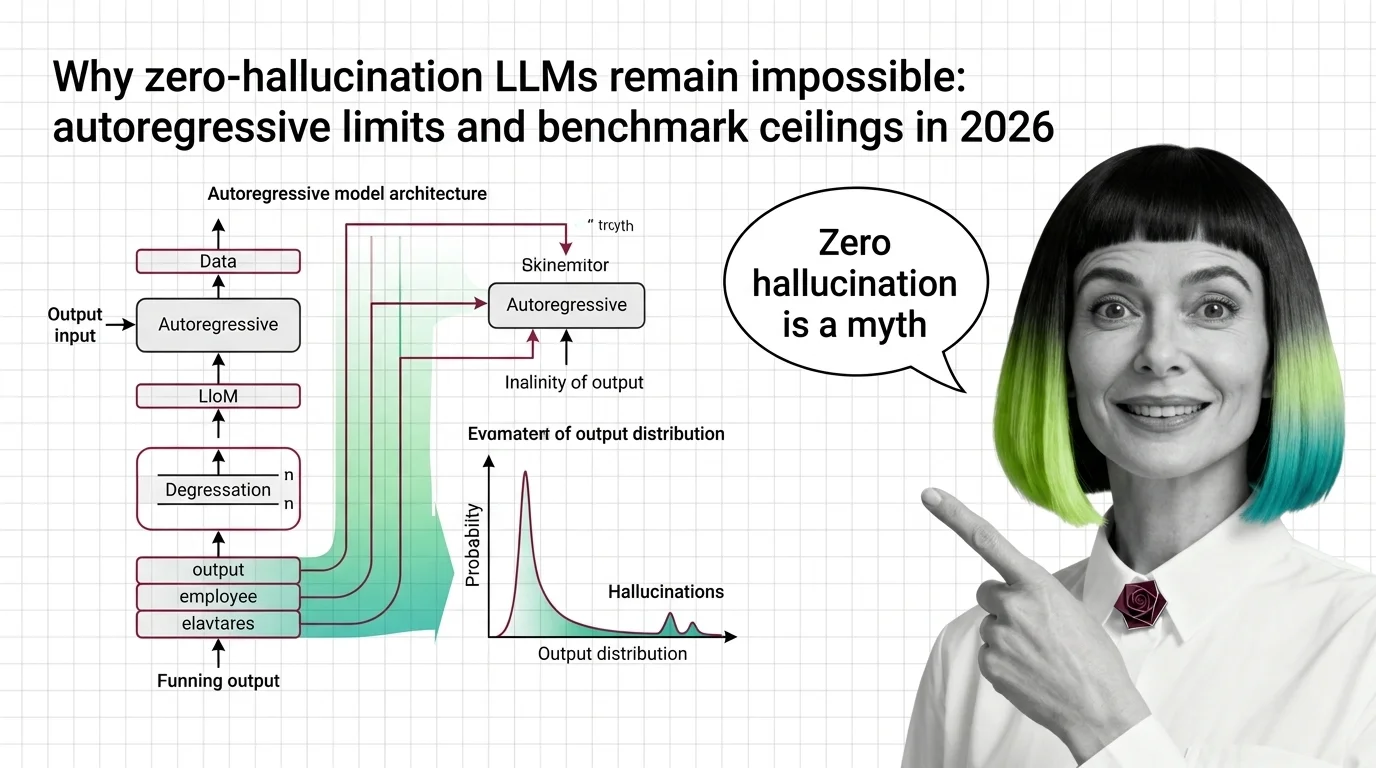

Hallucination reveals a fundamental tension in how language models work: they learn to predict probable sequences, not to verify facts. These explainers unpack the mechanics, taxonomy, and theoretical limits behind confident fabrication.
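To make the "probable, not verified" point concrete, here is a toy sketch of greedy next-token selection. The prompt, the candidate tokens, and every score in `toy_logits` are invented for illustration and do not come from any real model. The only thing the sketch shows is that the selection step ranks candidates by probability and never consults a source of facts.

```python
import math

def softmax(logits):
    """Convert raw scores into a probability distribution."""
    peak = max(logits.values())  # subtract the max for numerical stability
    exps = {tok: math.exp(score - peak) for tok, score in logits.items()}
    total = sum(exps.values())
    return {tok: value / total for tok, value in exps.items()}

def greedy_next_token(logits):
    """Pick the most probable token; nothing here checks whether it is true."""
    probs = softmax(logits)
    return max(probs, key=probs.get)

# Hypothetical scores for the prompt "The capital of Australia is".
# These numbers are made up: they stand in for a model that has seen
# "Sydney" next to "Australia" more often than "Canberra".
toy_logits = {"Sydney": 2.0, "Canberra": 1.5, "Melbourne": 0.5}

probs = softmax(toy_logits)
choice = greedy_next_token(toy_logits)
print(choice)  # Sydney
```

With these assumed scores the fluent but wrong completion wins, because the highest-probability token is chosen whether or not it is correct.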

Build with Hallucination

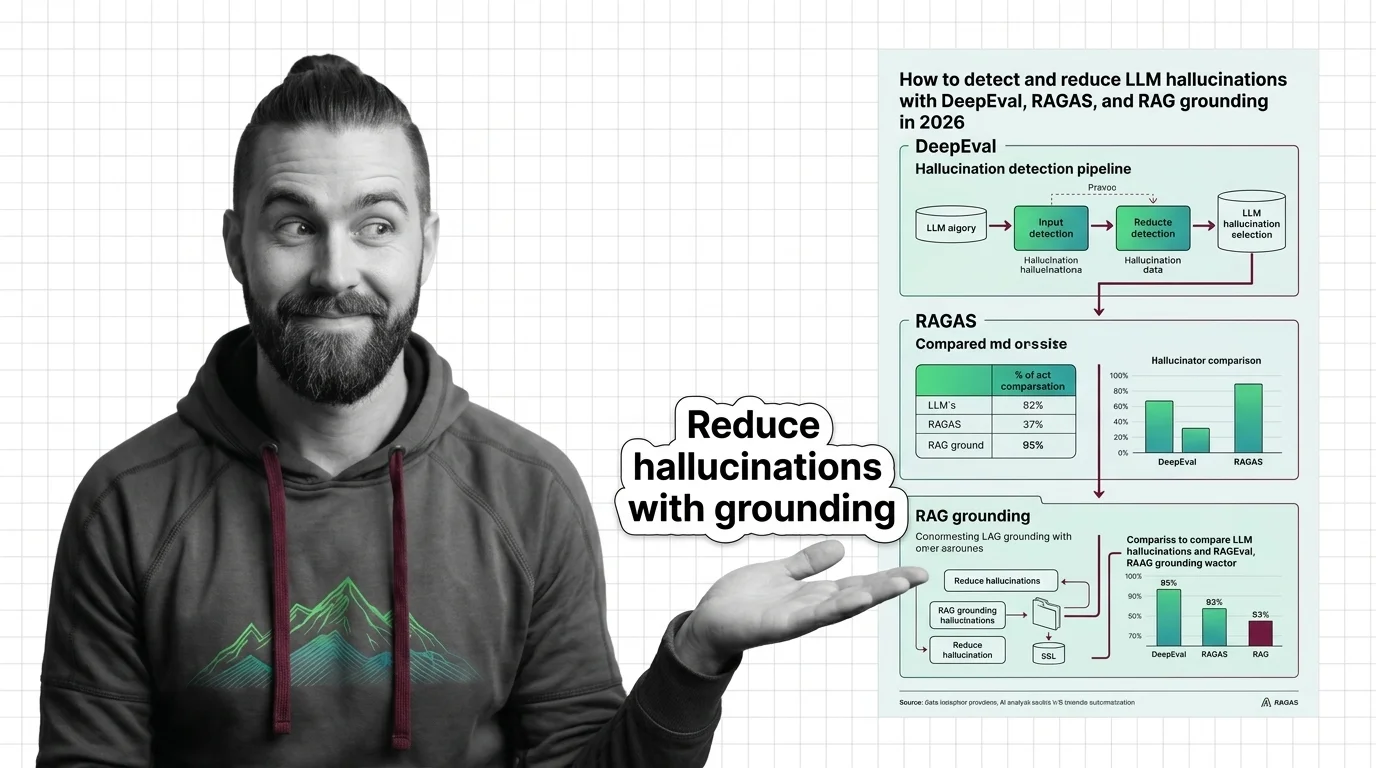

Detecting and reducing hallucinations requires concrete tooling and architectural decisions. These guides walk through grounding strategies, evaluation frameworks, and retrieval-augmented approaches that bring measurable improvement.
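As a rough illustration of what a grounding check can look like, the sketch below flags answer sentences whose content words barely overlap with the retrieved passages. This is a deliberately crude lexical heuristic, not the method of any particular evaluation framework. The function names, the stopword list, the `threshold` value, and the sample passage are all assumptions made for this example.

```python
import re

# Small, hypothetical stopword list; a real system would use a fuller one.
STOPWORDS = {"the", "a", "an", "is", "was", "of", "in", "and", "to", "it", "by"}

def content_words(text):
    """Lowercase the text and keep alphanumeric tokens that are not stopwords."""
    return {w for w in re.findall(r"[a-z0-9]+", text.lower()) if w not in STOPWORDS}

def support_score(sentence, passages):
    """Fraction of the sentence's content words found in any passage."""
    words = content_words(sentence)
    if not words:
        return 1.0  # nothing to verify
    source_words = set()
    for passage in passages:
        source_words |= content_words(passage)
    return len(words & source_words) / len(words)

def flag_unsupported(answer, passages, threshold=0.6):
    """Return the answer sentences whose support score falls below threshold."""
    sentences = [s.strip() for s in re.split(r"(?<=[.!?])\s+", answer) if s.strip()]
    return [s for s in sentences if support_score(s, passages) < threshold]

passages = ["The Eiffel Tower was completed in 1889 and stands in Paris."]
answer = "The Eiffel Tower stands in Paris. It was designed by Leonardo da Vinci."

flagged = flag_unsupported(answer, passages)
print(flagged)  # ['It was designed by Leonardo da Vinci.']
```

Word overlap misses paraphrases and can be fooled by a sentence that reuses source vocabulary while asserting something different, so a check like this is better treated as a cheap first filter than as a verdict.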

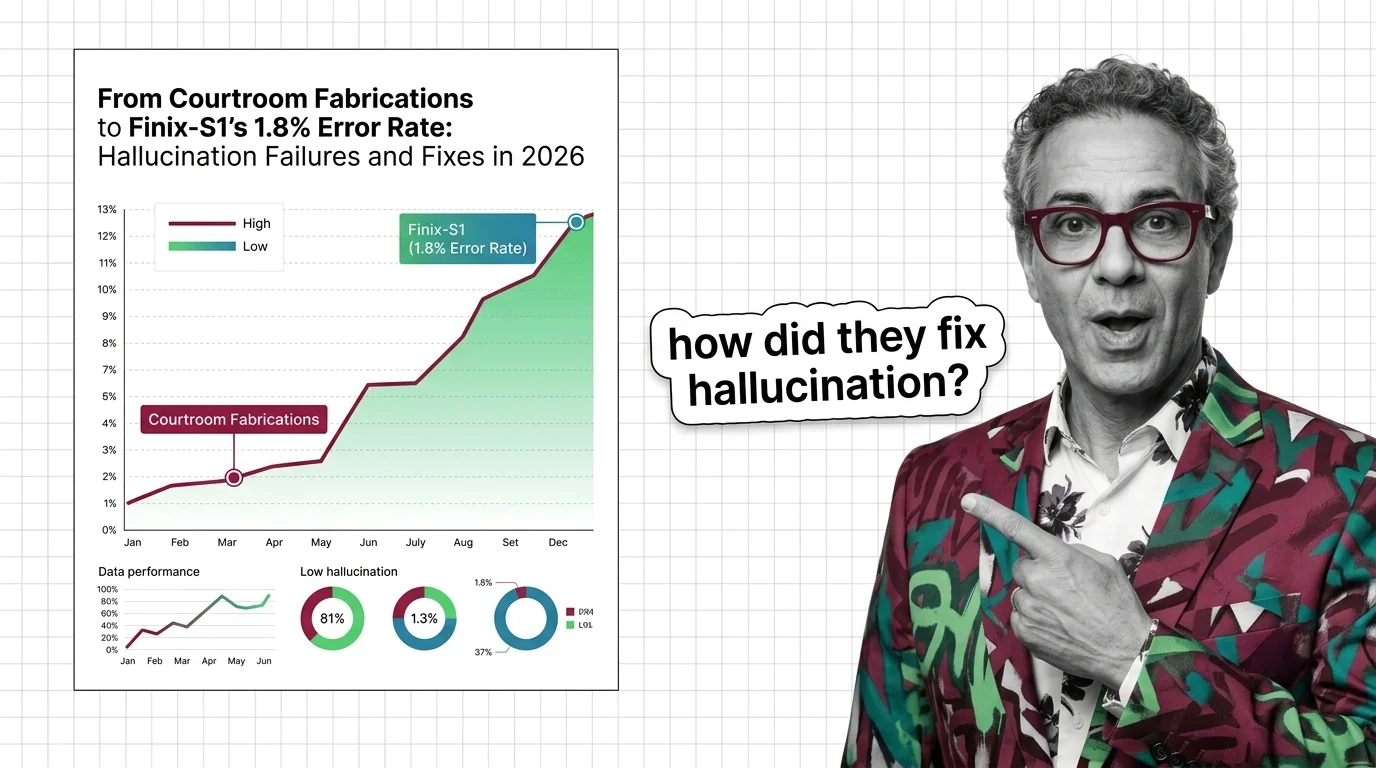

What's Changing in 2026

The race to shrink hallucination rates is reshaping model benchmarks and product launches alike. Staying current on detection breakthroughs and real-world failure cases matters for anyone deploying language models.

Updated March 2026

Risks and Considerations

Hallucinated outputs carry real consequences when users trust them without verification. These pieces examine liability, disclosure obligations, and the ethical weight of systems that present fabrication as fact.