Fine-Tuning

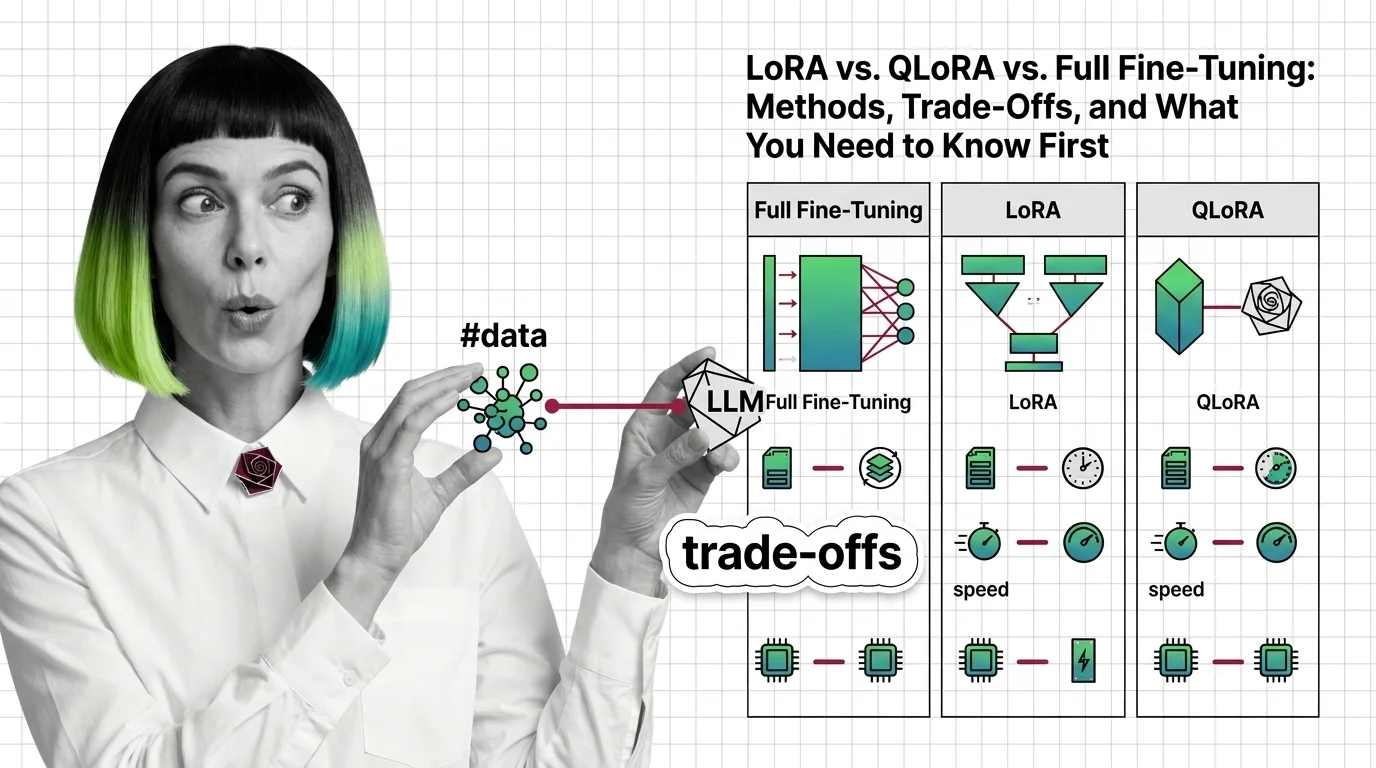

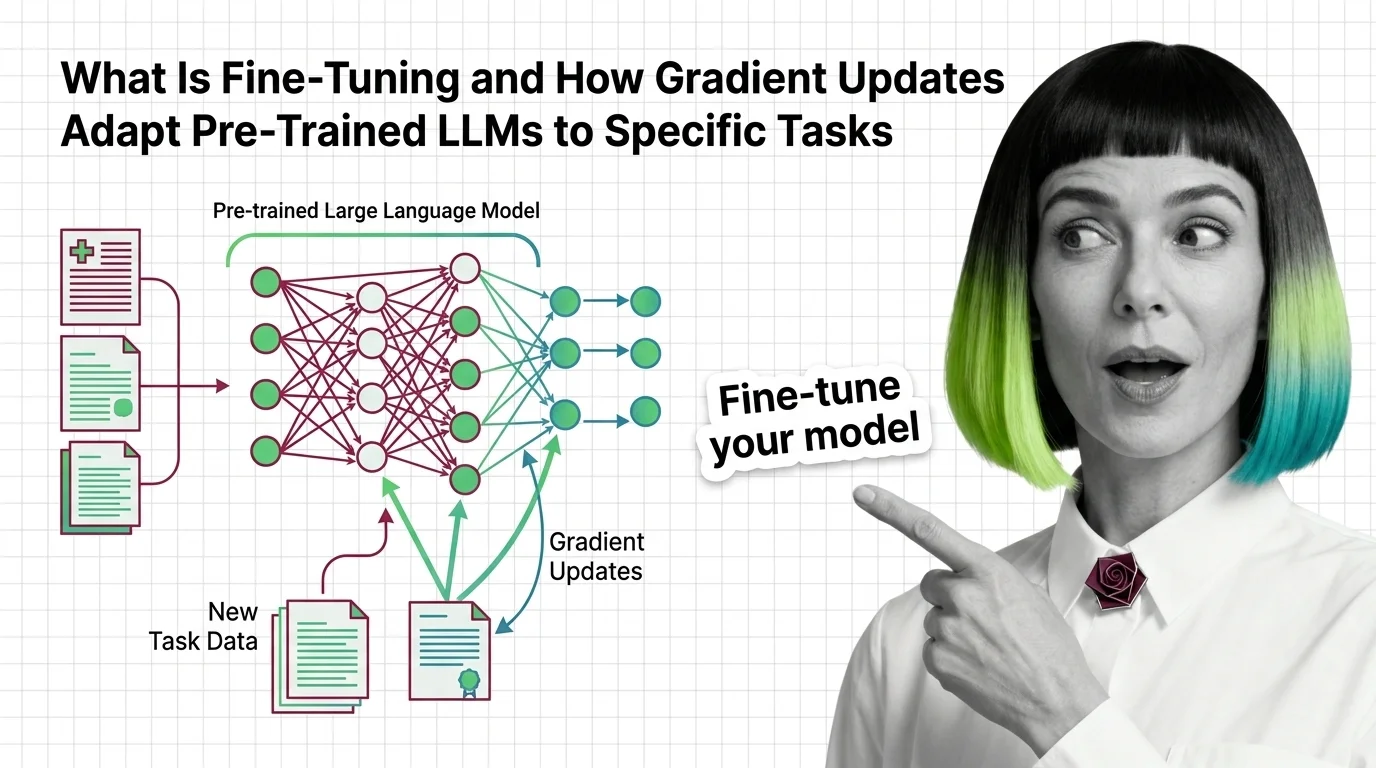

Fine-tuning takes a pre-trained large language model and trains it further on a smaller, task-specific dataset so it performs better at a particular job. Methods range from full fine-tuning, which updates every parameter, to parameter-efficient approaches like LoRA and QLoRA, which freeze the original weights and train only a small set of added low-rank adapter parameters (QLoRA does this on top of a quantized base model). Also known as: Model Fine-Tuning.
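
As a rough illustration of the gap between full fine-tuning and LoRA, the sketch below counts trainable parameters for a single weight matrix. The layer size (4096 by 4096) and the rank (8) are assumed values picked for illustration, not figures from any particular model, and the function names are hypothetical.

```python
# Minimal sketch: trainable-parameter counts for one weight matrix.
# Assumed, illustrative values: a 4096 x 4096 layer and LoRA rank 8.

def full_finetune_params(d_in: int, d_out: int) -> int:
    """Full fine-tuning updates every entry of the d_out x d_in weight matrix."""
    return d_in * d_out


def lora_params(d_in: int, d_out: int, rank: int) -> int:
    """LoRA trains two small matrices, A (rank x d_in) and B (d_out x rank),
    while the original weight matrix stays frozen."""
    return rank * d_in + d_out * rank


d_in = d_out = 4096  # assumed layer dimensions
rank = 8             # assumed LoRA rank

full = full_finetune_params(d_in, d_out)
lora = lora_params(d_in, d_out, rank)
ratio = lora / full

print(f"full fine-tuning: {full:,} trainable parameters")
print(f"LoRA (rank {rank}): {lora:,} trainable parameters")
print(f"LoRA trains {ratio:.2%} of what full fine-tuning would")
```

Under these assumptions the adapter holds well under 1% of the parameters that full fine-tuning would update for the same layer, which is the main reason these methods are cheaper in memory and compute. The real ratio depends on the model's layer shapes, the chosen rank, and which layers receive adapters.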

Understand the Fundamentals

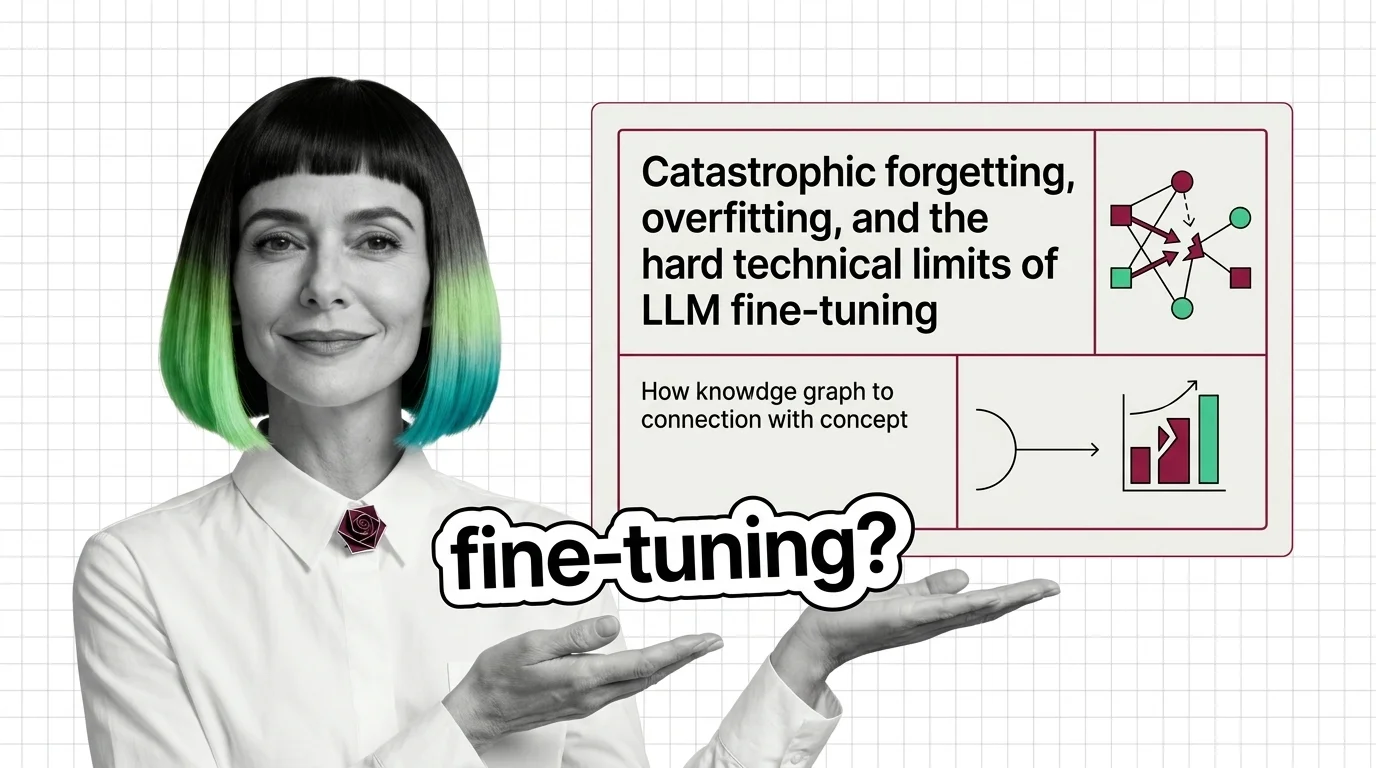

Fine-tuning rewires a general-purpose model into a specialist. These articles explain the gradient mechanics, parameter-efficient methods, and the failure modes that make the difference between a useful model and a broken one.

Build with Fine-Tuning

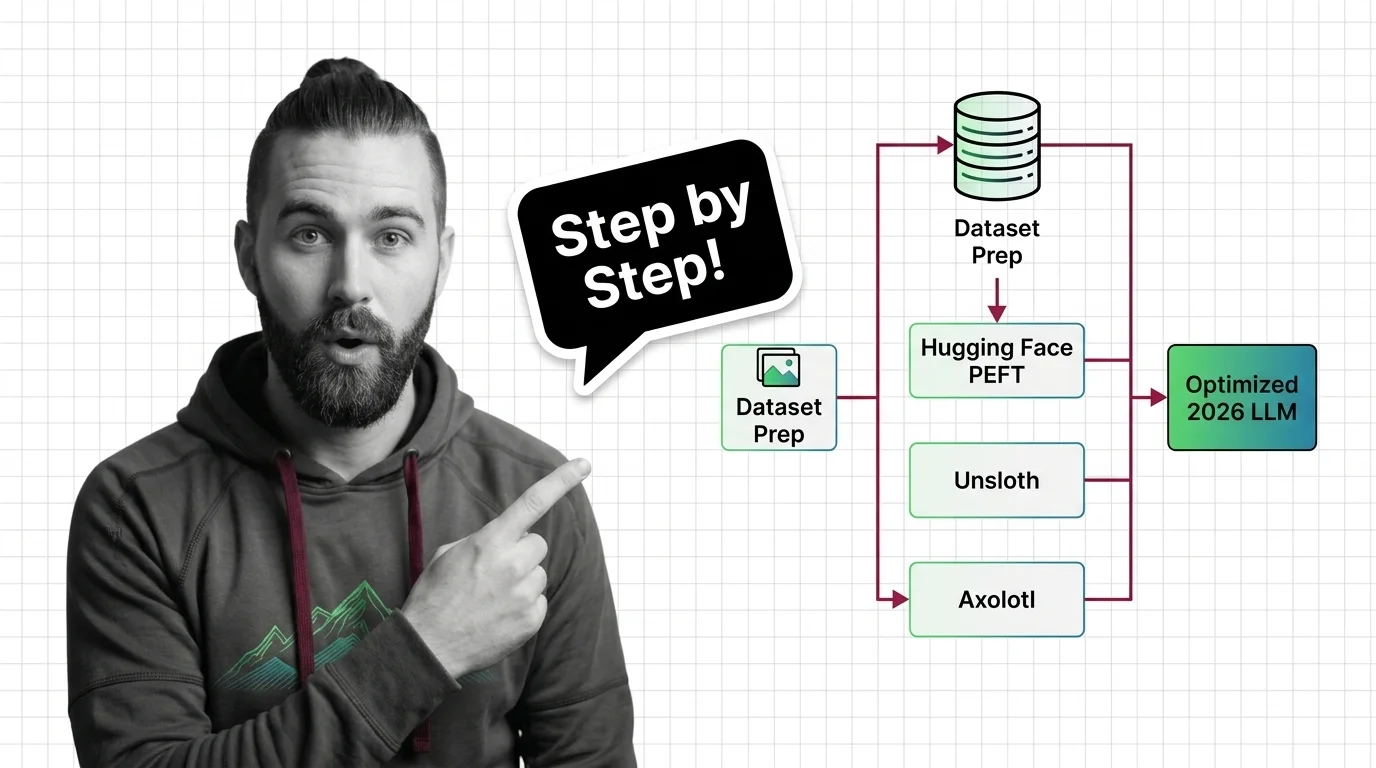

The guides walk through real fine-tuning workflows, covering tooling choices, dataset preparation, and the trade-offs between speed, cost, and model quality you will face at every step.

What's Changing in 2026

The fine-tuning landscape shifts fast as new platforms, pricing models, and efficiency techniques reshape what is practical. Staying current helps you avoid overpaying or missing a better approach entirely.

Updated March 2026

Risks and Considerations

Fine-tuned models inherit and amplify biases from training data, raise unresolved copyright questions, and blur accountability when something goes wrong in production.