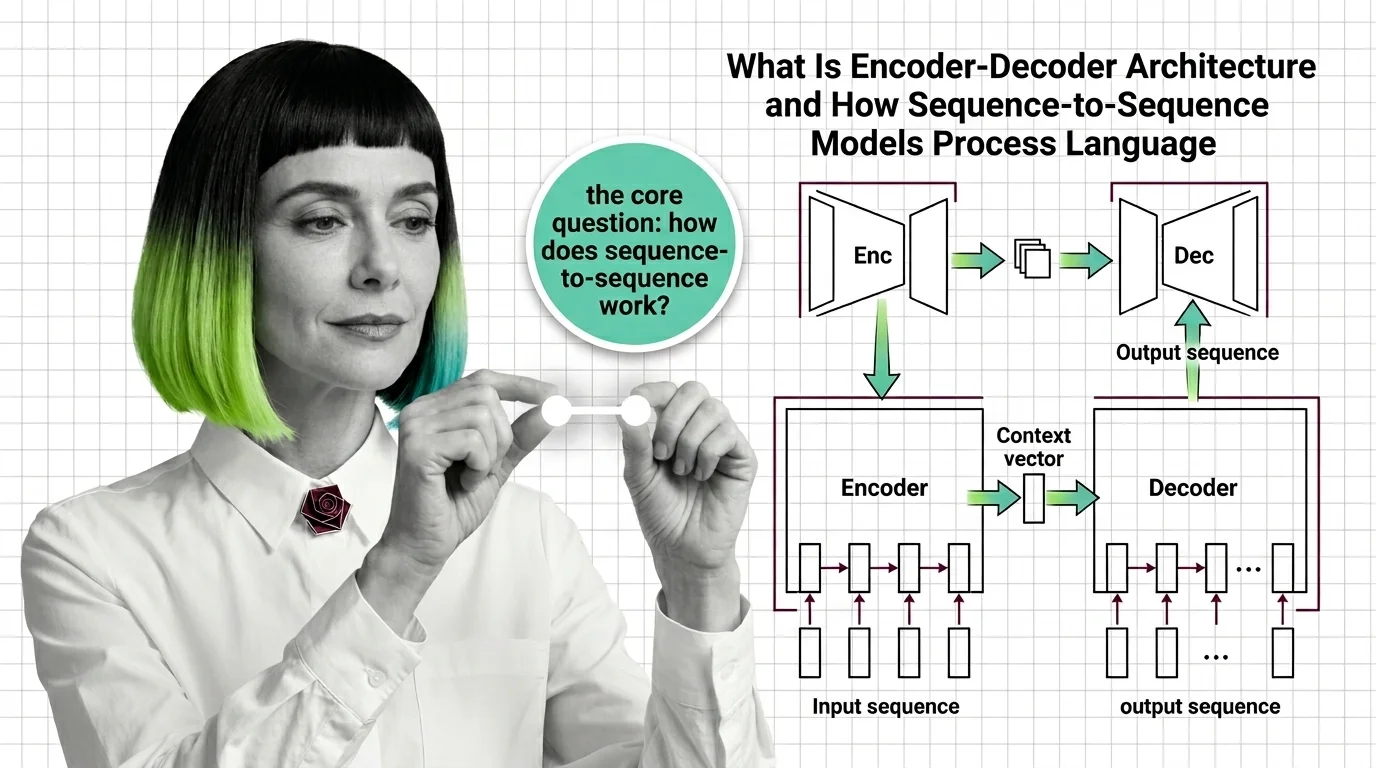

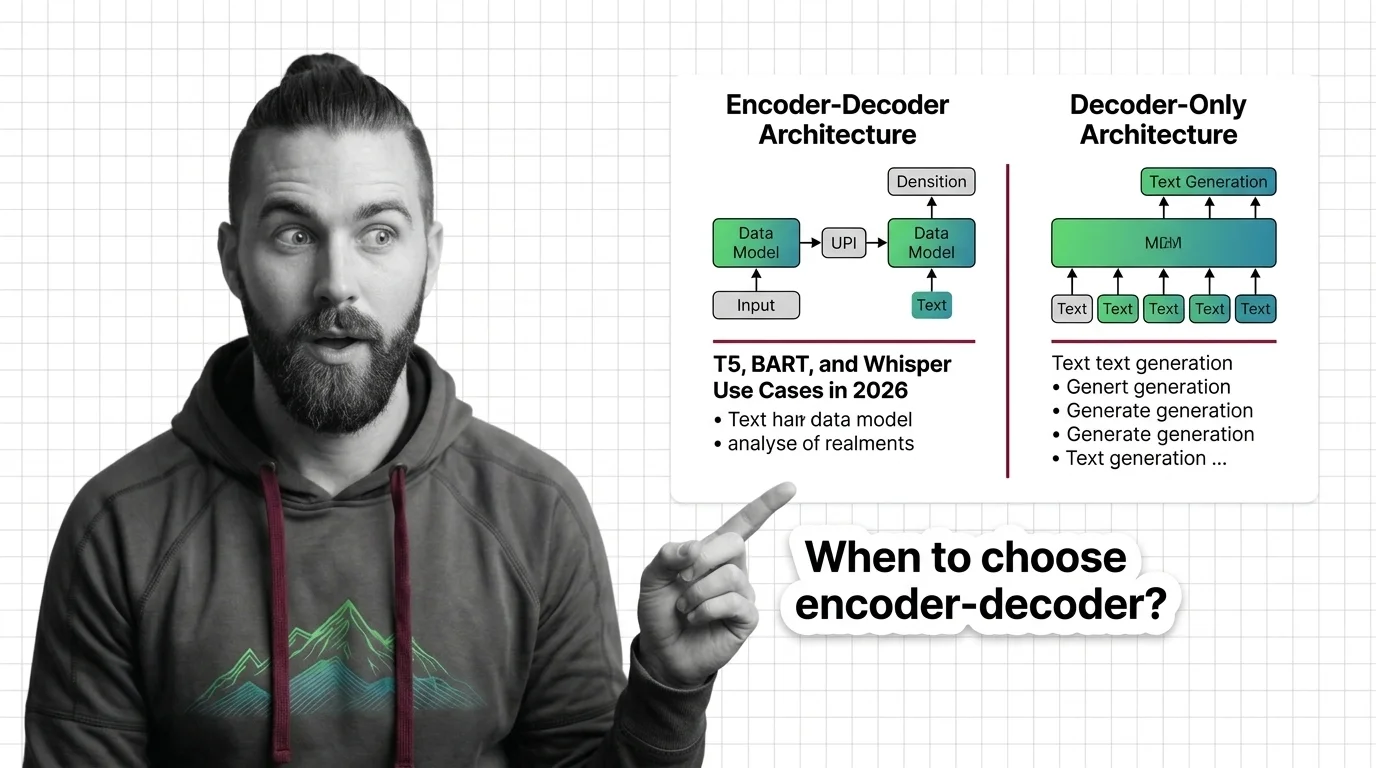

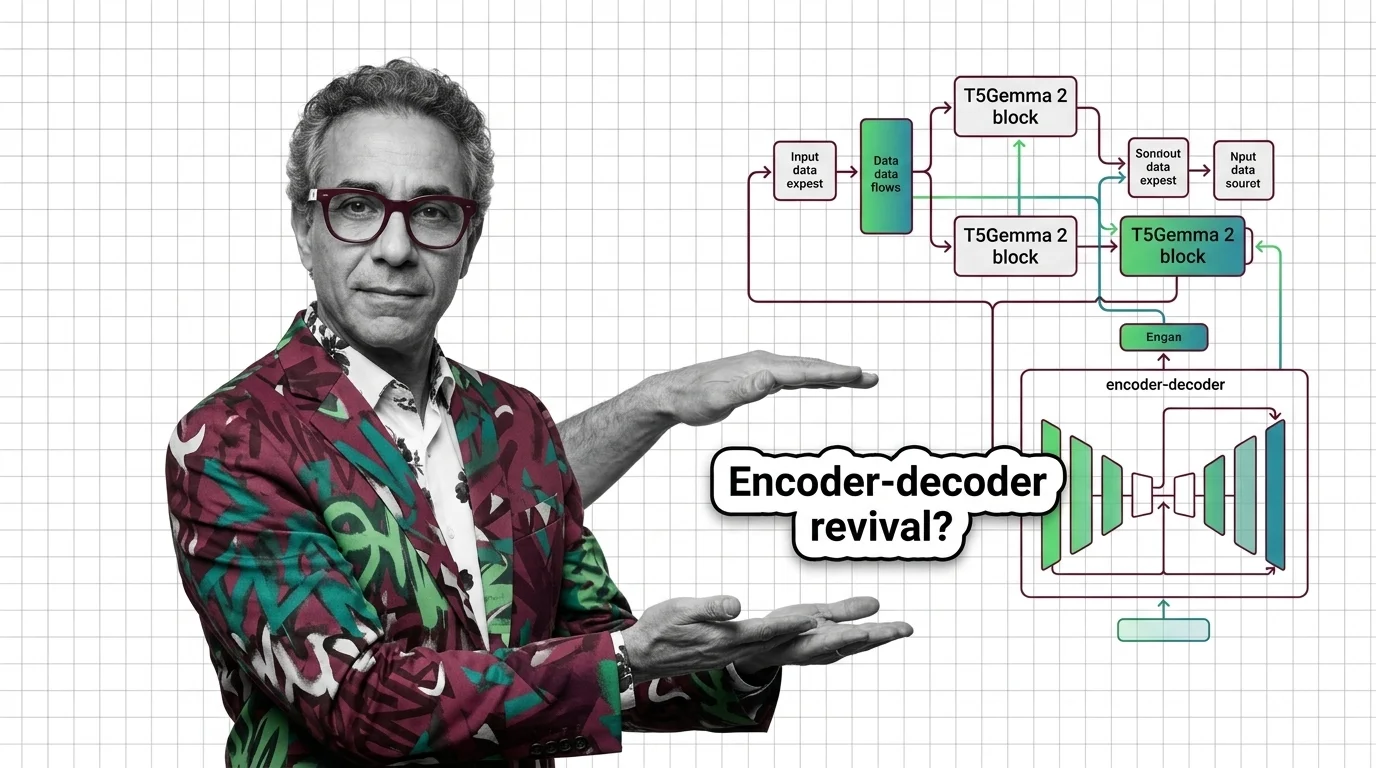

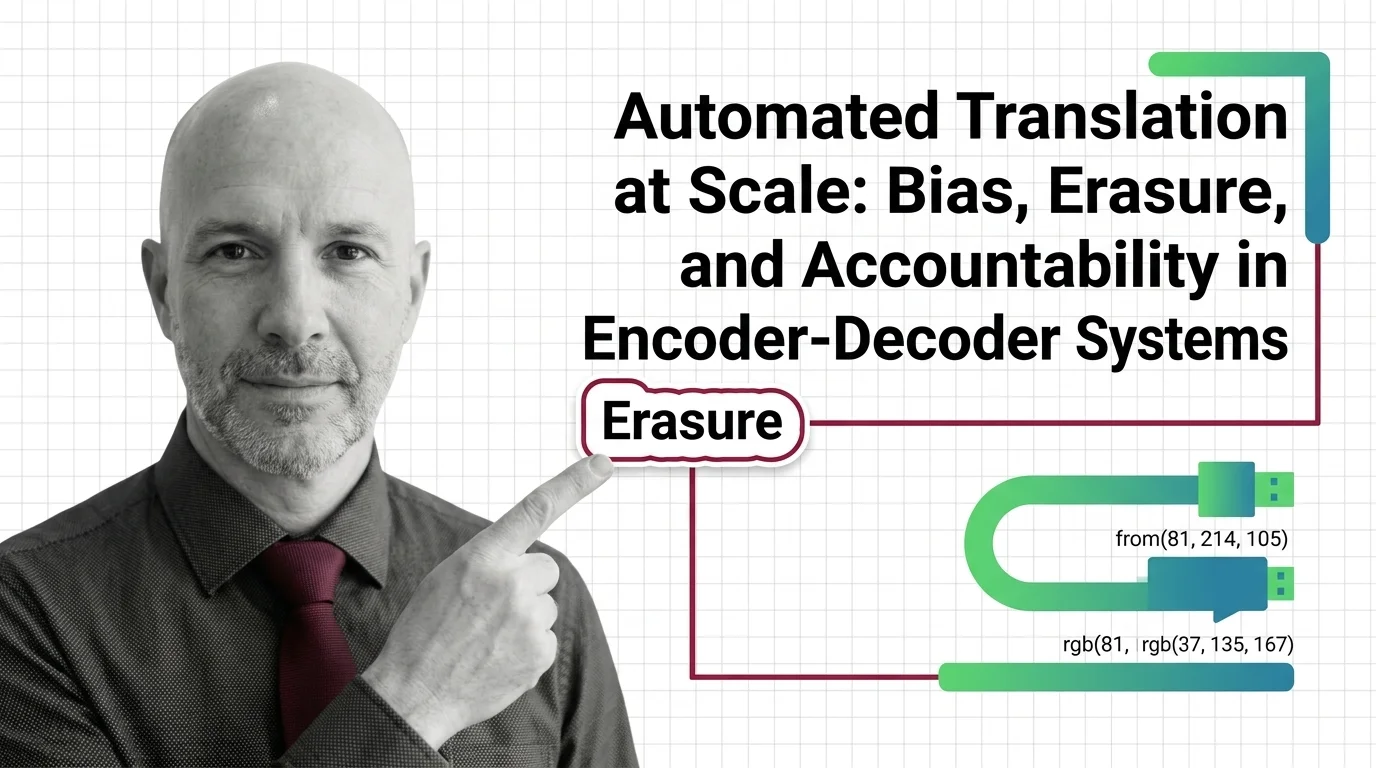

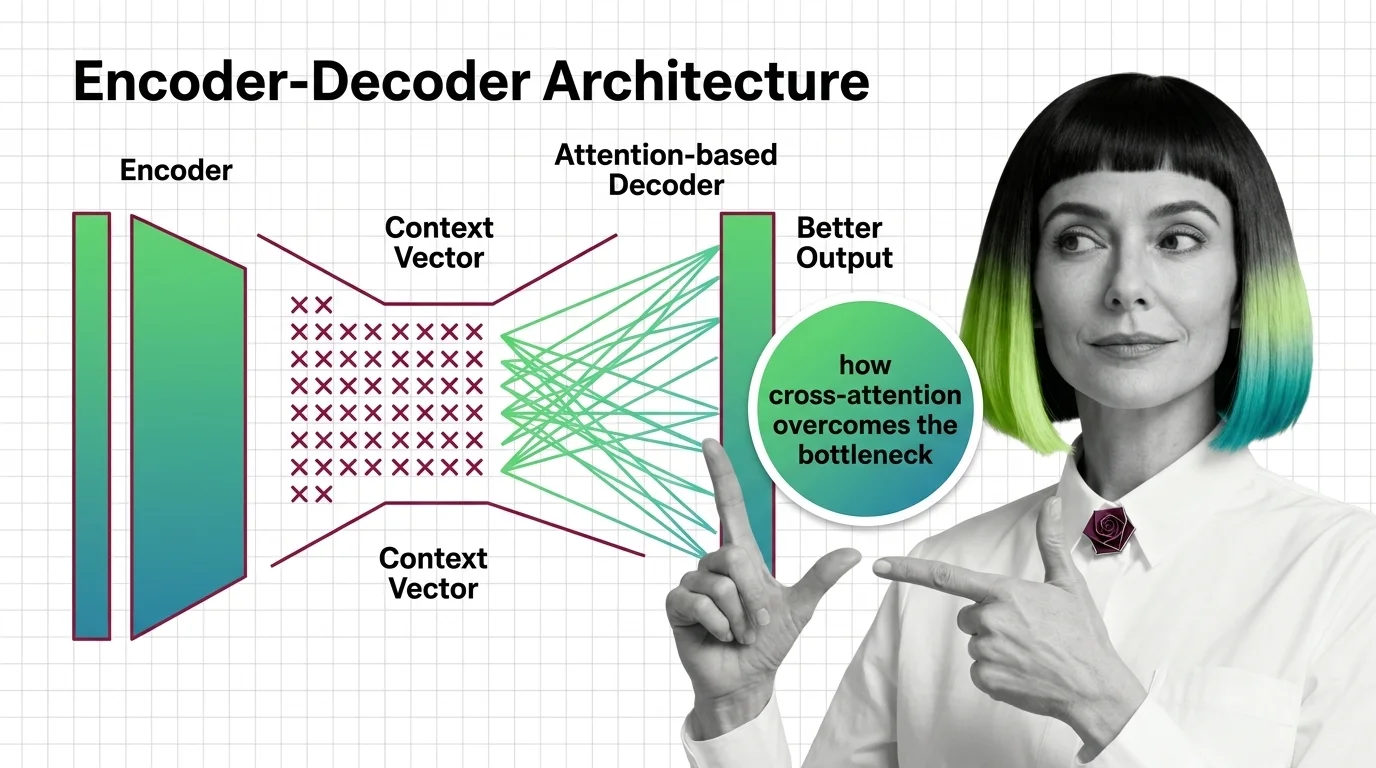

From Context Vectors to Cross-Attention: How Encoder-Decoder Design Overcame the Bottleneck Problem

The original encoder-decoder design compressed every input sequence, however long, into a single fixed-size context vector. Learn how attention replaced that compression with selective access to every encoder position.