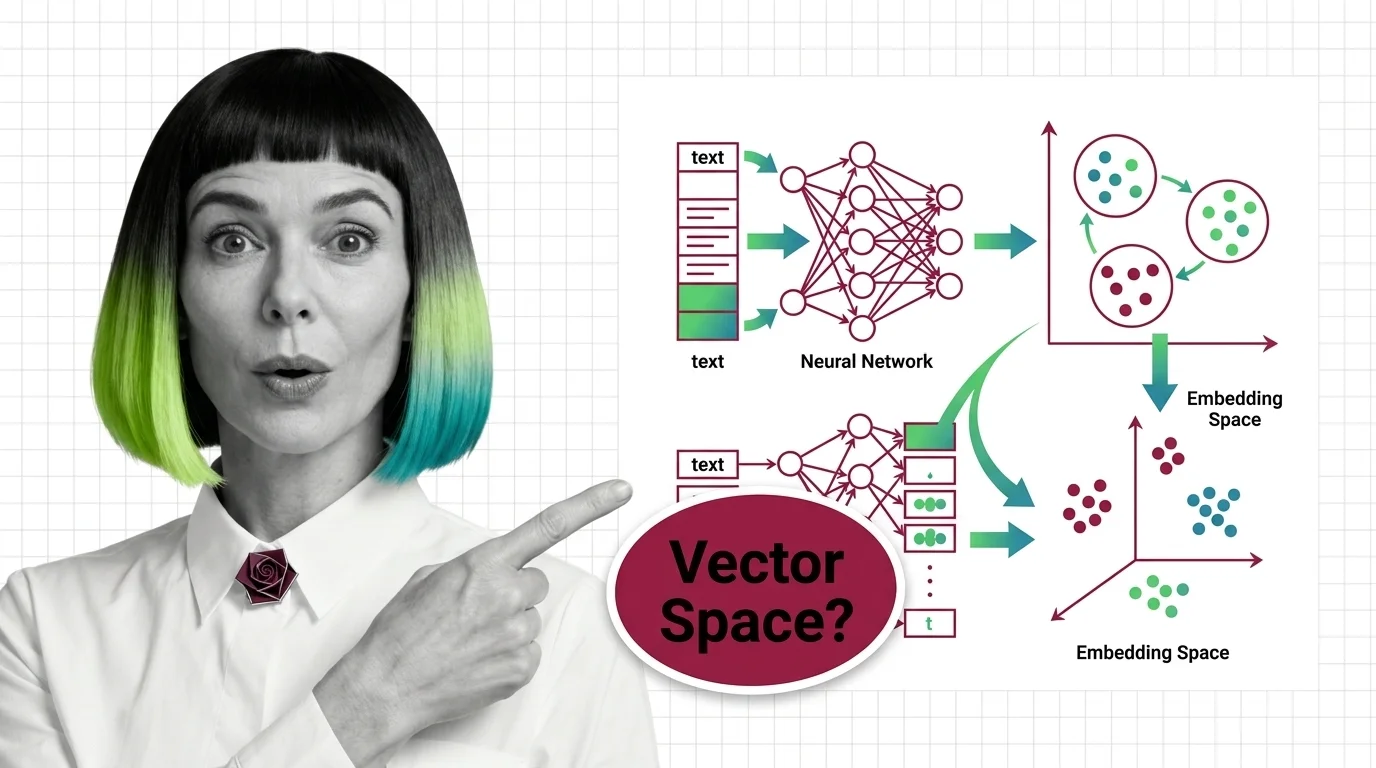

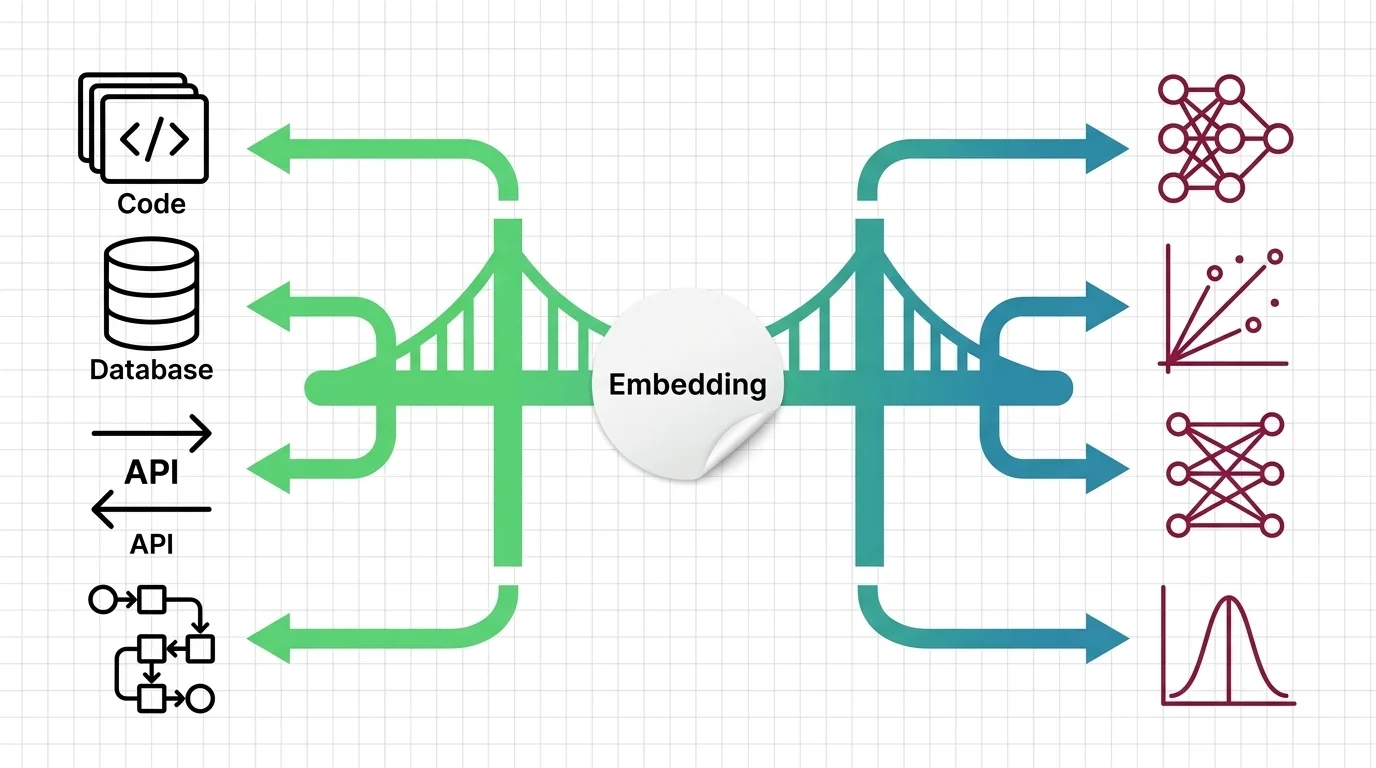

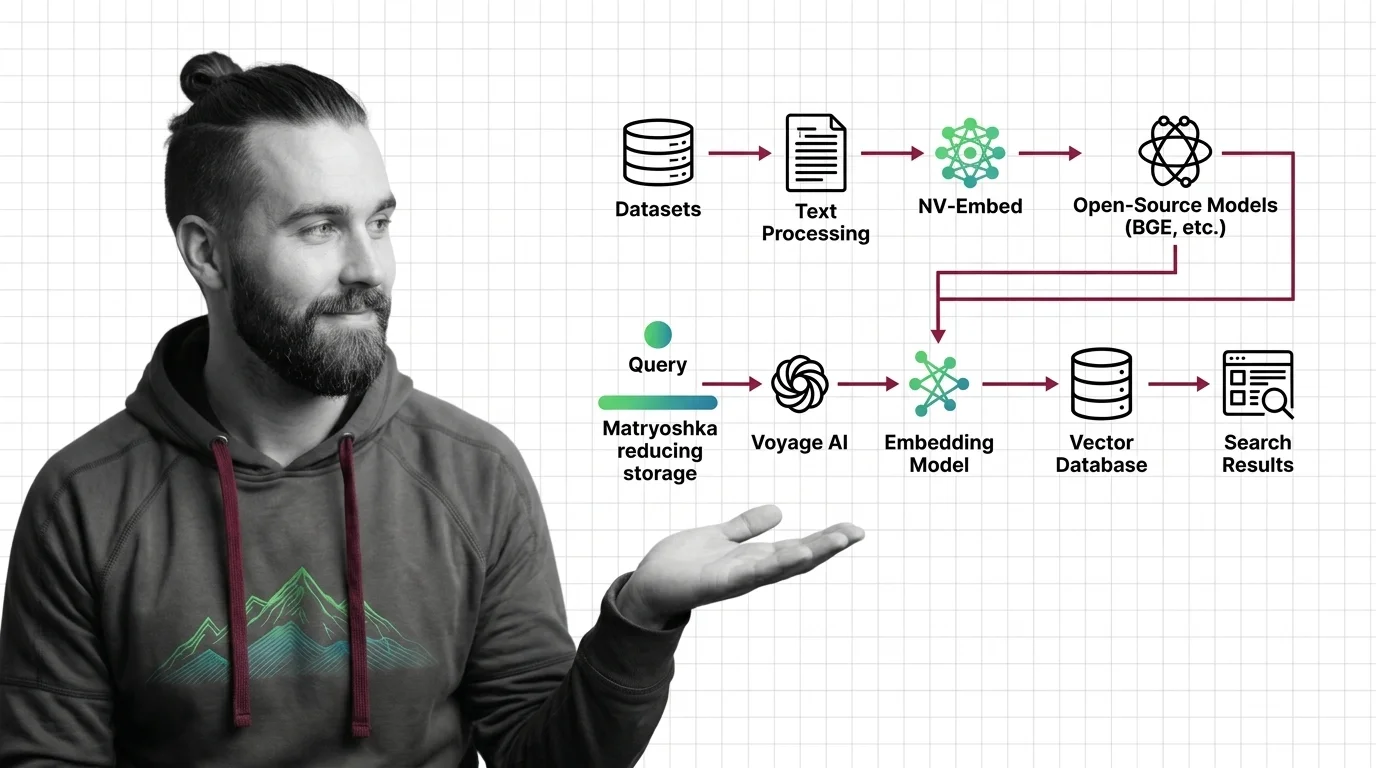

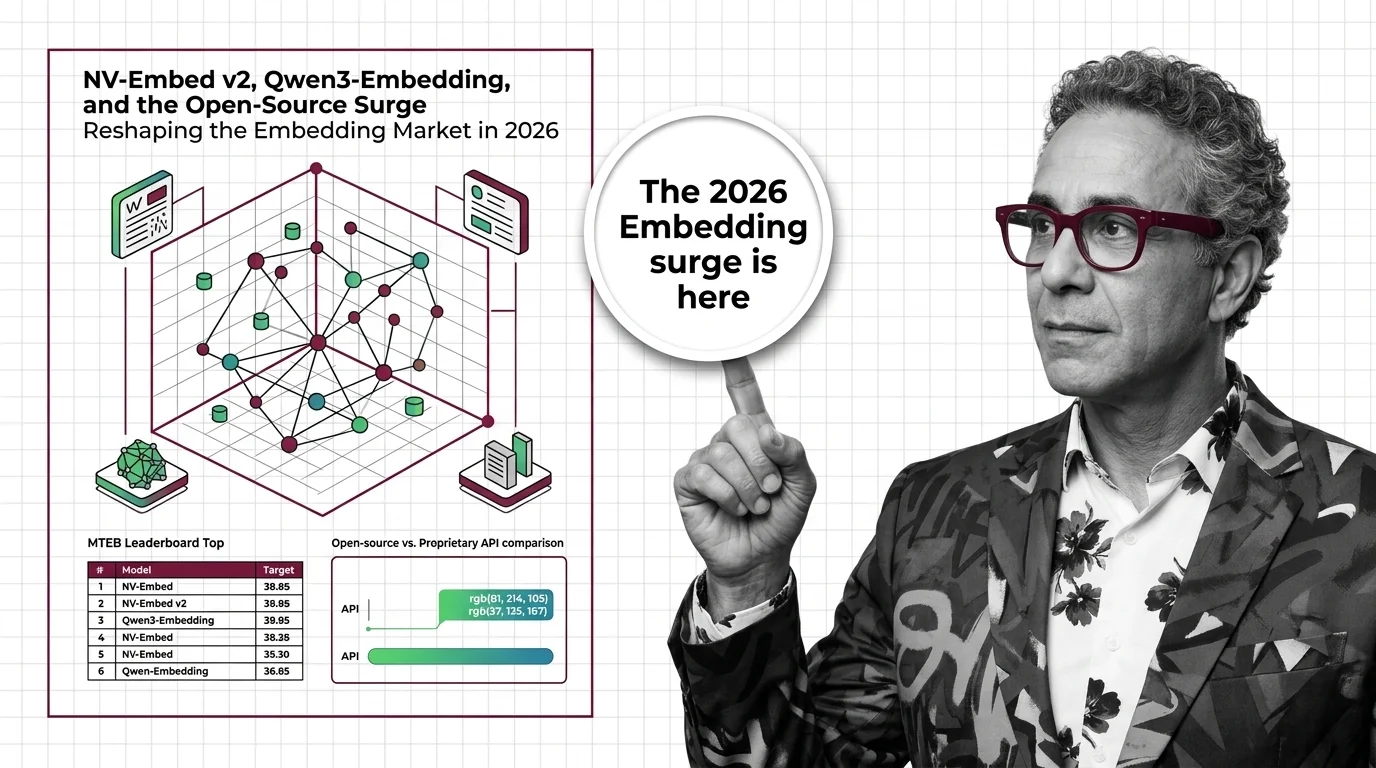

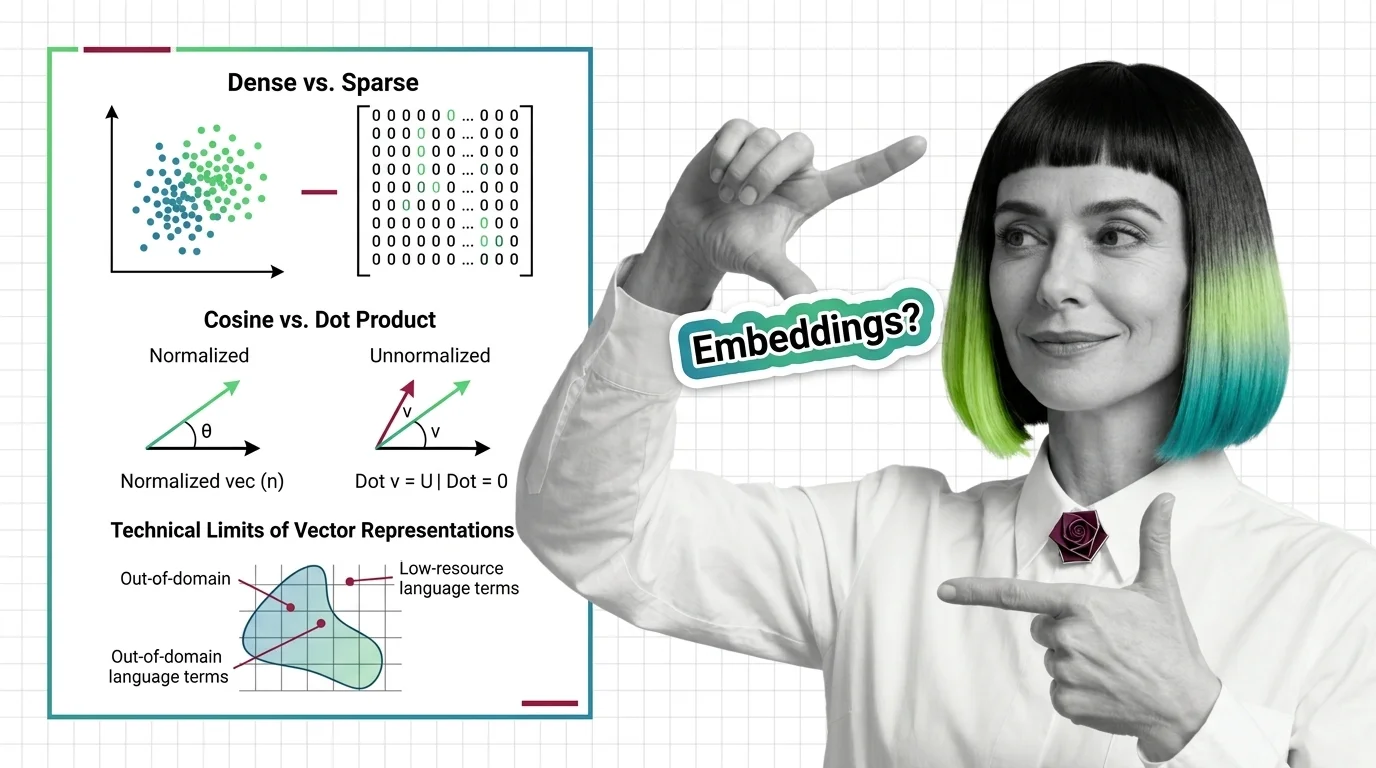

Dense vs. Sparse, Cosine vs. Dot Product, and the Technical Limits of Vector Representations

Dense and sparse embeddings encode meaning differently. Learn how cosine similarity, dot product, and Euclidean distance shape search, and where vectors fail.