Embedding

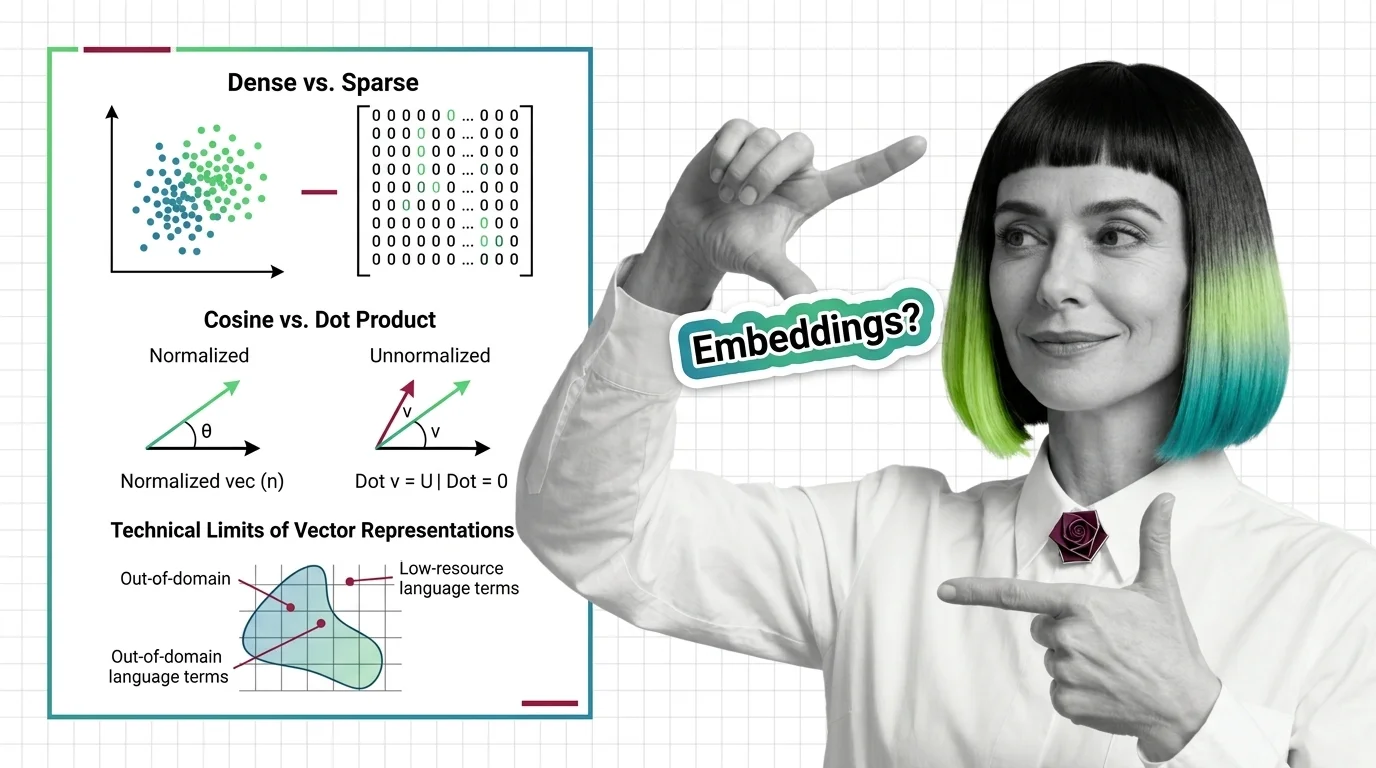

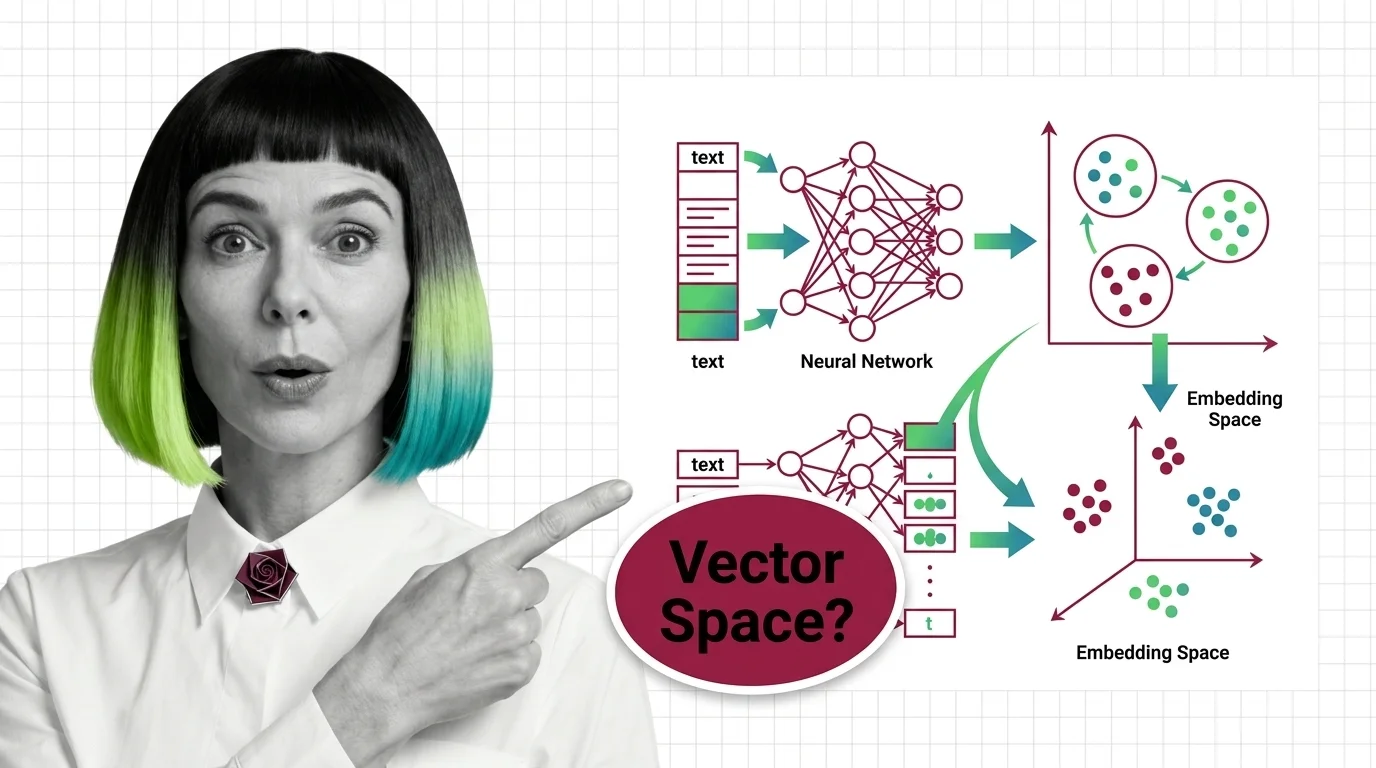

Embeddings are dense vector representations that map words, sentences, or other data into continuous numerical spaces where semantic relationships are preserved as geometric distances. Neural networks learn these representations during training, positioning similar meanings close together and dissimilar meanings far apart. Embeddings power semantic search, recommendation systems, and retrieval-augmented generation by enabling machines to measure meaning through vector similarity rather than exact keyword matching. Also known as: Embeddings, Vector Embedding.

Understand the Fundamentals

An embedding model maps raw text into a geometric space where proximity encodes meaning. Understanding how this mapping works explains how modern AI systems can compare pieces of language numerically instead of matching them character by character.
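To make "proximity encodes meaning" concrete, here is a minimal sketch of cosine similarity, one common way to compare two embedding vectors. The three-dimensional vectors and the `toy_embeddings` name are hand-made assumptions for illustration; real models produce vectors with hundreds or thousands of dimensions, and their values are learned rather than chosen.

```python
import math


def cosine_similarity(a, b):
    """Return the cosine of the angle between two equal-length vectors."""
    if len(a) != len(b):
        raise ValueError("vectors must have the same dimension")
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0.0 or norm_b == 0.0:
        raise ValueError("cosine similarity is undefined for a zero vector")
    return dot / (norm_a * norm_b)


# Hypothetical toy vectors, NOT output from a real embedding model.
toy_embeddings = {
    "cat": [0.9, 0.8, 0.1],
    "kitten": [0.85, 0.9, 0.15],
    "car": [0.1, 0.2, 0.95],
}

sim_cat_kitten = cosine_similarity(toy_embeddings["cat"], toy_embeddings["kitten"])
sim_cat_car = cosine_similarity(toy_embeddings["cat"], toy_embeddings["car"])

# Related words point in nearly the same direction, unrelated words do not.
print(f"cat vs kitten: {sim_cat_kitten:.3f}")
print(f"cat vs car:    {sim_cat_car:.3f}")
```

With these toy values, "cat" scores much closer to "kitten" than to "car", which is the geometric relationship a trained model is meant to produce for semantically related inputs.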

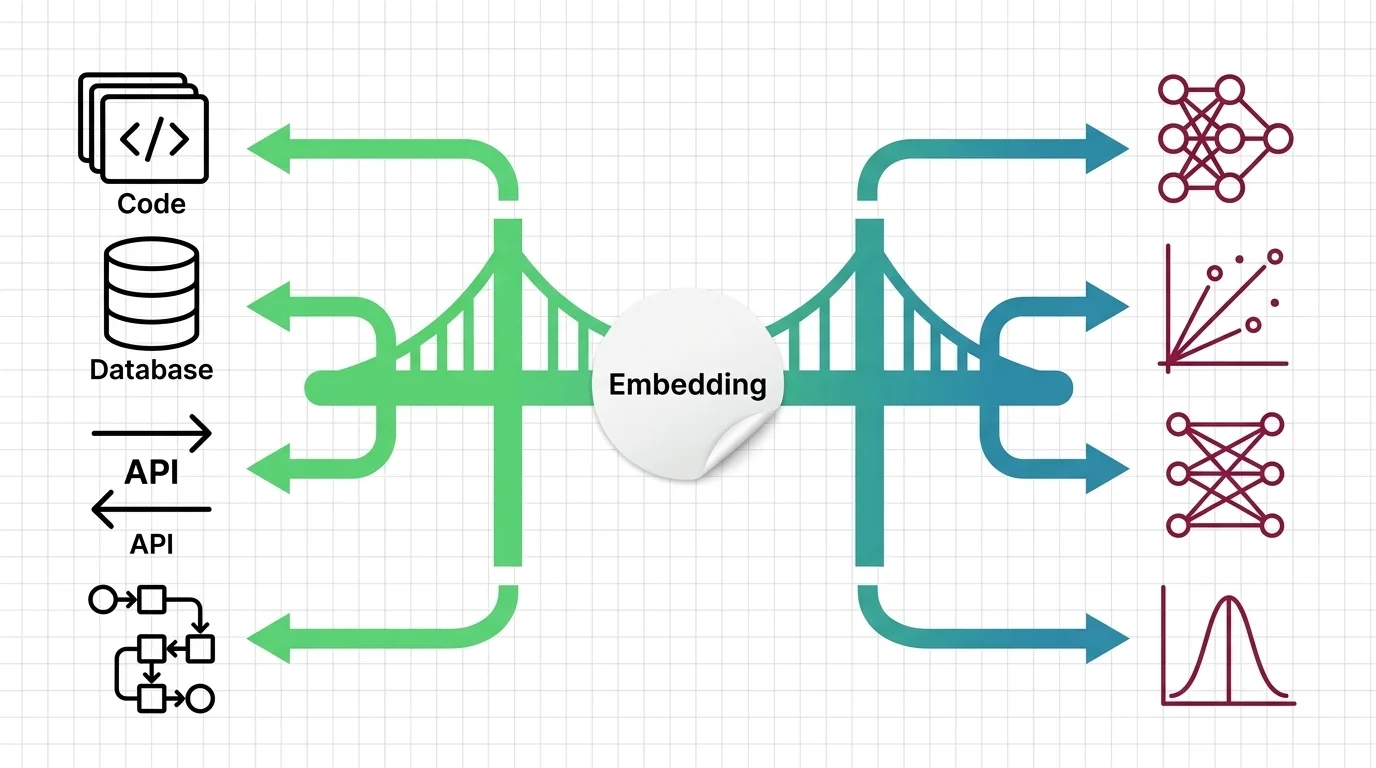

Build with Embedding

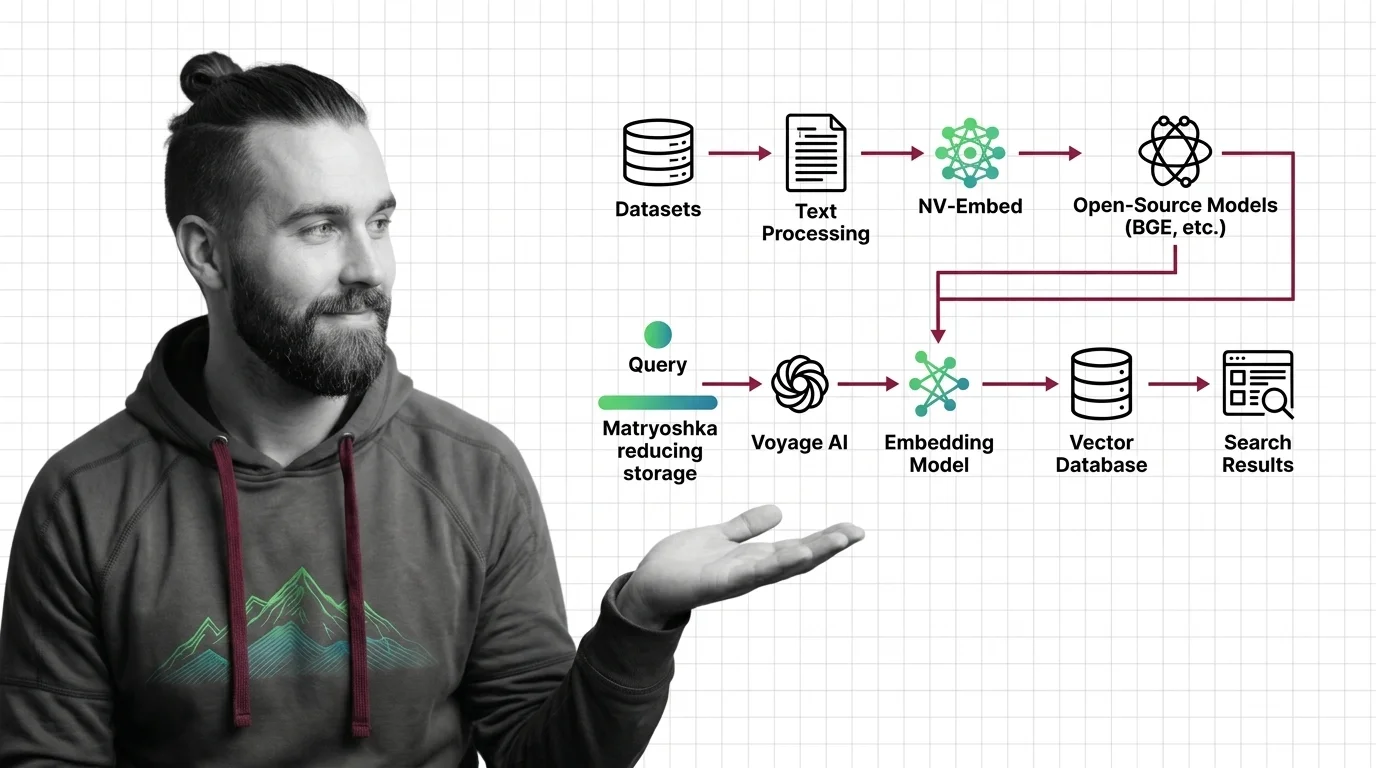

The guides walk through building semantic search pipelines, choosing similarity metrics, and selecting embedding models that fit your latency and accuracy requirements.
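As a rough illustration of what a semantic search pipeline does at query time, the sketch below ranks documents by similarity to a query vector. It assumes the vectors have already been produced by some embedding model; the document IDs, vector values, and the `search` helper are hypothetical. Vectors are normalized to unit length up front so that a plain dot product equals cosine similarity, a common design choice that keeps scoring cheap.

```python
import math


def normalize(v):
    """Scale a vector to unit length so dot product equals cosine similarity."""
    norm = math.sqrt(sum(x * x for x in v))
    if norm == 0.0:
        raise ValueError("cannot normalize a zero vector")
    return [x / norm for x in v]


def dot(a, b):
    return sum(x * y for x, y in zip(a, b))


def search(query_vec, index, top_k=2):
    """Return the top_k (doc_id, score) pairs, highest similarity first."""
    q = normalize(query_vec)
    scored = [(dot(q, vec), doc_id) for doc_id, vec in index.items()]
    scored.sort(reverse=True)
    return [(doc_id, score) for score, doc_id in scored[:top_k]]


# Hypothetical document vectors; a real pipeline would get these from a model.
raw_docs = {
    "doc_pets": [0.9, 0.1, 0.0],
    "doc_cars": [0.1, 0.9, 0.1],
    "doc_cooking": [0.0, 0.2, 0.9],
}

# Index step: normalize once and store.
index = {doc_id: normalize(vec) for doc_id, vec in raw_docs.items()}

# Query step: a hypothetical query vector that leans toward the "pets" direction.
results = search([0.8, 0.3, 0.0], index, top_k=2)
for doc_id, score in results:
    print(f"{doc_id}: {score:.3f}")
```

This brute-force scan compares the query against every stored vector, which is fine for small collections. Larger collections typically replace the scan with an approximate nearest-neighbor index, trading a little accuracy for lower latency.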

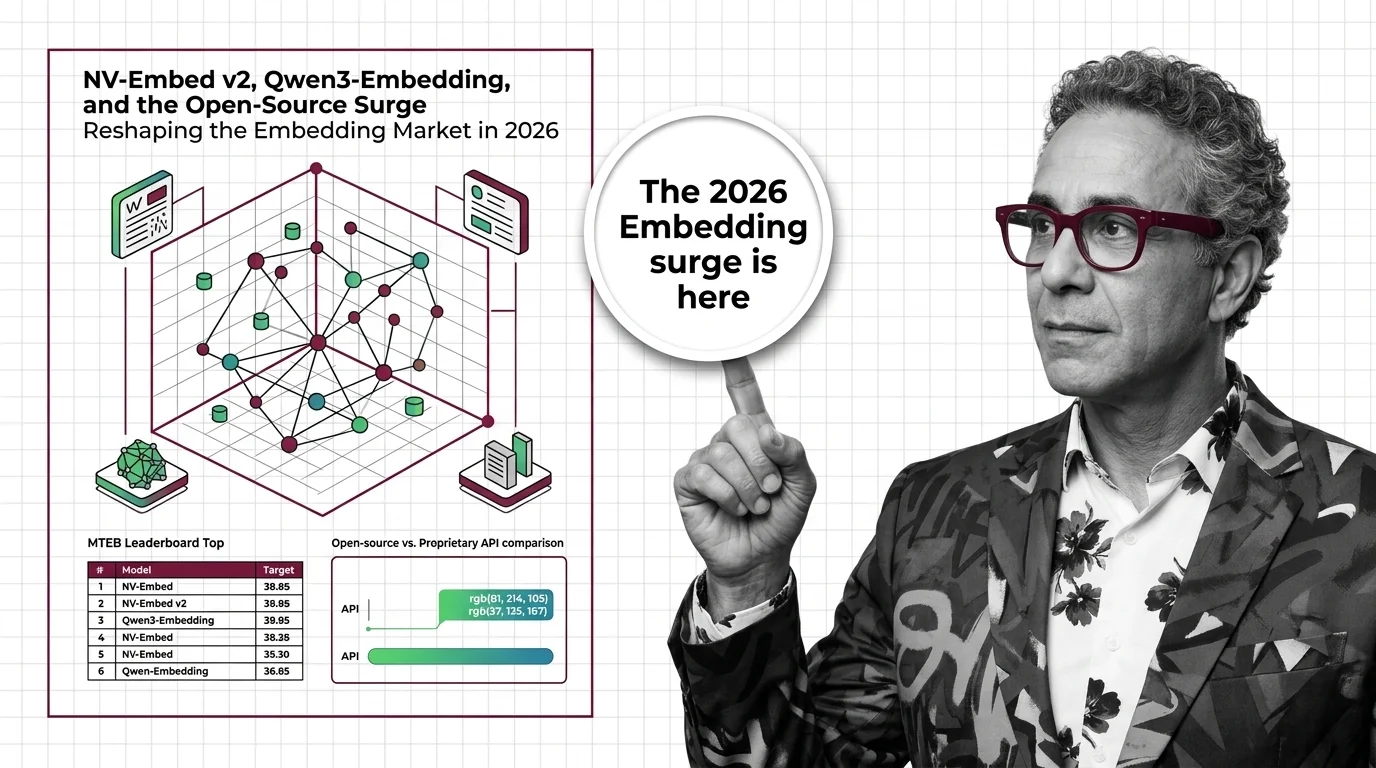

What's Changing in 2026

The embedding landscape is shifting fast as new models challenge established benchmarks. Keeping up with these changes helps you judge when a newer model is worth adopting in your retrieval stack.

Updated March 2026

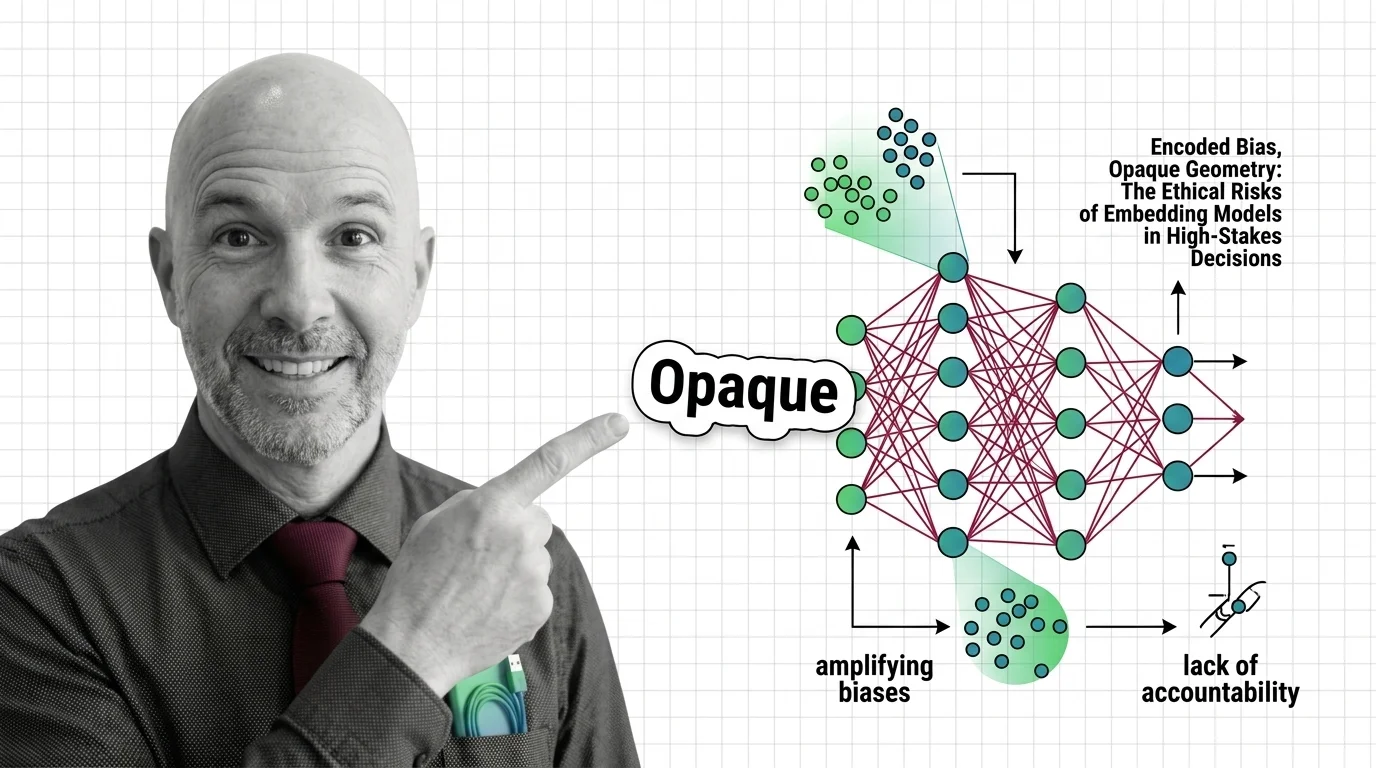

Risks and Considerations

Embedding models encode the biases present in their training data, and their opaque geometry makes those biases difficult to detect or audit in high-stakes applications.