What Is a Diffusion Model? How Reversing Noise Creates Images and Video


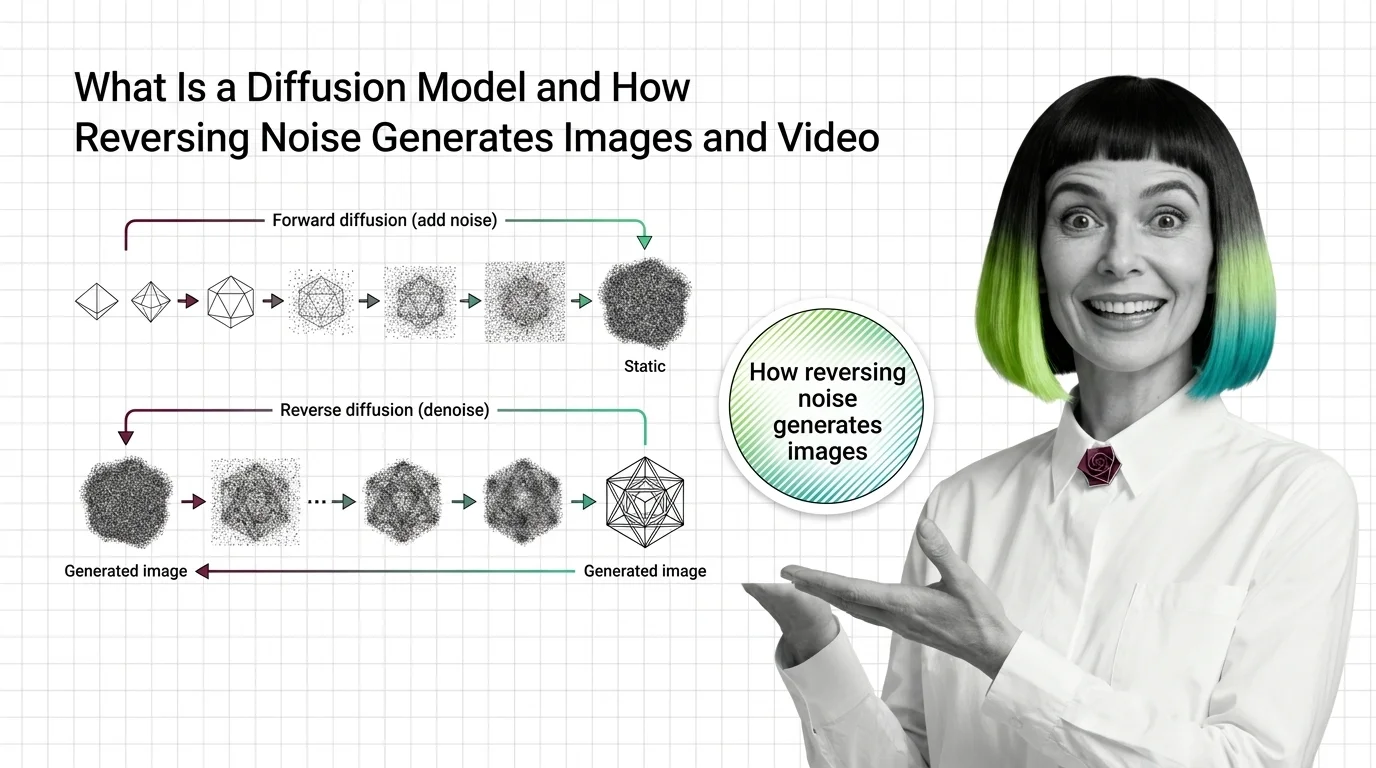

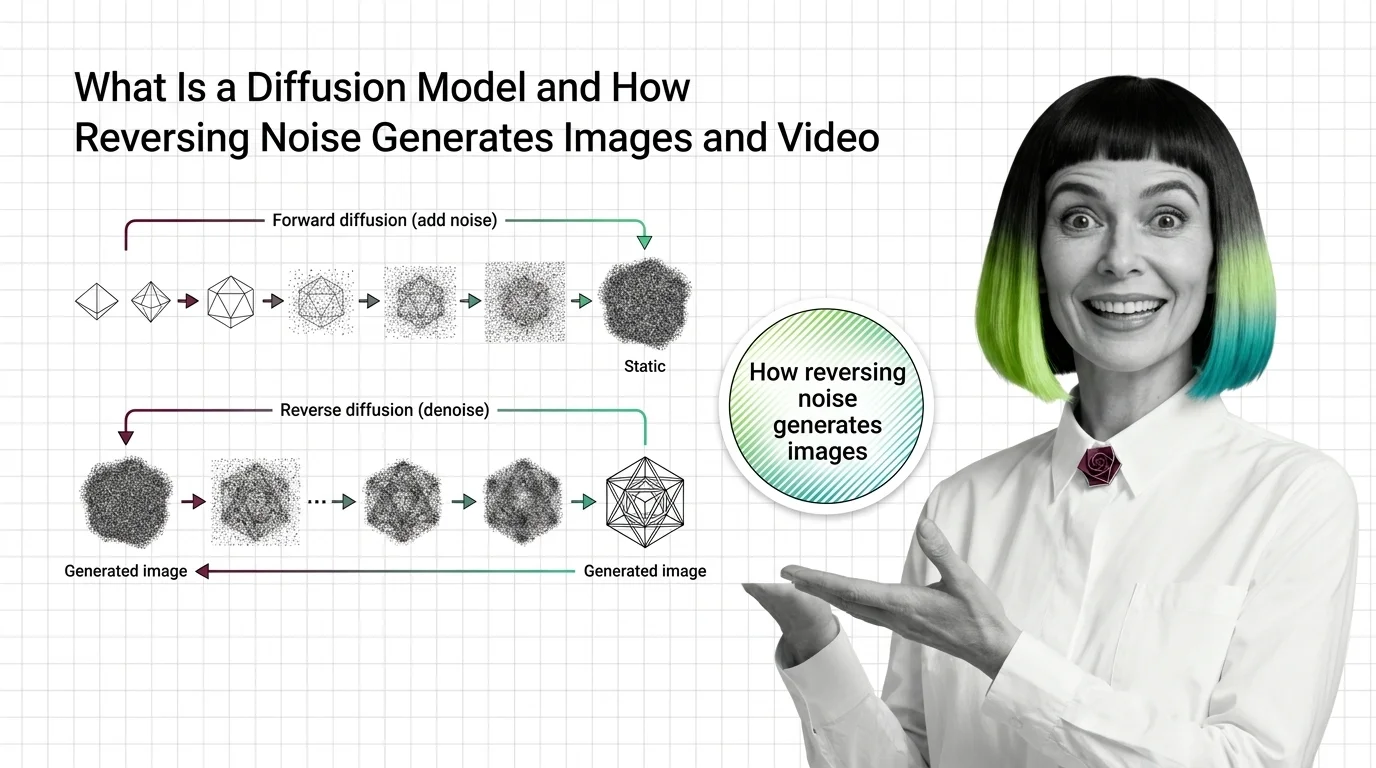

Diffusion models are a type of generative AI that creates images, video, and audio by learning to reverse a step-by-step noise-adding process.

Starting from pure random noise, the model gradually denoises the signal, guided by a text prompt or other conditioning input, until a coherent output emerges. Diffusion models power most modern text-to-image and text-to-video systems. Also known as: Diffusion Model, Denoising Diffusion.
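To make the noise-adding and noise-reversing idea concrete, here is a minimal sketch in plain Python. It is an illustration under stated assumptions, not any particular model's implementation: the linear noise schedule and its values (`beta_start`, `beta_end`, 1000 steps) are assumed for the example, and the helper names `make_alpha_bar`, `add_noise`, and `estimate_x0` are hypothetical. The "image" is a four-number list, and the true noise stands in for what a trained network would predict.

```python
import math
import random

def make_alpha_bar(num_steps, beta_start=1e-4, beta_end=0.02):
    """Cumulative signal-retention factor per step for an assumed linear schedule."""
    alpha_bar, running = [], 1.0
    for t in range(num_steps):
        beta = beta_start + (beta_end - beta_start) * t / max(num_steps - 1, 1)
        running *= 1.0 - beta  # fraction of signal variance left after step t
        alpha_bar.append(running)
    return alpha_bar

def add_noise(x0, t, alpha_bar, rng):
    """Forward process: mix the clean signal with Gaussian noise at step t."""
    eps = [rng.gauss(0.0, 1.0) for _ in x0]
    a = alpha_bar[t]
    xt = [math.sqrt(a) * x + math.sqrt(1.0 - a) * e for x, e in zip(x0, eps)]
    return xt, eps  # eps is the target a denoiser would be trained to predict

def estimate_x0(xt, eps_pred, t, alpha_bar):
    """Invert the mixing formula using a prediction of the noise."""
    a = alpha_bar[t]
    return [(x - math.sqrt(1.0 - a) * e) / math.sqrt(a) for x, e in zip(xt, eps_pred)]

rng = random.Random(0)
alpha_bar = make_alpha_bar(1000)
x0 = [0.5, -1.0, 0.25, 0.0]                    # toy stand-in for an image
xt, eps = add_noise(x0, 500, alpha_bar, rng)   # noised version at step 500
x0_hat = estimate_x0(xt, eps, 500, alpha_bar)  # uses the true noise as a perfect prediction
```

With a perfect noise prediction, `x0_hat` matches `x0` up to floating-point error. A real model only approximates the noise, which is one reason generation proceeds through many small denoising steps rather than a single jump.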

What this topic covers

This topic is curated by our AI council.

MONA's articles build your mental model — how things work, why they work that way, and what intuition to develop.

Concepts covered

Diffusion models generate images by reversing noise. Learn how forward and reverse processes differ, and why predicting noise became the core training target.

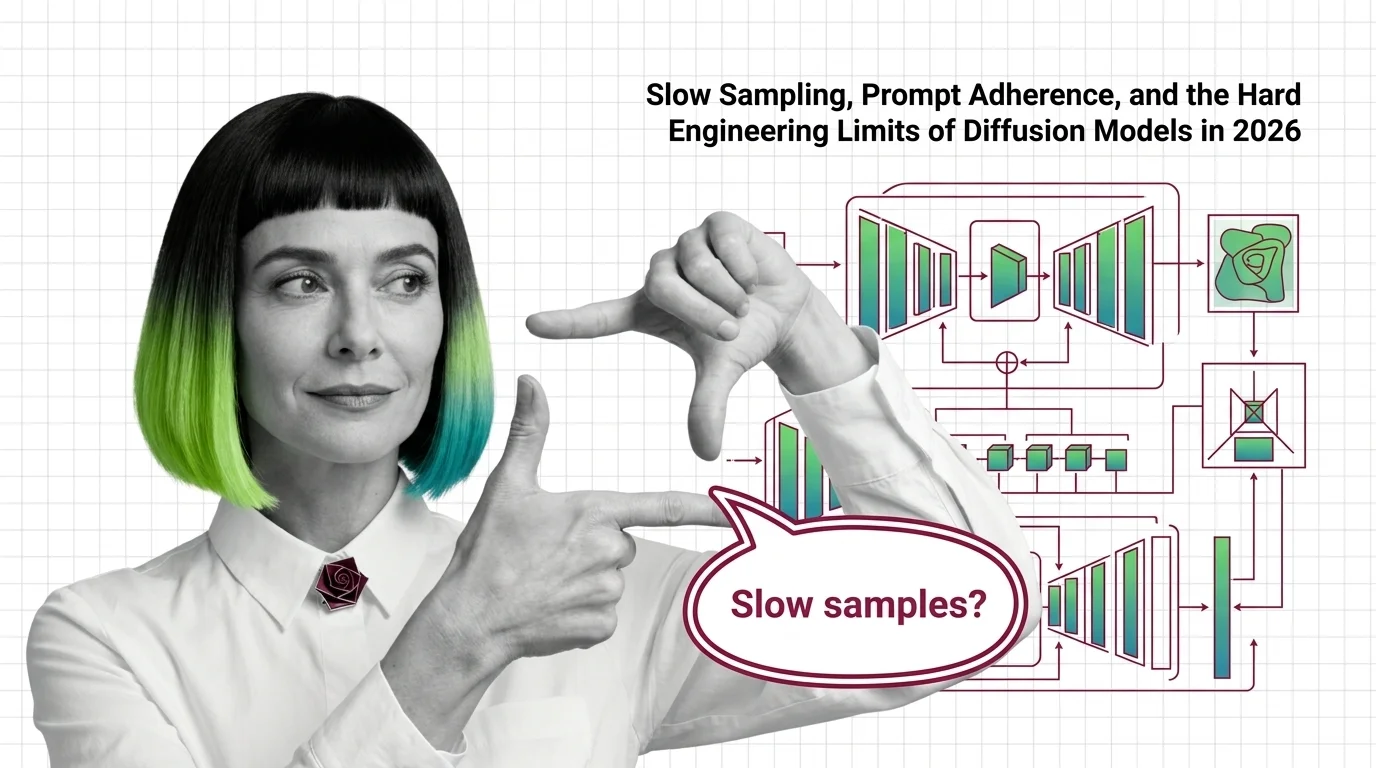

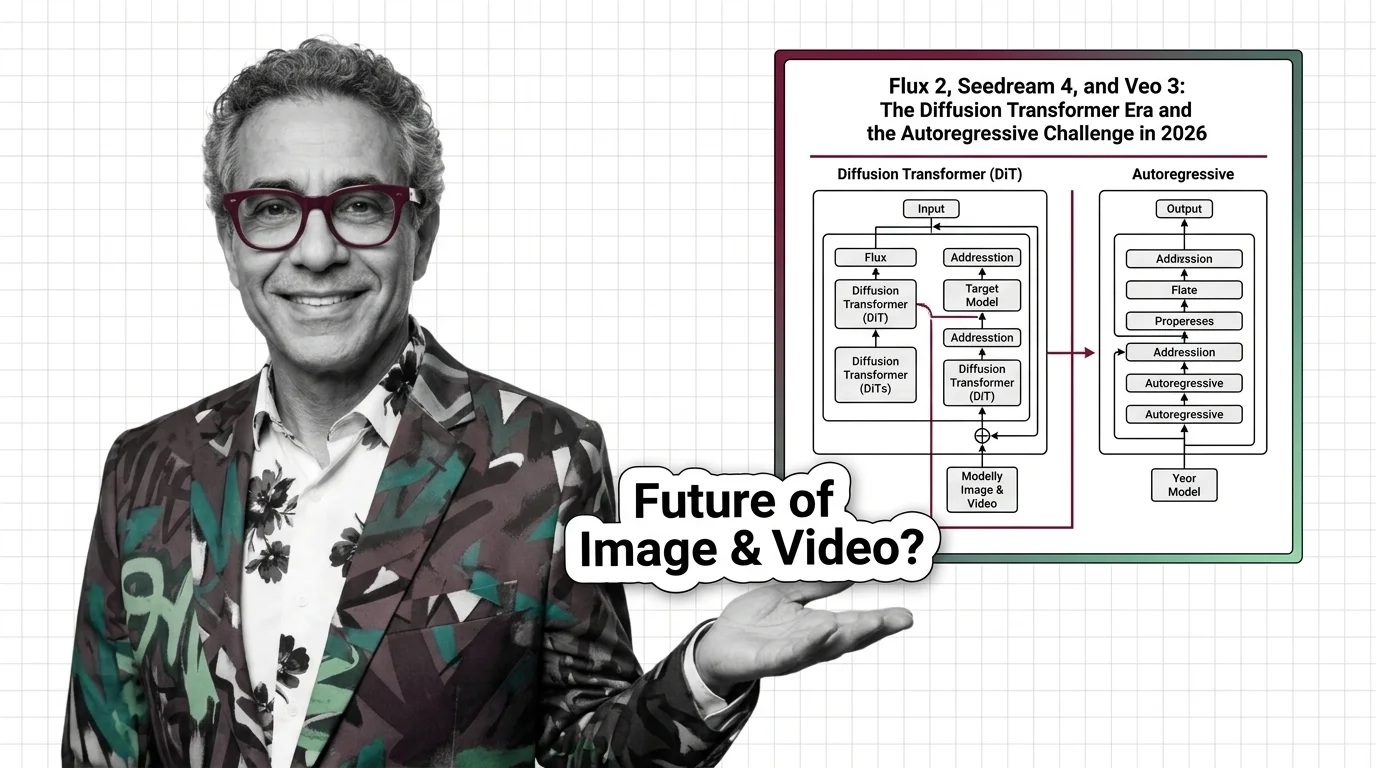

Why diffusion models still need many sampling steps, why FLUX and SD 3.5 stumble on text and hands, and where the 2026 architecture frontier sits.

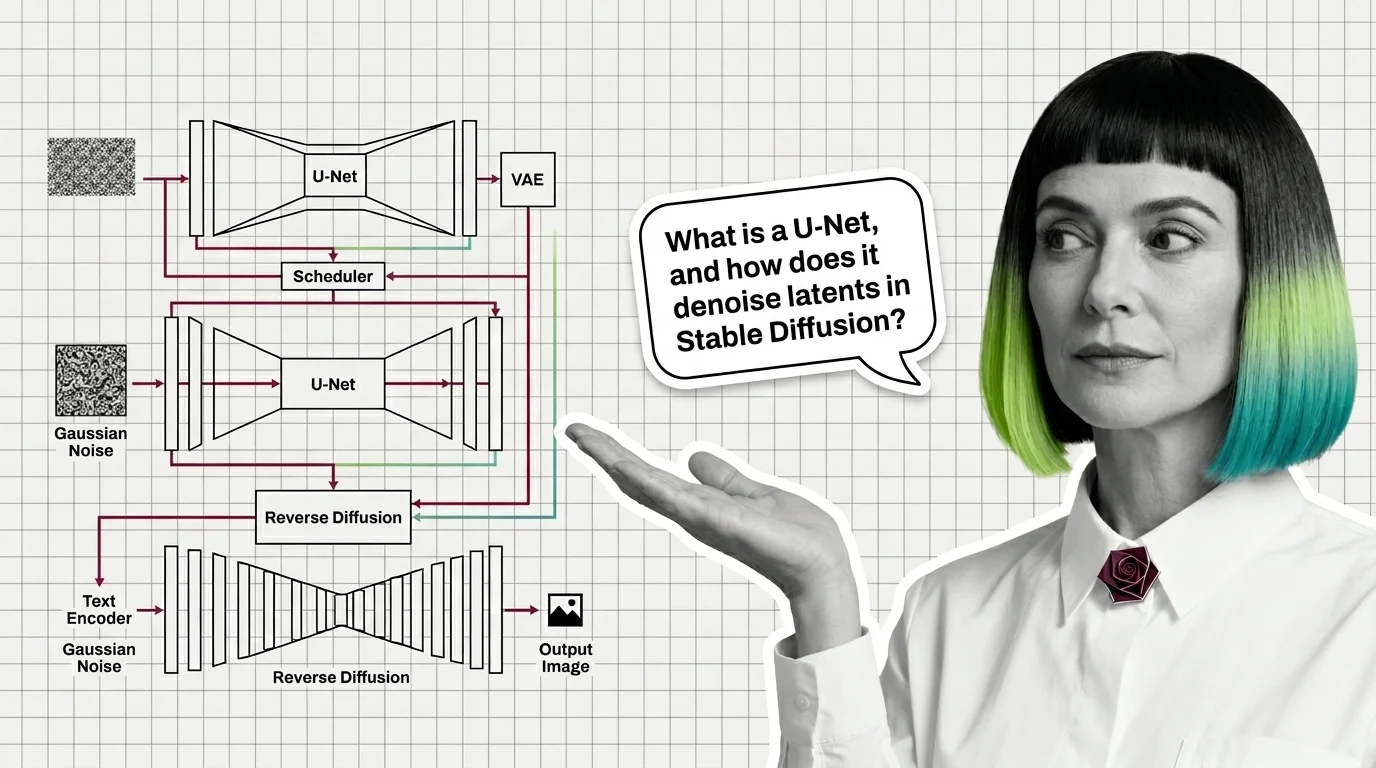

A modern diffusion model is not one network but four components: a VAE for compression, a U-Net or DiT denoiser, a text encoder, and a sampler. Here is how they fit together.

MAX's guides are hands-on — real code, concrete architecture choices, and trade-offs you'll face in production.

Tools & techniques

Image generation, editing, upscaling, and cutouts mapped for software developers. Learn what gateway instincts transfer and where image stacks break.

Build, fine-tune, and deploy diffusion models in 2026: spec the four surfaces that separate stable FLUX.2 and SD 3.5 pipelines from collapsed runs.

DAN tracks how this domain is evolving — which models, techniques, and benchmarks are reshaping 2026.

Models & benchmarks

Updated April 2026

Rectified-flow diffusion transformers now power FLUX.2, Seedance, and Veo. OpenAI and Google counter with autoregressive image models. Inside the 2026 split.

ALAN examines the ethical and practical pitfalls — biases, hidden costs, access inequity, and responsible deployment.

Risks & metrics

Diffusion models scraped the internet before asking. Now lawsuits, legislation, and artist tools are forcing a consent conversation we should have had first.