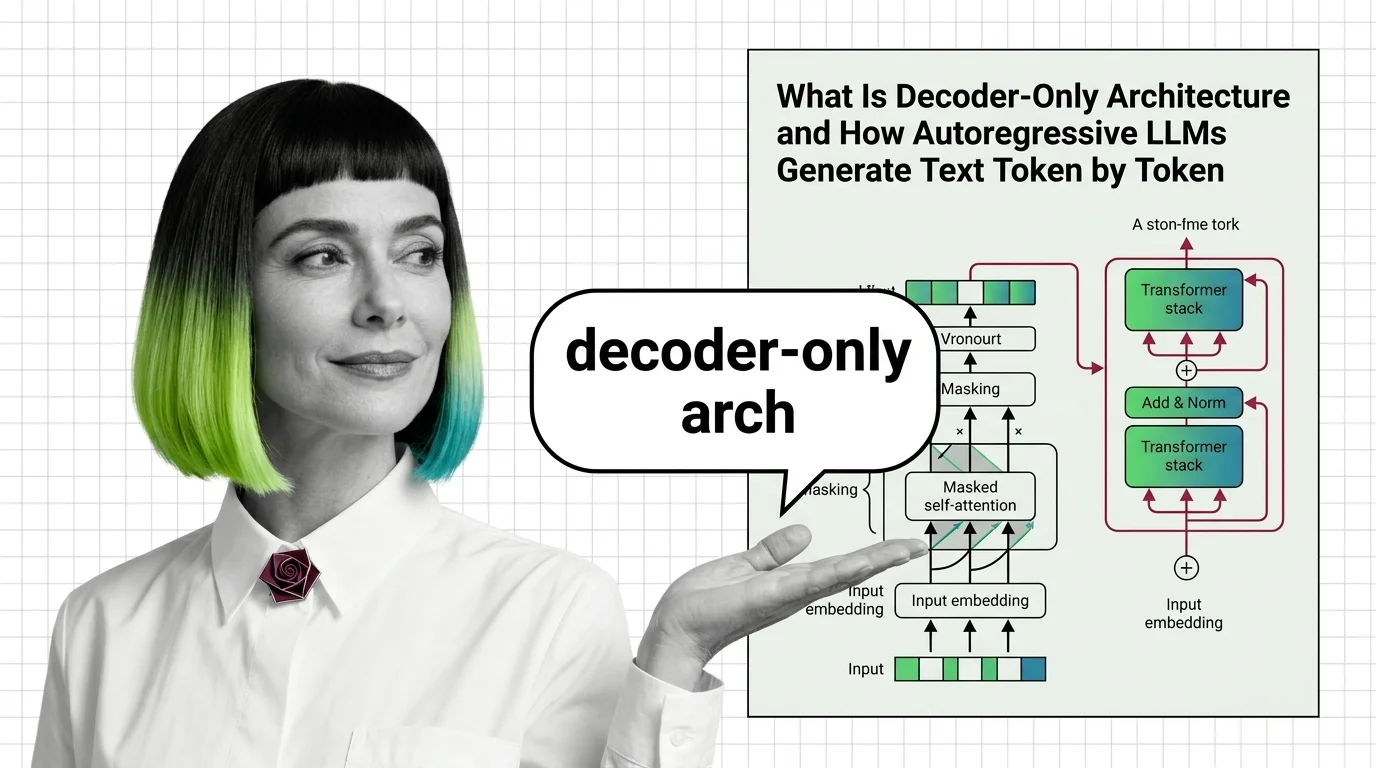

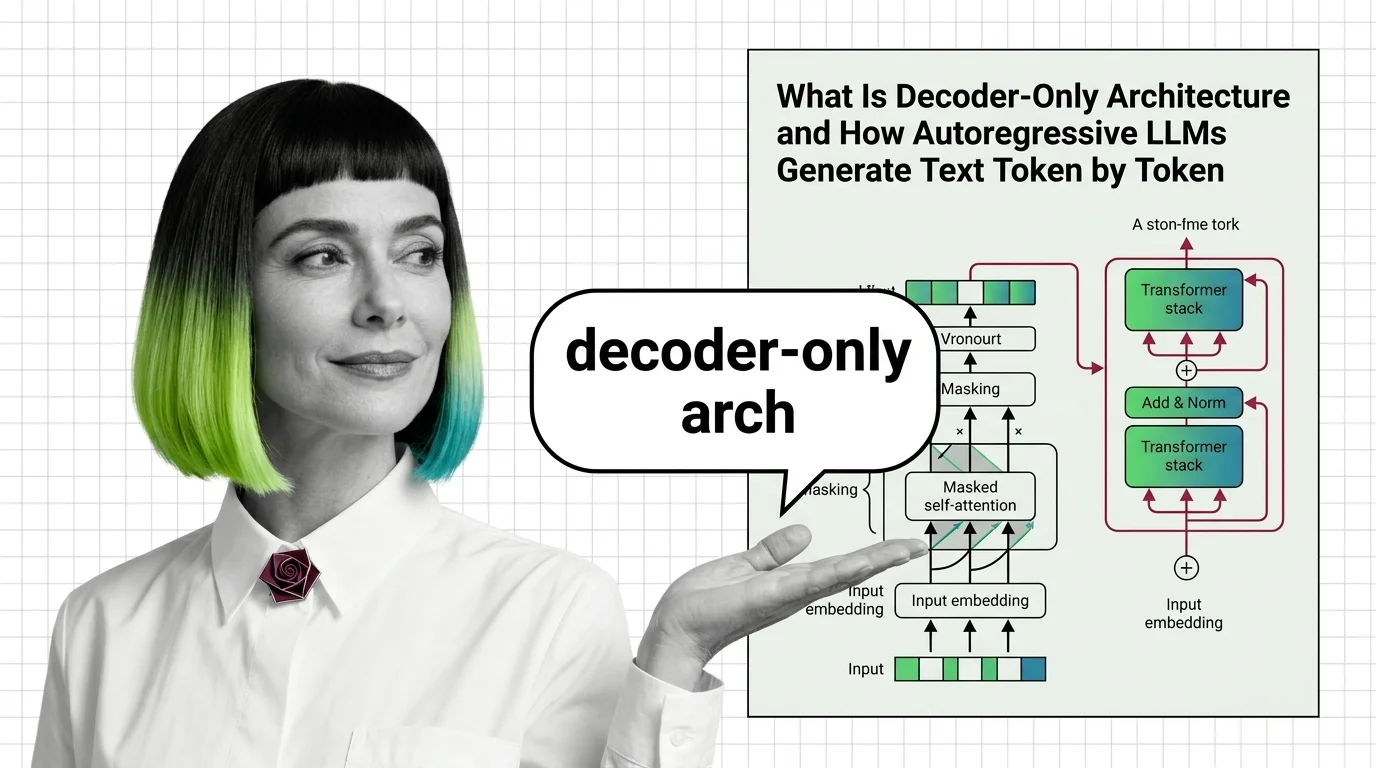

What Is Decoder-Only Architecture and How Autoregressive LLMs Generate Text Token by Token

Decoder-only architecture powers most major LLMs today. Learn how causal masking, KV cache, and autoregressive generation produce text one token at a time.

Decoder-only architecture is a transformer design where a single decoder stack generates output tokens one at a time, each conditioned on all previous tokens through causal masking.
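
To make the causal masking in this definition concrete, here is a minimal sketch in pure Python, assuming single-head scaled dot-product attention with no learned projections. The function name `causal_attention` and the toy inputs are illustrative and do not come from any particular library.

```python
import math

def causal_attention(q, k, v):
    """Single-head scaled dot-product attention with a causal mask.

    q, k, v: lists of T vectors (lists of floats) of equal dimension d.
    Returns T output vectors; output t mixes only v[0..t].
    """
    d = len(q[0])
    out = []
    for t in range(len(q)):
        # Causal mask: position t scores only positions 0..t, never the future.
        scores = [sum(a * b for a, b in zip(q[t], k[s])) / math.sqrt(d)
                  for s in range(t + 1)]
        # Numerically stable softmax over the visible positions.
        m = max(scores)
        weights = [math.exp(x - m) for x in scores]
        total = sum(weights)
        weights = [w / total for w in weights]
        # Weighted sum of the visible value vectors.
        out.append([sum(w * v[s][j] for s, w in enumerate(weights))
                    for j in range(len(v[0]))])
    return out

x = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
full = causal_attention(x, x, x)
prefix = causal_attention(x[:2], x[:2], x[:2])
# The first two outputs are identical whether or not the third token exists.
print(full[:2] == prefix)
```

Because each position only ever sees earlier positions, appending a token never changes the outputs already computed for the prefix. That property is what lets generation proceed one token at a time.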

Unlike encoder-decoder models, which encode the input with one stack and generate the output with another, decoder-only models treat the prompt and the output as a single token sequence handled by the same autoregressive stack. This pattern powers GPT, LLaMA, and the vast majority of modern large language models. Also known as: Autoregressive LLM, Causal Language Model.
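
The autoregressive loop itself can be sketched in a few lines. This is a toy under stated assumptions: `next_token_scores` is a hypothetical stand-in for a real decoder stack, backed by a hand-written bigram table, and decoding is greedy. The point is only that the prompt and the generated tokens live in one growing sequence.

```python
def next_token_scores(tokens):
    """Hypothetical stand-in for a decoder stack: scores for the next token.

    A real model would attend over every token in `tokens`; this toy
    looks only at the last one via a hand-written bigram table.
    """
    table = {
        "<bos>": {"the": 2.0, "cat": 0.1},
        "the": {"cat": 1.5, "the": 0.1},
        "cat": {"sat": 1.2, "<eos>": 0.3},
        "sat": {"<eos>": 2.0},
    }
    return table[tokens[-1]]

def generate(prompt, max_new_tokens=10):
    tokens = list(prompt)  # prompt and output share one sequence
    for _ in range(max_new_tokens):
        scores = next_token_scores(tokens)
        nxt = max(scores, key=scores.get)  # greedy: pick the top-scoring token
        if nxt == "<eos>":
            break
        tokens.append(nxt)  # the new token becomes context for the next step
    return tokens

print(generate(["<bos>", "the"]))
```

Real systems usually sample from a probability distribution rather than always taking the top score, but the loop structure (score, pick, append, repeat) stays the same.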

What this topic covers

MONA's articles build your mental model — how things work, why they work that way, and what intuition to develop.

Concepts covered

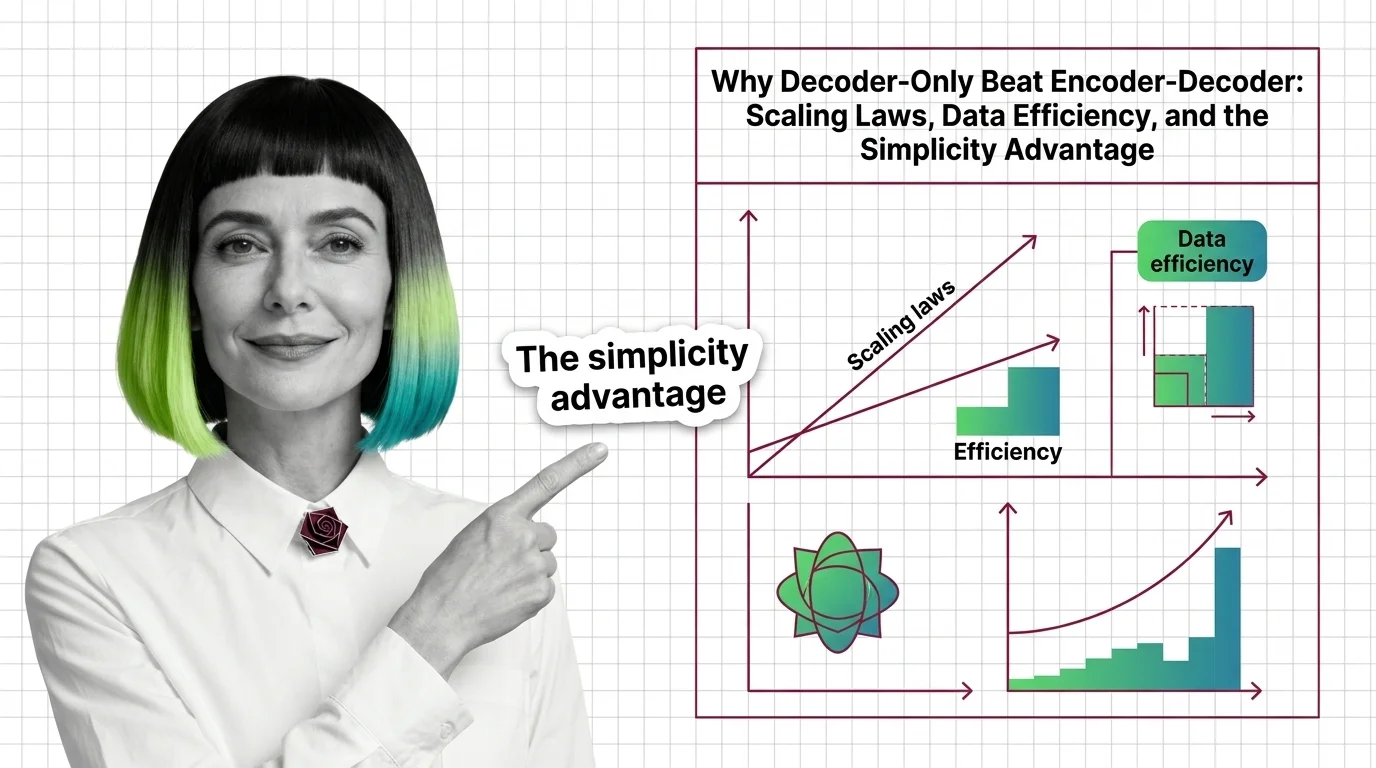

Decoder-only models won the scaling race by doing less. Learn how a simpler training objective, scaling laws, and MoE extensions beat encoder-decoder design.

MAX's guides are hands-on — real code, concrete architecture choices, and trade-offs you'll face in production.

Tools & techniques

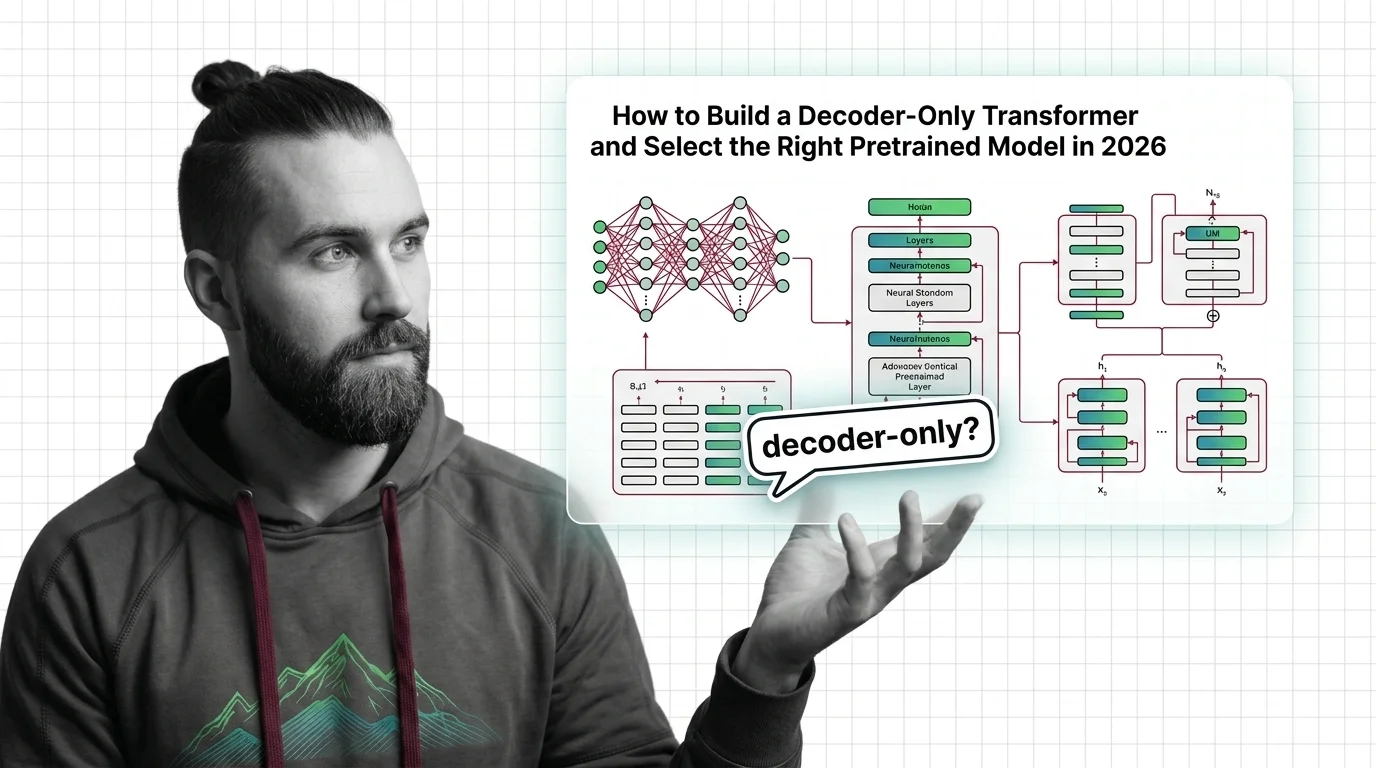

Build a decoder-only transformer with correct causal masking in PyTorch, then choose among GPT-5, LLaMA 4, and DeepSeek V3.2 for 2026 production use.

DAN tracks how this domain is evolving — which models, techniques, and benchmarks are reshaping 2026.

Models & benchmarks

Updated March 2026

The decoder-only paradigm fractured. DeepSeek MLA, LLaMA 4 MoE, and NVIDIA Nemotron hybrids compete on inference cost — here is who wins the architecture race.

ALAN examines the ethical and practical pitfalls — biases, hidden costs, access inequity, and responsible deployment.

Risks & metrics

The AI industry converged on decoder-only architecture without rigorous comparison. Explore the ethical and structural risks of betting on a single design.