Decoder-Only Architecture

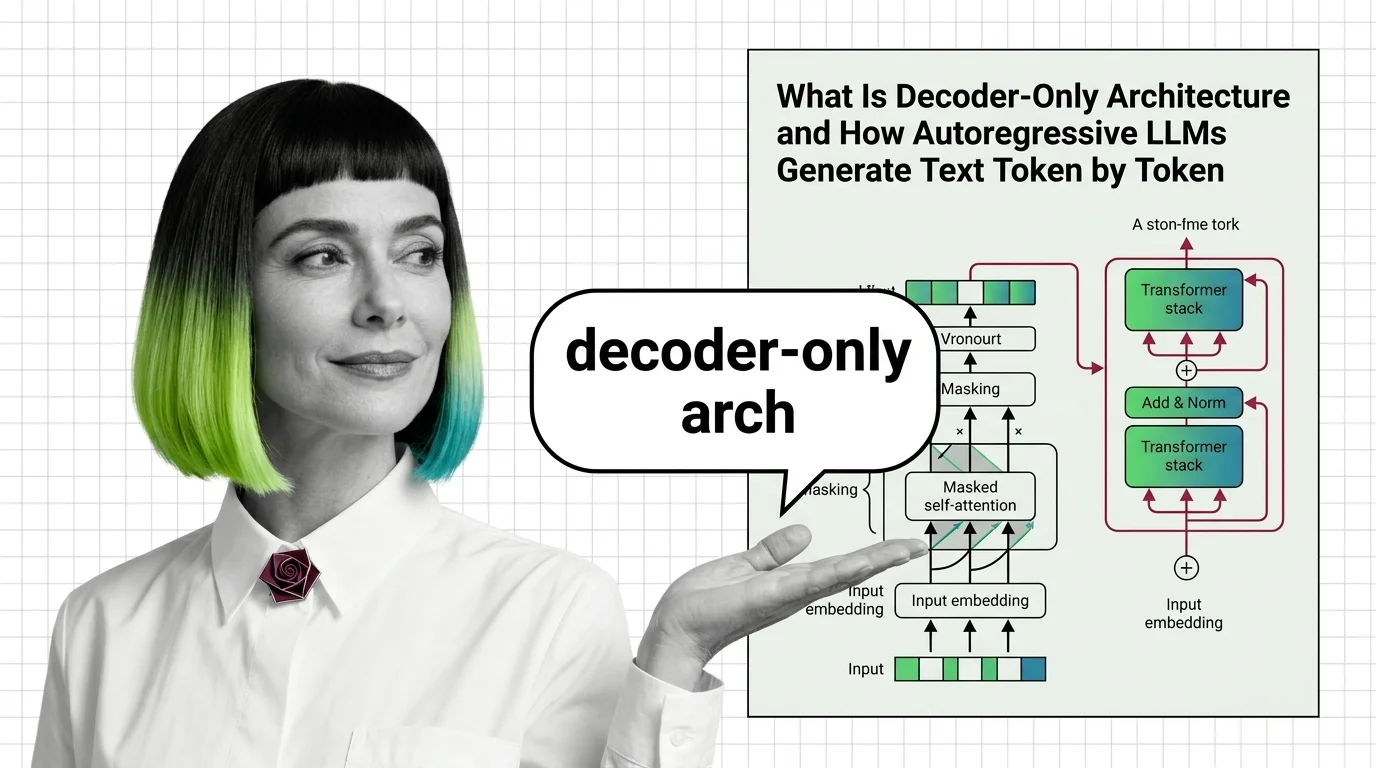

Decoder-only architecture is a transformer design where a single decoder stack generates output tokens one at a time, each conditioned on all previous tokens through causal masking. Unlike encoder-decoder models that process input and output separately, decoder-only models unify both tasks in one autoregressive pass. This pattern powers GPT, LLaMA, and the vast majority of modern large language models. Also known as: Autoregressive LLM, Causal Language Model.

Understand the Fundamentals

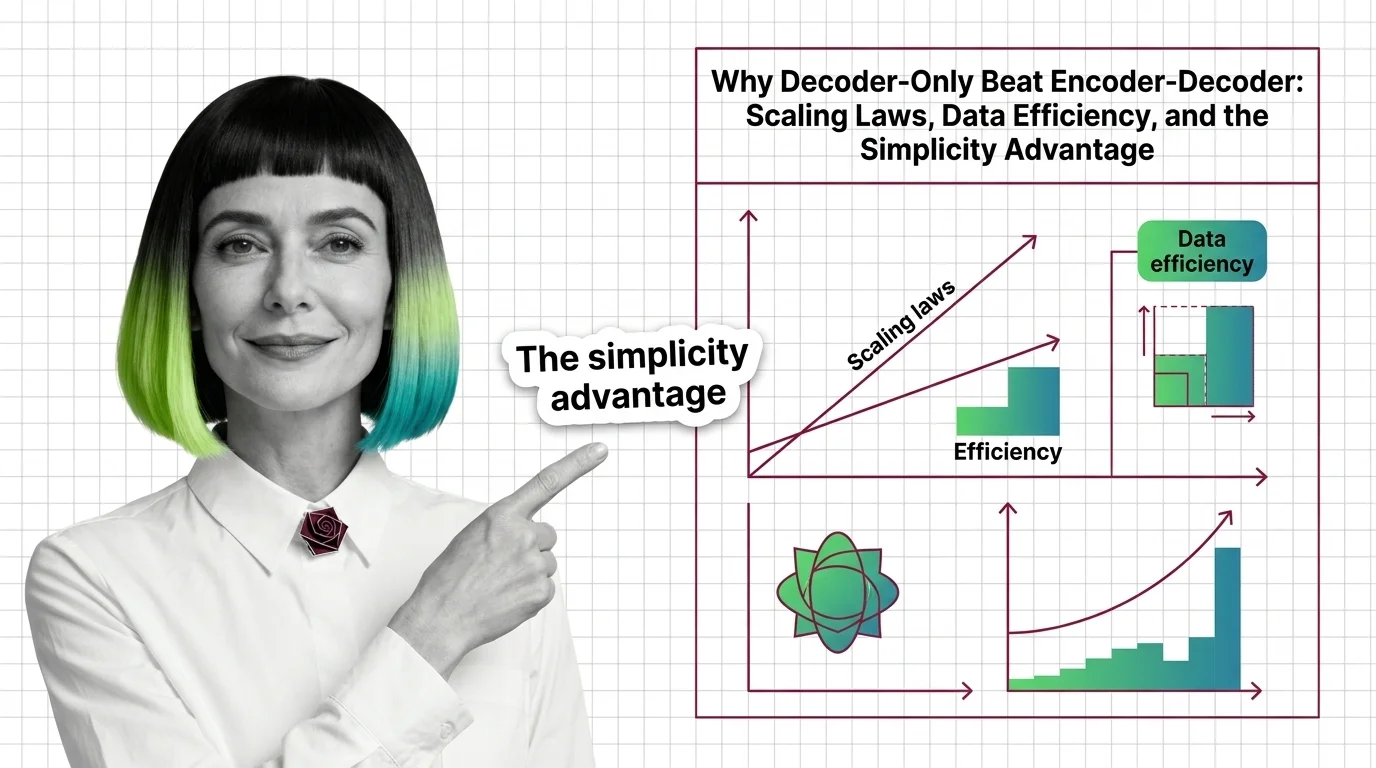

Decoder-only architecture strips the transformer down to its generative core. Understanding how causal masking forces left-to-right token prediction reveals why this design dominates language modeling.

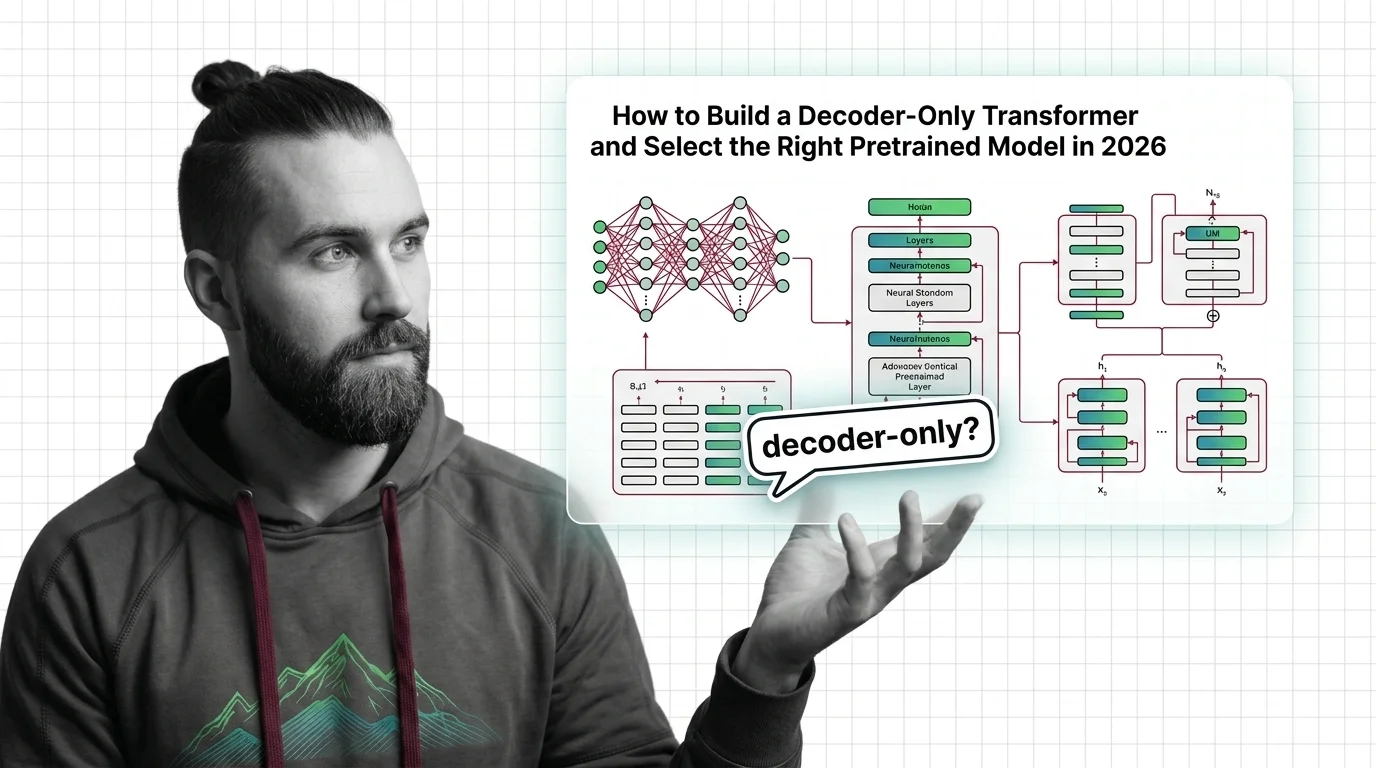

Build with Decoder-Only Architecture

These guides walk through selecting, fine-tuning, and deploying decoder-only models. Expect practical trade-offs between context length, inference cost, and task-specific accuracy.

What's Changing in 2026

The decoder-only design keeps evolving through mixture-of-experts variants, hybrid architectures, and efficiency breakthroughs. Tracking these shifts matters for anyone choosing or building on foundation models.

Updated March 2026

Risks and Considerations

Concentrating the entire AI industry on one architectural pattern creates fragility. Consider what happens when decoder-only assumptions fail and whether alternatives deserve more investment.