Confusion Matrix

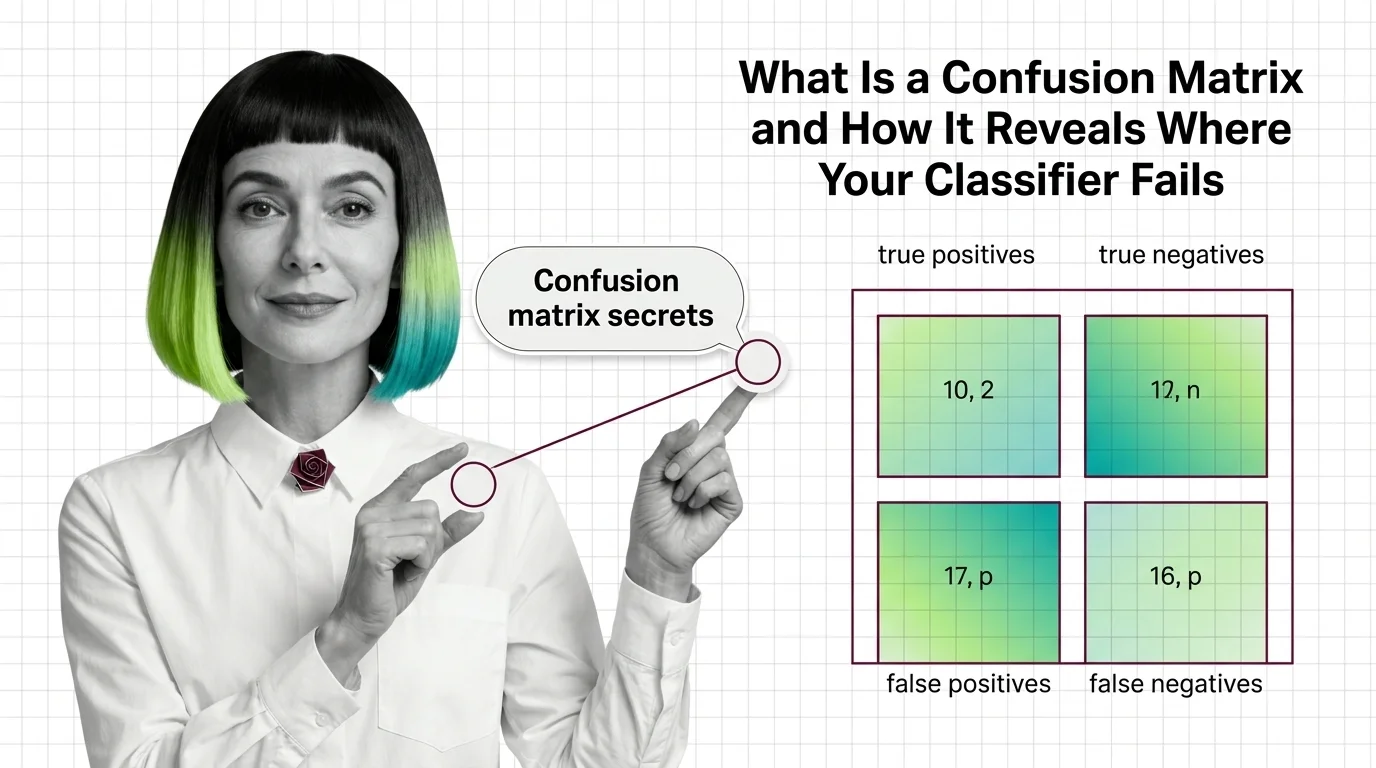

A confusion matrix is a table that summarizes how well a classification model performs by breaking predictions into four categories: true positives, false positives, true negatives, and false negatives. Each cell shows how many instances the model classified correctly or incorrectly for a given class. By reading the matrix, practitioners can identify systematic errors, diagnose class-specific weaknesses, and choose the right metric for their use case.

Also known as: Error Matrix
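As a rough illustration of the four categories, the sketch below tallies them for a binary classifier in plain Python. The function name `binary_confusion_counts`, the `positive` parameter, and the sample labels are hypothetical, chosen only for this example.

```python
def binary_confusion_counts(y_true, y_pred, positive=1):
    """Tally the four confusion-matrix cells for a binary problem.

    Hypothetical helper for illustration; `positive` marks which label
    is treated as the positive class.
    """
    tp = fp = tn = fn = 0
    for actual, predicted in zip(y_true, y_pred):
        if predicted == positive:
            if actual == positive:
                tp += 1  # predicted positive, actually positive
            else:
                fp += 1  # predicted positive, actually negative
        else:
            if actual == positive:
                fn += 1  # predicted negative, actually positive
            else:
                tn += 1  # predicted negative, actually negative
    return {"TP": tp, "FP": fp, "TN": tn, "FN": fn}


# Made-up labels, purely for demonstration.
y_true = [1, 0, 1, 1, 0, 0, 1, 0]
y_pred = [1, 0, 0, 1, 0, 1, 1, 0]

counts = binary_confusion_counts(y_true, y_pred)
print(counts)  # {'TP': 3, 'FP': 1, 'TN': 3, 'FN': 1}
```

With more than two classes the same idea extends to a square table with one row and one column per class, and the four categories are read off per class.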

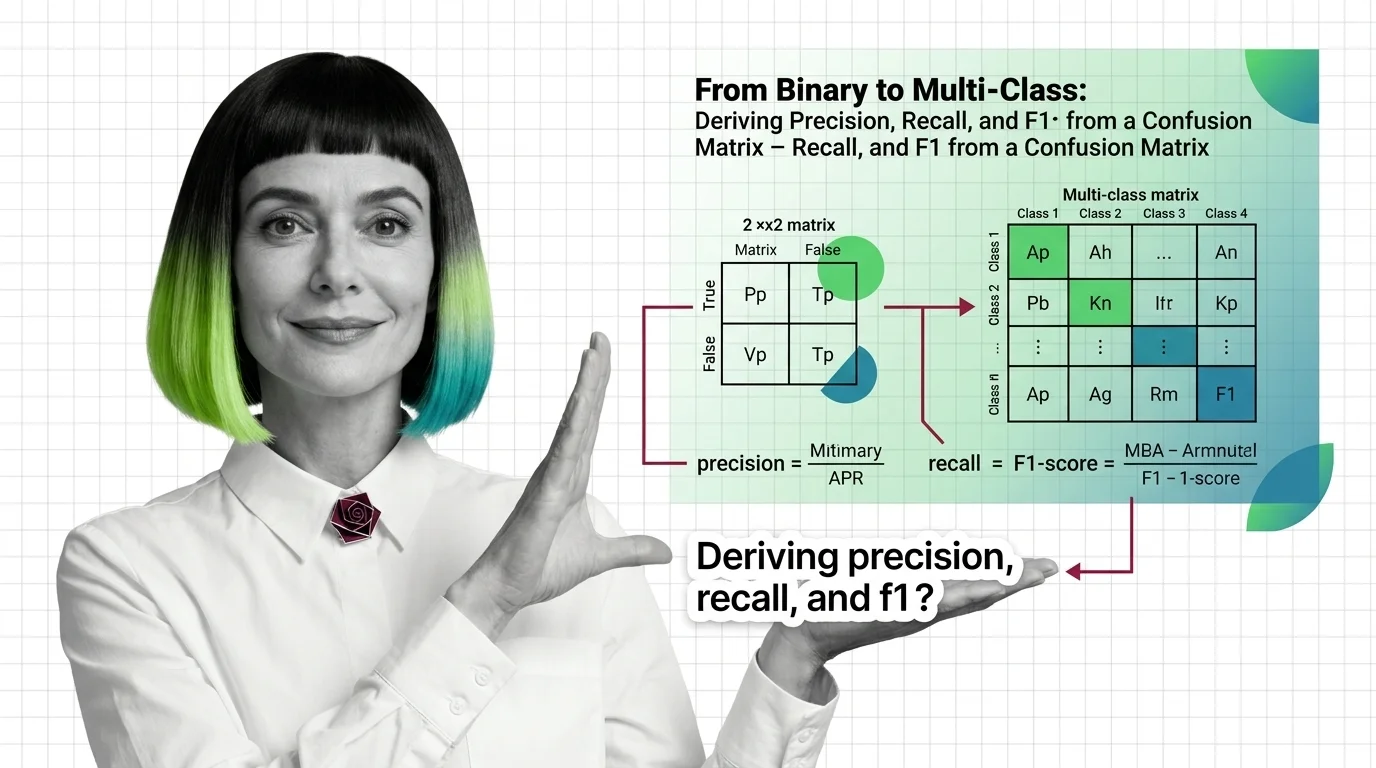

Understand the Fundamentals

A confusion matrix decomposes classifier output into four fundamental outcome types. These explainers unpack how the quadrants relate to each other and reveal why surface-level accuracy so often masks the real story of model performance.
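To make the point about accuracy concrete, here is a minimal sketch that derives accuracy, precision, and recall from the four counts. The counts are invented to represent an imbalanced dataset (10 positives in 1,000 samples), and `summarize` is a hypothetical helper, not part of any library.

```python
def summarize(tp, fp, tn, fn):
    """Derive common metrics from the four confusion-matrix counts.

    Returns None for a metric whose denominator is zero.
    """
    total = tp + fp + tn + fn
    accuracy = (tp + tn) / total if total else None
    precision = tp / (tp + fp) if (tp + fp) else None
    recall = tp / (tp + fn) if (tp + fn) else None
    return {"accuracy": accuracy, "precision": precision, "recall": recall}


# Invented counts: 10 actual positives among 1,000 samples.
metrics = summarize(tp=2, fp=5, tn=985, fn=8)

print(f"accuracy:  {metrics['accuracy']:.3f}")   # 0.987
print(f"precision: {metrics['precision']:.3f}")  # 0.286
print(f"recall:    {metrics['recall']:.3f}")     # 0.200
```

Accuracy looks strong because the 985 true negatives dominate the total, yet the model finds only 2 of the 10 positives. The individual cells expose what the single headline number hides.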

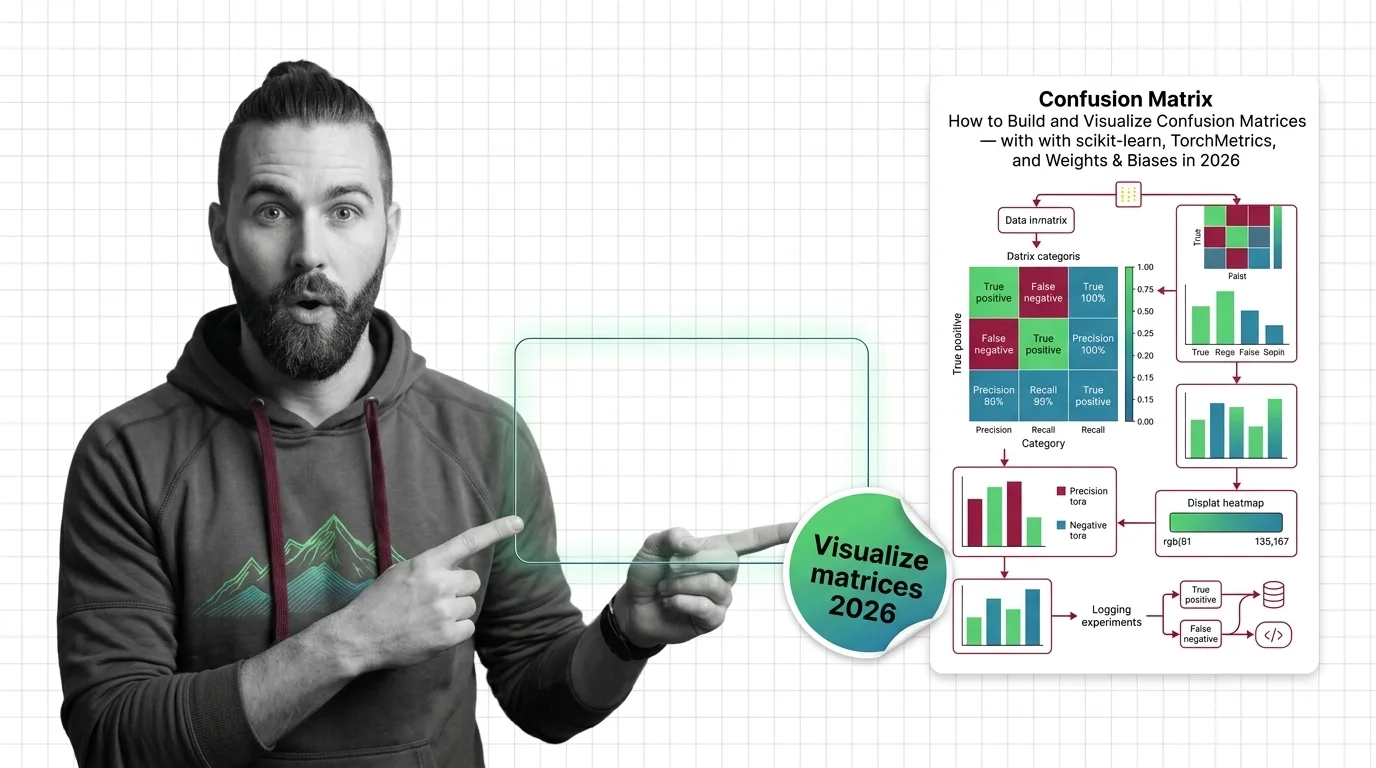

Build with Confusion Matrix

These guides walk through building, visualizing, and interpreting confusion matrices in real production workflows. Expect hands-on tooling choices, normalization tradeoffs, and practical decisions that shape what your evaluation dashboard actually reveals.
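One normalization tradeoff can be sketched without any plotting library. The example below assumes rows are true classes and columns are predicted classes (a common convention, but check your own tooling), and the 3-class matrix is made up for illustration. Normalizing by row puts per-class recall on the diagonal; normalizing by column puts per-class precision there.

```python
def normalize_rows(matrix):
    """Divide each cell by its row total (rows assumed to be true classes)."""
    result = []
    for row in matrix:
        total = sum(row)
        result.append([cell / total if total else 0.0 for cell in row])
    return result


def normalize_columns(matrix):
    """Divide each cell by its column total (columns assumed to be predictions)."""
    col_totals = [sum(col) for col in zip(*matrix)]
    return [
        [cell / total if total else 0.0 for cell, total in zip(row, col_totals)]
        for row in matrix
    ]


# Made-up counts: rows = true class, columns = predicted class.
matrix = [
    [50, 5, 5],
    [2, 10, 8],
    [0, 1, 19],
]

row_norm = normalize_rows(matrix)
col_norm = normalize_columns(matrix)

# The diagonal means different things under each normalization.
recall_per_class = [row_norm[i][i] for i in range(len(matrix))]
precision_per_class = [col_norm[i][i] for i in range(len(matrix))]
print(recall_per_class)
print(precision_per_class)
```

The same raw counts tell different stories: the third class has high recall under row normalization but much lower precision under column normalization, so the choice should follow the question the dashboard is meant to answer.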

What's Changing in 2026

Evaluation methods are evolving rapidly as models grow more complex and are deployed in higher-stakes domains. Staying current on confusion matrix tooling and emerging interpretation practices helps keep your evaluation pipeline up to date.

Updated April 2026

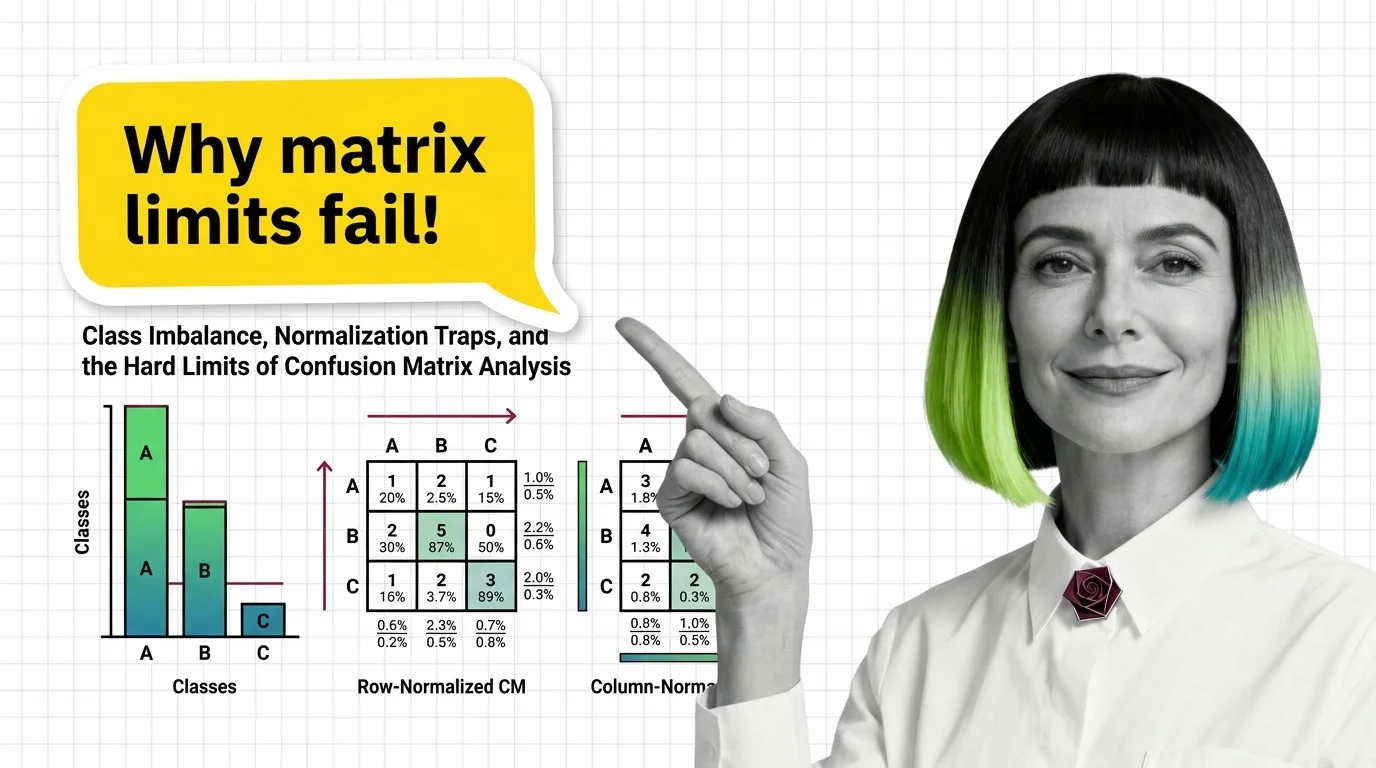

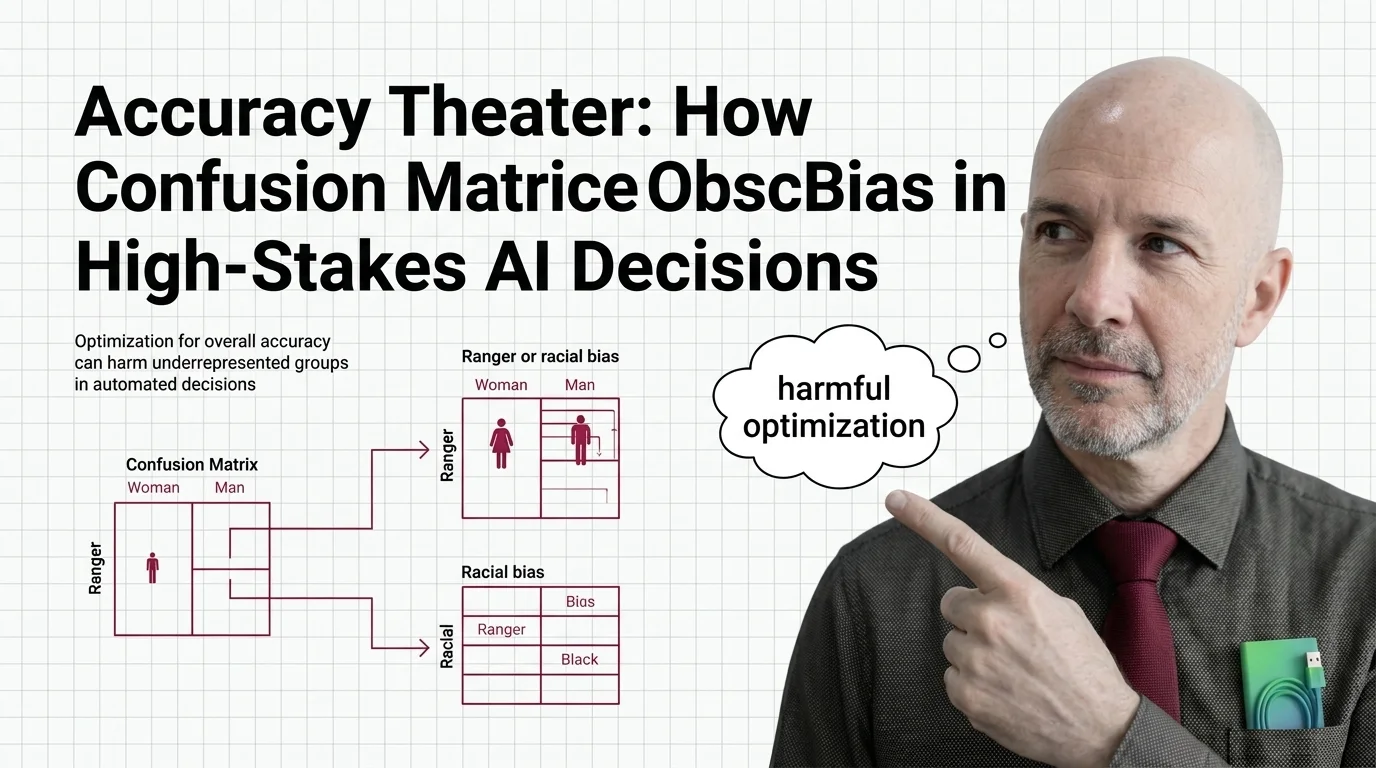

Risks and Considerations

A well-formatted confusion matrix can create false confidence when class imbalance, label noise, or normalization choices obscure the true error distribution. These articles examine where the standard evaluation approach falls short.