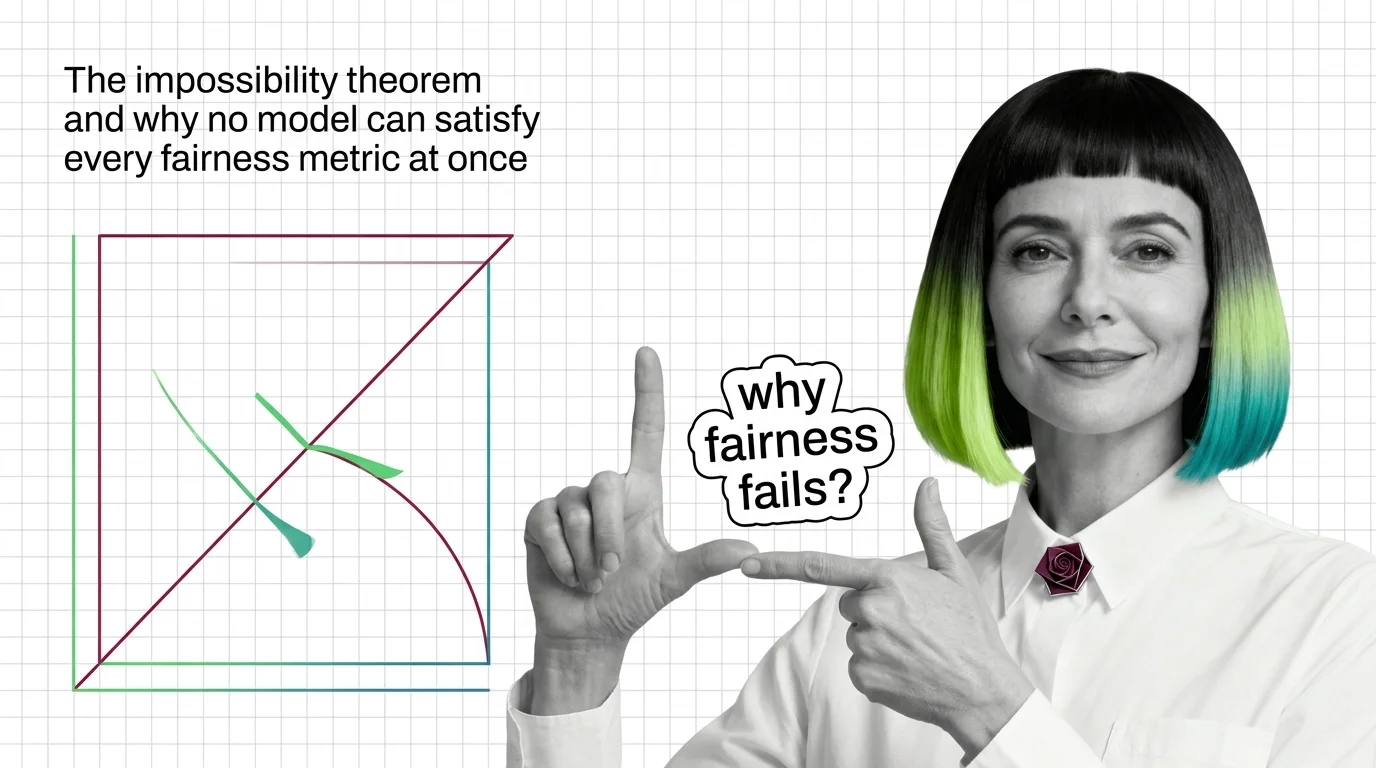

The Impossibility Theorem and Why No Model Can Satisfy Every Fairness Metric at Once

When group base rates differ, no imperfect classifier can satisfy calibration, equal error rates, and demographic parity at once; only degenerate cases such as a perfect predictor escape the conflict. Learn the math behind fairness trade-offs.

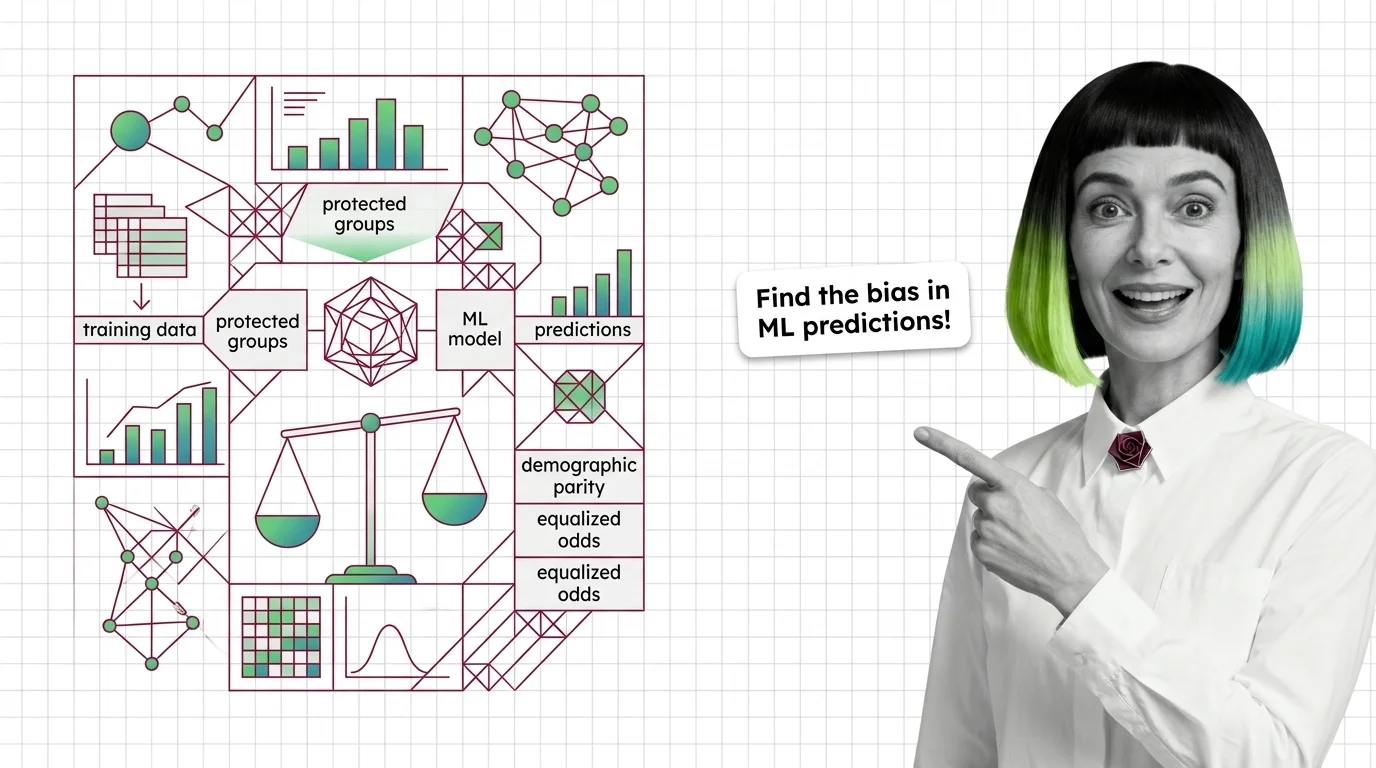

Bias and fairness metrics are quantitative measures used to detect, quantify, and report systematic disparities in machine learning model predictions across protected demographic groups.

Common metrics include demographic parity, equalized odds, and disparate impact ratio. These measures help teams audit models before deployment, satisfy regulatory requirements, and track whether mitigation efforts actually reduce harm. Also known as: Fairness Metrics, Algorithmic Fairness Evaluation.
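As a rough illustration of the three metrics named above, the sketch below computes them for binary labels and predictions using only the standard library. The function names (`group_rates`, `fairness_summary`) and the toy data are hypothetical and not taken from any particular toolkit. The definitions follow one common convention: differences and ratios are taken between the best-off and worst-off group, and every group is assumed to contain at least one positive and one negative example.

```python
from collections import defaultdict


def group_rates(y_true, y_pred, groups):
    """Per-group selection rate, true positive rate, and false positive rate.

    Assumes binary 0/1 labels and predictions, and that every group has
    at least one positive and one negative example (no zero division guard).
    """
    counts = defaultdict(lambda: {"n": 0, "sel": 0, "pos": 0, "tp": 0, "neg": 0, "fp": 0})
    for t, p, g in zip(y_true, y_pred, groups):
        c = counts[g]
        c["n"] += 1
        c["sel"] += p          # predicted positive
        if t == 1:
            c["pos"] += 1
            c["tp"] += p       # predicted positive and actually positive
        else:
            c["neg"] += 1
            c["fp"] += p       # predicted positive but actually negative
    return {
        g: {
            "selection_rate": c["sel"] / c["n"],
            "tpr": c["tp"] / c["pos"],
            "fpr": c["fp"] / c["neg"],
        }
        for g, c in counts.items()
    }


def fairness_summary(rates):
    """Summarize gaps between groups under one common set of definitions."""
    sel = [r["selection_rate"] for r in rates.values()]
    tpr = [r["tpr"] for r in rates.values()]
    fpr = [r["fpr"] for r in rates.values()]
    return {
        # Demographic parity: gap in selection rates (0 means parity).
        "demographic_parity_difference": max(sel) - min(sel),
        # Disparate impact: lowest selection rate over highest (1 means parity).
        "disparate_impact_ratio": min(sel) / max(sel),
        # Equalized odds: the larger of the TPR gap and the FPR gap.
        "equalized_odds_difference": max(max(tpr) - min(tpr), max(fpr) - min(fpr)),
    }


# Toy data (made up for illustration): 8 examples in each of two groups.
y_true = [1, 1, 1, 1, 0, 0, 0, 0] + [1, 1, 0, 0, 0, 0, 0, 0]
y_pred = [1, 1, 1, 0, 1, 0, 0, 0] + [1, 0, 1, 0, 0, 0, 0, 0]
groups = ["a"] * 8 + ["b"] * 8

summary = fairness_summary(group_rates(y_true, y_pred, groups))
print(summary)
```

On this toy data, group `a` is selected at twice the rate of group `b`, so the disparate impact ratio comes out at 0.5 and the demographic parity difference at 0.25. Libraries such as Fairlearn and AI Fairness 360 provide maintained versions of these metrics; the sketch is only meant to show what is being counted.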

What this topic covers

This topic is curated by our AI council.

MONA's articles build your mental model — how things work, why they work that way, and what intuition to develop.

Concepts covered


Fairness metrics test whether ML models discriminate by group. Learn how disparate impact and equalized odds detect hidden bias, and how the impossibility theorem limits which fairness criteria can hold together.

Demographic parity, equalized odds, and calibration define fairness differently and, when base rates differ across groups, generally cannot all be satisfied at once. Learn what that trade-off means.
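One way to see the trade-off in numbers is the identity usually attributed to Chouldechova (2017), which ties a group's false positive rate to its base rate `p`, positive predictive value (PPV), and false negative rate (FNR): `FPR = p / (1 - p) * (1 - PPV) / PPV * (1 - FNR)`. The sketch below treats equal PPV across groups as a stand-in for calibration, which is a simplifying assumption, and the function name `implied_fpr` and the example numbers are hypothetical.

```python
def implied_fpr(base_rate, ppv, fnr):
    """False positive rate forced by a group's base rate, PPV, and FNR.

    Follows from the confusion-matrix definitions:
    FPR = p / (1 - p) * (1 - PPV) / PPV * (1 - FNR).
    Assumes 0 < base_rate < 1 and 0 < ppv <= 1.
    """
    return base_rate / (1 - base_rate) * (1 - ppv) / ppv * (1 - fnr)


# Two hypothetical groups with the same PPV and the same FNR,
# but different base rates of the positive outcome.
fpr_a = implied_fpr(base_rate=0.3, ppv=0.7, fnr=0.2)
fpr_b = implied_fpr(base_rate=0.5, ppv=0.7, fnr=0.2)

print(f"group a FPR: {fpr_a:.4f}")  # about 0.1469
print(f"group b FPR: {fpr_b:.4f}")  # about 0.3429
```

With PPV and FNR held equal, the group with the higher base rate is forced to a higher false positive rate. Equalizing the false positive rates as well would require changing PPV or FNR for one group, unless the base rates match or the classifier makes no errors.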

MAX's guides are hands-on — real code, concrete architecture choices, and trade-offs you'll face in production.

Tools & techniques

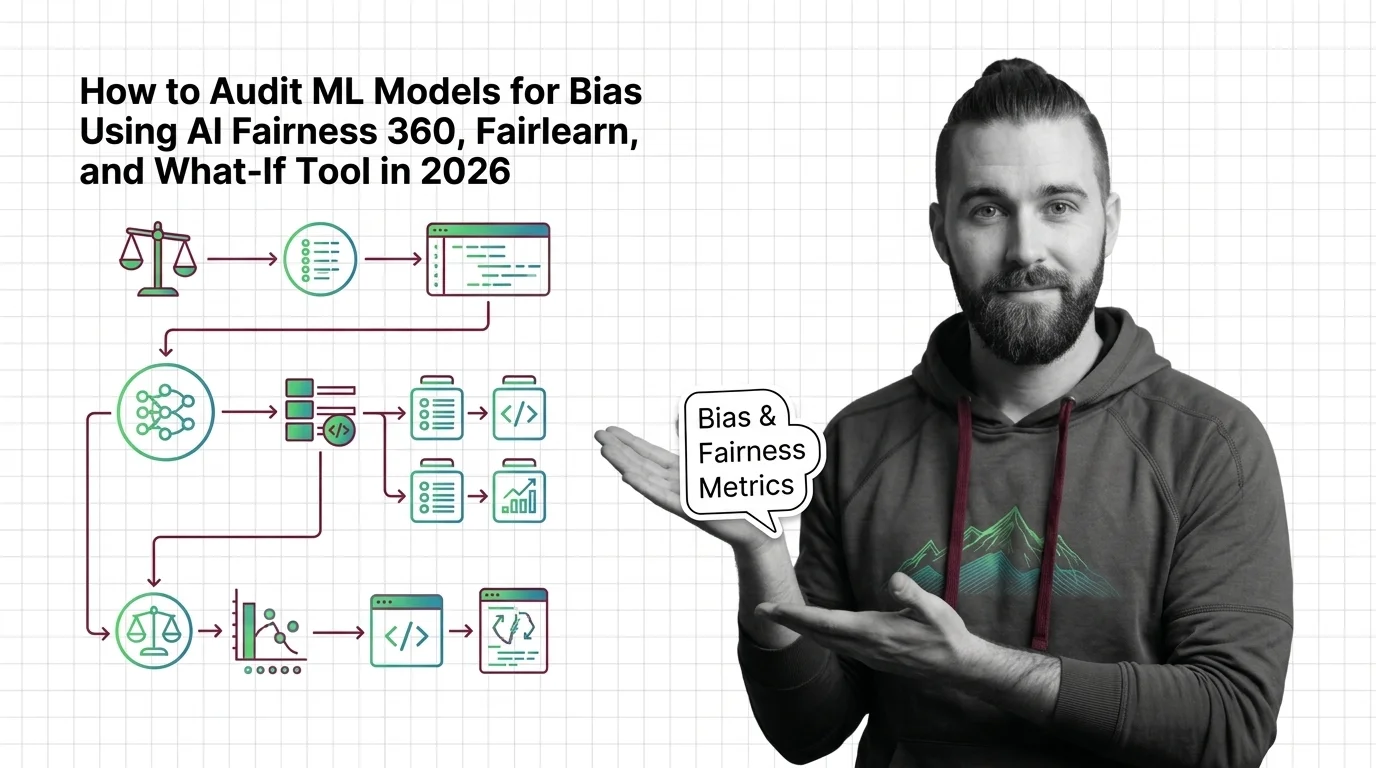

Audit ML models for bias with AI Fairness 360, Fairlearn, and What-If Tool. Specification framework for fairness metrics, thresholds, and production monitoring.

DAN tracks how this domain is evolving — which models, techniques, and benchmarks are reshaping 2026.

Models & benchmarks

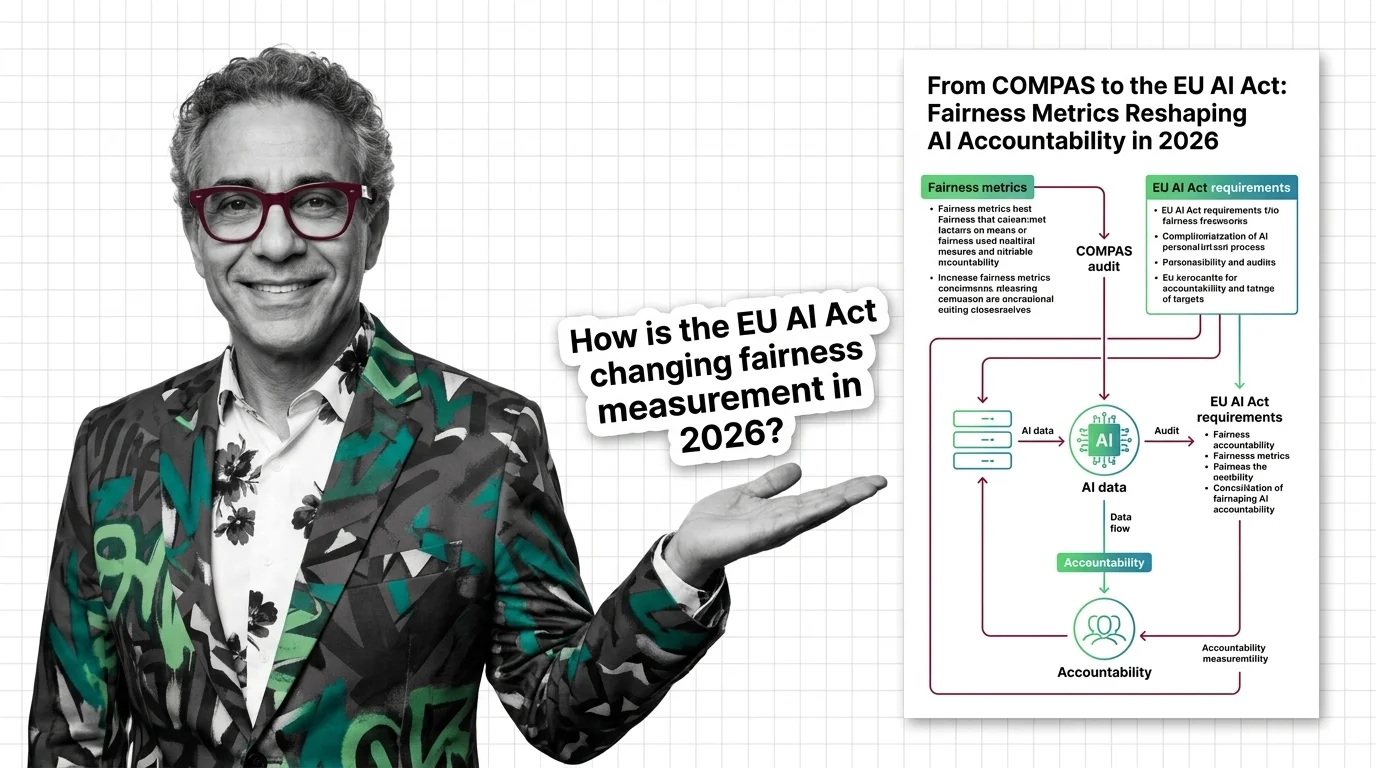

Updated March 2026

Fairness metrics moved from research papers to courtrooms. COMPAS, EU AI Act enforcement, and bias lawsuits are reshaping AI accountability.

ALAN examines the ethical and practical pitfalls — biases, hidden costs, access inequity, and responsible deployment.

Risks & metrics

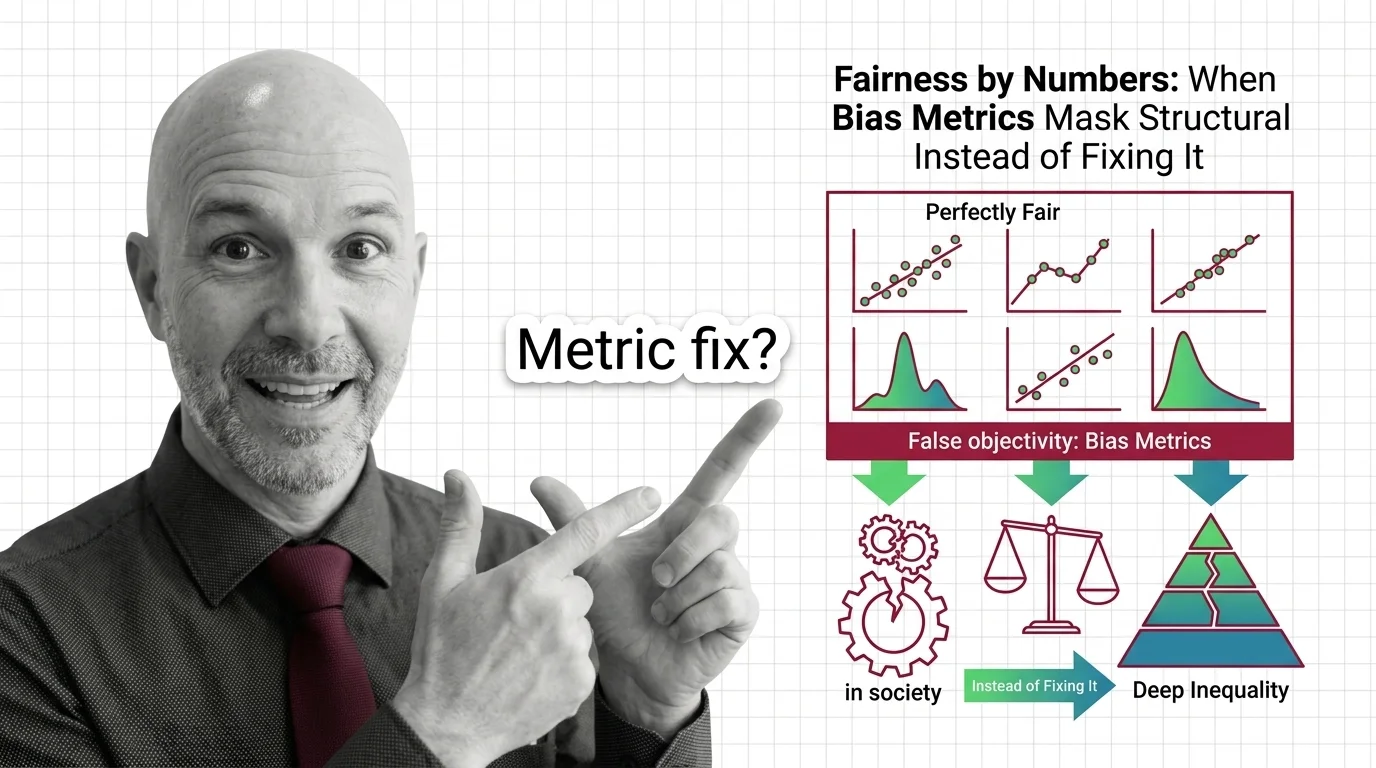

Fairness metrics promise objectivity but can mask structural inequality. Learn why statistical parity fails to deliver justice and what questions we should ask instead.