Bias and Fairness Metrics

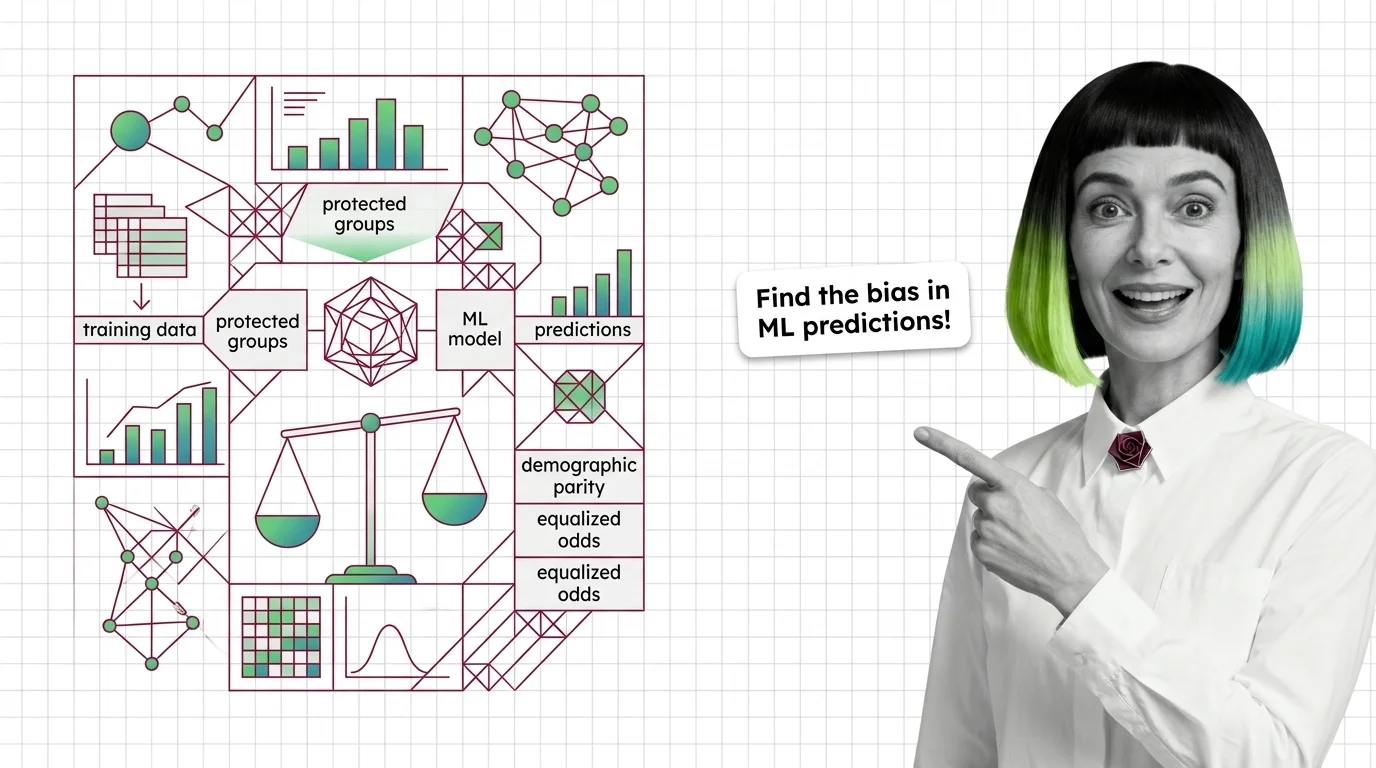

Bias and fairness metrics are quantitative measures used to detect, quantify, and report systematic disparities in machine learning model predictions across protected demographic groups. Common metrics include demographic parity, equalized odds, and disparate impact ratio. These measures help teams audit models before deployment, satisfy regulatory requirements, and track whether mitigation efforts actually reduce harm. Also known as: Fairness Metrics, Algorithmic Fairness Evaluation.
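The sketch below computes the three metrics named above in plain Python. It assumes binary 0/1 labels and predictions. The function names (`group_rates`, `demographic_parity_difference`, and so on) are illustrative and are not a library API. Maintained libraries such as Fairlearn and AIF360 ship their own implementations, and their definitions may differ in detail. Here the disparate impact ratio is the lowest group selection rate divided by the highest, so no reference group has to be chosen.

```python
import math
from collections import defaultdict


def group_rates(y_true, y_pred, groups):
    """Compute selection rate, TPR, and FPR for each group (binary 0/1 labels)."""
    tallies = defaultdict(lambda: {"n": 0, "pred_pos": 0, "pos": 0, "tp": 0, "neg": 0, "fp": 0})
    for t, p, g in zip(y_true, y_pred, groups):
        c = tallies[g]
        c["n"] += 1
        c["pred_pos"] += p
        if t == 1:
            c["pos"] += 1
            c["tp"] += p  # predicted positive and actually positive
        else:
            c["neg"] += 1
            c["fp"] += p  # predicted positive but actually negative
    return {
        g: {
            "selection_rate": c["pred_pos"] / c["n"],
            # NaN when a group has no positive (or no negative) examples
            "tpr": c["tp"] / c["pos"] if c["pos"] else math.nan,
            "fpr": c["fp"] / c["neg"] if c["neg"] else math.nan,
        }
        for g, c in tallies.items()
    }


def _spread(values):
    """Largest minus smallest value, ignoring NaNs."""
    vals = [v for v in values if not math.isnan(v)]
    return max(vals) - min(vals) if vals else math.nan


def demographic_parity_difference(rates):
    return _spread(r["selection_rate"] for r in rates.values())


def disparate_impact_ratio(rates):
    # Lowest selection rate divided by highest, so no reference group is needed
    sel = [r["selection_rate"] for r in rates.values()]
    return min(sel) / max(sel) if max(sel) > 0 else math.nan


def equalized_odds_difference(rates):
    # The larger of the between-group TPR gap and FPR gap
    return max(_spread(r["tpr"] for r in rates.values()),
               _spread(r["fpr"] for r in rates.values()))


# Hypothetical toy data: true labels, model predictions, group membership
y_true = [1, 0, 1, 0, 1, 0, 1, 0]
y_pred = [1, 0, 1, 1, 0, 0, 1, 0]
groups = ["a", "a", "a", "a", "b", "b", "b", "b"]

rates = group_rates(y_true, y_pred, groups)
print(demographic_parity_difference(rates))  # 0.5
print(disparate_impact_ratio(rates))         # 0.3333333333333333
print(equalized_odds_difference(rates))      # 0.5
```

In this toy data, group "a" is selected 75% of the time and group "b" 25% of the time. That gap yields a ratio of one third, well below any commonly used tolerance.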

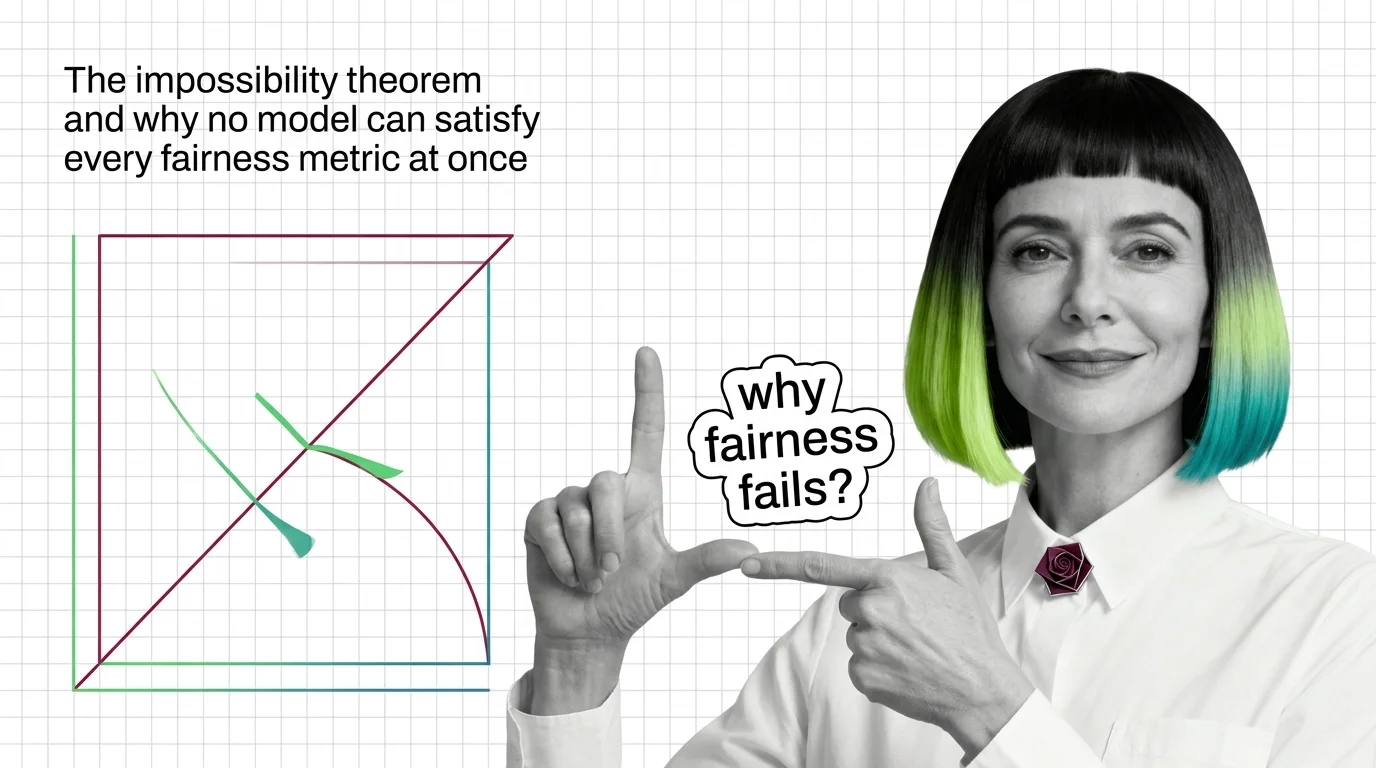

Understand the Fundamentals

Bias and fairness metrics formalize intuitions about equitable treatment into testable hypotheses. Understanding what each metric actually measures, and where it stays silent, is the foundation for responsible model evaluation.
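One common formalization turns each metric into an equality, or a ratio, that can be tested on held-out data. It is written here for a binary prediction $\hat{Y}$, a true label $Y$, and a protected attribute $A$ with two groups $a$ and $b$. This notation is assumed for illustration.

```latex
\begin{aligned}
\text{Demographic parity:} \quad & P(\hat{Y}=1 \mid A=a) = P(\hat{Y}=1 \mid A=b) \\
\text{Equalized odds:} \quad & P(\hat{Y}=1 \mid Y=y, A=a) = P(\hat{Y}=1 \mid Y=y, A=b), \quad y \in \{0, 1\} \\
\text{Disparate impact ratio:} \quad & \mathrm{DIR} = \frac{P(\hat{Y}=1 \mid A=a)}{P(\hat{Y}=1 \mid A=b)}
\end{aligned}
```

In the ratio, $b$ is usually the reference (often privileged) group. Demographic parity never conditions on $Y$, so it says nothing about error rates. Equalized odds does condition on $Y$, so it inherits any bias already present in the labels.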

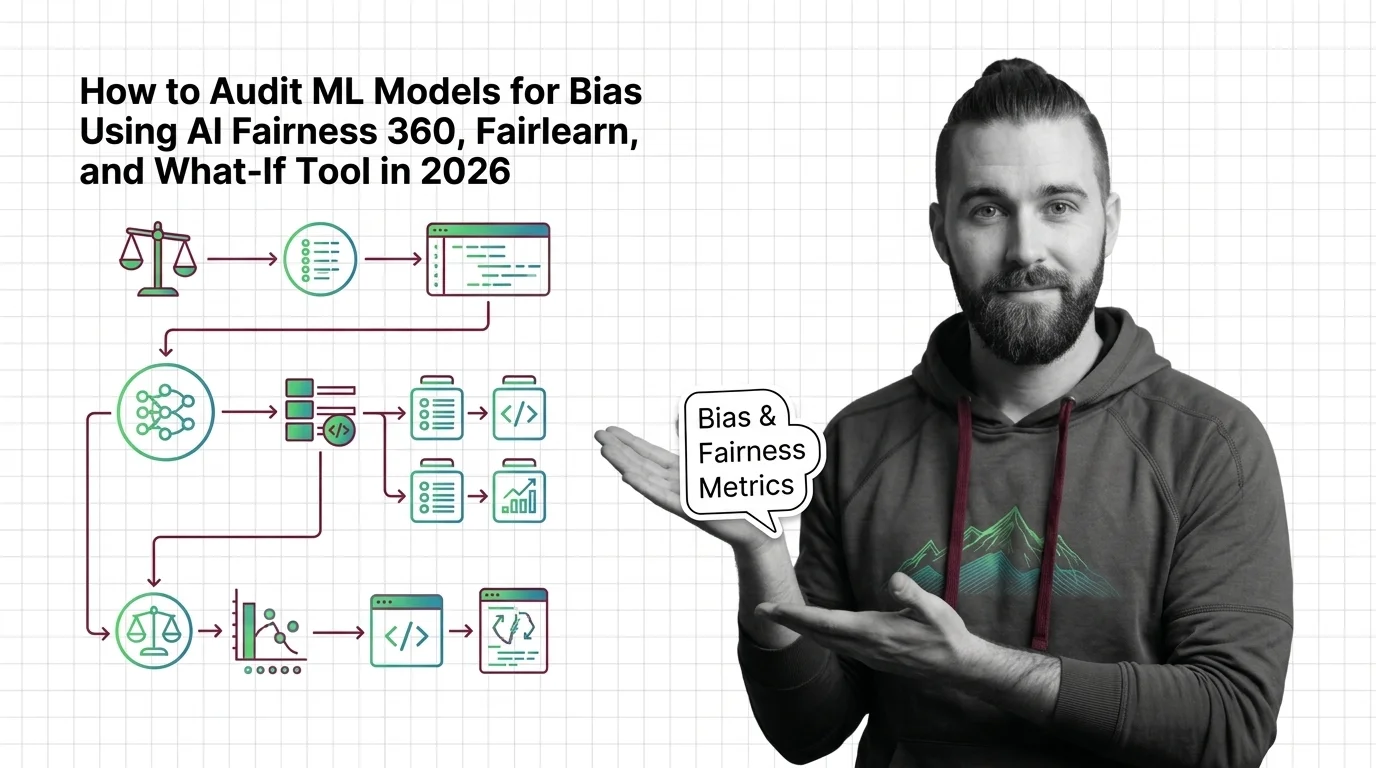

Build with Bias and Fairness Metrics

Implementing bias and fairness metrics means choosing which definitions of fairness apply to your use case, integrating measurement into your evaluation pipeline, and deciding what thresholds trigger action.
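One way to wire measurement into an evaluation pipeline is a gate that compares metric values against policy thresholds and reports any violations. This is a sketch under stated assumptions. The `FairnessThresholds` class, the metric key names, and the 0.1 limits are all hypothetical. The 0.8 disparate impact floor echoes the US "four-fifths rule" from employment selection, but the right threshold depends on your domain and jurisdiction.

```python
from dataclasses import dataclass


@dataclass
class FairnessThresholds:
    # Hypothetical policy values: choose thresholds that fit your own use case
    min_disparate_impact: float = 0.8  # mirrors the "four-fifths rule" heuristic
    max_dp_difference: float = 0.1
    max_eo_difference: float = 0.1


def fairness_gate(metrics, thresholds=None):
    """Return a list of violation messages; an empty list means the check passes."""
    th = thresholds or FairnessThresholds()
    violations = []
    if metrics["disparate_impact_ratio"] < th.min_disparate_impact:
        violations.append(
            f"disparate impact ratio {metrics['disparate_impact_ratio']:.2f} "
            f"< {th.min_disparate_impact}")
    if metrics["demographic_parity_difference"] > th.max_dp_difference:
        violations.append(
            f"demographic parity difference {metrics['demographic_parity_difference']:.2f} "
            f"> {th.max_dp_difference}")
    if metrics["equalized_odds_difference"] > th.max_eo_difference:
        violations.append(
            f"equalized odds difference {metrics['equalized_odds_difference']:.2f} "
            f"> {th.max_eo_difference}")
    return violations


# Hypothetical metric values from one evaluation run
metrics = {
    "disparate_impact_ratio": 0.85,
    "demographic_parity_difference": 0.12,
    "equalized_odds_difference": 0.04,
}
problems = fairness_gate(metrics)
for msg in problems:
    print("FAIRNESS CHECK FAILED:", msg)
```

The gate returns messages instead of raising an exception, which keeps it reusable. A CI job could fail the build on a non-empty list (for example with `sys.exit(1)`), while a monitoring dashboard could simply log the messages.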

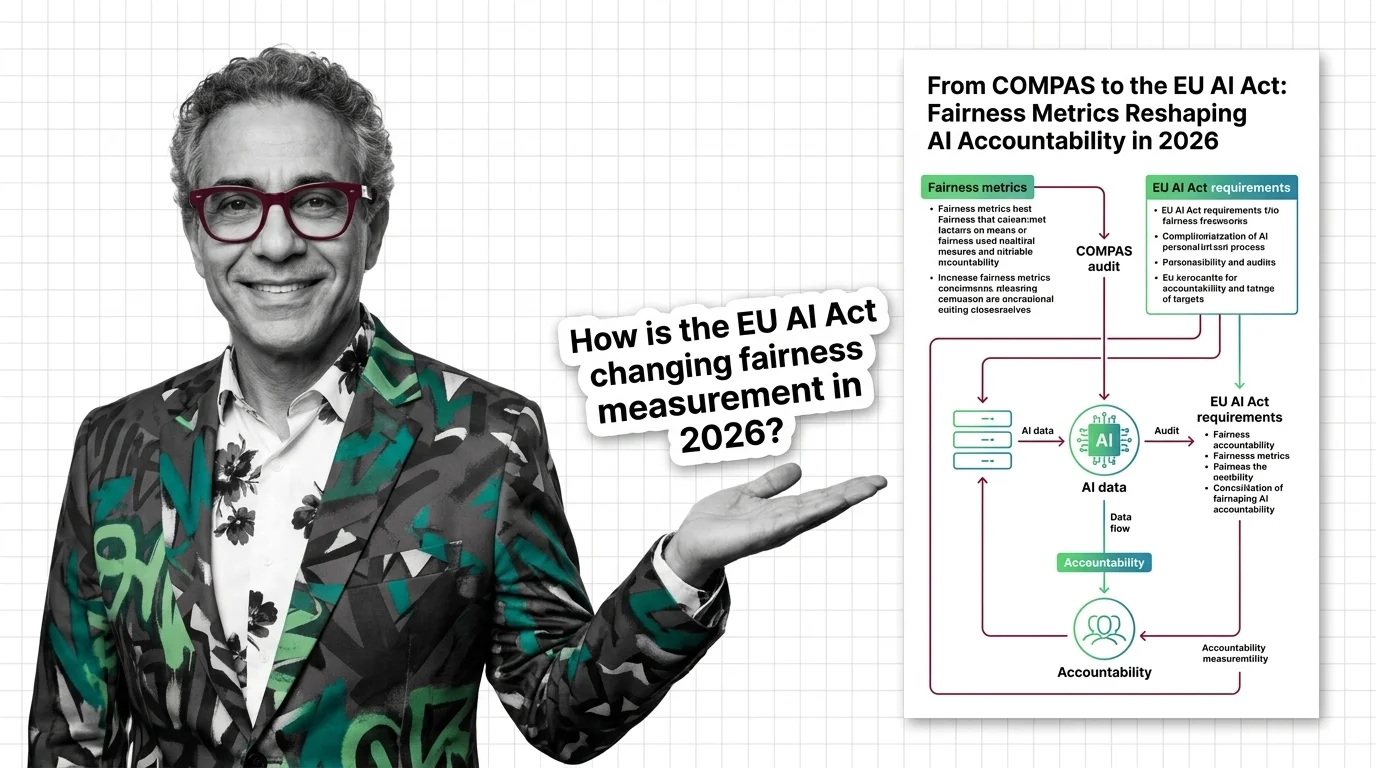

What's Changing in 2026

Regulatory frameworks and industry standards around bias and fairness metrics are evolving rapidly. Staying current on which metrics regulators expect and which new approaches are gaining traction directly affects compliance timelines.

Updated March 2026

Risks and Considerations

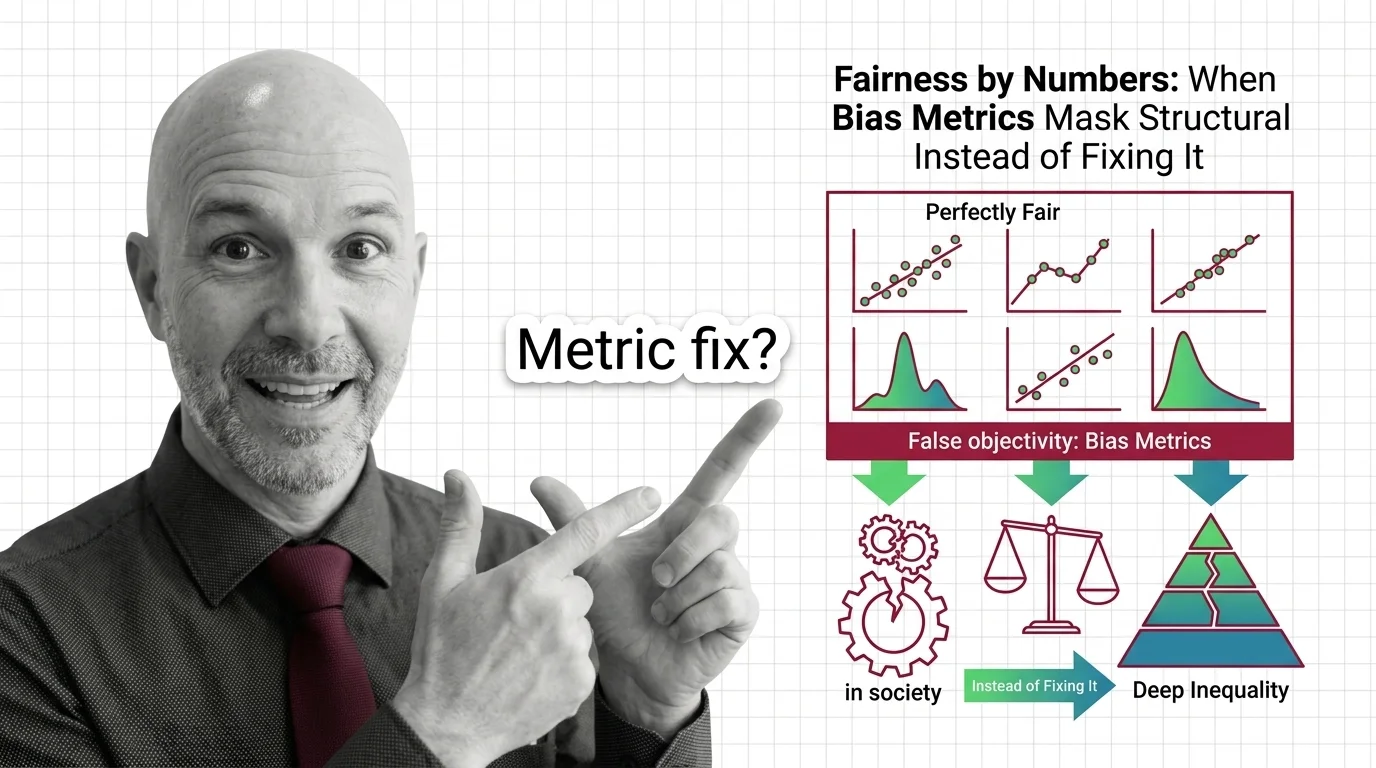

No single fairness metric captures every dimension of harm, and optimizing for one can degrade another. Before relying on any measurement framework, consider what forms of bias it cannot detect and who bears the residual risk.
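The trade-off between metrics shows up even on a tiny example. This is a hypothetical illustration with two groups whose base rates of the positive label differ, not a general proof. A known result says that for a binary label, demographic parity and equalized odds can hold together only if the base rates are equal or the predictions carry no information about the label. The helper names below are illustrative.

```python
def summarize(y_true, y_pred):
    """Selection rate, TPR, and FPR for one group (binary 0/1 labels)."""
    pos_preds = [p for t, p in zip(y_true, y_pred) if t == 1]
    neg_preds = [p for t, p in zip(y_true, y_pred) if t == 0]
    return {
        "selection_rate": sum(y_pred) / len(y_pred),
        "tpr": sum(pos_preds) / len(pos_preds),
        "fpr": sum(neg_preds) / len(neg_preds),
    }


def gaps(r_a, r_b):
    """Absolute between-group gap for each rate."""
    return {k: abs(r_a[k] - r_b[k]) for k in r_a}


# Hypothetical toy data: group A has a 50% base rate, group B has 25%
y_a = [1, 1, 0, 0]
y_b = [1, 0, 0, 0]

# 1) A perfect classifier: TPR and FPR match across groups (equalized odds holds),
#    but selection rates follow the unequal base rates (demographic parity fails)
perfect = gaps(summarize(y_a, y_a), summarize(y_b, y_b))
print(perfect)  # {'selection_rate': 0.25, 'tpr': 0.0, 'fpr': 0.0}

# 2) Equalize selection rates by turning one true negative in B into a positive:
#    demographic parity now holds, but B's FPR rises (equalized odds fails)
pred_b = [1, 1, 0, 0]
parity = gaps(summarize(y_a, y_a), summarize(y_b, pred_b))
print(parity)   # {'selection_rate': 0.0, 'tpr': 0.0, 'fpr': 0.3333333333333333}
```

In the second case, the residual risk falls on the group-B members who were wrongly selected. These are exactly the people a demographic parity check alone would never flag.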