Attention Mechanism

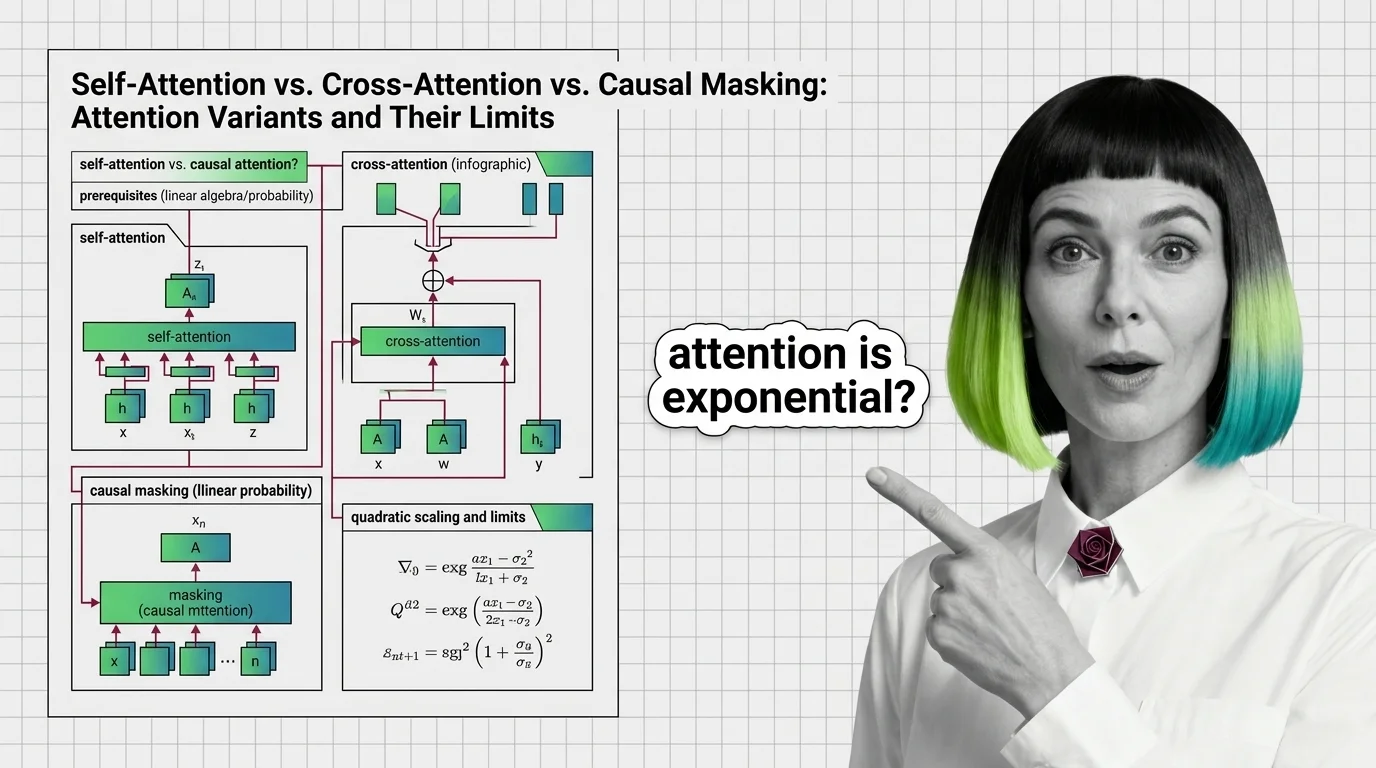

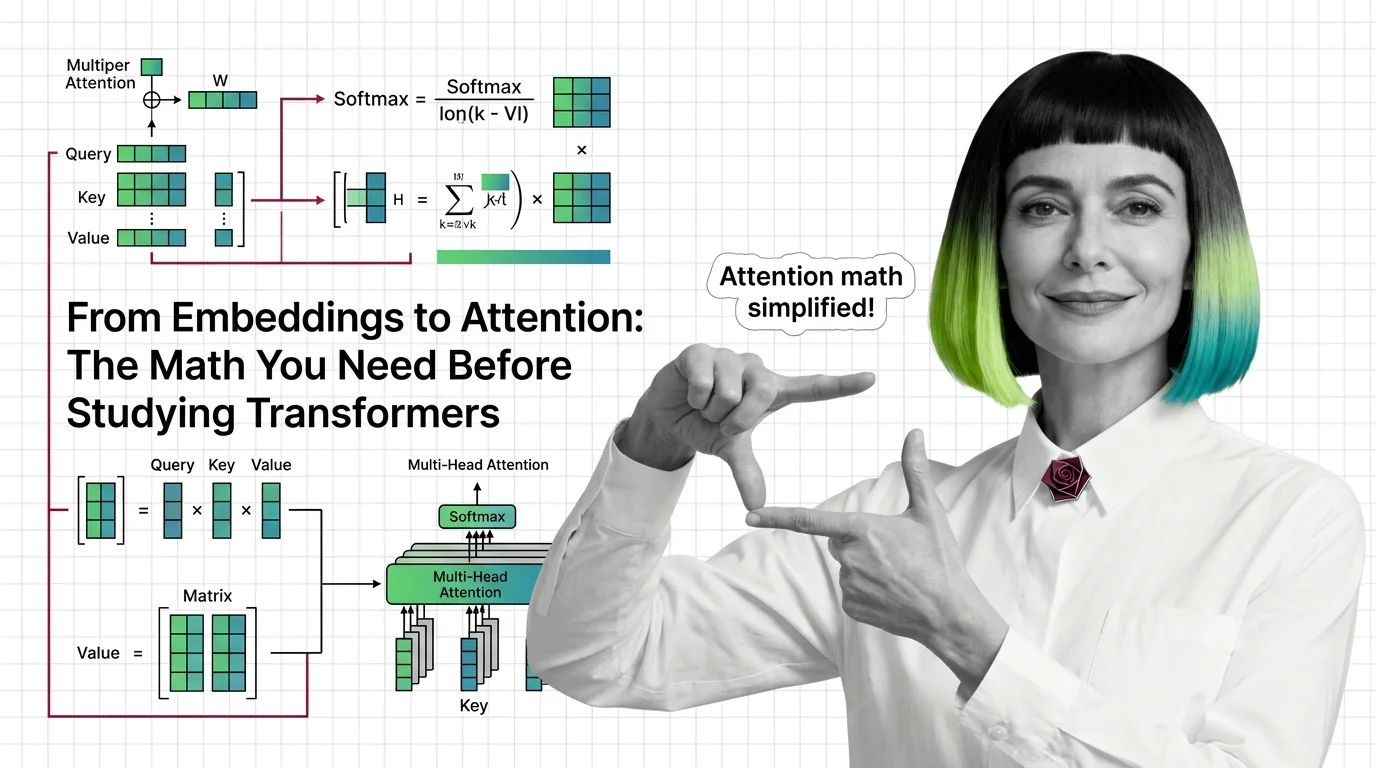

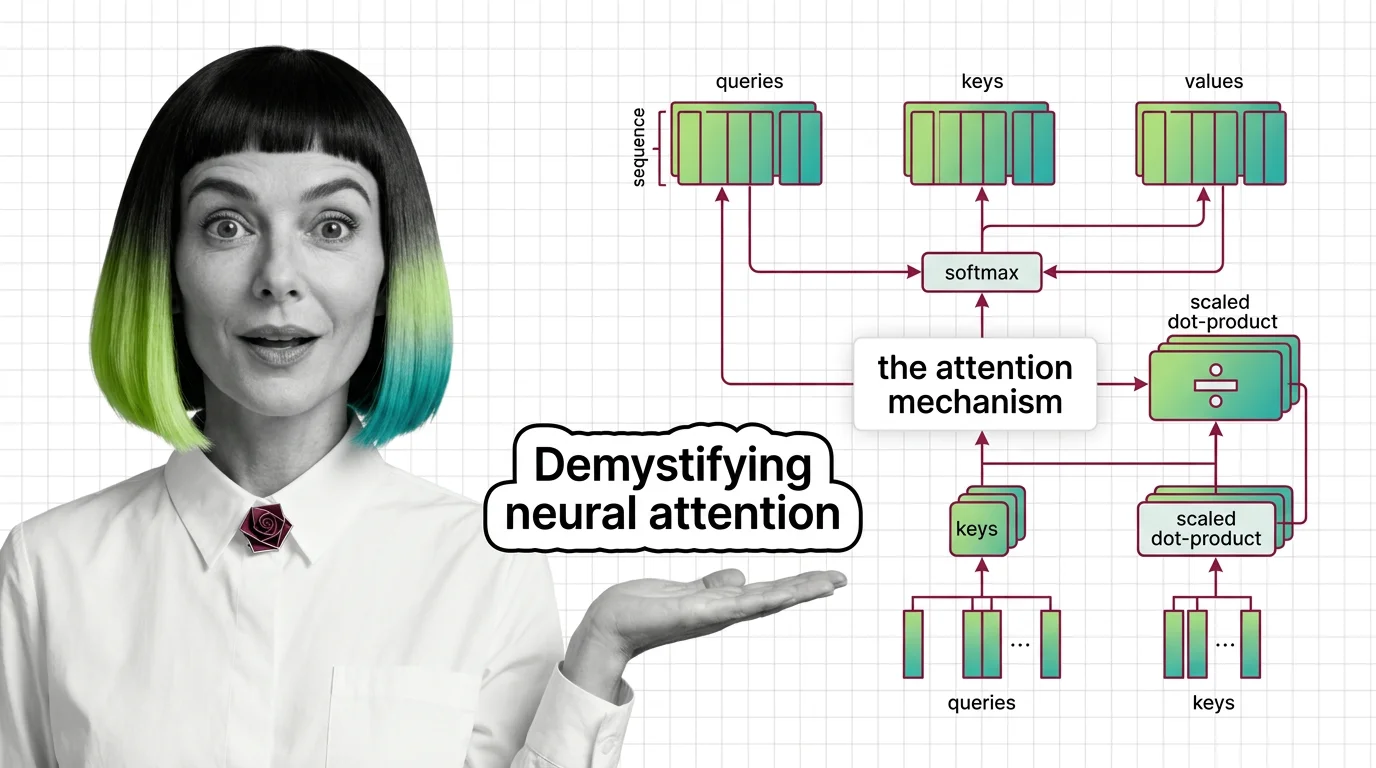

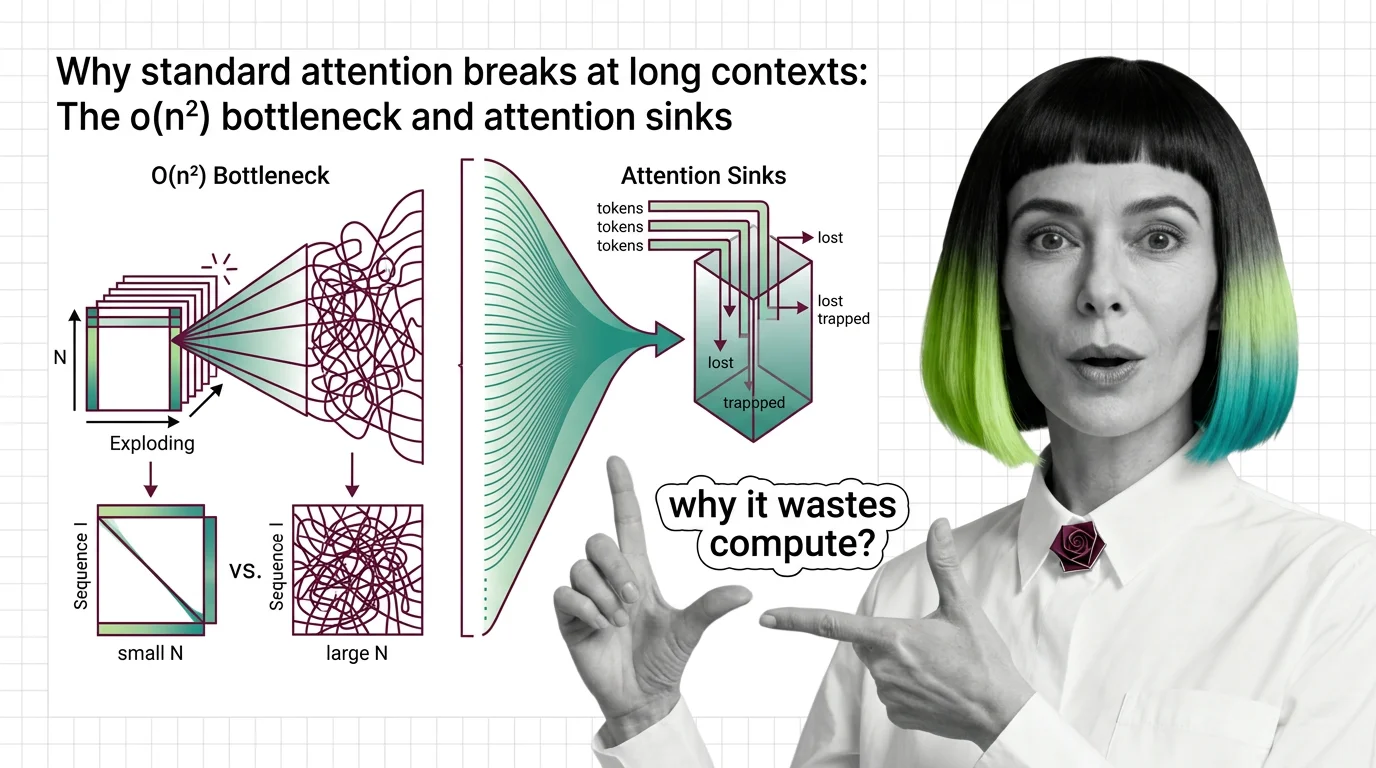

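An attention mechanism is a neural network component that lets a model dynamically focus on the most relevant parts of its input when generating each piece of output. Instead of treating every input token equally, attention does three things:

1. It computes a relevance score between the current position and every input position.
2. It normalizes those scores into weights, typically with a softmax.
3. It uses the weights to take a weighted average of the inputs.

This lets the model prioritize the context that matters most. Common variants include:

- self-attention, where a sequence attends to itself;
- cross-attention, where one sequence attends to another, such as a decoder attending to an encoder's output;
- scaled dot-product attention, the scoring function used in Transformers.

Also known as: Self-Attention, Attention.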
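
The weighted-relevance idea can be made concrete with a small sketch of scaled dot-product attention. It is written in plain Python and assumes no NumPy or deep-learning framework. It deliberately skips the learned query/key/value projections and the multi-head splitting that real Transformer layers use. The function names and toy vectors are illustrative, not any library's API.

```python
import math
from typing import List, Tuple

Matrix = List[List[float]]


def softmax(scores: List[float]) -> List[float]:
    # Subtract the max before exponentiating for numerical stability.
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def scaled_dot_product_attention(q: Matrix, k: Matrix, v: Matrix) -> Tuple[Matrix, Matrix]:
    """Return (outputs, weights) for each query row attending over k and v.

    Shapes (as lists of rows): q is n_q x d_k, k is n_k x d_k, v is n_k x d_v.
    """
    d_k = len(k[0])
    d_v = len(v[0])
    outputs: Matrix = []
    weights: Matrix = []
    for q_row in q:
        # Relevance score of every key for this query, scaled by sqrt(d_k).
        scores = [sum(a * b for a, b in zip(q_row, k_row)) / math.sqrt(d_k) for k_row in k]
        w = softmax(scores)  # non-negative weights that sum to 1
        weights.append(w)
        # Output = weighted average of the value vectors.
        outputs.append([sum(w_j * v_row[c] for w_j, v_row in zip(w, v)) for c in range(d_v)])
    return outputs, weights


if __name__ == "__main__":
    # Self-attention: queries, keys and values all come from the same sequence.
    # Hypothetical toy "token" vectors; real models first apply learned
    # projections (W_Q, W_K, W_V) to produce q, k and v.
    x = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
    out, w = scaled_dot_product_attention(x, x, x)
    for i, row in enumerate(w):
        print(f"token {i} weights:", [round(p, 3) for p in row])
    # Cross-attention would instead pass queries from one sequence and
    # keys/values from another, e.g. (hypothetical names):
    # scaled_dot_product_attention(decoder_states, encoder_states, encoder_states)
```

Each row of the weight matrix is a probability distribution over the input positions. Self-attention and cross-attention differ only in where q, k and v come from, not in the scoring math.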

Understand the Fundamentals

Attention mechanisms are what let modern language models connect a pronoun to a noun a paragraph away. These explainers unpack the math and intuition behind how relevance scores are computed and why architecture choices matter.
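
These relevance scores are usually computed with scaled dot-product attention, as defined in the original Transformer paper ("Attention Is All You Need", Vaswani et al., 2017). The notation is as follows:

- Q, K and V stack the query, key and value vectors q_i, k_j and v_j as rows.
- d_k is the key dimension.
- alpha_ij is the weight position i places on position j.
- o_i is the resulting output for position i.

```latex
\operatorname{Attention}(Q, K, V) = \operatorname{softmax}\!\left(\frac{Q K^{\top}}{\sqrt{d_k}}\right) V,
\qquad
\alpha_{ij} = \frac{\exp\!\left(q_i \cdot k_j / \sqrt{d_k}\right)}{\sum_{j'} \exp\!\left(q_i \cdot k_{j'} / \sqrt{d_k}\right)},
\qquad
o_i = \sum_j \alpha_{ij} \, v_j
```

Nothing in the formula itself penalizes the distance between i and j, because every position is scored against every other. That is how a pronoun's query can place a large weight on a noun a paragraph back.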

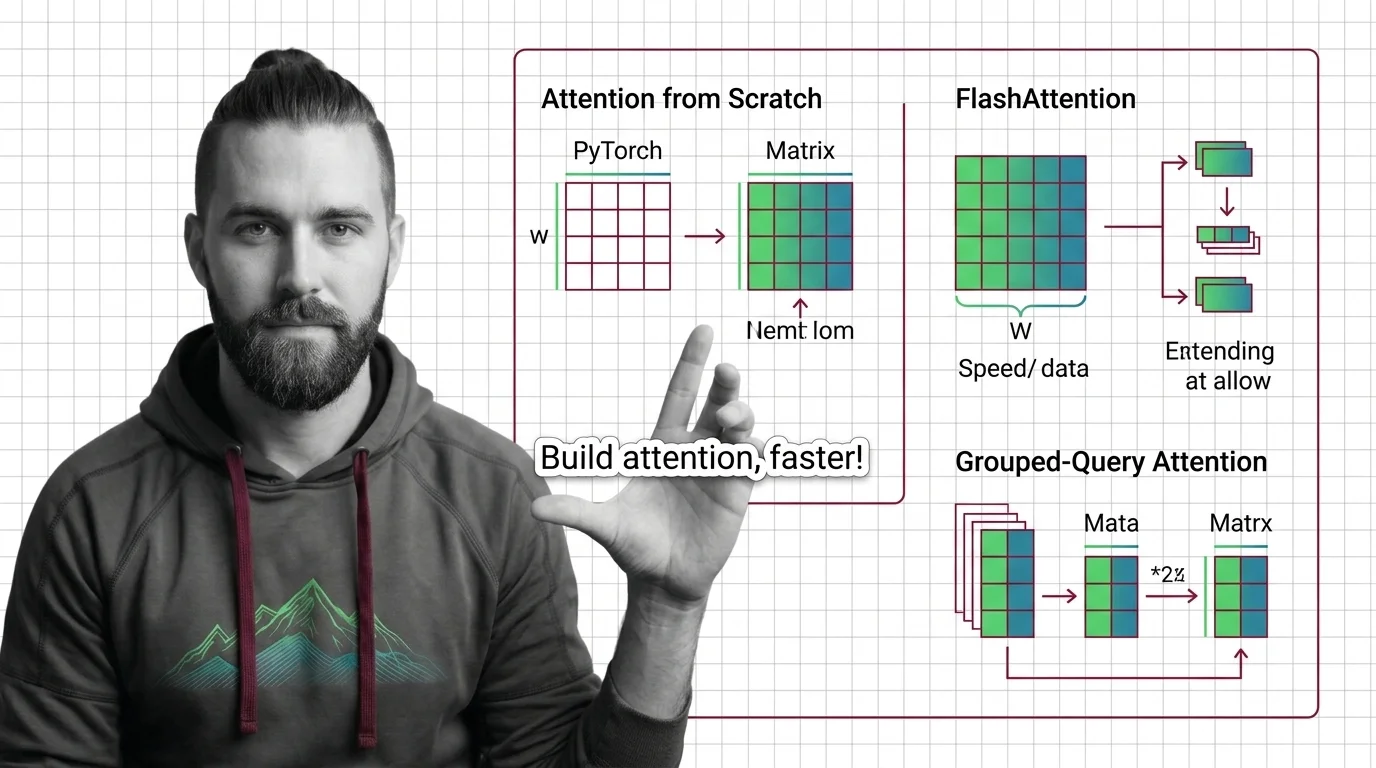

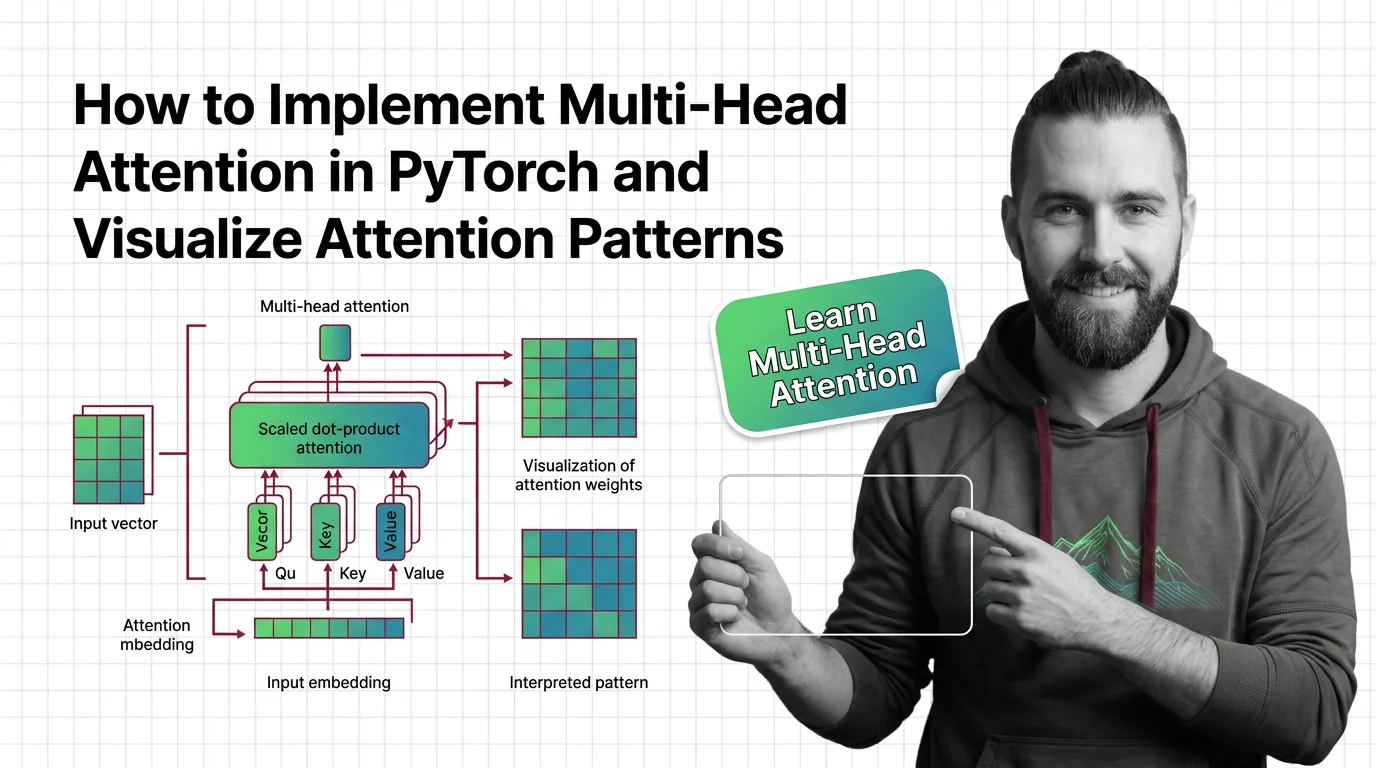

Build with Attention Mechanism

Implementing attention from scratch reveals trade-offs between memory, speed, and expressiveness that library abstractions hide. These guides walk through real code and visualization techniques you can adapt to your own projects.
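
The memory/speed trade-off shows up even for a single query. A naive implementation materializes every score before normalizing. The sketch below instead uses a running (online) softmax: it processes one key/value pair at a time and keeps only three pieces of state, a running max, a running denominator and a running weighted sum. Memory-efficient kernels such as FlashAttention use the same rescaling idea. This is a plain-Python illustration, however, not that library's implementation, and the name `attend_streaming` is hypothetical.

```python
import math
from typing import List, Sequence

Vector = List[float]


def attend_streaming(
    q: Sequence[float],
    keys: Sequence[Sequence[float]],
    values: Sequence[Sequence[float]],
) -> Vector:
    """Attention output for one query, visiting one key/value pair at a time.

    Keeps O(d_v) running state instead of storing all n scores; the result
    equals the standard softmax-weighted average up to rounding.
    """
    d_k = len(q)
    running_max = -math.inf        # largest score seen so far
    denom = 0.0                    # sum of exp(score - running_max)
    acc = [0.0] * len(values[0])   # weighted sum of values, same scaling as denom
    for k, v in zip(keys, values):
        score = sum(a * b for a, b in zip(q, k)) / math.sqrt(d_k)
        new_max = max(running_max, score)
        # Rescale earlier terms to the new max; exp(-inf) == 0.0 on the first step.
        rescale = math.exp(running_max - new_max)
        w = math.exp(score - new_max)
        denom = denom * rescale + w
        acc = [a * rescale + w * x for a, x in zip(acc, v)]
        running_max = new_max
    return [a / denom for a in acc]


if __name__ == "__main__":
    # Hypothetical toy inputs: one query over three key/value pairs.
    q = [1.0, 0.5]
    keys = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
    values = [[10.0], [20.0], [30.0]]
    print(attend_streaming(q, keys, values))
```

The design choice is memory versus work. The loop never holds more than one score at a time, but every step pays for an extra exponential and a rescale of the accumulator. Production kernels process blocks of keys rather than single keys to keep the hardware busy.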

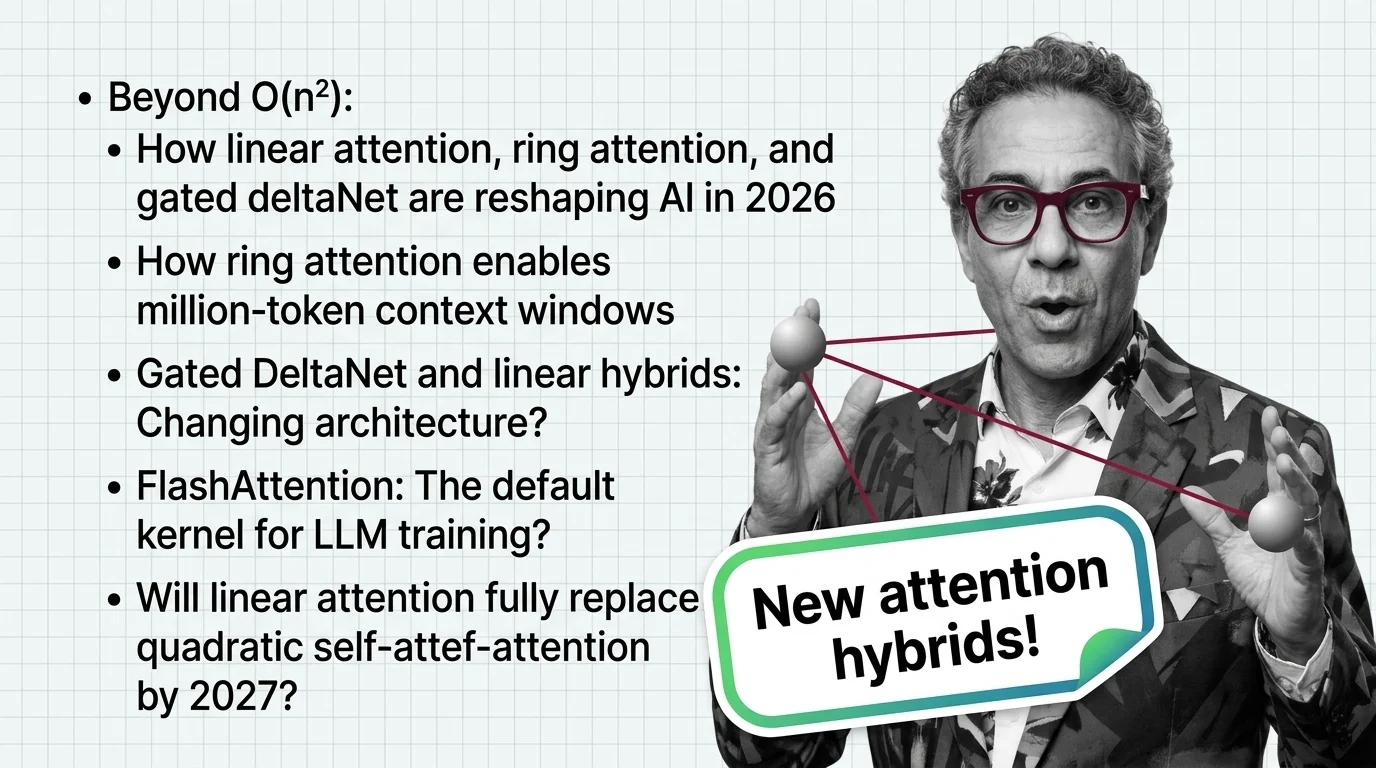

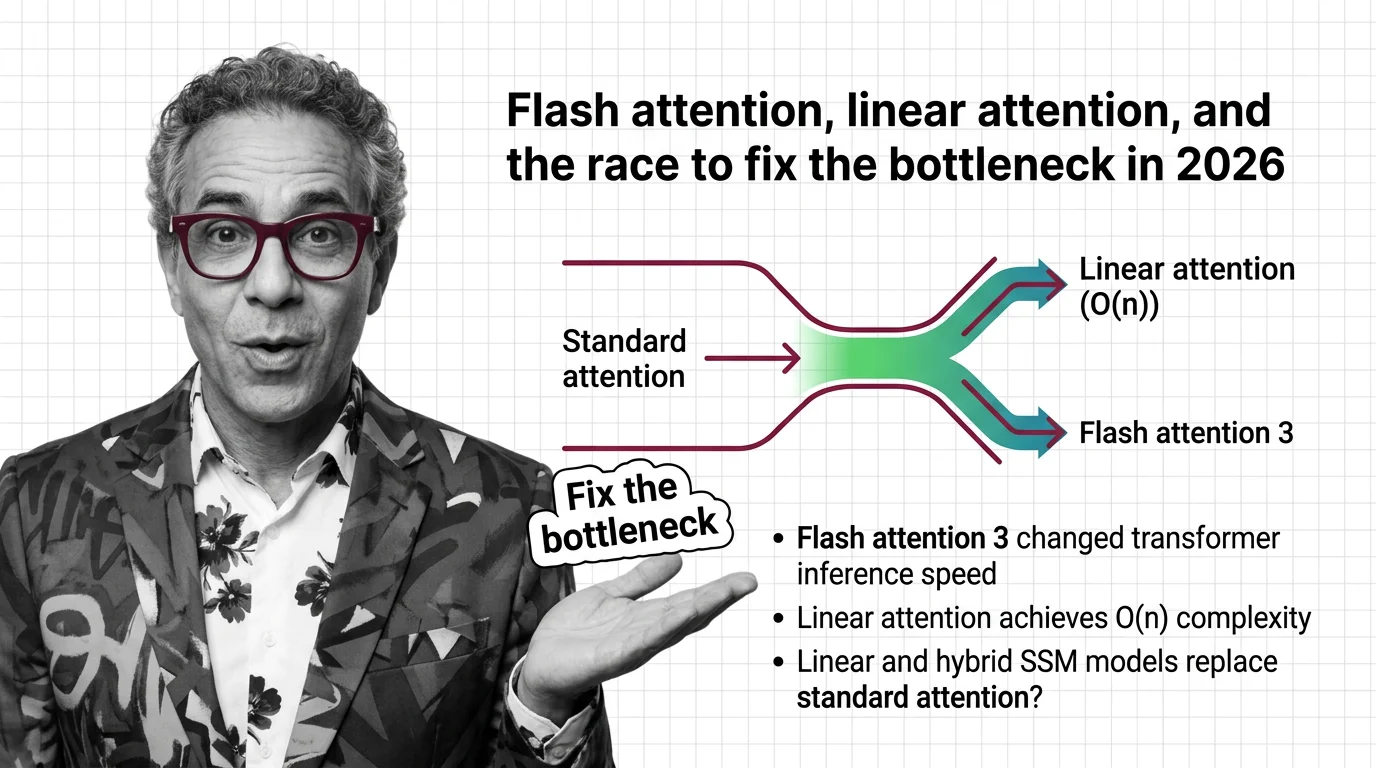

What's Changing in 2026

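Attention efficiency is one of the most active research frontiers in AI. New variants are emerging that challenge a long-standing computational limit: the cost of standard attention grows quadratically with sequence length, because every token is compared with every other token. Staying current here means understanding which breakthroughs will reshape model capabilities next.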
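
A quick back-of-the-envelope calculation shows why this limit matters. The sketch below counts the bytes needed to hold one full attention score matrix. It assumes 16-bit scores, a single head and a single layer, and `score_matrix_bytes` is a hypothetical helper. Kernels that avoid storing the whole matrix at once change the memory picture, but exact attention still computes a quadratic number of scores.

```python
def score_matrix_bytes(seq_len: int, num_heads: int = 1, bytes_per_elem: int = 2) -> int:
    """Bytes needed to materialize one layer's attention score matrix.

    Standard attention scores every query against every key, giving a
    seq_len x seq_len matrix per head. bytes_per_elem=2 assumes 16-bit
    (fp16/bf16) scores.
    """
    return seq_len * seq_len * num_heads * bytes_per_elem


if __name__ == "__main__":
    for n in (1_024, 8_192, 65_536, 131_072):
        gib = score_matrix_bytes(n) / 2**30
        print(f"{n:>7} tokens: {gib:10.3f} GiB per head per layer")
```

Because the matrix has seq_len × seq_len entries, an 8x longer sequence needs 64x the memory. At 8,192 tokens the matrix takes 0.125 GiB per head per layer, while at 65,536 tokens it takes 8 GiB.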

Updated March 2026

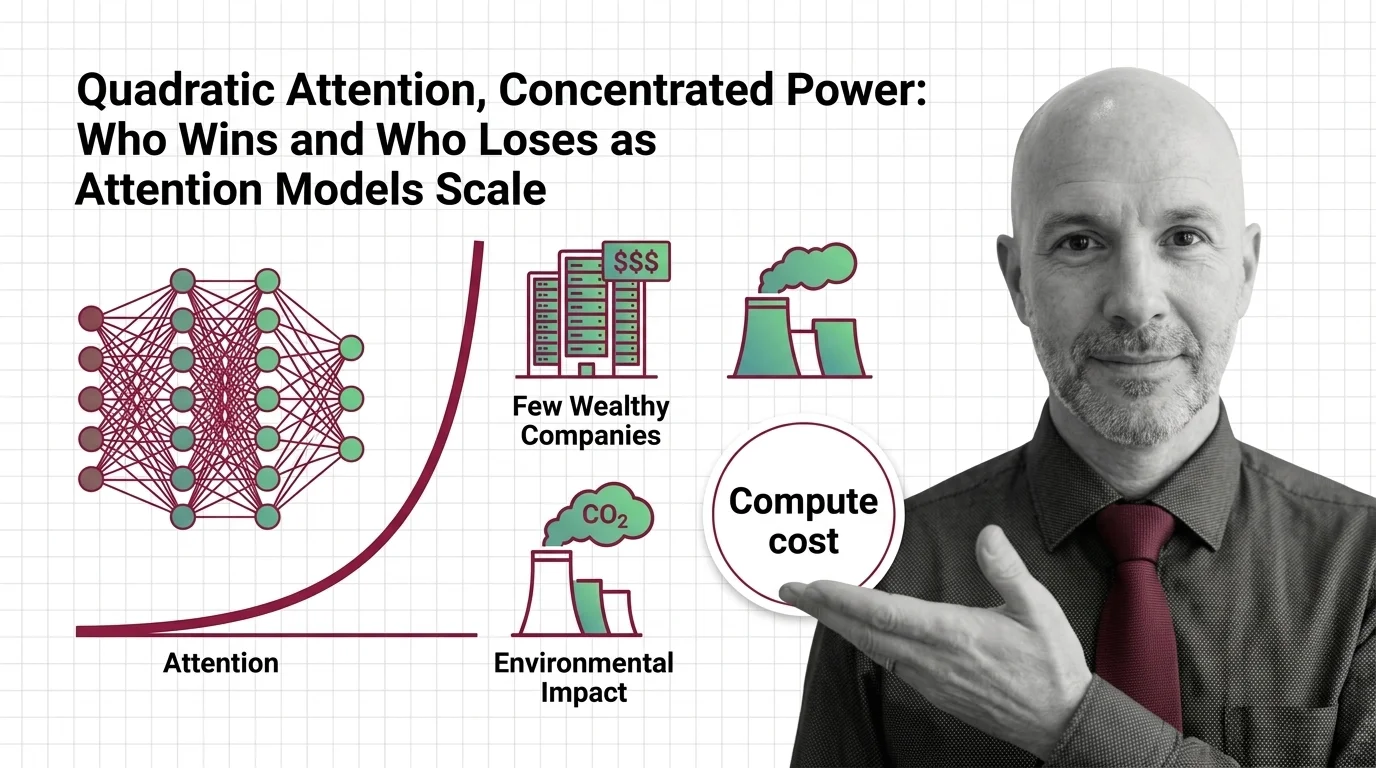

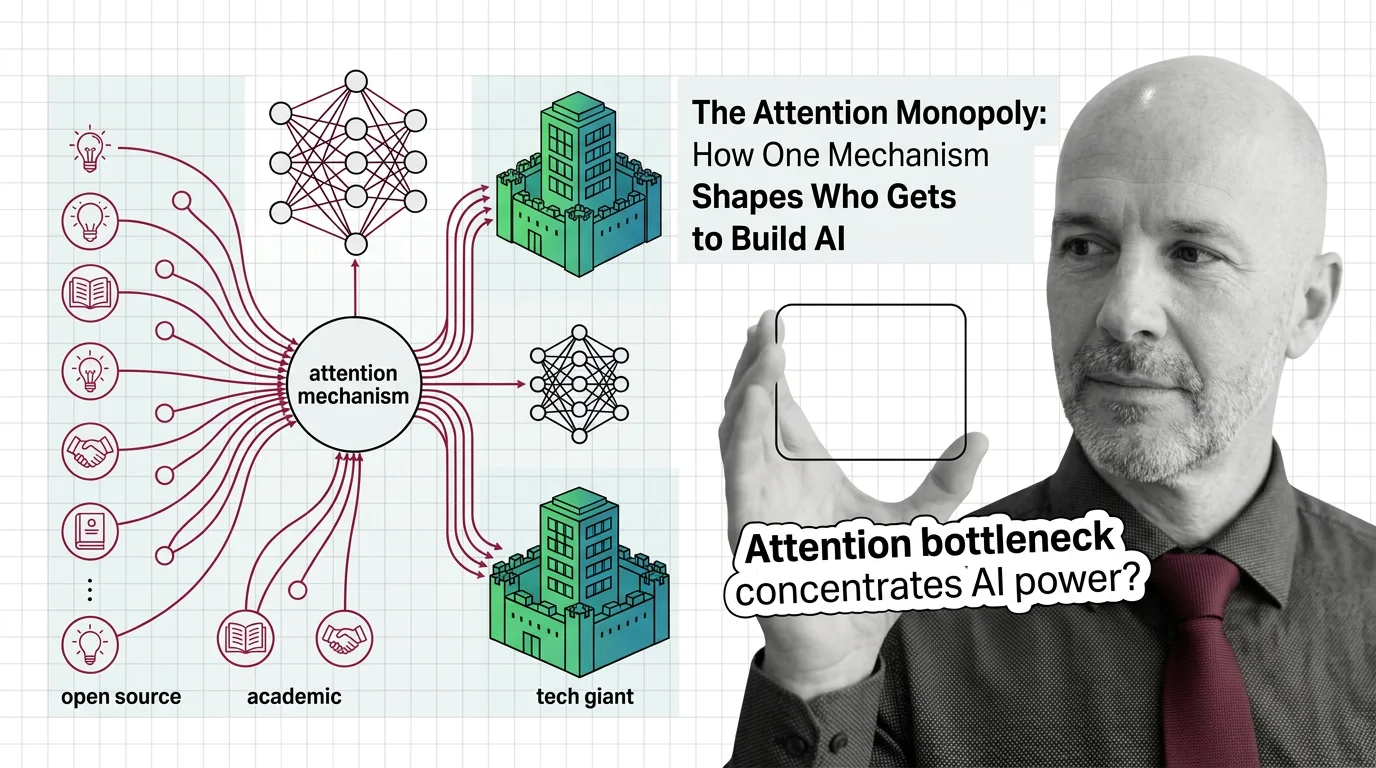

Risks and Considerations

The computational cost of attention concentrates advanced AI development among well-resourced organizations. Understanding these dynamics is essential for anyone concerned about equitable access to the technology.