From Diffusion to InstructPix2Pix: AI Image Editing Prerequisites

Before using GPT Image or FLUX, understand diffusion, classifier-free guidance, and why InstructPix2Pix made instruction-based editing tractable.

AI image editing uses machine learning models to modify existing photos or artwork through text instructions, masked regions, or style references.

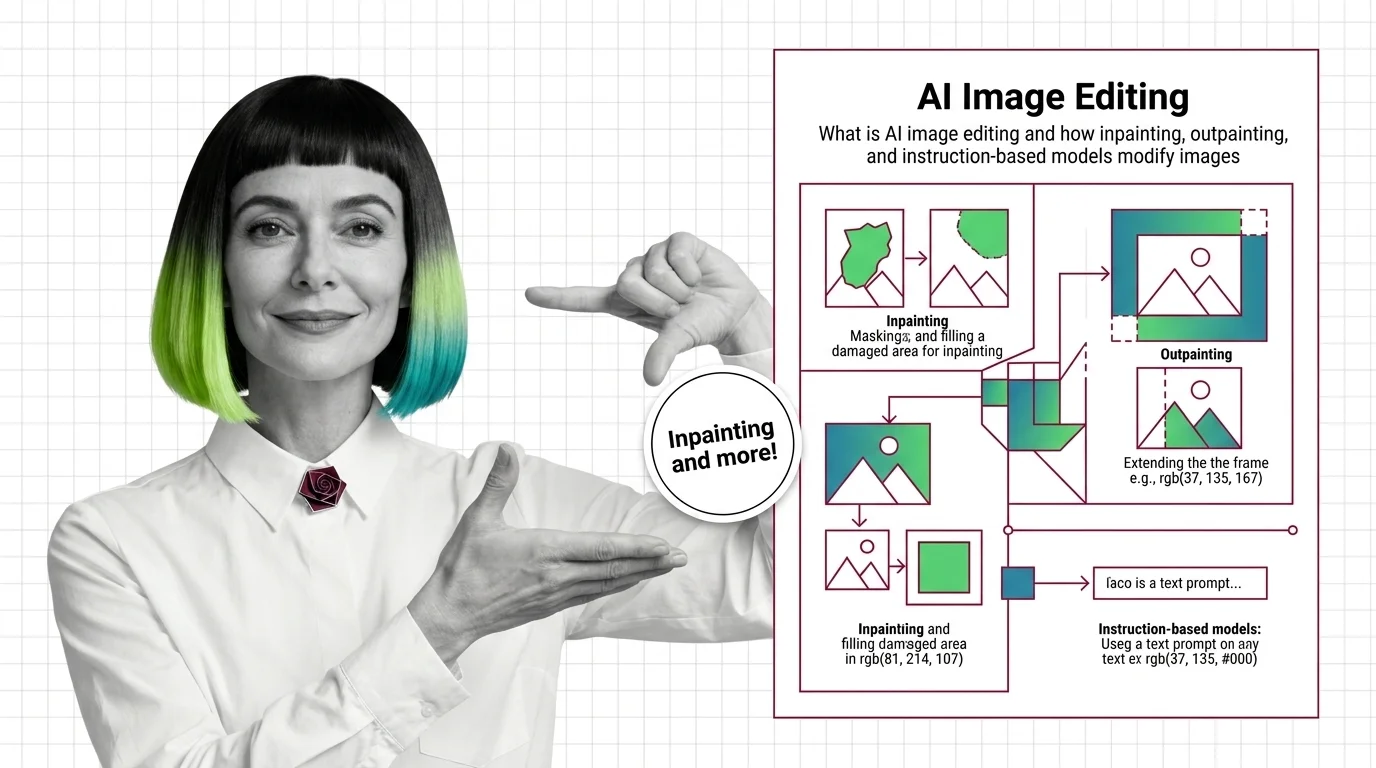

Techniques include inpainting (filling masked areas), outpainting (extending an image beyond its original edges), and instruction-based editing, where a user types a change in plain language and the model rewrites only what needs to change.

Also known as: Generative Fill, AI Inpainting
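As a rough illustration of the masked techniques, the sketch below treats an image as a small grid of grayscale values. It assumes a generator has already produced replacement pixels; `composite_inpaint` and `outpaint_canvas` are hypothetical helper names, not part of any library, and a real pipeline would use an array library and a diffusion model rather than nested lists.

```python
def composite_inpaint(original, generated, mask):
    """Keep original pixels where mask is 0; take generated pixels where mask is 1."""
    return [
        [g if m else o for o, g, m in zip(o_row, g_row, m_row)]
        for o_row, g_row, m_row in zip(original, generated, mask)
    ]


def outpaint_canvas(image, pad, fill=0):
    """Center `image` on a larger canvas and mark the new border as editable.

    Outpainting can then be treated as inpainting over the border region.
    """
    width = len(image[0])
    new_width = width + 2 * pad
    # Top border rows: blank pixels, fully editable.
    canvas = [[fill] * new_width for _ in range(pad)]
    mask = [[1] * new_width for _ in range(pad)]
    # Middle rows: original pixels protected (0), side borders editable (1).
    for row in image:
        canvas.append([fill] * pad + list(row) + [fill] * pad)
        mask.append([1] * pad + [0] * width + [1] * pad)
    # Bottom border rows.
    canvas += [[fill] * new_width for _ in range(pad)]
    mask += [[1] * new_width for _ in range(pad)]
    return canvas, mask
```

The design point is that the mask, not the model, guarantees that unmasked pixels stay untouched: whatever the generator proposes outside the mask is discarded at composite time.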

What this topic covers

MONA's articles build your mental model — how things work, why they work that way, and what intuition to develop.

Concepts covered

AI image editing uses diffusion to modify pixels under a mask or follow text instructions. Learn how inpainting, outpainting, and edit models work.
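Classifier-free guidance, one of the prerequisites this topic names, can be sketched as a simple extrapolation between noise predictions. The code below is a minimal sketch that represents each prediction as a flat list of floats. The function names are illustrative, and the two-scale variant follows the form we understand InstructPix2Pix to use (one scale for the input image, one for the text instruction), so treat it as an assumption to check against the paper.

```python
def cfg_combine(eps_uncond, eps_cond, guidance_scale):
    """Standard classifier-free guidance: push the prediction toward the condition.

    guidance_scale = 0 gives the unconditional prediction, 1 the conditional one,
    and values above 1 extrapolate past it.
    """
    return [u + guidance_scale * (c - u) for u, c in zip(eps_uncond, eps_cond)]


def dual_cfg_combine(eps_none, eps_image, eps_image_text, image_scale, text_scale):
    """Two-scale guidance in the style of instruction-based editing (assumed form).

    eps_none:       prediction with no conditioning
    eps_image:      prediction conditioned on the input image only
    eps_image_text: prediction conditioned on the image and the instruction
    """
    return [
        n + image_scale * (i - n) + text_scale * (it - i)
        for n, i, it in zip(eps_none, eps_image, eps_image_text)
    ]
```

Raising the image scale keeps the result closer to the source picture, while raising the text scale makes the edit follow the instruction more aggressively; balancing the two is the practical knob in instruction-based editing.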

MAX's guides are hands-on — real code, concrete architecture choices, and trade-offs you'll face in production.

Tools & techniques

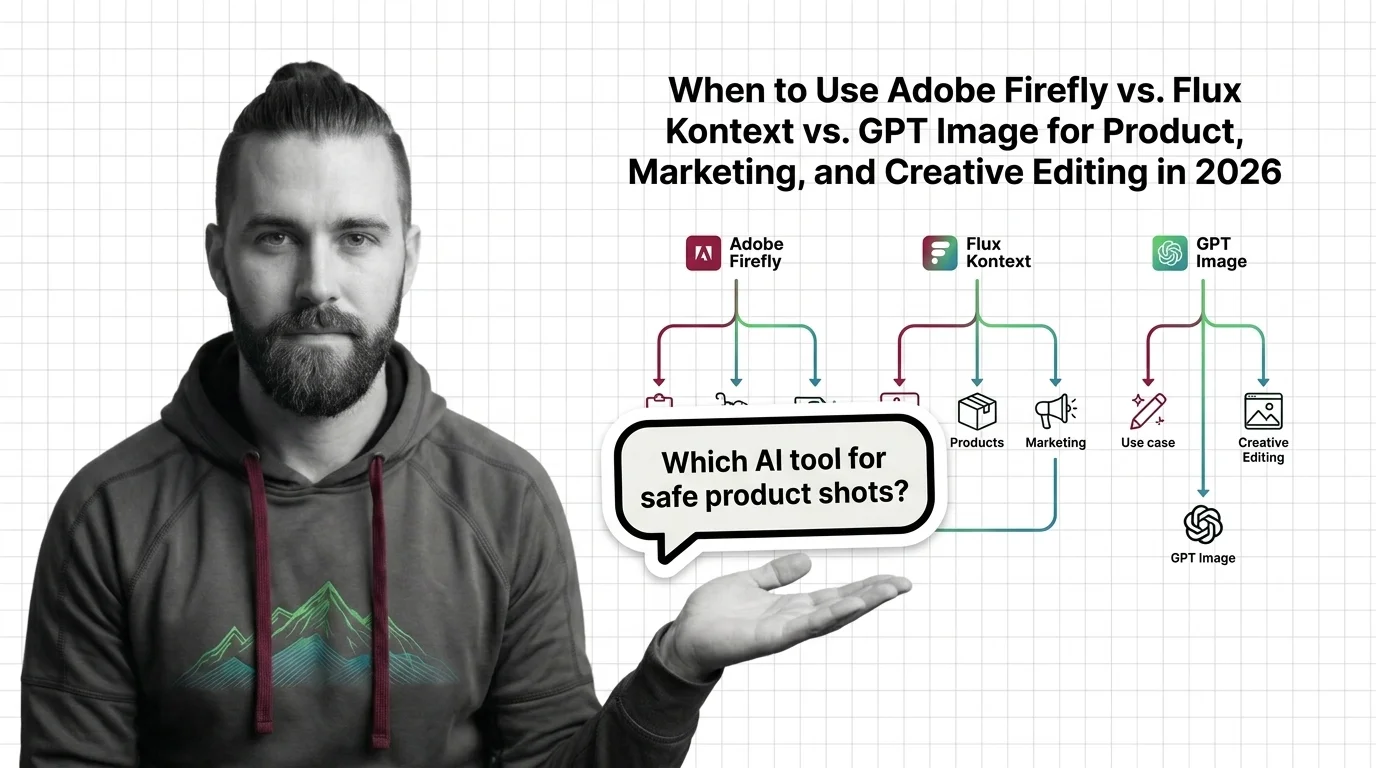

Pick the right AI image editor for commercial work: Adobe Firefly indemnifies, Flux Kontext iterates, GPT Image follows instructions. Here is how to spec each.

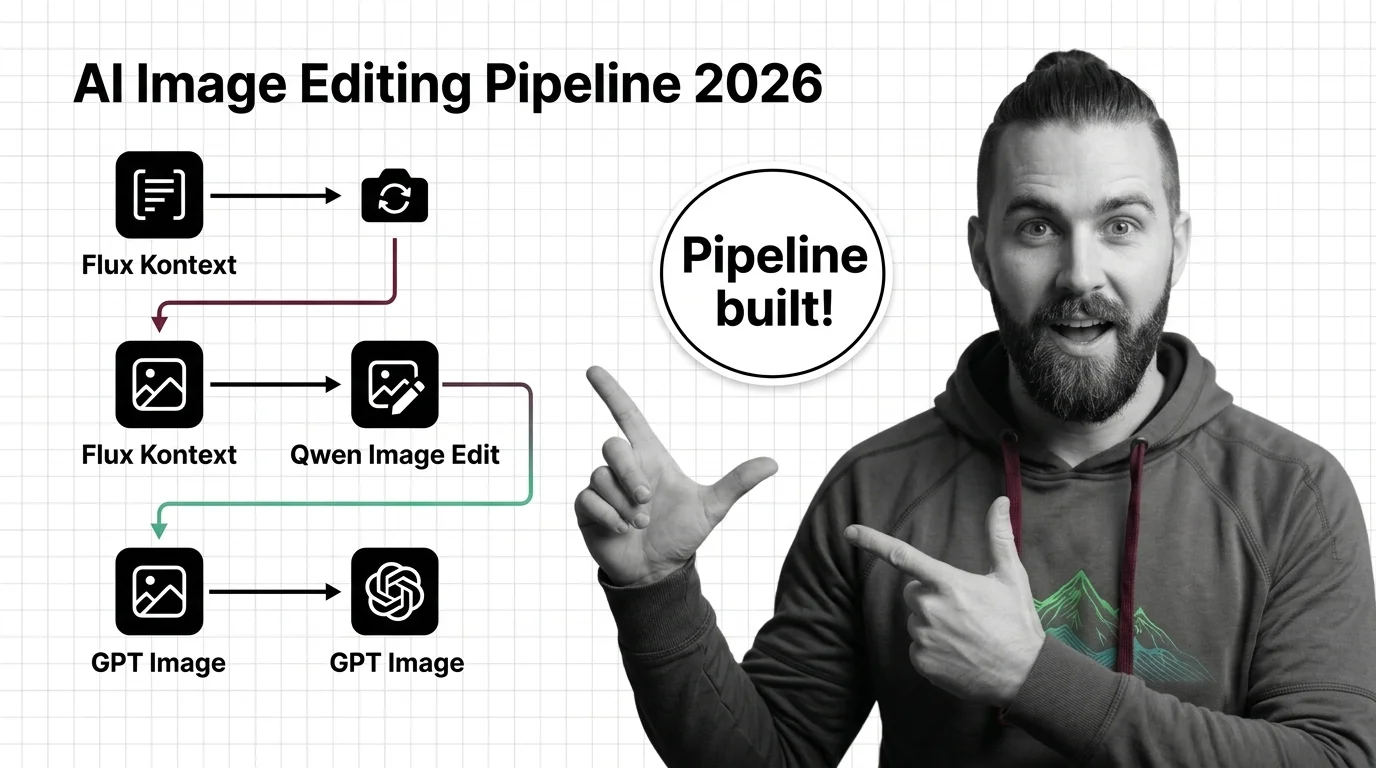

Build a production AI image editing pipeline in 2026. Spec Flux Kontext, Qwen Image Edit, and GPT Image 1.5 as swappable edit engines behind one router.
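The "swappable edit engines behind one router" idea can be sketched as follows. Everything here is hypothetical: the `EditRouter` class, the engine names, and the `(image_bytes, instruction) -> image_bytes` signature are assumptions for illustration, and the stub engines stand in for real vendor SDK calls, which are not shown.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Tuple

# Assumed engine interface: take image bytes and an instruction, return edited bytes.
EditFn = Callable[[bytes, str], bytes]


@dataclass
class EditRouter:
    """Try engines in preference order and fall back when one fails."""

    engines: Dict[str, EditFn] = field(default_factory=dict)

    def register(self, name: str, engine: EditFn) -> None:
        self.engines[name] = engine

    def edit(self, image: bytes, instruction: str, preferred: List[str]) -> Tuple[str, bytes]:
        errors: Dict[str, str] = {}
        for name in preferred:
            engine = self.engines.get(name)
            if engine is None:
                errors[name] = "not registered"
                continue
            try:
                # Return which engine served the request along with its output.
                return name, engine(image, instruction)
            except Exception as exc:  # any engine failure triggers fallback
                errors[name] = repr(exc)
        raise RuntimeError(f"all engines failed: {errors}")


def failing_engine(image: bytes, instruction: str) -> bytes:
    # Stub that simulates a provider outage.
    raise TimeoutError("simulated outage")


def echo_engine(image: bytes, instruction: str) -> bytes:
    # Stub that returns the image tagged with the instruction.
    return image + b"|" + instruction.encode("utf-8")
```

Because callers depend only on the shared signature, an engine can be replaced or reordered without touching calling code, and the returned engine name makes it possible to log which provider actually served each edit.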

DAN tracks how this domain is evolving — which models, techniques, and benchmarks are reshaping 2026.

Models & benchmarks

Updated April 2026

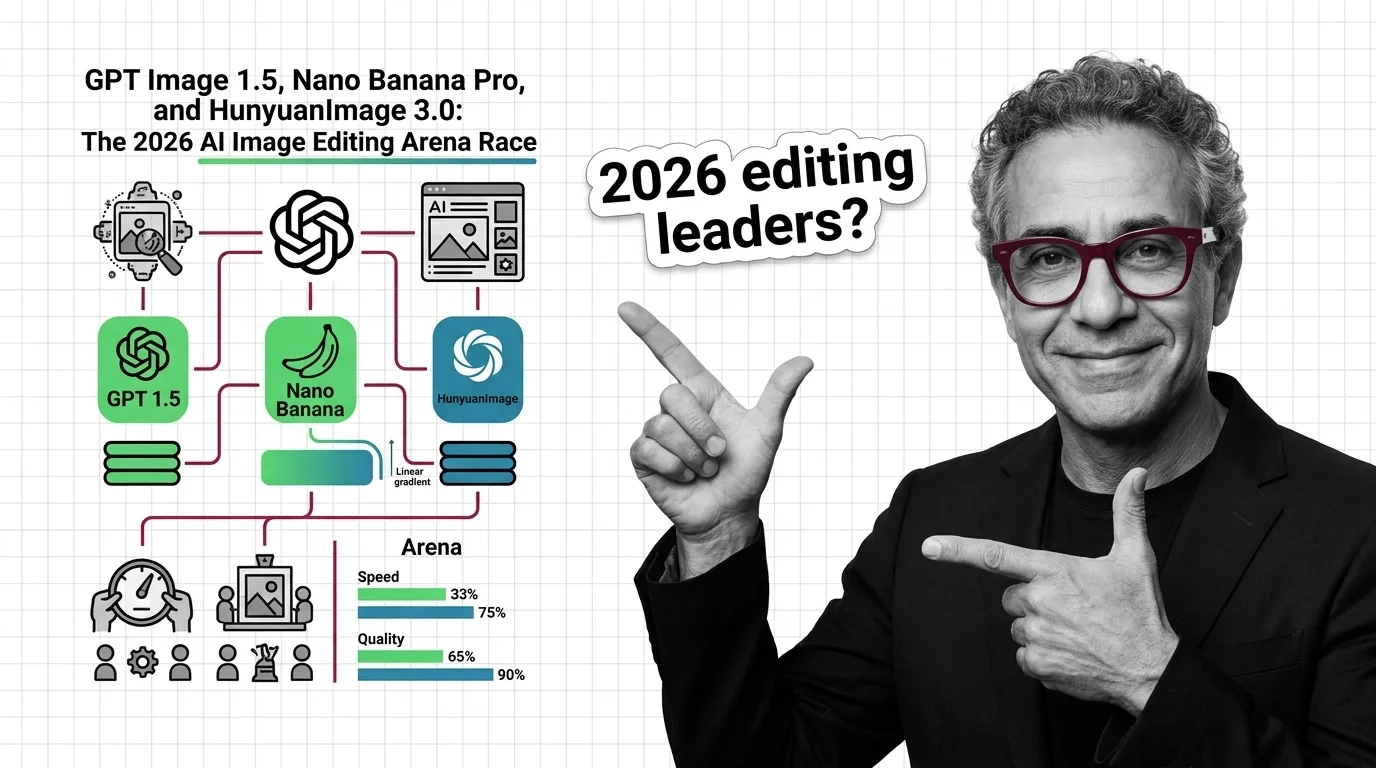

The Artificial Analysis editing arena compressed into a four-way race in 2026. What GPT Image 1.5, Nano Banana Pro, and HunyuanImage 3.0 mean for builders.

ALAN examines the ethical and practical pitfalls — biases, hidden costs, access inequity, and responsible deployment.

Risks & metrics

AI image editing has industrialized the act of lifting someone's likeness. Consent law, C2PA metadata, and new legislation still don't match its scale.