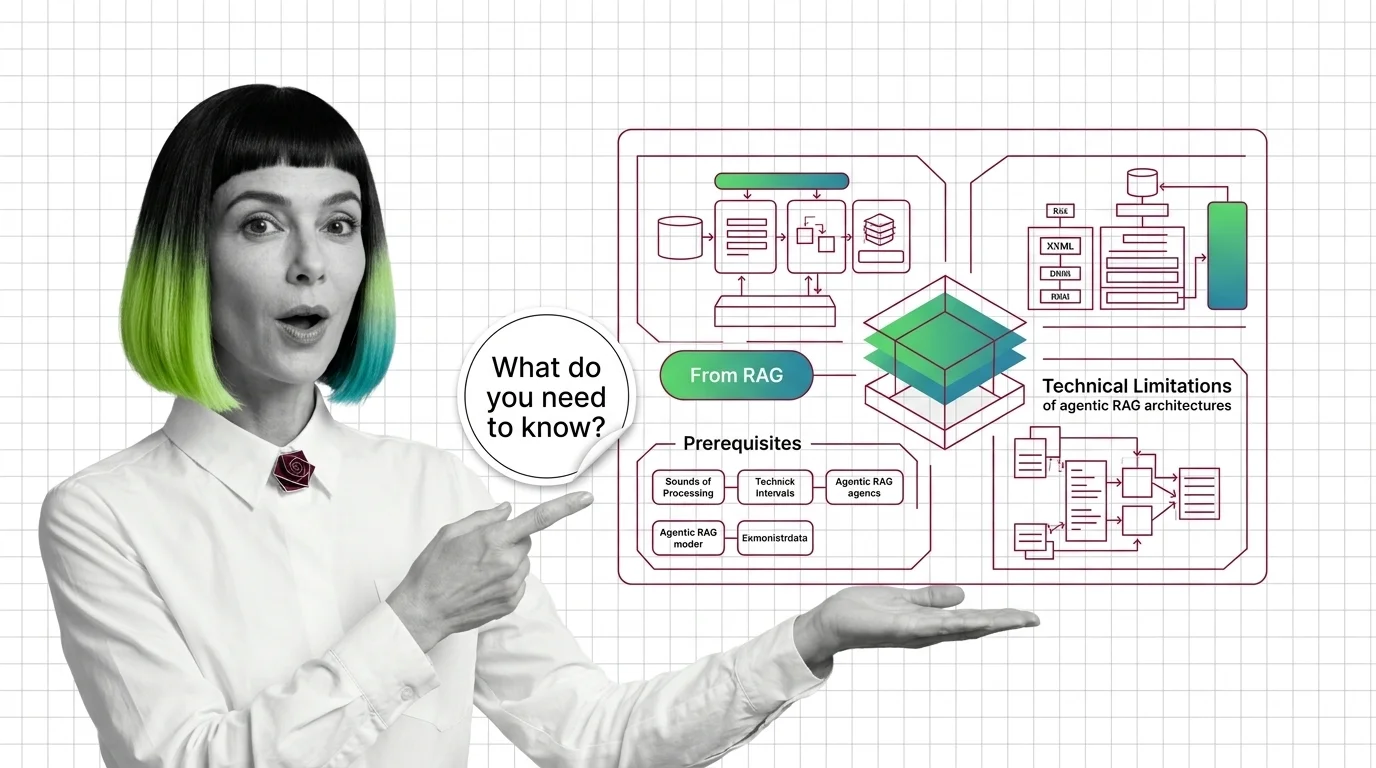

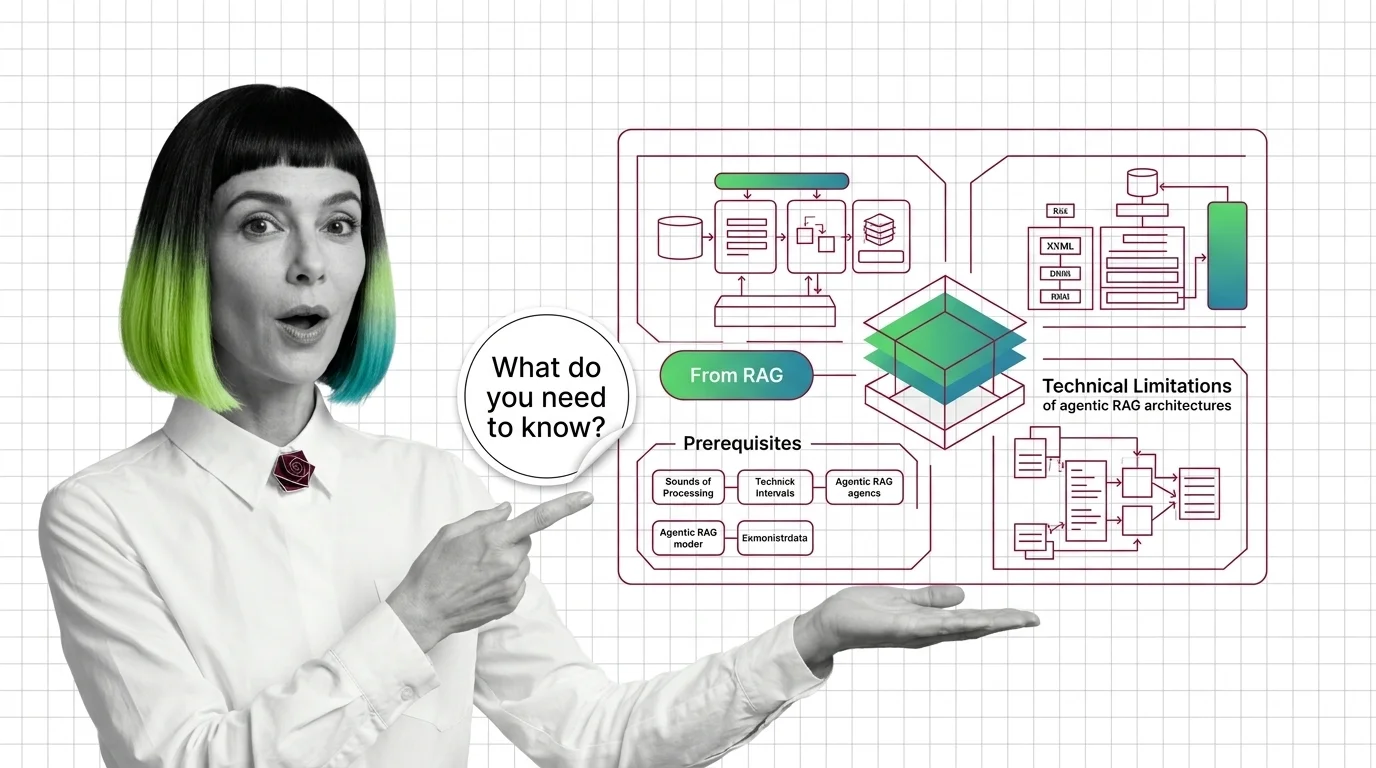

From RAG to Agents: Prerequisites and Hard Limits of Agentic RAG

Agentic RAG is a stack with new failure modes, not an upgrade. Learn the prerequisites and the four hard limits that constrain multi-step retrieval pipelines.

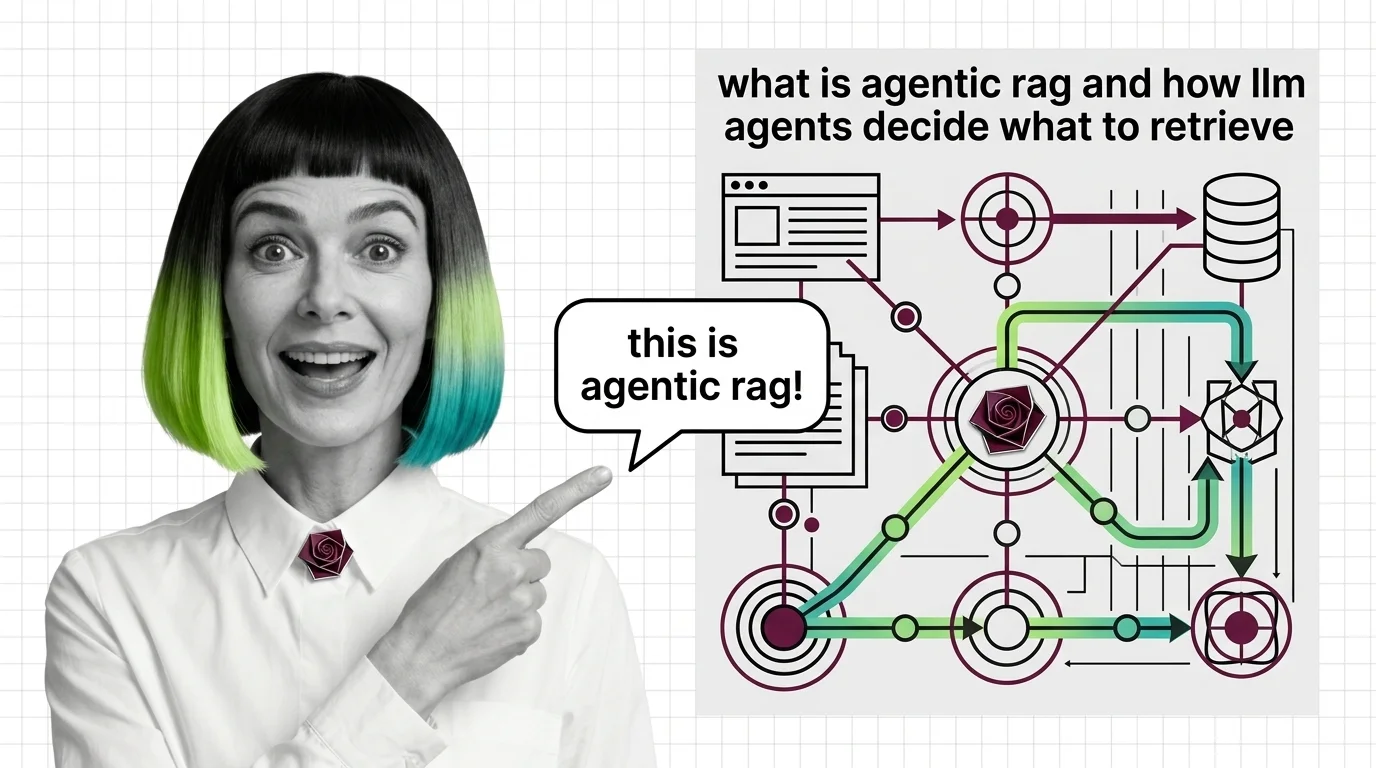

Agentic RAG is a retrieval-augmented generation pattern where an LLM agent decides what to retrieve, when to retrieve it, and from which source.

Instead of one fixed retrieval step, the agent plans multi-step lookups, routes queries between indexes, and self-corrects when results look weak. Also known as: Adaptive RAG, Self-RAG.
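The loop described above can be sketched in a few lines. This is a minimal illustration, not a reference implementation: the `INDEXES` dictionaries, the `route`, `retrieve`, and `agentic_retrieve` helpers, and the `max_steps` cap are all hypothetical names invented for this example. Where a real system would call an LLM to choose a source or judge relevance, the sketch uses simple deterministic rules.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical toy indexes: plain dicts stand in for real vector or keyword stores.
INDEXES = {
    "docs": {"refund policy": "Refunds are issued within 14 days."},
    "tickets": {"login error": "Clear cookies, then retry the login."},
}


@dataclass
class Step:
    source: str
    query: str
    result: Optional[str]


def route(tried):
    """Pick the next untried index. A real agent would ask an LLM to choose."""
    for name in INDEXES:
        if name not in tried:
            return name
    return None


def retrieve(source, query):
    """Exact-match lookup; stands in for vector or hybrid search."""
    return INDEXES[source].get(query)


def agentic_retrieve(query, max_steps=3):
    """Route, retrieve, check the result, and stop once it looks good enough."""
    steps = []
    tried = set()
    for _ in range(max_steps):  # bounded loop: the agent cannot retry forever
        source = route(tried)
        if source is None:
            break  # every index has already been tried
        tried.add(source)
        result = retrieve(source, query)
        steps.append(Step(source, query, result))
        if result is not None:  # self-check: a real agent would grade relevance
            break
    return steps


trace = agentic_retrieve("login error")
for step in trace:
    print(step.source, "->", step.result)
```

Here the first lookup against `docs` comes back empty, so the loop falls through to `tickets` and stops there. The step cap is the part worth keeping in any real design: without it, a weak self-check can keep the agent retrying indefinitely.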

What this topic covers

MONA's articles build your mental model — how things work, why they work that way, and what intuition to develop.

Concepts covered

Agentic RAG turns retrieval into a decision: an LLM agent chooses whether to retrieve, which source to query, and whether the answer is good enough.

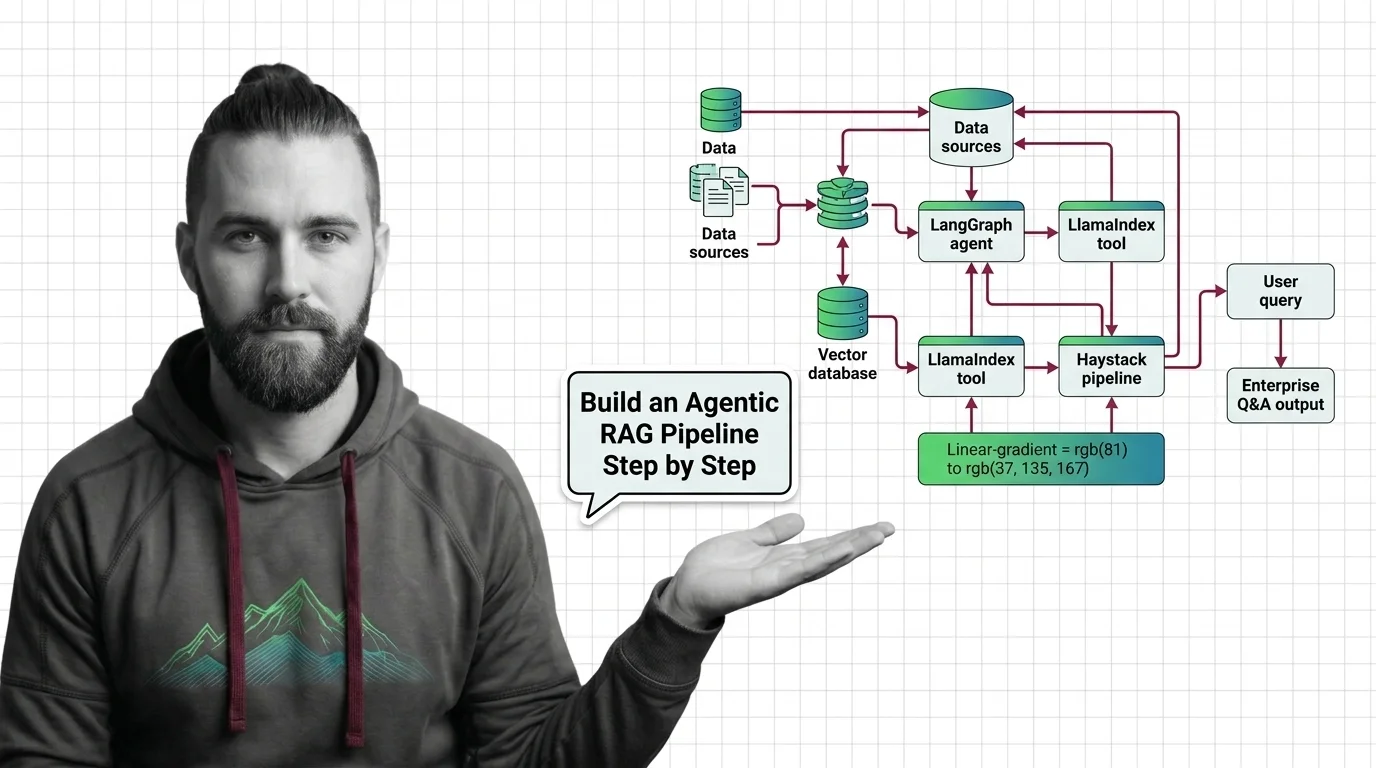

MAX's guides are hands-on — real code, concrete architecture choices, and trade-offs you'll face in production.

Tools & techniques

Production agentic RAG in 2026 means hybrid search, cross-encoder reranking, and bounded loops. Spec the pipeline before wiring up LangGraph, LlamaIndex, or Haystack.

DAN tracks how this domain is evolving — which models, techniques, and benchmarks are reshaping 2026.

Models & benchmarks

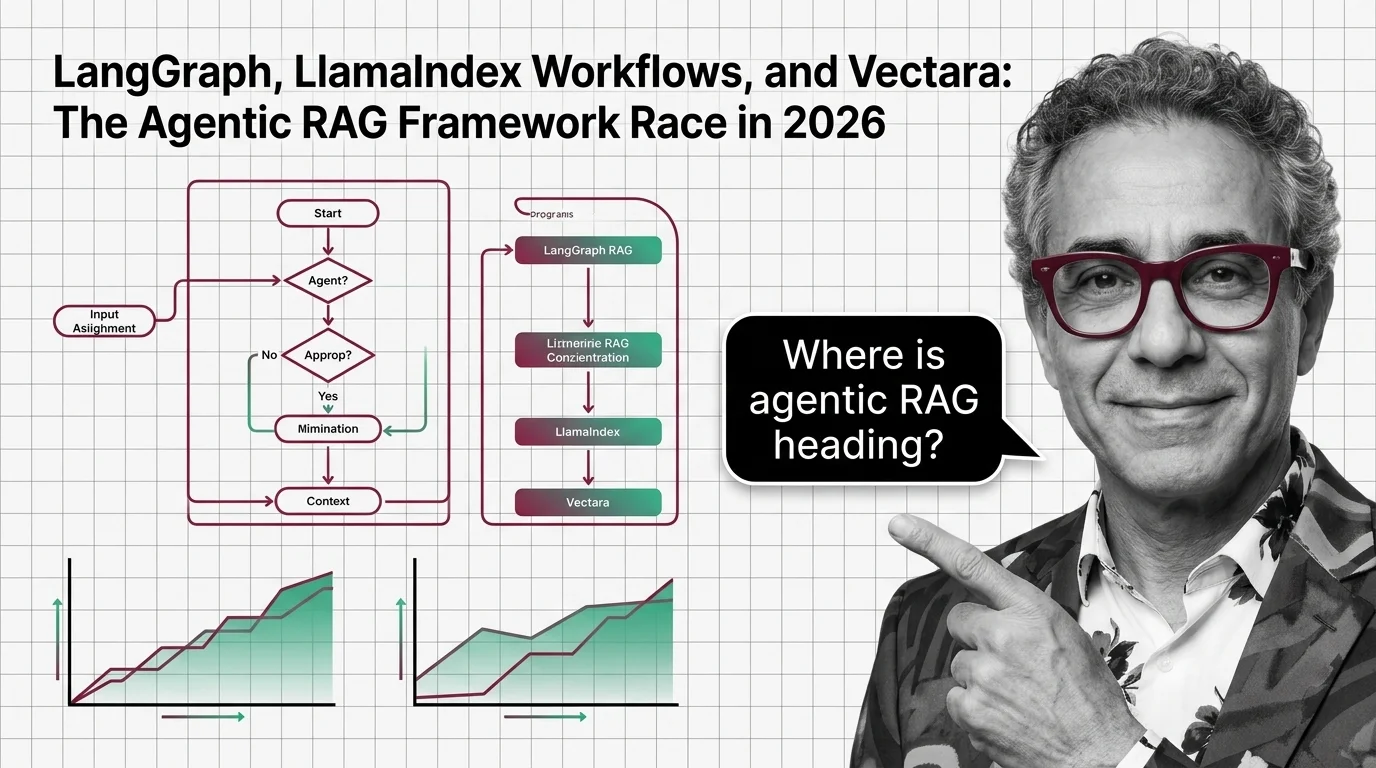

Updated May 2026

LangGraph 1.0, LlamaIndex Workflows, and Vectara are pulling agentic RAG in three directions in 2026 — orchestration, retrieval, and managed governance.

ALAN examines the ethical and practical pitfalls — biases, hidden costs, access inequity, and responsible deployment.

Risks & metrics

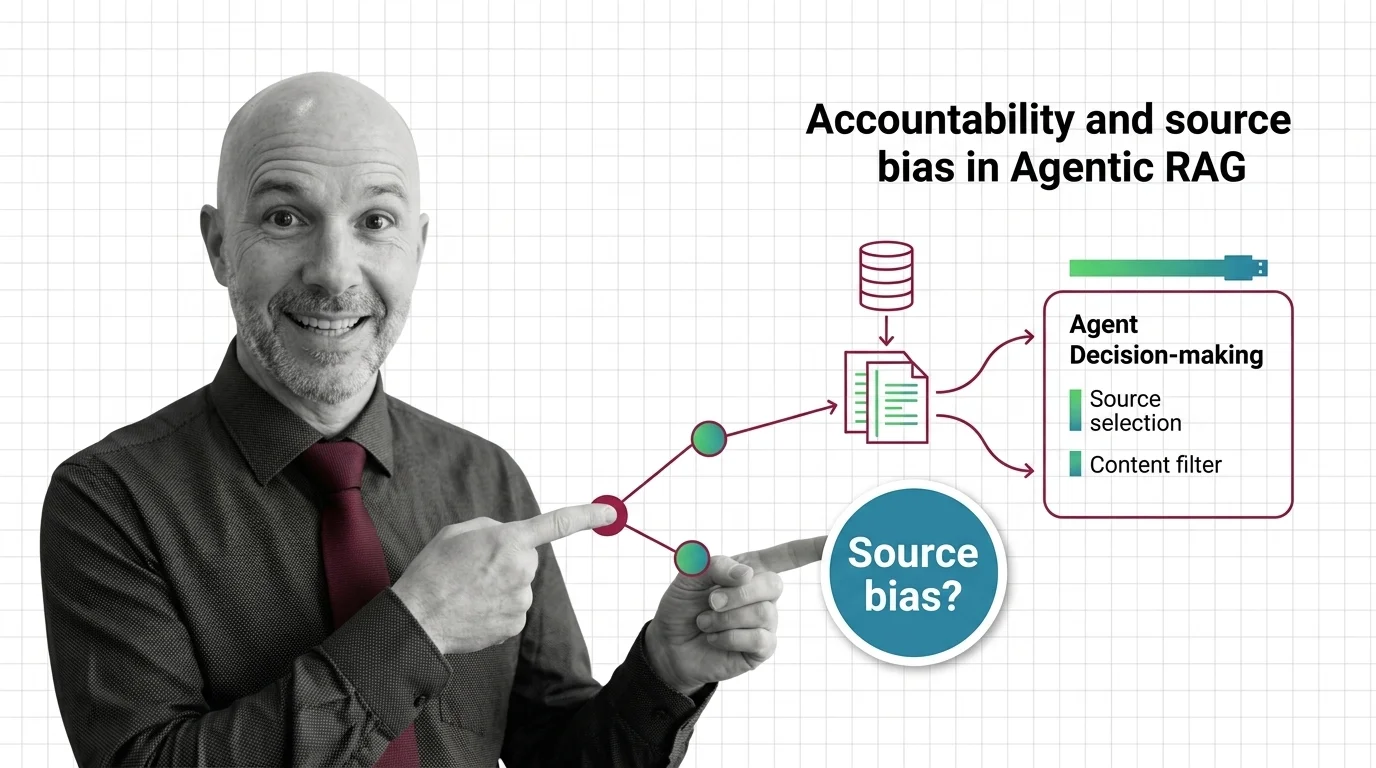

Agentic RAG hands source selection to autonomous LLM agents. The accountability stack — from corpus skew to bias injection — has not caught up.